Chapter 7. Typical Deployments

So far we have been looking at Hazelcast in one particular type of deployment. However, there are a number of configurations we could use, depending on our architecture and application needs. Each deployment strategy tends to be best suited to certain types of application, so in this chapter we will look at:

The issues of co-locating data too close to the application

Thin client connectivity: where it is best used, and the issues that come with it

Lite member nodes (formerly known as super clients) as a middle-ground option

Overview of the architectural choices

All heap and nowhere to go

One thing we may have noticed in all the examples so far is that, because we are running Hazelcast in embedded mode, each JVM instance both provides the application's functionality and houses the data storage. The persisted cluster data is therefore held within the heap of the various nodes, which means we need to control the provisioned heap sizes more carefully: extra heap is no longer just a non-functional nicety, because it directly limits how much data the cluster can hold. Size matters.

However, depending on the type of application we are developing, it may not be convenient or suitable to use the running instance's application heap directly for storing the data. A pertinent example would be a web application, especially one that runs in a potentially shared web application container (for example, Apache Tomcat).
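To get a feel for why heap size matters in embedded mode, the following is a rough back-of-the-envelope sketch rather than a sizing tool. It assumes data is spread evenly across the nodes and that every entry is kept together with a fixed number of backup copies; the class and method names (HeapEstimate, dataBytesPerNode) are hypothetical, and the figures ignore per-entry overhead, garbage collection headroom, and the application's own heap usage.

```java
// Rough per-node data estimate for an embedded cluster (illustrative only).
final class HeapEstimate {

    /**
     * Estimates how many bytes of cluster data each node has to hold,
     * assuming an even spread and backupCount extra copies of every entry.
     */
    static long dataBytesPerNode(long totalDataBytes, int backupCount, int nodeCount) {
        if (totalDataBytes < 0 || backupCount < 0 || nodeCount <= 0) {
            throw new IllegalArgumentException("sizes must be non-negative and nodeCount positive");
        }
        long totalWithBackups = totalDataBytes * (1L + backupCount);
        // Round up so the estimate never understates the requirement.
        return (totalWithBackups + nodeCount - 1) / nodeCount;
    }

    public static void main(String[] args) {
        long gib = 1024L * 1024 * 1024;
        // Assumed example figures: 8 GiB of data, 1 backup copy, 4 nodes.
        long perNode = dataBytesPerNode(8 * gib, 1, 4);
        System.out.println("Data per node: " + (perNode / gib) + " GiB");
    }
}
```

With these assumed figures, each node needs room for roughly 4 GiB of data on top of whatever the application itself requires, which is why the heap can no longer be provisioned with the application alone in mind.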

This would be rather unsuitable for storing extensive amounts of data within the heap as our application's storage...

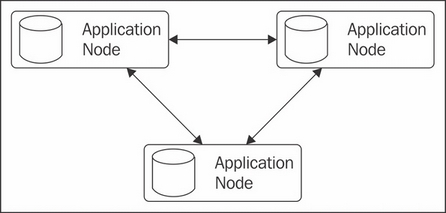

Stepping back from the cluster

To avoid this situation we can separate our application from the data cluster by using a thin client driver that looks and behaves very much like a direct Hazelcast instance; in this case, however, the operations performed are delegated out to a wider cluster of real instances. This has the benefit of decoupling our application from the scaling of the Hazelcast cluster, allowing us to scale up our own application without having to scale everything together, and so make more efficient use of the resources we are running on. Equally, we can still scale up our data cluster by adding more nodes should it become a bottleneck, whether for memory storage requirements or for performance and compute needs.

To try this out, we first create a vanilla "server side" instance, giving us a cluster of nodes that we can connect out to from a client.

Serialization and classes

One issue we do introduce when using the thin client driver is that, while our cluster can hold, persist, and serve objects, it does not have to (and might not) hold the POJO class itself; it holds only a serialization of the object. This means that as long as each of our clients has the appropriate class on its classpath, the clients can successfully serialize (for persistence) and deserialize (for retrieval), but our cluster nodes cannot. We can see this most notably if we try to retrieve entries for custom objects via the TestApp console, which will produce a ClassNotFoundException.

The process used to serialize objects to the cluster starts by checking whether the object is a well-known primitive-like class (String, Long, Integer, byte[], ByteBuffer, Date); if so, these are serialized directly. If not, Hazelcast next checks to see if the object implements com.hazelcast.nio.DataSerializable and if so uses the appropriate methods provided to marshal the object. Otherwise...
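The order of the first two checks described above can be sketched in plain Java. This is only an illustration of the decision order, not Hazelcast's actual implementation: the DataSerializableLike interface is a hypothetical stand-in for com.hazelcast.nio.DataSerializable so that the sketch compiles without Hazelcast on the classpath, and everything after the second check is lumped into a single LATER_CHECKS result.

```java
import java.nio.ByteBuffer;
import java.util.Date;

// Illustrative decision order only; not Hazelcast's real serialization code.
final class SerializationOrder {

    /** Hypothetical stand-in for com.hazelcast.nio.DataSerializable. */
    interface DataSerializableLike { }

    enum Strategy { WELL_KNOWN_TYPE, DATA_SERIALIZABLE, LATER_CHECKS }

    static Strategy choose(Object value) {
        // Step 1: well-known, primitive-like classes are serialized directly.
        if (value instanceof String || value instanceof Long || value instanceof Integer
                || value instanceof byte[] || value instanceof ByteBuffer
                || value instanceof Date) {
            return Strategy.WELL_KNOWN_TYPE;
        }
        // Step 2: objects that supply their own marshalling methods.
        if (value instanceof DataSerializableLike) {
            return Strategy.DATA_SERIALIZABLE;
        }
        // Anything else falls through to the remaining checks.
        return Strategy.LATER_CHECKS;
    }

    public static void main(String[] args) {
        System.out.println(choose("hello"));
        System.out.println(choose(new DataSerializableLike() { }));
        System.out.println(choose(new Object()));
    }
}
```

The point of the ordering is that cheap, common cases are resolved first, and a class only needs to supply its own marshalling when it is not one of the well-known types.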

One issue with the client method of connecting to the cluster is that most operations will require multiple hops in order to perform an action. This is because we only maintain a connection to a single node of the cluster and run all our operations through it. With the exception of operations performed on partitions owned by that node, all other activities must be handed off to the responsible node out in the wider cluster, with the single node acting as a proxy for the client.

This will add latency to the requests made to the cluster. Should that latency be too high, there is an alternative method of connecting to the cluster known as a lite member (originally known as a super client). This is effectively a non-participant member of the cluster, in that it maintains connections to all the other nodes in the cluster and will directly talk to partition owners, but does not provide any storage or computation to the cluster. This avoids the double hop required by the standard...
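The difference between the two connection styles can be sketched with a toy model that simply counts network hops. It is not Hazelcast's routing code: nodes are represented by hypothetical integer indexes, the thin client is assumed to be connected to node 0, and partition ownership is assumed to be spread evenly across the nodes.

```java
// Toy hop-count model for thin clients versus lite members (illustrative only).
final class HopModel {

    /** A thin client reaches the owner directly only if it is the connected node. */
    static int thinClientHops(int connectedNode, int ownerNode) {
        return connectedNode == ownerNode ? 1 : 2;
    }

    /** A lite member holds connections to every node, so it always takes one hop. */
    static int liteMemberHops(int ownerNode) {
        return 1;
    }

    /** Average hops for a thin client when each node owns an equal share of the data. */
    static double averageThinClientHops(int nodeCount) {
        if (nodeCount <= 0) {
            throw new IllegalArgumentException("nodeCount must be positive");
        }
        int total = 0;
        for (int owner = 0; owner < nodeCount; owner++) {
            total += thinClientHops(0, owner); // client assumed connected to node 0
        }
        return (double) total / nodeCount;
    }

    public static void main(String[] args) {
        System.out.println("Thin client, 4 nodes: " + averageThinClientHops(4) + " hops on average");
        System.out.println("Lite member, any node: " + liteMemberHops(3) + " hop");
    }
}
```

Under these assumptions a thin client on a four-node cluster averages 1.75 hops per operation, and the figure approaches two as the cluster grows, whereas the lite member stays at one hop at the cost of holding a connection to every node.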

As we have seen, there are a number of different types of deployment we could use; which one we choose really depends on our application's makeup. Each has a number of trade-offs, but most deployments tend to use one of the first two. The client and server cluster approach is the usual favorite, unless we have a mostly compute-focused application, in which case the former is a simpler setup.

So let's have a look at the various architectural setups we could employ and what situations they are best suited to.

This is the standard example we have mostly been using until now: each node houses both our application itself and the data persistence and processing. It is most useful when we have an application that is primarily focused on asynchronous or high-performance computing and will be executing lots of tasks on the cluster. The greatest drawback is the inability to scale our application and data capacity separately.

Clients and server cluster

This is a more...

We have seen that we have a number of strategies at our disposal for deploying Hazelcast within our architecture. We can treat it as a clustered standalone product, akin to a traditional data source but with more resilience and scalability. For more complex applications, we can absorb its capabilities directly into our application, but that does come with some strings attached. Whichever approach we choose for our particular use case, we have easy access to scaling and control at our fingertips.

In the next chapter we will look beyond Hazelcast itself, at alternative methods of getting access to the data held in the cluster.