Chapter 2. Parallelism of Statistics and Machine Learning

At first glance, machine learning seems distant from statistics. On closer inspection, however, the two fields have much in common. This chapter examines those parallels in detail. It compares linear regression with lasso and ridge regression as a simple way to contrast statistical modeling with machine learning. These are basic models in both worlds and a good place to start.

In this chapter, we will cover the following:

- Understanding of statistical parameters and diagnostics

- Compensating factors in machine learning models to equate statistical diagnostics

- Ridge and lasso regression

- Comparison of adjusted R-square with accuracy

Comparison between regression and machine learning models

Linear regression and machine learning models both try to solve the same problem in different ways. Consider the simple example of fitting the best possible plane to an equation with two variables. A regression model fits the best possible hyperplane by minimizing the errors between the hyperplane and the actual observations. In machine learning, the same problem is recast as an optimization problem: the errors are expressed in squared form, and the weights are adjusted iteratively to minimize them.
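The two views can be put side by side in a minimal sketch. The data below is synthetic and the coefficient values, learning rate, and iteration count are assumptions chosen only for illustration; they do not come from the chapter's own example.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic data (hypothetical): y = 1.5 + 2.0*x1 - 3.0*x2 + noise
n = 200
X = rng.normal(size=(n, 2))
y = 1.5 + 2.0 * X[:, 0] - 3.0 * X[:, 1] + rng.normal(scale=0.1, size=n)

# Add a column of ones so the intercept is estimated as a weight.
X1 = np.column_stack([np.ones(n), X])

# Statistical view: least squares fit of the plane.
beta_ols, *_ = np.linalg.lstsq(X1, y, rcond=None)

# Machine learning view: minimize the mean squared error by gradient descent.
w = np.zeros(3)
learning_rate = 0.1  # assumed value, not tuned
for _ in range(2000):
    residuals = X1 @ w - y
    gradient = 2.0 / n * X1.T @ residuals
    w -= learning_rate * gradient

print("least squares:   ", np.round(beta_ols, 3))
print("gradient descent:", np.round(w, 3))
```

Both approaches minimize the same squared error, so on a well-conditioned problem like this one the two coefficient vectors should agree closely; only the route to the solution differs.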

In statistical modeling, samples are drawn from the population and the model is fitted on the sampled data. In machine learning, however, a batch as small as 30 observations can be enough to update the weights at the end of each iteration; in a few cases, such as online learning, the model is updated with even a single observation.
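As a rough illustration of updating weights from small batches, the sketch below fits a one-variable line with stochastic gradient descent, once with batches of 30 observations and once with a single observation per update. The function name `sgd_fit`, the data stream, and the learning rate are all hypothetical choices made for this example.

```python
import numpy as np

rng = np.random.default_rng(1)

# Hypothetical data stream: y = 4.0*x + 1.0 + noise
n = 6000
x = rng.uniform(-1.0, 1.0, size=n)
y = 4.0 * x + 1.0 + rng.normal(scale=0.1, size=n)

def sgd_fit(x, y, batch_size, learning_rate=0.1):
    """One pass over the data, updating the weights after every batch."""
    slope, intercept = 0.0, 0.0
    for start in range(0, len(x), batch_size):
        xb = x[start:start + batch_size]
        yb = y[start:start + batch_size]
        error = slope * xb + intercept - yb
        # Gradient of the mean squared error on this batch only.
        slope -= learning_rate * 2.0 * np.mean(error * xb)
        intercept -= learning_rate * 2.0 * np.mean(error)
    return slope, intercept

batch_fit = sgd_fit(x, y, batch_size=30)   # 200 updates of 30 observations
online_fit = sgd_fit(x, y, batch_size=1)   # 6000 updates of 1 observation

print("batches of 30:", np.round(batch_fit, 2))
print("online:       ", np.round(online_fit, 2))
```

The single-observation run is noisier from step to step, but both runs should end near the slope and intercept used to generate the data.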

Machine learning models can be effectively parallelized and made to work on multiple machines...

Compensating factors in machine learning models

The way compensating factors in machine learning models make up for statistical diagnostics can be explained with the example of a beam resting on two supports. If one of the supports is missing, the beam loses its balance and eventually falls. The same analogy applies when comparing statistical modeling and machine learning methodologies.

In the statistical modeling methodology, a two-point validation is performed on the training data: overall model accuracy and a significance test for each individual parameter. Because linear and logistic regression have low variance owing to the shape of the model itself, there is very little chance of them performing worse on unseen data. Hence, during deployment, these models rarely produce widely deviating results.
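The two checks can be sketched with plain NumPy, assuming R-squared and adjusted R-squared as the measure of overall fit and t-statistics as the per-parameter significance test. The data is synthetic: `x1` genuinely drives `y`, while `x2` is pure noise added to show what an insignificant parameter looks like.

```python
import numpy as np

rng = np.random.default_rng(2)

# Hypothetical data: y depends on x1 only; x2 is pure noise.
n = 200
x1 = rng.normal(size=n)
x2 = rng.normal(size=n)
y = 2.0 + 3.0 * x1 + rng.normal(size=n)

X = np.column_stack([np.ones(n), x1, x2])   # intercept, x1, x2
k = X.shape[1] - 1                          # number of predictors

beta = np.linalg.solve(X.T @ X, X.T @ y)
residuals = y - X @ beta

# Check 1: overall fit of the model.
rss = residuals @ residuals
tss = np.sum((y - y.mean()) ** 2)
r2 = 1.0 - rss / tss
adj_r2 = 1.0 - (1.0 - r2) * (n - 1) / (n - k - 1)

# Check 2: significance of each individual parameter (t-statistics).
sigma2 = rss / (n - k - 1)
std_err = np.sqrt(sigma2 * np.diag(np.linalg.inv(X.T @ X)))
t_stats = beta / std_err

print("R2:", round(r2, 3), "adjusted R2:", round(adj_r2, 3))
print("t-statistics (intercept, x1, x2):", np.round(t_stats, 2))
```

A large absolute t-statistic (commonly above about 2) suggests the parameter is significant; here `x1` should stand out clearly while `x2` should not.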

However, in the machine learning space, models have a high degree of flexibility, ranging from simple to highly complex. On top of that, statistical diagnostics on individual...

Machine learning models - ridge and lasso regression

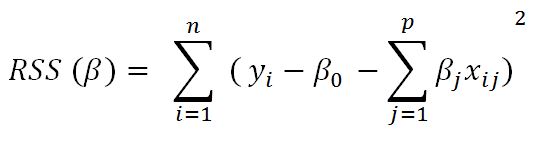

In linear regression, only the residual sum of squares (RSS) is minimized, whereas in ridge and lasso regression a penalty (also known as a shrinkage penalty) is applied to the coefficient values to regularize them, with its strength controlled by the tuning parameter λ.

When λ = 0, the penalty has no impact and ridge/lasso produces the same result as linear regression, whereas as λ → ∞ the coefficients are driven toward zero.
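For reference, the standard textbook forms of the two penalized objectives are written out here. The notation (n observations, p predictors, intercept β₀, coefficients βⱼ) is assumed for this sketch rather than taken from the text; in both cases the first term is the RSS and the second is the shrinkage penalty:

$$
\begin{aligned}
\text{Ridge:}\quad & \min_{\beta}\; \sum_{i=1}^{n}\Bigl(y_i - \beta_0 - \sum_{j=1}^{p}\beta_j x_{ij}\Bigr)^2 + \lambda \sum_{j=1}^{p}\beta_j^2 \\
\text{Lasso:}\quad & \min_{\beta}\; \sum_{i=1}^{n}\Bigl(y_i - \beta_0 - \sum_{j=1}^{p}\beta_j x_{ij}\Bigr)^2 + \lambda \sum_{j=1}^{p}\lvert\beta_j\rvert
\end{aligned}
$$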

Before we go deeper into ridge and lasso, it is worth understanding some concepts about Lagrangian multipliers. The penalized objective function can also be written as a constrained problem, in which the objective is just the RSS, subject to a budget constraint s on the coefficients. For every value of λ, there is a value of s for which the constrained problem gives the same solution as the objective function with a penalty factor.
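In the usual textbook notation (again an assumption of this sketch, with s denoting the budget), the constrained forms read:

$$
\begin{aligned}
\text{Ridge:}\quad & \min_{\beta}\; \sum_{i=1}^{n}\Bigl(y_i - \beta_0 - \sum_{j=1}^{p}\beta_j x_{ij}\Bigr)^2 \quad \text{subject to} \quad \sum_{j=1}^{p}\beta_j^2 \le s \\
\text{Lasso:}\quad & \min_{\beta}\; \sum_{i=1}^{n}\Bigl(y_i - \beta_0 - \sum_{j=1}^{p}\beta_j x_{ij}\Bigr)^2 \quad \text{subject to} \quad \sum_{j=1}^{p}\lvert\beta_j\rvert \le s
\end{aligned}
$$

A larger λ corresponds to a smaller budget s, and therefore to coefficients that are shrunk more strongly.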

Ridge regression works well in situations where the least squares estimates have high variance. Ridge regression has computational advantages over...
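The effect of λ on ridge coefficients can be checked numerically with a short sketch. It uses the closed-form ridge solution on synthetic data with two nearly collinear predictors, a setting in which least squares estimates tend to have high variance. The helper `ridge_coefficients` and the λ grid are hypothetical choices for illustration, and lasso is left out because it has no comparable closed form in general.

```python
import numpy as np

rng = np.random.default_rng(3)

# Hypothetical data with two nearly collinear predictors.
n = 100
x1 = rng.normal(size=n)
x2 = x1 + rng.normal(scale=0.05, size=n)
X = np.column_stack([x1, x2])
y = 1.0 * x1 + 1.0 * x2 + rng.normal(size=n)

# Center the data so the intercept drops out and is not penalized.
Xc = X - X.mean(axis=0)
yc = y - y.mean()

def ridge_coefficients(X, y, lam):
    """Closed-form ridge solution: (X'X + lam*I)^-1 X'y."""
    p = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(p), X.T @ y)

ols = np.linalg.lstsq(Xc, yc, rcond=None)[0]
print("least squares:", np.round(ols, 4))
for lam in [0.0, 1.0, 100.0, 1e6]:
    print("lambda =", lam, np.round(ridge_coefficients(Xc, yc, lam), 4))
```

With λ = 0 the ridge solution coincides with least squares, and as λ grows the coefficient vector shrinks toward zero.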

In this chapter, you have learned how statistical models compare with machine learning models when applied to regression problems. The multiple linear regression methodology was illustrated as a step-by-step iterative process using the statsmodels package, removing insignificant and multi-collinear variables along the way. In machine learning models, by contrast, variables do not need to be removed manually: the weights are adjusted automatically, and the models expose parameters that can be tuned to refine the fit, because machine learning models learn from the data rather than being shaped exclusively by manual variable removal. Though we got almost the same accuracy from linear regression and lasso/ridge regression, by using highly powerful machine learning models such as random forest, we can achieve a much better uplift in model accuracy than with conventional statistical models. In the next chapter, we will be covering a classification example with logistic...