"The irresistible forces meet the movable objects."

—Pat Helland

In this chapter, we provide an overview of Windows Azure and briefly explain the history of the platform, why it was created, and why it is interesting and applicable for startup companies. We also explore the evolution of Windows Azure from its early days in 2008 to where it is today. The internals of Windows Azure and the way Microsoft datacenters work are explained from a user's perspective, describing what happens under the hood of Windows Azure after a developer deploys an application to the platform. The last sections of the chapter contain brief overviews of key features of the platform.

Ray Ozzie arrived at Microsoft in 2005 and stated that survival of the company hinged on a shift to cloud computing. He wrote a manifesto called The Internet Services Disruption in which he stated that there are three tenets that dramatically shift the whole landscape around computing. From his point of view, it was essential to embrace those tenets in Microsoft's products and services. These tenets are as follows:

Advertisement-supported economic models

New delivery and adoption model

Demand for user experience that "just works"

The essence of the manifesto is that the world was changing, the demands of customers were changing, and technology was changing. It was the beginning of a process that eventually resulted in the Windows Azure platform.

After the release of Vista and the new Office suite, a project group was formed with top engineers, and Ray Ozzie asked Amitabh Srivastava to lead the project. Also, David Cutler (writer of VMS and leader of the Windows NT team) was involved with this revolutionary initiative. The codename of Windows Azure used to be Red Dog. Virtual machines on Windows Azure are still named with the prefix Red Dog (RD).

On October 27, 2008, at the Professional Developers Conference, Ray Ozzie announced Windows Azure and highlighted its capability in delivering services. The first commercially available release in 2010 of the platform contained:

The Cloud OS (confusingly also called Windows Azure) that offers service management and provisioning, storage, computing power, and networking capabilities

SQL Azure, offering a Database-as-a-Service (currently known as SQL Database)

Microsoft .NET Services, containing features such as workflow and access control (currently known as Windows Azure Service Bus, formerly known as AppFabric)

It was the start of a new era that brought us all into the world of services, agility, faster time to market, new ways of monetizing IT assets, operational expenses versus capital expenses, and more. Since then, Windows Azure has evolved into the mature, enterprise-ready platform it is today, offering more services over time.

Windows Azure is about cloud computing. Cloud computing, though, is a vague description of different aspects. Windows Azure is actually a platform that is offered to you as a service (PaaS, meaning Platform as a Service). PaaS enables us to fully concentrate on the application itself and leave all the plumbing to the cloud provider, in this case Microsoft. PaaS offers the management of networking, storage, servers, virtualization, OS, databases, and runtimes. The only thing that's left is the actual application, and that is most important for us since the application is our added value.

Windows Azure runs in large datacenters all around the world. A datacenter is filled with containers, and containers have a lot of servers inside (around 2,000).

Windows Azure offers abstraction to the developer by offering computing power (CPU and memory), storage (disk), and bandwidth (networking hardware). This enables us to treat Windows Azure as a black box without bothering about the internals, although we are curious about the way it works! Well, at least I was.

The best way to describe how a cloud application is created and finally deployed onto a machine in the datacenter is to use an example. Back in the early days, when you wanted to deploy an application, you needed to order hardware, be patient, and install operating systems, database servers, runtimes, and other bits. In the new world of cloud computing, you only need a credit card and a Live ID.

From a developer's perspective, the main entrance to Windows Azure is through the Windows Azure portal (or through the Service Management API, but I'll cover that later in this book). Operators can look at Windows Azure from the Microsoft System Center.

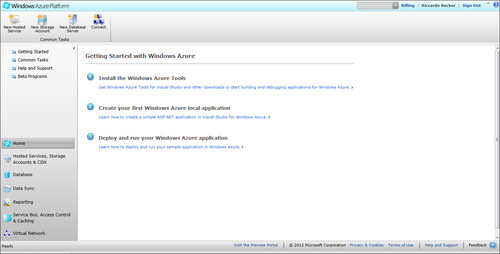

When you go to http://windowsazure.com, you are able to sign up to the Windows Azure Platform. After creating a billing relationship with Microsoft by using your credit card or the invoicing option, you are able to access Windows Azure. The Windows Azure platform portal is your main entrance to massive-scale computing and storage. The following screenshot shows what the portal looks like and how you can access the different features of Windows Azure.

From this portal, you can create applications (hosted services, called cloud services as of June 2012), enable storage, create databases, and access other offerings from the Windows Azure platform. Let's have a close look at the New Hosted Service option and prepare for your first Windows Azure application by creating a logical area for it on Windows Azure.

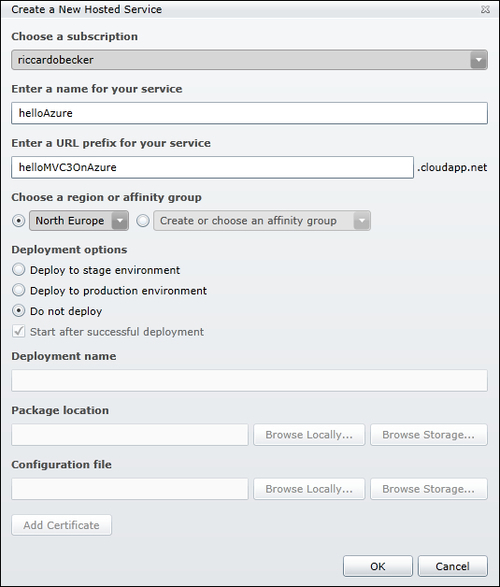

Click on New Hosted Service, and fill out the Create a New Hosted Service screen, as shown in the following screenshot:

You need to pick another name, since the URL prefix needs to be globally unique, and of course, your subscription will be a different one. After clicking on OK, the environment is created for you, and the DNS name entered in the URL textbox is reserved. If you choose the Do not deploy option, only the DNS name will be reserved and you will not get a bill yet, but you can also decide to create the hosted service together with deployment, if you have your binaries and configuration files ready. Hosted services that you create can easily be deleted, and the DNS name will be available again for others.

In order to get your application running on Windows Azure, you need to follow a few initial steps.

Perform the following steps to create and deploy a website:

Install the prerequisites on your machine (you can find them at http://www.microsoft.com/download/en/details.aspx?id=15658).

After downloading and installing both the Windows Azure SDK and Windows Azure Tools for Microsoft Visual Studio 2010, you are able to create your first web application, which can be deployed to Windows Azure.

Start Visual Studio 2010 (make sure you select Run as administrator), go to File | New | Project, and select Cloud from the Installed Templates tab. Name it MyFirstAzureProject and click on OK. The following screen appears:

As you can see, creating a Windows Azure service does not mean that you need to learn new skills or new tools; you can leverage your existing .NET skills.

Select ASP.NET MVC3 Web Role and name it MyFirstAzureMVC3Website. A Web Role is in fact a Windows Server 2008 virtual machine with Internet Information Services enabled. This enables the Web Role to be accessible through the Internet. By picking the MVC3 Web Role, we can again benefit from our existing knowledge of MVC3.

After clicking on OK, you need to pick what project template is used to create the MVC3 Website. For now, it's ok to select the Internet Application and leave the rest of the options at their default values.

Now click on OK, and the solution is created for you:

Your solution looks like an ordinary Visual Studio 2010 solution, but with a few additions. Because it is a cloud solution, a cloud project is created alongside the MVC3 project. In the MVC3 project, you will see a class file named WebRole.cs. This standard MVC3 website is ready to be deployed to Windows Azure. The website will run, but some default settings point to local development storage; these will cause the application to crash if somebody tries to reach the deployed website. We will get back to that later on.

To demonstrate upgrade and fault domains, open the ServiceConfiguration.Cloud.cscfg file and change the Instances count to 2: <Instances count="2" />

This causes two instances of your web role to be deployed on Windows Azure. They are identical, with the same binaries, but having two instances of the same web role running increases availability and enables the website to handle more traffic.

This configuration spins up two servers, has your application deployed onto them, and also creates a load balancer on top of them. Try to imagine how much work this is in a traditional datacenter.

This section will guide you through the deployment of your Windows Azure project.

Right-click on the MyFirstAzureProject node in your solution and select Package.

A new popup window appears, but for now it is sufficient to click on the Package button.

Windows Azure Tools will now build your project, zip the binaries, and create the service configuration file.

A Windows Explorer window is opened, and you will see the result of the "packaging" action: a large binary package (.cspkg) and the configuration file. Copy the location of this folder.

Go back to the Windows Azure portal and select the recently created hosted service.

Right-click on the Hosted Service entry and select New Production Deployment.

Name your deployment, select the recently created files in the Package location and Configuration file textboxes, and click on OK.

Note

A warning appears, telling you that you need to create at least two instances to guarantee the 99.95 percent uptime the Windows Azure Compute service-level agreement (SLA) offers.

An SLA is a service contract in which the level of service is formally defined. Please go to http://www.windowsazure.com/en-us/support/legal/sla/ to get details about the SLA.

When two or more instances of a role are running in different fault and upgrade domains, Microsoft guarantees Internet connectivity to the designated roles at least 99.95 percent of the time. An availability of 99.95 percent means that your service can be down for at most about 5 minutes per week, inside the Fabric.
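The weekly downtime figure follows from simple arithmetic. The sketch below is only an illustration of that calculation; the function name and the 30-day month are assumptions made for the example, not part of the SLA text.

```python
MINUTES_PER_WEEK = 7 * 24 * 60  # 10,080 minutes in a week


def allowed_downtime_minutes(availability_percent, period_minutes=MINUTES_PER_WEEK):
    """Return the downtime budget, in minutes, for a given availability."""
    unavailable_fraction = 1 - availability_percent / 100
    return period_minutes * unavailable_fraction


# 0.05 percent of a week is a little over five minutes.
weekly = allowed_downtime_minutes(99.95)
print(f"Weekly downtime budget at 99.95%: {weekly:.2f} minutes")

# The same budget over an assumed 30-day month.
monthly = allowed_downtime_minutes(99.95, period_minutes=30 * 24 * 60)
print(f"30-day downtime budget at 99.95%: {monthly:.1f} minutes")
```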

In the previous section, we deployed our Windows Azure project by using the Windows Azure portal and the Package option in Visual Studio. But what actually happened after uploading the package?

Upgrade domains are groups of nodes that are updated consecutively when there is a new Windows Azure OS version available or when you update your role. As stated before, the Windows Azure SLA is based on having two instances of each distinctive role run in at least two upgrade domains. You can choose to have only one instance of your role running, but this means that on every upgrade (OS, patch, security fix, or role upgrade) that causes a reboot your service will be unreachable.

Organizing your roles in more than one upgrade domain prevents your service from going offline: when one instance is down because of an update, the other one is still running, since it is in a different upgrade domain. The number of upgrade domains your role instances are put in is configurable in the service definition file (ServiceDefinition.csdef) in your solution. By default, the number is five, but you can change this at any time. After you redeploy your service, the Fabric Controller distributes your roles among the number of upgrade domains you defined. During an OS upgrade, the capacity of your service is reduced by one divided by the number of upgrade domains. So, when you have five role instances running in five upgrade domains, your service capacity will be reduced by 20 percent during the whole upgrade process.
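To make the capacity arithmetic concrete, here is a minimal sketch. It assumes that instances are spread over upgrade domains round-robin; this chapter does not describe the Fabric Controller's actual placement algorithm, so treat the policy and the function names as illustrative only.

```python
from collections import defaultdict


def assign_upgrade_domains(instance_count, upgrade_domain_count=5):
    """Spread instances over upgrade domains round-robin (an assumed policy)."""
    domains = defaultdict(list)
    for instance in range(instance_count):
        domains[instance % upgrade_domain_count].append(instance)
    return dict(domains)


def worst_case_capacity_during_upgrade(instance_count, upgrade_domain_count=5):
    """Fraction of instances still running while the fullest domain is upgraded."""
    domains = assign_upgrade_domains(instance_count, upgrade_domain_count)
    largest = max(len(members) for members in domains.values())
    return (instance_count - largest) / instance_count


for count in (1, 2, 5):
    remaining = worst_case_capacity_during_upgrade(count)
    print(f"{count} instance(s): {remaining:.0%} capacity left during an upgrade")
```

With a single instance nothing is left running during the upgrade, which is why the SLA requires at least two instances.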

A fault domain is a physical unit of failure and can be mapped to physical infrastructure. A fault domain can be a complete rack or a single computer, depending on the organization of the datacenter. Fault domains are meant to enhance the fault tolerance of services, keeping your service running at all times, even during a hardware failure in the datacenter. Deploying your services into more than one fault domain will keep your service running even when, for example, a top-of-rack switch breaks down. Fault domains are physically grouped hardware areas inside the datacenter.
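The effect of spreading instances over fault domains can be checked with a few lines of code. This is a hedged sketch: the instance names and the placement dictionary are hypothetical, and the real placement is decided by the platform.

```python
def survivors_after_failure(placement, failed_domain):
    """Return the instances that keep running when one fault domain fails."""
    return [name for name, domain in placement.items() if domain != failed_domain]


def survives_any_single_failure(placement):
    """True if at least one instance stays up whichever fault domain fails."""
    if not placement:
        return False
    return all(
        survivors_after_failure(placement, domain)
        for domain in set(placement.values())
    )


# Hypothetical placements: instance name -> fault domain id.
spread_out = {"web_0": 0, "web_1": 1}
same_rack = {"web_0": 0, "web_1": 0}

print(survives_any_single_failure(spread_out))
print(survives_any_single_failure(same_rack))
```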

The Fabric Controller (FC) acts like the "kernel" for the datacenters. It has two major tasks:

Resource allocation and provisioning of described hardware and network resources (datacenter hardware)

Service lifecycle and health management based on applied service model and binaries (Windows Azure services)

The FC itself is an application running across different fault domains (just like your services) to ensure its availability. The FC runs on several nodes, and only one instance is the primary FC. All other instances are running in sync with the primary one.

The FC is in charge of all the hardware inside the datacenter.

Note

Servers are placed in racks, racks are organized in clusters, and all the clusters together form the datacenter. A cluster contains approximately 1,000 servers.

Before being able to deploy your service on a single (or several) node(s), the FC actually turns on a node. After that the following process takes place:

The node boots from the network using the Preboot Execution Environment (PXE). A maintenance OS image is downloaded to the node, and the node boots into it. The maintenance OS contains a fabric agent, and the FC communicates directly with this agent.

The maintenance OS downloads a VHD with the operating system for the host partition. This OS contains an FC host agent. The maintenance OS then restarts the node, which boots into the operating system for the host partition.

The FC tells the FC host agent how many partitions need to be set up, depending on the deployment request. If a user wants to deploy a multicore VM size, the node can contain fewer instances, since fewer CPU cores remain available. Each partition has a base VHD and a differencing disk. This works much like Hyper-V, since the host runs a version of Hyper-V written for Windows Azure. The guest VHD contains a modified version of Windows Server 2008, so that it can integrate with the Windows Azure hypervisor.

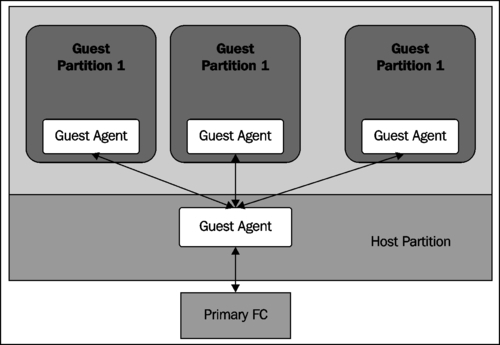

The following figure presents what a node looks like after the partitioning and provisioning of the guest OSs, including the agents that are needed to enable communication between the FC and the guest OSs.

After these steps, the FC can deploy the MVC3 website we created in previous sections.

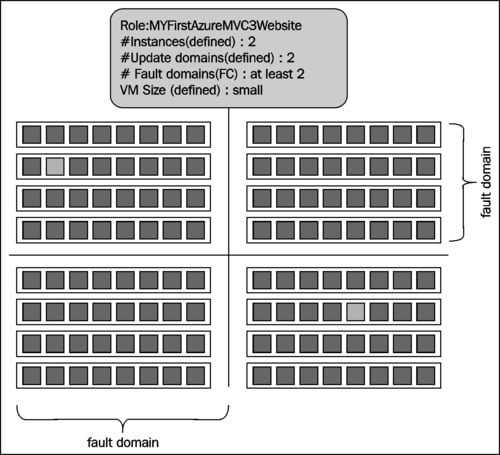

The FC processes the service model you provided during the deployment step. In this case, we told the FC to deploy two instances of our MyFirstAzureMVC3Website role. The VM size is Small, by default. This means 1 CPU core, 1.75 GB of memory, about 230 GB of local storage, and reserved bandwidth of 100 Mbps.

Note

For more information on the characteristics of VM sizes, please visit http://msdn.microsoft.com/en-us/library/windowsazure/ee814754.aspx.

The FC creates two guest partitions, as described in the previous sections, located in two different upgrade and fault domains. The FC then pushes the package (containing the binaries and the configuration file) to the target host agents. Each host agent creates a guest partition that fulfills the service model we provided and starts it. Each guest agent then starts the web role we created and calls the role entry point, which is located in WebRole.cs. From this point, the role reports heartbeats back to the host agent, so that the FC can monitor and maintain the health of the roles. A role without a heartbeat for a period of time is considered unhealthy and is restarted.
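The heartbeat mechanism can be pictured as a simple timeout check. The following sketch is not the Fabric Controller's implementation; the class, the instance names, and the 30-second timeout are assumptions chosen for illustration, since the text only says "a period of time".

```python
class HeartbeatMonitor:
    """Tracks the last heartbeat per role instance and flags the silent ones."""

    def __init__(self, timeout_seconds):
        self.timeout_seconds = timeout_seconds
        self.last_seen = {}

    def record_heartbeat(self, role_instance, now):
        """Remember when this instance last reported in."""
        self.last_seen[role_instance] = now

    def unhealthy_instances(self, now):
        """Instances whose last heartbeat is older than the timeout."""
        return sorted(
            name
            for name, seen in self.last_seen.items()
            if now - seen > self.timeout_seconds
        )


# Timestamps are plain seconds so that the example is deterministic.
monitor = HeartbeatMonitor(timeout_seconds=30)  # assumed timeout
monitor.record_heartbeat("web_0", now=0)
monitor.record_heartbeat("web_1", now=0)
monitor.record_heartbeat("web_0", now=40)  # web_1 has gone silent

for name in monitor.unhealthy_instances(now=45):
    print(f"{name} missed its heartbeat and would be restarted")
```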

The final step is that the FC programs a load balancer (LB) that routes the traffic to our website and divides it between the two role instances. Windows Azure spreads traffic equally across web role instances that are part of the same deployment. Having multiple instances of the same web role enables your website to handle more user traffic. The following figure shows where the instances are copied and run inside the datacenter, bearing in mind the upgrade and fault domains.

Our website is now running, is covered by the 99.95 percent uptime SLA, and remains available even in case of a hardware failure or OS updates initiated by Windows Azure.

Windows Azure is often referred to as a platform, but what is actually inside that platform? As you have seen in the previous sections, Windows Azure offers a place where you can run your website, but during the evolution of the platform, more and more features were added. Besides running a client-facing Internet application, it also offers a place where you can run application code that has no user interface at all (long-running computations or asynchronous tasks). It even offers the possibility of deploying a Windows Server 2008 R2 image to migrate your legacy applications to the cloud and offer the same level of scalability and availability. The underlying infrastructure of every type of role (web, worker, or VM) is a virtual machine that is handled by Windows Azure, which takes care of load balancing and failover. The pricing for every role type is similar and is based on the size of the underlying virtual machine. The details of the pricing models are described in Chapter 7, The Billing Aspect of Windows Azure.

The Windows Azure platform offers three different types of roles:

Web roles

Worker roles

VM roles

This section explains the differences between these different role types.

Web roles run an Internet Information Services web server that can be used to host your frontend web application. It is easy to deploy a web role, and load balancing is included in the offering. You can use both the HTTP and HTTPS protocols.

A worker role is typically used for long-running or asynchronous tasks that require no user input. A common application scenario is a configuration that consists of both web and worker roles, where the web roles are as thin as possible, only handling traffic and being highly responsive to the user. The worker roles take care of the actual work (placing an order, performing a workflow). Queuing mechanisms enable loose coupling and offer you the ability to achieve fine-grained scaling (for example, scaling out only your web roles to enhance responsiveness).
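The web/worker pattern can be sketched with an in-process queue. This is only an analogy under stated assumptions: Python's standard `queue.Queue` stands in for a cloud queue, a thread stands in for a worker role instance, and all function names are hypothetical.

```python
import queue
import threading


def web_role_handle_request(order_queue, order_id):
    """Stay responsive: enqueue the work and return to the user immediately."""
    order_queue.put(order_id)
    return f"order {order_id} accepted"


def worker_role_loop(order_queue, processed):
    """Pull messages and do the slow work until a None sentinel arrives."""
    while True:
        order_id = order_queue.get()
        if order_id is None:
            break
        processed.append(f"order {order_id} placed")


def run_demo():
    order_queue = queue.Queue()
    processed = []
    worker = threading.Thread(target=worker_role_loop, args=(order_queue, processed))
    worker.start()
    responses = [web_role_handle_request(order_queue, i) for i in (1, 2, 3)]
    order_queue.put(None)  # tell the worker to stop
    worker.join()
    return responses, processed


responses, processed = run_demo()
print(responses)
print(processed)
```

Because the two sides only share the queue, either side can be given more instances without changing the other.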

Virtual Machine (VM) roles allow you to deploy your own Windows Server 2008 R2 image to Windows Azure and host it in a hosted service, just like you do with a web or worker role. Applicable scenarios are applications that require OS customizations or native applications running in a standalone fashion. The VM role allows full control of the application environment (for example, registry settings or the old-fashioned .ini files) and enables you to migrate existing applications quickly to Windows Azure and benefit from the PaaS abilities the platform offers. Applications that take a long time to install or that require user input, or applications that are stateless, are suitable candidates to deploy as a VM role. A VM role gives you control over the virtual machine and allows you to build a suitable image from scratch, upload it to Windows Azure, and get it running. You can install software on the VM image and then upload it.

Just like web and worker roles, VM roles benefit from the automation Windows Azure offers, such as load balancing and failover. Full administrator privileges allow you to connect to the VM role and perform OS tweaking and troubleshooting. The cost structure for a VM role is the same as that of web and worker roles, in that you pay by the hour and based on the actual instance size.

Windows Azure offers both a relational Database-as-a-Service feature and a way to synchronize traditional SQL Server databases with SQL Azure databases and vice versa.

Note

The Database-as-a-Service offering used to be called SQL Azure, but in the June 2012 release of Windows Azure, Microsoft decided to rename it to SQL Database.

Windows Azure offers a relational Database-as-a-Service. Windows Azure SQL Database (formerly known as SQL Azure) is a scalable and highly available database service that can be available in just seconds. It is built on top of SQL Server technology and offers (mostly) comparable functionality. The main difference from a developer perspective is that you do not connect to a SQL Server but directly to a database (it is Database-as-a-Service after all). Using SQL Database frees you from installing, configuring, and managing any servers or databases, including mirroring and implementing failover procedures.

SQL Database is still what you expect it to be: a fully relational database system that can be queried by SQL statements (or LINQ, of course). After creating a SQL Database, you are able to reach it not only from your cloud application, but also from your on-premises environment. It fits perfectly into a hybrid or distributed scenario where tiers are spread across on-premises and cloud systems.

Using SQL Database offers the following:

Use the same tools and knowledge you already have, such as Management Studio and T-SQL: There is no need to learn new technologies or APIs to use the full power of SQL Database

The ability to grow in size up to 150 GB: SQL Database is getting more and more enterprise-ready, both in size and in the offered SLAs and high availability

Scale out easily: Using SQL Database Federations drastically simplifies the scaling out to multiple databases to grow beyond the 150 GB limit and to support multi-tenant solutions

Fast creation of databases: Getting a database online is just a click and a few seconds away

Data Sync is a mechanism that provides easy synchronization between SQL Database and on-premises SQL Server databases. Setting up Data Sync is just a matter of configuration and releases you from writing complex database scripts to synchronize and export/import data. Data Sync offers fine-grained control over which tables or columns to synchronize, or even a subset of rows and columns. Combining Data Sync with, for example, Traffic Manager enables you to create geographically distributed applications where both application and data are as close as possible to your customers.

Besides SQL Database, Windows Azure also offers other storage capabilities that are secure, highly scalable, and available.

All data in Windows Azure storage is replicated at least three times in the same datacenter to offer high availability and prevent the loss of data. Windows Azure storage offers:

Blobs: A storage service that enables storing any arbitrary data, such as video or other binaries

Tables: A storage service that enables storing information in a tabular fashion with rows and columns

Queue: A storage service that enables messaging between applications or parts of your application

Windows Azure Drive: A storage service that enables users to mount a Blob as a drive

Binary Large Object (blob) storage is a storage service that allows you to store massive data such as video and audio into the cloud in a logical structure and offers you the same advantages as Windows Azure does with its other services, such as availability, scalability, and redundancy.

Table Storage stores data in a tabular way, with rows and columns. It is possible to store different entities in the same table. Table Storage is a NoSQL store rather than a relational database, so it is not possible to create foreign keys between tables.

Queues provide a way to enable messaging between your services in a reliable way. They can help you build scalable, loosely coupled applications by implementing message-based communication between parts of your application.

Windows Azure Drive allows you to mount a blob as an NTFS VHD, and a drive letter is assigned to it. You can use the traditional I/O APIs (System.IO namespace) to manipulate a Windows Azure Drive.

We will go into much more detail and provide different code examples on how to use Windows Azure Storage in Chapter 4, Storing Your Data.

SQL Azure Reporting allows you to enrich your Windows Azure application with reporting capabilities in the same way as you did before with SQL Server 2008 R2 Reporting Services. This removes the need for on-premises installations of reporting servers but still enables you to create rich reports with tables, charts, and other compelling visualizations, and additionally to scale your reports and benefit from the underlying Windows Azure Platform-as-a-Service capabilities.

SQL Database reporting offers you the ability to:

Benefit from the pay-per-use philosophy that Windows Azure offers

Take advantage of the scalability and high availability the platform offers

Generate reports in multiple file formats, such as Excel, Word, and PDF

Make use of the same tools you already know, such as Business Intelligence Development Studio

Provide access to reports and data in a secure, authenticated, and authorized manner

The Windows Azure platform offers a powerful mechanism to build secure messaging and relay capabilities for your distributed and loosely coupled applications. Applications may be on-premises, in the cloud or hybrid.

Integrate your enterprise application, running in your own datacenters, with applications running on Windows Azure. Use the Service Bus to build applications that can scale out more easily and reduce dependencies between components within your distributed applications.

Service Bus offers brokered messaging, meaning a scalable way to store messages, and the ability to implement a publish/subscribe pattern by using topics and subscriptions, allowing you to publish messages to hundreds of subscribers. Besides brokered messaging, Service Bus also offers relayed messaging, enabling your applications running on Windows Azure to call back to applications running inside your datacenter. It lowers the burden of maintaining NATs and firewalls, keeping you focused on the actual business value of your applications.
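The publish/subscribe idea behind topics and subscriptions can be shown with a small in-memory model. This is a conceptual sketch only; the `Topic` class and its methods are invented for the example and are not the Service Bus API.

```python
from collections import deque


class Topic:
    """In-memory model of a topic: every subscription gets its own copy."""

    def __init__(self):
        self._subscriptions = {}

    def subscribe(self, name):
        """Create a named subscription with an empty backlog."""
        self._subscriptions[name] = deque()

    def publish(self, message):
        """Store the message once per subscription until it is received."""
        for backlog in self._subscriptions.values():
            backlog.append(message)

    def receive(self, name):
        """Return the oldest pending message for a subscription, or None."""
        backlog = self._subscriptions[name]
        return backlog.popleft() if backlog else None


orders = Topic()
orders.subscribe("billing")
orders.subscribe("shipping")
orders.publish("order 42 created")

print(orders.receive("billing"))
print(orders.receive("shipping"))
```

The publisher does not know how many subscribers exist, which is what keeps the components loosely coupled.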

Content delivery networks (CDNs) enable you to move your data close to your clients. There are multiple CDN nodes all over the world, and the CDN caches your data at locations as close to your customers as possible, to enhance performance. CDN can cache static content like pictures, movies, and software, as well as streaming media. CDN can be turned on, both on hosted services and storage accounts. Enabling CDN on your data is just a click away in the Windows Azure portal; see the following screenshot:

Windows Azure offers a caching mechanism that is distributed and in-memory. It is distributed because the physical memory of different servers can be used as a single entity. It is in-memory because the cached items are stored in physical memory only and are never swapped to disk, which keeps performance high.

Using caching can speed up your application and minimize the round trips needed to your database or other stores. Typical candidates for caching are frequently used, read-only data (such as lookup tables), user session data (such as a shopping cart), and typical application data that requires a "singleton" approach.
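The way a cache saves round trips can be illustrated with the cache-aside pattern. The sketch below uses a plain dictionary in place of the distributed cache, and a hypothetical `load_country_name` function in place of a database query; both are assumptions made for the example.

```python
class CacheAside:
    """Look in the cache first and go to the backing store only on a miss."""

    def __init__(self, load_from_store):
        self._load_from_store = load_from_store
        self._cache = {}
        self.store_round_trips = 0

    def get(self, key):
        if key not in self._cache:
            self.store_round_trips += 1  # cache miss: one trip to the store
            self._cache[key] = self._load_from_store(key)
        return self._cache[key]


# Hypothetical lookup table standing in for a database query.
COUNTRIES = {"NL": "Netherlands", "US": "United States"}


def load_country_name(code):
    return COUNTRIES[code]


lookup = CacheAside(load_country_name)
for code in ("NL", "NL", "US", "NL"):
    lookup.get(code)

print(f"4 lookups needed {lookup.store_round_trips} round trips to the store")
```

Read-only lookup data is a good fit because the cached copy never goes stale.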

Virtual network offers networking capabilities that help you migrate and integrate applications and release you from the plumbing burden of low-level networking issues.

Virtual network consists of two major concepts:

Windows Azure Connect

Traffic Manager

New features were added in June 2012 and are mentioned in Chapter 11, What's New in Windows Azure.

Windows Azure Connect (WAC) enables you to create network connectivity between applications running on Windows Azure and resources in your own datacenter. Setting up a secure, IPSec-based connection between Windows Azure roles (web, worker, or VM) and your on-premises machines requires an agent to be installed on each local machine that needs to be reached from the cloud. This mechanism does not require any changes to your network topology. Consider WAC as being a VPN, not on a gateway level but on a machine level.

Windows Azure Traffic Manager (WATM) enables fine-grained control on load balancing incoming traffic to multiple hosted services. By default, Windows Azure load balances all incoming web requests equally over the role instances. WATM enables load balancing across different hosted services with different DNS names. This can enhance performance (reducing network latency, but increasing the necessary hops) and availability by setting up "standby" hosted services that handle incoming requests if your primary hosted service is completely down.

By managing the traffic to your services, it is possible to ensure high performance, availability, and robustness of your services. WATM also provides a failover mechanism: when it detects that one of your services is down, it reroutes traffic to the next closest (configured) service.

WATM policies can be set up on the Windows Azure portal very quickly and offer you three types of load balancing methods:

Based on performance: WATM directs traffic to the hosted service with the least internet latency. Remember that this is only applicable if your hosted service is deployed in multiple Windows Azure regions.

Based on failover: Traffic is directed to one single hosted service, but when the WATM is unable to detect a "heartbeat", it changes the DNS records and redirects all the traffic to the hosted service next in line. When this phenomenon occurs, your application will be unavailable for a few minutes, since the WATM needs to detect the heartbeat failure and update the DNS records.

Based on round robin: The WATM uses a round-robin algorithm to equally spread traffic among every hosted service configured in the policy, as defined in the Windows Azure portal. When a listed hosted service is unavailable (no heartbeat), it is automatically removed from the round-robin list.
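The three methods differ only in how the next hosted service is chosen. The sketch below models that choice; it is a simplification under stated assumptions, since the real WATM works by changing DNS records, and the service names, latencies, and health flags are hypothetical.

```python
def pick_by_performance(services):
    """Choose the healthy hosted service with the lowest latency."""
    healthy = [s for s in services if s["healthy"]]
    if not healthy:
        return None
    return min(healthy, key=lambda s: s["latency_ms"])["name"]


def pick_by_failover(services):
    """Choose the first healthy hosted service in the configured order."""
    for service in services:
        if service["healthy"]:
            return service["name"]
    return None


class RoundRobinPicker:
    """Rotate over the healthy hosted services, skipping unhealthy ones."""

    def __init__(self):
        self._next = 0

    def pick(self, services):
        healthy = [s for s in services if s["healthy"]]
        if not healthy:
            return None
        choice = healthy[self._next % len(healthy)]
        self._next += 1
        return choice["name"]


# Hypothetical policy: the second service currently has no heartbeat.
policy = [
    {"name": "west-europe", "latency_ms": 30, "healthy": True},
    {"name": "north-europe", "latency_ms": 20, "healthy": False},
    {"name": "east-us", "latency_ms": 90, "healthy": True},
]

print(pick_by_performance(policy))
print(pick_by_failover(policy))
picker = RoundRobinPicker()
print([picker.pick(policy) for _ in range(3)])
```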

Windows Azure Active Directory (WAAD) is a cloud service that offers identity and access functionality to Windows Azure applications, on-premises applications, applications in a hybrid scenario, and even Microsoft Office 365, the online SaaS equivalent of Microsoft Office. WAAD relies on the proven technology and capabilities of Active Directory, so that migrating your application to the cloud can be made easier.

Access Control Services (ACS) can offer end users a single sign-on experience across all cloud and hybrid applications. You can move your authentication and authorization logic away from your core applications and make it highly configurable by moving it to ACS. ACS is built on open industry standards, such as WS-Trust, WS-Federation, SAML 2.0, and Simple Web Token, allowing applications written in non-Microsoft programming languages to access the WAAD capabilities as well. An Internet portal is available, allowing you to set up authentication and authorization rules outside the boundaries of your application.

ACS offers you:

Single sign-on capabilities: Moving away from custom identity stores and authorization logic enables you to focus on the core functionality of your application.

Interoperability with your corporate Active Directory via ADFS: Allowing you to deploy, for example, your intranet applications to cloud and still maintain the single sign-on experience.

Incorporation of identity providers: Enabling you to make use of popular identity providers such as Facebook, Google, Yahoo!, and Windows Live ID. A detailed example of using Facebook as an identity provider is given in Chapter 6, Key Features Explained.

Windows Azure Marketplace works like an app store, giving you access to SaaS applications and datasets. You can also offer your own applications on the marketplace and open up a global market for your product and/or datasets. Windows Azure Marketplace allows you to:

Access datasets and services from third parties: Security, audit trails (who accessed what resource at which time), billing, and authentication are handled by the Marketplace and let you focus on your application. Open standards such as OAuth and OData allow you to reach beyond platform borders.

Quickly monetize your SaaS solution or datasets and enable financial transactions all over the world in different currencies: Create trial offers and terms of use, and report on usage, traffic, and sales.

Search for data or services that suit your needs.

This chapter introduced Windows Azure, the cloud offering from Microsoft. It described the author's first contact with "cloud" in general and the history of the platform, and how Microsoft decided to put a great amount of effort in realizing Windows Azure.

A first deployment of an MVC3 website to Windows Azure was demonstrated. After the first deployment, we took a deep dive into the internals of Windows Azure and saw in detail how the platform actually works and how availability and fault tolerance are maintained.

The final sections covered the core concepts of Windows Azure, giving a high-level description of the different features and offerings.

The next chapter describes a fictitious startup company with a new idea for social networking. Throughout this book, this next-gen social network scenario will be used for code snippets and to show how different Windows Azure features apply to it.