In this chapter, we will cover the following:

Configuring host prerequisites

Installing MariaDB database

Installing RabbitMQ

Installing Keystone – Identity service

Generating and configuring token PKI

Installing Glance – images service

Installing Nova – Compute service

Installing Neutron networking service

Configuring Neutron network node

Configuring compute node for Neutron

Installing Horizon – web user interface dashboard

OpenStack is cloud operating system software that allows running and managing Infrastructure as a Service (IaaS) clouds on standard commodity hardware. OpenStack is not an operating system of its own that manages bare metal machines; rather, it is a stack of open source software projects that run on top of the Linux operating system. Each project usually consists of several components that run as Linux services on top of the operating system.

OpenStack lets users rapidly deploy instances of virtual machines or Linux containers on the fly. These instances run different kinds of workloads, which either serve public online services or are deployed privately on a company's premises. In some cases, workloads run both in a private environment and on a public cloud, creating a hybrid cloud model.

OpenStack has a modular architecture. Projects are constructed of functional components, and each project has several components that together provide that project's specific functionality. An API component exposes the project's capabilities, functionality, and the objects it manages via a standard HTTP RESTful API, so that it can be consumed as a service by other services and users.

The components are responsible for managing and maintaining services, while the actual implementation of the services leverages existing technologies through mechanisms such as backend drivers.

Basically, OpenStack is an upper layer management system that leverages existing underlying technologies and presents a standard API layer for services to interconnect and interact.

The computing industry, and information technology in particular, made major progress over the past 20 years toward distributed systems that use common standards. OpenStack is another step forward: it introduces a new standard that lets technologies, which progressed separately over the past two decades, interconnect in an industry-standard manner.

An OpenStack environment consists of projects that provide their functionality as services. Each project is designed to provide a specific function. The core projects provide the services required in most OpenStack environments; in most use cases, an OpenStack environment cannot run without them. Additional projects are optional; they add value by providing or extending certain functionality of the cloud.

Core services provided by OpenStack projects are as follows:

Keystone authenticates and authorizes commands and requests.

Neutron is the project that provides networking for the instances as a service. Older releases used the Nova-network service, which is part of the Nova project, to provide network connectivity to instances, but Nova-network is being deprecated in favor of Neutron.

Optional services provide additional functionality but are not considered necessary in every OpenStack environment:

The complete list of additional projects is growing rapidly, and every new release has several new projects.

All services use a database service, usually MariaDB, to store persistent data, and a message broker, most commonly a RabbitMQ server, for internal communication between service components.

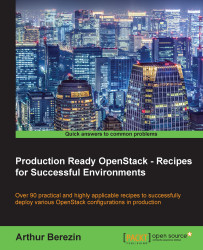

To better understand this design concept, let's explore one project. Nova is the project that manages compute resources; it is responsible for launching and managing virtual machine instances. Nova is implemented as several components that run as Linux services. The Nova-Compute Linux service is responsible for launching VM instances. It does not implement a hypervisor itself; rather, it uses a virtualization technology as a backend mechanism, in most cases Kernel-based Virtual Machine (KVM) with the libvirt driver, to launch KVM instances.

The Nova API Linux service exposes Nova's capabilities via a RESTful API and allows launching new instances using standard RESTful API calls. To launch a new instance, Nova needs a base image to boot from, so Nova makes an API call to Glance, the project responsible for serving images.

OpenStack consists of several core projects (Nova for compute, Glance for images, Cinder for block storage, Keystone for identity, and Neutron for networking) and additional optional services. Each project exposes all of its capabilities via a RESTful API. Services communicate with each other over RESTful API calls: when a service requires resources from another service, it makes a RESTful API call to query that service's capabilities, list its resources, or request a certain action.
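To make the RESTful interaction concrete, the following Python sketch prepares (but does not send) the kind of HTTP request one service could issue to another, here listing images from Glance. It assumes the Glance v2 images API at http://10.10.0.1:9292, the controller address used later in this chapter, and a placeholder token; the helper name build_image_list_request is hypothetical and not part of any OpenStack library.

```python
import urllib.request


def build_image_list_request(glance_endpoint: str, token: str) -> urllib.request.Request:
    """Prepare a GET request for the Glance v2 image list (hypothetical helper).

    The Keystone-issued token travels in the X-Auth-Token header.
    """
    url = glance_endpoint.rstrip("/") + "/v2/images"
    return urllib.request.Request(url, headers={"X-Auth-Token": token}, method="GET")


# Placeholder endpoint and token; nothing is sent over the network here.
req = build_image_list_request("http://10.10.0.1:9292", "example-token")
print(req.get_method(), req.full_url)  # GET http://10.10.0.1:9292/v2/images
```

Passing the request to urllib.request.urlopen would send it, which requires a running Glance service and a valid Keystone token.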

Every OpenStack project consists of several components. Each component fulfills a certain functionality or performs a certain task. The components are standard POSIX Linux services, which communicate with the other components of the same project through a message broker server, RabbitMQ in most cases. The services save their persistent data and object states in a database.

All OpenStack services use this modular design. Each service has a component that is responsible for receiving API calls, while other components are responsible for performing actions, for example, launching a virtual machine or creating a volume, weighing filters and scheduling, or other tasks that are part of the project's functionality.

This design makes OpenStack highly modular; the components of each project can be installed on separate hosts while communicating via the message broker. There is no single layout that fits every OpenStack use case, but a few layouts are commonly used. Some layouts are easier to manage, some focus on scaling compute resources, and others focus on scaling object storage, each with its own benefits and drawbacks.
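The decoupling that a message broker provides can be illustrated with a small in-process analogy. In this Python sketch, a queue.Queue stands in for a RabbitMQ queue: a hypothetical API component only enqueues a request, and a separate worker thread consumes and processes it. This is a conceptual model only; real OpenStack services exchange messages over an actual broker through the oslo.messaging library.

```python
import queue
import threading

# In-process stand-in for a broker queue (RabbitMQ in a real deployment).
broker = queue.Queue()
results = []


def api_component(instance_name: str) -> None:
    """Accept a request and hand it to the broker instead of doing the work."""
    broker.put({"action": "launch_instance", "name": instance_name})


def compute_worker() -> None:
    """Consume messages from the broker and perform the requested action."""
    while True:
        message = broker.get()
        if message is None:  # sentinel value: stop the worker
            broker.task_done()
            break
        results.append(f"launched {message['name']}")
        broker.task_done()


worker = threading.Thread(target=compute_worker)
worker.start()
api_component("vm-1")
api_component("vm-2")
broker.put(None)
worker.join()
print(results)  # ['launched vm-1', 'launched vm-2']
```

Because the API side never waits for the worker, either side could run on a different host, which is what makes the multi-node layouts described here possible.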

In the all-in-one layout, all of OpenStack's services and components, including the database, the message broker, and the Nova-Compute service, are installed on a single host. The all-in-one layout is mostly used for testing OpenStack or for running proof-of-concept environments to evaluate functionality. While Nova-Compute nodes can be added for additional compute scalability, this layout introduces risks, because a single node serves as the management plane, the storage pool, and compute.

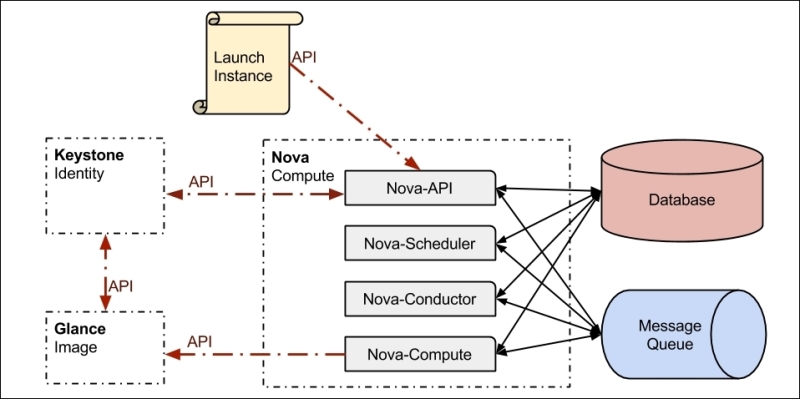

In the controller-computes layout, all API services and components responsible for OpenStack management are deployed on a single node, named the OpenStack controller node. This includes Keystone for identity, Glance for instance images, Cinder for block devices, Neutron networking, Nova management, the Horizon dashboard, the message broker, and database services. All networking services (the L3 agent, the Open vSwitch (L2) agent, the DHCP agent, and the metadata service) are installed on the Neutron network node. Compute nodes run the Nova-Compute services that are responsible for running the instances, as shown in the following chart:

This layout allows the compute resources to scale out to multiple nodes while keeping all management and API components on a single control plane. The controller is easy to maintain and manage, as all API interfaces are on a single node. The Neutron network node manages all layer 2 and layer 3 networks, virtual subnets, and virtual routers. It is also responsible for routing traffic to external networks, which are not managed by Neutron.

Typically, large-scale environments run under heavy loads, running a large number of instances and handling a large number of simultaneous API calls. To handle such high loads, it is possible to deploy the services in a distributed layout, where every service is installed on its own dedicated node, and every node can individually scale out to additional nodes according to the load of each component. In the following diagram, all services are distributed based on core functionality, and all Nova management services are installed on dedicated nodes:

Project Tuskar aims to become the standard way to deploy OpenStack environments. While the project has started to gain momentum in recent release cycles, the need to deploy OpenStack in a standard, predictable manner has existed since OpenStack's early days. This need brought various deployment tools into use.

The OpenStack projects' source code is available on GitHub, and the different distributions compile the source code and ship it as packages: RPMs for Red Hat-based operating systems and .deb files for Ubuntu-based systems. One way to deploy OpenStack is to install the distribution packages for a chosen deployment layout and manually configure all the services and components needed for a fully operational OpenStack environment. This manual process is fairly complex and requires familiarity with all the basic configuration OpenStack needs to function.

This chapter focuses on deploying packages and manually configuring the services, as this is a good way to become familiar with all the basic configurations and to get ready for more advanced OpenStack configurations.

Configuring OpenStack services manually is a complex task that requires editing many files and configuring many dependent services; as such, it is a very error-prone process. A common way to automate this process is to use configuration management tools, such as Puppet, Chef, or Ansible, to install OpenStack packages and automate all the configuration OpenStack needs to operate. There is a large community developing open source Puppet modules and Chef cookbooks to deploy OpenStack, which are available on GitHub.

PackStack is a utility that uses Puppet modules to deploy OpenStack automatically on multiple preinstalled nodes. It requires neither Puppet skills nor familiarity with OpenStack configuration. Installing OpenStack using PackStack is fairly simple: all it requires is to run packstack --gen-answer-file=/path/to/packstack_answers.txt to generate an answers file that describes the deployment layout, and then to initiate the deployment with packstack --answer-file=/path/to/packstack_answers.txt.

The project Staypuft is an OpenStack deployment tool, which is based on Foreman, a robust and mature life cycle management tool. Staypuft includes a user interface designed specifically to deploy OpenStack and uses supporting Puppet modules. It also includes a discovery tool to easily add and deploy new hardware, and it can deploy the controller node with high availability configuration for production use.

Staypuft makes it easy to install OpenStack, with a lower learning curve than managing Puppet modules manually, and it is more robust than PackStack. Chapter 2, Deploying OpenStack Using Staypuft OpenStack Installer, will describe how to install a new OpenStack environment using Staypuft.

This chapter covers the manual installation of OpenStack from RDO distribution packages and the manual configuration of all basic OpenStack services. As mentioned earlier, manually installing OpenStack is not the optimal way to set up an OpenStack environment, as it involves numerous manual steps that are not easily reproducible and very error-prone, but the manual process is a great way to get familiar with all OpenStack internal components.

The following diagram describes a high-level design of most OpenStack services and outlines the steps needed for configuring an OpenStack service:

A basic OpenStack service configuration includes configuring the service to use Keystone as its authentication strategy for authenticating and authorizing incoming API calls, a database connection in which the service stores metadata about the objects it manages, and the message broker, which the service's Linux services use to communicate with each other.

The most important, and usually the most complex, part is configuring the service to use a backend for its core functionality. For example, Nova, which launches virtual machines, can use libvirtd as a backend service provider that actually launches KVM virtual machines on the local Linux node. Another good example is Keystone, which can be configured to use a Lightweight Directory Access Protocol (LDAP) server as a backend to store user credentials instead of storing them in the SQL database.

Over the course of this chapter, we will install and configure OpenStack using RDO distribution packages of the Kilo release on top of the CentOS 7.0 Linux operating system. We will deploy the controller-Neutron-computes layout with a single controller node, one Neutron network node, and one compute node. Additional compute nodes can easily be added to the environment by following the same steps used to install the compute node.

Operating system: CentOS 7.0 or newer

OpenStack distribution: RDO kilo release

Architecture layout: controller-Neutron-computes

Every service that we install and configure while following this chapter will require its own database user account, and a Keystone user account. It is highly recommended for security reasons to choose a unique password for each account. For ease of deployment, it is recommended to maintain a password list as in the following table:

| Database accounts | Password |
|---|---|
| | |

| Keystone accounts | Password |
|---|---|
| | |
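One way to fill in such a password list is to generate a unique random password for every account. The following Python sketch uses the standard secrets module; the account names are examples taken from this chapter and should be extended as more services are deployed. Restricting the alphabet to letters and digits is an assumed convenience, chosen to avoid quoting problems when passwords are embedded in shell commands and database connection strings.

```python
import secrets
import string

# Letters and digits only, so passwords need no escaping in shell or URLs.
ALPHABET = string.ascii_letters + string.digits


def generate_password(length: int = 16) -> str:
    """Return a random password drawn from ALPHABET."""
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


# Example account names from this chapter; extend as services are added.
database_accounts = ["root", "keystone", "glance_db_user"]
keystone_accounts = ["admin", "glance"]

password_list = {
    "database": {name: generate_password() for name in database_accounts},
    "keystone": {name: generate_password() for name in keystone_accounts},
}

for category, accounts in password_list.items():
    for name, password in accounts.items():
        print(f"{category:10} {name:16} {password}")
```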

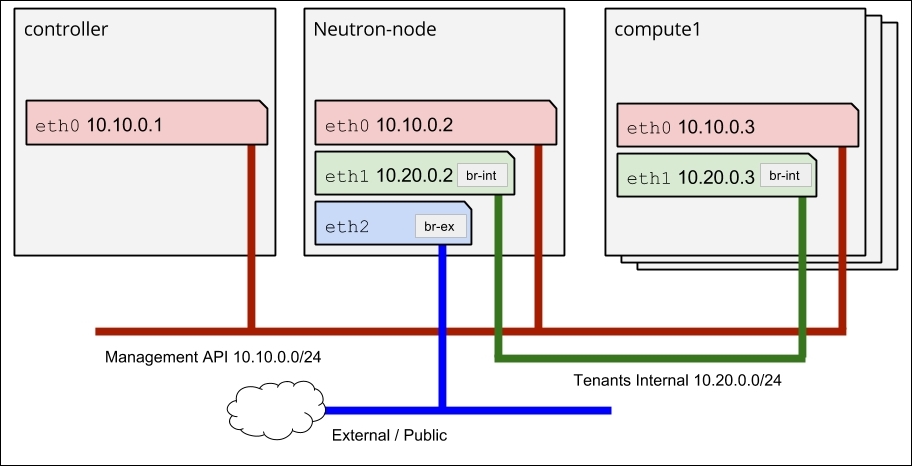

This chapter focuses on the controller-Neutron-computes topology layout. Before starting the installation of packages, we need to ensure that network interfaces are correctly wired and configured.

All nodes in our environment use eth0 as the management interface; the controller node exposes OpenStack's APIs via eth0. The Neutron network node and the compute nodes use eth1 for the tenant network, which Neutron uses to route traffic between instances. The Neutron network node uses eth2 to route traffic from instances to the public network, which could be the organization's IT network or a publicly accessible network, as shown in the following diagram:

Note

The Neutron service configuration later in this chapter, and Chapter 7, Neutron Networking Service, further discuss creating the bridges that Neutron needs, br-int and br-ex.

All hostnames should resolve to their management network IP addresses.

| Role | Host Name | NICs |
|---|---|---|
| Controller node | | eth0 |
| Neutron network node | | eth0, eth1, eth2 |
| Compute node | | eth0, eth1 |
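To check name resolution consistently across nodes, the hosts entries can be verified programmatically. The following Python sketch parses /etc/hosts-style text and reports any hostname that does not map into the management network. The subnet 10.10.0.0/24 and the network and compute1 entries are hypothetical; only the controller address 10.10.0.1 appears in this chapter.

```python
import ipaddress

# Hypothetical /etc/hosts entries; only 10.10.0.1 (controller) comes from this chapter.
HOSTS_FILE = """
10.10.0.1   controller
10.10.0.2   network
10.10.0.3   compute1
"""

MANAGEMENT_NET = ipaddress.ip_network("10.10.0.0/24")  # assumed management subnet


def parse_hosts(text: str) -> dict:
    """Map each hostname to its IP address, skipping blank lines and comments."""
    mapping = {}
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()
        if not line:
            continue
        address, *names = line.split()
        for name in names:
            mapping[name] = ipaddress.ip_address(address)
    return mapping


def off_management_network(mapping: dict) -> list:
    """Return hostnames whose address lies outside the management network."""
    return [name for name, ip in mapping.items() if ip not in MANAGEMENT_NET]


hosts = parse_hosts(HOSTS_FILE)
print(off_management_network(hosts))  # []
```

On a real node, socket.gethostbyname(name) returns the address the resolver actually uses, which can be compared against the management network in the same way.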

Every host running OpenStack services should have the following prerequisite configurations to successfully deploy OpenStack.

To successfully install OpenStack, every host needs a few configuration steps. Every host needs the RDO yum repository configured, from which we are going to install the OpenStack packages. This can be done either by manually configuring the yum repository file /etc/yum.repos.d/OpenStack.repo or by installing the repository package directly from RDO.

In addition, every node needs to enable firewalld service, enable SELinux and install OpenStack SELinux policies, enable and configure NTP, and also install the OpenStack utils package.

Perform the following steps to install and configure OpenStack prerequisites:

To install the OpenStack RDO distribution, we need to add RDO's yum repository on all nodes, as well as the EPEL yum repository for additional required packages:

Install the yum-plugin-priorities package, which enables repository priority management in yum:

# yum install yum-plugin-priorities -y

Install the rdo-release package, which configures the RDO repositories in /etc/yum.repos.d:

# yum install -y https://rdoproject.org/repos/rdo-release.rpm

Install the epel-release package, which configures the EPEL repositories in /etc/yum.repos.d:

# yum install -y epel-release

The default netfilter firewall service in CentOS 7.0 is firewalld. For security reasons, we need to make sure that the firewalld service is running and enabled, so that it starts after a reboot:

Start the firewalld service:

# systemctl start firewalld.service

Enable the firewalld service so that it also starts after a host reboot:

# systemctl enable firewalld.service

The openstack-utils package provides utilities that ease OpenStack configuration and the management of OpenStack services. It includes the following utilities:

/usr/bin/openstack-config: Manipulates OpenStack configuration files

/usr/bin/openstack-db: Creates databases for OpenStack services

/usr/bin/openstack-service: Controls enabled OpenStack services

/usr/bin/openstack-status: Shows a status overview of installed OpenStack services

Install openstack-utils package:

# yum install openstack-utils
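The openstack-config --set command used throughout this chapter edits INI-style files by section and key. The following Python sketch with configparser illustrates what such an operation does; it is an illustration under that assumption, not the implementation of openstack-config, and unlike dedicated tools it drops comments when it rewrites a file. The file path and values are placeholders.

```python
import configparser
import os
import tempfile


def _load(path: str) -> configparser.ConfigParser:
    parser = configparser.ConfigParser(interpolation=None)  # values may contain '%'
    parser.optionxform = str  # keep option names exactly as written
    parser.read(path)  # a missing file is treated as empty
    return parser


def config_set(path: str, section: str, key: str, value: str) -> None:
    """Set section/key to value, similar in spirit to
    `openstack-config --set path section key value`.

    Caveat: configparser does not preserve comments when rewriting the file.
    """
    parser = _load(path)
    if section != "DEFAULT" and not parser.has_section(section):
        parser.add_section(section)
    parser.set(section, key, value)
    with open(path, "w") as handle:
        parser.write(handle)


def config_get(path: str, section: str, key: str) -> str:
    """Read a single value back from the file."""
    return _load(path).get(section, key)


# Placeholder file in a temporary directory, not a real /etc path.
path = os.path.join(tempfile.mkdtemp(), "keystone.conf")
config_set(path, "DEFAULT", "admin_token", "0123456789abcdef0123")
config_set(path, "database", "connection", "mysql://keystone:secret@10.10.0.1/keystone")
print(config_get(path, "database", "connection"))
```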

It is highly recommended to ensure that SELinux is enabled and in enforcing mode. The openstack-selinux package adds SELinux policy modules for OpenStack services.

To ensure that SELinux is enforcing, run the getenforce command:

# getenforce

The output should be Enforcing.

Install the openstack-selinux package:

# yum install openstack-selinux

OpenStack services are deployed over multiple nodes. For the services to stay synchronized, all nodes running OpenStack need synchronized system clocks, and the NTP service can be used for this:

Install the ntp package, which provides the ntpd service:

# yum install ntp

Start and enable ntpd:

# systemctl start ntpd

# systemctl enable ntpd
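NTP estimates how far a node's clock is from a time server using the four timestamps of one request and response exchange. The following Python sketch applies the standard NTP clock offset and round-trip delay formulas; the timestamps are made-up values chosen so that the client clock is 0.5 seconds behind the server.

```python
def ntp_offset_and_delay(t0: float, t1: float, t2: float, t3: float) -> tuple:
    """Return (offset, round_trip_delay) in seconds using the NTP formulas.

    t0: client transmit time (client clock)
    t1: server receive time (server clock)
    t2: server transmit time (server clock)
    t3: client receive time (client clock)
    """
    offset = ((t1 - t0) + (t2 - t3)) / 2
    delay = (t3 - t0) - (t2 - t1)
    return offset, delay


# Illustrative values: client clock 0.5 s behind the server, 0.1 s network
# latency in each direction, and 0.02 s of processing on the server.
offset, delay = ntp_offset_and_delay(t0=100.0, t1=100.6, t2=100.62, t3=100.22)
print(round(offset, 3), round(delay, 3))  # 0.5 0.2
```

A positive offset means the local clock is behind the server; ntpd gradually corrects this so that all nodes converge on the same time.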

Most OpenStack projects and their components keep their persistent data and objects' status in a database. MySQL and MariaDB are the most used and tested databases with OpenStack. In our case, and in the most commonly deployed layout, controller-Neutron-compute, the database is installed on the controller node.

Run the following commands on the controller node!

Proceed with the following steps:

Install the MariaDB packages:

[root@controller ~]# yum install mariadb-galera-server

Yum might install additional packages after resolving MariaDB's dependencies. A successful installation should output something like the following:

Installed:
  mariadb-galera-server.x86_64 1:5.5.37-7.el7ost
Dependency Installed:
  mariadb.x86_64 1:5.5.37-1.el7_0
  mariadb-galera-common.x86_64 1:5.5.37-7.el7ost
  mariadb-libs.x86_64 1:5.5.37-1.el7_0
  perl-DBD-MySQL.x86_64 0:4.023-5.el7
Complete!

Start the MariaDB database service using the systemctl command as root:

[root@controller ~]# systemctl start mariadb.service

If no output is returned, the command completed successfully.

Enable it, so that it starts automatically after a reboot:

[root@controller ~]# systemctl enable mariadb.service

MariaDB maintains its own user accounts and passwords; root is the default administrative account that MariaDB uses. We should set a new password for the root account, as keeping the default (empty) password is a major security threat.

Change the database root password as follows, where new_password is the password we want to set:

[root@controller ~]# mysqladmin -u root password new_password

Keep this password in the password list; we will need it to create databases for the services we deploy in the following parts of the chapter.

OpenStack components use a message broker to communicate with each other. Red Hat-based operating systems (for example, RHEL, CentOS, and Fedora) can run either the RabbitMQ or the Qpid message broker. Both provide roughly similar performance, but as RabbitMQ is the more widely used message broker with OpenStack, we are going to use it for our OpenStack environment.

Run the following commands on the controller node!

Install RabbitMQ from the yum repository:

[root@controller ~]# yum install rabbitmq-server -y

RabbitMQ is written in Erlang and will probably bring some Erlang dependency packages along.

To start the RabbitMQ Linux service, start the service named rabbitmq-server:

[root@controller ~]# systemctl start rabbitmq-server.service

Now enable it, to make sure that it starts on system reboot:

[root@controller ~]# systemctl enable rabbitmq-server.service

RabbitMQ maintains its own user accounts and passwords. By default, a user named guest is created with the default password guest. As keeping a default password is a major security concern, we should change it. We can use the rabbitmqctl command to change the guest account's password:

[root@controller ~]# rabbitmqctl change_password guest guest_password
Changing password for user "guest" ...
...done.

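We need to allow the other services to access the message broker through the firewall, using the firewall-cmd command. The --permanent option only updates the permanent configuration, so we also reload the firewall to apply the rule to the running configuration:

[root@controller ~]# firewall-cmd --add-port=5672/tcp --permanent
success

[root@controller ~]# firewall-cmd --reload
success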

The Keystone project provides identity as a service for all OpenStack services and components. It is responsible for authenticating users and authorizing access to OpenStack components. For example, if a user would like to launch a new instance, Keystone is responsible for making sure that the user account that issued the launch command is a known, authenticated account and that the account has permission to launch the instance.

Keystone also provides a service catalog of the services that an OpenStack environment offers; users and other services can query Keystone for the services of a particular OpenStack environment. For each service, Keystone returns an endpoint, which is a network-accessible URL from which users and services can access that service.

In this chapter, we are going to configure Keystone to use MariaDB as its backend data store, which is the most common configuration. Keystone can also use user account details stored on an LDAP server or in Microsoft Active Directory, which will be covered in Chapter 4, Keystone Identity Service.

Before installing and configuring Keystone, we need to prepare a database for Keystone to use, configure its user permissions, and open the needed firewall ports so that other nodes can communicate with it. Keystone is usually installed on the controller node as part of OpenStack's control plane.

Run the following commands on the controller node!

To create a database for Keystone, use the mysql command to access the MariaDB instance. It will ask you to type the password you selected for the MariaDB root user:

[root@controller ~]# mysql -u root -p

Create a database named keystone:

MariaDB [(none)]> CREATE DATABASE keystone;

Create a user account named keystone and grant it access to the keystone database from any host, using your chosen password instead of 'my_keystone_db_password':

MariaDB [(none)]> GRANT ALL ON keystone.* TO 'keystone'@'%' IDENTIFIED BY 'my_keystone_db_password';

Grant the keystone user account the same access to the keystone database when connecting from localhost:

MariaDB [(none)]> GRANT ALL ON keystone.* TO 'keystone'@'localhost' IDENTIFIED BY 'my_keystone_db_password';

Flush database privileges to ensure that they take effect immediately:

MariaDB [(none)]> FLUSH PRIVILEGES;

At this point, you can exit the MySQL client:

MariaDB [(none)]> quit
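The same create-and-grant pattern repeats for every service that needs a database. The following Python sketch, built around a hypothetical helper named service_db_statements, only produces the SQL statements used above for a given database, user, and password; it does not connect to MariaDB. It rejects passwords containing quotes or backslashes, which would otherwise break the SQL string literals.

```python
def service_db_statements(database: str, user: str, password: str) -> list:
    """Return SQL that creates a service database and grants a user access
    from any host ('%') and from localhost, followed by FLUSH PRIVILEGES."""
    if "'" in password or "\\" in password:
        raise ValueError("choose a password without quotes or backslashes")
    statements = [f"CREATE DATABASE {database};"]
    for host in ("%", "localhost"):
        statements.append(
            f"GRANT ALL ON {database}.* TO '{user}'@'{host}' IDENTIFIED BY '{password}';"
        )
    statements.append("FLUSH PRIVILEGES;")
    return statements


for statement in service_db_statements("keystone", "keystone", "my_keystone_db_password"):
    print(statement)
```

The printed statements could be pasted into the mysql client or piped into mysql -u root -p.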

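The Keystone service uses port 5000 for public access and port 35357 for administration. Open both ports in the firewall, and reload the firewall so that the permanent rules also take effect in the running configuration:

[root@controller ~]# firewall-cmd --add-port=5000/tcp --permanent

[root@controller ~]# firewall-cmd --add-port=35357/tcp --permanent

[root@controller ~]# firewall-cmd --reload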
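Once the ports are open, reachability can be tested from another node. This Python sketch attempts a TCP connection to each port with socket.create_connection; the controller address and ports are the ones used in this chapter, and the helper names port_open and check_controller are hypothetical. A successful TCP connection only shows that the port is reachable, not that the service behind it is healthy.

```python
import socket

# Controller address and ports opened in this chapter.
CONTROLLER = "10.10.0.1"
PORTS = {"rabbitmq": 5672, "keystone-public": 5000, "keystone-admin": 35357}


def port_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, unreachable, or timed out
        return False


def check_controller() -> dict:
    """Map each service name to whether its port on the controller is reachable."""
    return {name: port_open(CONTROLLER, port) for name, port in PORTS.items()}
```

Running check_controller() from the Neutron network node or a compute node should report True for every entry; a False value points to a missing or unloaded firewall rule, or to a service that is not running.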

Proceed with the following steps:

By now, all of OpenStack's prerequisites, including a database service and a message broker, should be installed and configured. Keystone is the first OpenStack service we install: we need to install the package, configure it, and then start and enable the service.

Install keystone package using yum command as follows:

[root@controller ~]# yum install -y openstack-keystone

This will also install Python supporting packages and additional packages for more advanced backend configurations.

Keystone's database connection string is set in /etc/keystone/keystone.conf; we can use the openstack-config command to configure it.

Run the openstack-config command with your chosen keystone database user details and the database IP address:

[root@controller ~]# openstack-config --set /etc/keystone/keystone.conf sql connection mysql://keystone:'my_keystone_db_password'@10.10.0.1/keystone

After the database connection is configured, we can create the Keystone database tables using the db_sync command:

[root@controller ~]# su keystone -s /bin/sh -c "keystone-manage db_sync"
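If a database password contains characters such as @, :, or /, a connection string in the form used above no longer parses correctly. The following Python sketch builds a URL in the same dialect://user:password@host/database form and percent-encodes the credentials; the password p@ss:word and the helper name db_connection_url are hypothetical. SQLAlchemy, which OpenStack services use for database access, decodes percent-encoded credentials in such URLs.

```python
from urllib.parse import quote, unquote, urlsplit


def db_connection_url(user: str, password: str, host: str, database: str,
                      dialect: str = "mysql") -> str:
    """Build dialect://user:password@host/database with the credentials
    percent-encoded, so '@', ':' or '/' in them cannot break parsing."""
    return f"{dialect}://{quote(user, safe='')}:{quote(password, safe='')}@{host}/{database}"


url = db_connection_url("keystone", "p@ss:word", "10.10.0.1", "keystone")
print(url)  # mysql://keystone:p%40ss%3Aword@10.10.0.1/keystone

# Round trip: the encoded password decodes back to the original value.
print(unquote(urlsplit(url).password))  # p@ss:word
```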

Before starting the Keystone service, we need to make some initial service configurations for it to start properly.

Keystone can use a token by which it will identify the administrative user:

Set a custom token, or use the openssl command to generate a random token:

[root@controller ~]# export SERVICE_TOKEN=$(openssl rand -hex 10)

Store the token in a file for use in the next steps:

[root@controller ~]# echo $SERVICE_TOKEN > ~/keystone_admin_token

We need to configure Keystone to use the token we created. We can either edit the Keystone configuration file /etc/keystone/keystone.conf manually, removing the comment mark (#) in front of admin_token and setting its value, or use the openstack-config command to set the needed property.

Use the openstack-config command to configure the admin_token parameter:

[root@controller ~]# openstack-config --set /etc/keystone/keystone.conf DEFAULT admin_token $SERVICE_TOKEN
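The openssl rand -hex 10 command produces 10 random bytes encoded as 20 hexadecimal characters. As a sketch, the equivalent in Python uses secrets.token_hex; the sketch also writes the token to a file created with owner-only permissions, an added precaution beyond the steps above. The file location is a temporary stand-in for ~/keystone_admin_token.

```python
import os
import secrets
import tempfile
from pathlib import Path


def generate_admin_token(nbytes: int = 10) -> str:
    """Return nbytes random bytes as hex, like `openssl rand -hex 10` (20 characters)."""
    return secrets.token_hex(nbytes)


def save_token(token: str, path: Path) -> None:
    """Create the file with mode 0600 (subject to umask) and write the token."""
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o600)
    with os.fdopen(fd, "w") as handle:
        handle.write(token + "\n")


token = generate_admin_token()
# Temporary stand-in for ~/keystone_admin_token.
token_file = Path(tempfile.mkdtemp()) / "keystone_admin_token"
save_token(token, token_file)
print(len(token))  # 20
```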

Keystone signs tokens cryptographically with a private key, and the tokens are verified against an X.509 certificate that contains the matching public key. Chapter 4, Keystone Identity Service, discusses more advanced configurations. In this chapter, we use the keystone-manage pki_setup command to generate the PKI key pairs and configure Keystone to use them.

Proceed with the following steps:

Generate PKI keys using the keystone-manage pki_setup command:

[root@controller ~]# keystone-manage pki_setup --keystone-user keystone --keystone-group keystone

Change the ownership of the generated PKI files:

[root@controller ~]# chown -R keystone:keystone /var/log/keystone /etc/keystone/ssl/

Configure the Keystone service to use the generated PKI files:

[root@controller ~]# openstack-config --set /etc/keystone/keystone.conf signing token_format PKI

[root@controller ~]# openstack-config --set /etc/keystone/keystone.conf signing certfile /etc/keystone/ssl/certs/signing_cert.pem

[root@controller ~]# openstack-config --set /etc/keystone/keystone.conf signing keyfile /etc/keystone/ssl/private/signing_key.pem

[root@controller ~]# openstack-config --set /etc/keystone/keystone.conf signing ca_certs /etc/keystone/ssl/certs/ca.pem

[root@controller ~]# openstack-config --set /etc/keystone/keystone.conf signing key_size 1024

[root@controller ~]# openstack-config --set /etc/keystone/keystone.conf signing valid_days 3650

[root@controller ~]# openstack-config --set /etc/keystone/keystone.conf signing ca_password None

At this point, Keystone is configured and ready to run:

[root@controller ~]# systemctl start openstack-keystone

Enable Keystone to start after system reboot:

[root@controller ~]# systemctl enable openstack-keystone

We need to configure a Keystone service endpoint for other services to operate properly:

Set the SERVICE_TOKEN environment variable using the keystone_admin_token file we generated in the basic Keystone configuration step:

[root@controller ~]# export SERVICE_TOKEN=`cat ~/keystone_admin_token`

Set the SERVICE_ENDPOINT environment variable to Keystone's admin endpoint URL, using your controller's IP address:

[root@controller ~]# export SERVICE_ENDPOINT="http://10.10.0.1:35357/v2.0"

Create a Keystone service entry:

[root@controller ~]# keystone service-create --name=keystone --type=identity --description="Keystone Identity service"

The output of a successful execution should look similar to the following, with a different unique ID:

+-------------+----------------------------------+
| Property    | Value                            |
+-------------+----------------------------------+
| description | Keystone Identity service        |
| enabled     | True                             |
| id          | 1fa0e426e1ba464d95d16c6df0899047 |
| name        | keystone                         |
| type        | identity                         |
+-------------+----------------------------------+

The endpoint-create command allows us to set different URLs for public, internal, and administrative access. At this point, we may use our controller's management NIC IP address for all Keystone endpoints.

Create the Keystone service endpoint using the keystone endpoint-create command:

[root@controller ~]# keystone endpoint-create --service keystone --publicurl 'http://10.10.0.1:5000/v2.0' --adminurl 'http://10.10.0.1:35357/v2.0' --internalurl 'http://10.10.0.1:5000/v2.0'

Create the services tenant:

[root@controller ~]# keystone tenant-create --name services --description "Services Tenant"

Create an administrative account within Keystone:

[root@controller ~]# keystone user-create --name admin --pass password

Create the admin role:

[root@controller ~]# keystone role-create --name admin

Create an admin tenant:

[root@controller ~]# keystone tenant-create --name admin

Add the admin role to the admin user in the admin tenant:

[root@controller ~]# keystone user-role-add --user admin --role admin --tenant admin

Create a keystonerc_admin file with the following content:

[root@controller ~]# cat ~/keystonerc_admin
export OS_USERNAME=admin
export OS_TENANT_NAME=admin
export OS_PASSWORD=password
export OS_AUTH_URL=http://10.10.0.1:35357/v2.0/
export PS1='[\u@\h \W(keystone_admin)]\$ '

To load the environment variables, run source command:

[root@controller ~]# source keystonerc_admin
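The three URLs registered with endpoint-create end up in the service catalog that Keystone returns to clients, which then pick the interface they need. The following Python sketch models a trimmed-down catalog shaped like the serviceCatalog section of a Keystone v2.0 token response, filled with the Keystone endpoints created above and the Glance endpoint created later in this chapter; the lookup function endpoint_for is hypothetical.

```python
# Trimmed-down catalog in the shape of a Keystone v2.0 "serviceCatalog",
# populated with the endpoints used in this chapter.
CATALOG = [
    {
        "type": "identity",
        "name": "keystone",
        "endpoints": [{
            "publicURL": "http://10.10.0.1:5000/v2.0",
            "internalURL": "http://10.10.0.1:5000/v2.0",
            "adminURL": "http://10.10.0.1:35357/v2.0",
        }],
    },
    {
        "type": "image",
        "name": "glance",
        "endpoints": [{
            "publicURL": "http://10.10.0.1:9292",
            "internalURL": "http://10.10.0.1:9292",
            "adminURL": "http://10.10.0.1:9292",
        }],
    },
]


def endpoint_for(catalog: list, service_type: str, interface: str = "publicURL") -> str:
    """Return the first endpoint URL of the given interface for a service type."""
    for service in catalog:
        if service["type"] == service_type:
            return service["endpoints"][0][interface]
    raise LookupError(f"no service of type {service_type!r} in catalog")


print(endpoint_for(CATALOG, "identity", "adminURL"))  # http://10.10.0.1:35357/v2.0
```

Selecting by service type rather than name mirrors how clients find "the image service" without knowing which project implements it.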

We may also create an unprivileged user account that has no administration permissions on our newly created OpenStack environment:

Create the user account in Keystone:

[root@controller ~(keystone_admin)]# keystone user-create --name USER --pass password

Create a new tenant:

[root@controller ~(keystone_admin)]# keystone tenant-create --name TENANT

Assign the user account to the newly created tenant:

[root@controller ~(keystone_admin)]# keystone user-role-add --user USER --role _member_ --tenant TENANT

Create a keystonerc_user file with the following content:

[root@controller ~(keystone_admin)]# cat ~/keystonerc_user
export OS_USERNAME=USER
export OS_TENANT_NAME=TENANT
export OS_PASSWORD=password
export OS_AUTH_URL=http://10.10.0.1:5000/v2.0/
export PS1='[\u@\h \W(keystone_user)]\$ '
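The rc files are meant to be loaded with source, but a script may want to confirm that one defines every variable the clients need before using it. The following Python sketch parses export lines with shlex, honoring shell quoting, and reports missing variables; the helper name parse_rc and the list of required variables are assumptions for illustration.

```python
import shlex

# Content of the keystonerc_user file shown above.
KEYSTONERC_USER = """\
export OS_USERNAME=USER
export OS_TENANT_NAME=TENANT
export OS_PASSWORD=password
export OS_AUTH_URL=http://10.10.0.1:5000/v2.0/
export PS1='[\\u@\\h \\W(keystone_user)]\\$ '
"""

REQUIRED = ("OS_USERNAME", "OS_TENANT_NAME", "OS_PASSWORD", "OS_AUTH_URL")


def parse_rc(text: str) -> dict:
    """Parse `export NAME=value` lines into a dict, honoring shell quoting."""
    env = {}
    for line in text.splitlines():
        tokens = shlex.split(line, comments=True)
        if len(tokens) == 2 and tokens[0] == "export" and "=" in tokens[1]:
            name, value = tokens[1].split("=", 1)
            env[name] = value
    return env


env = parse_rc(KEYSTONERC_USER)
missing = [name for name in REQUIRED if name not in env]
print(missing)  # []
```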

If the installation and configuration of the Keystone service were successful, Keystone should be operational, and we can execute a keystone command to verify it.

Use the keystone tenant-list command to list the existing tenants:

[root@controller ~(keystone_admin)]# keystone tenant-list

The output of successful tenant creation should look like this:

+----------------------------------+----------+---------+
|                id                |   name   | enabled |
+----------------------------------+----------+---------+
| a5b7bf37d1b646cb8ec0eb35481204c4 |  admin   |   True  |
| fafb926db0674ad9a34552dc05ac3a18 | services |   True  |
+----------------------------------+----------+---------+
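When scripting this verification, the table printed by the keystone CLI can be turned into structured data. The following Python sketch parses the output shown above; the helper parse_cli_table is hypothetical and assumes the single-line cells used by the keystone client, with no pipe characters inside cell values.

```python
TENANT_LIST_OUTPUT = """\
+----------------------------------+----------+---------+
|                id                |   name   | enabled |
+----------------------------------+----------+---------+
| a5b7bf37d1b646cb8ec0eb35481204c4 |  admin   |   True  |
| fafb926db0674ad9a34552dc05ac3a18 | services |   True  |
+----------------------------------+----------+---------+
"""


def parse_cli_table(output: str) -> list:
    """Turn an ASCII table printed by the keystone CLI into a list of dicts."""

    def cells(line: str) -> list:
        return [cell.strip() for cell in line.strip().strip("|").split("|")]

    # Border lines start with '+'; header and data rows start with '|'.
    rows = [cells(line) for line in output.splitlines() if line.startswith("|")]
    header, body = rows[0], rows[1:]
    return [dict(zip(header, row)) for row in body]


tenants = parse_cli_table(TENANT_LIST_OUTPUT)
for tenant in tenants:
    print(tenant["name"], tenant["enabled"])
```

A verification script could then assert, for example, that a tenant named services exists before creating service accounts in it.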

The Glance image service allows us to store and retrieve the operating system disk images that instances are launched from. In our example environment, the Glance service is installed on the controller node. Glance consists of two services: glance-api, which is responsible for all API interactions, and glance-registry, which manages the image registry database. Each has a configuration file under /etc/glance/.

Before configuring Glance, we need to create a database for it and grant the needed database credentials. We also need to create a user account for Glance in the Keystone user registry so that Glance can authenticate against Keystone. Finally, we will need to open the appropriate firewall ports.

Use the mysql command with the root account to create the Glance database:

[root@controller ~(keystone_admin)]# mysql -u root -p

Create Glance database:

MariaDB [(none)]> CREATE DATABASE glance_db;

Create the Glance database user account and grant access permissions, where my_glance_db_password is your password:

MariaDB [(none)]> GRANT ALL ON glance_db.* TO 'glance_db_user'@'%' IDENTIFIED BY 'my_glance_db_password';

MariaDB [(none)]> GRANT ALL ON glance_db.* TO 'glance_db_user'@'localhost' IDENTIFIED BY 'my_glance_db_password';

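Reload the grant tables so that the changes take effect:

MariaDB [(none)]> FLUSH PRIVILEGES;

At this point, we can quit the MariaDB client:

MariaDB [(none)]> quit

Create the Glance tables. This command reads the database connection string from the Glance configuration files, so run it once the connection strings have been set in the configuration steps below:

[root@controller ~]# su -s /bin/sh -c "glance-manage db_sync" glance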
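Every service in this chapter repeats the same database pattern: create a database, then grant one account access from localhost and from any host. As a sketch, the hypothetical service_db_sql function below prints those statements for a given database, user, and password so they can be reviewed before being piped into the mysql client. It assumes the values contain no single quotes.

```bash
#!/usr/bin/env bash
# service_db_sql DB USER PASSWORD: print SQL that creates a service database and
# grants USER full access from localhost and from any host ('%').
# Hypothetical helper; values are inserted verbatim, so avoid quotes in them.
service_db_sql() {
  local db=$1 user=$2 pass=$3
  cat <<EOF
CREATE DATABASE IF NOT EXISTS ${db};
GRANT ALL PRIVILEGES ON ${db}.* TO '${user}'@'localhost' IDENTIFIED BY '${pass}';
GRANT ALL PRIVILEGES ON ${db}.* TO '${user}'@'%' IDENTIFIED BY '${pass}';
FLUSH PRIVILEGES;
EOF
}
```

For example, the Glance database steps above could be replayed as:

[root@controller ~]# service_db_sql glance_db glance_db_user glance_db_password | mysql -u root -p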

Source the Keystone admin credentials so that we can create the Glance service account in Keystone:

[root@controller ~]# source keystonerc_admin

Create a Keystone user account for Glance:

[root@controller ~(keystone_admin)]# keystone user-create --name glance --pass glance_password

Add the admin role to the glance user in the services tenant:

[root@controller ~(keystone_admin)]# keystone user-role-add --user glance --role admin --tenant services

Create the glance service:

[root@controller ~(keystone_admin)]# keystone service-create --name glance --type image --description "Glance Image Service"

Create an endpoint for the glance service:

[root@controller ~(keystone_admin)]# keystone endpoint-create --service glance --publicurl "http://10.10.0.1:9292" --adminurl "http://10.10.0.1:9292" --internalurl "http://10.10.0.1:9292"

Follow these steps to configure the Glance image service:

Set the connection string for glance-api:

[root@controller ~(keystone_admin)]# openstack-config --set /etc/glance/glance-api.conf DEFAULT sql_connection mysql://glance_db_user:glance_db_password@10.10.0.1/glance_db

Set the connection string for glance-registry:

[root@controller ~(keystone_admin)]# openstack-config --set /etc/glance/glance-registry.conf DEFAULT sql_connection mysql://glance_db_user:glance_db_password@10.10.0.1/glance_db

Configure the message broker using the openstack-config command:

[root@controller ~(keystone_admin)]# openstack-config --set /etc/glance/glance-api.conf DEFAULT rpc_backend rabbit
[root@controller ~(keystone_admin)]# openstack-config --set /etc/glance/glance-api.conf DEFAULT rabbit_host 10.10.0.1
[root@controller ~(keystone_admin)]# openstack-config --set /etc/glance/glance-api.conf DEFAULT rabbit_userid guest
[root@controller ~(keystone_admin)]# openstack-config --set /etc/glance/glance-api.conf DEFAULT rabbit_password guest_password
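glance-api and glance-registry must carry identical connection strings, and the user, password, and database in them must match the GRANT statements issued earlier. A small sketch that builds the URL in one place; db_url is a hypothetical helper, and it simply refuses passwords containing URL-reserved characters rather than percent-encoding them.

```bash
#!/usr/bin/env bash
# db_url USER PASSWORD HOST DB: print mysql://USER:PASSWORD@HOST/DB, the form
# used for Glance's sql_connection and the other services' connection options.
# Hypothetical helper: it rejects URL-reserved characters in the password
# instead of percent-encoding them.
db_url() {
  local user=$1 pass=$2 host=$3 db=$4
  case $pass in
    *[@:/%#?]*)
      echo "db_url: password contains a URL-reserved character" >&2
      return 1 ;;
  esac
  printf 'mysql://%s:%s@%s/%s\n' "$user" "$pass" "$host" "$db"
}
```

Both Glance configuration files can then receive the same value:

[root@controller ~(keystone_admin)]# glance_url=$(db_url glance_db_user glance_db_password 10.10.0.1 glance_db)
[root@controller ~(keystone_admin)]# openstack-config --set /etc/glance/glance-api.conf DEFAULT sql_connection "$glance_url"
[root@controller ~(keystone_admin)]# openstack-config --set /etc/glance/glance-registry.conf DEFAULT sql_connection "$glance_url"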

Configure Glance to use Keystone as an authentication method:

[root@controller ~(keystone_admin)]# openstack-config --set /etc/glance/glance-api.conf paste_deploy flavor keystone
[root@controller ~(keystone_admin)]# openstack-config --set /etc/glance/glance-api.conf keystone_authtoken auth_host 10.10.0.1
[root@controller ~(keystone_admin)]# openstack-config --set /etc/glance/glance-api.conf keystone_authtoken auth_port 35357
[root@controller ~(keystone_admin)]# openstack-config --set /etc/glance/glance-api.conf keystone_authtoken auth_protocol http
[root@controller ~(keystone_admin)]# openstack-config --set /etc/glance/glance-api.conf keystone_authtoken admin_tenant_name services
[root@controller ~(keystone_admin)]# openstack-config --set /etc/glance/glance-api.conf keystone_authtoken admin_user glance
[root@controller ~(keystone_admin)]# openstack-config --set /etc/glance/glance-api.conf keystone_authtoken admin_password glance_password

Now configure glance-registry to use Keystone for authentication. The values must match those used for glance-api, including the Keystone host and the glance user's password:

[root@controller ~(keystone_admin)]# openstack-config --set /etc/glance/glance-registry.conf paste_deploy flavor keystone
[root@controller ~(keystone_admin)]# openstack-config --set /etc/glance/glance-registry.conf keystone_authtoken auth_host 10.10.0.1
[root@controller ~(keystone_admin)]# openstack-config --set /etc/glance/glance-registry.conf keystone_authtoken auth_port 35357
[root@controller ~(keystone_admin)]# openstack-config --set /etc/glance/glance-registry.conf keystone_authtoken auth_protocol http
[root@controller ~(keystone_admin)]# openstack-config --set /etc/glance/glance-registry.conf keystone_authtoken admin_tenant_name services
[root@controller ~(keystone_admin)]# openstack-config --set /etc/glance/glance-registry.conf keystone_authtoken admin_user glance
[root@controller ~(keystone_admin)]# openstack-config --set /etc/glance/glance-registry.conf keystone_authtoken admin_password glance_password
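glance-api.conf and glance-registry.conf receive the same [paste_deploy] and [keystone_authtoken] settings, so it can help to drive both from one list. The sketch below makes no claim about how openstack-config works internally: ini_set is a hypothetical, simplified awk-based stand-in that replaces a key inside a section, adds it to the end of the section, or appends the section, and configure_glance_auth applies this chapter's values (10.10.0.1, services, glance, glance_password) to every file passed to it. It ignores commented-out keys and multi-line values, so openstack-config remains the tool to use on real systems.

```bash
#!/usr/bin/env bash
# ini_set FILE SECTION KEY VALUE: set "KEY = VALUE" in [SECTION] of an INI file.
# Hypothetical stand-in for `openstack-config --set`. Limitations: commented-out
# keys and multi-line values are ignored, and awk -v interprets backslashes in
# VALUE, so values containing backslashes are not supported.
ini_set() {
  local file=$1 section=$2 key=$3 value=$4 tmp
  [ -f "$file" ] || : > "$file"
  tmp=$(mktemp) || return 1
  awk -v s="[$section]" -v k="$key" -v v="$value" '
    function flush() { if (insec && !done) { print k " = " v; done = 1 } }
    /^\[.*][ \t]*$/ {                         # a section header
      flush(); insec = ($0 == s); if (insec) seen = 1
      print; next
    }
    insec && $0 ~ ("^[ \t]*" k "[ \t]*=") {   # existing key in the target section
      if (!done) { print k " = " v; done = 1 }
      next
    }
    { print }
    END { flush(); if (!seen) { print s; print k " = " v } }
  ' "$file" > "$tmp" && cat "$tmp" > "$file"  # cat keeps the file's owner and mode
  rm -f "$tmp"
}

# configure_glance_auth FILE...: apply this chapter's Keystone settings to each FILE.
configure_glance_auth() {
  local conf
  for conf in "$@"; do
    ini_set "$conf" paste_deploy flavor keystone
    ini_set "$conf" keystone_authtoken auth_host 10.10.0.1
    ini_set "$conf" keystone_authtoken auth_port 35357
    ini_set "$conf" keystone_authtoken auth_protocol http
    ini_set "$conf" keystone_authtoken admin_tenant_name services
    ini_set "$conf" keystone_authtoken admin_user glance
    ini_set "$conf" keystone_authtoken admin_password glance_password
  done
}
```

Usage, on copies of the files or as root on the controller:

[root@controller ~]# configure_glance_auth /etc/glance/glance-api.conf /etc/glance/glance-registry.conf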

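Start the Glance services and enable them so that they start after a reboot:

[root@controller ~]# systemctl start openstack-glance-api
[root@controller ~]# systemctl start openstack-glance-registry
[root@controller ~]# systemctl enable openstack-glance-api
[root@controller ~]# systemctl enable openstack-glance-registry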

If the installation and configuration were successful, we can upload our first image to the Glance registry. The CirrOS Linux image is a good candidate: it is extremely small and functional enough to test most of OpenStack's functionality.

First, download a CirrOS image to the controller node:

[root@controller glance(keystone_admin)]# wget http://download.cirros-cloud.net/0.3.2/cirros-0.3.2-x86_64-disk.img

Then, upload the image to the Glance registry using the glance image-create command:

[root@controller glance(keystone_admin)]# glance image-create --name="cirros-0.3.2-x86_64" --disk-format=qcow2 --container-format=bare --is-public=true --file cirros-0.3.2-x86_64-disk.img
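glance image-create records whatever --disk-format it is given. A quick sanity check on the downloaded file, sketched as a hypothetical is_qcow2 function, reads its first four bytes and compares them with the qcow2 magic number (the characters QFI followed by the byte 0xfb). If qemu-img is installed, qemu-img info gives a fuller report.

```bash
#!/usr/bin/env bash
# is_qcow2 FILE: succeed if FILE begins with the qcow2 magic "QFI" + byte 0xfb.
# Hypothetical helper; a missing or short file simply fails the check.
is_qcow2() {
  local magic
  magic=$(head -c 4 -- "$1" 2>/dev/null | od -An -tx1 | tr -d ' \n')
  [ "$magic" = "514649fb" ]
}
```

For example:

[root@controller glance(keystone_admin)]# is_qcow2 cirros-0.3.2-x86_64-disk.img && echo "qcow2 image"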

List all Glance images using the glance image-list command:

[root@controller glance(keystone_admin)]# glance image-list

If the image upload was successful, the CirrOS image will appear in the list.

The Nova compute service is the main part of an IaaS cloud. Nova is responsible for launching and managing virtual machine instances, and the compute service scales horizontally on standard hardware.

In our environment, we deploy a controller/compute layout. First, we configure the management services on the controller node; only then do we add compute nodes to the environment. On the controller node, we first prepare the database, create a Keystone account, and then open the needed firewall ports.

Run the following steps on the controller node!

Access the database instance using the mysql client:

[root@controller ~]# mysql -u root -p

Create the Nova database:

MariaDB [(none)]> CREATE DATABASE nova_db;

Create the Nova credentials and allow access:

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova_db.* TO 'nova_db_user'@'localhost' IDENTIFIED BY 'nova_db_password';
MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova_db.* TO 'nova_db_user'@'%' IDENTIFIED BY 'nova_db_password';

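The Nova database tables are created with nova-manage db sync, which needs the database connection to be set in /etc/nova/nova.conf first; we therefore run it during the configuration steps that follow.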

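Create the Nova service account in Keystone (these keystone commands require the admin credentials from keystonerc_admin to be sourced):

[root@controller ~(keystone_admin)]# keystone user-create --name=nova --pass=nova_password
[root@controller ~(keystone_admin)]# keystone user-role-add --user=nova --tenant=services --role=admin

Register the compute service and create its endpoint:

[root@controller ~(keystone_admin)]# keystone service-create --name=nova --type=compute --description="OpenStack Compute"
[root@controller ~(keystone_admin)]# keystone endpoint-create --service=nova --publicurl=http://10.10.0.1:8774/v2/%\(tenant_id\)s --internalurl=http://10.10.0.1:8774/v2/%\(tenant_id\)s --adminurl=http://10.10.0.1:8774/v2/%\(tenant_id\)s

Open the firewall ports for the Nova API (8774), the console proxies (6080 and 6081), and the VNC consoles (5900-5999):

[root@controller ~]# firewall-cmd --permanent --add-port=8774/tcp
[root@controller ~]# firewall-cmd --permanent --add-port=6080/tcp
[root@controller ~]# firewall-cmd --permanent --add-port=6081/tcp
[root@controller ~]# firewall-cmd --permanent --add-port=5900-5999/tcp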

Reload the firewall rules so that they take effect:

[root@controller ~]# firewall-cmd --reload
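The firewall-cmd calls above differ only in the port. A sketch that loops over a port list instead; run and open_ports are hypothetical helpers, and DRY_RUN defaults to 1 so the commands are only printed for review. Set DRY_RUN=0 to execute them as root.

```bash
#!/usr/bin/env bash
# DRY_RUN=1 (the default) prints each command instead of running it.
DRY_RUN=${DRY_RUN:-1}
run() {
  if [ "$DRY_RUN" = 1 ]; then echo "$*"; else "$@"; fi
}

# open_ports PORT...: permanently open each PORT (e.g. 8774/tcp), then reload.
open_ports() {
  local port
  for port in "$@"; do
    run firewall-cmd --permanent --add-port="$port"
  done
  run firewall-cmd --reload
}
```

For the Nova controller ports used in this chapter:

[root@controller ~]# DRY_RUN=0 open_ports 8774/tcp 6080/tcp 6081/tcp 5900-5999/tcp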

Follow these steps to configure the Nova compute service:

Using the openstack-config command, set the database connection, and then create the Nova database tables:

[root@controller ~]# openstack-config --set /etc/nova/nova.conf database connection mysql://nova_db_user:nova_db_password@controller/nova_db
[root@controller ~]# su -s /bin/sh -c "nova-manage db sync" nova

Set the connection to the RabbitMQ message broker:

[root@controller ~]# openstack-config --set /etc/nova/nova.conf DEFAULT rpc_backend rabbit
[root@controller ~]# openstack-config --set /etc/nova/nova.conf DEFAULT rabbit_host 10.10.0.1

Set the local IP address of the controller:

[root@controller ~]# openstack-config --set /etc/nova/nova.conf DEFAULT my_ip 10.10.0.1
[root@controller ~]# openstack-config --set /etc/nova/nova.conf DEFAULT vncserver_listen 10.10.0.1
[root@controller ~]# openstack-config --set /etc/nova/nova.conf DEFAULT vncserver_proxyclient_address 10.10.0.1

Configure Keystone as an authentication method:

[root@controller ~]# openstack-config --set /etc/nova/nova.conf DEFAULT auth_strategy keystone
[root@controller ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken auth_uri http://10.10.0.1:5000
[root@controller ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken auth_host 10.10.0.1
[root@controller ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken auth_protocol http
[root@controller ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken auth_port 35357
[root@controller ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken admin_user nova
[root@controller ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken admin_tenant_name services
[root@controller ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken admin_password nova_password

Using the systemctl command, start the Nova services and enable them so that they start after a reboot:

[root@controller ~]# systemctl start openstack-nova-api
[root@controller ~]# systemctl start openstack-nova-cert
[root@controller ~]# systemctl start openstack-nova-consoleauth
[root@controller ~]# systemctl start openstack-nova-scheduler
[root@controller ~]# systemctl start openstack-nova-conductor
[root@controller ~]# systemctl start openstack-nova-novncproxy
[root@controller ~]# systemctl enable openstack-nova-api
[root@controller ~]# systemctl enable openstack-nova-cert
[root@controller ~]# systemctl enable openstack-nova-consoleauth
[root@controller ~]# systemctl enable openstack-nova-scheduler
[root@controller ~]# systemctl enable openstack-nova-conductor
[root@controller ~]# systemctl enable openstack-nova-novncproxy

On successful Nova installation and configuration, you should be able to run the following command:

[root@controller ~(keystone_admin)]# nova image-list

+--------------+---------------------+--------+--------+
| ID           | Name                | Status | Server |
+--------------+---------------------+--------+--------+
| eb9c6911-... | cirros-0.3.2-x86_64 | ACTIVE |        |
+--------------+---------------------+--------+--------+

After the controller node is successfully installed and configured, we may add additional compute nodes to the OpenStack environment.

Now we can proceed to configure the compute services on the compute node.

Run the following steps on the compute node!

Configure the Nova database connection:

[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf database connection mysql://nova_db_user:nova_db_password@controller/nova_db

Configure Nova to access the message broker:

[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf DEFAULT rpc_backend rabbit
[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf DEFAULT rabbit_host 10.10.0.1

Edit /etc/nova/nova.conf for the compute node to use Keystone authentication:

[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf DEFAULT auth_strategy keystone
[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken auth_uri http://controller:5000
[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken auth_host controller
[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken auth_protocol http
[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken auth_port 35357
[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken admin_user nova
[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken admin_tenant_name services
[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken admin_password nova_password

Configure the remote console for terminal access to instances:

[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf DEFAULT my_ip 192.168.200.159
[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf DEFAULT vnc_enabled True
[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf DEFAULT vncserver_listen 0.0.0.0
[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf DEFAULT vncserver_proxyclient_address 192.168.200.159
[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf DEFAULT novncproxy_base_url http://controller:6080/vnc_auto.html
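On the compute node, my_ip and vncserver_proxyclient_address both carry the node's own address (192.168.200.159 in this example), so it can be read from the interface rather than typed twice. A sketch, assuming the address lives on an interface called eth0 (the chapter does not name it): the hypothetical ipv4_from_ip_output function parses one-line ip -o -4 addr show output and prints the first IPv4 address without its prefix length.

```bash
#!/usr/bin/env bash
# ipv4_from_ip_output: read `ip -o -4 addr show dev IFACE` output on stdin and
# print the first IPv4 address without its /prefix; prints nothing if none.
# In that format, field 3 is "inet" and field 4 is ADDRESS/PREFIX.
ipv4_from_ip_output() {
  awk '$3 == "inet" { split($4, a, "/"); print a[1]; exit }'
}
```

For example (eth0 is an assumed interface name):

[root@compute1 ~]# my_ip=$(ip -o -4 addr show dev eth0 | ipv4_from_ip_output)
[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf DEFAULT my_ip "$my_ip"
[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf DEFAULT vncserver_proxyclient_address "$my_ip"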

Configure which Glance server the compute node uses to retrieve images:

[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf DEFAULT glance_host controller

The Neutron networking service is responsible for creating and managing layer 2 networks, layer 3 subnets, routers, and services such as firewalls, VPNs, and DNS. Its central component is the neutron-server service, which serves the Neutron API and interacts with the other Neutron components. Since we deploy a controller/network node/compute layout, we install and configure neutron-server and the Modular Layer 2 (ML2) plugin on the controller node. Then, we configure the layer 3, DHCP, and metadata services on the Neutron network node. Finally, we configure the compute node to use Neutron networking services.

Before configuring the Neutron services, we need to create a database that will hold Neutron's objects, create a Keystone endpoint for Neutron, open the needed firewall ports, and install all needed Neutron packages on the controller, the Neutron network node, and the compute nodes.

Run the following commands on the controller node!

Access the database instance using the mysql client with the root user account:

[root@controller ~]# mysql -u root -p

Create a new database for Neutron called neutron_db:

MariaDB [(none)]> CREATE DATABASE neutron_db;

Create a database user account named neutron_db_user with the password neutron_db_password, and grant it access to the newly created database:

MariaDB [(none)]> GRANT ALL PRIVILEGES ON neutron_db.* TO 'neutron_db_user'@'localhost' IDENTIFIED BY 'neutron_db_password';
MariaDB [(none)]> GRANT ALL PRIVILEGES ON neutron_db.* TO 'neutron_db_user'@'%' IDENTIFIED BY 'neutron_db_password';

Keep in mind that to use the keystone command, we need to source the Keystone environment parameters with admin credentials: # source ~/keystonerc_admin. Then create the Neutron user account, give it the admin role in the services tenant, and register the network service:

[root@controller ~(keystone_admin)]# keystone user-create --name neutron --pass password
[root@controller ~(keystone_admin)]# keystone user-role-add --user neutron --tenant services --role admin
[root@controller ~(keystone_admin)]# keystone service-create --name neutron --type network --description "OpenStack Networking"

Create a new endpoint for Neutron in the Keystone service catalog:

[root@controller ~(keystone_admin)]# keystone endpoint-create \
  --service neutron \
  --publicurl http://controller:9696 \
  --adminurl http://controller:9696 \
  --internalurl http://controller:9696

We start by configuring the Neutron server service on the controller node. We will configure Neutron to access the database and the message broker, and then configure it to use Keystone as its authentication strategy. We will use the ML2 plugin as the backend and configure Neutron to use it. Finally, we will configure the Nova service to use Neutron and the ML2 plugin for networking.

Use the openstack-config command to set the database connection string:

[root@controller ~]# openstack-config --set /etc/neutron/neutron.conf database connection mysql://neutron_db_user:neutron_db_password@controller/neutron_db

Configure Neutron to use RabbitMQ message broker:

[root@controller ~]# openstack-config --set /etc/neutron/neutron.conf DEFAULT rpc_backend rabbit
[root@controller ~]# openstack-config --set /etc/neutron/neutron.conf DEFAULT rabbit_host 10.10.0.1

Configure Neutron to use Keystone as an authentication strategy:

[root@controller ~]# openstack-config --set /etc/neutron/neutron.conf DEFAULT auth_strategy keystone
[root@controller ~]# openstack-config --set /etc/neutron/neutron.conf keystone_authtoken auth_uri http://controller:5000
[root@controller ~]# openstack-config --set /etc/neutron/neutron.conf keystone_authtoken auth_host controller
[root@controller ~]# openstack-config --set /etc/neutron/neutron.conf keystone_authtoken auth_protocol http
[root@controller ~]# openstack-config --set /etc/neutron/neutron.conf keystone_authtoken auth_port 35357
[root@controller ~]# openstack-config --set /etc/neutron/neutron.conf keystone_authtoken admin_tenant_name services
[root@controller ~]# openstack-config --set /etc/neutron/neutron.conf keystone_authtoken admin_user neutron
[root@controller ~]# openstack-config --set /etc/neutron/neutron.conf keystone_authtoken admin_password password

Configure Neutron to notify Nova of networking topology changes. The nova_admin_tenant_id value is looked up with keystone tenant-list, so the admin credentials from keystonerc_admin must be sourced for this step:

[root@controller ~]# openstack-config --set /etc/neutron/neutron.conf DEFAULT notify_nova_on_port_status_changes True
[root@controller ~]# openstack-config --set /etc/neutron/neutron.conf DEFAULT notify_nova_on_port_data_changes True
[root@controller ~]# openstack-config --set /etc/neutron/neutron.conf DEFAULT nova_url http://controller:8774/v2
[root@controller ~]# openstack-config --set /etc/neutron/neutron.conf DEFAULT nova_admin_username nova
[root@controller ~]# openstack-config --set /etc/neutron/neutron.conf DEFAULT nova_admin_tenant_id $(keystone tenant-list | awk '/ services / { print $2 }')
[root@controller ~]# openstack-config --set /etc/neutron/neutron.conf DEFAULT nova_admin_password nova_password
[root@controller ~]# openstack-config --set /etc/neutron/neutron.conf DEFAULT nova_admin_auth_url http://controller:35357/v2.0

Now configure Neutron to use ML2 Neutron plugin:

[root@controller ~]# openstack-config --set /etc/neutron/neutron.conf DEFAULT core_plugin ml2
[root@controller ~]# openstack-config --set /etc/neutron/neutron.conf DEFAULT service_plugins router

Configure the ML2 plugin to use the Open vSwitch mechanism driver with GRE tunnel segmentation for the instances' virtual networks:

[root@controller ~]# openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2 type_drivers gre
[root@controller ~]# openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2 tenant_network_types gre
[root@controller ~]# openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2 mechanism_drivers openvswitch
[root@controller ~]# openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2_type_gre tunnel_id_ranges 1:1000
[root@controller ~]# openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini securitygroup firewall_driver neutron.agent.linux.iptables_firewall.OVSHybridIptablesFirewallDriver
[root@controller ~]# openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini securitygroup enable_security_group True

Once Neutron and ML2 are configured, we need to configure Nova to use Neutron as its networking provider. The tenant and password must match the neutron Keystone account created earlier:

[root@controller ~]# openstack-config --set /etc/nova/nova.conf DEFAULT network_api_class nova.network.neutronv2.api.API
[root@controller ~]# openstack-config --set /etc/nova/nova.conf DEFAULT neutron_url http://controller:9696
[root@controller ~]# openstack-config --set /etc/nova/nova.conf DEFAULT neutron_auth_strategy keystone
[root@controller ~]# openstack-config --set /etc/nova/nova.conf DEFAULT neutron_admin_tenant_name services
[root@controller ~]# openstack-config --set /etc/nova/nova.conf DEFAULT neutron_admin_username neutron
[root@controller ~]# openstack-config --set /etc/nova/nova.conf DEFAULT neutron_admin_password password
[root@controller ~]# openstack-config --set /etc/nova/nova.conf DEFAULT neutron_admin_auth_url http://controller:35357/v2.0
[root@controller ~]# openstack-config --set /etc/nova/nova.conf DEFAULT linuxnet_interface_driver nova.network.linux_net.LinuxOVSInterfaceDriver
[root@controller ~]# openstack-config --set /etc/nova/nova.conf DEFAULT firewall_driver nova.virt.firewall.NoopFirewallDriver
[root@controller ~]# openstack-config --set /etc/nova/nova.conf DEFAULT security_group_api neutron

Since we are using the ML2 plugin, /etc/neutron/plugin.ini must point to the ML2 configuration file, so create a symbolic link as follows:

[root@controller ~]# ln -s plugins/ml2/ml2_conf.ini /etc/neutron/plugin.ini

Prepare Nova to use the Neutron metadata service:

[root@controller ~]# openstack-config --set /etc/nova/nova.conf DEFAULT service_neutron_metadata_proxy true
[root@controller ~]# openstack-config --set /etc/nova/nova.conf DEFAULT neutron_metadata_proxy_shared_secret SHARED_SECRET
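SHARED_SECRET above is a placeholder, and whatever value is set here must be identical to metadata_proxy_shared_secret in /etc/neutron/metadata_agent.ini on the network node. One way to generate a random value, sketched as a hypothetical gen_secret function that hex-encodes bytes read from /dev/urandom:

```bash
#!/usr/bin/env bash
# gen_secret [BYTES]: print BYTES (default 16) random bytes from /dev/urandom
# as a lowercase hex string followed by a newline. -v stops od from collapsing
# repeated output lines into "*".
gen_secret() {
  head -c "${1:-16}" /dev/urandom | od -An -v -tx1 | tr -d ' \n'
  echo
}
```

Generate the value once and reuse it in both files:

[root@controller ~]# SHARED_SECRET=$(gen_secret)
[root@controller ~]# openstack-config --set /etc/nova/nova.conf DEFAULT neutron_metadata_proxy_shared_secret "$SHARED_SECRET"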

If Nova services are running, we need to restart them:

[root@controller ~]# systemctl restart openstack-nova-api
[root@controller ~]# systemctl restart openstack-nova-scheduler
[root@controller ~]# systemctl restart openstack-nova-conductor

At this point, we can start and enable the neutron-server service:

[root@controller ~]# systemctl start neutron-server
[root@controller ~]# systemctl enable neutron-server

This concludes configuring the Neutron server on the controller node. Now we can configure the Neutron network node.

After configuring neutron-server on the controller node, we can proceed to configure the network node, which is responsible for routing and for connecting the OpenStack environment to the public network.

The Neutron network node runs the layer 2 (Open vSwitch) agent, the DHCP agent, the L3 agent, and the metadata agent. We will install these services and configure them to use the ML2 plugin.

Run the following commands on Neutron network node!

Enable IP forwarding and disable reverse path filtering. Edit /etc/sysctl.conf to contain the following:

net.ipv4.ip_forward=1
net.ipv4.conf.all.rp_filter=0
net.ipv4.conf.default.rp_filter=0

Apply the new settings:

[root@nnn ~]# sysctl -p

Then install the needed packages:

[root@nnn ~]# yum install openstack-neutron openstack-neutron-ml2 openstack-neutron-openvswitch

Configure Neutron to use RabbitMQ message broker of the controller:

[root@nnn ~]# openstack-config --set /etc/neutron/neutron.conf DEFAULT rpc_backend rabbit
[root@nnn ~]# openstack-config --set /etc/neutron/neutron.conf DEFAULT rabbit_host 10.10.0.1

Configure Neutron to use Keystone as an authentication strategy:

[root@nnn ~]# openstack-config --set /etc/neutron/neutron.conf DEFAULT auth_strategy keystone
[root@nnn ~]# openstack-config --set /etc/neutron/neutron.conf keystone_authtoken auth_uri http://controller:5000
[root@nnn ~]# openstack-config --set /etc/neutron/neutron.conf keystone_authtoken auth_host controller
[root@nnn ~]# openstack-config --set /etc/neutron/neutron.conf keystone_authtoken auth_protocol http
[root@nnn ~]# openstack-config --set /etc/neutron/neutron.conf keystone_authtoken auth_port 35357
[root@nnn ~]# openstack-config --set /etc/neutron/neutron.conf keystone_authtoken admin_tenant_name services
[root@nnn ~]# openstack-config --set /etc/neutron/neutron.conf keystone_authtoken admin_user neutron
[root@nnn ~]# openstack-config --set /etc/neutron/neutron.conf keystone_authtoken admin_password password

Configure Neutron to use the ML2 Neutron plugin:

[root@nnn ~]# openstack-config --set /etc/neutron/neutron.conf DEFAULT core_plugin ml2
[root@nnn ~]# openstack-config --set /etc/neutron/neutron.conf DEFAULT service_plugins router

Configure the layer 3 agent, which provides routing services for instances:

[root@nnn ~]# openstack-config --set /etc/neutron/l3_agent.ini DEFAULT interface_driver neutron.agent.linux.interface.OVSInterfaceDriver
[root@nnn ~]# openstack-config --set /etc/neutron/l3_agent.ini DEFAULT use_namespaces True

Configure the DHCP agent, which provides DHCP services for instances:

[root@nnn ~]# openstack-config --set /etc/neutron/dhcp_agent.ini DEFAULT interface_driver neutron.agent.linux.interface.OVSInterfaceDriver
[root@nnn ~]# openstack-config --set /etc/neutron/dhcp_agent.ini DEFAULT dhcp_driver neutron.agent.linux.dhcp.Dnsmasq
[root@nnn ~]# openstack-config --set /etc/neutron/dhcp_agent.ini DEFAULT use_namespaces True

Configure the metadata agent, which provides the metadata service for instances:

[root@nnn ~]# openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT auth_url http://controller:5000/v2.0
[root@nnn ~]# openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT auth_region regionOne
[root@nnn ~]# openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT admin_tenant_name services
[root@nnn ~]# openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT admin_user neutron
[root@nnn ~]# openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT admin_password password
[root@nnn ~]# openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT nova_metadata_ip controller
[root@nnn ~]# openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT metadata_proxy_shared_secret SHARED_SECRET

Configure the ML2 plugin to use GRE tunnel segmentation:

[root@nnn ~]# openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2 type_drivers gre
[root@nnn ~]# openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2 tenant_network_types gre
[root@nnn ~]# openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2 mechanism_drivers openvswitch
[root@nnn ~]# openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2_type_gre tunnel_id_ranges 1:1000
[root@nnn ~]# openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ovs local_ip 10.20.0.2
[root@nnn ~]# openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ovs tunnel_type gre
[root@nnn ~]# openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ovs enable_tunneling True
[root@nnn ~]# openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini securitygroup firewall_driver neutron.agent.linux.iptables_firewall.OVSHybridIptablesFirewallDriver
[root@nnn ~]# openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini securitygroup enable_security_group True

Create bridges for the Neutron layer 2 and layer 3 agents. First, start and enable the Open vSwitch service:

[root@nnn ~]# systemctl start openvswitch
[root@nnn ~]# systemctl enable openvswitch

Create the integration bridge that instances use to communicate with each other:

[root@nnn ~]# ovs-vsctl add-br br-int

Create the external bridge that instances use to communicate with public networks:

[root@nnn ~]# ovs-vsctl add-br br-ex

Bind the external bridge to the NIC used for external communication:

[root@nnn ~]# ovs-vsctl add-port br-ex eth2

Create a symbolic link for the ML2 Neutron plugin:

[root@nnn ~]# ln -s plugins/ml2/ml2_conf.ini /etc/neutron/plugin.ini
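ovs-vsctl add-br and add-port report an error when the bridge or port already exists, which matters if the network node setup is re-run. The --may-exist option of ovs-vsctl turns those cases into no-ops. A sketch with hypothetical helper names, assuming eth2 is the external NIC as above; DRY_RUN defaults to 1, so the commands are only printed until you run with DRY_RUN=0 as root.

```bash
#!/usr/bin/env bash
# DRY_RUN=1 (the default) prints each command instead of running it.
DRY_RUN=${DRY_RUN:-1}
run() {
  if [ "$DRY_RUN" = 1 ]; then echo "$*"; else "$@"; fi
}

# create_network_node_bridges [EXT_NIC]: create br-int and br-ex and attach the
# external NIC (eth2 in this chapter) to br-ex. --may-exist makes each step a
# no-op when the bridge or port is already present, so re-running is safe.
create_network_node_bridges() {
  local ext_nic=${1:-eth2}
  run ovs-vsctl --may-exist add-br br-int
  run ovs-vsctl --may-exist add-br br-ex
  run ovs-vsctl --may-exist add-port br-ex "$ext_nic"
}
```

For example:

[root@nnn ~]# DRY_RUN=0 create_network_node_bridges eth2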

At this point, we can start and enable the layer 2 Open vSwitch agent, the L3 agent, the DHCP agent, and the metadata agent services:

[root@nnn ~]# systemctl start neutron-openvswitch-agent
[root@nnn ~]# systemctl start neutron-l3-agent
[root@nnn ~]# systemctl start neutron-dhcp-agent
[root@nnn ~]# systemctl start neutron-metadata-agent
[root@nnn ~]# systemctl enable neutron-openvswitch-agent
[root@nnn ~]# systemctl enable neutron-l3-agent
[root@nnn ~]# systemctl enable neutron-dhcp-agent
[root@nnn ~]# systemctl enable neutron-metadata-agent

This concludes configuring the Neutron network node. Now we can configure the compute nodes to use Neutron networking.

Once the controller and the Neutron network node are ready, we configure the Nova compute node to use Neutron for networking: first we give Neutron access to the message broker and Keystone, and then we configure it to use the ML2 plugin with GRE tunnel segmentation.

Run the following commands on compute1!

Disable reverse path filtering. Edit /etc/sysctl.conf to contain the following:

net.ipv4.conf.all.rp_filter=0
net.ipv4.conf.default.rp_filter=0

Apply the new configuration:

[root@compute1 ~]# sysctl -p
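Appending to /etc/sysctl.conf by hand tends to leave duplicate entries when a setup is re-run. A sketch of an idempotent alternative, using hypothetical ensure_line and apply_sysctl_settings helpers that add each setting only if an identical line is not already present. It does not detect an existing line with a different value or spacing (for example net.ipv4.conf.all.rp_filter = 1), so check the file for such conflicts first.

```bash
#!/usr/bin/env bash
# ensure_line FILE LINE: append LINE to FILE unless an identical line exists
# (a missing FILE is created).
ensure_line() {
  grep -qxF -- "$2" "$1" 2>/dev/null || printf '%s\n' "$2" >> "$1"
}

# apply_sysctl_settings FILE SETTING...: ensure every SETTING is in FILE.
apply_sysctl_settings() {
  local file=$1 line
  shift
  for line in "$@"; do
    ensure_line "$file" "$line"
  done
}

# Settings from this chapter: compute nodes need the two rp_filter lines; the
# network node additionally needs net.ipv4.ip_forward=1.
rp_filter_settings=(
  net.ipv4.conf.all.rp_filter=0
  net.ipv4.conf.default.rp_filter=0
)
```

On the compute node:

[root@compute1 ~]# apply_sysctl_settings /etc/sysctl.conf "${rp_filter_settings[@]}" && sysctl -p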

Install the Neutron ML2 and Open vSwitch packages:

[root@compute1 ~]# yum install -y openstack-neutron-ml2 openstack-neutron-openvswitch

Configure Neutron to use RabbitMQ message broker of the controller:

[root@compute1 ~]# openstack-config --set /etc/neutron/neutron.conf DEFAULT rpc_backend rabbit
[root@compute1 ~]# openstack-config --set /etc/neutron/neutron.conf DEFAULT rabbit_host 10.10.0.1

Configure Neutron to use Keystone as an authentication strategy:

[root@compute1 ~]# openstack-config --set /etc/neutron/neutron.conf DEFAULT auth_strategy keystone
[root@compute1 ~]# openstack-config --set /etc/neutron/neutron.conf keystone_authtoken auth_uri http://controller:5000
[root@compute1 ~]# openstack-config --set /etc/neutron/neutron.conf keystone_authtoken auth_host controller
[root@compute1 ~]# openstack-config --set /etc/neutron/neutron.conf keystone_authtoken auth_protocol http
[root@compute1 ~]# openstack-config --set /etc/neutron/neutron.conf keystone_authtoken auth_port 35357
[root@compute1 ~]# openstack-config --set /etc/neutron/neutron.conf keystone_authtoken admin_tenant_name services
[root@compute1 ~]# openstack-config --set /etc/neutron/neutron.conf keystone_authtoken admin_user neutron
[root@compute1 ~]# openstack-config --set /etc/neutron/neutron.conf keystone_authtoken admin_password password

Now configure Neutron to use ML2 Neutron plugin:

[root@compute1 ~]# openstack-config --set /etc/neutron/neutron.conf DEFAULT core_plugin ml2
[root@compute1 ~]# openstack-config --set /etc/neutron/neutron.conf DEFAULT service_plugins router

Configure the ML2 plugin to use GRE tunnel segmentation:

[root@compute1 ~]# openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2 type_drivers gre
[root@compute1 ~]# openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2 tenant_network_types gre
[root@compute1 ~]# openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2 mechanism_drivers openvswitch
[root@compute1 ~]# openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2_type_gre tunnel_id_ranges 1:1000
[root@compute1 ~]# openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ovs local_ip 10.20.0.3
[root@compute1 ~]# openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ovs tunnel_type gre
[root@compute1 ~]# openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ovs enable_tunneling True
[root@compute1 ~]# openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini securitygroup firewall_driver neutron.agent.linux.iptables_firewall.OVSHybridIptablesFirewallDriver
[root@compute1 ~]# openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini securitygroup enable_security_group True

Create the integration bridge for the Neutron layer 2 agent. First, start and enable the Open vSwitch service:

[root@compute1 ~]# systemctl start openvswitch
[root@compute1 ~]# systemctl enable openvswitch

After starting the Open vSwitch service, we can create the needed bridge:

[root@compute1 ~]# ovs-vsctl add-br br-int

Configure Nova to use Neutron networking. The tenant and password must match the neutron Keystone account created earlier:

[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf DEFAULT network_api_class nova.network.neutronv2.api.API
[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf DEFAULT neutron_url http://controller:9696
[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf DEFAULT neutron_auth_strategy keystone
[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf DEFAULT neutron_admin_tenant_name services
[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf DEFAULT neutron_admin_username neutron
[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf DEFAULT neutron_admin_password password
[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf DEFAULT neutron_admin_auth_url http://controller:35357/v2.0
[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf DEFAULT linuxnet_interface_driver nova.network.linux_net.LinuxOVSInterfaceDriver
[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf DEFAULT firewall_driver nova.virt.firewall.NoopFirewallDriver
[root@compute1 ~]# openstack-config --set /etc/nova/nova.conf DEFAULT security_group_api neutron

Create a symbolic link for the ML2 Neutron plugin:

[root@compute1 ~]# ln -s plugins/ml2/ml2_conf.ini /etc/neutron/plugin.ini

Restart the Nova compute service:

[root@compute1 ~]# systemctl restart openstack-nova-compute

Start and enable the Neutron Open vSwitch agent service:

[root@compute1 ~]# systemctl start neutron-openvswitch-agent
[root@compute1 ~]# systemctl enable neutron-openvswitch-agent

At this point, the controller, the Neutron network node, and compute1 should all be configured to use Neutron networking. We can go ahead and create the Neutron virtual networks that instances need to communicate with external public networks. We are going to create two layer 2 networks: one for the instances, and another that connects to external networks.

Run the following commands on the controller node!

In this setup, the networks are owned and managed by the admin user under the admin tenant, and the external network is shared for other tenants' use.

Source the admin tenant credentials:

[root@controller ~]# source keystonerc_admin

Create an external shared network:

[root@controller ~(keystone_admin)]# neutron net-create external-net --shared --router:external=True

In this example, we allocate a range of IPs from our existing external physical network, 192.168.200.0/24, for instances to use when communicating with the Internet or with external hosts in the IT environment.

Create a subnet in the newly created network:

[root@controller ~(keystone_admin)]# neutron subnet-create external-net --name ext-subnet --allocation-pool start=192.168.200.100,end=192.168.200.200 --disable-dhcp --gateway 192.168.200.1 192.168.200.0/24

The IP range must be routable on the external public network and must not overlap with existing configured networks. Chapter 7, Neutron Networking Service, discusses Neutron network planning in more detail.
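Before running subnet-create, it can be worth checking the numbers: the allocation pool should sit inside the subnet, be ordered, and leave out the gateway, and the subnet should not overlap another network. The following is a minimal pure-bash sketch of those checks; the function names are hypothetical, and 192.168.1.0/24 (the tenant subnet used in the next step) serves only as an example of a second network to compare against.

```shell
#!/usr/bin/env bash
# Hypothetical pure-bash IPv4 checks; no OpenStack tools are needed.

# ip_to_int A.B.C.D: print the address as a 32-bit integer
ip_to_int() {
  local -a o
  IFS=. read -r -a o <<< "$1"
  echo $(( (o[0] << 24) | (o[1] << 16) | (o[2] << 8) | o[3] ))
}

# prefix_mask N: print the netmask of a /N prefix as an integer
prefix_mask() {
  echo $(( (0xFFFFFFFF << (32 - $1)) & 0xFFFFFFFF ))
}

# ip_in_cidr IP NET/N: succeed if IP lies inside NET/N
ip_in_cidr() {
  local mask ip net
  mask=$(prefix_mask "${2#*/}")
  ip=$(ip_to_int "$1")
  net=$(ip_to_int "${2%/*}")
  (( (ip & mask) == (net & mask) ))
}

# cidrs_overlap NET1/N1 NET2/N2: succeed if the two blocks share addresses.
# CIDR blocks are either nested or disjoint, so comparing both network
# addresses under the shorter prefix's mask is enough.
cidrs_overlap() {
  local p1=${1#*/} p2=${2#*/} mask n1 n2
  mask=$(prefix_mask $(( p1 < p2 ? p1 : p2 )))
  n1=$(ip_to_int "${1%/*}")
  n2=$(ip_to_int "${2%/*}")
  (( (n1 & mask) == (n2 & mask) ))
}

# check_pool CIDR START END GATEWAY: succeed if START..END is ordered,
# lies inside CIDR, and does not include GATEWAY
check_pool() {
  local cidr=$1 s e g
  ip_in_cidr "$2" "$cidr" && ip_in_cidr "$3" "$cidr" || return 1
  s=$(ip_to_int "$2"); e=$(ip_to_int "$3"); g=$(ip_to_int "$4")
  (( s <= e && (g < s || g > e) ))
}

# Values from the subnet-create command above
if check_pool 192.168.200.0/24 192.168.200.100 192.168.200.200 192.168.200.1; then
  echo "allocation pool OK"
else
  echo "allocation pool invalid"
fi

if cidrs_overlap 192.168.200.0/24 192.168.1.0/24; then
  echo "external and tenant subnets overlap"
else
  echo "external and tenant subnets do not overlap"
fi
```

Such a check cannot tell whether the range is actually free on the physical network; that still has to be confirmed with whoever manages the external network.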

Create a tenant network, an isolated network for instances to communicate with each other, and then create a router that connects its subnet to the external network:

[root@controller ~(keystone_admin)]# neutron net-create tenant_net
[root@controller ~(keystone_admin)]# neutron subnet-create tenant_net --name tenant_net_subnet --gateway 192.168.1.1 192.168.1.0/24
[root@controller ~(keystone_admin)]# neutron router-create ext-router
[root@controller ~(keystone_admin)]# neutron router-interface-add ext-router tenant_net_subnet
[root@controller ~(keystone_admin)]# neutron router-gateway-set ext-router external-net
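Because the subnet name appears twice in these steps (once in subnet-create and again in router-interface-add), defining each name once avoids a mismatch between the two. The following sketch wraps the same five neutron commands in a hypothetical `create_tenant_topology` function. By default it is a dry run that only prints the commands; setting `APPLY=1` executes them, which assumes the neutron client is installed and keystonerc_admin has been sourced.

```shell
#!/usr/bin/env bash
# Sketch: tenant network, subnet, and router with each name defined once.
TENANT_NET=tenant_net
TENANT_SUBNET=tenant_net_subnet
TENANT_CIDR=192.168.1.0/24
TENANT_GW=192.168.1.1
ROUTER=ext-router
EXT_NET=external-net

# run CMD...: print the command (dry run) unless APPLY=1, then execute it
run() {
  if [[ "${APPLY:-0}" == 1 ]]; then
    "$@"
  else
    echo "$*"
  fi
}

create_tenant_topology() {
  run neutron net-create "$TENANT_NET"
  run neutron subnet-create "$TENANT_NET" --name "$TENANT_SUBNET" --gateway "$TENANT_GW" "$TENANT_CIDR"
  run neutron router-create "$ROUTER"
  # The interface is added by subnet name, so it must match the name above
  run neutron router-interface-add "$ROUTER" "$TENANT_SUBNET"
  run neutron router-gateway-set "$ROUTER" "$EXT_NET"
}

create_tenant_topology
```

Reviewing the dry-run output before running it with `APPLY=1` shows exactly which names and addresses will be sent to Neutron.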

The Horizon dashboard service is the web user interface that lets users consume OpenStack services and lets administrators manage and operate OpenStack.

Install the packages needed for Horizon:

[root@controller ~]# yum install mod_wsgi openstack-dashboard

Use the firewall-cmd command to open port 80:

[root@controller ~]# firewall-cmd --permanent --add-port=80/tcp
[root@controller ~]# firewall-cmd --reload

Configure SELinux to allow the Apache HTTPD service to make network connections:

[root@controller ~]# setsebool -P httpd_can_network_connect on
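To confirm that both prerequisites are in place, the following hypothetical helper, `check_horizon_prereqs`, queries the saved firewalld configuration and the SELinux boolean. It is a minimal sketch that assumes firewalld and the SELinux utilities are present on the controller, and it relies on getsebool printing lines of the form `httpd_can_network_connect --> on`.

```shell
#!/usr/bin/env bash
# Sketch: report whether port 80/tcp is open in the permanent firewalld
# configuration and whether httpd_can_network_connect is on.
# Returns non-zero if either check fails.
check_horizon_prereqs() {
  local rc=0
  # --permanent lists the saved configuration, matching the --permanent add
  if firewall-cmd --permanent --list-ports | grep -qw '80/tcp'; then
    echo "firewalld: 80/tcp open"
  else
    echo "firewalld: 80/tcp NOT open"; rc=1
  fi
  # getsebool prints e.g. "httpd_can_network_connect --> on"
  if getsebool httpd_can_network_connect | grep -q -- '--> on$'; then
    echo "SELinux: httpd_can_network_connect on"
  else
    echo "SELinux: httpd_can_network_connect NOT on"; rc=1
  fi
  return "$rc"
}
```

The `-w` flag keeps a port such as 8080/tcp from being mistaken for 80/tcp.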

Perform the following steps to configure and enable the Horizon dashboard service:

Edit /etc/openstack-dashboard/local_settings and set the following values:

ALLOWED_HOSTS = ['localhost', '*']
OPENSTACK_HOST = "controller"
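When the same edit has to be repeated on several controllers, it can be scripted. The following is a minimal sketch using a hypothetical `set_horizon_settings` function; it assumes GNU sed (as shipped with RHEL and CentOS) and that the file already contains an `ALLOWED_HOSTS` and an `OPENSTACK_HOST` line, possibly commented out with a leading `#`. A backup copy is kept with a .bak suffix.

```shell
#!/usr/bin/env bash
# Sketch: set ALLOWED_HOSTS and OPENSTACK_HOST in local_settings with sed.
# Replaces existing (or "#"-commented) assignment lines in place and
# keeps the previous version as FILE.bak. Requires GNU sed for "\?".
set_horizon_settings() {
  local file=${1:-/etc/openstack-dashboard/local_settings}
  sed -i.bak \
    -e "s/^#\?ALLOWED_HOSTS *=.*/ALLOWED_HOSTS = ['localhost', '*']/" \
    -e 's/^#\?OPENSTACK_HOST *=.*/OPENSTACK_HOST = "controller"/' \
    "$file"
}
```

If either line is missing from the file, sed leaves the file unchanged for that setting, so it is worth checking the result with `grep -E '^(ALLOWED_HOSTS|OPENSTACK_HOST)' /etc/openstack-dashboard/local_settings`.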

Start and enable the Apache HTTPD service, which serves the dashboard:

[root@controller ~]# systemctl start httpd
[root@controller ~]# systemctl enable httpd
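Starting a service and enabling it are separate operations, so it helps to check both states. The following hypothetical `check_services` helper is a minimal sketch built on `systemctl is-active` and `systemctl is-enabled`; it could be run as `check_services httpd` on the controller, or as `check_services openstack-nova-compute neutron-openvswitch-agent` on compute1.

```shell
#!/usr/bin/env bash
# Sketch: report whether each given systemd unit is running now and is
# enabled at boot. Returns non-zero if any unit fails either check.
check_services() {
  local unit rc=0
  for unit in "$@"; do
    if systemctl is-active --quiet "$unit"; then
      echo "$unit: active"
    else
      echo "$unit: NOT active"; rc=1
    fi
    if systemctl is-enabled --quiet "$unit"; then
      echo "$unit: enabled"
    else
      echo "$unit: NOT enabled"; rc=1
    fi
  done
  return "$rc"
}
```

Because the function returns non-zero on any failure, it can also be used in a script as `check_services httpd || exit 1`.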

Horizon is a Django-based web application that runs on the Apache HTTPD service. It interacts with the APIs of all the OpenStack services to gather information from them and to create new resources.

Once the service is configured, we can verify that the Horizon dashboard was installed successfully.

You can now access the dashboard with a web browser at http://controller/dashb, logging in with the admin user account and the password chosen when the admin account was created.