No matter which industry you work in—IT, fashion, food, or finance—there is no doubt that data affects your life and work. At some point this week, you will either have or hear a conversation about data. News outlets are covering more and more stories about data leaks, cybercrimes, and how data can give us a glimpse into our lives. But why now? What makes this era such a hotbed of data-related industries?

In the nineteenth century, the world was in the grip of the Industrial Age. Mankind was exploring its place in the industrial world, working with giant mechanical inventions. Captains of industry, such as Henry Ford, recognized that using these machines could open major market opportunities, enabling industries to achieve previously unimaginable profits. Of course, the Industrial Age had its pros and cons. While mass production placed goods in the hands of more consumers, our battle with pollution also began at around this time.

By the twentieth century, we were quite skilled at making huge machines; the goal now was to make them smaller and faster. The Industrial Age was over and was replaced by what we now refer to as the Information Age. We started using machines to gather and store information (data) about ourselves and our environment for the purpose of understanding our universe.

Beginning in the 1940s, machines such as ENIAC (considered one of the first—if not the first—computers) were computing math equations and running models and simulations like never before. The following photograph shows ENIAC:

ENIAC—The world's first electronic digital computer (Ref: http://ftp.arl.mil/ftp/historic-computers/)

We finally had a decent lab assistant who could run the numbers better than we could! As with the Industrial Age, the Information Age brought us both the good and the bad. The good was the extraordinary works of technology, including mobile phones and televisions. The bad was not as bad as worldwide pollution, but still left us with a problem in the twenty-first century—so much data.

That's right—the Information Age, in its quest to procure data, has exploded the production of electronic data. Estimates show that we created about 1.8 trillion gigabytes of data in 2011 (take a moment to just think about how much that is). Just one year later, in 2012, we created over 2.8 trillion gigabytes of data! This number is only going to explode further to hit an estimated 40 trillion gigabytes of created data in just one year by 2020. People contribute to this every time they tweet, post on Facebook, save a new resume on Microsoft Word, or just send their mom a picture by text message.

Not only are we creating data at an unprecedented rate, but we are also consuming it at an accelerated pace as well. Just five years ago, in 2013, the average cell phone user used under 1 GB of data a month. Today, that number is estimated to be well over 2 GB a month. We aren't just looking for the next personality quiz—what we are looking for is insight. With all of this data out there, some of it has to be useful to me! And it can be!

So we, in the twenty-first century, are left with a problem. We have so much data and we keep making more. We have built insanely tiny machines that collect data 24/7, and it's our job to make sense of it all. Enter the Data Age. This is the age when we take machines dreamed up by our nineteenth century ancestors and the data created by our twentieth century counterparts and create insights and sources of knowledge that every human on Earth can benefit from. The United States created an entirely new role in the government of chief data scientist. Many companies are now investing in data science departments and hiring data scientists. The benefit is quite obvious—using data to make accurate predictions and simulations gives us insight into our world like never before.

Sounds great, but what's the catch?

This chapter will explore the terminology and vocabulary of the modern data scientist. We will learn keywords and phrases that will be essential in our discussion of data science throughout this book. We will also learn why we use data science and learn about the three key domains that data science is derived from before we begin to look at the code in Python, the primary language used in this book. This chapter will cover the following topics:

- The basic terminology of data science

- The three domains of data science

- The basic Python syntax

What is data science?

Before we go any further, let's look at some basic definitions that we will use throughout this book. The great/awful thing about this field is that it is so young that these definitions can differ from textbook to newspaper to whitepaper.

The definitions that follow are general enough to be used in daily conversations, and work to serve the purpose of this book, an introduction to the principles of data science.

Let's start by defining what data is. This might seem like a silly first definition to look at, but it is very important. Whenever we use the word "data," we refer to a collection of information in either an organized or unorganized format. These formats have the following qualities:

- Organized data: This refers to data that is sorted into a row/column structure, where every row represents a single observation and the columns represent the characteristics of that observation.

- Unorganized data: This is the type of data that is in a free form, usually text or raw audio/signals that must be parsed further to become organized.

Whenever you open Excel (or any other spreadsheet program), you are looking at a blank row/column structure waiting for organized data. These programs don't do well with unorganized data. For the most part, we will deal with organized data as it is the easiest to glean insights from, but we will not shy away from looking at raw text and methods of processing unorganized forms of data.

Data science is the art and science of acquiring knowledge through data.

What a small definition for such a big topic, and rightfully so! Data science covers so many things that it would take pages to list it all out (I should know—I tried and got told to edit it down).

Data science is all about how we take data, use it to acquire knowledge, and then use that knowledge to do the following:

- Make decisions

- Predict the future

- Understand the past/present

- Create new industries/products

This book is all about the methods of data science, including how to process data, gather insights, and use those insights to make informed decisions and predictions.

Data science is about using data in order to gain new insights that you would otherwise have missed.

As an example, using data science, clinics can identify patients who are likely to not show up for an appointment. This can help improve margins, and providers can give other patients available slots.

That's why data science won't replace the human brain, but complement it, working alongside it. Data science should not be thought of as an end-all solution to our data woes; it is merely an opinion—a very informed opinion, but an opinion nonetheless. It deserves a seat at the table.

In this Data Age, it's clear that we have a surplus of data. But why should that necessitate an entirely new set of vocabulary? What was wrong with our previous forms of analysis? For one, the sheer volume of data makes it literally impossible for a human to parse it in a reasonable time frame. Data is collected in various forms and from different sources, and often comes in a very unorganized format.

Data can be missing, incomplete, or just flat out wrong. Oftentimes, we will have data on very different scales, and that makes it tough to compare it. Say that we are looking at data in relation to pricing used cars. One characteristic of a car is the year it was made, and another might be the number of miles on that car. Once we clean our data (which we will spend a great deal of time looking at in this book), the relationships between the data become more obvious, and the knowledge that was once buried deep in millions of rows of data simply pops out. One of the main goals of data science is to make explicit practices and procedures to discover and apply these relationships in the data.

Earlier, we looked at data science in a more historical perspective, but let's take a minute to discuss its role in business today using a very simple example.

Ben Runkle, the CEO of xyz123 Technologies, is trying to solve a huge problem. The company is consistently losing long-time customers. He does not know why they are leaving, but he must do something fast. He is convinced that in order to reduce his churn, he must create new products and features, and consolidate existing technologies. To be safe, he calls in his chief data scientist, Dr. Hughan. However, she is not convinced that new products and features alone will save the company. Instead, she turns to the transcripts of recent customer service tickets. She shows Ben the most recent transcripts and finds something surprising:

- ".... Not sure how to export this; are you?"

- "Where is the button that makes a new list?"

- "Wait, do you even know where the slider is?"

- "If I can't figure this out today, it's a real problem..."

It is clear that customers were having problems with the existing UI/UX, and weren't upset because of a lack of features. Runkle and Hughan organized a mass UI/UX overhaul and their sales have never been better.

Of course, the science used in the last example was minimal, but it makes a point. We tend to call people like Runkle drivers. Today's common stick-to-your-gut CEO wants to make all decisions quickly and iterate over solutions until something works. Dr. Hughan is much more analytical. She wants to solve the problem just as much as Runkle, but she turns to user-generated data instead of her gut feeling for answers. Data science is about applying the skills of the analytical mind and using them as a driver would.

Both of these mentalities have their place in today's enterprises; however, it is Hughan's way of thinking that dominates the ideas of data science—using data generated by the company as her source of information, rather than just picking up a solution and going with it.

It is a common misconception that only those with a PhD or geniuses can understand the math/programming behind data science. This is absolutely false. Understanding data science begins with three basic areas:

- Math/statistics: This is the use of equations and formulas to perforanalysis.

- Computer programming: This is the ability to use code to create outcomes on computer.

- Domain knowledge: This refers to understanding the problem domain (medicine, finance, social science, d so on).

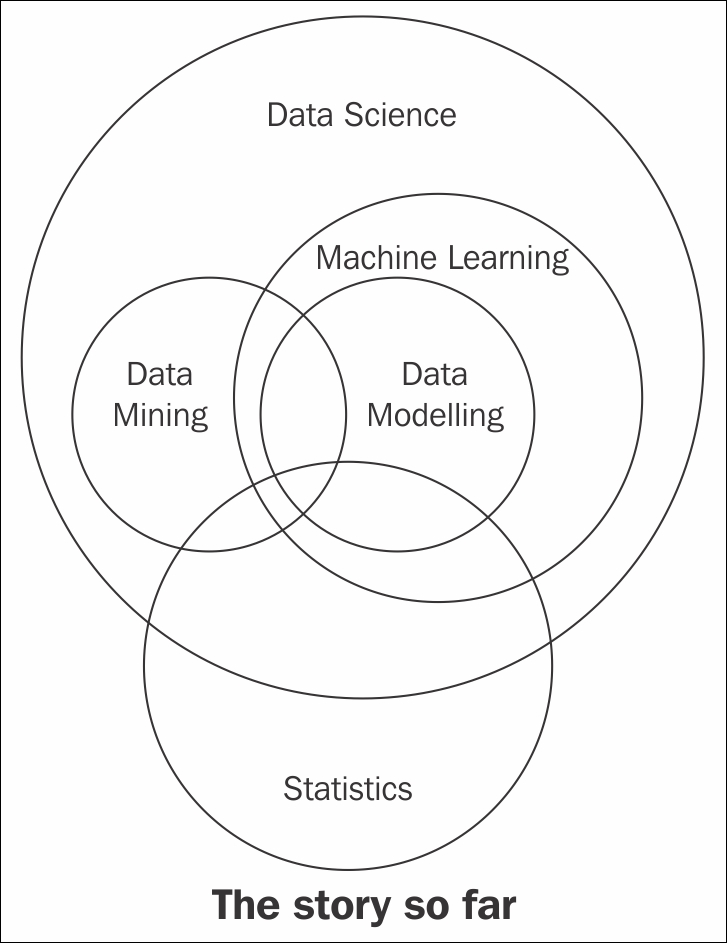

The following Venn diagram provides a visual representation of how these three areas of data science intersect:

The Venn diagram of data science

Those with hacking skills can conceptualize and program complicated algorithms using computer languages. Having a math and statistics background allows you to theorize and evaluate algorithms and tweak the existing procedures to fit specific situations. Having substantive expertise (domain expertise) allows you to apply concepts and results in a meaningful and effective way.

While having only two of these three qualities can make you intelligent, it will also leave a gap. Let's say that you are very skilled in coding and have formal training in day trading. You might create an automated system to trade in your place, but lack the math skills to evaluate your algorithms. This will mean that you end up losing money in the long run. It is only when you boost your skills in coding, math, and domain knowledge that you can truly perform data science.

The quality that was probably a surprise for you was domain knowledge. It is really just knowledge of the area you are working in. If a financial analyst started analyzing data about heart attacks, they might need the help of a cardiologist to make sense of a lot of the numbers.

Data science is the intersection of the three key areas mentioned earlier. In order to gain knowledge from data, we must be able to utilize computer programming to access the data, understand the mathematics behind the models we derive, and, above all, understand our analyses' place in the domain we are in. This includes the presentation of data. If we are creating a model to predict heart attacks in patients, is it better to create a PDF of information, or an app where you can type in numbers and get a quick prediction? All these decisions must be made by the data scientist.

Note

The intersection of math and coding is machine learning. This book will look at machine learning in great detail later on, but it is important to note that without the explicit ability to generalize any models or results to a domain, machine learning algorithms remain just that—algorithms sitting on your computer. You might have the best algorithm to predict cancer. You could be able to predict cancer with over 99% accuracy based on past cancer patient data, but if you don't understand how to apply this model in a practical sense so that doctors and nurses can easily use it, your model might be useless.

Both computer programming and math are covered extensively in this book. Domain knowledge comes with both the practice of data science and reading examples of other people's analyses.

Most people stop listening once someone says the word "math." They'll nod along in an attempt to hide their utter disdain for the topic. This book will guide you through the math needed for data science, specifically statistics and probability. We will use these subdomains of mathematics to create what are called models.

A data model refers to an organized and formal relationship between elements of data, usually meant to simulate a real-world phenomenon.

Essentially, we will use math in order to formalize relationships between variables. As a former pure mathematician and current math teacher, I know how difficult this can be. I will do my best to explain everything as clearly as I can. Between the three areas of data science, math is what allows us to move from domain to domain. Understanding the theory allows us to apply a model that we built for the fashion industry to a financial domain.

The math covered in this book ranges from basic algebra to advanced probabilistic and statistical modeling. Do not skip over these chapters, even if you already know these topics or you're afraid of them. Every mathematical concept that I will introduce will be introduced with care and purpose, using examples. The math in this book is essential for data scientists.

In biology, we use, among many other models, a model known as the spawner-recruit model to judge the biological health of a species. It is a basic relationship between the number of healthy parental units of a species and the number of new units in the group of animals. In a public dataset of the number of salmon spawners and recruits, the graph further down (titled spawner-recruit model) was formed to visualize the relationship between the two. We can see that there definitely is some sort of positive relationship (as one goes up, so does the other). But how can we formalize this relationship? For example, if we knew the number of spawners in a population, could we predict the number of recruits that the group would obtain, and vice versa?

Essentially, models allow us to plug in one variable to get the other. Consider the follo In this example, let's say we knew that a group of salmon had 1.15 (in thousands) spawners. Then, we would have t

In this example, let's say we knew that a group of salmon had 1.15 (in thousands) spawners. Then, we would have t

This result can be very beneficial to estimate how the health of a population is changing. If we can create these models, we can visually observe how the relationship between the two variables can change.

This result can be very beneficial to estimate how the health of a population is changing. If we can create these models, we can visually observe how the relationship between the two variables can change.

There are many types of data models, including probabilistic and statistical models. Both of these are subsets of a larger paradigm, called machine learning. The essential idea behind these three topics is that we use data in order to come up with the best model possible. We no longer rely on human instincts—rather, we rely on data, such as that displayed in the following graph:

The spawner-recruit model visualized

The purpose of this example is to show how we can define relationships between data elements using mathematical equations. The fact that I used salmon health data was irrelevant! Throughout this book, we will look at relationships involving marketing dollars, sentiment data, restaurant reviews, and much more. The main reason for this is that I would like you (the reader) to be exposed to as many domains as possible.

Math and coding are vehicles that allow data scientists to step back and apply their skills virtually anywhere.

Let's be honest: you probably think computer science is way cooler than math. That's ok, I don't blame you. The news isn't filled with math news like it is with news on technology. You don't turn on the TV to see a new theory on primes—rather, you will see investigative reports on how the latest smartphone can take better photos of cats, or something. Computer languages are how we communicate with machines and tell them to do our bidding. A computer speaks many languages and, like a book, can be written in many languages; similarly, data science can also be done in many languages. Python, Julia, and R are some of the many languages that are available to us. This book will focus exclusively on using Python.

We will use Python for a variety of reasons, listed as follows:

- Python is an extremely simple language to read and write, even if you've never coded before, which will make future examples easy to understand and read later on, even after you have read this book.

- It is one of the most common languages, both in production and in the academic setting (one of the fastest growing, as a matter of fact).

- The language's online community is vast and friendly. This means that a quick search for the solution to a problem should yield many people who have faced and solved similar (if not exactly the same) situations

- Python has prebuilt data science modules that both the novice and the veteran data scientist can utilize.

The last point is probably the biggest reason we will focus on Python. These prebuilt modules are not only powerful, but also easy to pick up. By the end of the first few chapters, you will be very comfortable with these modules. Some of these modules include the following:

- pandas

- scikit-learn

- seaborn

- numpy/scipy

- requests (to mine data from the web)

- BeautifulSoup (for web–HTML parsing)

Before we move on, it is important to formalize many of the requisite coding skills in Python.

In Python, we have variables that are placeholders for objects. We will focus on just a few types of basic objects at first, as shown in the following table:

|

Object Type |

Example |

|---|---|

|

|

3, 6, 99, -34, 34, 11111111 |

|

|

3.14159, 2.71, -0.34567 |

|

|

|

|

|

"I love hamburgers" (by the way, who doesn't?) "Matt is awesome" A tweet is a string |

|

|

|

We will also have to understand some basic logistical operators. For these operators, keep the Boolean datatype in mind. Every operator will evaluate to either True or False. Let's take a look at the following operators:

|

Operators |

Example |

|---|---|

|

|

Evaluates to

|

|

|

|

|

|

|

|

|

|

|

|

|

When coding in Python, I will use a pound sign (#) to create a "comment," which will not be processed as code, but is merely there to communicate with the reader. Anything to the right of a # sign is a comment on the code being executed.

In Python, we use spaces/tabs to denote operations that belong to other lines of code.

Note that the following list variable, my_list, can hold multiple types of objects. This one has an int, a float, a boolean, and string inputs (in that order):

my_list = [1, 5.7, True, "apples"] len(my_list) == 4 # 4 objects in the list my_list[0] == 1 # the first object my_list[1] == 5.7 # the second object

In the preceding code, I used the len command to get the length of the list (which was 4). Also, note the zero-indexing of Python. Most computer languages start counting at zero instead of one. So if I want the first element, I call index 0, and if I want the 95th element, I call index 94.

Here is some more Python code. In this example, I will be parsing some tweets about stock prices (one of the important case studies in this book will be trying to predict market movements based on popular sentiment regarding stocks on social media):

tweet = "RT @j_o_n_dnger: $TWTR now top holding for Andor, unseating $AAPL"

words_in_tweet = tweet.split(' ') # list of words in tweet

for word in words_in_tweet: # for each word in list

if "$" in word: # if word has a "cashtag"

print("THIS TWEET IS ABOUT", word) # alert the user I will point out a few things about this code snippet line by line, as follows:

- First, we set a variable to hold some text (known as a string in Python). In this example, the tweet in question is

"RT @robdv: $TWTR now top holding for Andor, unseating $AAPL". - The

words_in_tweetvariable tokenizes the tweet (separates it by word). If you were to print this variable, you would see the following:['RT', '@robdv:', '$TWTR', 'now', 'top', 'holding', 'for', 'Andor,', 'unseating', '$AAPL']

- We iterate through this list of words; this is called a

forloop. It just means that we go through a list one by one. - Here, we have another

ifstatement. For each word in this tweet, if the word contains the$character it represents stock tickers on Twitter. - If the preceding

ifstatement isTrue(that is, if the tweet contains a cashtag), print it and show it to the user.

The output of this code will be as follows:

THIS TWEET IS ABOUT $TWTR THIS TWEET IS ABOUT $AAPL

We get this output as these are the only words in the tweet that use the cashtag. Whenever I use Python in this book, I will ensure that I am as explicit as possible about what I am doing in each line of code.

As I mentioned earlier, domain knowledge focuses mainly on having knowledge of the particular topic you are working on. For example, if you are a financial analyst working on stock market data, you have a lot of domain knowledge. If you are a journalist looking at worldwide adoption rates, you might benefit from consulting an expert in the field. This book will attempt to show examples from several problem domains, including medicine, marketing, finance, and even UFO sightings!

Does this mean that if you're not a doctor, you can't work with medical data? Of course not! Great data scientists can apply their skills to any area, even if they aren't fluent in it. Data scientists can adapt to the field and contribute meaningfully when their analysis is complete.

A big part of domain knowledge is presentation. Depending on your audience, it can matter greatly on how you present your findings. Your results are only as good as your vehicle of communication. You can predict the movement of the market with 99.99% accuracy, but if your program is impossible to execute, your results will go unused. Likewise, if your vehicle is inappropriate for the field, your results will go equally unused.

This is a good time to define some more vocabulary. By this point, you're probably excitedly looking up a lot of data science material and seeing words and phrases I haven't used yet. Here are some common terms that you are likely to encounter.

- Machine learning: This refers to giving computers the ability to learn from data without explicit "rules" being given by a programmer. We have seen the concept of machine learning earlier in this chapter as the union of someone who has both coding and math skills. Here, we are attempting to formalize this definition. Machine learning combines the power of computers with intelligent learning algorithms in order to automate the discovery of relationships in data and create powerful data models. Speaking of data models, in this book, we will concern ourselves with the following two basic types of data model:

- Probabilistic model: This refers to using probability to find a relationship between elements that includes a degree of randomness.

- Statistical model: This refers to taking advantage of statistical theorems to formalize relationships between data elements in a (usually) simple mathematical formula.

Note

While both the statistical and probabilistic models can be run on computers and might be considered machine learning in that regard, we will keep these definitions separate, since machine learning algorithms generally attempt to learn relationships in different ways. We will take a look at the statistical and probabilistic models in later chapters.

- Exploratory data analysis (EDA): This refers to preparing data in order to standardize results and gain quick insights. EDA is concerned with data visualization and preparation. This is where we turn unorganized data into organized data and clean up missing/incorrect data points. During EDA, we will create many types of plots and use these plots to identify key features and relationships to exploit in our data models.

- Data mining: This is the process of finding relationships between elements of data. Data mining is the part of data science where we try to find relationships between variables (think the spawn-recruit model).

I have tried pretty hard not to use the term big data up until now. This is because I think this term is misused, a lot. Big data is data that is too large to be processed by a single machine (if your laptop crashed, it might be suffering from a case of big data).

The following diagram shows the relationship between these data science concepts:

The state of data science (so far)

The preceding diagram is incomplete and is meant for visualization purposes only.

The combination of math, computer programming, and domain knowledge is what makes data science so powerful. Oftentimes, it is difficult for a single person to master all three of these areas. That's why it's very common for companies to hire teams of data scientists instead of a single person. Let's look at a few powerful examples of data science in action and their outcomes.

Social security claims are known to be a major hassle for both the agent reading it and the person who wrote the claim. Some claims take over two years to get resolved in their entirety, and that's absurd! Let's look at the following diagram, which shows what goes into a claim:

Sample social security form

Not bad. It's mostly just text, though. Fill this in, then that, then this, and so on. You can see how it would be difficult for an agent to read these all day, form after form. There must be a better way!

Well, there is. Elder Research Inc. parsed this unorganized data and was able to automate 20% of all disability social security forms. This means that a computer could look at 20% of these written forms and give its opinion on the approval.

Not only that—the third-party company that is hired to rate the approvals of the forms actually gave the machine-graded forms a higher grade than the human forms. So, not only did the computer handle 20% of the load on average, it also did better than a human.

Before I get a load of angry emails claiming that data science is bringing about the end of human workers, keep in mind that the computer was only able to handle 20% of the load. This means that it probably performed terribly on 80% of the forms! This is because the computer was probably great at simple forms. The claims that would have taken a human minutes to compute took the computer seconds. But these minutes add up, and before you know it, each human is being saved over an hour a day!

Forms that might be easy for a human to read are also likely easy for the computer. It's when the forms are very terse, or when the writer starts deviating from the usual grammar, that the computer starts to fail. This model is great because it lets the humans spend more time on those difficult claims and gives them more attention without getting distracted by the sheer volume of papers.

A dataset shows the relationships between TV, radio, and newspaper sales. The goal is to analyze the relationships between the three different marketing mediums and how they affect the sale of a product. In this case, our data is displayed in the form of a table. Each row represents a sales region, and the columns tell us how much money was spent on each medium, as well as the profit that was gained in that region. For example, from the following table, we can see that in the third region, we spent $17,200 on TV advertising and sold 9,300 widgets:

Note

Usually, the data scientist must ask for units and the scale. In this case, I will tell you that the TV, radio, and newspaper categories are measured in "thousands of dollars" and the sales in "thousands of widgets sold." This means that in the first region, $230,100 was spent on TV advertising, $37,800 on radio advertising, and $69,200 on newspaper advertising. In the same region, 22,100 items were sold.

Advertising budgets' data

If we plot each variable against the sales, we get the following graph:

import pandas as pd

import seaborn as sns

%matplotlib inline

data = pd.read_csv('http://www-bcf.usc.edu/~gareth/ISL/Advertising.csv', index_col=0)

data.head()

sns.pairplot(data, x_vars=['TV','radio','newspaper'], y_vars='sales', height=4.5, aspect=0.7)

Results – Graphs of advertising budgets

Note how none of these variables form a very strong line, and that therefore they might not work well in predicting sales on their own. TV comes closest in forming an obvious relationship, but even that isn't great. In this case, we will have to create a more complex model than the one we used in the spawner-recruiter model and combine all three variables in order to model sales.

This type of problem is very common in data science. In this example, we are attempting to identify key features that are associated with the sales of a product. If we can isolate these key features, then we can exploit these relationships and change how much we spend on advertising in different places with the hope of increasing our sales.

Looking for a job in data science? Great! Let me help. In this case study, I have "scraped" (taken from the web) 1,000 job descriptions for companies that are actively hiring data scientists. The goal here is to look at some of the most common keywords that people use in their job descriptions, as shown in the following screenshot:

An example of data scientist job listings

In the following Python code, the first two imports are used to grab web data from the website http://indeed.com/, and the third import is meant to simply count the number of times a word or phrase appears, as shown in the following code:

import requests

from bs4 import BeautifulSoup

from sklearn.feature_extraction.text import CountVectorizer

# grab postings from the webtexts = []

for i in range(0,1000,10): # cycle through 100 pages of indeed job resources

soup = BeautifulSoup(requests.get('http://www.indeed.com/jobs?q=data+scientist&start='+str(i)).text)

texts += [a.text for a in soup.findAll('span', {'class':'summary'})]

print(type(texts))

print(texts[0]) # first job descriptionAll that this loop is doing is going through 100 pages of job descriptions, and for each page, grabbing each job description. The important variable here is texts, which is a list of over 1,000 job descriptions, as shown in the following code:

type(texts) # == list vect = CountVectorizer(ngram_range=(1,2), stop_words='english') # Get basic counts of one and two word phrases matrix = vect.fit_transform(texts) # fit and learn to the vocabulary in the corpus print len(vect.get_feature_names()) # how many features are there # There are 10,587 total one and two words phrases in my case!! Since web pages are scraped in real-time and these pages may change since this code is run, you may get different number than 10587.

I have omitted some code here, but it exists in the GitHub repository for this book. The results are as follows (represented as the phrase and then the number of times it occurred):

The following list shows some things that we should mention:

- "Machine learning" and "experience" are at the top of the list. Experience comes with practice. A basic idea of machine learning comes with this book.

- These words are followed closely by statistical words implying a knowledge of math and theory.

- The word "team" is very high up, implying that you will need to work with a team of data scientists; you won't be a lone wolf.

- Computer science words such as "algorithms" and "programming" are prevalent.

- The words "techniques", "understanding", and "methods" imply a more theoretical approach, unrelated to any single domain.

- The word "business" implies a particular problem domain.

There are many interesting things to note about this case study, but the biggest take away is that there are many keywords and phrases that make up a data science role. It isn't just math, coding, or domain knowledge; it truly is a combination of these three ideas (whether exemplified in a single person or across a multiperson team) that makes data science possible and powerful.

At the beginning of this chapter, I posed a simple question: what's the catch of data science? Well, there is one. It isn't all fun, games, and modeling. There must be a price for our quest to create ever-smarter machines and algorithms. As we seek new and innovative ways to discover data trends, a beast lurks in the shadows. I'm not talking about the learning curve of mathematics or programming, nor am I referring to the surplus of data. The Industrial Age left us with an ongoing battle against pollution. The subsequent Information Age left behind a trail of big data. So, what dangers might the Data Age bring us?

The Data Age can lead to something much more sinister — the dehumanization of the individual through mass data.

More and more people are jumping head-first into the field of data science, most with no prior experience of math or CS, which, on the surface, is great. Average data scientists have access to millions of dating profiles' data, tweets, online reviews, and much more in order to jump start their education.

However, if you jump into data science without the proper exposure to theory or coding practices, and without respect for the domain you are working in, you face the risk of oversimplifying the very phenomenon you are trying to model.

For example, let's say you want to automate your sales pipeline by building a simplistic program that looks at LinkedIn for very specific keywords in a person's LinkedIn profile. You could use the following code to do this:

keywords = ["Saas", "Sales", "Enterprise"]

Great. Now you can scan LinkedIn quickly to find people who match your criteria. But what about that person who spells out "Software as a Service", instead of "SaaS," or misspells "enterprise" (it happens to the best of us; I bet someone will find a typo in my book). How will your model figure out that these people are also a good match? They should not be left behind just because the cut-corners data scientist has overgeneralized people in such an easy way.

The programmer chose to simplify their search for another human by looking for three basic keywords and ended up with a lot of missed opportunities left on the table.

In the next chapter, we will explore the different types of data that exist in the world, ranging from free-form text to highly structured row/column files. We will also look at the mathematical operations that are allowed for different types of data, as well as deduce insights based on the form of the data that comes in.

Download code from GitHub

Download code from GitHub