In the world of Microsoft system administration, it is becoming impossible to avoid PowerShell. If you've never looked at PowerShell before or want a refresher to make sure you understand what is going on, this chapter would be a good place to start. We will cover the following topics:

- Determining the installed PowerShell version

- Installing/Upgrading PowerShell

- Starting a PowerShell session

- Simple PowerShell commands

- PowerShell Aliases

There are many ways to determine which version of PowerShell, if any, is installed on a computer. We will examine how this information is stored in the registry and how to have PowerShell tell you the version.

Tip

It's helpful while learning new things to actually follow along on the computer as much as possible. A big part of the learning process is developing "muscle memory", where you get used to typing the commands you see throughout Module 1. As you do this, it will also help you pay closer attention to the details in the code you see, since the code won't work correctly if you don't get the syntax right.

If you're running at least Windows 7 or Server 2008 R2, you already have PowerShell installed on your machine. Windows XP and Server 2003 can install PowerShell, but it isn't pre-installed as part of the operating system (OS). To see if PowerShell is installed at all, no matter what OS is running, inspect the following registry entry:

HKLM\Software\Microsoft\PowerShell\1\Install

If this exists and contains the value of 1, PowerShell is installed. To see, using the registry, what version of PowerShell is installed, there are a couple of places to look at.

First, the following value will exist if PowerShell 3.0 or higher is installed:

HKLM\SOFTWARE\Microsoft\PowerShell\3\PowerShellEngine\PowerShellVersion

If this value exists, it will contain the PowerShell version that is installed. For instance, my Windows 7 computer is running PowerShell 4.0, so regedit.exe shows the value of 4.0 in that entry:

If this registry entry does not exist, you have either PowerShell 1.0 or 2.0 installed. To determine which, examine the following registry entry (note the 3 is changed to 1):

HKLM\SOFTWARE\Microsoft\PowerShell\1\PowerShellEngine\PowerShellVersion

This entry will either contain 1.0 or 2.0, to match the installed version of PowerShell.

It is important to note that all versions of PowerShell after 2.0 include the 2.0 engine, so this registry entry will exist for any version of PowerShell. For example, even if you have PowerShell 5.0 installed, you will also have the PowerShell 2.0 engine installed and will see both 5.0 and 2.0 in the registry.
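The registry checks described above can also be scripted from PowerShell itself through the HKLM: registry drive. The following is a minimal sketch rather than a definitive implementation: the function name Get-RegistryPowerShellVersion is hypothetical, and it assumes a Windows machine with the registry layout described in this section.

```powershell
# Hypothetical helper: reads the PowerShell engine versions recorded in the registry.
# Assumes the registry layout described in this section (Windows only).
function Get-RegistryPowerShellVersion {
    # Engine key '1' is used by versions 1.0 and 2.0; key '3' by version 3.0 and higher.
    foreach ($engine in '1', '3') {
        $key = "HKLM:\SOFTWARE\Microsoft\PowerShell\$engine\PowerShellEngine"
        if (Test-Path $key) {
            # PowerShellVersion is a string value such as '2.0' or '4.0'.
            (Get-ItemProperty -Path $key).PowerShellVersion
        }
    }
}

# Lists every engine version found; prints nothing if neither key exists.
Get-RegistryPowerShellVersion
```

On a machine with PowerShell 3.0 or higher, this would be expected to list two values, because the 2.0 engine entry is present as well.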

No matter what version of PowerShell you have installed, it will be installed in the same place. This installation directory is called PSHOME and is located at:

%WINDIR%\System32\WindowsPowerShell\v1.0\

In this folder, there will be an executable file called PowerShell.exe. There should be a shortcut to this in your start menu, or in the start screen, depending on your operating system. In either of these, search for PowerShell and you should find it. Running this program opens the PowerShell console, which is present in all the versions.

At first glance, this looks like Command Prompt but the text (and perhaps the color) should give a clue that something is different:

In this console, type $PSVersionTable in the command-line and press Enter. If the output is an error message, PowerShell 1.0 is installed, because the $PSVersionTable variable was introduced in PowerShell 2.0. If a table of version information is seen, the PSVersion entry in the table is the installed version. The following is the output on a typical computer, showing that PowerShell 4.0 is installed:
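The same check can be written as a short snippet that copes with PowerShell 1.0 instead of producing an error. This is only a sketch; the message text is made up for illustration.

```powershell
# $PSVersionTable does not exist in PowerShell 1.0, so test for the variable first.
if (Test-Path Variable:\PSVersionTable) {
    # PSVersion is a version object, so its Major and Minor parts can be read directly.
    $version = $PSVersionTable.PSVersion
    "PowerShell $($version.Major).$($version.Minor) is installed"
}
else {
    'PowerShell 1.0 is installed'
}
```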

If you don't have PowerShell installed or want a more recent version of PowerShell, you'll need to find the Windows Management Framework (WMF) download that matches the PowerShell version you want. WMF includes PowerShell as well as other related tools such as Windows Remote Management (WinRM), Windows Management Instrumentation (WMI), and Desired State Configuration (DSC). The contents of the distribution change from version to version, so make sure to read the release notes included in the download. Here are links to the installers:

| PowerShell Version | URL |
|---|---|
| 1.0 | |
| 2.0 | |
| 3.0 | http://www.microsoft.com/en-us/download/details.aspx?id=34595 |
| 4.0 | http://www.microsoft.com/en-us/download/details.aspx?id=40855 |
| 5.0 (Feb. Preview) | http://www.microsoft.com/en-us/download/details.aspx?id=45883 |

Note that PowerShell 5.0 has not been officially released, so the table lists the February 2015 preview, the latest at the time of writing.

The PowerShell 1.0 installer was released as an executable (.exe), but all releases since then have been Windows Update Standalone Installer packages (.msu). All of these are painless to execute: simply download the file and run it from Windows Explorer or from the Run… option in the Start menu. PowerShell installs don't typically require a reboot, but it's best to plan on doing one, just in case.

It's important to note that you can only have one version of PowerShell installed, and you can't install a lower version than the version that was shipped with your OS. Also, there are noted compatibility issues between various versions of PowerShell and Microsoft products such as Exchange, System Center, and Small Business Server, so make sure to read the system requirements section on the download page. Most of the conflicts can be resolved with a service pack of the software, but you should be sure of this before upgrading PowerShell on a server.

We already started a PowerShell session earlier in the section on using PowerShell to find the installed version. So, what more is there to see? It turns out that there is more than one program used to run PowerShell, possibly more than one version of each of these programs, and finally, more than one way to start each of them. It might sound confusing but it will all make sense shortly.

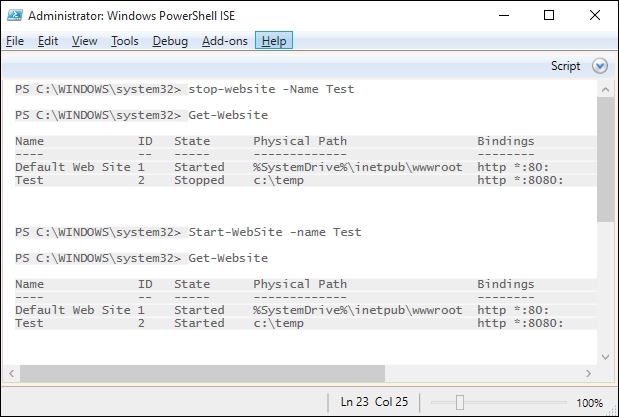

PowerShell hosts

A PowerShell host is a program that provides access to the PowerShell engine in order to run PowerShell commands and scripts. The PowerShell.exe that we saw in the PSHOME directory earlier in this chapter is known as the console host. It is cosmetically similar to Command Prompt (cmd.exe) and only provides a command-line interface. Starting with Version 2.0 of PowerShell, a second host was provided.

The Integrated Scripting Environment (ISE) is a graphical environment providing multiple editors in a tabbed interface along with menus and the ability to use plugins. While not as fully featured as an Integrated Development Environment (IDE), the ISE is a tremendous productivity tool for building PowerShell scripts and a great improvement over developing in a plain editor such as Notepad.

The ISE executable is stored in PSHOME and is named powershell_ise.exe. In Version 2.0 of the ISE, there were three sections: a tabbed editor, a console for input, and a section for output. Starting with Version 3.0, the input and output sections were combined into a single console that more closely resembles the console host. The Version 4.0 ISE is shown as follows:

I will be using the Light Console, Light Editor theme for the ISE in most of the screenshots for Module 1, because the dark console does not work well on the printed page. To switch to this theme, open the Options item in the Tools menu and find Manage Themes... in the options window:

Press the Manage Themes... button, select the Light Console, Light Editor option from the list, and press OK. Press OK again to exit the options screen, and your ISE should look similar to the following:

Note that you can customize the appearance of the text in the editor and the console pane in other ways as well. Other than switching to the light console display, I will try to keep the default settings.

64-bit and 32-bit PowerShell

In addition to the console host and the ISE, if you have a 64-bit operating system, you will also have 64-bit and 32-bit PowerShell installations that will include separate copies of both the hosts.

As mentioned before, the main installation directory, or PSHOME, is found at %WINDIR%\System32\WindowsPowerShell\v1.0\. The bitness of the PowerShell in PSHOME matches that of the operating system. In other words, on a 64-bit OS, the PowerShell in PSHOME is 64-bit. On a 32-bit system, PSHOME has a 32-bit PowerShell install. On a 64-bit system, a second, 32-bit installation is found in %WINDIR%\SysWOW64\WindowsPowerShell\v1.0.
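To find out which of the two installations a running session belongs to, you can ask the session itself. This is a minimal sketch: it relies on the size of a pointer in the current process and on the built-in $PSHOME variable, and the message text is only illustrative.

```powershell
# [IntPtr]::Size is 8 in a 64-bit process and 4 in a 32-bit process.
$bitness = [IntPtr]::Size * 8
"This is a $($bitness)-bit PowerShell process"

# $PSHOME holds the installation directory of the running host.
"Installed in: $PSHOME"
```

Run from the (x86) host on a 64-bit system, the second line should report the SysWOW64 location described above.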

Tip

Isn't that backward?

It seems backward that the 64-bit install is in the System32 folder and the 32-bit install is in SysWOW64. The System32 folder is always the primary system directory on a Windows computer, and this name has remained for backward compatibility reasons. SysWOW64 is short for Windows on Windows 64-bit. It contains the 32-bit binaries required for 32-bit programs to run in a 64-bit system, since 32-bit programs can't use the 64-bit binaries in System32.

Looking in the All Programs\Accessories\Windows PowerShell folder in the Start menu of a 64-bit Windows 7 install, we see the following:

Here, the 32-bit hosts are labeled as (x86) and the 64-bit versions are undesignated. When you run the 32-bit hosts on a 64-bit system, you will also see the (x86) designation in the title bar:

PowerShell as an administrator

When you run a PowerShell host, the session is not elevated. This means that even though you might be an administrator of the machine, the PowerShell session is not running with administrator privileges. This is a safety feature to help prevent users from inadvertently running a script that damages the system.

To run a PowerShell session as an administrator, you have a couple of options. First, you can right-click on the shortcut for the host and select Run as administrator from the context menu. When you do this, unless you have disabled User Account Control (UAC) alerts, you will see a UAC prompt verifying whether you want to allow the application to run as an administrator.

Selecting Yes allows the program to run as an administrator, and the title bar reflects that this is the case:

The second way to run one of the hosts as an administrator is to right-click on the shortcut and choose Properties. On the Shortcut tab of the properties window, press the Advanced button. In the Advanced Properties window that pops up, check the Run as administrator checkbox and press OK, then press OK again to exit the properties window:

Using this technique will cause the shortcut to always launch as an administrator, although the UAC prompt will still appear.
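Besides looking at the title bar, a session can check for itself whether it is elevated. The following is a sketch under stated assumptions: the function name Test-IsElevated is hypothetical, and the code assumes Windows, since it relies on the .NET WindowsPrincipal class.

```powershell
# Hypothetical helper: returns $true when the current session is elevated.
# Assumes Windows; WindowsIdentity and WindowsPrincipal are .NET classes.
function Test-IsElevated {
    $identity  = [Security.Principal.WindowsIdentity]::GetCurrent()
    $principal = New-Object Security.Principal.WindowsPrincipal($identity)
    $principal.IsInRole([Security.Principal.WindowsBuiltInRole]::Administrator)
}

if (-not (Test-IsElevated)) {
    'This session is not elevated'
    # -Verb RunAs requests elevation, so the UAC prompt still appears.
    # Uncomment to open a new, elevated console host:
    # Start-Process powershell.exe -Verb RunAs
}
```

Starting an elevated host this way is a third option alongside the two shortcut-based techniques described above.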

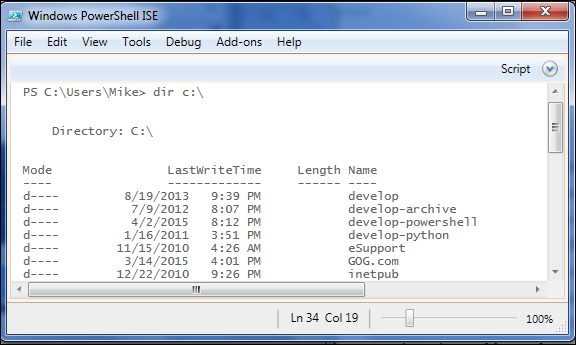

Now that we know the various ways to start a PowerShell session, what can we do in one? I like to introduce people to PowerShell by pointing out that most of the command-line tools that they already know work fine in PowerShell. For instance, try using DIR, CD, IPCONFIG, and PING. Commands that are part of Command Prompt (think DOS commands) might work slightly differently in PowerShell if you look closely, but typical command-line applications work exactly the same as they have always worked in Command Prompt:

PowerShell commands, called cmdlets, are named with a verb-noun convention. Approved verbs come from a list maintained by Microsoft and can be displayed using the Get-Verb cmdlet:

By controlling the list of verbs, Microsoft has made it easier to learn PowerShell. The list is not very long and it doesn't contain verbs that have the same meaning (such as Stop, End, Terminate, and Quit), so once you learn a cmdlet using a specific verb, you can easily guess the meaning of the cmdlet names that include the verb.
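To see this for yourself, you can test a few near-synonyms against the approved list. This is a small sketch; the four verbs are simply the ones named in the preceding paragraph, and the output format is made up for illustration.

```powershell
# Collect the approved verb names (Select-Object -ExpandProperty also works in PowerShell 2.0).
$approved = Get-Verb | Select-Object -ExpandProperty Verb

# Report, for each candidate verb, whether it appears in the approved list.
foreach ($verb in 'Stop', 'End', 'Terminate', 'Quit') {
    '{0,-10} approved: {1}' -f $verb, ($approved -contains $verb)
}
```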

Some other easy to understand cmdlets are:

- Clear-Host (clears the screen)

- Get-Date (outputs the date)

- Start-Service (starts a service)

- Stop-Process (stops a process)

- Get-Help (shows help about something)

Note that these use several different verbs. From this list, you can probably guess what cmdlet you would use to stop a service. Since you know there's a Start-Service cmdlet, and you know from the Stop-Process cmdlet that Stop is a valid verb, it is logical that Stop-Service is what you would use. The consistency of PowerShell cmdlet naming is a tremendous benefit to learners of PowerShell, and it is a policy that is important as you write the PowerShell code.

Tip

What is a cmdlet?

The term cmdlet was coined by Jeffrey Snover, the inventor of PowerShell, to refer to PowerShell commands. PowerShell commands aren't particularly different from other commands, but by giving them a unique name, he ensured that PowerShell users would be able to use search engines to easily find PowerShell code simply by including the term cmdlet.

If you tried to use DIR and CD in the last section, you may have noticed that they didn't work exactly like the DOS commands that they resemble. In case you didn't see this, enter DIR /S at a PowerShell prompt and see what happens. You will either get an error complaining about a path not existing, or get a listing of a directory called S. Either way, it's not a listing of files including subdirectories. Similarly, you might have noticed that CD in PowerShell allows you to switch between drives without using the /D option and even lets you change to a UNC path. The point is that these are not DOS commands. What you're seeing is a PowerShell alias.

Aliases in PowerShell are alternate names that PowerShell uses for both PowerShell commands and programs. For instance, in PowerShell, DIR is actually an alias for the Get-ChildItem cmdlet. The CD alias points to the Set-Location cmdlet. Aliases exist for many of the cmdlets that perform operations similar to the commands in DOS, Linux, or Unix shells. Aliases serve two main purposes in PowerShell, as follows:

- They allow more concise code at the command line

- They ease users' transition from other shells to PowerShell

To see a list of all the aliases defined in PowerShell, you can use the Get-Alias cmdlet. To find what an alias references, type Get-Alias <alias>. For example, see the following screenshot:

To find out what aliases exist for a cmdlet, type Get-Alias -Definition <cmdlet> as follows:

Here we can see that the Get-ChildItem cmdlet has three aliases. The first and last assist in transitioning from DOS and Linux shells, and the middle one is an abbreviation using the first letters in the name of the cmdlet.
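The two lookups can be tried directly at the prompt. This is a short sketch using the DIR alias from this section; the last command is left commented out because a recursive listing can produce a lot of output.

```powershell
# What does the DIR alias point to?
Get-Alias dir

# Which aliases exist for the Get-ChildItem cmdlet?
Get-Alias -Definition Get-ChildItem

# The PowerShell counterpart of DIR /S is the -Recurse switch of Get-ChildItem.
# Uncomment to list the current directory and all of its subdirectories:
# Get-ChildItem -Recurse
```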

This chapter focused on figuring out what version of PowerShell was installed and the many ways to start a PowerShell session. A quick introduction to PowerShell cmdlets showed that a lot of the command-line knowledge we have from DOS can be used in PowerShell and that aliases make this transition easier.

In the next chapter, we will look at the Get-Command, Get-Help, and Get-Member cmdlets, and learn how they unlock the entire PowerShell ecosystem for us.

The Monad Manifesto, which outlines the original vision of the PowerShell project: http://blogs.msdn.com/b/powershell/archive/2007/03/19/monad-manifesto-the-origin-of-windows-powershell.aspx

Microsoft's approved cmdlet verb list: http://msdn.microsoft.com/en-us/library/ms714428.aspx

- Get-Help about_Aliases

- Get-Help about_PowerShell.exe

- Get-Help about_PowerShell_ISE.exe

Even though books, videos, and the Internet can be helpful in your efforts to learn PowerShell, you will find that your greatest ally in this quest is PowerShell itself. In this chapter, we will look at two fundamental weapons in any PowerShell scripter's arsenal, the Get-Command and Get-Help cmdlets. The topics covered in this chapter include the following:

- Finding commands using Get-Command

- Finding commands using tab completion

- Using Get-Help to understand cmdlets

- Interpreting the command syntax

You saw in the previous chapter that you are able to run standard command-line programs in PowerShell and that there are aliases defined for some cmdlets that allow you to use the names of commands that you are used to from other shells. Other than these, what can you use? How do you know which commands, cmdlets, and aliases are available?

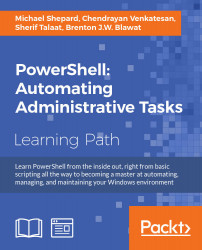

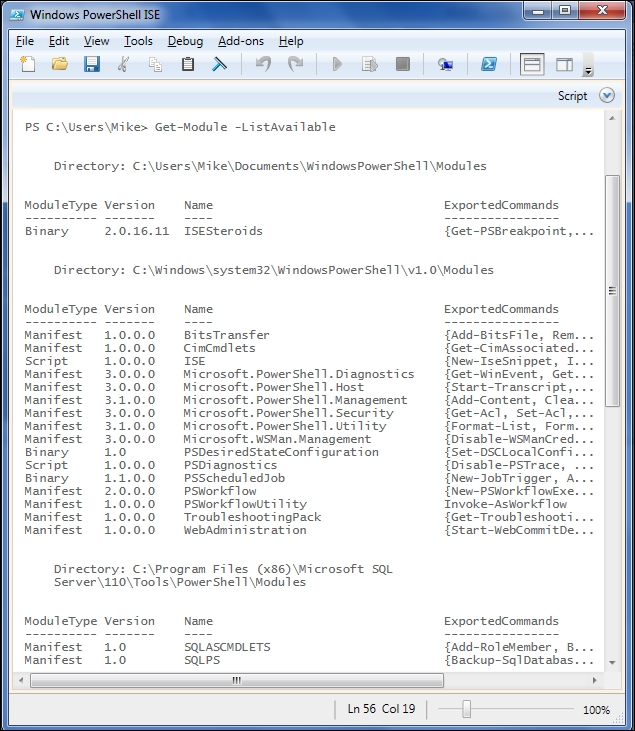

The answer is the first of the big three cmdlets, the Get-Command cmdlet. Executing Get-Command with no parameters lists the cmdlets, functions, and aliases that PowerShell knows about. Executing Get-Command * widens the list to every entity that PowerShell considers to be executable, which adds scripts and the programs found in the path (the environment variable).

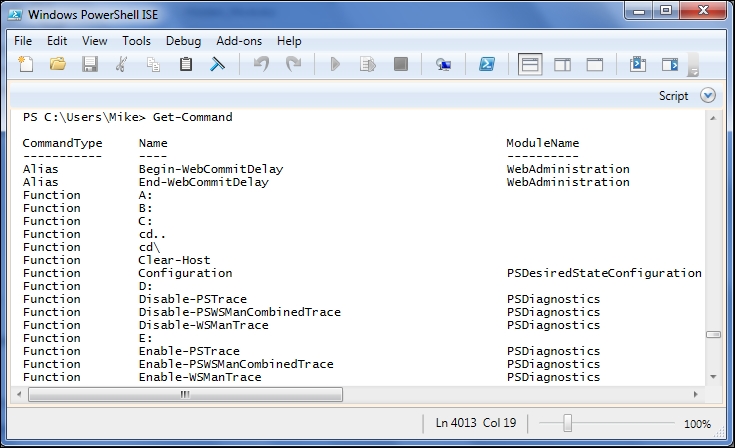

This list of commands is long and gets longer with each new operating system and PowerShell release. To count the output, we can use this command:

Get-Command | Measure-Object

The output of this command is as follows:

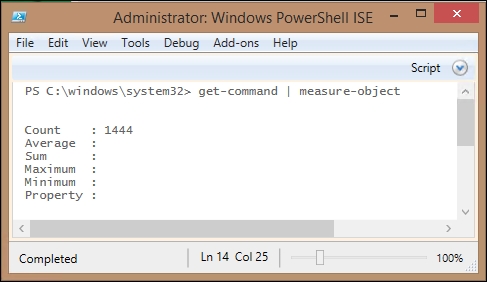

The Measure-Object cmdlet can be used to count the number of items in the output from a command. In this case, it tells us that there are 1444 different commands available to us. In the output of Get-Command, we can see that there is a CommandType column. A corresponding -CommandType parameter of the Get-Command cmdlet allows us to filter the output and show only certain kinds of commands. Limiting the output to the cmdlets shows that there are 706 different cmdlets available:

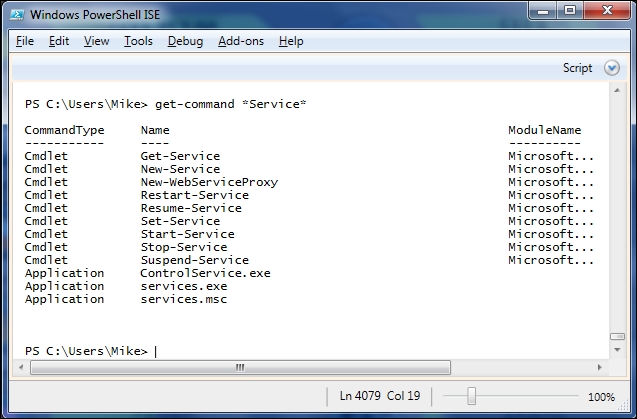
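The filtered count can be reproduced with the -CommandType parameter. In this sketch, the variable name $cmdletCount is only illustrative, and the numbers you see will differ from those quoted above depending on your operating system, PowerShell version, and installed modules.

```powershell
# Count only the cmdlets.
$cmdletCount = (Get-Command -CommandType Cmdlet | Measure-Object).Count
"Cmdlets available: $cmdletCount"

# Break the whole list down by command type, largest group first.
Get-Command |
    Group-Object CommandType |
    Sort-Object Count -Descending |
    Select-Object Count, Name
```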

Getting a big list such as this isn't very useful though. In reality, you are usually going to use Get-Command to find commands that pertain to a component you want to work with. For instance, if you need to work with Windows services, you could issue a command such as Get-Command *Service* using wildcards to include any command that includes the word service in its name. The output would look something similar to the following:

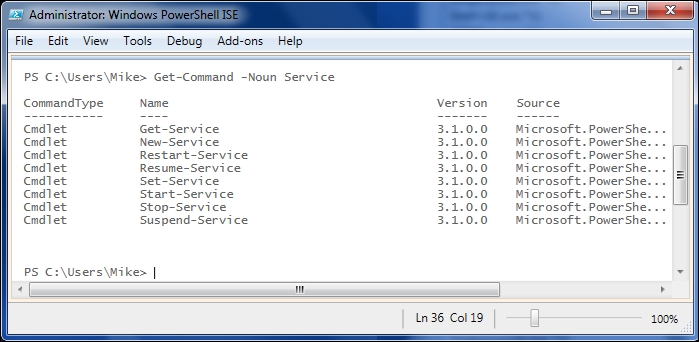

You can see in the output that applications found in the current path and the PowerShell cmdlets are both included. Unfortunately, there's also a cmdlet called New-WebServiceProxy, which clearly doesn't have anything to do with Windows services. To better control the matching of cmdlets with your subject, you can use the -Noun parameter to filter the commands. Note that by naming a noun, you are implicitly limiting the results to PowerShell commands because nouns are a PowerShell concept. Using this approach gives us a smaller list:
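A minimal sketch of the noun-based search, using Service as the noun from the running example:

```powershell
# Match on the noun part of the name only, which leaves out New-WebServiceProxy
# and any applications that merely have "service" somewhere in their file name.
Get-Command -Noun Service
```

The exact list depends on the PowerShell version, but every name returned ends in -Service.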

Although Get-Command is a great way to find cmdlets, the truth is that the PowerShell cmdlet names are very predictable. In fact, they're so predictable that after you've been using PowerShell for a while, you probably won't turn to Get-Command very often. After you've found the noun or the set of nouns that you're working with, the powerful tab completion found in both the PowerShell console and the ISE will allow you to enter just a part of the command and press Tab to cycle through the list of commands that match what you have entered. For instance, in keeping with our examples dealing with services, you could enter *-Service at the command line and press Tab. You would first see Get-Service, followed by the rest of the items in the previous screenshot as you keep pressing Tab. Tab completion is a huge benefit for your scripting productivity for several reasons, such as:

- You get the suggestions where you need them

- The suggestions are all valid command names

- The suggestions are consistently capitalized

In addition to being able to complete the command names, tab completion also gives suggestions for parameter names and for property and method names, and in PowerShell 3.0 and above, it suggests parameter values as well. The combination of tab completion improvements and IntelliSense in the ISE is so impressive that I recommend that people upgrade their development workstation to at least PowerShell 3.0, even if they are developing PowerShell code that will run in a 2.0 environment.

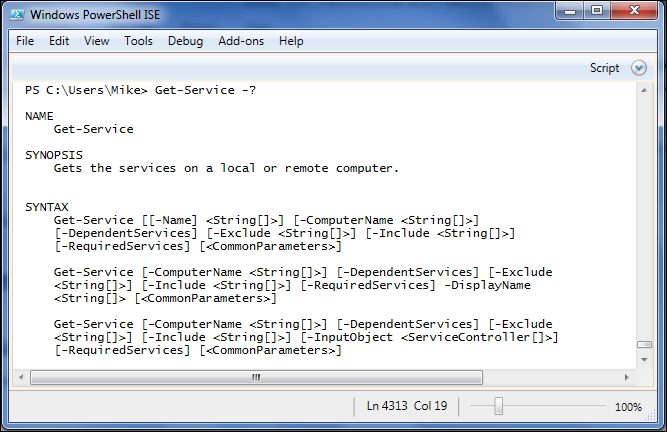

Knowing what commands you can execute is a big step, but it doesn't help much if you don't know how you can use them. Again, PowerShell is here to help you. To see a quick hint of how to use a cmdlet, write the cmdlet name followed by -?. The beginning of this output for Get-Service is shown as follows:

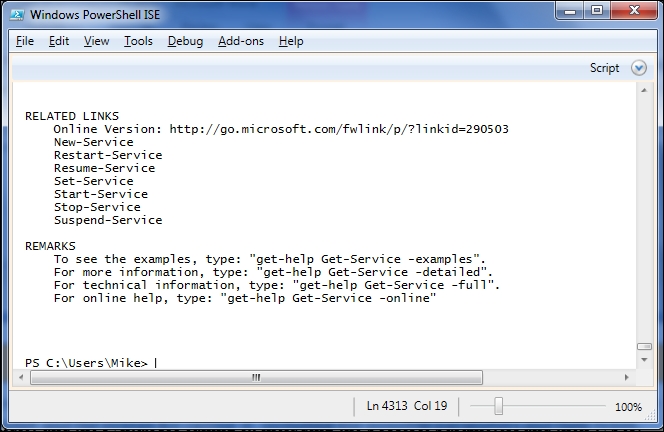

Even this brief help, which was truncated to fit on the page, shows a brief synopsis of the cmdlet and the syntax to call it, including the possible parameters and their types. The rest of the display shows a longer description of the cmdlet, a list of the related topics, and some instructions about getting more help about Get-Service:

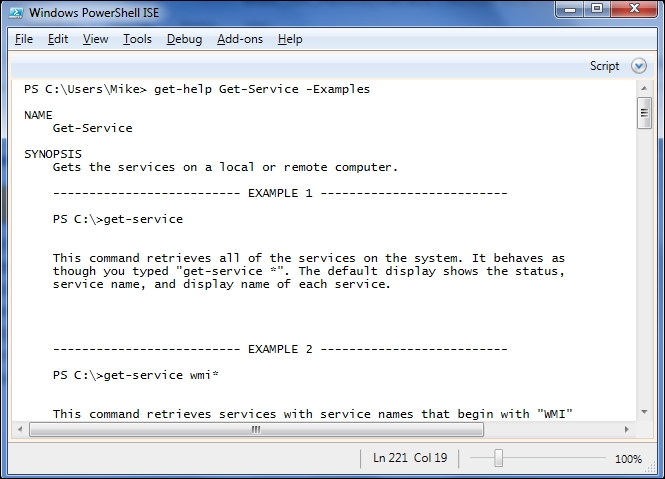

In the Remarks section, we can see that there's a cmdlet called Get-Help (the second of the "big three" cmdlets) that allows us to view more extensive help in PowerShell. The first type of extra help we can see is the examples. The example output begins with the name and synopsis of the cmdlet and is followed, in the case of Get-Service, by 11 examples that range from simple to complex. The help for each cmdlet is different, but in general you will find these examples to be a treasure trove providing an insight into not only how the cmdlets behave in isolation, but also in combination with other commands in real scenarios:

Also mentioned in the Remarks section are the ways to display more information about the cmdlet using the -Detailed or -Full switches with Get-Help. The -Detailed switch shows the examples as well as the basic descriptions of each parameter. The -Full switch adds sections on inputs, outputs, and detailed parameter information to the -Detailed output.
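The three levels of help can be requested as follows. This is just a sketch using Get-Service as the subject; any cmdlet name can be substituted.

```powershell
# Name, synopsis, and the examples.
Get-Help Get-Service -Examples

# The examples plus a basic description of each parameter.
Get-Help Get-Service -Detailed

# Everything: adds inputs, outputs, and detailed parameter information.
Get-Help Get-Service -Full
```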

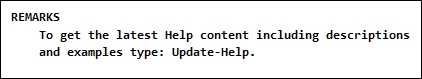

If you're running PowerShell 3.0 or above, instead of getting a complete help entry, you probably received a message like this:

This is because in PowerShell 3.0, the PowerShell team switched to the concept of updatable help. In order to deal with time constraints around shipping and the complexity of releasing updates to installed software, the help content for the PowerShell modules is now updated via a cmdlet called Update-Help. Using this mechanism, the team can revise, expand, and correct the help content on a continual basis, and users can be sure to have the most recent content at all times:

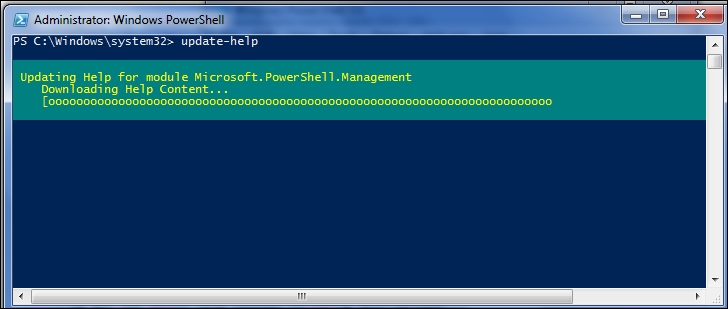

In addition to the help for individual cmdlets, PowerShell includes help content about all aspects of the PowerShell environment. These help topics have names beginning with about_ and can also be viewed with the Get-Help cmdlet. The list of topics can be retrieved using Get-Help about_*:

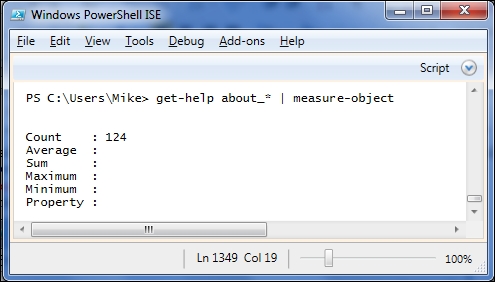

Using Measure-Object, as we saw in a previous section, we can see that there are 124 topics listed in my installation:

These help topics are often extremely long and contain some of the best documentation on the working of PowerShell you will ever find. For that reason, I will end each chapter in Module 1 with a list of help topics to read about the topics covered in the chapter.

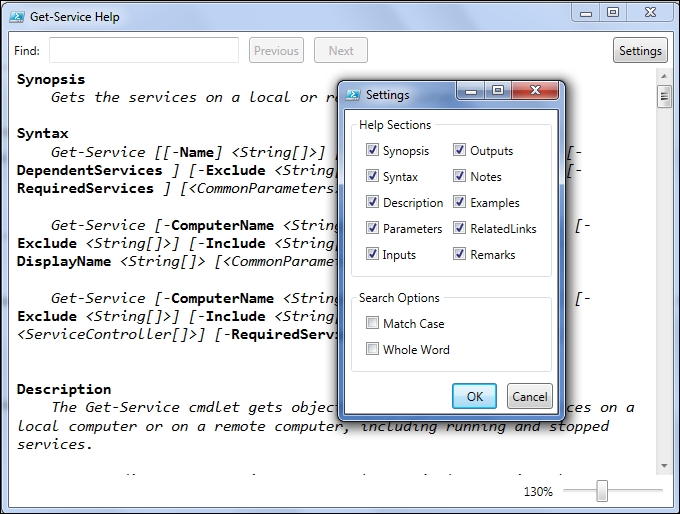

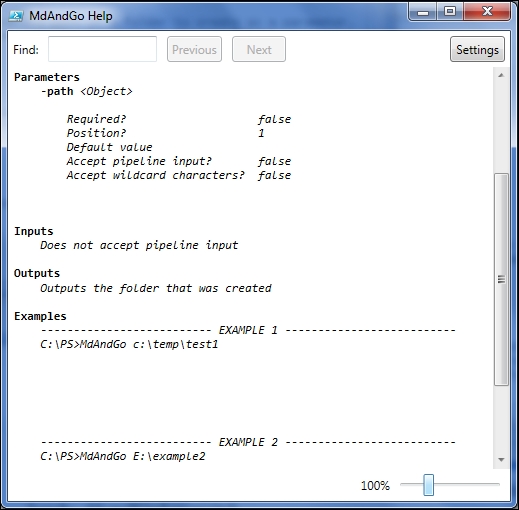

In addition to reading the help content in the output area of the ISE and the text console, PowerShell 3.0 added a -ShowWindow switch to Get-Help that allows viewing in a separate window. All help content is shown in the window, but sections can be hidden using the settings button:

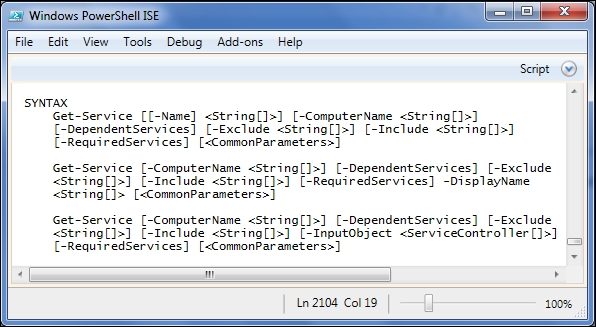

The syntax section of the cmdlet help can be overwhelming at first, so let's drill into it a bit and try to understand it in detail. I will use the get-service cmdlet for an example, but the principles are same for any cmdlet:

The first thing to notice is that there are three different Get-Service calls illustrated here. They correspond to the PowerShell concept of ParameterSets, but you can think of them as different use cases for the cmdlet. Each ParameterSet, or use case, will have at least one unique parameter. In this case, the first includes the -Name parameter, the second includes -DisplayName, and the third has the -InputObject parameter.

Each ParameterSet lists the parameters that can be used in a particular scenario. The way the parameter is shown in the listing tells you how the parameter can be used. For instance, [[-Name] <String[]>] means that the Name parameter has the following attributes:

- It is optional (because the whole definition is in brackets)

- The parameter name can be omitted (because the name is in brackets)

- The parameter values can be an array (list) of strings (String[])
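
If you want to see the syntax lines and ParameterSets on your own machine, something like the following should work. This is a sketch that assumes a Windows computer (where Get-Service exists) and PowerShell 3.0 or later; the Parameters column is a calculated property I added for illustration.

```powershell
# Print only the syntax section, one line per ParameterSet
$syntax = Get-Command Get-Service -Syntax
$syntax

# List each ParameterSet by name along with the parameters it accepts
(Get-Command Get-Service).ParameterSets |
    Select-Object Name, @{ Name = 'Parameters'; Expression = { $_.Parameters.Name -join ', ' } }
```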

In general, a parameter will be shown in one of the following forms:

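| Form | Meaning |
|---|---|
| [[-Param] <T>] | Optional parameter of type T with the name optional |
| [-Param <T>] | Optional parameter of type T with the name required |
| -Param <T> | Required parameter of type T |
| [-Switch] | A switch (flag) |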

Applying this to the Get-Service syntax shown previously, we see that some valid calls of the cmdlet could be:
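
The calls below are a sketch of what fits the rules just described, not a reproduction of the original listing. They assume a Windows machine, and the service names (WinRM, Spooler) are only illustrative; substitute services that exist on your computer.

```powershell
# Every parameter in the first ParameterSet is optional
$all = Get-Service

# -Name supplied by position (the parameter name is omitted)
Get-Service WinRM

# -Name accepts an array of strings
Get-Service -Name WinRM, Spooler

# The second ParameterSet, which requires -DisplayName
Get-Service -DisplayName 'Windows*'

# Adding a switch to the first ParameterSet
Get-Service -Name WinRM -RequiredServices
```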

This chapter dealt with the first two of the "big three" cmdlets for learning PowerShell, Get-Command and Get-Help. These two cmdlets allow you to find out which commands are available and how to use them. In the next chapter, we will finish the "big three" with the Get-Member cmdlet, which will help us figure out what to do with the output we receive.

In this chapter, we will learn about objects and how they relate to the PowerShell output. The specific topics in this chapter include the following:

- What are objects?

- Comparing DOS and PowerShell output

- The Get-Member cmdlet

One major difference between PowerShell and other command environments is that, in PowerShell, everything is an object. One result of this is that the output from the PowerShell cmdlets is always in the form of objects. Before we look at how this affects PowerShell, let's take some time to understand what we mean when we talk about objects.

If everything is an object, it's probably worth taking a few minutes to talk about what this means. We don't have to be experts in object-oriented programming to work in PowerShell, but a knowledge of a few things is necessary.

In a nutshell, object-oriented programming involves encapsulating the related values and functionality in objects. For instance, instead of having variables for speed, height, and direction and a function called PLOTPROJECTILE, in object-oriented programming you might have a Projectile object that has the properties called Speed, Height, and Direction as well as a method called Plot. This Projectile object could be treated as a single unit and passed as a parameter to other functions. Keeping the values and the related code together makes intuitive sense, and there are many other benefits to object-oriented programming.

The main concepts in object-oriented programming are types, classes, and objects. These concepts are all related and often confusing, but not difficult once you have them straight:

- A type is an abstract representation of structured data combining values (called properties), code (called methods), and behavior (called events). These attributes are collectively known as members.

- A class is a specific implementation of one or more types. As such, it will contain all the members that are specified in each of these types and possibly other members as well. In some languages, a class is also considered a type. This is the case in C#.

- An object is a specific instance of a class. Most of the time in PowerShell, we will be working with objects. As mentioned earlier, all output in PowerShell is in the form of objects.
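
To make the class and object distinction concrete, here is a sketch of the Projectile idea from earlier. It assumes PowerShell 5.0 or later, which added the class keyword; the class itself and the body of its Plot method are hypothetical and exist only for illustration.

```powershell
# Hypothetical class: three properties and one method
class Projectile {
    [double]$Speed
    [double]$Height
    [double]$Direction

    # Returns a one-line description instead of drawing anything
    [string] Plot() {
        return "Speed=$($this.Speed) Height=$($this.Height) Direction=$($this.Direction)"
    }
}

# An object is a specific instance of the class
$shot = [Projectile]::new()
$shot.Speed = 120
$shot.Height = 15
$shot.Direction = 45

$description = $shot.Plot()
$description              # Speed=120 Height=15 Direction=45
$shot.GetType().Name      # Projectile
```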

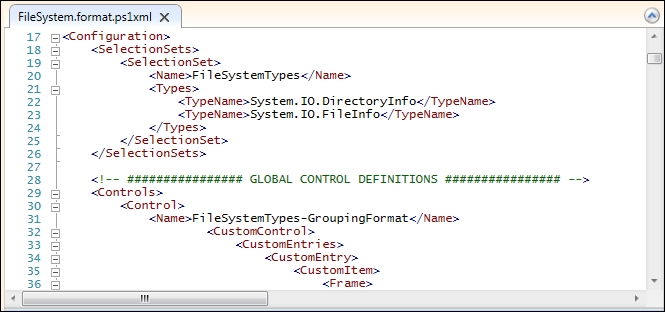

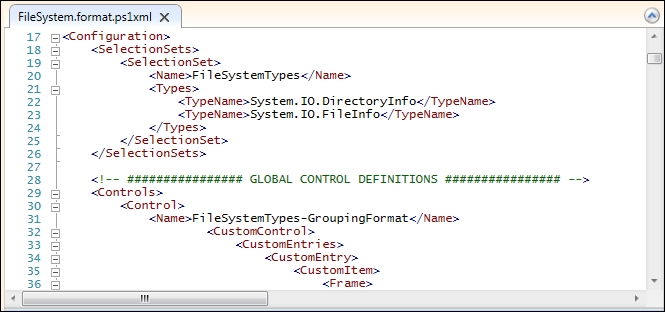

PowerShell is built upon the .NET framework, so we will be referring to the .NET framework throughout Module 1. In the .NET framework, there is a type called System.IO.FileSystemInfo, which describes the items located in a filesystem (of course). Two classes that implement this type are System.IO.FileInfo and System.IO.DirectoryInfo. A reference to a specific file would be an object that was an instance of the System.IO.FileInfo class. The object would also be considered to be of the System.IO.FileSystemInfo and System.IO.FileInfo types.

Here are some other examples that should help make things clear:

- All the objects in the .NET ecosystem are of the System.Object type

- Many collections in .NET are of the IEnumerable type

- The ArrayList is of the IList and ICollection types, among others
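
These relationships can be checked from the console with the -is operator. The sketch below creates a throwaway file in the temp folder (the file name is arbitrary) so that it does not depend on anything already on your disk.

```powershell
# Create a scratch file so we have a FileInfo object to inspect
$path = Join-Path ([System.IO.Path]::GetTempPath()) 'type-demo.txt'
$file = New-Item -ItemType File -Path $path -Force

$file.GetType().FullName                       # System.IO.FileInfo
$file -is [System.IO.FileSystemInfo]           # True
$file -is [System.Object]                      # True

# An ArrayList is of several collection types at once
$list = New-Object System.Collections.ArrayList
$list -is [System.Collections.IList]           # True
$list -is [System.Collections.ICollection]     # True
$list -is [System.Collections.IEnumerable]     # True

# Clean up the scratch file
Remove-Item $path
```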

Members are the attributes of types that are implemented in classes and instantiated in objects. Members come in many forms in the .NET framework, including properties, methods, and events.

Properties represent the data contained in an object. For instance, in a FileInfo object (System.IO.FileInfo), there would be properties referring to the filename, file extension, length, and various DateTime values associated with the file. An object referring to a service (of the System.ServiceProcess.ServiceController type) would have properties for the display name of the service and the state (running or not).

Methods refer to the operations that the objects (or sometimes classes) can perform. A service object, for instance, can Start and Stop. A database command object can Execute. A file object can Delete or Encrypt itself. Most objects have some methods (for example, ToString and Equals) because they are of the System.Object type.

Events are a way for an object to notify other code that something has happened or that a condition has been met. Button objects, for instance, have a Click event that is raised when they are clicked.
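
Here is a small sketch of properties and methods in action, using a DateTime object because it behaves the same on every machine. Events are left out to keep the example short.

```powershell
$date = [datetime]'2015-01-01'

# Properties hold the data in the object
$date.Year                  # 2015
$date.Month                 # 1

# Methods perform operations; this one returns a new DateTime object
$later = $date.AddDays(31)
$later.Month                # 2

# Every object has ToString and Equals because they are defined on System.Object
$date.Equals($later)        # False
$date.ToString()
```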

Now that we have an idea about objects, let's look back at a couple of familiar DOS commands and see how they deal with the output.

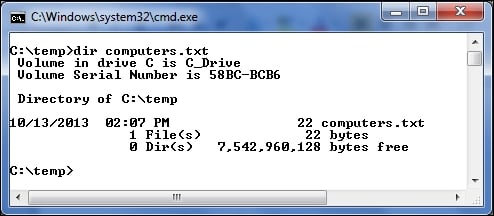

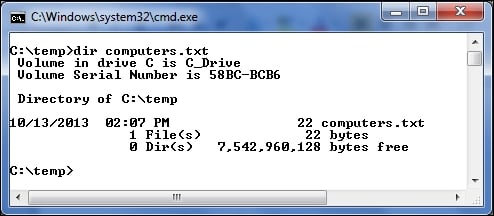

The output that we see in the preceding screenshot includes several details about a single file. Note that there is a lot of formatting included with the static text (for example, Volume in drive) and some tabular information, but the only way to get to these details is to understand exactly how the output is formatted and parse the output string accordingly. Think about how the output would change if more than one file were listed. If we included the /S switch, the output would span multiple directories and would be broken into sections accordingly. Finding a specific piece of information in this output is not a trivial operation, and the process of retrieving the file length, for example, would be different from the process of retrieving the file extension.

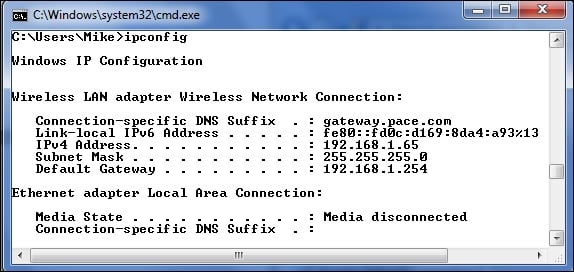

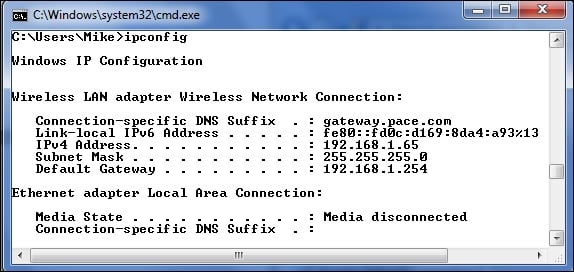

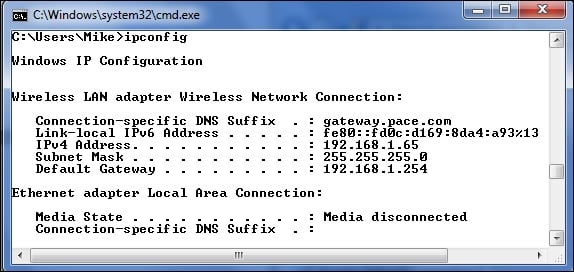

Another command that we are all familiar with is the IPConfig.exe tool, which shows network adapter information. Here is the beginning of the output on my laptop:

Here, again, the output is very mixed. There is a lot of static text and a list of properties and values. The property names are very readable, which is nice for humans, but not so nice for computers trying to parse things out. The dot-leaders help to guide the eyes towards the property values, but they get in the way when we try to parse out the values that we are looking for. Since some of the values (such as IP addresses and subnet masks) already include dots, parsing them reliably is even harder.

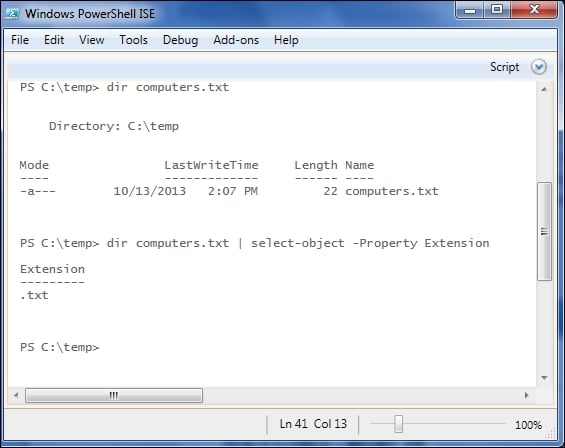

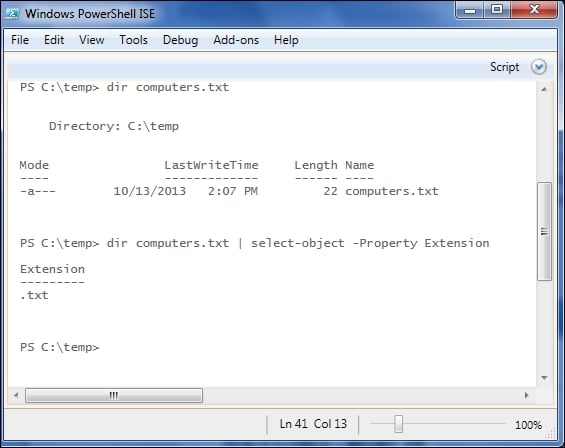

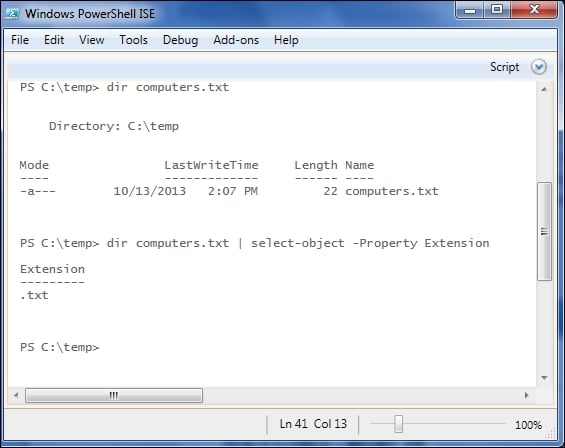

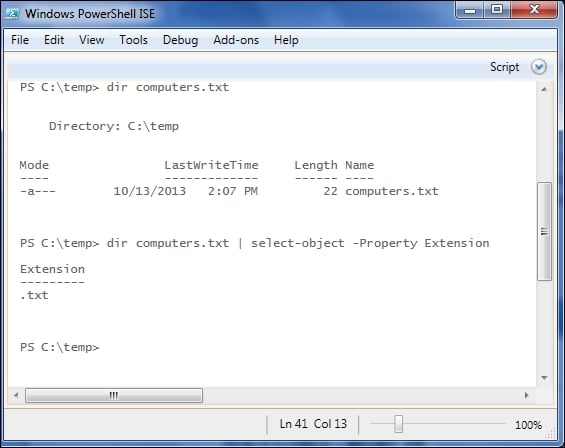

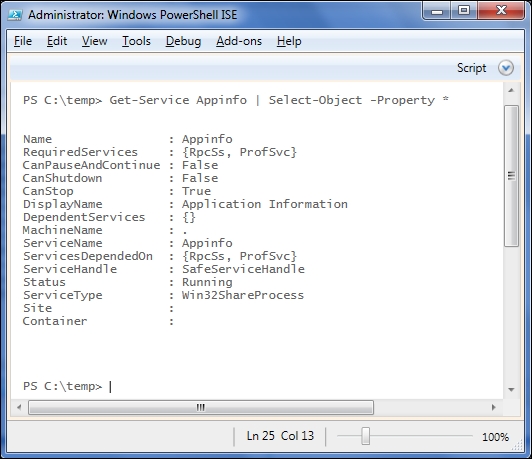

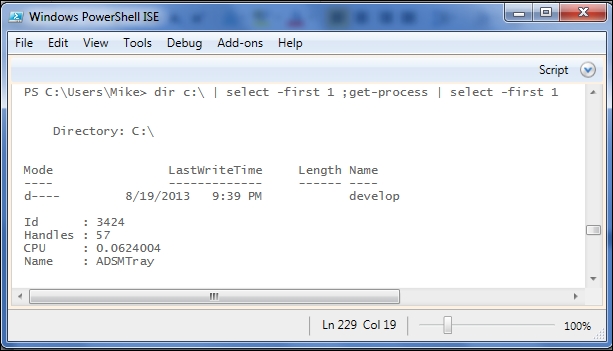

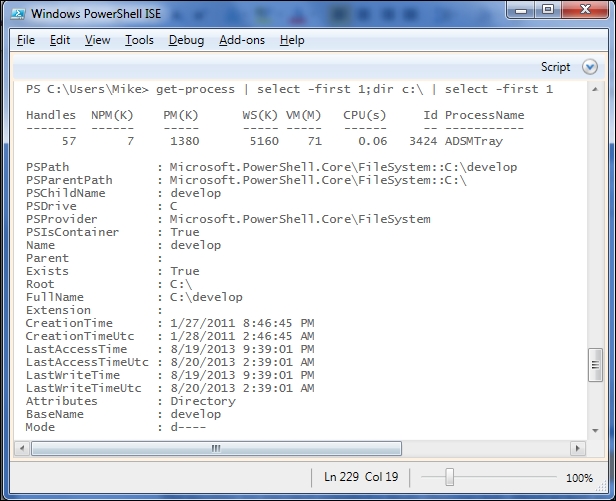

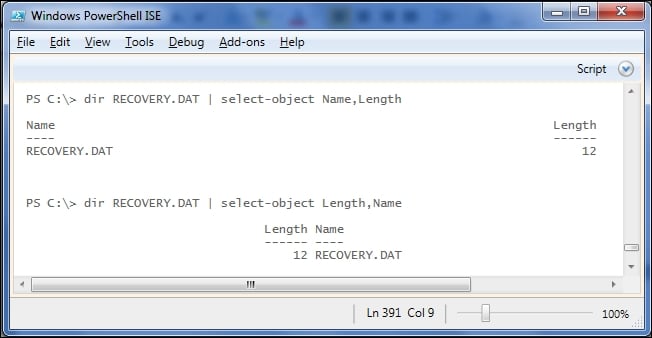

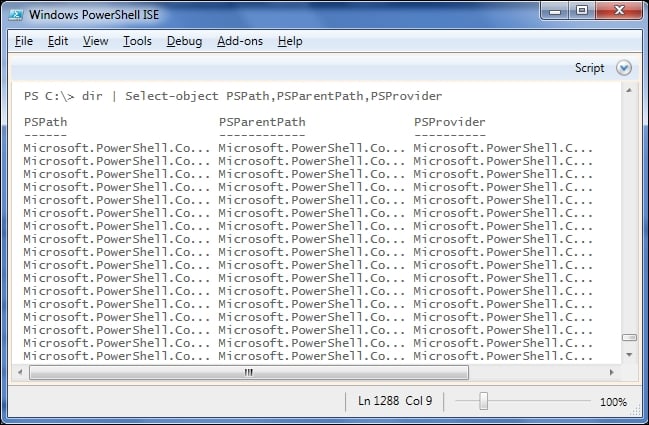

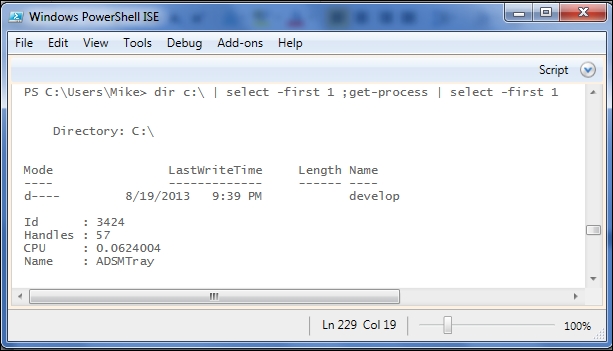

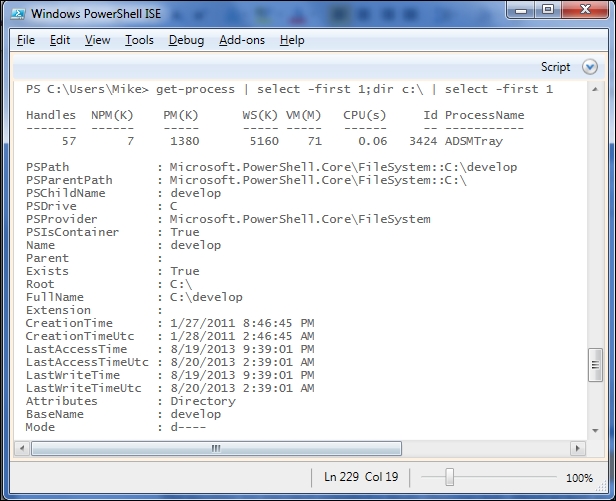

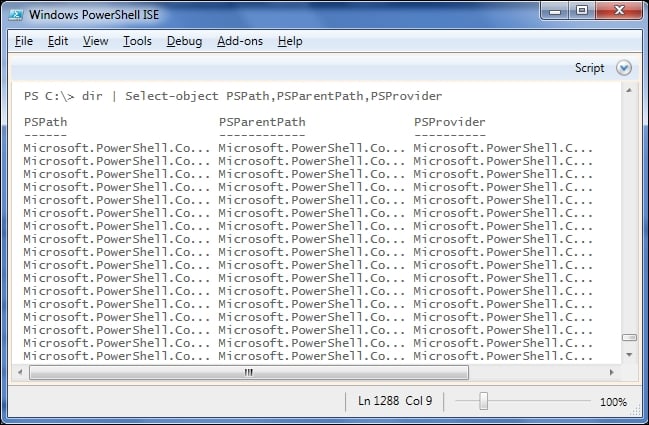

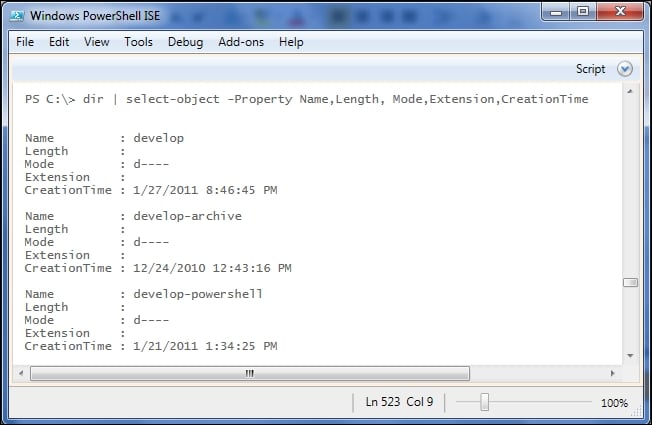

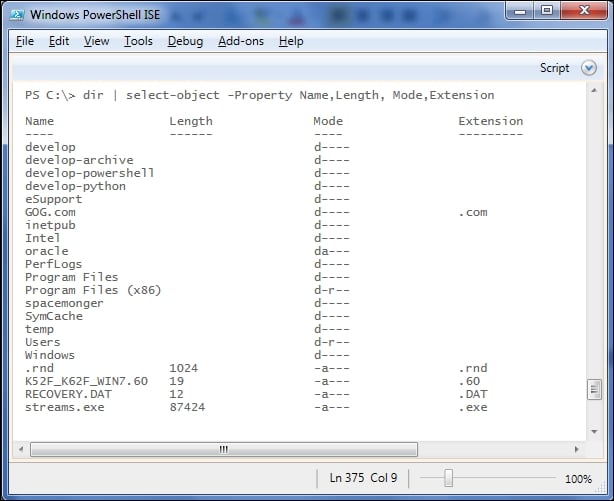

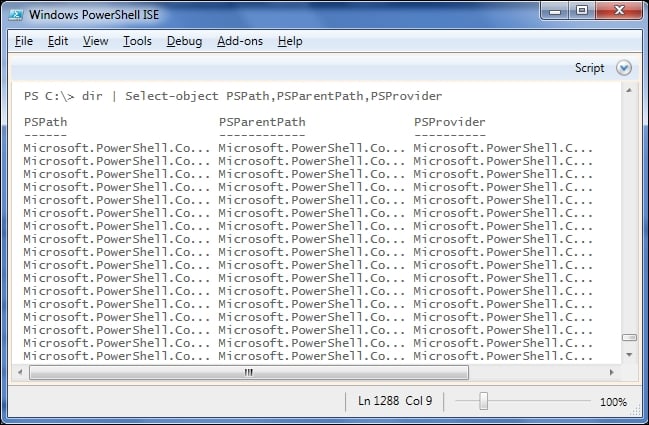

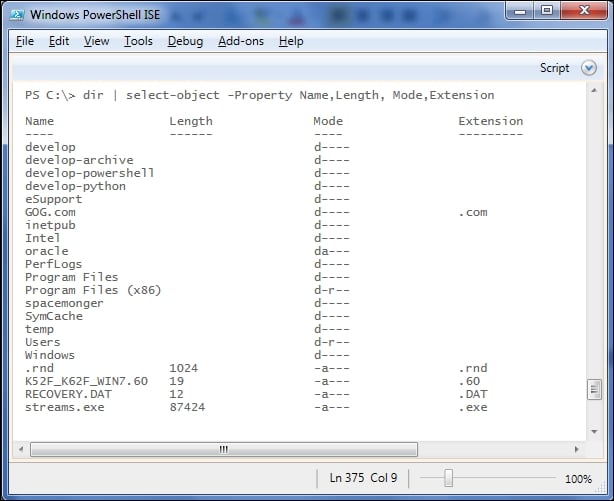

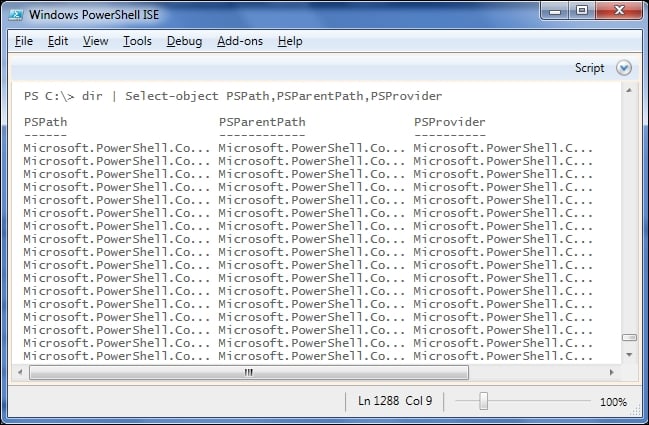

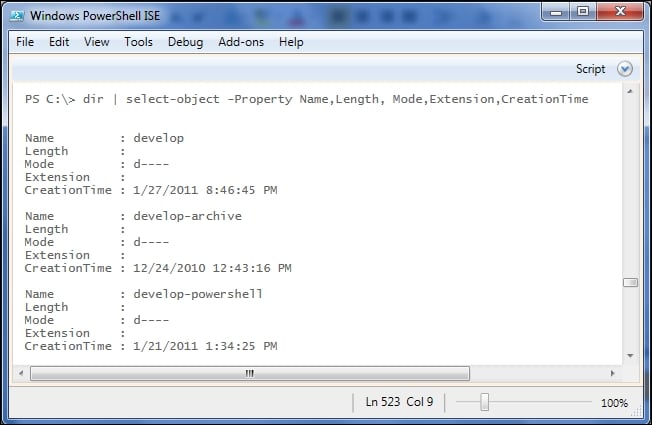

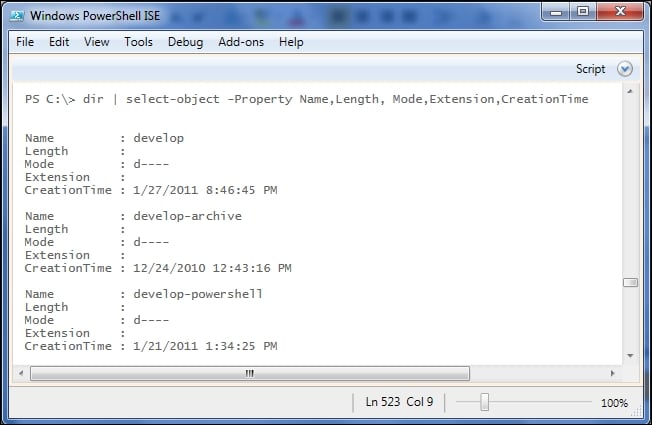

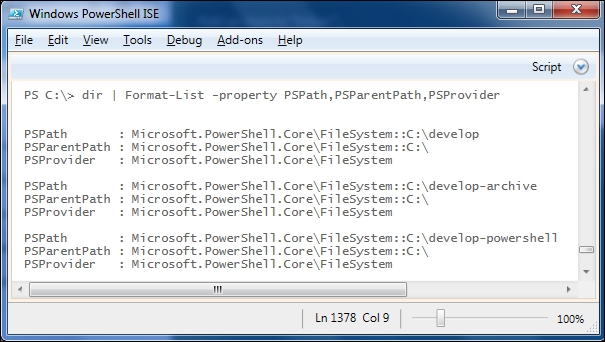

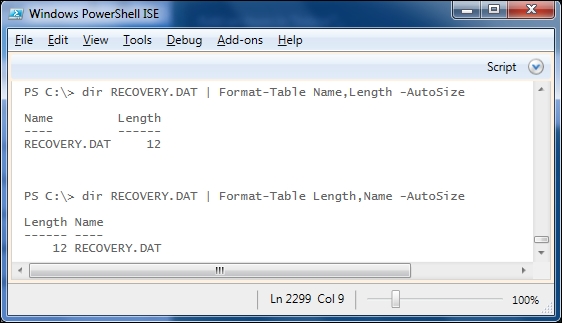

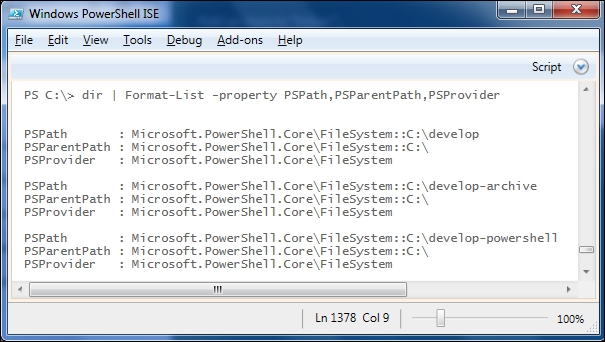

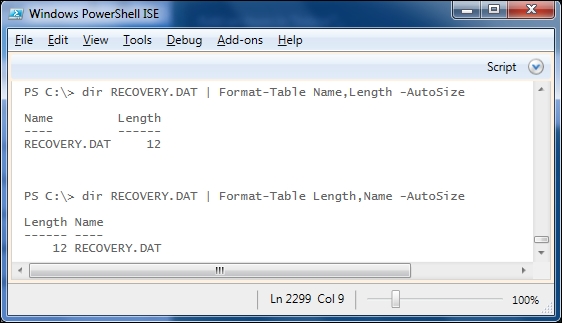

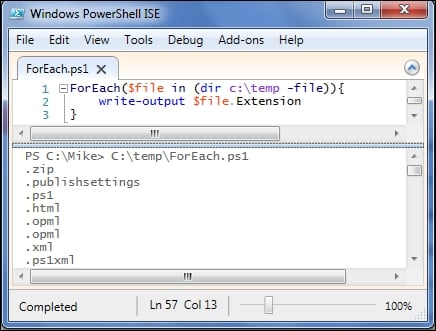

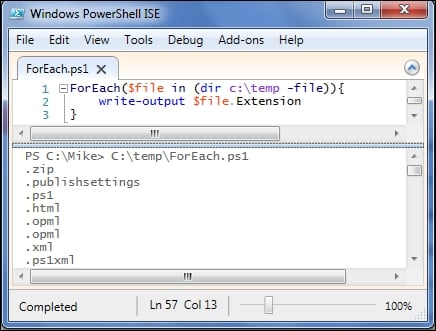

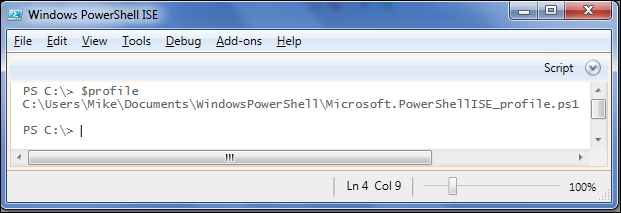

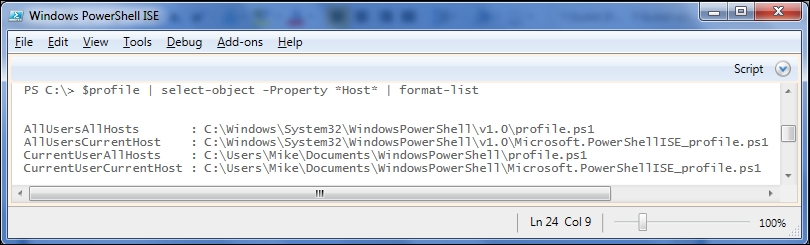

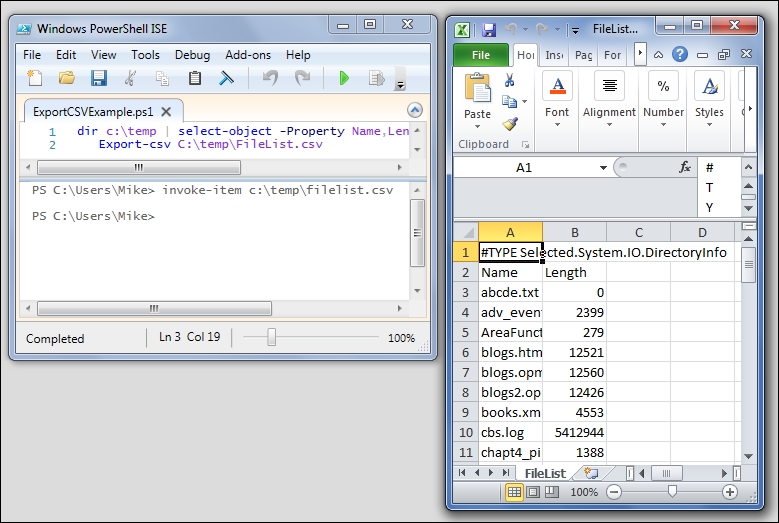

Let's look at some PowerShell commands and compare how easy it is to get what we need out of the output:

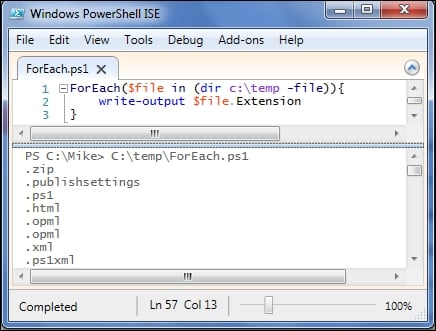

We can see that the output of the dir command looks similar to the output we saw in DOS, but we can follow this with Select-Object to pull out a specific property. This is possible because PowerShell commands always output objects. In this case, the object in question has a property called Extension, which we can inspect. We will talk about the Select-Object cmdlet in detail in the next chapter, but for now it is enough to know that it can be used to limit the output to a specific set of properties from the original objects.
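
A sketch of the kind of commands described here follows. I've pointed dir at $PSHOME (the PowerShell installation folder) only because that folder exists on every machine; any folder will do.

```powershell
# A directory listing that looks much like the DOS output
dir $PSHOME

# The same objects, limited to two of their properties
$trimmed = dir $PSHOME | Select-Object Name, Extension
$trimmed
```

No string parsing is involved: Extension is simply a property of each object that dir emits.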


One way to find the members of a class is to look up this class online in the Microsoft Developer Network (MSDN). For instance, the FileInfo class is found at https://msdn.microsoft.com/en-us/library/system.io.fileinfo.

Although this is a good reference, it's not very handy to switch back and forth between PowerShell and a browser to look at the classes all the time. Fortunately, PowerShell has a very handy way to give this information, the Get-Member cmdlet. This is the third of the "big 3" cmdlets, following Get-Command and Get-Help.

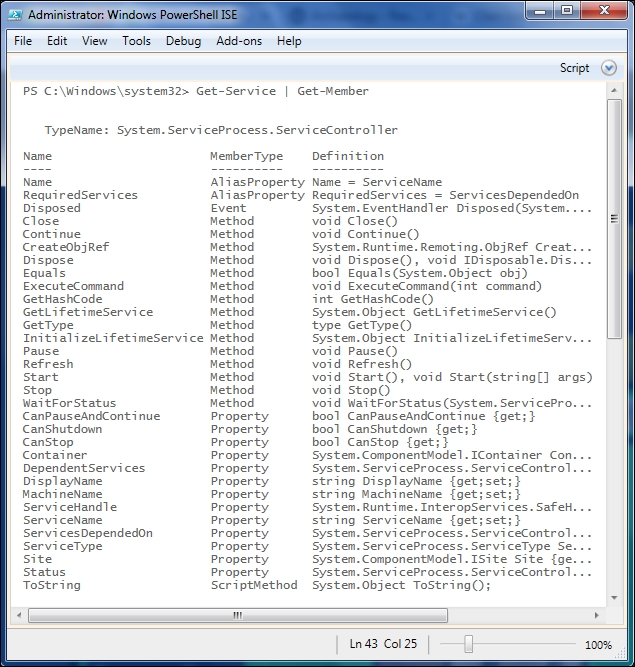

The most common way to use the Get-Member cmdlet is to pipe data into it. Piping is a way to pass data from one cmdlet to another, and is covered in depth in the next chapter. Using a pipe with Get-Member looks like this:

The Get-Member cmdlet looks at all the objects in its input and provides output for each distinct class. In this output, we can see that Get-Service only outputs a single type, System.ServiceProcess.ServiceController. The name of the class is followed by the list of members, the type of each member, and the definition of each member. The definition shows the type of each property and the signature of each event and method, which includes the return type as well as the types of the parameters.
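
The pipe described above can be sketched as follows. The Get-Service line assumes a Windows machine; the second command, which uses $PSHOME and the -File switch (PowerShell 3.0 and later), is my own addition to show that the same technique works with any command.

```powershell
# Inspect the objects that Get-Service emits (Windows only)
Get-Service | Get-Member

# The same idea with file objects, limited to properties
$fileProperties = Get-ChildItem $PSHOME -File | Get-Member -MemberType Property
$fileProperties
```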

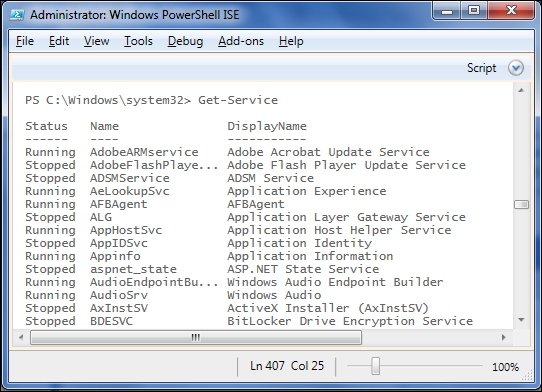

One thing that can be confusing is that Get-Member usually shows more properties than those shown in the output. For instance, the Get-Member output for the previous Get-Service cmdlet shows 13 properties but the output of Get-Service displays only three.

The reason for this is that PowerShell has a powerful formatting system that is configured with some default formats for familiar objects. Rest assured that all the properties are there. A couple of quick ways to see all the properties are to use the Select-Object cmdlet we saw earlier in this chapter or to use the Format-List cmdlet and force all the properties to be shown. Here, we use Select-Object and a wildcard to specify all the properties:

The Format-List cmdlet with a wildcard for properties gives output that looks the same. We will discuss how the output of these two cmdlets actually differs, as well as elaborate on PowerShell's formatting system in Chapter 5, Formatting Output.
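
Both approaches can be sketched like this. The example assumes a Windows machine and takes only the first service to keep the output short.

```powershell
# Grab a single service object to inspect
$first = Get-Service | Select-Object -First 1

# Show every property using Select-Object and a wildcard
$first | Select-Object -Property *

# Show every property using Format-List and a wildcard
$first | Format-List -Property *
```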

If you look closely at the ServiceController members listed in the previous figure, you will notice a few members that aren't strictly properties, methods, or events. PowerShell has a mechanism called the Extended Type System that allows PowerShell to add members to classes or to individual objects. In the case of the ServiceController objects, PowerShell adds a Name alias for the ServiceName property and a RequiredServices alias for the built-in ServicesDependedOn property.
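
To get a feel for what an added alias looks like, here is a sketch that attaches one to a stand-in object with Add-Member. The object and its ServiceName value are made up for illustration; PowerShell defines the real ServiceController aliases for you through its type data.

```powershell
# A stand-in object with one ordinary property
$item = New-Object PSObject -Property @{ ServiceName = 'demo' }

# Attach a Name alias that points at ServiceName
$item | Add-Member -MemberType AliasProperty -Name Name -Value ServiceName

$item.Name        # demo

# Get-Member can filter on the member type to show only aliases
$aliases = $item | Get-Member -MemberType AliasProperty
$aliases

# On Windows, the built-in aliases can be listed the same way:
# Get-Service | Get-Member -MemberType AliasProperty
```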

In this chapter, we discussed the importance of objects as output from the PowerShell cmdlets. After a brief primer on types, classes, and objects, we spent some time getting used to the Get-Member cmdlet. In the next chapter, we will cover the PowerShell pipeline and common pipeline cmdlets.

The object-oriented pipeline is one of the distinctive features of the PowerShell environment. The ability to refer to properties of arbitrary objects without parsing increases the expressiveness of the language and allows you to work with all kinds of objects with ease.

In this chapter, we will cover the following topics:

- How the pipeline works

- Some of the most common cmdlets to deal with data on the pipeline:

  - Sort-Object

  - Where-Object

  - Select-Object

  - Group-Object

The pipeline in PowerShell is a mechanism to get data from one command to another. Simply put, the data that is output from the first command is treated as input to the next command in the pipeline. The pipeline isn't limited to two commands, though. It can be extended practically as long as you like, although readability would suffer if the line got too long.

Here is a simple pipeline example:

Tip

In this example, I've used some common aliases for cmdlets (where, select) to keep the line from wrapping. I'll try to include aliases when I mention cmdlets, but if you can't figure out what a command is referring to, remember you can always use Get-Command to find out what is going on. For example, Get-Command where tells you Where is an alias for Where-Object. In this case, select is an alias for Select-Object.

The execution of this pipeline can be thought of in the following sequence:

- Get the list of services

- Choose the services that have the Running status

- Select the first five services

- Output the Name and Display Name of each one
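
The four steps above can be sketched as a single line. This is a reconstruction based on the description rather than a copy of the original listing; it assumes a Windows machine and PowerShell 3.0 or later (for the simplified where syntax).

```powershell
# Get services, keep the running ones, take five, show two properties
$result = Get-Service | where Status -eq 'Running' | select -First 5 | select Name, DisplayName
$result

# The same pipeline with full cmdlet names instead of aliases
Get-Service |
    Where-Object Status -eq 'Running' |
    Select-Object -First 5 |
    Select-Object -Property Name, DisplayName
```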

Even though this is a single line, it shows some of the power of PowerShell. The line is very expressive, doesn't include a lot of extra syntactic baggage, and doesn't even require any variables. It also doesn't use explicit types or loops. It is a very unexceptional bit of PowerShell code, but this single line represents logic that would take several lines of code in a traditional language to express.

DOS and Linux (and Unix, for that matter) have had pipes for a long time. On one level, pipes in these systems work similarly to PowerShell pipes. In all of them, output is streamed from one command to the next. In other shells, though, the data being passed is simple, flat text.

For a command to use the text, it either needs to parse the text to get to the interesting bits, or it needs to treat the entire output like a blob. Since Linux and Unix use text-based configurations for most operating system functions, this makes sense. A wide range of tools to parse and find substrings is available in these systems, and scripting can be very complex.

In Windows, however, there aren't a lot of text-based configurations. Most components are managed using the Win32 or .NET APIs. PowerShell is built upon the .NET framework and leverages the .NET object model instead of using text as the primary focus. As data in the pipeline is always in the form of objects, you rarely need to parse it and can directly deal with the properties of the objects themselves. As long as the properties are named reasonably (and they usually are), you will be able to quickly get to the data you need.

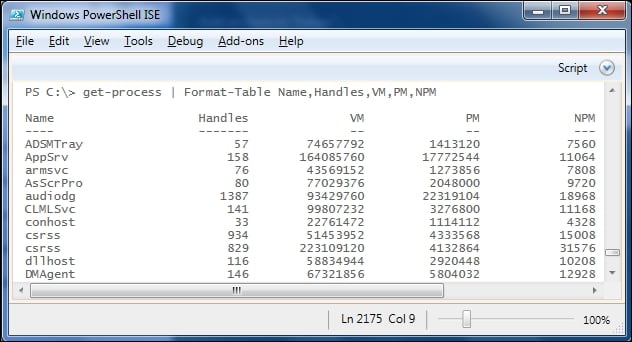

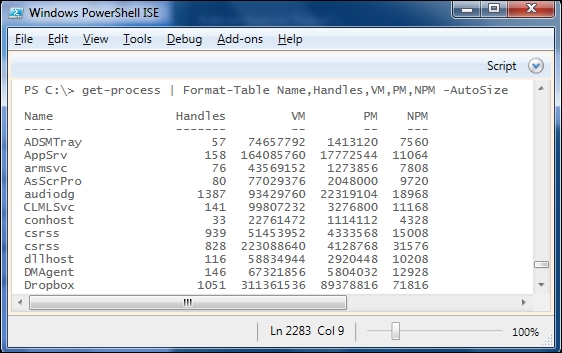

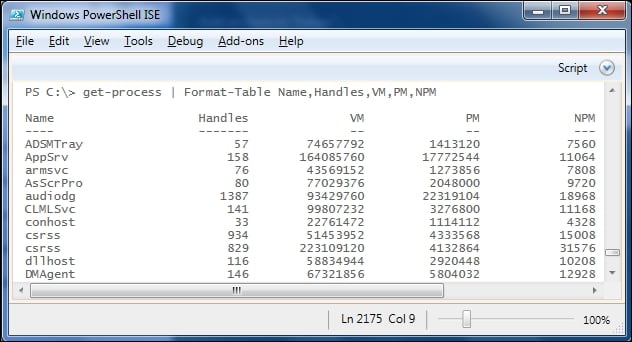

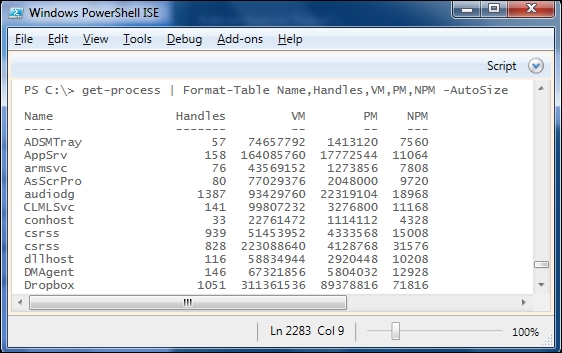

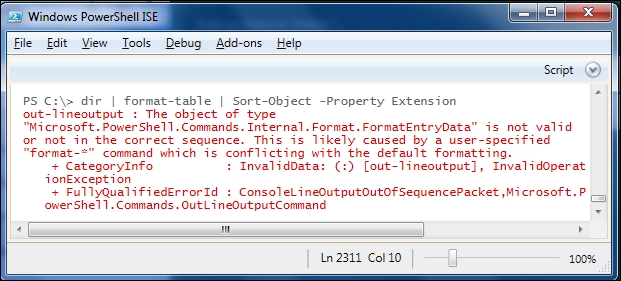

Since commands on the pipeline have access to object properties, several general-purpose cmdlets exist to perform common operations. Since these cmdlets can work with any kind of object, they use Object as the noun (you remember the Verb-Noun naming convention for cmdlets, right?).

To find the list of these cmdlets, we can use the Get-Command cmdlet:

Tip

ft is an alias for the Format-Table cmdlet, which I'm using here to get the display to fit more nicely on the screen. It will be covered in depth in Chapter 5, Formatting Output.
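
A command along these lines produces that list. The choice of columns passed to ft is my own guess at a compact display, and the exact set of cmdlets returned varies a little between PowerShell versions.

```powershell
# Find every command whose noun is Object
$objectCommands = Get-Command -Noun Object
$objectCommands | ft Name, CommandType -AutoSize
```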

The Sort-Object, Select-Object, and Where-Object cmdlets are some of the most used cmdlets in PowerShell.

Sorting data can be interesting. Have you ever sorted a list of numbers only to find that 11 came between 1 and 2? That's because the sort that you used treated the numbers as text. Sorting dates with all the different culture-specific formatting can also be a challenge. Fortunately, PowerShell handles sorting details for you with the Sort-Object cmdlet.

Let's look at a few examples of the Sort-Object cmdlet before getting into the details. We'll start by sorting a directory listing by length:

Sorting this in reverse isn't difficult either.

Sorting by more than one property is a breeze as well. Here, I omitted the parameter name (-Property) to shorten the command line a bit:

Looking at the brief help for the Sort-Object cmdlet, we can see a few other parameters, such as:

- -Unique (return distinct items found in the input)

- -CaseSensitive (force a case-sensitive sort)

- -Culture (specify what culture to use while sorting)

As it turns out, you will probably find few occasions to use these parameters and will be fine with the -Property and -Descending parameters.
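
The sorting behavior described in this section can be sketched with a few in-memory objects, so the results don't depend on what is in your folders. The file names and sizes are made up, and the [pscustomobject] syntax assumes PowerShell 3.0 or later.

```powershell
# Strings sort as text, so '11' lands between '1' and '2'
$asText = '1', '2', '11' | Sort-Object        # 1, 11, 2

# Integers sort numerically
$asNumbers = 1, 2, 11 | Sort-Object           # 1, 2, 11

# Stand-ins for the objects in a directory listing
$files = @(
    [pscustomobject]@{ Name = 'b.log'; Extension = '.log'; Length = 300 }
    [pscustomobject]@{ Name = 'a.txt'; Extension = '.txt'; Length = 50 }
    [pscustomobject]@{ Name = 'c.txt'; Extension = '.txt'; Length = 10 }
)

# Sort by length, smallest first: c.txt, a.txt, b.log
$byLength = $files | Sort-Object -Property Length

# Reverse the order: b.log, a.txt, c.txt
$byLengthDesc = $files | Sort-Object -Property Length -Descending

# Sort by extension, then by length within each extension: b.log, c.txt, a.txt
$byBoth = $files | Sort-Object Extension, Length
$byBoth
```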

Another cmdlet that is extremely useful is the Where-Object cmdlet. Where-Object is used to filter the pipeline data based on a condition that is tested for each item in the pipeline. Any object in the pipeline that causes the condition to evaluate to a true value is output from the Where-Object cmdlet. Objects that cause the condition to evaluate to a false value are not passed on as output.

For instance, we might want to find all the files that are below 100 bytes in size in the c:\temp directory. One way to do that is to use the simplified, or comparison syntax for Where-Object, which was introduced in PowerShell 3.0. In this syntax, the command would look like this:

Dir c:\temp | Where-Object Length -lt 100

In this syntax, we can compare a single property of each object with a constant value. The comparison operator here is -lt, which is how PowerShell expresses "less than".

Tip

All PowerShell comparison operators start with a dash. This can be confusing, but the < and > symbols have an entrenched meaning in shells, so they can't be used as operators. Common comparison operators include -eq, -ne, -lt, -gt, -le, -ge, and -like; logical operators such as -and, -or, and -not follow the same convention. For a full list of operators, try Get-Help about_Operators.

If you need to use PowerShell 1.0 or 2.0, or need to specify a condition more complex than is allowed in the simplified syntax, you can use the general or scriptblock syntax. Expressing the same condition using this form looks as follows:

Dir c:\temp | Where-Object {$_.Length -lt 100}

This looks a lot more complicated, but it's not so bad. The construction in curly braces is called a scriptblock, and is simply a block of PowerShell code. In the scriptblock syntax, $_ stands for the current object in the pipeline and we're referencing the Length property of that object using dot-notation. The good thing about the general syntax of Where-Object is that we can do more inside the scriptblock than simply test one condition. For instance, we could check for files below 100 bytes or those that were created after 1/1/2015, as follows:

Dir c:\temp | Where-Object {$_.Length -lt 100 -or $_.CreationTime -gt '1/1/2015'}

Here's this command running on my laptop:

If you're using PowerShell 3.0 or above and need to use the scriptblock syntax, you can substitute $_ with $PSItem in the scriptblock. The meaning is the same and $PSItem is a bit more readable. It makes the line slightly longer, but it's a small sacrifice to make for readability's sake.
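
Here are the three forms side by side, as a sketch that uses made-up objects in place of a real directory so that the results are predictable. It assumes PowerShell 3.0 or later.

```powershell
# Stand-ins for file objects (names, sizes, and dates are invented)
$files = @(
    [pscustomobject]@{ Name = 'small.txt'; Length = 40;   CreationTime = [datetime]'2014-06-01' }
    [pscustomobject]@{ Name = 'big.log';   Length = 5000; CreationTime = [datetime]'2014-06-01' }
    [pscustomobject]@{ Name = 'new.bin';   Length = 9000; CreationTime = [datetime]'2015-03-15' }
)

# Simplified syntax: one property compared with one value
$simple = $files | Where-Object Length -lt 100

# Scriptblock syntax with $_
$block = $files | Where-Object { $_.Length -lt 100 }

# Scriptblock syntax with $PSItem and a compound condition
$either = $files | Where-Object { $PSItem.Length -lt 100 -or $PSItem.CreationTime -gt '1/1/2015' }
$either.Name      # small.txt, new.bin
```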

The examples that I've shown so far have used simple comparisons with properties, but in the scriptblock syntax, any condition can be included. Also, note that any value other than a logical false value (expressed by $false in PowerShell), $null, 0, an empty string (''), or an empty collection is considered to be true ($true) in PowerShell. So, for example, we could filter objects using the Get-Member cmdlet to only show objects that have a particular property, as follows:

Dir | Where-Object {$_ | Get-Member Length}

This will return all objects in the current directory that have a Length property. Since files have lengths and directories don't, this is one way to get a list of files and omit the subdirectories.
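
You can check how PowerShell treats a value as true or false by casting it to [bool]. This short sketch shows the common cases, including $null and an empty array, which also count as false.

```powershell
[bool]0          # False
[bool]''         # False
[bool]$null      # False
[bool]@()        # False
[bool]42         # True
[bool]'text'     # True

# Where-Object keeps only the items whose condition is "truthy"
$kept = 'a', '', 'b' | Where-Object { $_ }
$kept            # a, b
```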

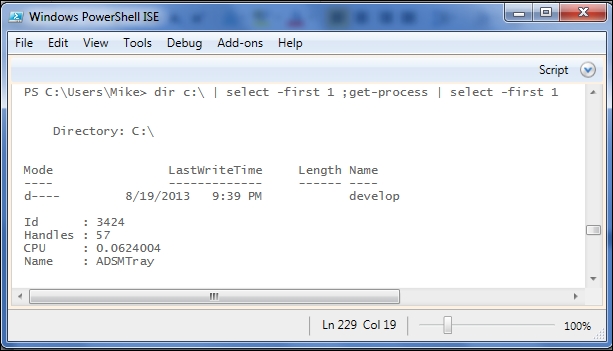

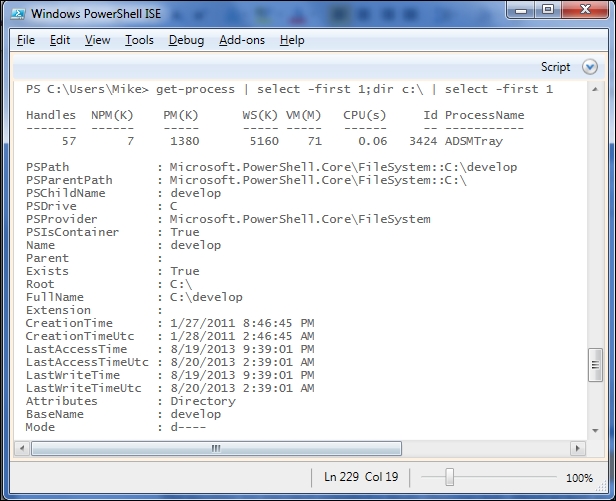

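The Select-Object cmdlet is a versatile cmdlet that you will find yourself using often. There are three main ways that it is used: limiting the number of objects returned, limiting the properties returned, and retrieving the values of a single property.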

Sometimes, you just want to see a few of the objects that are in the pipeline. To accomplish this, you can use the -First, -Last, and -Skip parameters. The -First parameter indicates that you want to see a particular number of objects from the beginning of the list of objects in the pipeline. Similarly, the -Last parameter selects objects from the end of the list of objects in the pipeline.

For instance, getting the first two processes in the list from Get-Process is simple:

Since we didn't use Sort-Object to force the order of the objects in the pipeline, we don't know that these are the first alphabetically, but they were the first two that were output from Get-Process.

You can use -Skip to cause a certain number of objects to be bypassed before returning the objects. It can be used by itself to output the rest of the objects after the skipped ones, or in conjunction with -First or -Last to return all but the beginning or end of the list of objects. As an example, -Skip can be used to skip over header lines when reading a file using the Get-Content cmdlet.
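
A sketch with the numbers 1 through 10 makes these parameters easy to follow, since it is obvious which items were kept. The header example uses an array of strings in place of a real file read with Get-Content.

```powershell
$firstTwo  = 1..10 | Select-Object -First 2            # 1, 2
$lastThree = 1..10 | Select-Object -Last 3             # 8, 9, 10
$rest      = 1..10 | Select-Object -Skip 7             # 8, 9, 10
$middle    = 1..10 | Select-Object -Skip 2 -First 3    # 3, 4, 5

# Skipping a header line, as you might with the output of Get-Content
$lines = 'Name,Size', 'a.txt,50', 'b.log,300'
$data  = $lines | Select-Object -Skip 1                # a.txt,50 and b.log,300
$data
```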

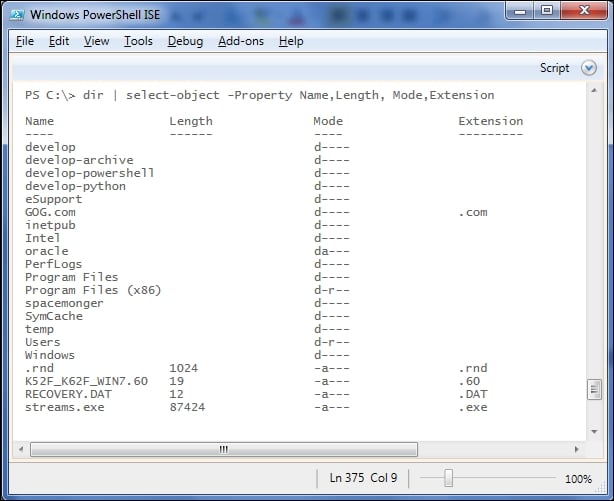

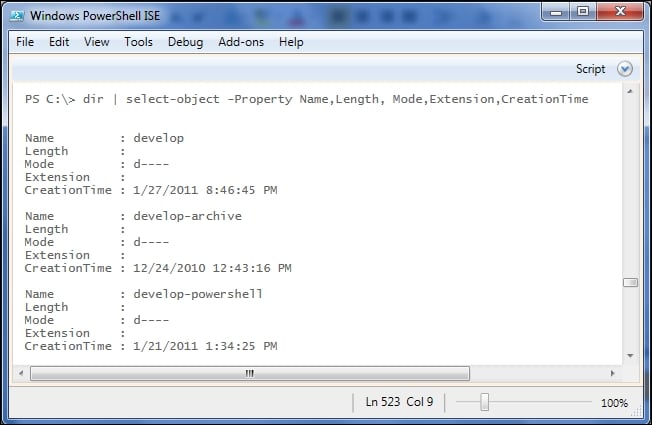

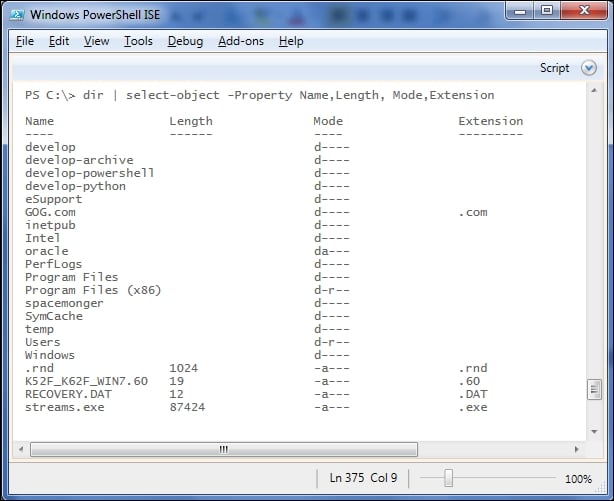

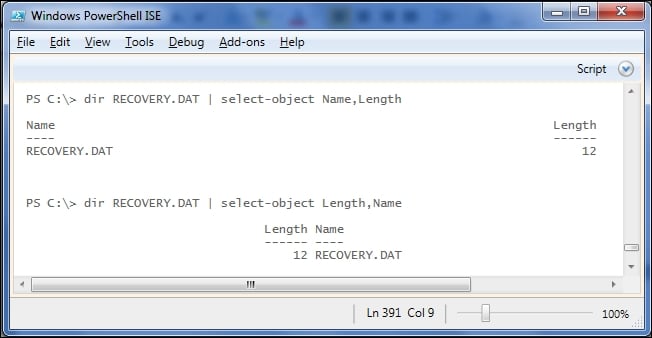

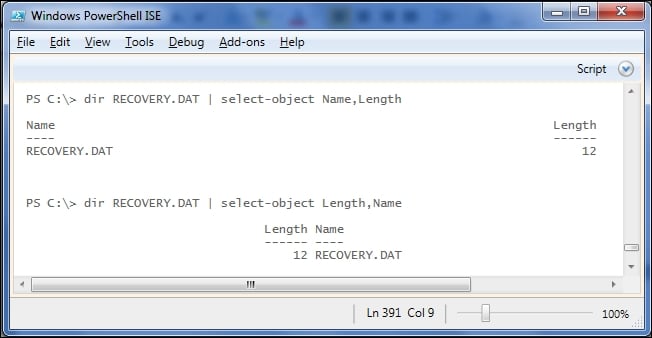

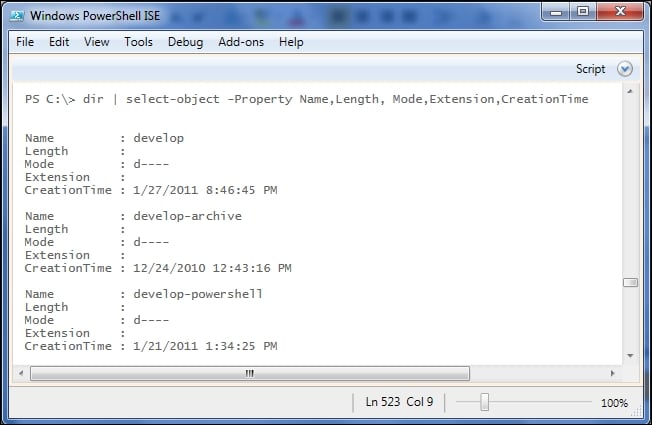

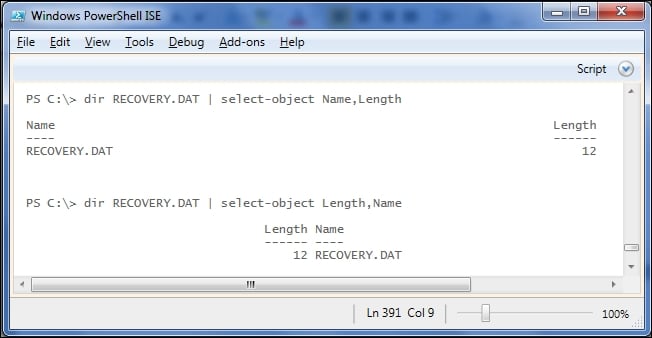

Sometimes, the objects in the pipeline have more properties than you need. To select only certain properties from the list of objects in the pipeline, you can use the -Property parameter with a list of properties. For instance, to get only the Name, Extension, and Length from the directory listing, you could do something like this:

I used the -First parameter as well to save some space in the output, but the important thing is that we only got the three properties that we asked for.

Note that, here, the first two objects in the pipeline were directories, and directory objects don't have a length property. PowerShell provides an empty value of $null for missing properties like this. Also, note that these objects weren't formatted like a directory listing. We'll talk about formatting in detail in the next chapter, but for now, you should just know that these limited objects are not of the same type as the original objects, so the formatting system treated them differently.
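
The following sketch reproduces the situation with a scratch folder that contains one subdirectory and one file, so you can see the missing Length for yourself. The folder and file names are arbitrary, and the simplified Where-Object syntax assumes PowerShell 3.0 or later.

```powershell
# Build a scratch folder with one subdirectory and one file
$root = Join-Path ([System.IO.Path]::GetTempPath()) 'select-demo'
New-Item -ItemType Directory -Path (Join-Path $root 'sub') -Force | Out-Null
Set-Content -Path (Join-Path $root 'note.txt') -Value 'hello'

# Keep only three properties of each item
$items = Get-ChildItem $root | Select-Object -Property Name, Extension, Length
$items

# The directory has no Length, so PowerShell supplies $null
$dirRow = $items | Where-Object Name -eq 'sub'
$null -eq $dirRow.Length            # True

# The results are new, limited objects rather than FileInfo or DirectoryInfo
$typeName = $items[0].GetType().FullName
$typeName                           # System.Management.Automation.PSCustomObject

# Clean up the scratch folder
Remove-Item $root -Recurse -Force
```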

Sometimes, you want to get the values of a single property of a set of objects. For instance, if you wanted to get the display names of all of the services installed on your computer, you might try to do something like this:

This is close to what you were looking for, but instead of getting a bunch of names (strings), you got a bunch of objects with the DisplayName properties. A hint that this is what happened is seen by the heading (and underline) of DisplayName. You can also verify this using the Get-Member cmdlet:

In order to just get the values and not objects, you need to use the -ExpandProperty parameter. Unlike the -Property parameter, you can only specify a single property with -ExpandProperty, and the output is a list of raw values. Notice that with -ExpandProperty, the column heading is gone:

We can also verify using Get-Member that we just got strings instead of objects with a DisplayName property:
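
The difference between the two parameters can be sketched with a couple of made-up service-like objects, which keeps the example independent of what is installed on your computer. On a real system, you would pipe Get-Service into Select-Object instead.

```powershell
# Stand-ins for service objects (invented names)
$services = @(
    [pscustomobject]@{ Name = 'svcA'; DisplayName = 'Service A' }
    [pscustomobject]@{ Name = 'svcB'; DisplayName = 'Service B' }
)

# -Property returns objects that have a DisplayName property
$objects = $services | Select-Object -Property DisplayName
$objects[0].GetType().Name      # PSCustomObject

# -ExpandProperty returns the raw values themselves
$names = $services | Select-Object -ExpandProperty DisplayName
$names[0].GetType().Name        # String
$names                          # Service A, Service B
```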

There are a few other parameters for Select-Object, but they will be less commonly used than the ones listed here.

The Measure-Object cmdlet has a simple function. It calculates statistics based on the objects in the pipeline. Its most basic form takes no parameters, and simply counts the objects that are in the pipeline.

To count the files in c:\temp and its subfolders, you could write:

Dir c:\temp -Recurse -File | Measure-Object

The output shows the count, and also some other properties that give a hint about the other uses of the cmdlet. To populate these other fields, you will need to provide the name of the property that is used to calculate them and also specify which field(s) you want to calculate. The calculations are specified using the -Sum, -Minimum, -Maximum, and -Average switches.

For instance, to add up (sum) the lengths of the files in the C:\Windows directory, you could issue this command:

Dir c:\Windows | Measure-Object -Property Length -Sum
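
If you would like to check the arithmetic by hand, the same switches work on plain numbers, where no -Property is needed. This sketch also repeats the Length calculation on a few invented objects.

```powershell
# With no parameters, Measure-Object just counts
$count = 1..10 | Measure-Object
$count.Count        # 10

# Ask for specific statistics with switches
$stats = 1..10 | Measure-Object -Sum -Average -Minimum -Maximum
$stats.Sum          # 55
$stats.Average      # 5.5
$stats.Minimum      # 1
$stats.Maximum      # 10

# With objects, name the property to measure
$files = @(
    [pscustomobject]@{ Name = 'a.txt'; Length = 50 }
    [pscustomobject]@{ Name = 'b.log'; Length = 300 }
)
$total = $files | Measure-Object -Property Length -Sum
$total.Sum          # 350
```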

The Group-Object cmdlet divides the objects in the pipeline into distinct sets based on a property or a set of properties. For instance, we can categorize the files in a folder by their extensions using Group-Object like this:

You will notice in the output that PowerShell has provided the count of items in each set, the value of the property (Extension) that labels the set, and a property called Group, which contains all of the original objects that were on the pipeline that ended up in the set. If you have used the GROUP BY clause in SQL and are used to losing the original information when you group, you'll be pleased to know that PowerShell retains those objects in their original state in the Group property of the output.

If you don't need the objects and are simply concerned with what the counts are, you can use the -NoElement switch, which causes the Group property to be omitted.

You're not limited to grouping by a single property either. If you want to see files grouped by mode (read-only, archive, etc.) and extension, you can simply list both properties.

Note that the Name property of each set is now a list of two values corresponding to the two properties defining the group.
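
The three variations described above can be sketched with a handful of invented file-like objects. In practice, you would pipe the output of dir into Group-Object in the same way.

```powershell
# Stand-ins for file objects (names and modes are invented)
$files = @(
    [pscustomobject]@{ Name = 'a.txt'; Extension = '.txt'; Mode = '-a----' }
    [pscustomobject]@{ Name = 'b.txt'; Extension = '.txt'; Mode = '-ar---' }
    [pscustomobject]@{ Name = 'c.log'; Extension = '.log'; Mode = '-a----' }
)

# Group by a single property; each set keeps its original objects in Group
$byExtension = $files | Group-Object -Property Extension
$byExtension | Select-Object Count, Name
$txtGroup = ($byExtension | Where-Object Name -eq '.txt').Group
$txtGroup.Name                   # a.txt, b.txt

# Counts only, without the Group property
$files | Group-Object -Property Extension -NoElement

# Group by two properties at once
$byBoth = $files | Group-Object -Property Mode, Extension
$byBoth | Select-Object Count, Name
```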

The Group-Object cmdlet can be useful to summarize the objects, but you will probably not use it nearly as much as Sort-Object, Where-Object, and Select-Object.

The Sort-Object cmdlet

Sorting data can be interesting. Have you ever sorted a list of numbers only to find that 11 came between 1 and 2? That's because the sort that you used treated the numbers as text. Sorting dates with all the different culture-specific formatting can also be a challenge. Fortunately, PowerShell handles sorting details for you with the Sort-Object cmdlet.

Let's look at a few examples of the Sort-Object cmdlet before getting into the details. We'll start by sorting a directory listing by length:

Sorting this in reverse isn't difficult either.

Sorting by more than one property is a breeze as well. Here, I omitted the parameter name (-Property) to shorten the command-line a bit:

Looking at the brief help for the Sort-Object cmdlet, we can see a few other parameters, such as:

-Unique(return distinct items found in the input)-CaseSensitive(force a case-sensitive sort)-Culture(specify what culture to use while sorting)

As it turns out, you will probably find few occasions to use these parameters and will be fine with the –Property and –Descending parameters.

Another cmdlet that is extremely useful is the Where-Object cmdlet. Where-Object is used to filter the pipeline data based on a condition that is tested for each item in the pipeline. Any object in the pipeline that causes the condition to evaluate to a true value is output from the Where-Object cmdlet. Objects that cause the condition to evaluate to a false value are not passed on as output.

For instance, we might want to find all the files that are below 100 bytes in size in the c:\temp directory. One way to do that is to use the simplified, or comparison syntax for Where-Object, which was introduced in PowerShell 3.0. In this syntax, the command would look like this:

Dir c:\temp | Where-Object Length –lt 100

In this syntax, we can compare a single property of each object with a constant value. The comparison operator here is –lt, which is how PowerShell expresses "less than".

Tip

All PowerShell comparison operators start with a dash. This can be confusing, but the < and > symbols have an entrenched meaning in shells (redirection), so they can't be used as comparison operators. Common comparison operators include -eq, -ne, -lt, -gt, -le, -ge, and -like, and they can be combined with the logical operators -and, -or, and -not. For a full list of operators, try Get-Help about_Operators.
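If you want to get a feel for these operators, you can try them directly at the prompt. This is a quick sketch with values of my own choosing; each expression simply evaluates to True or False:

```powershell
$size = 42

$isSmall    = $size -lt 100             # less than
$isAtLeast  = $size -ge 42              # greater than or equal
$isTextFile = 'report.txt' -like '*.txt'  # wildcard match
$isNonZero  = -not ($size -eq 0)        # logical negation of an equality test

$isSmall, $isAtLeast, $isTextFile, $isNonZero
```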

If you need to use PowerShell 1.0 or 2.0, or need to specify a condition more complex than is allowed in the simplified syntax, you can use the general or scriptblock syntax. Expressing the same condition using this form looks as follows:

Dir c:\temp | Where-Object {$_.Length -lt 100}

This looks a lot more complicated, but it's not so bad. The construction in curly braces is called a scriptblock, and is simply a block of PowerShell code. In the scriptblock syntax, $_ stands for the current object in the pipeline and we're referencing the Length property of that object using dot-notation. The good thing about the general syntax of Where-Object is that we can do more inside the scriptblock than simply test one condition. For instance, we could check for files below 100 bytes or those that were created after 1/1/2015, as follows:

Dir c:\temp | Where-Object {$_.Length -lt 100 -or $_.CreationTime -gt '1/1/2015'}

Here's this command running on my laptop:
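If you don't have a suitable folder to try this on, the following sketch applies the same condition to a few stand-in objects. The names, sizes, and dates are made up so that the result is predictable:

```powershell
# Stand-in objects with the two properties used by the filter
$files = @(
    [pscustomobject]@{ Name = 'tiny-old.txt'; Length = 40;   CreationTime = [datetime]'2014-06-01' }
    [pscustomobject]@{ Name = 'big-old.txt';  Length = 5000; CreationTime = [datetime]'2014-06-01' }
    [pscustomobject]@{ Name = 'big-new.txt';  Length = 5000; CreationTime = [datetime]'2015-03-15' }
)

# Keep objects that are small OR were created after 1/1/2015
$selected = $files | Where-Object { $_.Length -lt 100 -or $_.CreationTime -gt '1/1/2015' }
$selected
```

Only big-old.txt is dropped, because it fails both halves of the condition.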

If you're using PowerShell 3.0 or above and need to use the scriptblock syntax, you can substitute $_ with $PSItem in the scriptblock. The meaning is the same and $PSItem is a bit more readable. It makes the line slightly longer, but it's a small sacrifice to make for readability's sake.
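As a quick sketch (with sample numbers of my own), here is a filter written with $PSItem instead of $_:

```powershell
$numbers = 5, 250, 75, 1000

# $PSItem refers to the current pipeline object, exactly as $_ does
$small = $numbers | Where-Object { $PSItem -lt 100 }
$small
```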

The examples that I've shown so far have used simple comparisons with properties, but in the scriptblock syntax, any condition can be included. Also, note that any value other than a logical false value (expressed by $false in PowerShell), $null, 0, an empty string (''), or an empty collection is considered to be true ($true) in PowerShell. So, for example, we could filter objects using the Get-Member cmdlet to only show objects that have a particular property, as follows:

Dir | Where-Object {$_ | Get-Member Length}

This will return all objects in the current directory that have a Length property. Since files have lengths and directories don't, this is one way to get a list of files and omit the subdirectories.
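The following sketch shows both ideas together. The scratch folder and its contents are hypothetical; the first few lines simply show how PowerShell converts some values to true or false:

```powershell
# How a few values convert to a Boolean
[bool]0        # False
[bool]''       # False
[bool]$null    # False
[bool]'text'   # True

# Hypothetical scratch folder containing one subfolder and one file
$demo = Join-Path ([System.IO.Path]::GetTempPath()) 'ps-where-demo'
New-Item -ItemType Directory -Path (Join-Path $demo 'subfolder') -Force | Out-Null
[System.IO.File]::WriteAllText((Join-Path $demo 'notes.txt'), 'hello')

# Get-Member outputs nothing for the directory, so the condition is false for it
$filesOnly = Get-ChildItem -Path $demo | Where-Object { $_ | Get-Member Length }
$filesOnly | Select-Object Name

# Clean up the scratch folder
Remove-Item -Path $demo -Recurse -Force
```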

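The Select-Object cmdlet

The Select-Object cmdlet is a versatile cmdlet that you will find yourself using often. There are three main ways that it is used:

- Limiting the number of objects returned

- Limiting the properties of the objects returned

- Retrieving the values of a single property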

Sometimes, you just want to see a few of the objects that are in the pipeline. To accomplish this, you can use the -First, -Last, and -Skip parameters. The -First parameter indicates that you want to see a particular number of objects from the beginning of the list of objects in the pipeline. Similarly, the -Last parameter selects objects from the end of the list of objects in the pipeline.

For instance, getting the first two processes in the list from Get-Process is simple:
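A minimal sketch of that command follows. The processes you see will, of course, depend on what is running on your machine:

```powershell
# Take only the first two objects that Get-Process emits
$firstTwo = Get-Process | Select-Object -First 2
$firstTwo
```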

Since we didn't use Sort-Object to force the order of the objects in the pipeline, we don't know that these are the first alphabetically, but they were the first two that were output from Get-Process.

You can use -Skip to cause a certain number of objects to be bypassed before returning the objects. It can be used by itself to output the rest of the objects after the skipped ones, or in conjunction with -First or -Last to return all but the beginning or end of the list of objects. As an example, -Skip can be used to skip over header lines when reading a file using the Get-Content cmdlet.
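Here is a sketch of the header-skipping idea. The file name and its contents are hypothetical and are created by the example itself:

```powershell
# Hypothetical text file with one header line followed by three data lines
$path = Join-Path ([System.IO.Path]::GetTempPath()) 'ps-skip-demo.txt'
Set-Content -Path $path -Value 'Name,Size', 'alpha,10', 'beta,20', 'gamma,30'
$lines = Get-Content -Path $path

# Skip the header and return everything after it
$dataLines = $lines | Select-Object -Skip 1

# Skip the header, then take only the next two lines
$firstTwoData = $lines | Select-Object -Skip 1 -First 2

$dataLines
Remove-Item -Path $path
```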

Sometimes, the objects in the pipeline have more properties than you need. To select only certain properties from the list of objects in the pipeline, you can use the -Property parameter with a list of properties. For instance, to get only the Name, Extension, and Length from the directory listing, you could do something like this:
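One way to write this is sketched below, run against a hypothetical scratch folder that holds one subfolder and one file:

```powershell
# Hypothetical scratch folder containing a directory and a file
$demo = Join-Path ([System.IO.Path]::GetTempPath()) 'ps-select-demo'
New-Item -ItemType Directory -Path (Join-Path $demo 'docs') -Force | Out-Null
[System.IO.File]::WriteAllText((Join-Path $demo 'readme.txt'), 'hello')

# Keep only three properties, and only the first two objects
$trimmed = Get-ChildItem -Path $demo |
    Select-Object -Property Name, Extension, Length -First 2
$trimmed

# Clean up the scratch folder
Remove-Item -Path $demo -Recurse -Force
```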

I used the -First parameter as well to save some space in the output, but the important thing is that we only got the three properties that we asked for.

Note that, here, the first two objects in the pipeline were directories, and directory objects don't have a Length property. PowerShell provides an empty value of $null for missing properties like this. Also, note that these objects weren't formatted like a directory listing. We'll talk about formatting in detail in the next chapter, but for now, you should just know that these limited objects are not of the same type as the original objects, so the formatting system treated them differently.

Sometimes, you want to get the values of a single property of a set of objects. For instance, if you wanted to get the display names of all of the services installed on your computer, you might try to do something like this:

This is close to what you were looking for, but instead of getting a bunch of names (strings), you got a bunch of objects that each have a DisplayName property. A hint that this is what happened is seen by the heading (and underline) of DisplayName. You can also verify this using the Get-Member cmdlet:
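The following sketch shows both steps. To keep it runnable anywhere, it uses two made-up stand-in objects; on a Windows machine you could pipe the output of Get-Service instead:

```powershell
# Stand-in objects (hypothetical); Get-Service output has a DisplayName property too
$services = @(
    [pscustomobject]@{ Name = 'svcA'; DisplayName = 'Hypothetical Service A' }
    [pscustomobject]@{ Name = 'svcB'; DisplayName = 'Hypothetical Service B' }
)

# -Property returns objects that carry a DisplayName property, not strings
$selected = $services | Select-Object -Property DisplayName
$selected

# Get-Member lists DisplayName as a property of the selected objects
$selected | Get-Member -Name DisplayName
```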

In order to just get the values and not objects, you need to use the -ExpandProperty parameter. Unlike the -Property parameter, you can only specify a single property with -ExpandProperty, and the output is a list of raw values. Notice that with -ExpandProperty, the column heading is gone:

We can also verify using Get-Member that we just got strings instead of objects with a DisplayName property:
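Here is the same idea as a sketch, again with made-up stand-in objects in place of real Get-Service output:

```powershell
# Stand-in objects (hypothetical) with a DisplayName property
$services = @(
    [pscustomobject]@{ Name = 'svcA'; DisplayName = 'Hypothetical Service A' }
    [pscustomobject]@{ Name = 'svcB'; DisplayName = 'Hypothetical Service B' }
)

# -ExpandProperty returns the raw property values themselves
$names = $services | Select-Object -ExpandProperty DisplayName
$names

# Each value is a plain string
$names[0].GetType().FullName
```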

There are a few other parameters for Select-Object, but they will be less commonly used than the ones listed here.

The Measure-Object cmdlet

The Measure-Object cmdlet has a simple function. It calculates statistics based on the objects in the pipeline. Its most basic form takes no parameters, and simply counts the objects that are in the pipeline.

To count the files in c:\temp and its subfolders, you could write:

Dir c:\temp -Recurse -File | Measure-Object

The output shows the count, and also some other properties that give a hint about the other uses of the cmdlet. To populate these other fields, you will need to provide the name of the property that is used to calculate them and also specify which field(s) you want to calculate. The calculations are specified using the -Sum, -Minimum, -Maximum, and -Average switches.

For instance, to add up (sum) the lengths of the files in the C:\Windows directory, you could issue this command:

Dir c:\Windows | Measure-Object -Property Length -Sum
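To see all of the statistics at once, here is a sketch that measures three files of known sizes in a hypothetical scratch folder:

```powershell
# Hypothetical scratch folder with files of 100, 200, and 600 bytes
$demo = Join-Path ([System.IO.Path]::GetTempPath()) 'ps-measure-demo'
New-Item -ItemType Directory -Path $demo -Force | Out-Null
foreach ($size in 100, 200, 600) {
    [System.IO.File]::WriteAllText((Join-Path $demo "file$size.txt"), 'x' * $size)
}

# Count the files and calculate statistics on their Length property
$stats = Get-ChildItem -Path $demo -File |
    Measure-Object -Property Length -Sum -Minimum -Maximum -Average
$stats

# Clean up the scratch folder
Remove-Item -Path $demo -Recurse -Force
```

Any statistic whose switch you leave out is simply left empty in the output.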

The Group-Object cmdlet

The Group-Object cmdlet divides the objects in the pipeline into distinct sets based on a property or a set of properties. For instance, we can categorize the files in a folder by their extensions using Group-Object like this:
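A minimal sketch of such a command, run against a hypothetical scratch folder that the example creates for itself:

```powershell
# Hypothetical scratch folder with files of three different extensions
$demo = Join-Path ([System.IO.Path]::GetTempPath()) 'ps-group-demo'
New-Item -ItemType Directory -Path $demo -Force | Out-Null
foreach ($name in 'a.txt', 'b.txt', 'c.log', 'd.csv', 'e.txt') {
    [System.IO.File]::WriteAllText((Join-Path $demo $name), 'x')
}

# One output object per distinct extension
$groups = Get-ChildItem -Path $demo | Group-Object -Property Extension
$groups

# Clean up the scratch folder
Remove-Item -Path $demo -Recurse -Force
```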

You will notice in the output that PowerShell has provided the count of items in each set, the value of the property (Extension) that labels the set, and a property called Group, which contains all of the original objects that were on the pipeline that ended up in the set. If you have used the GROUP BY clause in SQL and are used to losing the original information when you group, you'll be pleased to know that PowerShell retains those objects in their original state in the Group property of the output.

If you don't need the objects and are simply concerned with what the counts are, you can use the -NoElement switch, which causes the Group property to be omitted.
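As a sketch, here is -NoElement applied to a few made-up stand-in objects:

```powershell
# Stand-in objects (hypothetical) with an Extension property
$files = @(
    [pscustomobject]@{ Name = 'a.txt'; Extension = '.txt' }
    [pscustomobject]@{ Name = 'b.txt'; Extension = '.txt' }
    [pscustomobject]@{ Name = 'c.log'; Extension = '.log' }
)

# Only Count and Name are kept; the grouped objects themselves are discarded
$counts = $files | Group-Object -Property Extension -NoElement
$counts
```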

You're not limited to grouping by a single property either. If you want to see files grouped by mode (read-only, archive, etc.) and extension, you can simply list both properties.

Note that the Name property of each set is now a list of two values corresponding to the two properties defining the group.
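Here is a sketch of grouping by two properties. The objects and their Mode strings are stand-ins that I made up, so the output is predictable:

```powershell
# Stand-in objects (hypothetical) with Mode and Extension properties
$files = @(
    [pscustomobject]@{ Name = 'a.txt'; Mode = '-a----'; Extension = '.txt' }
    [pscustomobject]@{ Name = 'b.txt'; Mode = '-ar---'; Extension = '.txt' }
    [pscustomobject]@{ Name = 'c.txt'; Mode = '-a----'; Extension = '.txt' }
    [pscustomobject]@{ Name = 'd.log'; Mode = '-a----'; Extension = '.log' }
)

# Each distinct combination of Mode and Extension becomes its own group
$groups = $files | Group-Object -Property Mode, Extension
$groups | Select-Object Count, Name

# The individual grouping values are also available in the Values property
$largest = $groups | Where-Object { $_.Count -eq 2 }
$largest.Values
```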

The Group-Object cmdlet can be useful to summarize the objects, but you will probably not use it nearly as much as Sort-Object, Where-Object, and Select-Object.
