This chapter gives you an overview of the process to install PostgreSQL from the source. The system used for installation and providing examples in the following sections is a 64-bit CentOS (6.4) machine. Other Unix/Linux systems typically have similar commands. For those using Windows systems, there is a set of utilities available at http://sourceforge.net/projects/unxutils/, which makes it possible to execute most of the Unix commands (find, grep, cut, and so on) in the Windows environment. The steps to be followed to install PostgreSQL on Windows are very different compared to those for Unix/Linux systems and are not covered in this chapter.

There are many possible ways to install PostgreSQL on a system. For Windows, downloading the graphical installer and using it is the easy way. For Linux systems such as Red Hat Enterprise Linux or CentOS, we could use either Yellowdog Updater, Modified (yum) or RPM Package Manager (rpm, originally Red Hat Package Manager) commands to install PostgreSQL. For Ubuntu, PostgreSQL can be installed using the apt-get command, which in turn works with Ubuntu's Advanced Packaging Tool (APT). While these options work, we do not get to see what is happening when we execute these commands, except, of course, that the database gets installed.

Then there are situations where we might want to build from the source. Assume that all we have is one production server and one development or staging server. We are on version 9.3. Version 9.4 is about to be released and there are quite a few interesting features in 9.4 that we want to try out. If we want to install 9.4 in the test server and use it alongside 9.3, without the installations stepping on each other's toes, compiling from the source with the --prefix= option and specifying different installation directories is the right approach. We could also set different default ports. It's also possible that the new version (source) is ready, but the package for our Linux distribution is not ready yet.

We might use a flavor of Linux for which an installation package is not available at all. Installation from source is the way forward in these situations. One advantage with installing from the source is that we don't have to worry too much about which package to download, the version of operating system (CentOS 6.3 or 6.4?), architecture (32 bit or 64 bit), and so on. These are more or less irrelevant. Of course, we should be using an operating system/architecture that is supported by the database, but that's about it! We also need to download and install all the tools and utilities necessary to compile and make the software, in this case, PostgreSQL.

So let's get down to it.

The source for PostgreSQL is available at http://www.postgresql.org/ftp/source/.

We can see a number of versions all the way down to version 1 when it was called Postgres95 and up to the latest production and beta versions. If you belong to the group who believe that one shouldn't try software that is not at least a few months old, so that its teething issues are resolved, you should opt for the last-but-one version. Otherwise, it's a good idea to opt for the latest stable version. The latest versions have added quite a few very useful features, such as materialized views and an improved set of JSON functions and operators.

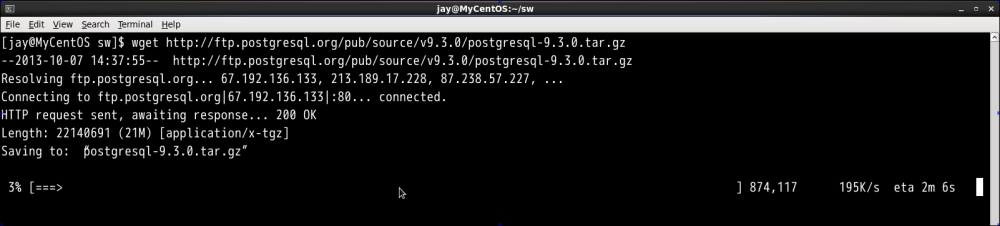

We will use the following wget command to download the source:

wget http://ftp.postgresql.org/pub/source/v9.3.0/postgresql-9.3.0.tar.gz

Executing this command will give us a window that looks like this:

As we can see, the tarred and gzipped source code comes to about 21 MB. As an aside, the installation files of Oracle, the big RDBMS out here, weigh over 2.2 GB.

The files can be extracted using the following command:

tar -xvf postgresql-9.3.0.tar.gz

The tar command is used to create or extract tape archive (tar) files. In the preceding command, the x option extracts, the v (verbose) option lists the files and folders as they are extracted, and the f option passes the name of the archive file to work on. If the command does not work as given, we might need to add the z option, making it tar -xzvf. Some versions of tar are intelligent enough to figure out whether the file is gzipped and will unzip it automatically. The untarred, unzipped files come to around 115 MB.
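The effect of the individual tar options can be tried safely on a throwaway archive. The following is only a minimal sketch: the names demo-src and demo.tar.gz are made up for illustration and have nothing to do with the PostgreSQL tarball.

```shell
# Work in a scratch directory so nothing else is touched.
workdir=$(mktemp -d)
cd "$workdir" || exit 1

# Build a tiny gzipped tar archive to experiment with.
mkdir demo-src
echo "hello" > demo-src/README
tar -czf demo.tar.gz demo-src
rm -r demo-src

# t lists the contents without extracting anything.
tar -tzf demo.tar.gz

# x extracts, z gunzips, v prints each name, f names the archive.
tar -xzvf demo.tar.gz
```

Listing with t before extracting with x is a cheap way to check where an unfamiliar archive will put its files.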

Let's inspect the contents:

cd postgresql-9.3.0
find ./ -maxdepth 1 -type d

The find command searches for files meeting specific criteria. Here, we instructed find to limit itself to scanning just one level of subdirectories using maxdepth 1. We used the type option along with d to tell find that we need files of type directory, as shown in the following screenshot:

There are four directories:

src: This directory has most of the core code, namely, code for the backend processes, optimizer, storage, client utilities (such as psql) and code to take care of replication, and so on. It also contains the makefiles for various distributions. For example, we have the files Makefile.hpux, Makefile.linux, Makefile.openbsd, and Makefile.sco under src/makefiles.

doc: This directory has the source for documentation written in DocBook, DocBook being an application of Standard Generalized Markup Language (SGML). It's possible to generate documentation in an HTML format, PDF format, and a few other formats.

contrib: This directory is where many extensions are available. These are add-on modules that do not form part of the core installation, but can be installed as needed. For example, those who have to connect to other PostgreSQL databases can install the Foreign Data Wrapper extension: postgres_fdw. For those who want to access the contents of a file on the server from a table, there is the file_fdw extension.

config: This directory contains a few macros that help you configure and compile the package.
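To see how -maxdepth and -type d interact, the same kind of find invocation can be run against a scratch tree. This is a sketch only: the directory names mirror the four described above, but the tree is created empty for the demonstration, and -mindepth 1 (available in GNU and BSD find) is added as an assumption to leave out the starting directory itself.

```shell
tree=$(mktemp -d)
mkdir -p "$tree/src/backend" "$tree/doc" "$tree/contrib" "$tree/config"
touch "$tree/README"

# Only directories exactly one level below the starting point are printed;
# src/backend is too deep and README is not a directory.
top_level_dirs=$(cd "$tree" && find . -mindepth 1 -maxdepth 1 -type d | sort)
echo "$top_level_dirs"
```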

Now let's move on to the dependencies, configuration options, and the actual installation itself.

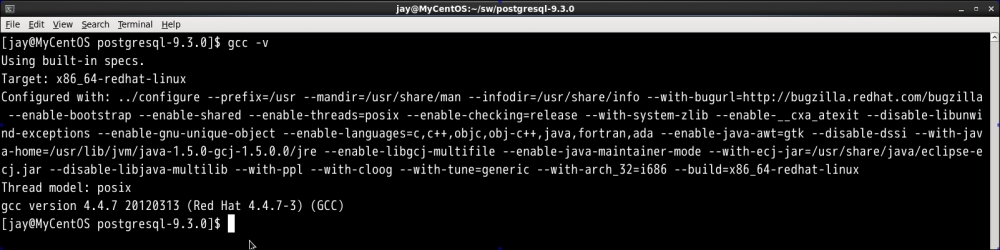

To compile and build PostgreSQL from source, we need GNU Make Version 3.80 or higher. The gmake -v command will tell us whether we have gmake and its version.

A compiler is also necessary. GNU Compiler Collection (GCC) is one such toolset that is included in almost all the Unix systems. The gcc -v command will provide you with the version of gcc as well as options with which it was configured on the system, as shown in the following screenshot:

Note

We can use the following commands to install the necessary packages if they are missing:

On Ubuntu:

sudo apt-get install build-essential

On RHEL/CentOS:

sudo yum groupinstall 'Development Tools'
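Before installing anything, it can help to check which of the build tools are already on the PATH. The following is a minimal sketch; have_tool is a hypothetical helper name, and the list of tools checked is an assumption that can be adjusted.

```shell
# have_tool is a hypothetical helper: succeeds if the command is on PATH.
have_tool() {
    command -v "$1" >/dev/null 2>&1
}

# Report on each build prerequisite without stopping at the first gap.
for tool in make gcc tar; do
    if have_tool "$tool"; then
        echo "found:   $tool"
    else
        echo "missing: $tool"
    fi
done
```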

The process of building a package from source involves preprocessing the source (including the header files, expanding macros, and so on), compiling, assembly, and linking (linking the libraries). The make utility automates the process of building the executable from source code. The make command uses a makefile, which contains rules on how to build the executables.

Other than GNU Make and a compiler, there is nothing else that is really necessary to continue. However, it is better to have at least the following two components:

readline: The GNU readline library is very useful once we start using psql, the PostgreSQL command-line client, which is covered later. Having readline helps us work in a very "bash-like" environment, using Tab to autocomplete/suggest table names and up and down keys to browse command history, and so on and so forth. It also helps to have zlib in place before we proceed with the installation.

zlib: This compression library can be handy when we are taking backups (a process definitely to be followed when we have a database).

Adding SQL/XML support will also be useful as sooner or later we will want to extract data from tables in an XML format or load data from the XML files to tables. Still, this might not be as useful as the other two, namely, readline and zlib.

The next step is to execute configure. This is a shell script which will run, to quote documentation, a number of tests to determine several system dependent variables. It will also create many files that will be used during compilation. We can get an idea about the options by executing the following command:

./configure --help > /tmp/config.txt

We can vi /tmp/config.txt and verify that there are over 80 options that can be used. These options can be broadly grouped into the following categories:

Related to choosing directories. If architecture-independent files go to /usr/local/pgsql or elsewhere, where should the binaries go, where should the documentation files go, and so on.

Related to debugging, profiling, tracing, and so on to be used with care in production.

Related to choosing nondefault settings for parameters such as blocksize, port, and segment size. Changing default setting for parameters such as blocksize can have significant impact on performance. So, we need to be cautious here. Changing the default port is a good idea from a security perspective. It can be changed later in the configuration file also.

Related to enabling options, such as OpenSSL support, SELinux support, and LDAP support.

Related to building modules for several procedural languages (PL/Perl, PL/Python, and PL/Tcl).

Related to disabling a few features (such as zlib and readline).

Tip

Pay attention to the --prefix option. If you would like to do a clean upgrade without causing disruption to the existing environment, provide a directory in which the installation files should be written to. This way, each version will be in a different directory. For example:

./configure --prefix=/opt/pg/9.3
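When two versions are to live side by side, each one needs its own installation directory and, ideally, its own default port. The following sketch prints the configure options for such a setup. configure_args is a hypothetical helper, the /opt/pg layout follows the example above, and the port numbers are arbitrary choices; --with-pgport is the configure option that sets the compiled-in default port.

```shell
# configure_args is a hypothetical helper that prints the options for one
# side-by-side installation: its own directory and its own default port.
configure_args() {
    version=$1
    port=$2
    printf '%s\n' "--prefix=/opt/pg/$version --with-pgport=$port"
}

configure_args 9.3 5432
configure_args 9.4 5433

# From the top of an unpacked source tree, the result could be used as:
#   ./configure $(configure_args 9.4 5433)
```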

When we run ./configure, it's likely that we get an output like this:

The output tells us that readline is not available. However, if we list installation packages, it is very much there. The reason is that readline-devel is missing. It contains files needed by programs (such as psql) that use the readline library. This can be installed using the following command:

yum install readline-devel.x86_64

It also installs ncurses-devel. You will have to execute the command using sudo or root. You might also get a similar error for zlib, although zlib itself is already installed. Again, the corrective action is to install devel, in this case, zlib-devel.
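Whether the development files are present can be checked before running configure by looking for the header files themselves. This is a rough sketch: has_header is a hypothetical helper, and /usr/include as the default location is an assumption that holds on many, but not all, systems.

```shell
# has_header is a hypothetical helper: checks for a header file below an
# include directory (default /usr/include, which is an assumption).
has_header() {
    [ -f "${2:-/usr/include}/$1" ]
}

for header in readline/readline.h zlib.h; do
    if has_header "$header"; then
        echo "present: $header"
    else
        echo "absent:  $header (the matching -devel package is probably missing)"
    fi
done
```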

Once this is done, we can run configure again and it should go through without any issues, as shown in the following screenshot:

Two files are now created in the current directory, in addition to a few more files in subdirectories. One is config.status and the other is config.log. The config.status file is a shell script that can be executed to recreate the configuration. The config.log file can be reviewed to understand the various options used, variables, and errors, if any. It's possible that the config.log file has a few errors that are marked fatal, and the compilation process can still be completed without any issue.
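One quick use of config.log is to recover the exact command line that configure was run with, which Autoconf records near the top of the file on a line starting with two spaces and a dollar sign. The sketch below relies on that layout; configure_invocation is a hypothetical helper name.

```shell
# configure_invocation is a hypothetical helper: prints the command line
# recorded at the top of a config.log (default: ./config.log).
configure_invocation() {
    sed -n 's/^  \$ //p' "${1:-config.log}" | head -n 1
}
```

Run from the top of the source tree after configure has completed, configure_invocation prints something like ./configure --prefix=/opt/pg/9.3.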

The build step compiles all the source files and generates the executables. The makefile created in the configure step is used by the gmake utility. At this point, the generated files are not yet copied to standard directories, such as bin, /usr/bin, /usr/local/bin, and so on. We have the option to build everything (the core, the contrib modules, the documentation, and so on), or just the core. It's also possible to build just the core now and add the necessary contrib modules later on. We will build everything now, rather than adding the necessary modules later. Hence, the command is:

gmake world

The process takes a few minutes to complete, and in the end says PostgreSQL, contrib, and documentation successfully made. Ready to install, as shown in the following screenshot:

The installation step is where the files are copied to the correct directories. As this involves writing files to directories that an ordinary user cannot write to, we need to use su for this step:

su

If you are interested in seeing what happens during the installation step, redirect the output to a file, for example, gmake install-world > /tmp/install.out.

We used the keyword world for make. We will use a similar option for installation too:

gmake install-world

If all goes well, we will see a message that says PostgreSQL, contrib, and documentation installation complete. If the output was directed to a file as mentioned, we can open it and see that the installation process created a /usr/local/pgsql directory with a few subdirectories for various components. Then, the install command copied the directories and files to target directories and set attributes such as permissions. Refer to the highlighted portion in the following screenshot:

As we did not make any changes to the default options, the installation files will be at /usr/local/pgsql. In this, we have four directories, namely include, lib, share, and bin. The include directory contains header files (with the .h extension), and the lib directory contains all the libraries to be linked dynamically (.so in the case of Linux/Unix systems and .dll in the case of Windows systems). The bin directory, of course, contains executables.

It is the share directory that is a bit more interesting. Here, we have a number of sample files, namely, pg_hba.conf.sample, pg_ident.conf.sample, pg_service.conf.sample, postgresql.conf.sample, psqlrc.sample, and recovery.conf.sample. Once we initialize a cluster and make changes to various configuration files and then lose track of the changes, we can compare with these files and understand what changes have been made or roll back the changes if necessary.
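Comparing a live configuration file against its shipped sample is a one-line diff. The sketch below wraps it in a hypothetical helper, conf_changes, mainly to deal with the exit status of diff. Note that initdb itself adjusts a few settings when it generates postgresql.conf, so even a fresh cluster is likely to show some differences.

```shell
# conf_changes is a hypothetical helper: prints the lines that differ between
# a shipped sample file and the live configuration file.
conf_changes() {
    sample=$1
    live=$2
    # diff exits with status 1 when the files differ; only 2 or more is an error.
    diff "$sample" "$live" || [ $? -eq 1 ]
}
```

With the paths used in this chapter, a call could look like conf_changes /usr/local/pgsql/share/postgresql.conf.sample /pgdata/9.3/postgresql.conf.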

This directory also has a few SQL files such as information_schema.sql and system_views.sql, which go into creating metadata views when a database cluster is initialized.

At the next level of directories under share, we have the doc directory that holds the documentation, and the man directory that holds the manual pages, and so on. The directory of interest under share is the one named extension. Here, we can see all the extensions, which we can install as per our need. Most extensions have a .control file that provides basic information, as shown here:

[jay@MyCentOS extension]$ more dblink.control
# dblink extension
comment = 'connect to other PostgreSQL databases from within a database'
default_version = '1.1'
module_pathname = '$libdir/dblink'
relocatable = true

There will be SQL files that correspond to each extension, and these will be used when we install the extension in a database we choose.
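Since a .control file is a plain list of key = value lines, individual settings can be pulled out with standard tools. The following is a minimal sketch that assumes one setting per line, as in the dblink.control example above; control_value is a hypothetical helper name.

```shell
# control_value is a hypothetical helper: prints the value of one key from an
# extension .control file, with surrounding single quotes removed.
control_value() {
    file=$1
    key=$2
    sed -n "s/^${key}[[:space:]]*=[[:space:]]*//p" "$file" |
        sed "s/^'//; s/'\$//"
}
```

For example, control_value dblink.control default_version run in the extension directory would print the version that CREATE EXTENSION installs by default.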

The documentation to install PostgreSQL from source is at http://www.postgresql.org/docs/current/static/installation.html.

Note that we have just installed the database software. There is no database available to connect to yet. Adding a user for the database administration tasks and initializing a database cluster are the next steps.

First we add an OS user. This user will be used to start/stop/restart the database. This user will also be the superuser for the cluster. The following command has to be executed as root or with sudo:

adduser postgres

Next, we create a directory that will be the base directory for the new cluster. This could be anywhere. A standard location can be /usr/local/pgsql/data. However, you might want to have the database cluster on a separate partition. In the event of an OS and associated file system crash, your database data remains intact. It can also be that you want to use faster spinning disks or Solid State Disks for the database cluster to improve performance. In short, performance and/or reliability concerns can make you choose a location other than the default location to initialize the database cluster. As root, we execute the commands:

[root@MyCentOS extension]# mkdir -p /pgdata/9.3

The -p option ensures that the parent directory is also created if it is nonexistent:

[root@MyCentOS extension]# chown postgres /pgdata/9.3

Then, we switch user to postgres. This is necessary because when we initialize the cluster, the user under which the command was executed becomes the owner of the cluster. The server process will also be owned by this user. We will go with the standard user, postgres, which we created:

su - postgres

The next step is to run the initdb script. Well! Not exactly. If we run initdb now, we will get an error:

[postgres@MyCentOS ~]$ initdb
-bash: initdb: command not found

This is because we haven't added the directory containing the PostgreSQL executables to the environment variable PATH yet. We could provide the absolute path and make it work. However, it is better to set the variables.

In .bash_profile of postgres, we have the following lines:

PATH=$PATH:$HOME/bin
export PATH

Just before the export PATH line, add:

PATH=$PATH:/usr/local/pgsql/bin

Then, try this:

[postgres@MyCentOS ~]$ which initdb
/usr/bin/which: no initdb in (/usr/local/bin:/bin:/usr/bin:/usr/local/sbin:/usr/sbin:/sbin:/home/postgres/bin)

Not surprising, as .bash_profile doesn't get executed unless we source it or log out and log in. Log out, log in, and try again:

[postgres@MyCentOS ~]$ exit
logout
[root@MyCentOS ~]# su - postgres
[postgres@MyCentOS ~]$ which initdb
/usr/local/pgsql/bin/initdb
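If .bash_profile is sourced more than once, a plain PATH=$PATH:... line appends the same directory again each time. One way to avoid this is a small guard, sketched below; path_append is a hypothetical helper name, and the directory is the default installation location used in this chapter.

```shell
# path_append is a hypothetical helper: adds a directory to PATH only if it
# is not listed already.
path_append() {
    case ":$PATH:" in
        *":$1:"*) ;;                 # already listed, leave PATH unchanged
        *) PATH="$PATH:$1" ;;
    esac
}

path_append /usr/local/pgsql/bin
export PATH
```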

Now we are good to go! It's a good idea to execute the following:

initdb --help | more

Among the many parameters available, the most important one, in most cases, is -D (or --pgdata). This parameter is used to define the directory where the cluster will be initialized (where the database cluster should be stored). This is the only mandatory parameter, unless the PGDATA environment variable is set. Another parameter that can be useful is --pwprompt. Using this, we can set the password for the database superuser. So, we execute the following command:

initdb --pgdata=/pgdata/9.3 --pwprompt

If the password is not set now and password authentication is to be used, we have to set it later. The initdb run looks like this:

As seen in the preceding screenshot, the process asks for the superuser password. Towards the end, it gives a warning that the trust authentication is enabled for local connections. This means that it will be possible to make connections from the localhost without being prompted for a password. It's a good idea to change this setting. We will come to this later. For more options available for initdb, please refer to http://www.postgresql.org/docs/current/static/app-initdb.html.
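The trust warning can also be avoided at initialization time, because initdb accepts --auth-local and --auth-host to choose the authentication method written into pg_hba.conf. The following sketch wraps the call in a hypothetical function, init_cluster; the INITDB variable is likewise an invention of this example, there only so that the command line can be printed instead of executed. Choosing md5 here is an assumption, not a recommendation from the text.

```shell
# init_cluster is a hypothetical wrapper. INITDB lets the binary be overridden
# (for example with "echo" for a dry run); it defaults to initdb on PATH.
init_cluster() {
    datadir=$1
    "${INITDB:-initdb}" \
        --pgdata="$datadir" \
        --pwprompt \
        --auth-local=md5 \
        --auth-host=md5
}

# Dry run: print the options instead of creating a cluster.
INITDB=echo init_cluster /pgdata/9.3
```

With md5 selected, initdb requires a superuser password, which is why --pwprompt is kept in the command.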

As always, let's see what happened; which directories got created when we initialized the cluster:

cd /pgdata/9.3
[postgres@MyCentOS 9.3]$ find ./ -maxdepth 1 -type d
./
./base
./pg_stat
./pg_clog
./pg_xlog
./pg_tblspc
./pg_twophase
./pg_subtrans
./global
./pg_notify
./pg_stat_tmp
./pg_snapshots
./pg_multixact
./pg_serial

We will start the cluster, as it will help us relate the file system to the databases:

[postgres@MyCentOS base]$ export PGDATA=/pgdata/9.3/

PGDATA is the environment variable that pg_ctl and other utilities read to find the data directory when it is not given with the -D option.

[postgres@MyCentOS base]$ pg_ctl start
server starting
[postgres@MyCentOS base]$ LOG: database system was shut down at 2013-10-13 13:48:07 IST
LOG: database system is ready to accept connections
LOG: autovacuum launcher started

pg_ctl is a utility to start, stop, check the status of, or restart the database cluster. Passing init or initdb as a parameter results in the initialization of a cluster. More options can be explored at http://www.postgresql.org/docs/current/static/app-pg-ctl.html.
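The most common pg_ctl calls can be gathered in a small wrapper. This is a sketch under stated assumptions: cluster is a hypothetical function name, PG_CTL is a variable invented here to allow a dry run, and server.log inside the data directory is an arbitrary choice of log file. The options themselves (-D for the data directory, -l for the log file, -w to wait for completion, -m fast for a fast shutdown) are standard pg_ctl options.

```shell
# cluster is a hypothetical wrapper around pg_ctl. PG_CTL allows a dry run
# (for example PG_CTL=echo); PGDATA must already be set.
cluster() {
    action=$1
    case $action in
        start)  "${PG_CTL:-pg_ctl}" -D "$PGDATA" -l "$PGDATA/server.log" -w start ;;
        stop)   "${PG_CTL:-pg_ctl}" -D "$PGDATA" -m fast stop ;;
        status) "${PG_CTL:-pg_ctl}" -D "$PGDATA" status ;;
        *)      echo "usage: cluster start|stop|status" >&2; return 2 ;;
    esac
}
```

Sending the server output to a log file with -l also keeps the LOG lines from being interleaved with the shell prompt, as happened in the session above.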

Now, we will go through the directories:

base: This directory holds the databases that will be created by database users. This also holds the pg_default tablespace with the databases postgres, template0, and template1:

[postgres@MyCentOS base]$ pwd
/pgdata/9.3/base
[postgres@MyCentOS base]$ find ./ -type d
./
./1
./12891
./12896
[postgres@MyCentOS base]$ oid2name
All databases:
    Oid  Database Name  Tablespace
----------------------------------
  12896       postgres  pg_default
  12891      template0  pg_default
      1      template1  pg_default

We can see here that Oid points to the directory names. We can try creating and dropping a database, as shown in the following code:

[postgres@MyCentOS base]$ pwd
/pgdata/9.3/base
[postgres@MyCentOS base]$ psql
psql (9.3.0)
Type "help" for help.
postgres=# \! ls
1  12891  12896
postgres=# CREATE DATABASE test;
CREATE DATABASE
postgres=# \! ls
1  12891  12896  16385
postgres=# DROP DATABASE test;
DROP DATABASE
postgres=# \! ls
1  12891  12896

Tip

OID stands for Object Identifier. OIDs are used internally by PostgreSQL as primary keys for various system tables. Objects created in the cluster (tables, databases, and so on) will have OIDs associated with them. The oid2name utility is available as a contrib module and helps us examine the databases, tables, and related file nodes.

We note a few things here. First, we were not prompted for a password (remember the warning about trust authentication?). Next, we can execute host commands from psql (more on this in a later chapter). Third, creating or dropping a database is actually similar to creating or deleting a directory. PostgreSQL does do quite a bit of internal book-keeping when we create or drop objects in addition to manipulating directories.
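The mapping from a database name to its directory can also be looked up through the pg_database catalog, without oid2name. The sketch below is illustrative only: database_dir is a hypothetical helper, it assumes PGDATA is set, that psql can connect to the postgres database, and that the database in question lives in the default tablespace (so its files are under base).

```shell
# database_dir is a hypothetical helper: prints the directory under
# $PGDATA/base that holds the files of the named database.
database_dir() {
    # The query is fed on standard input so that psql substitutes :'dbname'
    # as a properly quoted literal.
    oid=$(printf '%s\n' "SELECT oid FROM pg_database WHERE datname = :'dbname';" |
          psql -X -At -v dbname="$1" postgres) || return 1
    [ -n "$oid" ] || return 1
    printf '%s/base/%s\n' "${PGDATA%/}" "$oid"
}
```

In the session above, database_dir test would have printed /pgdata/9.3/base/16385 while the test database existed.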

global: This directory contains cluster-wide tables. There are many tables and associated views that keep track of the entire cluster, namely, database roles, system catalog data, and so on.

pg_clog: This directory contains the transaction commit status data.

pg_multixact: This directory contains multitransaction status data (concurrent transactions waiting for a lock).

pg_notify: This directory contains the LISTEN/NOTIFY status data.

pg_serial: This directory contains information about committed serializable transactions.

pg_stat_tmp: This directory contains temporary files for the statistics subsystem.

pg_subtrans: This directory contains subtransaction status data.

pg_tblspc: This directory contains symbolic links to tablespaces.

pg_twophase: This directory contains state files for prepared transactions.

pg_xlog: This directory contains Write Ahead Log (WAL) files.

In short, we have directories to hold containers of real user created data (tables and tablespaces), directories to hold data about all the data and data structures (metadata), and then directories to hold data about the state of transactions.

Let's take a look at the processes spawned when we started PostgreSQL:

[root@MyCentOS ~]# ps -fu postgres

The following screenshot illustrates the processes spawned:

We can see that there is one parent process (PID 1566), which spawned a few child processes.

One important file that gets created when we initialize a database cluster is postgresql.conf. This file contains a number of critical parameters related to the server processes and resource allocation, memory management, logging, file locations, and so on. Another file is pg_hba.conf ("hba" stands for host-based authentication). Changes to these files are necessary to enable client connections to the database from a different machine. Both of these are in the PGDATA folder.

The postmaster.pid file in the same directory is used by pg_ctl to determine whether the database is running:

[postgres@MyCentOS 9.3]$ pg_ctl status
pg_ctl: server is running (PID: 1566)

The contents of the file are as follows:

[postgres@MyCentOS 9.3]$ head -1 postmaster.pid
1566

The 1566 number in the pid file is the same as what we got for the parent process when we did a process listing earlier.
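The same idea, reading the PID from the file and checking whether such a process exists, can be sketched in a few lines of shell. server_running is a hypothetical helper and is much cruder than pg_ctl status: a stale postmaster.pid whose PID has been reused by an unrelated process would fool it, and kill -0 also fails for processes owned by another user.

```shell
# server_running is a hypothetical helper: reads the PID from the first line
# of postmaster.pid and asks the kernel whether that process exists.
server_running() {
    pidfile="${1:-$PGDATA}/postmaster.pid"
    [ -f "$pidfile" ] || return 1
    pid=$(head -n 1 "$pidfile")
    # kill -0 sends no signal; it only reports whether the PID can be signalled.
    kill -0 "$pid" 2>/dev/null
}
```

A call such as server_running /pgdata/9.3 && echo "up" would then give a quick yes/no answer in a script.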

We have so far initialized the database cluster. However, we made quite a few extensions available using the world option. What about them? We can list the installed extensions using the \dx (describe extension) command at the psql prompt:

postgres=# \dx
                 List of installed extensions
  Name   | Version |   Schema   |         Description
---------+---------+------------+------------------------------
 plpgsql | 1.0     | pg_catalog | PL/pgSQL procedural language
(1 row)

To get a list of available extensions, we can query the pg_available_extensions view, as shown here:

postgres=# SELECT name,comment FROM pg_available_extensions limit 5;
   name   |                           comment
----------+--------------------------------------------------------------
 dblink   | connect to other PostgreSQL databases from within a database
 isn      | data types for international product numbering standards
 file_fdw | foreign-data wrapper for flat file access
 tsearch2 | compatibility package for pre-8.3 text search functions
 unaccent | text search dictionary that removes accents
(5 rows)

Let's try installing one extension and then see the list of installed extensions again:

postgres=# CREATE EXTENSION dblink ;
CREATE EXTENSION
postgres=# \dx
                                 List of installed extensions
  Name   | Version |   Schema   |                         Description
---------+---------+------------+--------------------------------------------------------------
 dblink  | 1.1     | public     | connect to other PostgreSQL databases from within a database
 plpgsql | 1.0     | pg_catalog | PL/pgSQL procedural language
(2 rows)

The dblink extension has been added to the list of installed extensions. To remove it, just drop it:

postgres=# DROP EXTENSION dblink ;
DROP EXTENSION
postgres=# \dx
                 List of installed extensions
  Name   | Version |   Schema   |         Description
---------+---------+------------+------------------------------
 plpgsql | 1.0     | pg_catalog | PL/pgSQL procedural language
(1 row)
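Extensions can also be installed from the shell, which is handy in setup scripts. The following is a minimal sketch: ensure_extension is a hypothetical helper, postgres as the default database is an assumption, and IF NOT EXISTS is used so that running it twice is harmless.

```shell
# ensure_extension is a hypothetical helper: installs an extension in the
# given database only if it is not there already.
ensure_extension() {
    extension=$1
    database=${2:-postgres}
    # The extension name is interpolated as-is, so it must come from a trusted source.
    psql -X -d "$database" -c "CREATE EXTENSION IF NOT EXISTS \"$extension\";"
}
```

For instance, ensure_extension dblink would leave the postgres database in the same state as the CREATE EXTENSION session shown earlier.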


In this chapter, we covered the steps to install PostgreSQL from the source: downloading, configuring, building and installing. We went through the directory structure of the source tree, the cluster initialization process, the resultant directories and files, as well as the process to install and remove extensions.

In the next chapter, we will cover PostgreSQL Server Architecture. We will cover the various background processes, their functions, memory structures and how they all work with each other to provide the many features available in PostgreSQL.