Vulnerability Management Governance

Today's technology landscape is changing at an extremely fast pace. Almost every day, a new technology is introduced and quickly gains popularity. Although most organizations adapt to rapidly changing technology, they often don't realize how the use of new technology changes their threat landscape. While an organization's existing technology landscape might already be vulnerable, the introduction of new technology can add further IT security risks.

In order to effectively mitigate all the risks, it is important to implement a robust vulnerability management program across the organization. This chapter will introduce some of the essential governance concepts that will help lay a solid foundation for implementing the vulnerability management program. Key learning points in this chapter will be as follows:

- Security basics

- Understanding the need for security assessments

- Listing down the business drivers for vulnerability management

- Calculating ROIs

- Setting up the context

- Developing and rolling out a vulnerability management policy and procedure

- Penetration testing standards

- Industry standards

Security basics

Security is a subjective matter and designing security controls can often be challenging. A particular asset may demand more protection for keeping data confidential while another asset may demand to ensure utmost integrity. While designing the security controls, it is also equally important to create a balance between the effectiveness of the control and the ease of use for an end user. This section introduces some of the essential security basics before moving on to more complex concepts further in the book.

The CIA triad

Confidentiality, integrity, and availability (often referred to as CIA) are the three critical tenets of information security. While there are many factors that help determine the security posture of a system, confidentiality, integrity, and availability are the most prominent among them. From an information security perspective, any given asset can be classified based on the confidentiality, integrity, and availability values it carries. This section conceptually highlights the importance of CIA along with practical examples and common attacks against each of the factors.

Confidentiality

The dictionary meaning of the word confidentiality states: the state of keeping or being kept secret or private. Confidentiality, in the context of information security, implies keeping the information secret or private from any unauthorized access, which is one of the primary needs of information security. The following are some examples of information that we often wish to keep confidential:

- Passwords

- PIN numbers

- Credit card number, expiry date, and CVV

- Business plans and blueprints

- Financial information

- Social security numbers

- Health records

Common attacks on confidentiality include:

- Packet sniffing: This involves interception of network packets in order to gain unauthorized access to information flowing in the network

- Password attacks: This includes password guessing, cracking using brute force or dictionary attack, and so on

- Port scanning and ping sweeps: Port scans and ping sweeps are used to identify live hosts in a given network and then perform some basic fingerprinting on the live hosts

- Dumpster diving: This involves searching through the dustbins of the target organization in an attempt to find sensitive information

- Shoulder surfing: This is a simple act wherein any person standing behind you may peek in to see what password you are typing

- Social engineering: Social engineering is an act of manipulating human behavior in order to extract sensitive information

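- Phishing and pharming: Phishing involves sending false and deceptive emails to a victim, spoofing the identity of a trusted sender, and tricking the victim into giving out sensitive information; pharming redirects the victim to a fraudulent website for the same purpose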

- Wiretapping: This is similar to packet sniffing though more related to monitoring of telephonic conversations

- Keylogging: This involves installing a secret program onto the victim's system which would record and send back all the keys the victim types in

Integrity

Integrity in the context of information security refers to the quality of the information, meaning the information, once generated, should not be tampered with by any unauthorized entities. For example, if a person sends X amount of money to his friend using online banking, and his friend receives exactly X amount in his account, then the integrity of the transaction is said to be intact. If the transaction is tampered with in transit, and the friend receives either X + (n) or X - (n) amount, then the integrity of the transaction has been compromised.
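One common way to detect this kind of tampering is to compare a cryptographic digest of the data computed before and after transit. The following is a minimal sketch, assuming a hypothetical transfer record serialized as a string; the record format and the function names are illustrative only. Note that a plain hash detects modification only if the reference digest itself is kept out of the attacker's reach.

```python
import hashlib
import hmac


def digest(message: str) -> str:
    """Return the SHA-256 digest of a message as a hex string."""
    return hashlib.sha256(message.encode("utf-8")).hexdigest()


def is_intact(received: str, expected_digest: str) -> bool:
    """Check that the received message still matches the sender's digest."""
    # compare_digest avoids leaking information through timing differences
    return hmac.compare_digest(digest(received), expected_digest)


# Hypothetical transaction record: the sender transfers X = 100
sent = "from=alice;to=bob;amount=100"
sent_digest = digest(sent)

print(is_intact("from=alice;to=bob;amount=100", sent_digest))  # unchanged record
print(is_intact("from=alice;to=bob;amount=150", sent_digest))  # amount altered in transit
```

The first check passes because the record is unchanged, while the second fails because the amount no longer matches the digest computed by the sender.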

Common attacks on integrity include:

- Salami attacks: When a single attack is divided or broken into multiple small attacks in order to avoid detection, it is known as a salami attack

- Data diddling attacks: This involves unauthorized modification of data before or during its input into the system

- Trust relationship attacks: The attacker takes benefit of the trust relationship between the entities to gain unauthorized access

- Man-in-the-middle attacks: The attacker hooks himself to the communication channel, intercepts the traffic, and tampers with the data

- Session hijacking: Using the man-in-the-middle attack, the attacker can hijack a legitimate active session which is already established between the entities

Availability

The availability principle states that if an authorized individual makes a request for a resource or information, it should be available without any disruption. For example, a person wants to download his bank account statement using an online banking facility. For some reason, the bank's website is down and the person is unable to access it. In this case, availability is affected because the person is unable to retrieve the statement from the bank's website. From an information security perspective, availability is as important as confidentiality and integrity. If, for any reason, the requested data isn't available in time, it could cause severe tangible or intangible impact.

Common attacks on availability include the following:

- Denial of service attacks: In a denial of service attack, the attacker sends a large number of requests to the target system. The requests are so large in number that the target system does not have the capacity to respond to them. This causes the failure of the target system and requests coming from all other legitimate users get denied.

- SYN flood attacks: This is a type of denial of service attack wherein the attacker sends a large number of SYN requests to the target with the intention of making it unresponsive.

- Distributed denial of service attacks: This is quite similar to the denial of service attack, the difference being the number of systems used to attack. In this type of attack, hundreds or even thousands of systems are used by the attacker in order to flood the target system.

- Electrical power attacks: This type of attack involves deliberate modification in the electrical power unit with an intention to cause a power outage and thereby bring down the target systems.

- Server room environment attacks: Server rooms are temperature controlled. Any intentional act to disturb the server room environment can bring down the critical server systems.

- Natural calamities and accidents: These involve earthquakes, volcano eruptions, floods, and so on, or any unintentional human errors.

Identification

Authentication is often considered the first step of interaction with a system. However, authentication is preceded by identification. A subject claims an identity through the process of identification, thereby initiating accountability. To initiate the process of authentication, authorization, and accountability (AAA), a subject must provide an identity to a system. Typing in a username, swiping an RFID access card, or giving a finger impression are some of the most common and simple ways of providing an individual identity. In the absence of an identity, a system has no way to correlate an authentication factor with the subject.

Once the identity of a subject is established, all actions performed thereafter are accounted against that subject. Information systems track activity by identity, not by individual. A computer isn't capable of differentiating between humans; however, it can readily distinguish one user account from all other user accounts. The act of claiming an identity is known as identification. Simply claiming an identity, however, does not imply access or authority. The subject must first prove the claimed identity in order to get access to controlled resources.

Authentication

Verifying and testing that the claimed identity is correct and valid is known as the process of authentication. In order to authenticate, the subject must present additional information that exactly matches what the system holds for the identity established earlier. A password is one of the most common mechanisms used for authentication.

The following are some of the factors that are often used for authentication:

- Something you know: The something you know factor is the most common factor used for authentication. For example, a password or a simple personal identification number (PIN). However, it is also the easiest to compromise.

- Something you have: The something you have factor refers to items such as smart cards or physical security tokens.

- Something you are: The something you are factor refers to using your biometric properties for the process of authentication. For example, using fingerprint or retina scans for authentication.

Identification and authentication are always used together as a single two-step process.

Providing an identity is the first step, and providing the authentication factor(s) is the second step. Without both, a subject cannot gain access to a system. Neither element alone is useful in terms of security.
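The two-step process can be sketched as follows. This is a minimal illustration, assuming a hypothetical in-memory user store (`USERS`) and hypothetical `register` and `login` helpers; the PBKDF2 iteration count is an arbitrary illustrative value, not a recommendation.

```python
import hashlib
import hmac
import os

# Hypothetical in-memory user store: username -> (salt, password hash)
USERS = {}


def _hash_password(password: str, salt: bytes) -> bytes:
    # PBKDF2 with SHA-256; the iteration count is an illustrative choice
    return hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, 100_000)


def register(username: str, password: str) -> None:
    """Store a salted hash of the password, never the password itself."""
    salt = os.urandom(16)
    USERS[username] = (salt, _hash_password(password, salt))


def login(username: str, password: str) -> bool:
    # Step 1: identification - the subject claims an identity
    record = USERS.get(username)
    if record is None:
        return False  # unknown identity, nothing to authenticate against
    # Step 2: authentication - the subject proves the claimed identity
    salt, stored_hash = record
    return hmac.compare_digest(_hash_password(password, salt), stored_hash)


register("alice", "correct horse battery staple")
print(login("alice", "correct horse battery staple"))  # valid identity and factor
print(login("alice", "wrong password"))                # valid identity, wrong factor
print(login("mallory", "anything"))                    # identity never established
```

Only the first call succeeds: the second fails at the authentication step and the third fails at the identification step, which mirrors the point that neither element alone grants access.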

Common attacks on authentication include:

- Brute force: A brute force attack involves trying all possible permutations and combinations of a particular character set in order to get the correct password

- Insufficient authentication: Single-factor authentication with a weak password policy makes applications and systems vulnerable to password attacks

- Weak password recovery validation: This includes insufficient validation of password recovery mechanisms, such as security questions, OTP, and so on
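To see why a weak password policy makes brute force practical, it helps to count how many candidates an attacker would have to try in the worst case. The sketch below assumes fixed-length passwords drawn uniformly from a known character set; the `keyspace` helper is a hypothetical name used only for illustration.

```python
import string


def keyspace(charset_size: int, length: int) -> int:
    """Number of candidate passwords of exactly `length` characters."""
    return charset_size ** length


digits = len(string.digits)                        # 10 characters
lowercase = len(string.ascii_lowercase)            # 26 characters
mixed = len(string.ascii_letters + string.digits)  # 62 characters

print(keyspace(digits, 4))     # 4-digit PIN
print(keyspace(lowercase, 8))  # 8 lowercase letters
print(keyspace(mixed, 8))      # 8 letters and digits
```

Each additional character multiplies the search space by the size of the character set, which is why length and character variety both matter in a password policy.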

Authorization

Once a subject has successfully authenticated, the next logical step is to get an authorized access to the resources assigned.

Upon successful authorization, an authenticated identity can request access to an object provided it has the necessary rights and privileges.

An access control matrix is one of the most common techniques used to evaluate and compare the subject, the object, and the intended activity. If the subject is authorized, then a specific action is allowed, and denied if the subject is unauthorized.

It is important to note that a subject who is identified and authenticated may not necessarily be granted rights and privileges to access anything and everything. The access privileges are granted based on the role of the subject and on a need-to-know basis. Unlike authorization, which can be granted at varying levels, identification and authentication are all-or-nothing aspects of access control: a subject is either identified and authenticated or it is not.

The following table shows a sample access control matrix:

| User / Resource | File 1 | File 2 |
| --- | --- | --- |
| User 1 | Read | Write |
| User 2 | - | Read |
| User 3 | Write | Write |

From the preceding sample access control matrix, we can conclude the following:

- User 1 cannot modify file 1

- User 2 can only read file 2 but not file 1

- User 3 can write to both file 1 and file 2
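The sample matrix can be expressed as a simple lookup structure. The following is a minimal sketch, assuming each cell is taken literally (so Write does not imply Read) and that anything not listed is denied; `ACCESS_MATRIX` and `is_allowed` are hypothetical names used for illustration.

```python
# Sample access control matrix: subject -> object -> set of permitted actions
ACCESS_MATRIX = {
    "User 1": {"File 1": {"read"}, "File 2": {"write"}},
    "User 2": {"File 2": {"read"}},
    "User 3": {"File 1": {"write"}, "File 2": {"write"}},
}


def is_allowed(subject: str, obj: str, action: str) -> bool:
    """Allow the action only if the matrix grants it explicitly (default deny)."""
    return action in ACCESS_MATRIX.get(subject, {}).get(obj, set())


print(is_allowed("User 1", "File 1", "read"))   # granted in the matrix
print(is_allowed("User 1", "File 1", "write"))  # not granted, so denied
print(is_allowed("User 2", "File 1", "read"))   # no entry for File 1, so denied
```

Defaulting to deny when a subject or object is missing keeps the check safe for users or files that were never added to the matrix.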

Common attacks on authorization include the following:

- Authorization creep: Authorization creep is a term used to describe that a user has intentionally or unintentionally been given more privileges than he actually requires

- Horizontal privilege escalation: Horizontal privilege escalation occurs when a user is able to bypass the authorization controls and is able to get the privileges of a user who is at the same level in the hierarchy

- Vertical privilege escalation: Vertical privilege escalation occurs when a user is able to bypass the authorization controls and is able to get the privileges of a user higher in the hierarchy

Auditing

Auditing, or monitoring, is the process through which a subject's actions could be tracked and/or recorded for the purpose of holding the subject accountable for their actions once authenticated on a system. Auditing can also help monitor and detect unauthorized or abnormal activities on a system. Auditing includes capturing and preserving activities and/or events of a subject and its objects as well as recording the activities and/or events of core system functions that maintain the operating environment and the security mechanisms.

The minimum fields that need to be captured for each event in an audit log are as follows:

- User ID

- Username

- Timestamp

- Event type (such as debug, access, security)

- Event details

- Source identifier (such as IP address)
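As an illustration, the fields listed above could be captured as one structured record per event. This is a minimal sketch; the field names, the `audit_record` helper, and the sample values are assumptions, and real systems typically rely on the native logging facilities of the operating system or application.

```python
import json
from datetime import datetime, timezone


def audit_record(user_id, username, event_type, details, source_ip):
    """Build one audit log entry containing the minimum fields listed above."""
    return {
        "user_id": user_id,
        "username": username,
        "timestamp": datetime.now(timezone.utc).isoformat(),  # UTC avoids timezone ambiguity
        "event_type": event_type,
        "event_details": details,
        "source": source_ip,
    }


# Hypothetical event: user alice reads a file from a documentation-range IP address
entry = audit_record(1001, "alice", "access", "Read File 1", "192.0.2.10")
print(json.dumps(entry))  # one JSON object per line is easy to ship and parse
```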

The audit trails created by capturing system events to logs can be used to assess the health and performance of a system. In case of a system failure, the root cause can be traced back using the event logs. Log files can also provide an audit trail for recreating the history of an event, backtracking an intrusion, or system failure. Most of the operating systems, applications, and services have some kind of native or default auditing function for at least providing bare-minimum events.

Common attacks on auditing include the following:

- Log tampering: This includes unauthorized modification of audit logs

- Unauthorized access to logs: An attacker can have unauthorized access to logs with an intent to extract sensitive information

- Denial of service through audit logs: An attacker can send a large number of garbage requests just with the intention to fill the logs and subsequently the disk space resulting in a denial of service attack

Accounting

Any organization can have a successful implementation of its security policy only if accountability is well maintained. Maintaining accountability can help in holding subjects accountable for all their actions. Any given system can be said to be effective in accountability based on its ability to track and prove a subject's identity.

Various mechanisms, such as auditing, authentication, authorization, and identification, help associate humans with the activities they perform.

Using a password as the only form of authentication creates a significant room for doubt and compromise. There are numerous easy ways of compromising passwords and that is why they are considered the least secure form of authentication. When multiple factors of authentication, such as a password, smart card, and fingerprint scan, are used in conjunction with one another, the possibility of identity theft or compromise reduces drastically.

Non-repudiation

Non-repudiation is an assurance that the subject of an activity or event cannot later deny that the event occurred. Non-repudiation prevents a subject from claiming not to have sent a message, not to have performed an action, or not to have been the cause of an event.

Various controls that can help achieve non-repudiation are as follows:

- Digital certificates

- Session identifiers

- Transaction logs

For example, a person could send a threatening email to his colleague and later simply deny the fact that he sent the email. This is a case of repudiation. However, had the email been digitally signed, the person wouldn't have had the chance to deny his act.

Vulnerability

In very simple terms, vulnerability is nothing but a weakness in a system or a weakness in the safeguard/countermeasure. If a vulnerability is successfully exploited, it could result in loss or damage to the target asset. Some common examples of vulnerability are as follows:

- Weak password set on a system

- An unpatched application running on a system

- Lack of input validation causing XSS

- Lack of input validation or parameterized queries causing SQL injection

- Antivirus signatures not updated

Vulnerabilities could exist at both the hardware and software level. A malware-infected BIOS is an example of hardware vulnerability while SQL injection is one of the most common software vulnerabilities.

Threats

Any activity or event that has the potential to cause an unwanted outcome can be considered a threat. A threat is any action that may intentionally or unintentionally cause damage, disruption, or complete loss of assets.

The severity of a threat could be determined based on its impact. A threat can be intentional or accidental as well (due to human error). It can be induced by people, organizations, hardware, software, or nature. Some of the common threat events are as follows:

- A possibility of a virus outbreak

- A power surge or failure

- Fire

- Earthquake

- Floods

- Typographical errors in critical financial transactions

Exposure

A threat agent may exploit the vulnerability and cause an asset loss. Being susceptible to such an asset loss is known as an exposure.

Exposure does not always imply that a threat is indeed occurring. It simply means that if a given system is vulnerable and a threat could exploit it, then there's a possibility that a potential exposure may occur.

Risk

A risk is the possibility or likelihood that a threat will exploit a vulnerability to cause harm to an asset.

Risk can be calculated with the following formula:

Risk = Likelihood * Impact

With this formula, it is evident that risk can be reduced either by reducing the likelihood (for example, by removing the vulnerability or deterring the threat agent) or by reducing the impact of a successful exploit.

When a risk is realized, a threat agent or a threat event has taken advantage of a vulnerability and caused harm to or disclosure of one or more assets. The whole purpose of security is to prevent risks from becoming realized by removing vulnerabilities and blocking threat agents and threat events from exposing assets. It's not possible to make any system completely risk free. However, by putting countermeasures in place, risk can be brought down to an acceptable level as per the organization's risk appetite.
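The risk formula can be applied with a simple qualitative scale. The sketch below assumes that likelihood and impact are each rated from 1 (low) to 5 (high); the scale, the `risk_score` helper, and the sample ratings are illustrative assumptions rather than a prescribed method.

```python
def risk_score(likelihood: int, impact: int) -> int:
    """Risk = Likelihood * Impact, each rated on a 1 (low) to 5 (high) scale."""
    for name, value in (("likelihood", likelihood), ("impact", impact)):
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be between 1 and 5, got {value}")
    return likelihood * impact


# Hypothetical ratings for an unpatched, internet-facing server
before = risk_score(likelihood=4, impact=5)
# Patching lowers the likelihood of exploitation; the impact is unchanged
after = risk_score(likelihood=2, impact=5)

print(before, after)  # 20 10
```

The countermeasure does not bring the score to zero; it lowers it, and whether the remaining score is acceptable depends on the organization's risk appetite.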

Safeguards

A safeguard, or countermeasure, is anything that mitigates or reduces vulnerability. Safeguards are the only means by which risk is mitigated or removed. It is important to remember that a safeguard, security control, or countermeasure may not always involve procuring a new product; effectively utilizing existing resources could also help produce safeguards.

The following are some examples of safeguards:

- Installing antivirus on all the systems

- Installing a network firewall

- Installing CCTVs and monitoring the premises

- Deploying security guards

- Installing temperature control systems and fire alarms

Attack vectors

An attack vector is nothing but a path or means by which an attacker can gain access to the target system. For compromising a system, there could be multiple attack vectors possible. The following are some of the examples of attack vectors:

- Attackers gained access to sensitive data in a database by exploiting SQL injection vulnerability in the application

- Attackers gained access to sensitive data by gaining physical access to the database system

- Attackers deployed malware on the target systems by exploiting the SMB vulnerability

- Attackers gained administrator-level access by performing a brute force attack on the system credentials

To sum up the terms we have learned, we can say that assets are endangered by threats that exploit vulnerabilities resulting in exposure, which is a risk that could be mitigated using safeguards.

Understanding the need for security assessments

Many organizations invest substantial amounts of time and cost in designing and implementing various security controls. Some even deploy multi-layered controls following the principle of defense-in-depth. Implementing strong security controls is certainly required; however, it's equally important to test if the controls deployed are indeed working as expected.

For example, an organization may choose to deploy the latest best-in-class firewall to protect its perimeter. Suppose the firewall administrator misconfigures the rules. However good the firewall may be, if it's not configured properly, it's still going to allow bad traffic in. In this case, thorough testing and/or review of the firewall rules would have helped identify and eliminate unwanted rules and retain the required ones.

Whenever a new system is developed, it strictly and vigorously undergoes quality assurance (QA) testing. This is to ensure that the newly developed system is functioning correctly as per the business requirements and specifications. On parallel lines, testing of security controls is also vital to ensure they are functioning as specified. Security tests could be of different types, as discussed in the next section.

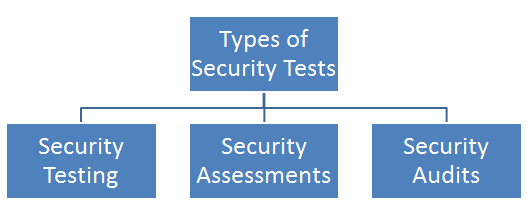

Types of security tests

Security tests could be categorized in multiple ways based on the context and the purpose they serve. The following diagram shows a high-level classification of the types of security tests:

Security testing

The primary objective of security tests is to ensure that a control is functioning properly. The tests could be a combination of automated scans, penetration tests using tools, and manual attempts to reveal security flaws. It's important to note that security testing isn't a one-time activity and should be performed at regular intervals. When planning for testing of security controls, the following factors should be considered:

- Resources (hardware, software, and skilled manpower) available for security testing

- Criticality rating for the systems and applications protected by the controls

- The probability of a technical failure of the mechanism implementing the control

- The probability of a misconfiguration of a control that would endanger the security

- Any other changes, upgrades, or modifications in the technical environment that may affect the control performance

- Difficulty and time required for testing a control

- Impact of the test on regular business operations

Only after determining these factors can a comprehensive assessment and testing strategy be designed and validated. This strategy may include regular automated tests complemented by manual tests. For example, an e-commerce platform may be subjected to automated vulnerability scanning on a weekly basis with immediate alert notifications to administrators when the scan detects a new vulnerability. The automated scan requires no intervention from administrators once it's configured and triggered, so it is easy to scan frequently.

The security team may choose to complement automated scans with a manual penetration test performed by an internal or external consultant for a fixed fee. Such tests can be performed on a quarterly, biannual, or annual basis to optimize costs and efforts.

Unfortunately, many security testing programs begin on a haphazard and ad hoc basis by simply pointing fancy new tools at whatever systems are available in the network. Testing programs should be thoughtfully designed and include rigorous, routine testing of systems using a risk-based approach.

Certainly, security tests cannot be termed complete unless the results are carefully reviewed. A tool may produce a lot of false positives which could be eliminated only by manual reviews. The manual review of a security test report also helps in determining the severity of the vulnerability in context to the target environment.

For example, an automated scanning tool may detect cross-site scripting in a publicly hosted e-commerce application as well as in a simple help-and-support intranet portal. In this case, although the vulnerability is the same in both applications, the former carries more risk as it is internet-facing and has many more users than the latter.

Vulnerability assessment versus penetration testing

Vulnerability assessment and penetration testing are quite often used interchangeably. However, both are different with respect to the purpose they serve. To understand the difference between the two terms, let's consider a real-world example.

There is a bank that is located on the outskirts of a city and in quite a secluded area. There is a gang of robbers who intend to rob this bank. The robbers start planning on how they could execute their plan. Some of them visit the bank dressed as normal customers and note a few things:

- The bank has only one security guard who is unarmed

- The bank has two entrances and three exits

- There are no CCTV cameras installed

- The door to the locker compartment appears to be weak

With these findings, the robbers have just performed a vulnerability assessment. Whether or not these vulnerabilities could be exploited in reality to succeed with the robbery plan would become evident only when they actually rob the bank. If they rob the bank and succeed in exploiting the vulnerabilities, they would have performed a penetration test.

So, in a nutshell, checking whether a system is vulnerable is vulnerability assessment, whereas actually exploiting the vulnerable system is penetration testing. An organization may choose to do either or both as per their requirement. However, it's worth noting that a penetration test cannot be successful if a comprehensive vulnerability assessment hasn't been performed first.

Security assessment

A security assessment is a detailed review of the security of a system, application, or other tested environment. During a security assessment, a trained professional conducts a risk assessment that uncovers potential vulnerabilities in the target environment that may allow a compromise and makes suggestions for mitigation, as required.

Like security testing, security assessments also normally include the use of testing tools but go beyond automated scanning and manual penetration tests. They also include a comprehensive review of the surrounding threat environment, present and future probable risks, and the asset value of the target environment.

The main output of a security assessment is generally a detailed assessment report that is intended for an organization's top management and presents the results of the assessment in nontechnical language. It usually concludes with precise recommendations and suggestions for improving the security posture of the target environment.

Security audit

A security audit often employs many of the same techniques followed during security assessments but must be performed by independent auditors. An organization's internal security staff perform routine security testing and assessments. However, security audits differ from this approach. Security assessments and testing are internal to the organization and are intended to find potential security gaps.

Audits are similar to assessments but are conducted with the intent of demonstrating the effectiveness of security controls to a relevant third party. Audits ensure that there's no conflict of interest in testing the control effectiveness. Hence, audits tend to provide a completely unbiased view of the security posture.

The security assessment reports and the audit reports might look similar; however, they are both meant for different audiences. The audience for the audit report mainly includes higher management, the board of directors, government authorities, and any other relevant stakeholders.

There are two main types of audits:

- Internal audit: The organization's internal audit team performs the internal audit. The internal audit reports are intended for the organization's internal audience. It is ensured that the internal audit team has a completely independent reporting line to avoid conflicts of interest with the business processes they assess.

- External audit: An external audit is conducted by a trusted external auditing firm. External audits carry a higher degree of external validity since the external auditors virtually don't have any conflict of interest with the organization under assessment. There are many firms that perform external audits, but most people place the highest credibility with the so-called big four audit firms:

- Ernst & Young

- Deloitte & Touche

- PricewaterhouseCoopers

- KPMG

Audits performed by these firms are generally considered acceptable by most investors and governing bodies and regulators.

Business drivers for vulnerability management

To justify investment in implementing any control, a business driver is absolutely essential. A business driver defines why a particular control needs to be implemented. Some of the typical business drivers for justifying the vulnerability management program are described in the following sections.

Regulatory compliance

For more than a decade, almost all businesses have become highly dependent on the use of technology. Ranging from financial institutions to healthcare organizations, there has been a large dependency on the use of digital systems. This has, in turn, triggered the industry regulators to put forward mandatory requirements that organizations need to comply with. Noncompliance with any of the requirements specified by the regulator can attract heavy fines and bans.

The following are some of the regulations and standards that require organizations to perform vulnerability assessments:

- Sarbanes-Oxley (SOX)

- Statement on Standards for Attestation Engagements 16 (SSAE 16/SOC 1, https://www.ssae-16.com/soc-1/)

- Service Organization Controls (SOC) 2/3

- Payment Card Industry Data Security Standard (PCI DSS)

- Health Insurance Portability and Accountability Act (HIPAA)

- Gramm-Leach-Bliley Act (GLBA)

- Federal Information System Controls Audit Manual (FISCAM)

Satisfying customer demands

Today's customers have become more selective in terms of what offerings they get from the technology service provider. A certain customer might be operating in one part of the world with certain regulations that demand vulnerability assessments. The technology service provider might be in another geographical zone but must perform the vulnerability assessment to ensure the customer being served is compliant. So, customers can explicitly demand the technology service provider to conduct vulnerability assessments.

Response to some fraud/incident

Organizations around the globe are constantly subject to various types of attacks originating from different locations. Some of these attacks succeed and cause damage to the organization. Based on the historical experience of internal and/or external fraud/attacks, an organization might choose to implement a complete vulnerability management program.

For example, the WannaCry ransomware, which spread like wildfire, exploited a vulnerability in the SMB protocol implementation of Windows systems. This attack likely triggered the implementation of a vulnerability management program across many affected organizations.

Gaining a competitive edge

Let's consider a scenario wherein there are two technology vendors selling a similar e-commerce platform. One vendor has an extremely robust and documented vulnerability management program that makes their product inherently resilient against common attacks. The second vendor has a very good product but no vulnerability management program. A wise customer would likely choose the first vendor's product, as it has been developed in line with a strong vulnerability management process.

Safeguarding/protecting critical infrastructures

This is the most important of all the previous business drivers. An organization may simply proactively choose to implement a vulnerability management program, irrespective of whether it has to comply with any regulation or satisfy any customer demand. The proactive approach works better in security than the reactive approach.

For example, an organization might hold payment details and personal information of its customers and not want to put this data at risk of unauthorized disclosure. A formal vulnerability management program would help the organization identify all probable risks and put controls in place to mitigate them.

Calculating ROIs

Designing and implementing security controls is often seen as a cost overhead. Justifying the cost and effort of implementing certain security controls to management can often be challenging. This is when one can think of estimating the return-on-investment for a vulnerability management program. This can be quite subjective and based on both qualitative and quantitative analysis.

While the return-on-investment calculation can get complicated depending on the complexity of the environment, let's get started with a simple formula and example:

Return-on-investment (ROI) = (Gain from Investment – Cost of Investment) * 100/ Cost of Investment

For a simplified understanding, let's consider there are 10 systems within an organization that need to be under the purview of the vulnerability management program. All these 10 systems contain sensitive business data and if they are attacked, the organization could suffer a loss of $75,000 along with reputation loss. Now the organization can design, implement, and monitor a vulnerability management program by utilizing resources worth $25,000. So, the ROI would be as follows:

Return-on-investment (ROI) = (75,000 – 25,000) * 100/ 25,000 = 200%

In this case, the ROI of implementing the vulnerability management program is 200%, which is indeed quite a good justifier to senior management for approval.
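The calculation above can be sketched as a small Python function. The function name `roi_percent` and the variable names are illustrative assumptions; the figures are the ones from the example, with the avoided loss of $75,000 treated as the gain from the investment.

```python
def roi_percent(gain: float, cost: float) -> float:
    """Return ROI as a percentage: (gain - cost) * 100 / cost."""
    if cost <= 0:
        raise ValueError("cost of investment must be positive")
    return (gain - cost) * 100 / cost


# Figures from the example: the avoided loss is treated as the gain.
avoided_loss = 75_000
program_cost = 25_000

print(f"ROI: {roi_percent(avoided_loss, program_cost):.0f}%")  # ROI: 200%
```

Note that this sketch ignores reputation loss, which is hard to quantify and would typically be handled in the qualitative part of the analysis.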

The preceding example was a simplified one meant for understanding the ROI concept. However, practically, organizations might have to consider many more factors while calculating the ROI for the vulnerability management program, including:

- What would be the scope of the program?

- How many resources (head-count) would be required to design, implement, and monitor the program?

- Are any commercial tools required to be procured as part of this program?

- Are any external resources required (contract resources) during any of the phases of the program?

- Would it be feasible and cost-effective to completely outsource the program to a trusted third-party vendor?
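To illustrate how these factors feed into the calculation, the following is a minimal sketch that splits the program cost into head-count, tools, and contract resources, and compares an in-house program with an outsourced one. The cost breakdown and the outsourcing fee are hypothetical figures chosen for illustration (the in-house total matches the $25,000 from the earlier example); the class and function names are assumptions as well.

```python
from dataclasses import dataclass


@dataclass
class ProgramCosts:
    """Hypothetical cost breakdown for an in-house program."""
    staff: float        # head-count to design, implement, and monitor
    tools: float        # commercial tools to be procured
    contractors: float  # external (contract) resources

    def total(self) -> float:
        return self.staff + self.tools + self.contractors


def roi_percent(gain: float, cost: float) -> float:
    """ROI = (gain - cost) * 100 / cost."""
    return (gain - cost) * 100 / cost


avoided_loss = 75_000  # potential loss from the earlier example

# Illustrative breakdown only; it adds up to the $25,000 used earlier.
in_house = ProgramCosts(staff=15_000, tools=7_000, contractors=3_000)
outsourced_fee = 30_000  # hypothetical third-party vendor fee

roi_in_house = roi_percent(avoided_loss, in_house.total())
roi_outsourced = roi_percent(avoided_loss, outsourced_fee)

print(f"In-house ROI:   {roi_in_house:.0f}%")
print(f"Outsourced ROI: {roi_outsourced:.0f}%")
```

With these assumed numbers the in-house option shows the higher ROI, but the comparison could easily flip with different staffing or licensing costs, which is why each factor needs to be estimated for the specific environment.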

Setting up the context

Change is never easy or smooth. Any kind of change within an organization typically requires extensive planning, scoping, budgeting, and a series of approvals. Implementing a complete vulnerability management program in an organization with no prior security experience can be very challenging. There is likely to be resistance from many of the business units, along with questions about the sustainability of the program. The vulnerability management program can never be successful unless it is deeply ingrained in the organization's culture. Like any other major change, this could be achieved using two different approaches, as described in the following sections.

Bottom-up

The bottom-up approach is where the ground-level staff initiate action to implement the new initiative. Speaking in the context of the vulnerability management program, the action flow in a bottom-up approach would look something similar to the following:

- A junior team member of the system administrator team identifies some vulnerability in one of the systems

- He reports it to his supervisor and uses a freeware tool to scan other systems for similar vulnerabilities

- He consolidates all the vulnerabilities found and reports them to his supervisor

- The supervisor then reports the vulnerabilities to higher management

- The higher management is busy with other activities and therefore fails to prioritize the vulnerability remediation

- The supervisor of the system administrator team tries to fix a few of the vulnerabilities with the help of the limited resources he has

- A set of systems remains vulnerable because no one is particularly interested in fixing them

What we can notice in the preceding scenario is that all the activities were unplanned and ad hoc. The junior team member was doing a vulnerability assessment on his own initiative without much support from higher management. Such an approach would never succeed in the long run.

Top-down

Unlike the bottom-up approach, where the activities are initiated by the ground-level staff, the top-down approach works much better as it is initiated, directed, and governed by the top management. For implementing a vulnerability management program using a top-down approach, the action flow would look like the following:

- The top management decides to implement a vulnerability management program

- The management calculates the ROI and checks the feasibility

- The management then prepares a policy, procedure, guideline, and standard for the vulnerability management program

- The management allocates a budget and resources for the implementation and monitoring of the program

- The mid-management and the ground-level staff then follow the policy and procedure to implement the program

- The program is monitored and metrics are shared with top management

The top-down approach for implementing a vulnerability management program as stated in the preceding scenario has a much higher probability of success since it's initiated and driven by top management.

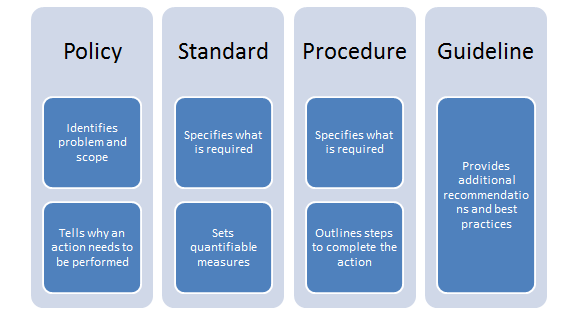

Policy versus procedure versus standard versus guideline

From a governance perspective, it is important to understand the difference between a policy, procedure, standard, and guideline. Note the following diagram:

- Policy: A policy is always the apex among the other documents. A policy is a high-level statement that reflects the intent and direction from the top management. Once published, it is mandatory for everyone within the organization to abide by the policy. Examples of a policy are internet usage policy, email policy, and so on.

- Standard: A standard defines an acceptable level of quality. A standard can be used as a reference document for implementing a policy. An example of a standard is ISO/IEC 27001.

- Procedure: A procedure is a series of detailed steps to be followed for accomplishing a particular task. It is often implemented or referred to in the form of a standard operating procedure (SOP). An example of a procedure is a user access control procedure.

- Guideline: A guideline contains additional recommendations or suggestions that are not mandatory to follow. They are best practices that may or may not be followed depending on the context of the situation. An example of a guideline is the Windows security hardening guideline.

Vulnerability assessment policy template

The following is a sample vulnerability assessment policy template that outlines various aspects of vulnerability assessment at a policy level:

| | Name | Title |

| Created By | | |

| Reviewed By | | |

| Approved By | | |

Overview

This section is a high-level overview of what vulnerability management is all about.

A vulnerability assessment is a process of identifying and quantifying security vulnerabilities within a given environment. It is an assessment of information security posture, indicating potential weaknesses as well as providing the appropriate mitigation procedures wherever required to either eliminate those weaknesses or reduce them to an acceptable level of risk.

Generally vulnerability assessment follows these steps:

- Create an inventory of assets and resources in a system

- Assign quantifiable value and importance to the resources

- Identify the security vulnerabilities or potential threats to each identified resource

- Prioritize and then mitigate or eliminate the most serious vulnerabilities for the most valuable resources
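The steps above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the asset names, the 1 to 5 scales for asset value and severity, and the scoring rule (value multiplied by severity) are all hypothetical choices, not part of the policy template.

```python
from dataclasses import dataclass


@dataclass
class Asset:
    name: str
    value: int             # assumed scale: 1 (low) to 5 (business critical)
    vulnerabilities: list  # (title, severity) pairs, severity 1 to 5


def prioritize(assets):
    """Rank findings so serious issues on valuable assets come first.

    The score (asset value * severity) is an illustrative rule only.
    """
    findings = []
    for asset in assets:
        for title, severity in asset.vulnerabilities:
            findings.append((asset.value * severity, asset.name, title))
    return sorted(findings, reverse=True)


# Steps 1 to 3: inventory, assigned values, and identified vulnerabilities
# (all entries are made-up examples).
inventory = [
    Asset("payment-db", 5, [("outdated TLS configuration", 3),
                            ("default admin password", 5)]),
    Asset("intranet-wiki", 2, [("missing security patch", 4)]),
]

# Step 4: prioritize before mitigating.
for score, asset_name, title in prioritize(inventory):
    print(f"{score:>2}  {asset_name}: {title}")
```

In practice, an organization would usually replace the simple multiplication with its own risk-rating scheme.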

Purpose

This section is to state the purpose and intent of writing the policy.

The purpose of this policy is to provide a standardized approach towards conducting security reviews. The policy also identifies roles and responsibilities during the course of the exercise until the closure of identified vulnerabilities.

Scope

This section defines the scope for which the policy would be applicable; it could include an intranet, extranet, or only a part of an organization's infrastructure.

Vulnerability assessments can be conducted on any asset, product, or service within <Company Name>.

Policy

The team under the authority of <Designation> would be accountable for the development, implementation, and execution of the vulnerability assessment process.

All the network assets within <Company Name>'s network would comprehensively undergo regular or continuous vulnerability assessment scans.

A centralized vulnerability assessment system will be engaged. Usage of any other tools to scan or verify vulnerabilities must be approved, in writing, by <Designation>.

All the personnel and business units within <Company Name> are expected to cooperate with any vulnerability assessment being performed on systems under their ownership.

All the personnel and business units within <Company Name> are also expected to cooperate with the team in the development and implementation of a remediation plan.

<Designation> may engage third-party security companies to perform the vulnerability assessment on critical assets of the company.

Vulnerability assessment process

This section provides a pointer to an external procedure document that details the vulnerability assessment process.

For additional information, go to the vulnerability assessment process.

Exceptions

It’s quite possible that, for some valid justifiable reason, some systems would need to be kept out of the scope of this policy. This section instructs on the process to be followed for getting exceptions from this policy.

Any exceptions to this policy, such as exemption from the vulnerability assessment process, must be approved via the security exception process. Refer to the security exception policy for more details.

Enforcement

This section is to highlight the impact if this policy is violated.

Any <Company Name> personnel found to have violated this policy may be subject to disciplinary action, up to and including termination of employment and potential legal action.

Related documents

This section is for providing references to any other related policies, procedures, or guidelines within the organization.

The following documents are referenced by this policy:

- Vulnerability assessment procedure

- Security exception policy

Revision history

This section contains details about who created the policy, timestamps, and the revisions.

| Date | Revision number | Revision details | Revised by |

| MM/DD/YYYY | Rev #1 | Description of change | <Name/Title> |

| MM/DD/YYYY | Rev #2 | Description of change | <Name/Title> |

Glossary

This section contains definitions of all key terms used throughout the policy.

Penetration testing standards

Penetration testing is not just a single activity, but a complete process. There are several standards available that outline steps to be followed during a penetration test. This section aims at introducing the penetration testing lifecycle in general and some of the industry-recognized penetration testing standards.

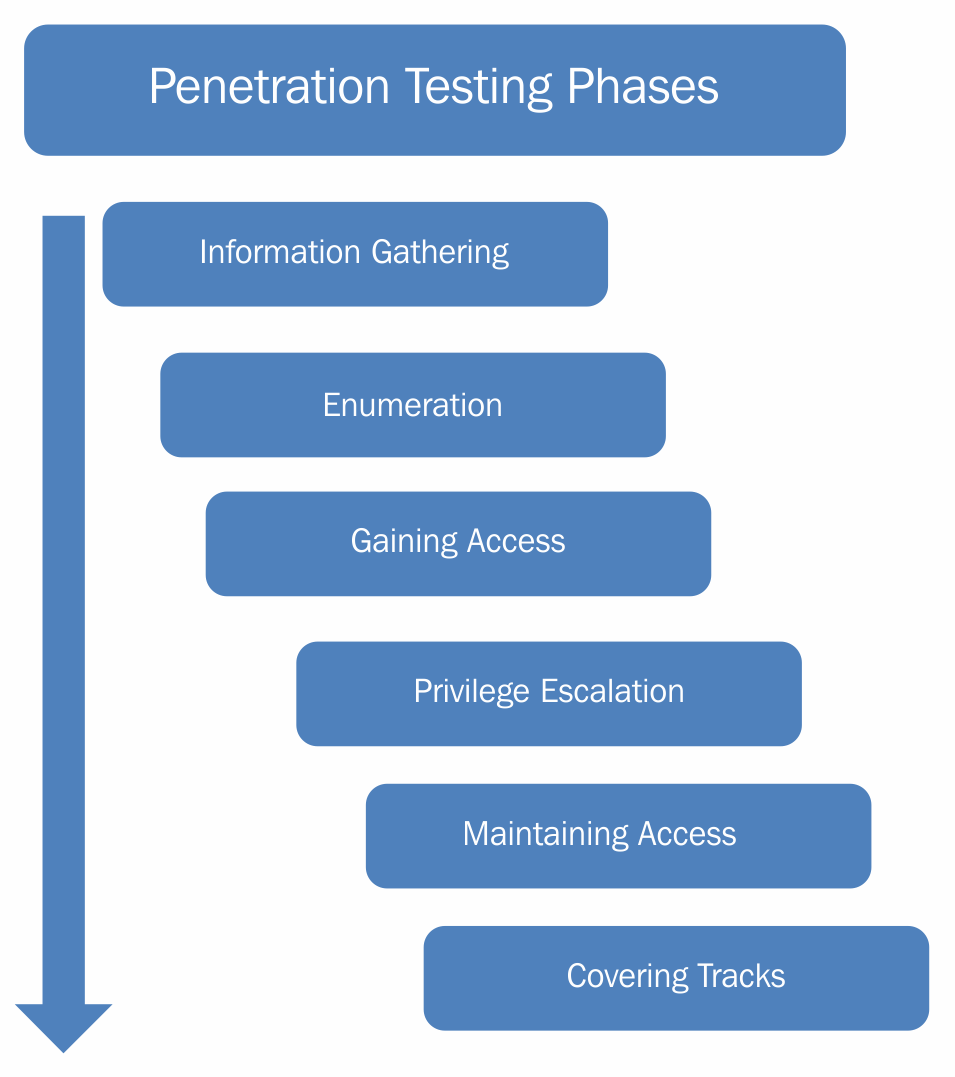

Penetration testing lifecycle

Penetration testing is not just about using random tools to scan the targets for vulnerabilities, but a detail-oriented process involving multiple phases. The following diagram shows various stages of the penetration testing lifecycle:

- Information gathering phase: The information gathering phase is the first and most important phase of the penetration testing lifecycle. Before we can explore vulnerabilities on the target system, it is crucial to gather information about it. The more information you gather, the greater the possibility of successful penetration. Without properly knowing the target system, it's not possible to precisely target the vulnerabilities. Information gathering can be of two types:

  - Passive information gathering: In passive information gathering, no direct contact with the target is established. For example, information about a target could be obtained from publicly available sources, such as search engines.

  - Active information gathering: In active information gathering, direct contact with the target is established in order to probe for information. For example, a ping scan to detect live hosts in a network would actually send packets to each of the target hosts.

- Enumeration: Once the basic information about the target is available, the next phase is to enumerate the information for more details. For example, during the information gathering phase, we might have obtained a list of live IPs in a network. Now we need to enumerate all these live IPs and possibly get the following information:

  - The operating system running on the target IPs

  - Services running on each of the target IPs

  - Exact versions of services discovered

  - User accounts

  - File shares, and so on

- Gaining access: Once the information gathering and enumeration have been performed thoroughly, we will have a detailed blueprint of our target system/network. Based on this blueprint, we can now plan to launch various attacks to compromise and gain access to the target system.

- Privilege escalation: We may exploit a particular vulnerability in the target system and gain access to it. However, it's quite possible that the access comes with limited privileges. We may want to have full administrator/root-level access. Various privilege escalation techniques could be employed to elevate the access from a normal user to that of an administrator/root.

- Maintaining access: By now, we might have gained high-privilege access to our target system. However, that access might last only for a limited period. We would not want to repeat all the effort if we need to gain the same access to the target system again. Hence, using various techniques, we can make our access to the compromised system persistent.

- Covering tracks: After all the penetration has been completed and documented, we might want to clear the tracks and traces, including tools and backdoors used in the compromise. Depending on the penetration testing agreement, this phase may or may not be required.
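The details collected during the enumeration phase listed above (operating system, services and versions, user accounts, and file shares) are easier to work with in later phases if they are recorded in a consistent structure. The following is a minimal sketch of such a record; the `HostRecord` class, the `hosts_running` helper, and the sample data are hypothetical, and the IP addresses come from the range reserved for documentation.

```python
from dataclasses import dataclass, field


@dataclass
class HostRecord:
    """What enumeration might tell us about one live IP."""
    ip: str
    operating_system: str = "unknown"
    services: dict = field(default_factory=dict)  # port -> (name, version)
    user_accounts: list = field(default_factory=list)
    file_shares: list = field(default_factory=list)


def hosts_running(records, service_name):
    """Return the IPs of hosts that expose the given service."""
    return [
        record.ip
        for record in records
        if any(name == service_name for name, _ in record.services.values())
    ]


# Made-up enumeration results for two hosts.
records = [
    HostRecord(
        ip="192.0.2.10",
        operating_system="Windows Server",
        services={22: ("ssh", "7.4"), 445: ("smb", "1.0")},
        user_accounts=["administrator", "backup"],
        file_shares=["finance"],
    ),
    HostRecord(ip="192.0.2.11", services={80: ("http", "2.4")}),
]

print(hosts_running(records, "smb"))  # ['192.0.2.10']
```

A structure like this makes it straightforward to shortlist targets for the gaining access phase, for example all hosts exposing a particular service.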

Industry standards

When it comes to the implementation of security controls, we can make use of several well-defined and proven industry standards. These standards and frameworks provide a baseline that can be tailored to suit the organization's specific needs. Some of the industry standards are discussed in the following section.

Open Web Application Security Project testing guide

OWASP is an acronym for Open Web Application Security Project. It is a community project that frequently publishes the top 10 application risks from an awareness perspective. The project establishes a strong foundation to integrate security throughout all the phases of SDLC.

The OWASP Top 10 project essentially categorizes application security risks by assessing the top attack vectors and security weaknesses and their relation to technical and business impacts. OWASP also provides specific instructions on how to identify, verify, and remediate each of the vulnerabilities in an application.

Though the OWASP Top 10 project focuses only on the common application vulnerabilities, it does provide extra guidelines exclusively for developers and auditors for effectively managing the security of web applications. These guides can be found at the following locations:

- Latest testing guide: https://www.owasp.org/index.php/OWASP_Testing_Guide_v4_Table_of_Contents

- Developer's guide: www.owasp.org/index.php/Guide

- Secure code review guide: www.owasp.org/index.php/Category:OWASP_Code_Review_Project

The OWASP top 10 list gets revised on a regular basis. The latest top 10 list can be found at: https://www.owasp.org/index.php/Top_10_2017-Top_10.

Benefits of the framework

The following are the key features and benefits of OWASP:

- When an application is tested against the OWASP top 10, it ensures that the bare minimum security requirements have been met and the application is resilient against most common web attacks.

- The OWASP community has developed many security tools and utilities for performing automated and manual application tests. Some of the most useful tools are WebScarab, Wapiti, CSRF Tester, JBroFuzz, and SQLiX.

- OWASP has developed a testing guide that provides technology-specific or vendor-specific testing guidelines; for example, the approach for testing Oracle is different from that for MySQL. This helps the tester/auditor choose the best-suited procedure for testing the target system.

- It helps design and implement security controls during all stages of development, ensuring that the end product is inherently secure and robust.

- OWASP has industry-wide visibility and acceptance. The OWASP top 10 could also be mapped to other web application security industry standards.

Penetration testing execution standard

The penetration testing execution standard (PTES) was created by some of the brightest minds and definitive experts in the penetration testing industry. It consists of seven phases of penetration testing and can be used to perform an effective penetration test on any environment. The details of the methodology can be found at: http://www.pentest-standard.org/index.php/Main_Page.

The seven stages of penetration testing that are detailed by this standard are as follows (source: www.pentest-standard.org):

- Pre-engagement interactions

- Intelligence gathering

- Threat modeling

- Vulnerability analysis

- Exploitation

- Post-exploitation

- Reporting

Each of these stages is provided in detail on the PTES site along with specific mind maps that detail the steps required for each phase. This allows for the customization of the PTES standard to match the testing requirements of the environments that are being tested. More details about each step can be accessed by simply clicking on the item in the mind map.
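As a simple illustration, the seven stages can be tracked as an ordered checklist for an engagement. The `Engagement` class below is a hypothetical sketch, and enforcing a strict stage order is a simplifying assumption made for this example rather than a requirement stated by the standard.

```python
# The seven PTES stages, in the order listed by the standard.
PTES_STAGES = [
    "Pre-engagement interactions",
    "Intelligence gathering",
    "Threat modeling",
    "Vulnerability analysis",
    "Exploitation",
    "Post-exploitation",
    "Reporting",
]


class Engagement:
    """Tracks which stages of a test have been completed (illustrative)."""

    def __init__(self):
        self.completed = []

    def next_stage(self):
        """Return the next pending stage, or None when all are done."""
        if len(self.completed) < len(PTES_STAGES):
            return PTES_STAGES[len(self.completed)]
        return None

    def complete(self, stage):
        # Assumption for this sketch: stages are completed strictly in order.
        if stage != self.next_stage():
            raise ValueError(f"expected {self.next_stage()!r}, got {stage!r}")
        self.completed.append(stage)


engagement = Engagement()
engagement.complete("Pre-engagement interactions")
print(engagement.next_stage())  # Intelligence gathering
```

A real engagement may revisit earlier stages, so a production tracker would likely need a looser model than this one.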

Benefits of the framework

The following are the key features and benefits of the PTES:

- It is a very thorough penetration testing framework that covers the technical as well as operational aspects of a penetration test, such as scope creep, reporting, and safeguarding the interests and rights of a penetration tester

- It has detailed instructions on how to perform many of the tasks that are required to accurately test the security posture of an environment

- It is put together for penetration testers by experienced penetration testing experts who perform these tasks on a daily basis

- It is inclusive of the most commonly found technologies as well as ones that are not so common

- It is simple to understand and can be easily adapted for security testing needs

Summary

In this chapter, we became familiar with some absolute security basics and some of the essential governance concepts for building a vulnerability management program. In the next chapter, we'll learn how to set up an environment for performing vulnerability assessments.

Exercises

- Explore how to calculate ROI for security controls

- Become familiar with the PTES standard