What’s Wrong with Layers?

Chances are that you have developed a layered (web) application in the past. You might even be doing it in your current project right now.

Thinking in layers has been drilled into us in computer science classes, tutorials, and best practices. It has even been taught in books.1

1 Layers as a pattern are, for example, taught in Software Architecture Patterns by Mark Richards, O'Reilly, 2015.

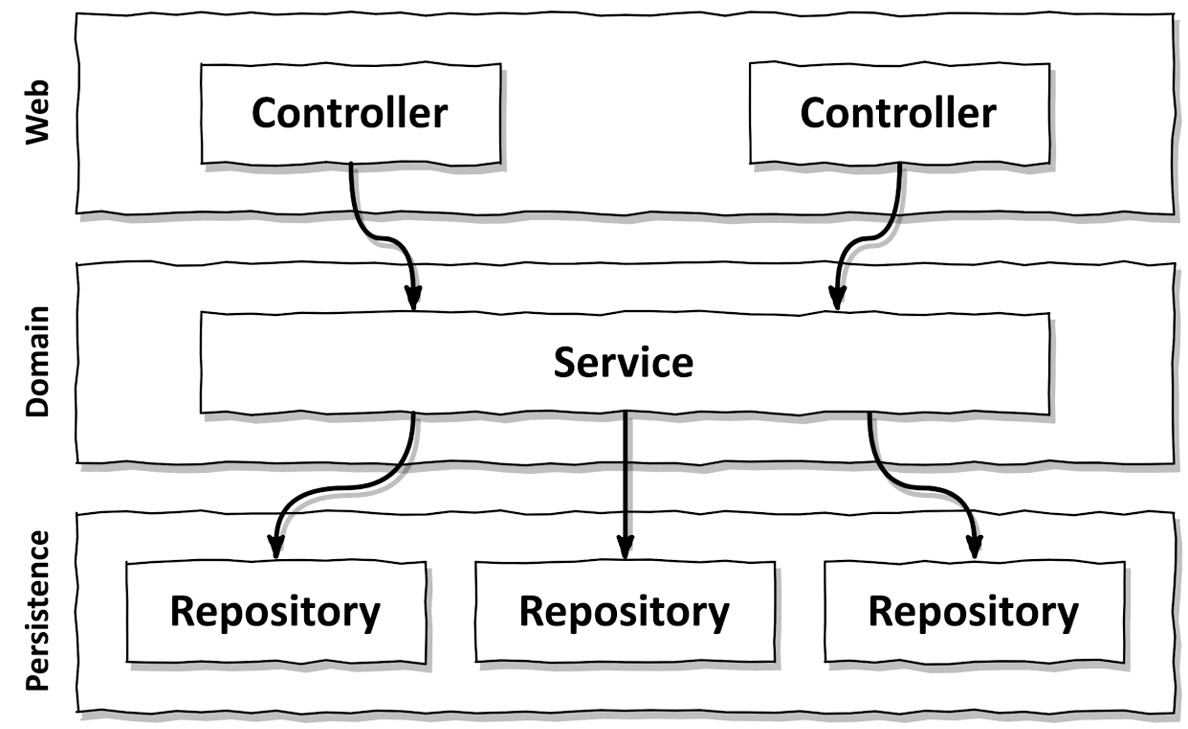

Figure 2.1 – A conventional web application architecture consists of a web layer, a domain layer, and a persistence layer

Figure 2.1 shows a high-level view of the very common three-layer architecture. We have a web layer that receives requests and routes them to a service in the domain layer.2 The service does some business logic and calls components from the persistence layer to query for or modify the current state of our domain entities in the database.

2 Domain versus business: in this book, I use the terms “domain” and “business” synonymously. The domain layer or business layer is the place in the code that solves the business problems, as opposed to code that solves technical problems, like persisting things in a database or processing web requests.

You know what? Layers are a solid architecture pattern! If we get them right, we’re able to build domain logic that is independent of the web and persistence layers. We can switch out the web or persistence technologies without affecting our domain logic, if the need arises. We can also add new features without affecting existing features.

With a good layered architecture, we’re keeping our options open and are able to quickly adapt to changing requirements and external factors (such as our database vendor doubling their prices overnight). A good layered architecture is maintainable.

So, what’s wrong with layers?

In my experience, a layered architecture is very vulnerable to changes, which makes it hard to maintain. It allows bad dependencies to creep in and make the software increasingly hard to change over time. Layers don’t provide enough guardrails to keep the architecture on track. We need to rely too much on human discipline and diligence to keep it maintainable.

In the following sections, I’ll tell you why.

They promote database-driven design

By its very definition, the foundation of a conventional layered architecture is the database. The web layer depends on the domain layer, which in turn depends on the persistence layer and thus the database. Everything builds on top of the persistence layer. This is problematic for several reasons.

Let’s take a step back and think about what we’re trying to achieve with almost any application we’re building. We’re typically trying to create a model of the rules or “policies” that govern the business in order to make it easier for the users to interact with them.

We’re primarily trying to model behavior, not the state. Yes, the state is an important part of any application, but the behavior is what changes the state and thus drives the business!

So, why are we making the database the foundation of our architecture and not the domain logic?

Think back to the last use cases you implemented in any application. Did you start by implementing the domain logic or the persistence layer? Most likely, you thought about what the database structure would look like and only then moved on to implementing the domain logic on top of it.

This makes sense in a conventional layered architecture since we’re going with the natural flow of dependencies. But it makes absolutely no sense from a business point of view! We should build the domain logic before building anything else! We want to find out whether we have understood the business rules correctly. And only once we know we’re building the right domain logic should we move on to build a persistence and web layer around it.

A driving force in such a database-centric architecture is the use of object-relational mapping (ORM) frameworks. Don’t get me wrong, I love those frameworks and work with them regularly. But if we combine an ORM framework with a layered architecture, we’re easily tempted to mix business rules with persistence aspects.

Figure 2.2 – Using the database entities in the domain layer leads to strong coupling with the persistence layer

Usually, we have ORM-managed entities as part of the persistence layer, as shown in Figure 2.2. Since a layer may access the layers below it, the domain layer is allowed to access those entities. And if it’s allowed to use them, it will use them at some point.

This creates a strong coupling between the domain layer and the persistence layer. Our business services use the persistence model as their business model and have to deal not only with the domain logic but also with eager versus lazy loading, database transactions, flushing caches, and similar housekeeping tasks.3

3 In his seminal book Refactoring (Pearson, 2018), Martin Fowler calls this symptom “divergent change”: a single module has to change in different ways for different, unrelated reasons (here, for business rules and for persistence concerns). This is a code smell that should trigger a refactoring.

The persistence code is virtually fused into the domain code and thus it’s hard to change one without the other. That’s the opposite of being flexible and keeping options open, which should be the goal of our architecture.
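To make this coupling concrete, here is a minimal sketch in plain Java. All names (`AccountEntity`, `AccountBalanceService`, `loadActivities`) are hypothetical, and the lazy loading is simulated by hand so that the example runs without an ORM framework; a real ORM-managed entity would look different.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical persistence-layer entity. In a real project this would be an
// ORM-managed class; a plain Java stand-in keeps the sketch self-contained.
class AccountEntity {
    final long id;
    private final List<Long> storedActivityAmounts; // what "the database" holds
    private List<Long> activityAmounts;             // null until loaded lazily

    AccountEntity(long id, List<Long> storedActivityAmounts) {
        this.id = id;
        this.storedActivityAmounts = new ArrayList<>(storedActivityAmounts);
    }

    // Simulates the ORM fetching a lazy association on demand.
    void loadActivities() {
        activityAmounts = new ArrayList<>(storedActivityAmounts);
    }

    List<Long> getActivityAmounts() {
        if (activityAmounts == null) {
            throw new IllegalStateException("activities not loaded");
        }
        return activityAmounts;
    }
}

// Domain-layer service that uses the persistence entity as its business model.
class AccountBalanceService {
    long calculateBalance(AccountEntity account) {
        // Persistence housekeeping leaks into the business rule:
        // the service has to know that activities are loaded lazily.
        account.loadActivities();
        long balance = 0;
        for (long amount : account.getActivityAmounts()) {
            balance += amount;
        }
        return balance;
    }
}
```

The business rule here is only the summation. Everything else in `calculateBalance` exists because the service works directly on the persistence model.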

They’re prone to shortcuts

In a conventional layered architecture, the only global rule is that from a certain layer, we can only access components in the same layer or a layer below. There may be other rules that a development team has agreed upon and some of them might even be enforced by tooling, but the layered architecture style itself does not impose those rules on us.

So, if we need access to a certain component in a layer above ours, we can just push the component down a layer and we’re allowed to access it. Problem solved. Doing this once may be OK. But doing it once opens the door for doing it a second time. And if someone else was allowed to do it, so am I, right?

I’m not saying that as developers, we take such shortcuts lightly. But if there is an option to do something, someone will do it, especially in combination with a looming deadline. And if something has been done before, the likelihood of someone doing it again will increase drastically. This is a psychological effect called the Broken Windows Theory – more on this in Chapter 11, Taking Shortcuts Consciously.

Figure 2.3 – Since any layer may access everything in the persistence layer, it tends to grow fat over time

Over years of development and maintenance of a software project, the persistence layer may very well end up like in Figure 2.3.

The persistence layer (or, in more generic terms, the bottom-most layer) will grow fat as we push components down through the layers. Perfect candidates for this are helper or utility components since they don’t seem to belong to any specific layer.

So, if we want to disable shortcut mode for our architecture, layers are not the best option, at least not without enforcing some kind of additional architecture rules. And by enforcing, I don’t mean a senior developer doing code reviews, but automatically enforced rules that make the build fail when they’re broken.
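As an illustration of what such an automatically enforced rule could look like, the following sketch checks one possible additional rule, chosen here as an assumption: a layer may only depend on itself or on the layer directly below it. The class `LayerRule`, the package naming scheme (`app.web`, `app.domain`, `app.persistence`), and the dependency map passed in are all hypothetical. In a real build, a tool such as ArchUnit would extract the dependencies from the compiled classes.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

class LayerRule {
    // Hypothetical layer order, from top to bottom.
    private static final List<String> LAYERS = List.of("web", "domain", "persistence");

    // dependencies: fully qualified class name -> names of the classes it depends on.
    // Returns one entry per dependency that points upward or skips a layer.
    static List<String> findViolations(Map<String, List<String>> dependencies) {
        List<String> violations = new ArrayList<>();
        for (Map.Entry<String, List<String>> entry : dependencies.entrySet()) {
            int from = layerIndex(entry.getKey());
            for (String target : entry.getValue()) {
                int to = layerIndex(target);
                if (from < 0 || to < 0) {
                    continue; // class outside the known layers, not checked
                }
                boolean allowed = (to == from) || (to == from + 1);
                if (!allowed) {
                    violations.add(entry.getKey() + " -> " + target);
                }
            }
        }
        return violations;
    }

    // Assumes package names like "app.web.X"; returns -1 for unknown layers.
    private static int layerIndex(String className) {
        for (int i = 0; i < LAYERS.size(); i++) {
            if (className.contains("." + LAYERS.get(i) + ".")) {
                return i;
            }
        }
        return -1;
    }
}
```

A unit test that fails whenever `findViolations` returns a non-empty list would then break the build as soon as someone adds a forbidden dependency.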

They grow hard to test

A common evolution within a layered architecture is that layers are skipped. We access the persistence layer directly from the web layer since we’re only manipulating a single field of an entity, and for that, we need not bother the domain layer, right?

Figure 2.4 – Skipping the domain layer tends to scatter domain logic across the code base

Figure 2.4 shows how we’re skipping the domain layer and accessing the persistence layer right from the web layer.

Again, this feels OK the first couple of times, but it has two drawbacks if it happens often (and it will, once someone has made the first step).

First, we’re implementing domain logic in the web layer, even if it’s only manipulating a single field. What if the use case expands in the future? We’re most likely going to add more domain logic to the web layer, mixing responsibilities and spreading essential domain logic across all layers.

Second, in the unit tests of our web layer, we not only have to manage the dependencies on the domain layer but also the dependencies on the persistence layer. If we’re using mocks in our tests, that means we have to create mocks for both layers. This adds complexity to the tests. And a complex test setup is the first step toward no tests at all because we don’t have time for them. As the web component grows over time, it may accumulate a lot of dependencies on different persistence components, adding to the test’s complexity. At some point, it takes more time for us to understand the dependencies and create mocks for them than to actually write test code.
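The following sketch shows both drawbacks in a single web component. All names (`AccountController`, `AccountService`, `AccountRepository`, `renameAccount`) and the string status codes are hypothetical and only serve the illustration.

```java
// Hypothetical domain-layer dependency.
interface AccountService {
    boolean accountExists(long accountId);
}

// Hypothetical persistence-layer dependency, accessed directly from the web layer.
interface AccountRepository {
    void updateNickname(long accountId, String nickname);
}

// Web-layer component that uses the domain layer AND skips it to reach persistence.
class AccountController {
    private final AccountService accountService;
    private final AccountRepository accountRepository;

    AccountController(AccountService accountService, AccountRepository accountRepository) {
        this.accountService = accountService;
        this.accountRepository = accountRepository;
    }

    // Returns an HTTP-like status code as a string to keep the sketch small.
    String renameAccount(long accountId, String nickname) {
        if (!accountService.accountExists(accountId)) {
            return "404";
        }
        // Domain rule implemented in the web layer: nicknames must not be blank.
        if (nickname == null || nickname.isBlank()) {
            return "400";
        }
        accountRepository.updateNickname(accountId, nickname);
        return "200";
    }
}
```

Even for this tiny use case, a test has to provide test doubles for both layers, for example `new AccountController(id -> true, (id, nickname) -> {})`. Every additional persistence component the controller touches adds another double to the setup.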

They hide the use cases

As developers, we like to create new code that implements shiny new use cases. But we usually spend much more time changing existing code than we do creating new code. This is not only true for those dreaded legacy projects in which we’re working on a decades-old code base but also for a hot new greenfield project after the initial use cases have been implemented.

Since we’re so often searching for the right place to add or change functionality, our architecture should help us to quickly navigate the code base. How does a layered architecture hold up in this regard?

As discussed previously, in a layered architecture, it easily happens that domain logic is scattered throughout the layers. It may exist in the web layer if we’re skipping the domain layer for an “easy” use case. And it may exist in the persistence layer if we have pushed a certain component down so it can be accessed from both the domain and persistence layers. This already makes finding the right spot to add new functionality hard.

But there’s more. A layered architecture does not impose rules on the “width” of domain services. Over time, this often leads to very broad services that serve multiple use cases (see Figure 2.5).

Figure 2.5 – “Broad” services make it hard to find a certain use case within the code base

A broad service has many dependencies on the persistence layer and many components in the web layer depend on it. This not only makes the service hard to test but also makes it hard for us to find the code responsible for the use case we want to work on.

How much easier would it be if we had highly specialized, narrow domain services that each serve a single use case? Instead of searching for the user registration use case in UserService, we would just open up RegisterUserService and start hacking away.
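A rough sketch of the difference might look like this. The class names `UserService` and `RegisterUserService` come from the text above; the method signatures, the email check, and the in-memory set that stands in for the persistence layer are assumptions made for the example.

```java
import java.util.HashSet;
import java.util.Set;

// Broad service: several use cases hide behind one generic name
// (shown as an interface for brevity).
interface UserService {
    void registerUser(String email);
    void changePassword(String email, String newPassword);
    void deactivateUser(String email);
}

// Narrow service: one class, one use case, and the name says which one.
class RegisterUserService {
    // In-memory stand-in for the persistence layer.
    private final Set<String> registeredEmails = new HashSet<>();

    // Returns true if the user was newly registered,
    // false if the email address was already taken.
    boolean registerUser(String email) {
        if (email == null || !email.contains("@")) {
            throw new IllegalArgumentException("invalid email: " + email);
        }
        return registeredEmails.add(email);
    }
}
```

With the narrow variant, the class name alone tells us where the registration logic lives, and its dependencies are limited to what this one use case needs.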

They make parallel work difficult

Management usually expects us to be done with building the software they sponsor on a certain date. Actually, they even expect us to be done within a certain budget as well, but let’s not complicate things here.

Aside from the fact that I have never seen “done” software in my career as a software engineer, to be “done” by a certain date usually implies that multiple people have to work in parallel.

You probably know this famous conclusion from “The Mythical Man-Month,” even if you haven’t read the book: Adding manpower to a late software project makes it later.4

4 The Mythical Man-Month: Essays on Software Engineering by Frederick P. Brooks, Jr., Addison-Wesley, 1995.

This also holds true, to a degree, in software projects that are not (yet) late. You cannot expect a large group of 50 developers to be five times faster than a smaller team of 10 developers. If they’re working on a very large application where they can split up into sub-teams and work on separate parts of the software, it may work, but in most contexts, they will step on each other’s toes.

But on a healthy scale, we can certainly expect to be faster with more people on the project. And management is right to expect that of us.

To meet this expectation, our architecture must support parallel work. This is not easy. And a layered architecture doesn’t really help us here.

Imagine we’re adding a new use case to our application. We have three developers available. One can add the needed features to the web layer, one to the domain layer, and the third to the persistence layer, right?

Well, it usually doesn’t work that way in a layered architecture. Since everything builds on top of the persistence layer, the persistence layer must be developed first. Then comes the domain layer and finally the web layer. So only one developer can work on the feature at a time!

“Ah, but the developers can define interfaces first,” you say, “and then each developer can work against these interfaces without having to wait for the actual implementation.”

Sure, this is possible, but only if we haven’t mixed our domain and persistence logic as discussed previously, blocking us from working on each aspect separately.
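Under the assumption that domain and persistence logic are cleanly separated, the “interfaces first” approach could be sketched as follows. The names `BalanceRepository`, `DepositService`, and `InMemoryBalanceRepository` are hypothetical, as is the simplification that an unknown account starts with a balance of zero.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.Optional;

// Agreed on up front, before anyone starts implementing.
interface BalanceRepository {
    Optional<Long> findBalance(long accountId);
    void saveBalance(long accountId, long balance);
}

// The domain developer's work: compiles against the interface only.
class DepositService {
    private final BalanceRepository repository;

    DepositService(BalanceRepository repository) {
        this.repository = repository;
    }

    long deposit(long accountId, long amount) {
        if (amount <= 0) {
            throw new IllegalArgumentException("amount must be positive");
        }
        // Simplification: an unknown account is treated as having balance 0.
        long newBalance = repository.findBalance(accountId).orElse(0L) + amount;
        repository.saveBalance(accountId, newBalance);
        return newBalance;
    }
}

// Temporary stand-in until the persistence developer delivers the real implementation.
class InMemoryBalanceRepository implements BalanceRepository {
    private final Map<Long, Long> balances = new HashMap<>();

    @Override
    public Optional<Long> findBalance(long accountId) {
        return Optional.ofNullable(balances.get(accountId));
    }

    @Override
    public void saveBalance(long accountId, long balance) {
        balances.put(accountId, balance);
    }
}
```

This only works because `DepositService` knows nothing about how balances are stored. As soon as it handled lazy loading or transactions itself, the interface would no longer be enough to work against.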

If we have broad services in our code base, it may even be hard to work on different features in parallel. Working on different use cases will cause the same service to be edited in parallel, which leads to merge conflicts and potentially regressions.

How does this help me build maintainable software?

If you have built layered architectures in the past, you can probably relate to some of the issues discussed in this chapter, and you could maybe even add some more.

If done correctly, and if some additional rules are imposed on it, a layered architecture can be very maintainable and can make changing or adding to the code base a breeze.

However, the discussion shows that a layered architecture allows many things to go wrong. Without good self-discipline, it’s prone to degrading and becoming less maintainable over time. And our self-discipline usually takes a hit each time a team member rotates into or out of the team, or a manager draws a new deadline around the development team.

Keeping the traps of layered architecture in mind will help us the next time we argue against taking a shortcut and for building a more maintainable solution instead – be it in a layered architecture or a different architecture style.
