Introducing Location Intelligence

- The first law of geography by Waldo Tobler

Location data is data with a geographic dimension. It is everywhere, because all actions that occur on or near the Earth's surface have a geographic aspect. The term generally refers to any data with coordinates (latitude, longitude, and sometimes altitude), but it also encompasses aggregated geographic units, including addresses, zip codes, landmarks, districts, cities, regions, and much more.

Location intelligence, on the other hand, is the process of turning geographic (spatial) data into insights and business outcomes. Any data with a geographical position, whether implicit or explicit, requires location-aware preprocessing, visualization, and analytical methods to derive insights from it. Location intelligence applications can thus reveal hidden patterns of spatial relationships that cannot be derived through other means. This leads to better decision making on spatial problems: where things happen, why they happen in some places, and how spatial patterns change over time. Understanding the location dimension of today's challenges in industry, retail, agriculture, climate, and the environment can lead to a better understanding of why economic, social, and environmental activities tend to locate where they do.

In this chapter, we give an overview of location data and location data intelligence. We briefly introduce different location data types, along with applications and examples of location data intelligence. We cover how to identify location data in publicly available open datasets, and we highlight the difference between location data and non-geographic data. At the end of this chapter, we explore how location data fits into data science and what opportunities and challenges it brings to this interdisciplinary field.

We will specifically focus on the following topics:

- Location data

- Location data intelligence

- Location data and data science

- A primer on Google Colab and Jupyter Notebooks

Location data

What is location data and why is it different from other data formats? It is quite common to see phrases such as "spatial data is special" or the more popular adage, "80% of data is geographic". While these claims are not easily provable, the amount of location data is clearly increasing. From geotagged images and text to sensor data, location data is ubiquitous and the world is datafied. In this connected and data-driven era, we generate, keep track of, and store huge amounts of data every day. Think of the number of tweets, Instagram images, bank transactions, searches on the web, and routing requests from APIs. We collect more data than at any other period in the past, hence the big data revolution. Many of the datasets collected have an inherent location dimension, but it is often hidden within the data and not fully utilized.

Understanding location data from various perspectives

We can examine location data from different perspectives: business, technical, and data perspectives.

From a business perspective

From a business perspective, maps and location data are crucial in many applications. A quick look at big companies such as Google, Apple, Microsoft, and Nokia shows that each of them has its own location and mapping services and products.

Think about how often you use the Google Maps location service on your phone. This also highlights the importance of location data, as these companies would not go to such lengths to produce location data in-house if it were not necessary. Business applications of location data include not only individual uses but also innovative applications ranging from individualized marketing, autonomous vehicles, logistics, and transportation to healthcare.

From a technical perspective

From a technical perspective, location data entails both opportunities and challenges. Location data, in contrast to other data, has a topology, which holds the relationships between geometries (points, lines, and polygons) and the geographic features that they represent. Conventional data is stored in tables in a Relational Database Management System (RDBMS). Spatial relations and topology, however, also require us to store the geometry of objects.

The nature of location data follows from Tobler's first law of geography: "Everything is related to everything else, but near things are more related than distant things." In essence, this law implies strong autocorrelation and interdependency between nearby locations, which is not necessarily present in conventional data (non-spatial attributes).

From a data perspective

Having looked into the nature of location data from a technical perspective, let's also examine it from a data perspective. How is location data different than other data? In location data, we use geographic coordinates (2D) to represent the world (3D).

For example, Digital Elevation Models (DEMs) are used to represent heights and terrain surfaces. The first law of geography applies here as well. At a given point in time, a particular piece of terrain is very likely to have a similar height to its close surroundings, while we can expect a difference in elevation between two areas that are distant from each other. As mentioned earlier, spatial autocorrelation is assumed to be present in location data, while the statistical analysis of conventional data usually assumes that data points are independent. This means that methods built on the independence assumption cannot be applied to location data without accounting for spatial dependence.
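To make the idea of spatial autocorrelation more concrete, here is a minimal sketch that computes global Moran's I, a common autocorrelation statistic, on a small grid. The grids, the function name `morans_i`, and the choice of rook (4-neighbour) contiguity weights are assumptions made for illustration; they are not taken from any dataset used in this book.

```python
def morans_i(grid):
    """Global Moran's I for a 2D grid using rook (4-neighbour) contiguity.

    Every pair of cells that share an edge gets weight 1, all others 0.
    """
    rows, cols = len(grid), len(grid[0])
    values = [v for row in grid for v in row]
    n = len(values)
    mean = sum(values) / n
    # Denominator: sum of squared deviations from the mean
    denom = sum((v - mean) ** 2 for v in values)
    num = 0.0    # weighted sum of cross-products of deviations
    w_sum = 0.0  # sum of all weights
    for r in range(rows):
        for c in range(cols):
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                rr, cc = r + dr, c + dc
                if 0 <= rr < rows and 0 <= cc < cols:
                    num += (grid[r][c] - mean) * (grid[rr][cc] - mean)
                    w_sum += 1
    return (n / w_sum) * (num / denom)


# Hypothetical "terrain": heights change gradually, like a gentle slope
smooth = [[r + c for c in range(4)] for r in range(4)]
# Hypothetical checkerboard: every neighbour has the opposite value
checker = [[(r + c) % 2 for c in range(4)] for r in range(4)]

print(morans_i(smooth))   # positive: near cells are similar
print(morans_i(checker))  # close to -1: near cells are dissimilar
```

Values near +1 indicate that similar values cluster together, values near -1 indicate that neighbours tend to differ, and values near 0 suggest no spatial structure.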

Another complication in location data is the Modifiable Areal Unit Problem (MAUP): the same underlying data can produce different results when it is aggregated into different units. Poverty and crime estimates are typical examples. Areas with high poverty rates could be overestimated or underestimated depending on the boundaries of the measured areas. Moving between aggregations (that is, zip code, neighborhood, or district level) can create different impressions and patterns purely because of the different scales and boundaries.
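The effect of MAUP can be sketched with a toy example. The households, their positions along a street, and the zone boundaries below are all hypothetical; the point is only that the same eight records yield different poverty rates depending on how the zones are drawn.

```python
def zone_rates(households, boundaries):
    """Poverty rate per zone; `boundaries` are the sorted zone edges.

    Assumes every zone contains at least one household.
    """
    rates = []
    for lo, hi in zip(boundaries[:-1], boundaries[1:]):
        flags = [poor for x, poor in households if lo <= x < hi]
        rates.append(sum(flags) / len(flags))
    return rates


# Hypothetical households: (position along a street in km, 1 = below poverty line)
households = [(0.5, 1), (1.5, 1), (2.5, 0), (3.5, 0),
              (4.5, 1), (5.5, 0), (6.5, 0), (7.5, 0)]

two_zones = zone_rates(households, [0, 4, 8])         # [0.5, 0.25]
shifted = zone_rates(households, [0, 2, 8])           # first zone rises to 1.0
four_zones = zone_rates(households, [0, 2, 4, 6, 8])  # [1.0, 0.0, 0.5, 0.0]

print(two_zones, shifted, four_zones)
```

With two equal zones the street looks moderately poor throughout, while moving one boundary or using smaller zones suggests a sharp concentration at one end.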

Types of location data

Geographic data types can be divided into two broad categories:

- Vector data: This is represented as points, lines, or polygons. The data is typically created by digitizing features and storing their coordinates as longitude and latitude. This type of data is useful for storing features that have discrete and distinct boundaries, such as borders, land parcels, streets, and points of interest.

- Raster data: This stores information in cells and is therefore suitable for storing data that is continuous, such as satellite images, elevation models, and aerial photographs.
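The two categories can be sketched with plain Python structures. The vector examples follow the GeoJSON geometry layout (a `type` plus `coordinates` in longitude, latitude order). The raster layout, the name `elevation`, the helper `sample`, and all coordinate and cell values are assumptions made up for this illustration.

```python
# Vector data: discrete geometries described by their coordinates (lon, lat)
point = {"type": "Point", "coordinates": [-73.9857, 40.7484]}
line = {
    "type": "LineString",
    "coordinates": [[-73.99, 40.75], [-73.98, 40.75], [-73.98, 40.76]],
}
polygon = {
    "type": "Polygon",
    # One outer ring; the first and last positions are identical to close it
    "coordinates": [[[-74.0, 40.7], [-73.9, 40.7], [-73.9, 40.8],
                     [-74.0, 40.8], [-74.0, 40.7]]],
}

# Raster data: a grid of cells covering a continuous surface (hypothetical layout)
elevation = {
    "origin": (-74.0, 40.8),  # lon, lat of the upper-left corner
    "cell_size": 0.05,        # cell width and height in degrees
    "values": [[12.0, 15.0],
               [9.0, 11.0]],  # one value per cell, rows run north to south
}


def sample(raster, lon, lat):
    """Return the value of the raster cell that contains (lon, lat)."""
    x0, y0 = raster["origin"]
    size = raster["cell_size"]
    col = int((lon - x0) // size)
    row = int((y0 - lat) // size)
    n_rows, n_cols = len(raster["values"]), len(raster["values"][0])
    if not (0 <= row < n_rows and 0 <= col < n_cols):
        raise ValueError("coordinate falls outside the raster extent")
    return raster["values"][row][col]


print(sample(elevation, -73.98, 40.78))  # upper-left cell
print(sample(elevation, -73.93, 40.72))  # lower-right cell
```

The vector structures store exact boundaries, whereas the raster answers "what is the value here?" for any location inside its extent.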

Location data intelligence

Almost every industry uses location intelligence. It helps organizations understand what their customers are doing, where their customers are based, what the geographic environment of their customers is, and what their interests are. Location intelligence is normally defined as using location data together with other attributes to add context and derive useful information, services, and products that help organizations make effective and efficient decisions. The information derived through location intelligence can yield business and economic insights as well as environmental and social insights.

Application of location data intelligence

To illustrate how location intelligence is applied in the real world, we will take Foursquare check-ins as an example. Foursquare started in 2009 as a social platform that collected user check-ins and provided guides and search results recommending places to visit near the user's current location. More recently, however, Foursquare has repositioned itself from a social platform to a location intelligence company. The company describes itself as a "technology company that uses location intelligence to build meaningful consumer experiences and business solutions" and claims the following:

Through anonymized and aggregated trends of check-ins at physical brand locations, Foursquare provides insights and metrics that were not easily available before, such as customer loyalty, frequency of visits, and brand losses and profits. This allows analysts and brands to understand their customers, reveal demographic insights, track customer patterns, and study competing brands. To illustrate how powerful location intelligence is, let's explore a subset of Foursquare data in NYC. We will use this dataset later in Chapter 3, Performing Spatial Operations Like a Pro, but for now let's look at what it consists of and how location intelligence is derived from it.

The NYC Foursquare check-in dataset has 10 months' worth of data spanning from April 12, 2012 to February 16, 2013.

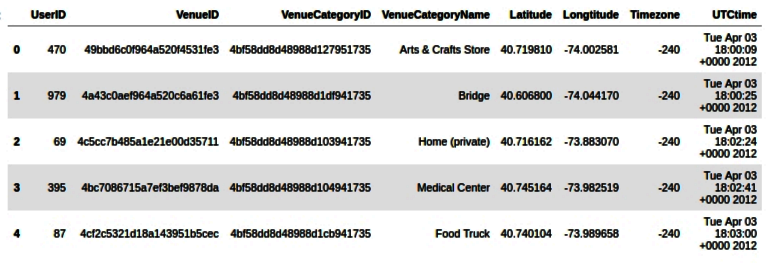

The following table shows the first five rows of the data, which consists of eight columns. UserID and VenueID are unique identifiers, and both are anonymized for privacy reasons. VenueCategoryID and VenueCategoryName indicate aggregated types of business; here, we have more than 250 business types, including a medical center, arts store, burger joint, hardware store, and so on. The Latitude and Longitude columns store the geographic coordinates of the venues.

The last two columns indicate the time of the check-in:

Here, we have the first five rows of the Foursquare data. In this chapter, we will only look at the data from a wider perspective. The code for this chapter is available, but you do not need to understand it right now. We will learn the details of reading and processing location data with Python in the next chapters.

So, what kind of location intelligence can be derived from this type of data? We will cover this from two broad perspectives: the user/customer perspective and the venue/business perspective.

User or customer perspective

Here we look at the data from a customer perspective. The following questions often come up:

Where does customer X spend his/her time? What does this place offer? How often does he/she visit these places? When does he/she visit these places?

Let's take UserID = 395, from the fourth row in the preceding table, as an example. This particular user made 106 check-ins in total during the period covered by the dataset, visiting 36 unique venues in NY (visualized in the following map):

User 395: Venues visited in NY

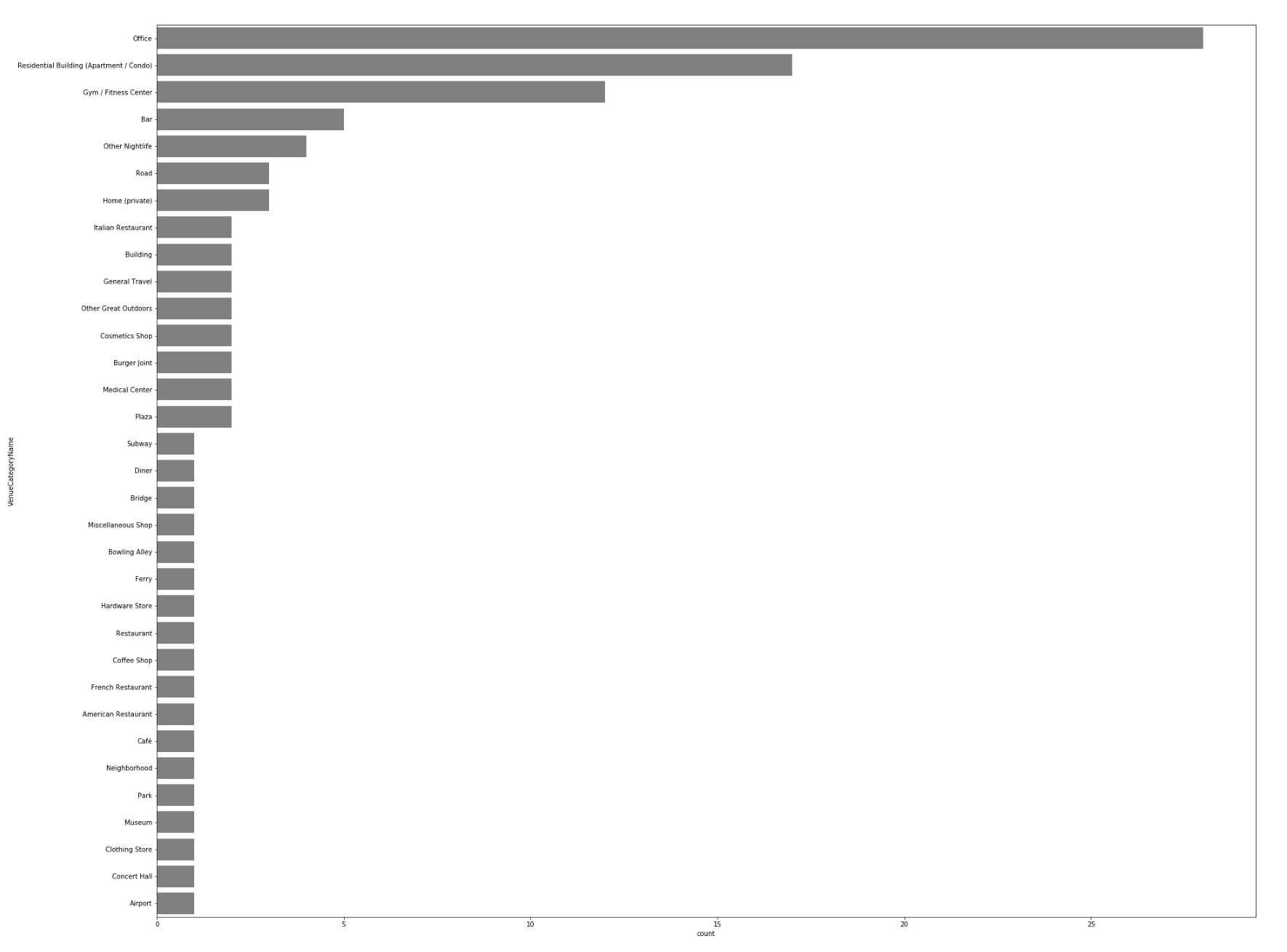

We can also look at what types of venues this particular user has visited. In this case, the user has frequently visited an office, a residential building, and a gym in NYC. Less-visited venues include an airport, outdoor places, a medical center, and many others, as you can see from the following graph:

The user perspective can reveal many aspects of a user's visit frequency, preferences, and activities that can guide location intelligence and decision making. Privacy in location data is a very sensitive issue and requires diligence. In this case, although the data is anonymized, it still reveals patterns and other useful information, as we have shown. Now let's look at the business perspective in the following section.
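The per-user figures discussed in this section (total check-ins, unique venues, and most-visited venue categories) can be sketched with the standard library alone. The records below are hypothetical and only reuse the column names described for the dataset; `user_summary` is an illustrative helper, not code from the book's repository.

```python
from collections import Counter

# Hypothetical check-in records using the dataset's column names
checkins = [
    {"UserID": 395, "VenueID": "v1", "VenueCategoryName": "Office"},
    {"UserID": 395, "VenueID": "v1", "VenueCategoryName": "Office"},
    {"UserID": 395, "VenueID": "v1", "VenueCategoryName": "Office"},
    {"UserID": 395, "VenueID": "v2", "VenueCategoryName": "Gym"},
    {"UserID": 395, "VenueID": "v2", "VenueCategoryName": "Gym"},
    {"UserID": 395, "VenueID": "v3", "VenueCategoryName": "Airport"},
    {"UserID": 7, "VenueID": "v2", "VenueCategoryName": "Gym"},
]


def user_summary(checkins, user_id):
    """Summarize one user's check-ins: totals, unique venues, category counts."""
    rows = [c for c in checkins if c["UserID"] == user_id]
    categories = Counter(c["VenueCategoryName"] for c in rows)
    return {
        "total_checkins": len(rows),
        "unique_venues": len({c["VenueID"] for c in rows}),
        # Categories sorted from most to least visited
        "top_categories": categories.most_common(),
    }


summary = user_summary(checkins, 395)
print(summary)
```

Even this small summary shows why anonymization alone does not remove privacy concerns: the category counts already hint at where a user works and exercises.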

Venue or business perspective

Here we look at the data from a business perspective. The following questions often come up:

How many customers does venue X receive per day? What about per hour? What is the pattern? Can we estimate business value based on the check-ins?

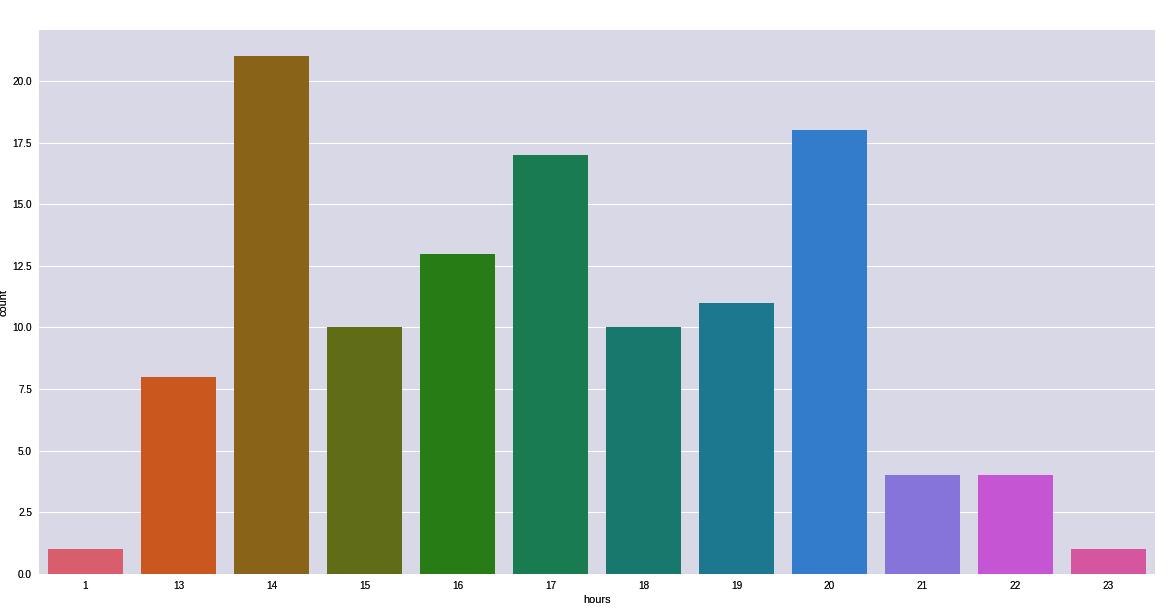

We will use a gym venue as an example here, with VenueID = 4aca718ff964a520f6c120e3. In this dataset, this gym has 118 check-ins. Although the data is small and cannot be generalized for this particular VenueID, imagine having enough data over a longer period of time. We can estimate the peak times of this gym, as the following graph shows. There is a peak of check-ins at 14:00 and at 20:00:

This kind of business perspective analysis helps both decision makers and competitors gain insight into businesses. This is only a single business, but the same analysis can easily be extended to the other businesses in this dataset. In fact, Foursquare predicted that sales at Chipotle, a Mexican grill, would drop 30% during 2016 before the company announced its loss (link available in the information box).
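The hourly check-in profile described for the gym can be sketched as follows. The timestamps are hypothetical, and their ISO 8601 format is an assumption made for this illustration; the actual time columns in the dataset may be formatted differently.

```python
from collections import Counter
from datetime import datetime


def hourly_profile(timestamps):
    """Count check-ins per hour of day (0-23) from ISO 8601 strings."""
    return Counter(datetime.fromisoformat(ts).hour for ts in timestamps)


# Hypothetical local check-in times for a single venue
gym_checkins = [
    "2012-05-01T14:05:00", "2012-05-02T14:40:00", "2012-05-03T20:10:00",
    "2012-05-04T20:30:00", "2012-05-05T20:55:00", "2012-05-06T09:15:00",
]

profile = hourly_profile(gym_checkins)
peak_hour, peak_count = profile.most_common(1)[0]
print(peak_hour, peak_count)  # 20 3
```

Grouping by `datetime.date()` instead of `.hour` would answer the per-day question in the same way.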

Let's now look at how location data science is different than data science in the next section.

Location data science versus data science

Now that we have learned that working with location data goes beyond mapping and involves manipulating and processing geographic data and applying analytical methods, we will move on to the interdisciplinary nature of location data science. We have also studied location data intelligence and illustrated with diagrams how insights are derived from location data. But how is location data science (spatial data science) different from data science? How do they relate to each other? In this section, we will cover the commonalities as well as the differences between location data science and data science as a discipline.

Data science

What is data science? Data science as a field combines computer science, mathematics and statistics, and domain expertise, and it generally refers to the process of extracting insights and useful information from data. It mostly starts with importing data and tidying it to make it ready for analysis. The iterative process of data science then involves transforming, visualizing, and modeling data to understand phenomena and hidden patterns within the data. The final step in data science, which is often explored less, is to communicate the insights. You may have realized from what we have covered so far that this is not far from location intelligence, and that is right. The location dimension is critical in many domains and applications of data science. Next, let's look at what spatial data science is.

Location (spatial) data science

Adding location data and the underlying spatial science brings additional challenges and opportunities. Location data science is an interdisciplinary field that combines computer science, mathematics and statistics, domain expertise, and spatial science. This means not only adding spatial science but also whole new concepts, theories, and applications of spatial and location analysis, including spatial patterns, location clusters, hot spots, location optimization, and decision making, as well as spatial autocorrelation and spatial exploratory data analysis. For example, in data science, histograms and scatter plots are used to analyze data distributions, but on their own they do not capture where values occur. Location data analysis requires specific methods, such as spatial autocorrelation and spatial distribution analysis, to derive location insights.
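A small sketch shows why a histogram alone cannot describe location data. The two grids below are hypothetical and contain exactly the same values, so their histograms are identical, yet their spatial arrangement differs completely. The helper `neighbour_agreement` is a deliberately simplified stand-in for a formal spatial autocorrelation statistic and is an assumption of this example.

```python
from collections import Counter


def neighbour_agreement(grid):
    """Share of horizontally or vertically adjacent cell pairs with equal values."""
    rows, cols = len(grid), len(grid[0])
    same = total = 0
    for r in range(rows):
        for c in range(cols):
            if c + 1 < cols:  # pair with the cell to the right
                total += 1
                same += grid[r][c] == grid[r][c + 1]
            if r + 1 < rows:  # pair with the cell below
                total += 1
                same += grid[r][c] == grid[r + 1][c]
    return same / total


def histogram(grid):
    """Value counts of the grid, ignoring where the values are located."""
    return Counter(v for row in grid for v in row)


# Hypothetical grids: 1 = event observed in the cell, 0 = no event
clustered = [[1, 1, 0, 0] for _ in range(4)]                    # events on one side
checker = [[(r + c) % 2 for c in range(4)] for r in range(4)]   # events dispersed

print(histogram(clustered) == histogram(checker))  # True: same distribution
print(neighbour_agreement(clustered))              # high: values cluster
print(neighbour_agreement(checker))                # 0.0: neighbours always differ
```

The non-spatial summary treats both grids as the same data, while the neighbour-based measure separates a clustered pattern from a dispersed one.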

To get you up and running quickly, without the burden of setting up a local Python environment, we will use Google Colab Jupyter Notebooks in this book. In the next section, we will cover a primer on how to use Google Colab and Jupyter Notebooks.

A primer on Google Colaboratory and Jupyter Notebooks

Jupyter Notebooks have become a favorite tool for data scientists, as they are flexible and combine code, computational output and multimedia, and comments. Jupyter is free and open source, and it provides computational capabilities through interactive web-based notebooks. Anaconda distributions make the installation process easy if you want to install Jupyter Notebook on your local machine. The official Anaconda documentation for installing Jupyter Notebooks and Python is intuitive and easy to follow, so feel free to follow the instructions if you would like to work on your local machine.

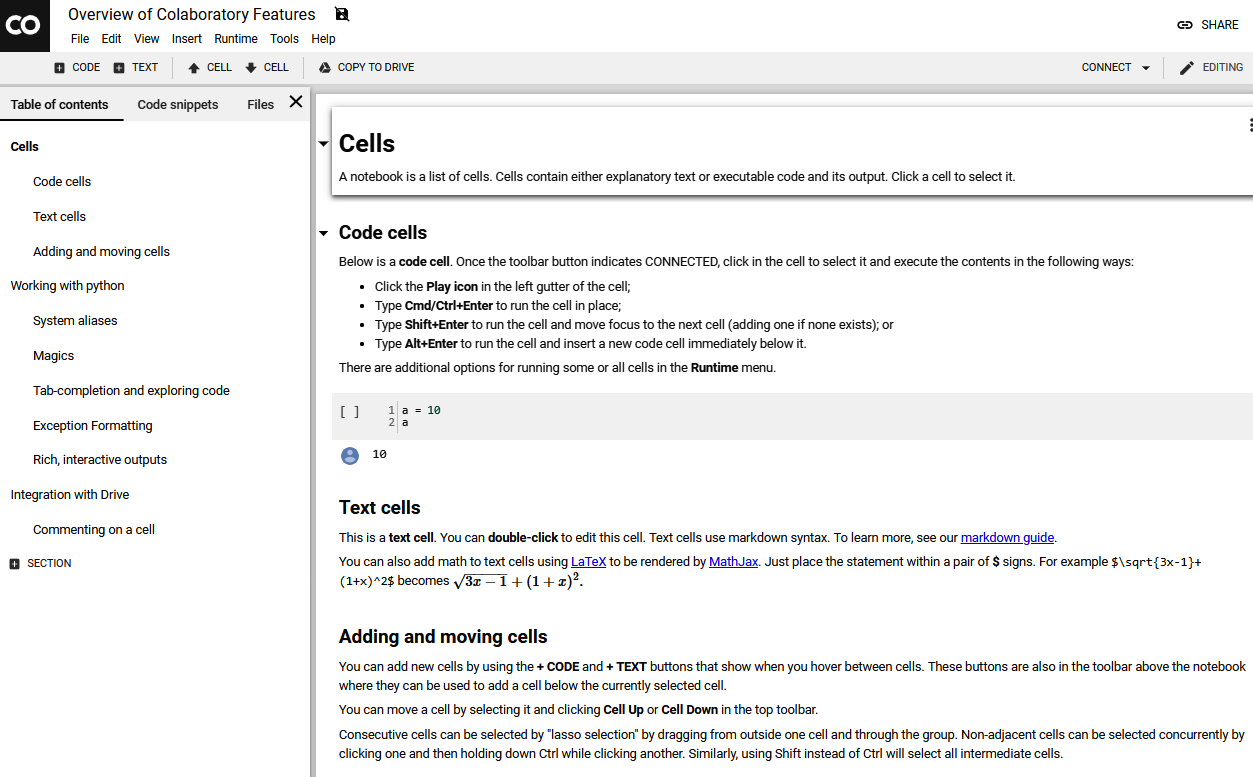

However, we will use Google Colab, which is a free Jupyter Notebook environment that requires no installation or setup and runs entirely in the cloud, just like Google Docs or Google Sheets. Google Colab enables you to write code, run it, and share it. You just need a working Gmail account to save and access Google Colab Jupyter Notebooks. For heavy computational tasks, such as machine learning or deep learning with big data, Google Colab allows you to use its Graphics Processing Unit (GPU) or Tensor Processing Unit (TPU) for free.

The Google Colab interface is shown as follows. In the upper part, you have the main menu. The right part is where we can write our code and comments:

You can open Google Colab from this URL: https://colab.research.google.com. There are two main types of cells: code and text. In a code cell, you can write your code and execute it, while a text cell allows you to write text in Markdown. Here, you can use different text types, including several heading levels as well as bulleted and numbered lists. To execute a cell, you can either use the Ctrl + Enter shortcut or press the Run button (small triangle) next to the cell.

We will learn Google Colab by using it as our coding platform for this book. If you are new to Jupyter Notebooks or Google Colab, here is a useful guide to get started: https://colab.research.google.com/notebooks/welcome.ipynb#scrollTo=GJBs_flRovLc.

Summary

We have introduced location data and location data intelligence in this chapter by looking at them from different perspectives: business, technical, and data. We have also covered applications of location data intelligence and provided some simple and concrete examples from the Foursquare dataset, considering both the user (customer) perspective and the venue (business) perspective. Furthermore, we have compared and contrasted data science and location data science. Finally, we have introduced a primer on using Jupyter Notebooks and Google Colab.

We will learn to process location data and apply machine learning models in the next chapter while consuming location data like a data scientist. We will use the New York taxi trajectory data to predict trip durations for New York taxicab trips.

Download code from GitHub
