Introduction to Data Modeling in Power BI

Power BI is not just a reporting tool to build sophisticated reports; it is a platform that supplies a wide range of features from data preparation to data modeling and visualization. It is also a very well-designed ecosystem, giving a variety of users the ability to contribute to their organization’s data analysis journey in many ways, from sharing datasets, reports, and dashboards to using their mobile phones to add some comments to a report, ask questions, and circulate it back to the relevant people. All of this is only possible if we take the correct steps in building our Power BI ecosystem. A very eye-catching and beautiful report is worth nothing if it shows incorrect figures or if the report is too slow to render, so the user does not have the appetite to use it.

One of the most critical aspects of building a good Power BI ecosystem is to get the data right. In real-world scenarios, you usually get data from various data sources. Getting data from the data sources and then preparing it are just the beginning. Then, you need to develop a well-designed data model that guarantees you always represent the correct figures supporting the business logic, leading to well-performing related reports.

In this chapter, we start by learning about the different Power BI layers and how data flows between them, so that we can fix potential issues more efficiently. Then, we study one of the essential aspects of Power BI implementation: data modeling. You learn about the availability and limitations of data modeling features under various Power BI licensing plans. Finally, we discuss the iterative data modeling approach and its different phases.

This chapter covers the following main sections:

- Power BI Desktop layers

- What data modeling means in Power BI

- Power BI licensing considerations for data modeling

- The iterative data modeling approach

Understanding the Power BI layers

As stated before, Power BI is not just a reporting tool. As this book focuses on data modeling, we will not spend much time explaining the tool itself; instead, we cover some concepts that should be pointed out. When we talk about data modeling in Power BI, we refer to Power BI Desktop as a development tool. You can think of Power BI Desktop like Visual Studio when developing an SQL Server Analysis Services (SSAS) Tabular model. Power BI Desktop is a free tool offered by Microsoft that can be downloaded from https://powerbi.microsoft.com/en-us/downloads/.

This book refers to Power BI Desktop when mentioning Power BI unless stated otherwise.

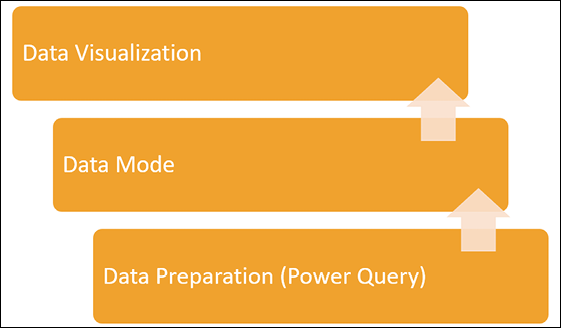

The following illustration shows a straightforward process we usually go through while building a report in Power BI Desktop:

Figure 1.1: Building a new report process in Power BI

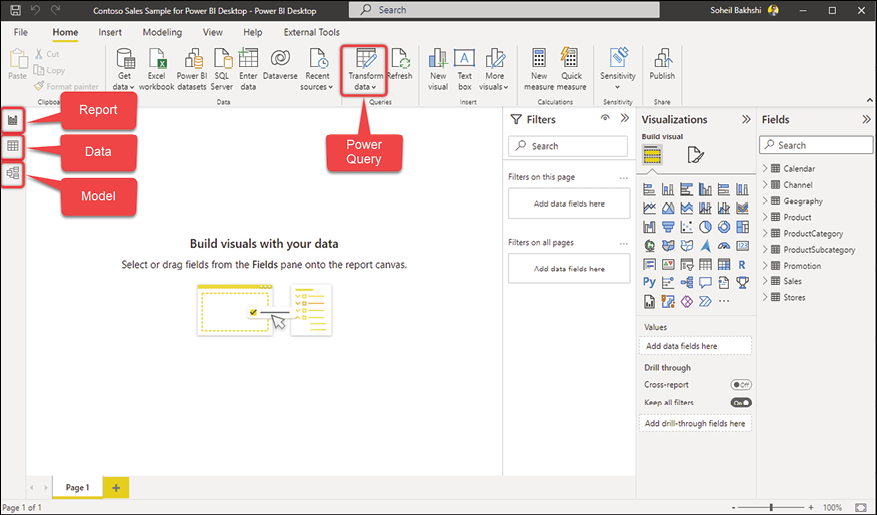

We use different conceptual layers of Power BI to go through the preceding processes. The following image shows where to access these layers in Power BI Desktop:

Figure 1.2: Power BI layers

Let us discuss each point in detail:

- The Power Query (data preparation) layer

- The Model layer (data model)

- The Report layer (data visualization)

The Data tab shown in the preceding image is where we can see the actual data loaded into the data model, so it is not considered a layer in Power BI Desktop.

To follow the upcoming exercises, download the Microsoft Contoso Sales sample for Power BI Desktop from https://www.microsoft.com/en-us/download/confirmation.aspx?id=46801.

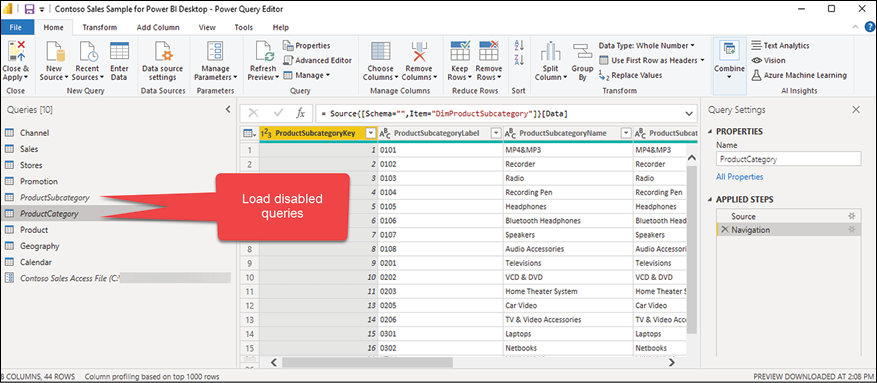

The data preparation layer (Power Query)

In this layer, we get the data from various data sources, transform and cleanse that data, and make it available for the next layer. This is the first layer that touches the data, so it is an essential part of the data journey in Power BI. In the Power Query layer, we decide which queries load data into the data model and which ones take care of data transformation and data cleansing without loading the data into the data model:

Figure 1.3: Power Query

The data model layer

This layer has two views, the Data view and the Model view. In the Data view, we see the data being loaded; in the Model view, we see the data model, including the tables and their relationships.

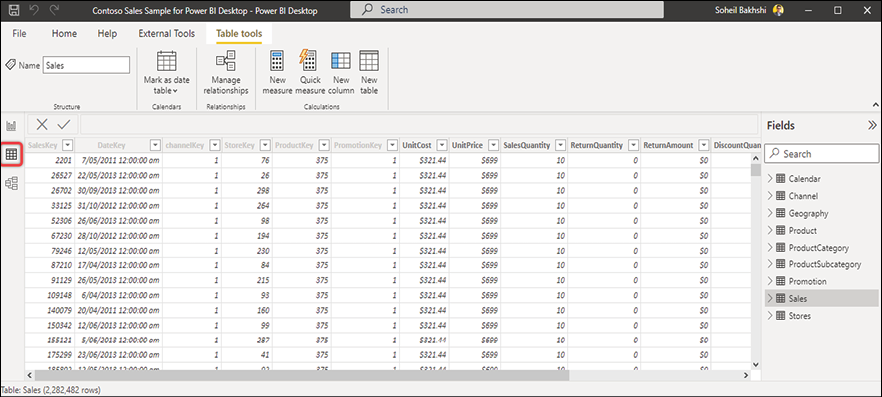

The Data view

After finishing the data preparation in the Power Query layer, we load the data into the data model layer. We can see the underlying data in our data model using the Data view. Depending on the connection mode, the Data view may or may not show the underlying data. We can take actions such as creating calculated tables, calculated columns, and measures or copying data from tables within the Data view.

All objects we create using DAX (measures, calculated columns, and so on) are a part of our data model.

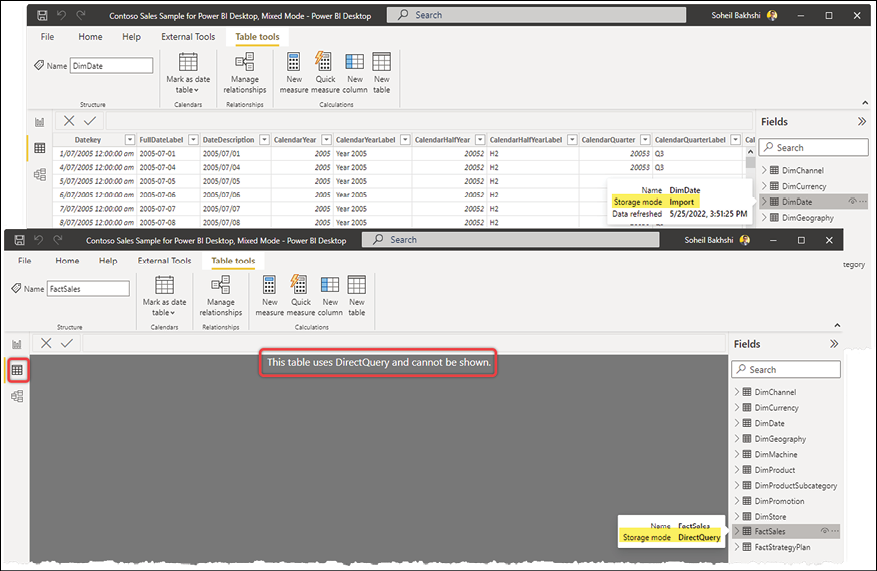

The following screenshot shows the Data view in Power BI Desktop when the storage mode of the Sales table is Import:

Figure 1.4: Data view; storage mode: Import

If the table’s storage mode is DirectQuery, then the Data view does not show the underlying data, as the following image illustrates:

Figure 1.5: Data view; storage mode: DirectQuery

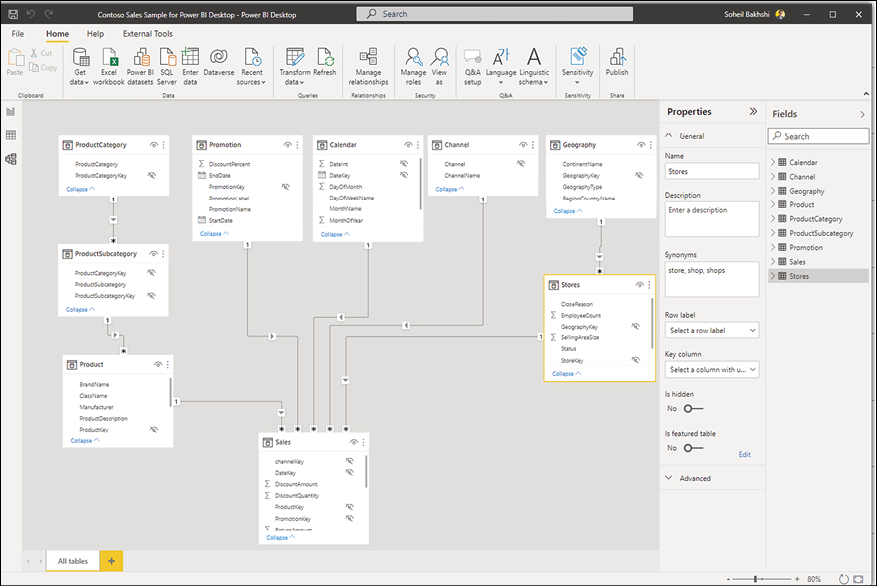

The Model view

As its name implies, the Model view is where we stitch all the pieces together. We can see the current relationships between the tables, create new relationships, format fields, define synonyms, and show and hide fields in the Model view. The following image shows the Model view of the Contoso Sales Sample when we selected the Store table:

Figure 1.6: Model view

The data visualization layer

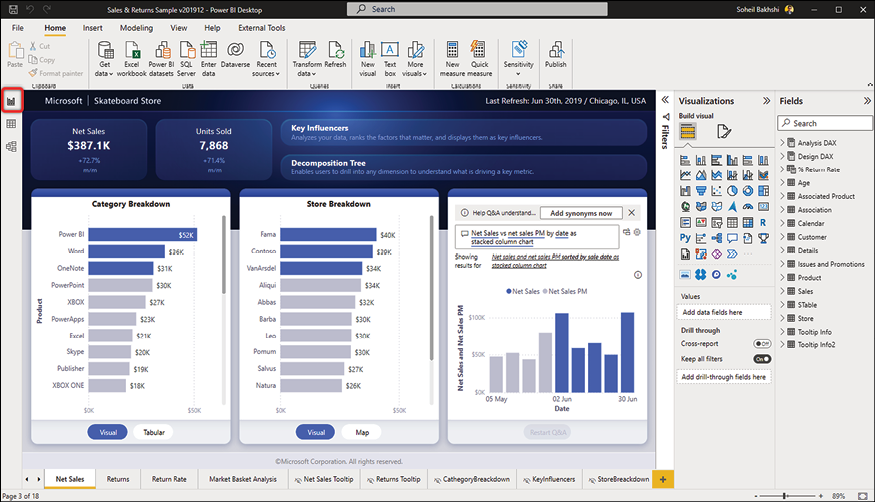

In this layer, we bring the data to life by making meaningful and professional-looking data visualizations. The data visualization layer is accessible via the Report view, the default view in Power BI Desktop.

The Report view

In the Report view, we can build storytelling visualizations to help businesses make data-driven decisions. We can also create analytical calculations with DAX, such as calculated tables, calculated columns, and measures from the Fields pane in the Report view, but this does not mean those objects are a part of the data visualization layer. Indeed, they are a part of the data model layer.

The following image shows the Report view of the Sales & Returns Sample:

Figure 1.7: The Report view

To load the preceding view, download the Sales & Returns sample.pbix file from https://docs.microsoft.com/en-us/power-bi/create-reports/sample-datasets#sales--returns-sample-pbix-file.

How data flows in Power BI

Understanding how data flows during its journey in Power BI is vital. For instance, when we face an issue with some calculations in a report, this understanding lets us analyze the root cause and trace the issue back to an actionable point. When we find an incorrect value in a line chart, and the line chart uses a measure dependent on a calculated column, we know that we will not find that calculated column in Power Query. The reason is that the objects we create in the data model are not accessible in Power Query. For the same reason, we never look for a measure in the Power Query layer. We also do not expect to use custom functions created within Power Query in the data model layer. We discuss custom functions in the Custom Functions section of Chapter 3, Data Preparation in Power Query Editor.

The following image shows the flow of data between different layers in Power BI:

Figure 1.8: The flow of data in Power BI

To understand the flow of data better, let us go through a scenario.

In a Power BI report, the developer has defined a query parameter. The parameter has a list of capital letters, E, O, and P. There is also a Product query in Power Query holding descriptive information about the product. The parameter filters the Product Name column. Therefore, when the developer selects E from the parameter, the Product query filters the results showing only the products whose name starts with E. The connection mode is Import.

We put a Table visual on the report canvas with the Product Name column. Can we add a Slicer visual to the report canvas showing the parameter’s values, so the end user changes the values in the Slicer and sees the changes in the Table visual?

To answer the question, we need to think about Power BI layers. Let us do some analysis:

- The query parameters are defined in the data preparation layer in Power Query.

- Filtering a query is also a transformation step in Power Query, which changes the result sets of the query. Therefore, when we import the data into the data model, the result sets do not change unless we change the parameter’s values, which changes the result sets of the Product query and imports the new result sets to the data model.

- By default, the values of query parameters are not loaded into the data model unless the developer turns on Enable load. Turning on Enable load loads only the selected values from the parameter’s list, not the whole list.

- We refer to the data visualization layer when we talk about a Slicer visual. This means that the Slicer can only access the data loaded into the data model.

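So, the answer is no. The data visualization layer can access only the curated data that has been imported into the data model. The parameter’s list of values stays in Power Query, so a Slicer cannot show that list or change the selected value.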
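The reasoning above can be sketched outside Power BI. The following Python snippet is only a conceptual analogy of the three layers, not a description of how Power BI is implemented. The product names and the function names `prepare_product_query`, `load_into_model`, and `slicer_values` are hypothetical.

```python
# Conceptual analogy only: the names and sample data below are hypothetical.

PARAMETER_VALUES = ["E", "O", "P"]  # lives in the data preparation layer
SOURCE_PRODUCTS = ["Eraser", "Envelope", "Organizer", "Pen", "Paper"]


def prepare_product_query(selected_letter):
    """Data preparation layer: filter the source by the selected parameter value."""
    return [name for name in SOURCE_PRODUCTS if name.startswith(selected_letter)]


def load_into_model(product_rows):
    """Data model layer: only the query result is imported, not the parameter list."""
    return {"Product": list(product_rows)}


def slicer_values(model, table):
    """Data visualization layer: a visual can only read what the model holds."""
    return sorted(set(model[table]))


# The developer selected "E" before the data was imported.
model = load_into_model(prepare_product_query("E"))

print(slicer_values(model, "Product"))  # ['Envelope', 'Eraser']
print("Parameter" in model)             # False: the parameter list was never loaded
```

In this analogy, showing products that start with P requires rerunning `prepare_product_query` and reloading the model, which is a developer action rather than something a report visual can trigger.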

Now that we understand the flow of data in Power BI, it is time to learn more about data modeling in Power BI.

What data modeling means in Power BI

Data modeling is undoubtedly one of the most important parts of Power BI development. It is crucial to distinguish the purpose of data modeling in Power BI from that of data models in transactional systems. In a transactional system, the goal is to have a model optimized for recording transactional data. In contrast, a well-designed data model in Power BI must be optimized for querying the data and reducing the dataset size by aggregating the data.

In reality, not everyone has the luxury of having a data warehouse, so it is a vital skill to know how to create a data model in Power BI. While it is very tempting to get all the data from various data sources and import it to Power BI, answering business questions can quickly translate to complex queries that take a long time to process, which is not ideal. The best practice is to avoid importing everything from the data sources into Power BI and then solving the resulting problems later, such as performance issues, data model complexity, and unnecessarily large data models. Instead, it is wise to get the data model right to precisely answer business-driven questions in the most performant way. When modeling data in Power BI, we must build a data model based on the business logic. So, we may need to join different tables and aggregate the data to a level that answers all business-driven questions, which can be tricky when we have data from various data sources of different grains.

Therefore, we need to transform and reshape the data in Power Query before loading it into the data model. After cutting all the noise from the data, we have a clean, easy-to-understand, and easy-to-work-with data model.

Semantic model

Power BI inherits its characteristics from Power Pivot and SSAS Tabular. Both of them use the xVelocity engine, an updated version of the VertiPaq engine designed for in-memory data analysis. The xVelocity engine leverages column store indexing and consists of semantic model objects such as tables, relationships, hierarchies, and measures stored in memory. All of this means that we would expect tremendous performance gains over highly compressed data, right? Well, it depends. We can expect fast and responsive reports if we efficiently transform and model the data supporting the business logic. Conceptually, the data model in Power BI is a semantic model. Let us untangle this a bit.

A semantic model is a unified data model that provides business contexts to data. The semantic model can be accessed from various data visualization tools, such as Excel, without further transformation. When we publish a Power BI report file (PBIX) to the Power BI Service, the service stores the report in two separate objects. The transformation steps and the data model are stored as a Dataset object and the report as a Report object. A dataset in the Power BI Service is indeed our semantic model. We can connect to the datasets from Power BI Desktop, analyze the datasets in Excel, or use third-party tools such as Tableau. The latter requires an XMLA endpoint connection to a Power BI Premium dataset.

We will not cover the details of XMLA endpoints in this book. You can read more about XMLA endpoints here: https://docs.microsoft.com/en-us/power-bi/enterprise/service-premium-connect-tools?WT.mc_id=DP-MVP-5003466.

Building an efficient data model in Power BI

An efficient data model is easy to understand and easy to maintain. At the same time, it must answer all data-driven questions the business may ask. Let us analyze the preceding sentence. An efficient model must do the following:

- Perform well (be fast)

- Be business-driven

- Decrease the complexity of the required DAX expressions (easy to understand)

- Be low maintenance (low cost)

Let us look at the preceding points with a scenario.

We are tasked to create a report on top of the following three different data sources:

- An OData data source with 15 tables. The tables have between 50 and 250 columns.

- An Excel file with 20 sheets with interdependencies and many formulas.

- A data warehouse hosted in SQL Server. The data comes from five dimensions and two fact tables:

  - Within those five dimensions is a Date and a Time dimension. The grain of the Time dimension is the hour and minute.

  - Each of the fact tables has between 50 and 200 million rows. From a Date and Time perspective, the grain of both fact tables is the day, hour, and minute.

- The organization owns Power BI Pro licenses.

Before getting the data from the source systems, note that the preceding scenario raises some essential points and that many details are still unclear at this stage.

Let us analyze the scenario, along with some related questions we might ask the business to optimize the performance of the report and avoid customer dissatisfaction:

- OData: OData is an online data source, so it could be slow to load the data.

  - The tables are wide, which can potentially impact the performance.

  - Do we need to import all the columns from those 15 tables?

  - Do we also need to import all data, or is just a portion of the data enough? For example, if the data source contains 10 years of data, does the business need to analyze all the historical data, or does bringing 1 or 2 years of data fit the purpose?

  - The organization owns Power BI Pro licenses, so a 1 GB file size limit applies.

- Excel: Excel files with many formulas can be tricky data sources.

  - Does the business require you to analyze all the data contained in the 20 sheets? We may be able to exclude some of those sheets.

  - How often are the formulas edited? This is critical as modifying the formulas can easily break the data processing in Power Query and generate errors. It is best to replicate the Excel formulas in Power BI and load the raw data from Excel before the formulas are applied.

- Data warehouse in SQL Server: It is beneficial to have a data warehouse as a source system, as data warehouses typically have a much better structure from an analytical viewpoint. In our scenario, the finest grain of both fact tables is down to a minute, which can quickly become an issue. Remember, we have Power BI Pro licenses, so we are limited to a 1 GB file size only.

  - Does the business need to analyze all the metrics down to the minute, or is day-level enough?

  - Is the business required to analyze the whole history, or is bringing a portion of the data enough?

We now have a handful of questions to ask. One common question on the list is about the necessity of analyzing all the historical data. What if the business needs to analyze the whole history? In that case, we should consider using some advanced modeling techniques such as composite models and aggregations.

On the bright side, the fact that we already have five dimensions in the data warehouse might come in handy. We might reuse those dimensions with minimal changes in our data model. So, it is wise to look at the other data sources and find commonalities in the data patterns.

We may come up with some more legitimate points and questions later. The takeaway is that we have to talk to the business and ask questions before starting the job. It is a big mistake to start getting data from the source systems before framing the questions around the business processes, requirements, and technology limitations. There are also other points we need to think about from a project management perspective, which are beyond the scope of this book.

The following are initial points to take into account for building an efficient data model:

- We must ask questions to avoid confusion and potential future reworks.

- We need to understand the technical limitations and come up with solutions.

- We have to have a good understanding of data modeling to look for common data patterns to prevent overlaps.

At this point, you may think, “OK, but how can we get there?” This book aims to cover all the preceding points and more. The rest is about you and how you apply your learning to your daily Power BI challenges. The next section explains the star schema and snowflaking.

Star schema (dimensional modeling) and snowflaking

First things first, the star schema and dimensional modeling are the same thing. In Power BI data modeling, the term star schema is more commonly used. The following sections are generic reminders about some star schema concepts.

Transactional modeling versus star schema modeling

In transactional systems, the main goal is improving the solution’s performance in creating new records and updating/deleting existing ones. So, when designing transactional systems, it is essential to go through the normalization process to decrease data redundancy and increase data entry performance by breaking the tables down into master-detail tables.

But the goal of a business analysis system is very different. In a business analysis solution, we need a data model optimized for querying in the most performant way.

Let us continue with a scenario. Suppose we have a transactional retail system for an international retail shop. We have hundreds of transactions every second from different parts of the world. The company owners want to see the total sales in the past 6 months.

This calculation sounds easy. It is just a simple SUM of sales. But wait, we have hundreds of transactions every second, right? If we have 100 transactions per second, then we have 8,640,000 transactions a day. So, for 6 months of data, we have more than 1.5 billion rows. Therefore, even a simple SUM of sales takes a considerable amount of time to process.

Now, the business raises a new request. The company owners now want to see the total sales in the past 6 months by country and city. They simply want to know what the best-selling cities are.

We need to add another condition to our simple SUM calculation, which translates into a join to the geography table. For those coming from a relational database design background, it is obvious that joins are relatively expensive operations. This scenario can go on and on. So, you can imagine how quickly a simple scenario turns into a rather tricky situation.

In the star schema, however, we already joined all those tables based on business entities. We aggregated and loaded the data into denormalized tables. In the preceding scenario, the business is not interested in seeing every transaction at the second level. So, summarizing the data at the day level decreases the row count from 1.5 billion to approximately 18,000 rows for the 6 months. Now you can imagine how fast the summation would run over 18,000 instead of 1.5 billion rows.
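The row counts in this scenario can be checked with a few lines of arithmetic. The sketch below assumes 180 days for the 6 months and, purely for illustration, about 100 cities with one summary row per day per city. The city count is our assumption and is not stated in the scenario; it is simply one combination that reproduces a figure of approximately 18,000 rows.

```python
SECONDS_PER_DAY = 24 * 60 * 60

TRANSACTIONS_PER_SECOND = 100  # from the scenario
DAYS_IN_SIX_MONTHS = 180       # assumption: roughly 30 days per month
ASSUMED_CITIES = 100           # assumption, not stated in the scenario

rows_per_day = TRANSACTIONS_PER_SECOND * SECONDS_PER_DAY
transaction_rows = rows_per_day * DAYS_IN_SIX_MONTHS

# One summary row per day per city after aggregating to the day level.
daily_summary_rows = DAYS_IN_SIX_MONTHS * ASSUMED_CITIES

print(f"{rows_per_day:,}")        # 8,640,000
print(f"{transaction_rows:,}")    # 1,555,200,000
print(f"{daily_summary_rows:,}")  # 18,000
```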

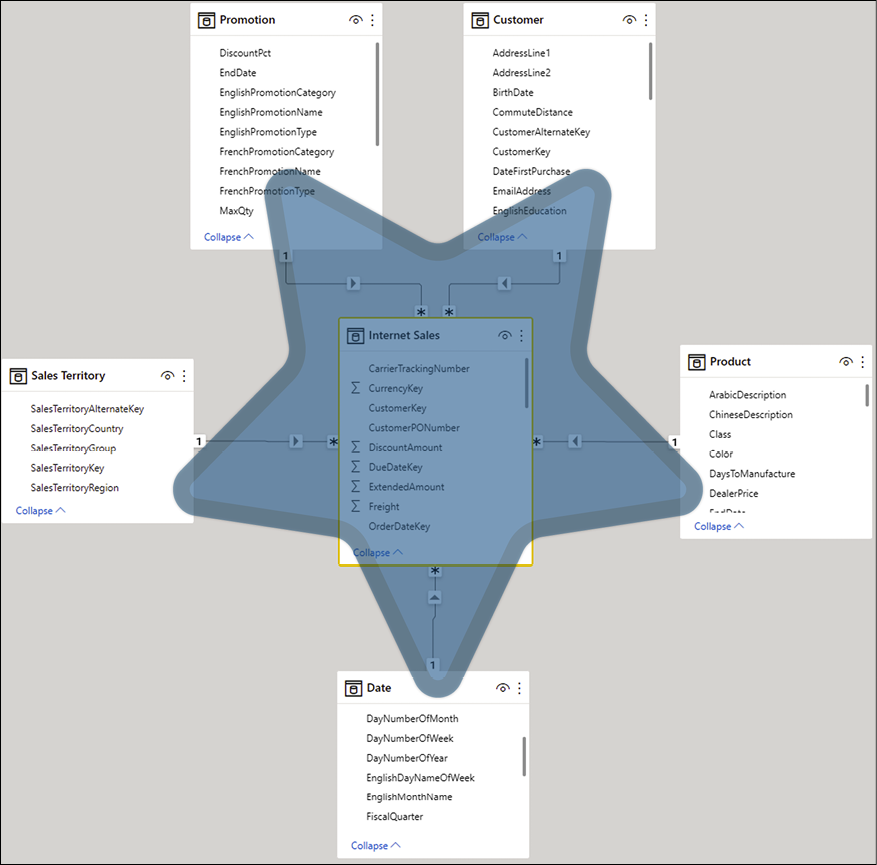

The idea of the star schema is to keep all numeric values in separate tables called fact tables and put all descriptive information into other tables called dimension tables. Usually, the fact tables are surrounded by dimensions explaining the facts. A data model with a fact table in the middle surrounded by dimensions looks like a star, which is why this modeling approach is called a star schema.

This book generally uses Adventure Works DW data, a renowned Microsoft sample dataset, unless stated otherwise. Adventure Works is an imaginary international bike shop selling products online and in their retail shops.

The following figure shows Internet Sales in a star schema shape:

Figure 1.9: Internet Sales star schema

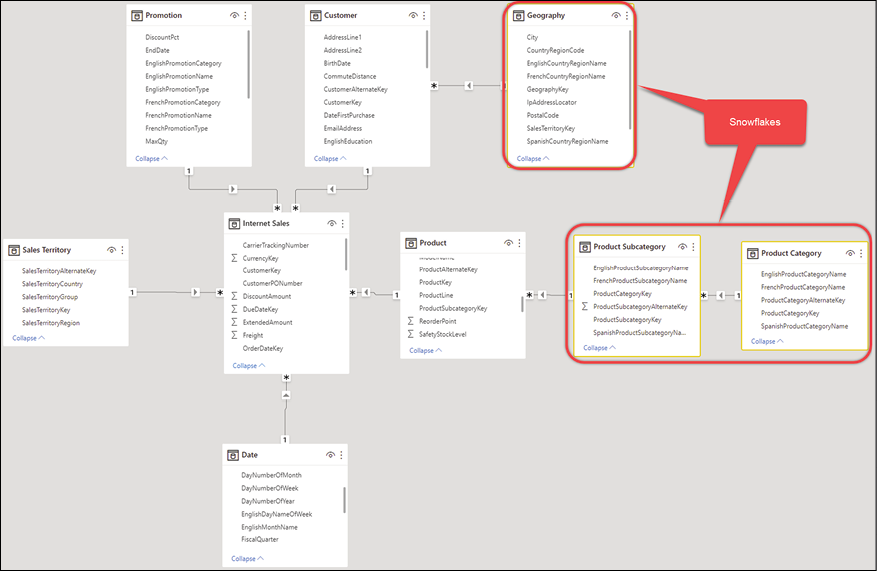

Snowflaking

Snowflaking occurs when we do not have a perfect star schema in which the dimension tables directly surround the fact tables. In some cases, we have some levels of descriptions stored in different tables. Therefore, some dimensions in the model are linked to other tables describing the dimensions in more detail. In other words, snowflaking is normalizing the dimension tables. In some cases, snowflaking is inevitable; nevertheless, the general rule of thumb in data modeling in Power BI (when following a star schema) is to avoid snowflaking as much as possible to have a simpler and more performant model. The following figure shows snowflaking in Adventure Works Internet Sales:

Figure 1.10: Adventure Works, Internet Sales snowflakes

We could avoid the Product Category and Product Subcategory snowflakes in the preceding model by denormalizing them into the Product table. The following section explains denormalization.

Understanding denormalization

In real-world scenarios, not everyone has the luxury of having a pre-built data warehouse designed in a star schema. In reality, snowflakes in data warehouse designs are inevitable. The data models are usually connected to various data sources, including transactional database systems and non-transactional data sources such as Excel files and CSV files. So, we almost always need to denormalize the model to a certain degree. Depending on the business requirements, we may have some normalization along with some denormalization. The reality is that there is no specific rule for the level of normalization and denormalization. The general rule of thumb is denormalizing the model, so each dimension describes all the related details as much as possible.

In the preceding example from Adventure Works DW, we have snowflakes of Product Category and Product Subcategory that can be denormalized into the Product dimension.

Let us go through a hands-on exercise.

Go through the following steps to denormalize the Product Category and Product Subcategory into the Product dimension.

You need to download the Adventure Works, Internet Sales.pbix file from here:

You also need to download the Excel file used as the data source in the PBIX file from here:

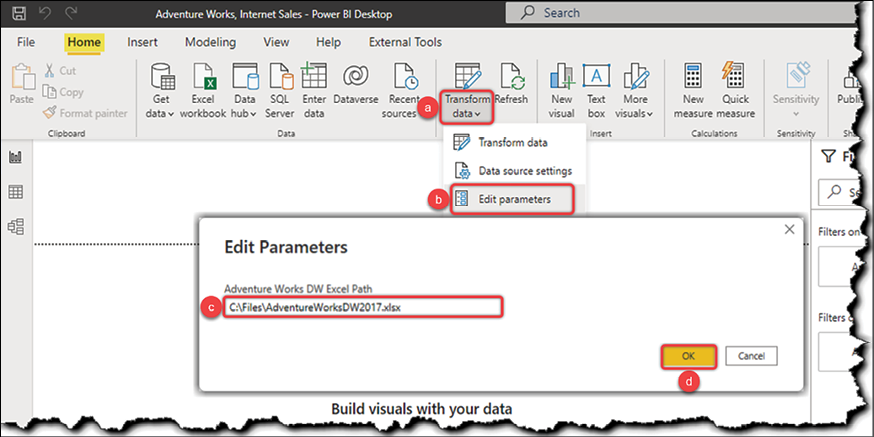

Before we start, let us set up the sample file first. Open the Adventure Works, Internet Sales.pbix file, then change the Adventure Works DW Excel Path parameter as follows:

- Click the Transform data drop-down button.

- Click Edit parameters.

- Enter the path for the save location of the AdventureWorksDW2017.xlsx file.

- Click OK.

The following image illustrates the preceding steps:

Figure 1.11: Setting up the Adventure Works, Internet Sales.pbix sample file

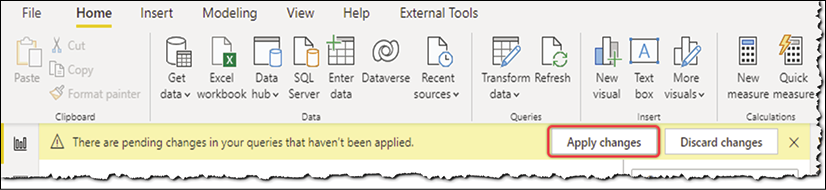

After changing the value of the parameter, we have to click Apply changes.

Figure 1.12: Applying changes after changing the parameter value

The preceding process reloads the data from the Excel file.

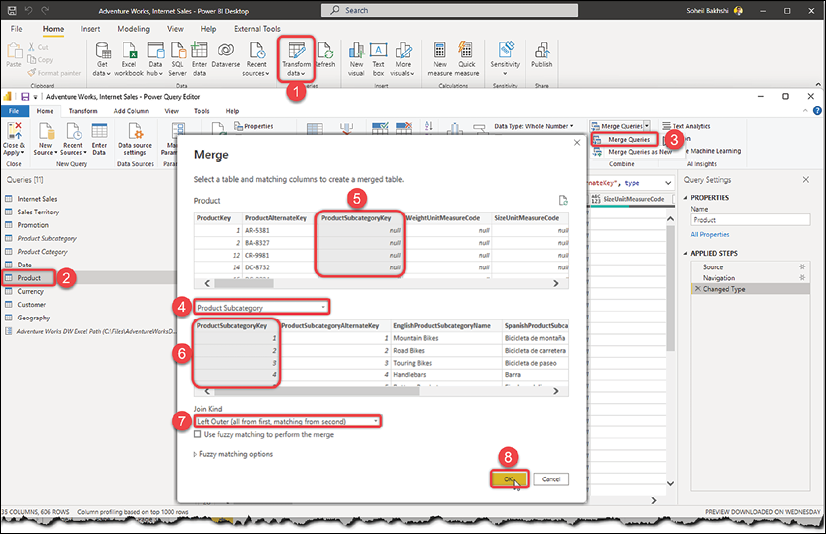

Now that we have correctly set up the sample file, follow these steps:

- Click Transform data in the Queries section of the Home tab.

- Click the Product query.

- Click Merge Queries in the Combine section of the Home tab.

- Select Product Subcategory from the drop-down list.

- Click ProductSubcategoryKey in the Product table.

- Click ProductSubcategoryKey in the Product Subcategory table.

- Select Left Outer (all from first, matching from second) from the Join Kind drop-down.

- Click OK.

Figure 1.13: Merging Product and Product Subcategory

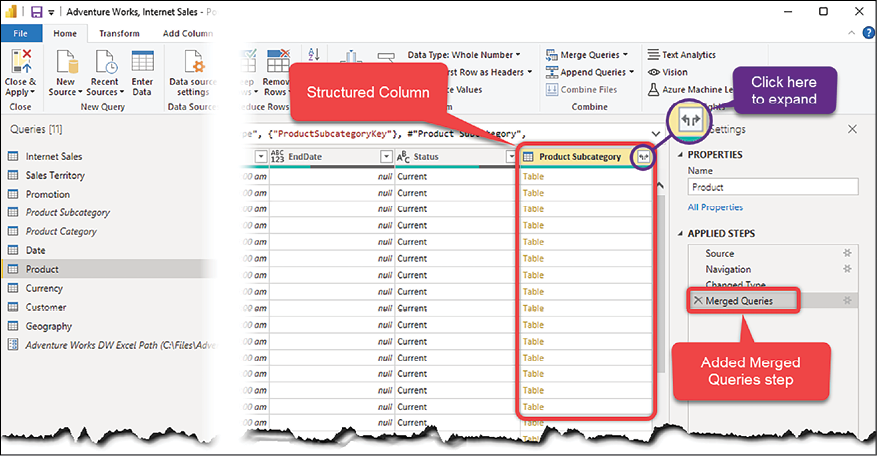

This adds a new step named Merged Queries. The merging step creates a new column named Product Subcategory. As you can see, the values of this column are all Table. This type of column is called a Structured Column:

You will learn more about structured columns in Chapter 3, Data Preparation in Power Query Editor.

Figure 1.14: Merging the Product and Product Subcategory tables

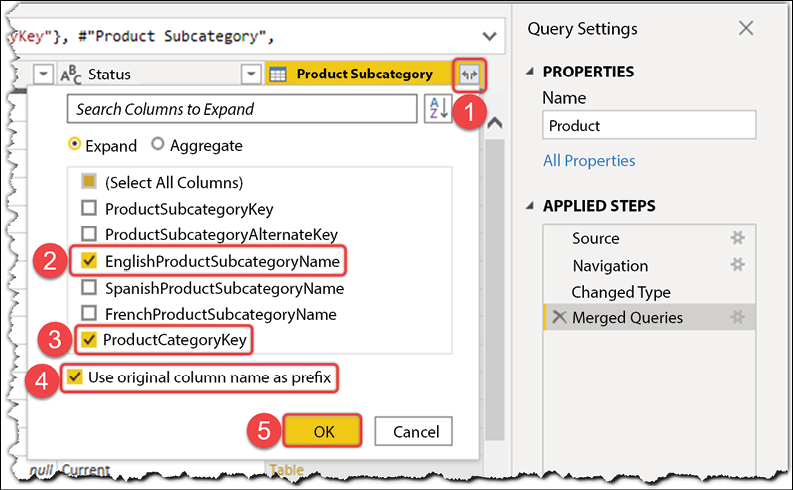

Now let us look at how to expand a structured column in the Query Editor:

- Click the Expand button to expand the Product Subcategory column.

- Select ProductCategoryKey.

- Select the EnglishProductSubcategoryName column and unselect the rest.

- Unselect Use original column name as prefix.

- Click OK.

Figure 1.15: Expanding Structured Column in the Query Editor

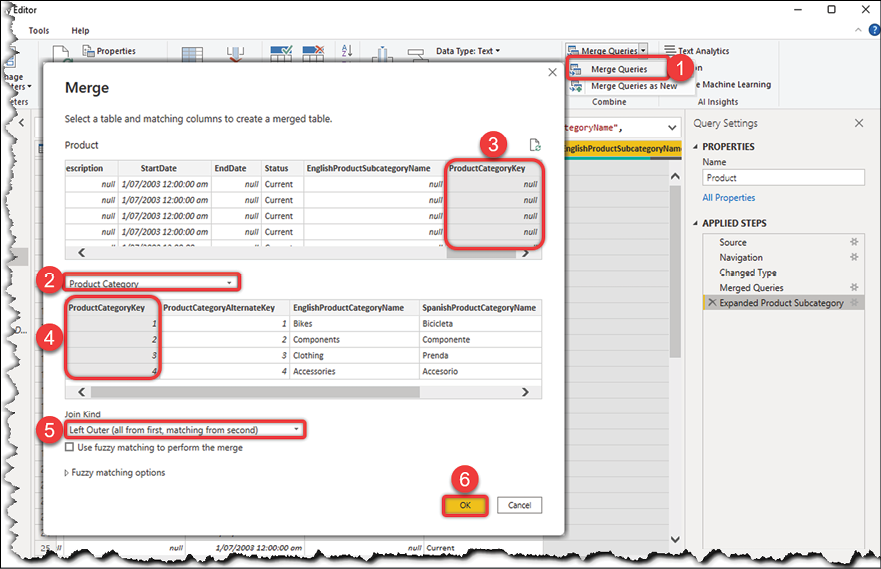

So far, we have added the EnglishProductSubcategoryName and ProductCategoryKey columns from the Product Subcategory query to the Product query. The next step is to add EnglishProductCategoryName from the Product Category query. To do so, we need to merge the Product query with Product Category:

- Click Merge Queries again.

- Select Product Category from the drop-down list.

- Select ProductCategoryKey from the Product table.

- Select ProductCategoryKey from the Product Category table.

- Select Left Outer (all from first, matching from second).

- Click OK:

Figure 1.16: Merging Product and Product Category

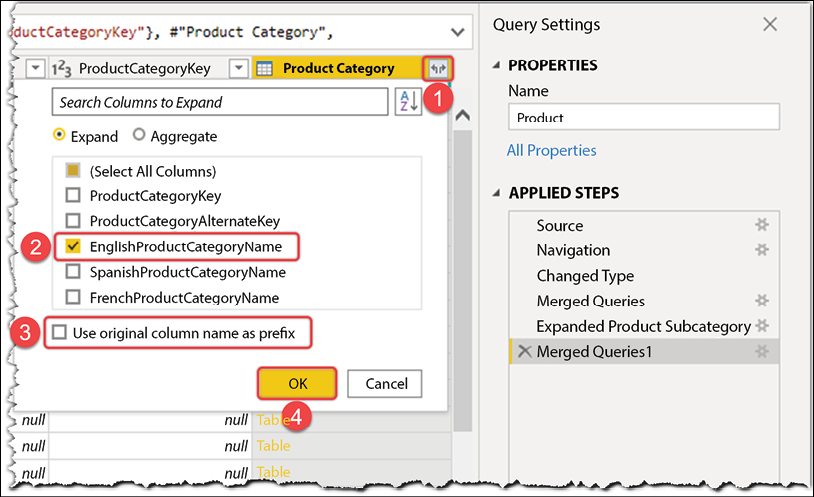

This adds a new structured column named Product Category. We now need to do the following:

- Expand the new column.

- Pick EnglishProductCategoryName from the list.

- Unselect Use original column name as prefix.

- Click OK:

Figure 1.17: Merging Product and Product Category

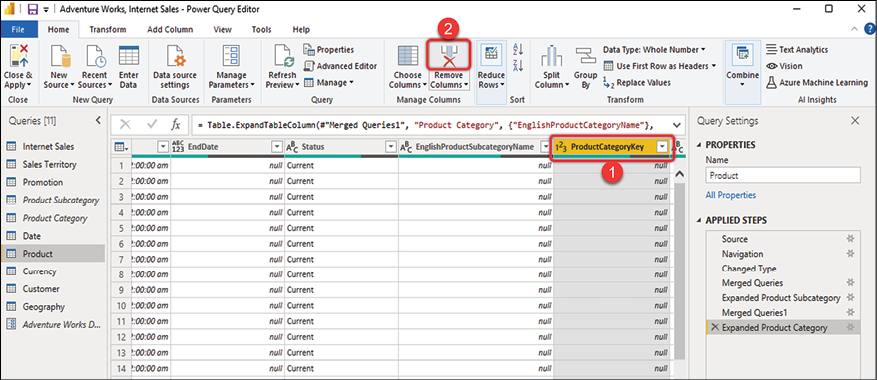

So far, we have moved EnglishProductCategoryName into the Product table, so the ProductCategoryKey column is no longer needed. The next step is to remove it. To do so, follow these steps:

- Click on the ProductCategoryKey column.

- Click the Remove Columns button in the Manage Columns section of the Home tab:

Figure 1.18: Removing a column in the Query Editor

We have merged the Product Category and Product Subcategory snowflakes with the Product query. So, we successfully denormalized the snowflakes.

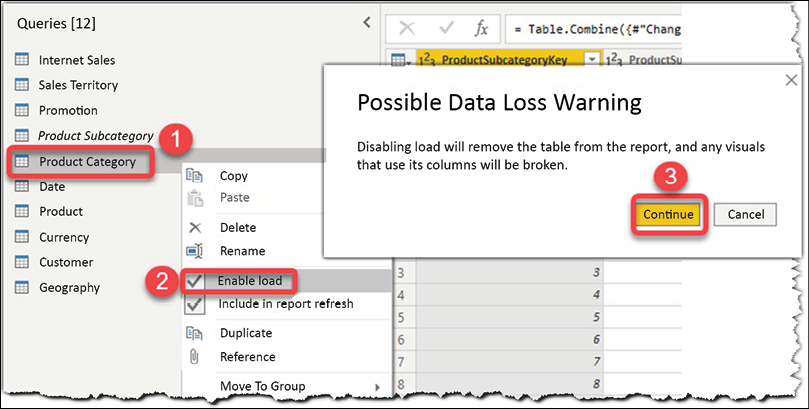

The very last step is to unload both the Product Category and Product Subcategory queries:

- Right-click on each query.

- Untick Enable load from the menu.

- Click Continue on the Possible Data Loss Warning pop-up message:

Figure 1.19: Unloading queries in the Query Editor

Now we need to import the data into the data model by clicking Close & Apply:

Figure 1.20: Importing data into the data model

We have now achieved what we were after: we denormalized the Product Category and Product Subcategory tables, so instead of loading those two tables, we now have EnglishProductCategoryName and EnglishProductSubcategoryName as new columns in the Product table. So the data model is simpler now, and we load less data, leading to better performance.

Job done!
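For readers who find it easier to follow the logic in code, the exercise can be approximated in plain Python. This is a minimal sketch of the same idea (two left outer merges followed by removing a helper key), not the Power Query steps themselves. The sample rows and the helper `left_outer_merge` are hypothetical, and the helper assumes each key appears only once in the right-hand table.

```python
# Hypothetical sample rows; the column names follow the exercise.
product = [
    {"ProductKey": 1, "EnglishProductName": "Road Bike", "ProductSubcategoryKey": 2},
    {"ProductKey": 2, "EnglishProductName": "Helmet", "ProductSubcategoryKey": 31},
    {"ProductKey": 3, "EnglishProductName": "Unclassified Item", "ProductSubcategoryKey": None},
]
product_subcategory = [
    {"ProductSubcategoryKey": 2, "EnglishProductSubcategoryName": "Road Bikes", "ProductCategoryKey": 1},
    {"ProductSubcategoryKey": 31, "EnglishProductSubcategoryName": "Helmets", "ProductCategoryKey": 4},
]
product_category = [
    {"ProductCategoryKey": 1, "EnglishProductCategoryName": "Bikes"},
    {"ProductCategoryKey": 4, "EnglishProductCategoryName": "Accessories"},
]


def left_outer_merge(left_rows, right_rows, key, columns):
    """Keep every left row and copy `columns` from the matching right row, if any."""
    lookup = {row[key]: row for row in right_rows}
    merged = []
    for row in left_rows:
        match = lookup.get(row.get(key))
        extra = {col: (match[col] if match else None) for col in columns}
        merged.append({**row, **extra})
    return merged


# Merge Product with Product Subcategory, keeping two of its columns.
with_subcategory = left_outer_merge(
    product, product_subcategory, "ProductSubcategoryKey",
    ["EnglishProductSubcategoryName", "ProductCategoryKey"],
)

# Merge the result with Product Category, keeping the category name.
with_category = left_outer_merge(
    with_subcategory, product_category, "ProductCategoryKey",
    ["EnglishProductCategoryName"],
)

# Remove the key that was only needed for the second merge.
denormalized_product = [
    {name: value for name, value in row.items() if name != "ProductCategoryKey"}
    for row in with_category
]

for row in denormalized_product:
    print(row)
```

Because both merges are left outer, the product without a subcategory is kept, with empty subcategory and category names.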

Power BI licensing considerations

At this point, you may be wondering how Power BI licensing affects data modeling. It does, as each licensing tier comes with a set of features that can potentially affect the data modeling. Nevertheless, regardless of the licensing tier, Power BI Desktop is free. This section explains some licensing considerations related to data modeling.

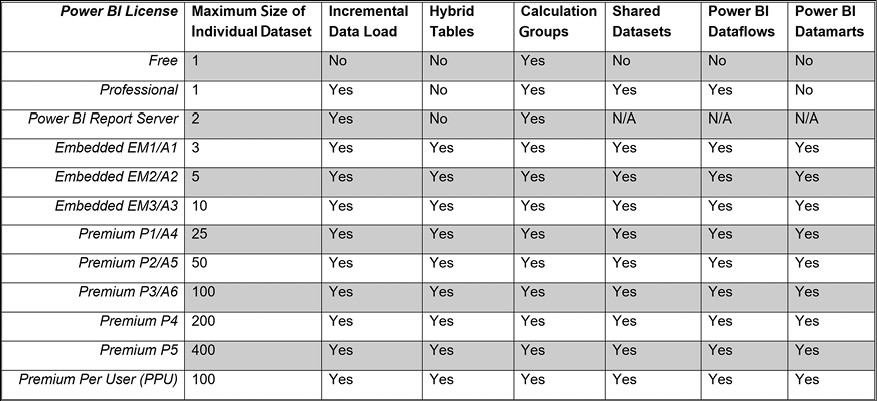

The following table is a simplified version of the Power BI feature comparisons published on the Microsoft website separately based on different licenses:

Figure 1.21: A simplified version of Power BI feature comparisons

The following few sections briefly explain each feature.

Maximum size of an individual dataset

As the preceding table shows, we are limited to 1 GB for each dataset published to the Power BI Service under Free or Professional licensing. Therefore, managing the file size is quite important. There are several ways to keep the file size below the limit, as follows:

- Import the necessary columns only.

- Import just a portion of data when possible. Explain the technology limitation to the business and ask whether you can filter out some data. For instance, the business may not need to analyze 10 years of data, so filter older data in Power Query.

- Use aggregations. In many cases, the data is stored in the source at a very fine grain, while the business requires analysis at a coarser grain. Therefore, you can aggregate the data to the coarser grain and then import it into the data model. For instance, you may have data stored at the minute level while the business only needs to analyze that data at the day level.

- Consider disabling the auto date/time settings in Power BI Desktop.

- Consider optimizing the data types.

We cover all the preceding points in the upcoming chapters.
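To make the aggregation point concrete, here is a minimal Python sketch of rolling minute-level rows up to the day level before loading them. In Power BI, this work would normally happen in the source system or in Power Query; the sample rows and the function name `aggregate_to_day` are hypothetical.

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical minute-level rows: (timestamp, sales amount).
minute_level_sales = [
    (datetime(2022, 1, 1, 9, 0), 120.0),
    (datetime(2022, 1, 1, 9, 1), 80.0),
    (datetime(2022, 1, 1, 17, 30), 50.0),
    (datetime(2022, 1, 2, 10, 15), 200.0),
]


def aggregate_to_day(rows):
    """Sum the amounts per calendar day, dropping the hour and minute detail."""
    totals = defaultdict(float)
    for timestamp, amount in rows:
        totals[timestamp.date()] += amount
    return dict(sorted(totals.items()))


daily_sales = aggregate_to_day(minute_level_sales)

# Four minute-level rows collapse into two day-level rows.
for day, total in daily_sales.items():
    print(day, total)
```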

Incremental data load

One of the most remarkable features available in Power BI is the ability to set up incremental data loads. Incremental data loading in Power BI is inherited from SSAS to work with large models. Power BI does not truncate the dataset and re-import all the data from scratch when the incremental data load is set up correctly. Instead, it only imports the data that has been changed since the last data refresh. Therefore, incremental data load can significantly improve the data refresh performance and decrease the processing load on the Power BI tenant. Incremental data load is available in both Professional and Premium licenses.
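The difference between a full reload and an incremental load can be illustrated with a small Python sketch. It is a conceptual simplification, not how the Power BI Service implements incremental refresh. The sample rows, the one-row-per-order-date layout, and the function name `incremental_refresh` are all hypothetical.

```python
from datetime import date

# Hypothetical source rows: (order date, last modified date, sales amount).
source_rows = [
    (date(2022, 1, 1), date(2022, 1, 1), 100.0),
    (date(2022, 1, 2), date(2022, 1, 5), 150.0),  # corrected after the last refresh
    (date(2022, 1, 5), date(2022, 1, 5), 70.0),   # new since the last refresh
]


def incremental_refresh(model_rows, source, last_refresh):
    """Re-import only the rows modified after `last_refresh`; keep the rest as is."""
    changed = {
        order_date: amount
        for order_date, modified, amount in source
        if modified > last_refresh
    }
    refreshed = dict(model_rows)
    refreshed.update(changed)
    return refreshed, len(changed)


# State of the model after the previous refresh on 3 January 2022.
model_rows = {date(2022, 1, 1): 100.0, date(2022, 1, 2): 140.0}

model_rows, imported = incremental_refresh(model_rows, source_rows, date(2022, 1, 3))

print(imported)                      # 2 rows imported instead of all 3
print(model_rows[date(2022, 1, 2)])  # 150.0, the corrected value
```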

Hybrid tables

Microsoft announced a new feature in December 2021 called Hybrid tables, which takes the incremental data refresh capability to the next level. A hybrid table is a regular table with incremental data refresh where the table has one or more partitions in Import mode and another (the last partition) in DirectQuery mode. Therefore, we get all the performance benefits of the two worlds; the historical data is imported into the dataset and is available in memory, while we also have the real-time data in place as the last partition is in DirectQuery mode to the source system. The hybrid table capability is only available in Premium licenses.

Calculation groups

Calculation groups are similar to calculated members in Multi-Dimensional eXpressions (MDX) in SQL Server Analysis Services Multi-Dimensional (SSAS MD). Calculation groups were initially introduced in SSAS Tabular 2019. They are also available in Azure Analysis Services (AAS) and all Power BI licensing tiers.

It is a common scenario that we create (or already have) some base measures in the Power BI model. We then create multiple time intelligence measures on top of those base measures. Suppose we have three measures, as follows:

Product cost = SUM('Internet Sales'[TotalProductCost])

Order quantity = SUM('Internet Sales'[OrderQuantity])

Internet sales = SUM('Internet Sales'[SalesAmount])

Imagine a scenario when the business requires the following time intelligence calculations on top of the preceding measures:

- Year to date

- Quarter to date

- Month to date

- Last year to date

- Last quarter to date

- Last month to date

- Year over year

- Quarter over quarter

- Month over month

We have nine calculations to be built on top of the three measures in our model. Hence, we have 9 x 3 = 27 measures to build in our model. You can imagine how quickly the number of measures can increase in the model, so do not get surprised if someone says they have hundreds of measures in their Power BI model.
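The growth in the number of measures is easy to see by enumerating the combinations. The following Python sketch pairs each base measure with each time intelligence calculation; the naming pattern for the resulting measures is hypothetical.

```python
from itertools import product

base_measures = ["Product cost", "Order quantity", "Internet sales"]
time_calculations = [
    "Year to date", "Quarter to date", "Month to date",
    "Last year to date", "Last quarter to date", "Last month to date",
    "Year over year", "Quarter over quarter", "Month over month",
]

# One measure per (base measure, time calculation) pair.
measure_names = [
    f"{measure} {calculation}"
    for measure, calculation in product(base_measures, time_calculations)
]

print(len(measure_names))  # 27
print(measure_names[0])    # Product cost Year to date
```

Adding a fourth base measure would add nine more measures, which is why the count grows so quickly.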

Another common scenario is when we have multiple currencies. Without calculation groups, we need to use the FORMAT() function in DAX to show the numbers in each currency's format, which converts the values into strings. Now, if you think about the latter point, combined with time intelligence functions, you see how serious the issue can get very quickly.
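A minimal sketch of that FORMAT() approach could look like the following. It assumes a hypothetical 'Currency' table with a [FormatString] column holding one format string per currency (for example, "$#,0.00"); neither exists in the model described so far.

```dax
-- Assumption: 'Currency'[FormatString] is a hypothetical column with one format string per currency
Internet sales formatted =
VAR CurrencyFormat =
    SELECTEDVALUE('Currency'[FormatString], "#,0.00") -- fallback when no single currency is selected
RETURN
    FORMAT([Internet sales], CurrencyFormat)
```

Because FORMAT() returns text, the result can no longer be treated as a number by visuals, and we would need one such measure per base measure and per time intelligence variation.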

Calculation groups solve those sorts of problems. We cover calculation groups in Chapter 10, Advanced Data Modeling Techniques.
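As a brief preview, the following sketch shows what three calculation items might look like in a hypothetical calculation group. The item names and the 'Date'[Date] column are assumptions for illustration. Each expression is entered as a separate calculation item in a tool that supports calculation groups, such as Tabular Editor.

```dax
-- Calculation item "YTD"
CALCULATE(SELECTEDMEASURE(), DATESYTD('Date'[Date]))

-- Calculation item "MTD"
CALCULATE(SELECTEDMEASURE(), DATESMTD('Date'[Date]))

-- Calculation item "YoY"
SELECTEDMEASURE() - CALCULATE(SELECTEDMEASURE(), SAMEPERIODLASTYEAR('Date'[Date]))
```

SELECTEDMEASURE() stands in for whichever measure is in context, so a single set of nine calculation items can serve all three base measures instead of 27 separate measures.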

Shared datasets

As the name implies, a shared dataset is used across various reports in a workspace within the Power BI Service. Therefore, it is only available in the Power BI Professional and Power BI Premium licenses. This feature is crucial for data modelers. It gives us the flexibility to create one generic dataset that covers the business requirements, instead of several datasets that share many commonalities.

Power BI Dataflows

Dataflows, also called Power Query Online, provide a centralized data preparation mechanism in the Power BI Service that other people across the organization can take advantage of. Like using Power Query in Power BI Desktop for data preparation, we can prepare, cleanse, and transform the data in dataflows. Unlike Power Query queries in Power BI Desktop, which are isolated within a dataset after being published to the Power BI Service, dataflows let us share all data preparation, cleansing, and transformation processes across the organization.

We can create Power BI dataflows inside a workspace, which is available to Professional and Premium users. We cover Power BI dataflows in Chapter 13, Introduction to Dataflows.

Power BI Datamarts

The Datamart capability is a new feature announced in May 2022. The primary purpose of datamarts is to enable organizations to build self-service, no-code/low-code analytical solutions that connect to multiple data sources, create ETL (Extract, Transform, and Load) pipelines with Power Query, and then load the data into an Azure SQL Database. The datamart capability is currently available for Premium users only. We cover the datamarts capability in Chapter 15, New Options, Features, and DAX Functions.

We have now discussed the Power BI licensing considerations for data modeling; the following section describes the iterative approach to data modeling.

The iterative data modeling approach

Data modeling is usually an ongoing process. We talk to the business to understand their requirements and then apply the business logic to the model. In many cases, we build the data visualizations and then find that we get better results if we make changes to our model. In many other cases, the business logic applied to the model is not what the business needs. This is a typical comment that many of us get from the business after the first few iterations:

This looks really nice, but unfortunately, it is not what we want.

So, taking advantage of an agile approach would be genuinely beneficial for Power BI development. Here is the iterative approach to follow in Power BI development:

Figure 1.22: The iterative data modeling approach

The following few sections describe the pillars of the preceding approach.

Conducting discovery workshops

The Power BI development process starts with gathering information from the business by conducting discovery workshops to understand the requirements better. A business analyst may take care of this step in the real world, and many Power BI users are indeed business analysts. Whether we are business analysts or not, we are data modelers, so we need to analyze the information we receive from the business. We have to ask relevant questions that lead us toward various design possibilities. We have to identify potential risks, limitations, and associated costs and discuss them with the customer. After we get the answers, we can confidently take the next steps to design a data model that best covers the business requirements, mitigates the risks, and aligns with the technology limitations well.

Data preparation based on the business logic

We have a lot on our plate by now. We must get the data from multiple sources and go through the data preparation steps. Now that we have learned a lot about business logic, we can take the proper data preparation steps. For instance, if the business needs to connect to an OData data source and get a list of the columns required, we can prepare the data more efficiently with all the design risks and technology limitations in mind. After consciously preparing the data, we go to the next step, data modeling.

Data modeling

If we properly go through the previous steps, we can build the model more efficiently. We need to think about the analytical side of things while considering all the business requirements, design possibilities, risks, and technology limitations. For instance, if the business cannot tolerate data latency longer than 5 minutes, we may need to consider using DirectQuery. Using DirectQuery comes with some limitations and performance risks. So, we must consider the design approach that satisfies the business requirements the most. We cover DirectQuery in Chapter 4, Getting Data from Various Sources, in the Dataset storage modes section.

Testing the logic

One of the most trivial and yet most important steps in data modeling is testing all the business logic we implement to meet the requirements. Not only do we need to test the figures to ensure the results are accurate, but we also need to test the solution from a performance and user experience perspective. Be prepared for tons of mixed feedback and sometimes strong criticism from the end users, especially when we think everything is OK.

Demonstrating the business logic in basic data visualizations

As we are modeling the data, we do not need to worry about data visualization. Confirming with the business SMEs (Subject Matter Experts) is the most reliable way to ensure all the reporting logic is correct, and the fastest way to get that confirmation is to demonstrate the logic in the simplest possible way, such as using table and matrix visuals and some slicers on the page. When demonstrating the solution to SMEs, do not forget to highlight that the visualization is only to confirm the reporting logic with them and is not the actual product delivery. In the real world, we have many new discussions and surprises during the demonstration sessions, which usually means we are at the beginning of the second iteration, so we start gathering information about the required changes and the new requirements, if any.

We gradually become professional data modelers as we go through the preceding steps several times. This book also follows an iterative approach, so we go back and forth between different chapters to cover some scenarios.

In the next section, we quickly cover how professional data modelers think.

Thinking like a professional data modeler

None of us becomes a professional in something overnight. We make many mistakes, and we learn from them. Professionalism comes with experience. To get the experience, we need to practice and practice a lot. Let me share a short back story about myself. Back in the day, in the late 90s, I was working on transactional database systems, so it was essential to know how to normalize the data model to the third normal form.

In some cases, we normalized the model to the Boyce-Codd normal form. I carried out many projects, faced challenges, and made many mistakes, but I learned from those mistakes. Gradually, I could visualize the data model to the second or, sometimes, even the third normal form in my mind while I was in the discovery sessions with the customer.

Regardless of their usage, all data modeling approaches that I had a chance to work with or read about, such as transactional models, star schema, Inmon, and data vaults, are based on relational data modeling. Data modeling in Power BI is no different. Some professional data modelers can visualize the data model in their minds from their first discovery sessions with the customer. But as mentioned, this capability comes with experience.

Once we have enough experience in data modeling, we ask the SMEs more relevant questions. We already know about successful practices, the challenges, and the pitfalls, so we can quickly recognize similar situations. Therefore, we can avoid many future changes by asking more relevant questions early. Moreover, we can also give the customer some new ideas to solve other problems they may face down the road. Business requirements usually change during the project's lifetime, so we are not surprised when those changes happen.

Summary

This chapter discussed the different layers of Power BI and what is accessible in which layer. Therefore, when we face an issue, we know exactly where we should look to fix the problem. Then, we discussed making a semantic layer when building a data model in Power BI. We also discussed some star schema and snowflaking concepts essential to an efficient data model. We then covered different Power BI licensing considerations and how they can affect our data modeling. Lastly, we looked at the iterative data modeling approach to deliver a precise and reliable data model that solves many problems that the report writers may face down the road.

The next chapter looks at DAX and data modeling. We discuss a somewhat confusing topic, virtual tables. We also look into time intelligence scenarios that help with many data modeling tasks.
