Chapter 1: Domo Ecosystem Overview

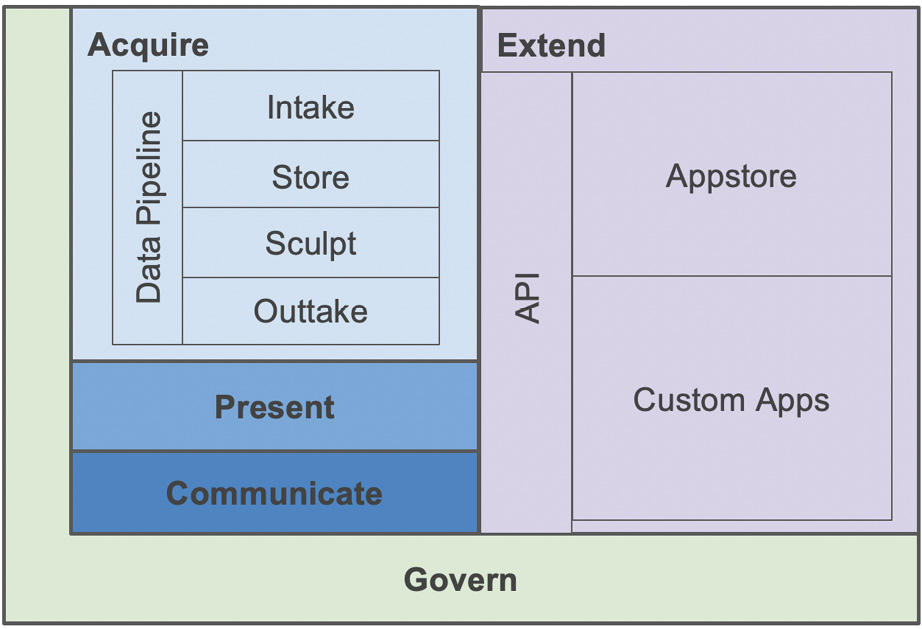

Before Domo, answering business questions was often a laborious, cross-disciplinary process that required a diverse mix of technical and business resources. Far too often, it was also too slow and too expensive to meet its objective of informing decisions. Enter the Domo ecosystem, a Platform-as-a-Service (PaaS) solution consisting of five core integrated parts: Acquire, Present, Communicate, Extend, and Govern. This integrated architecture democratizes the process of answering business questions: everything a user needs to answer relevant business questions with data is available to them without having to wait for specialists.

You'll explore the Domo ecosystem by reading through the following topics:

- Introducing the Domo ecosystem

- Acquiring a data pipeline

- Presenting the story dashboard

- Communicating with Domo Buzz

- Extending with apps and APIs

- Governing, security, and operations

Introducing the Domo ecosystem

The Domo ecosystem was designed from its inception to be disruptive to how data is acquired, structured, stored, transformed, shared, used, extended, and governed. The overarching objective in its creation was to empower everyone to answer business questions at the speed of business. That means no more waiting in the proverbial BI breadline for IT or technical resources. The foundational belief that many questions have a shelf life, and that their usefulness is perishable, translated into access, speed, and agility in the product. A large part of the product investment was made in data acquisition and pipeline capabilities to ensure that the most difficult and time-consuming activities in answering business questions, namely data intake, storage, and sculpting, are streamlined for the non-technical user while preserving appropriate security.

Therefore, to answer questions quickly, it follows that a typical user needs to be able to do the following:

- Intake data quickly, whatever the data volume, variety, and velocity.

- Extract (outtake) data from Domo to other applications.

- Have the data automatically stored and indexed for fast queries at scale.

- Sculpt the data and automate data pipelines.

- Present relevant stories that drive favorable outcomes.

- Securely share information and communicate with context.

- Enable extensions with a crowd-sourced app store and custom apps.

This unique combination of pre-integrated and universally governed layers in the ecosystem enables non-technical users to quickly, accurately, securely, and relevantly deliver end-to-end solutions.

The following diagram visualizes the Domo ecosystem:

Figure 1.1 – The Domo ecosystem

Let's look at each of the layers shown in Figure 1.1 in turn, starting with Acquire.

Acquiring a data pipeline

The Acquire layer contains the data pipeline processes for collecting data from one or more source systems, storing the data, sculpting the data, and providing data back to other applications.

Intake tools – importing data from various data sources

This section will discuss the main options in the Domo ecosystem for data intake:

- A connector pulls data into the Domo cloud from files, cloud apps, and databases. It's a Domo application that, through a wizard-like interface, allows a user to select source systems to get data from. The user then simply enters a few configuration inputs and provides credentials in order to copy and store the data in the Domo cloud data warehouse as a dataset.

There is a rapidly growing universe of cloud-based applications. As of the time of writing, the Domo ecosystem supports over 670 cloud app connectors. Domo's connector team constantly keeps the connectors updated to the vendors' current API versions. The team also created an API so that, if you can't find the connector you need, you can create your own custom connector.

- Workbench pushes on-premises data from behind firewalls into the Domo cloud. It is a Windows-based application that you download and install on a Windows machine. It is sometimes called the universal data connector because it can handle any flat file or database table upload. It typically sits behind a firewall and pushes the data up to the Domo cloud. It mainly uses ODBC queries to connect to databases and essentially bulk loads the query results into the Domo cloud. Organizations that do not want to whitelist Domo's cloud connectors find this a great solution because it avoids the need for Domo connectors to reach into their systems from the cloud.

Workbench has robust job scheduling and monitoring capabilities and can even listen to a directory and automatically upload new or changing Excel files.

- Federated queries enable access to source data wherever the data currently resides, for example, in a data lake. This is Domo's data-at-rest acquisition method for organizations with large existing data lakes, and it addresses the need to use content where it lies rather than having to copy it. With federated queries, the data is not permanently persisted in the Domo cloud. Instead, the query results are cached in memory with a time-to-live setting. The time-to-live setting determines how long the query results persist in memory before they time out and are released, after which a new request causes a re-query. Federated queries effectively make Domo Analyzer a powerful data lake discovery tool and unlock data lake information without any data transport requirement.

- Internet of Things (IoT): Machine-to-machine streaming communication data capture is the newest data acquisition type supported in the Domo ecosystem. This area addresses capturing the information flowing from internet devices and machine sensor data. Existing connectors include AWS IoT Core, AWS IoT Device Management, AWS IoT Device Defender, AWS IoT Analytics, AWS Kinesis, OPC, Matomo, Apache Kafka, MQTT, Particle.io, Beonic Traffic, and more. Domo has partnered with AWS and Verizon ThingSpace to bring innovative solutions to the market. Another example is how SharkNinja is using Domo IoT to improve the customer experience using sensor data: https://bit.ly/32I44w7.

- Webforms are your go-to tool when you just need a simple brute-force way to get data into the Domo cloud. You can enter or cut and paste tabular data into a webform. Think of it as a cloud spreadsheet lite. This is used for lookup table creation, test data, and other miscellaneous needs to create a dataset on the fly.
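The time-to-live caching described for federated queries in the preceding list can be illustrated with a minimal sketch. This is not Domo's implementation: the class name `TTLQueryCache`, the `run_query` callable (which stands in for a query against the remote data lake), and the injectable `clock` are all hypothetical names chosen for illustration.

```python
import time
from typing import Any, Callable, Dict, Tuple


class TTLQueryCache:
    """Hypothetical in-memory cache whose entries expire after ttl_seconds."""

    def __init__(
        self,
        run_query: Callable[[str], Any],
        ttl_seconds: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._run_query = run_query  # stands in for the remote data lake query
        self._ttl = ttl_seconds
        self._clock = clock          # injectable so expiry can be simulated
        self._entries: Dict[str, Tuple[float, Any]] = {}

    def get(self, sql: str) -> Any:
        now = self._clock()
        entry = self._entries.get(sql)
        if entry is not None and now - entry[0] < self._ttl:
            return entry[1]          # still fresh: serve the cached result
        result = self._run_query(sql)  # missing or expired: re-query the source
        self._entries[sql] = (now, result)
        return result
```

The design point to notice is that nothing is copied permanently: once an entry is older than the time-to-live, the next request goes back to the source.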

Store – automatic schema management and performance optimization

Figuring out where and how to store data can be a time-consuming and costly exercise. After you have decided on a structure and location to store the data, then the ongoing process of tuning the data structure so that it is performant requires specialized technical skills. All this means that the storage problem can become a significant obstacle. With Domo, that obstacle is removed via automatic data structure design, storage, and performance tuning, all enabled in a business user-empowering way.

Data architecture (Vault, Adrenaline, Tundra, Federated)

The Domo data architecture consists of four major parts:

- Vault is the persistent storage component that is just a bunch of disk storage. Everything is flattened and stored in tabular arrays called datasets. Vault is a collection of datasets whose data structures are created and cataloged automatically as the data is ingested. The acquired data is physically stored in leading cloud infrastructure providers such as Amazon S3 or Azure.

- Adrenaline is an in-memory data store that indexes Vault datasets for high query performance. The Domo visuals, Beast Modes, and filters run a query language called DQL (short for Domo Query Language) against Adrenaline to retrieve the data for the visual.

- Tundra is a specialized in-memory query optimizer companion to Adrenaline and handles queries that specifically fit its optimization algorithm.

- Federated storage is virtually mapped into the Domo ecosystem but is physically stored outside of Domo. If you already have a data lake, then federated queries, which work on federated storage, are a good option.

All the preceding storage components work seamlessly together. Behind the scenes, the Domo operations team works to monitor and tune the performance for you.

Cloud data lakes

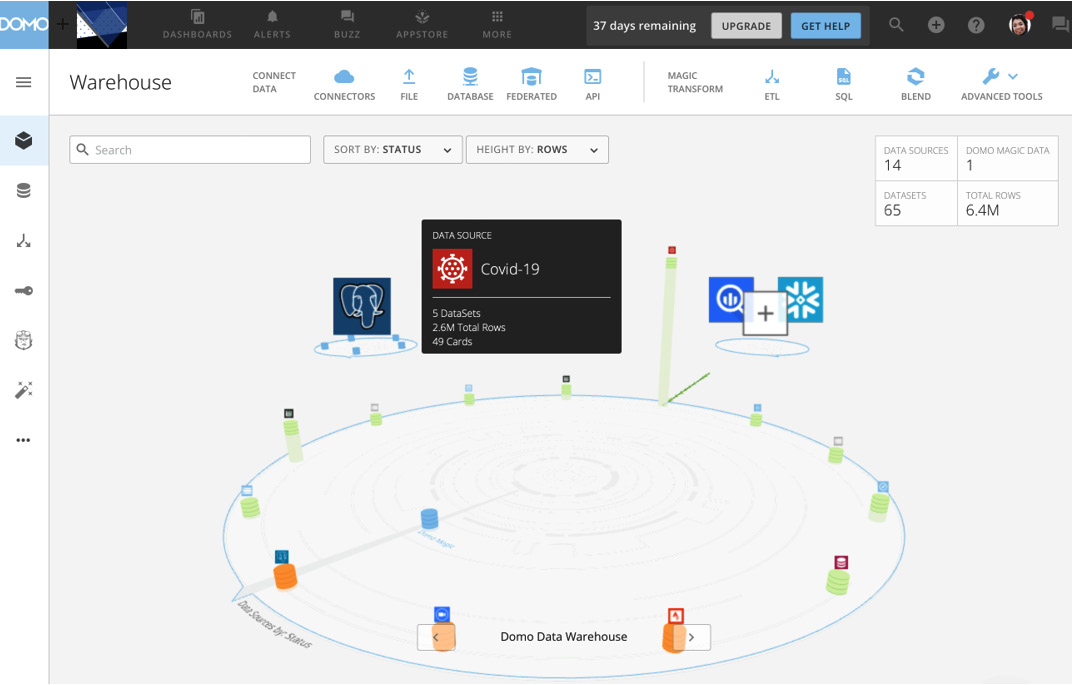

Importantly, UI components built on top of the storage layer showcase the cloud data lake user artifacts. The basic data lake user artifacts are data sources, dataflows, and datasets. Users can search, manage, and enhance these artifacts. Conveniently, the data lake catalog and data lineage information are auto-generated, and users can enhance them with tags for the data dictionary. The UI even shows in real time how the data is flowing into the data lake. All the external data sources are represented in the outermost ring of the platter. The data source platter's inner ring shows the data sources created from data sculpting, as shown in Figure 1.2:

Figure 1.2 – Data source platter

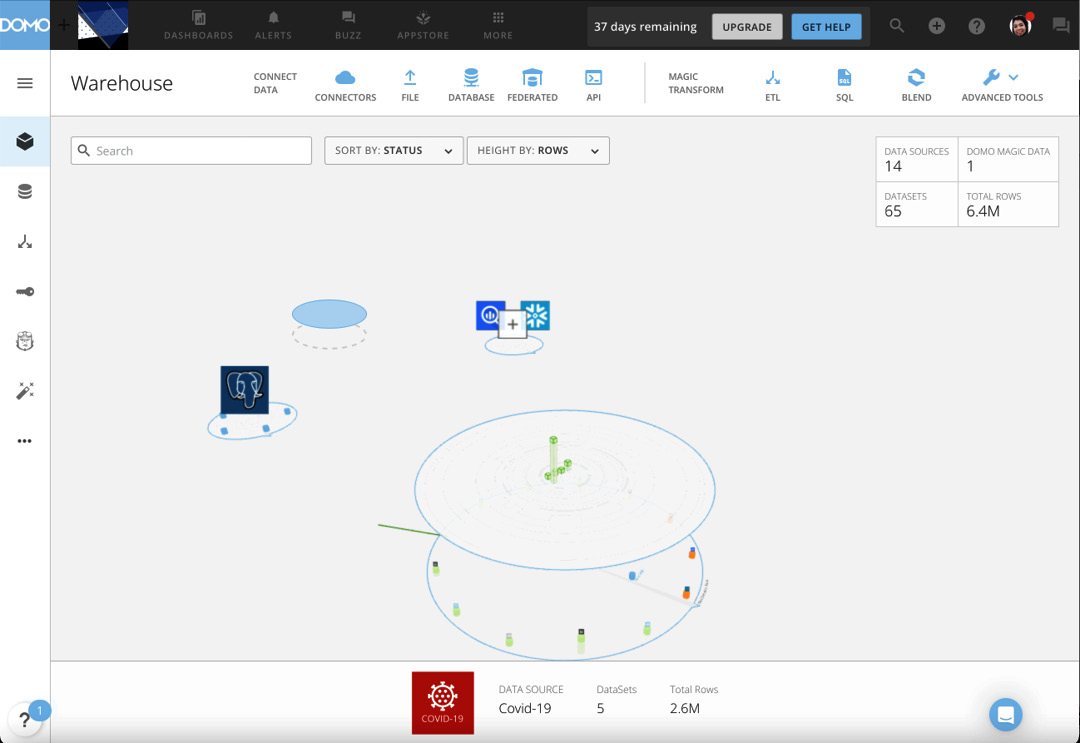

Additionally, to see datasets derived from the data source, you click on a particular data source and then another platter layer opens containing the dataset(s), as seen in Figure 1.3:

Figure 1.3 – Datasets platter

Data sculpting

Data sculpting tools are not new; for example, Informatica, Boomi, and Alteryx have been around for years. Some of them lack automatic schema discovery and auto-generated, non-siloed data cataloging, which leads to a cumbersome data sculpting experience. Domo's data sculpting tools are schema-aware and integrated from source to report. This enables the user to quickly spot potential reuse or apply new transforms to the data. The fully integrated and schema-aware data sculpting toolset includes the ability to stitch data together and transform data, similar to well-known ETL tools. Primarily, it's a no-code, visual programming paradigm for greater adoption. Secondarily, for those SQL scripters out there, a SQL serialization tool is available as well. As you will see, the integration and ease of use truly democratize the data sculpting activity.

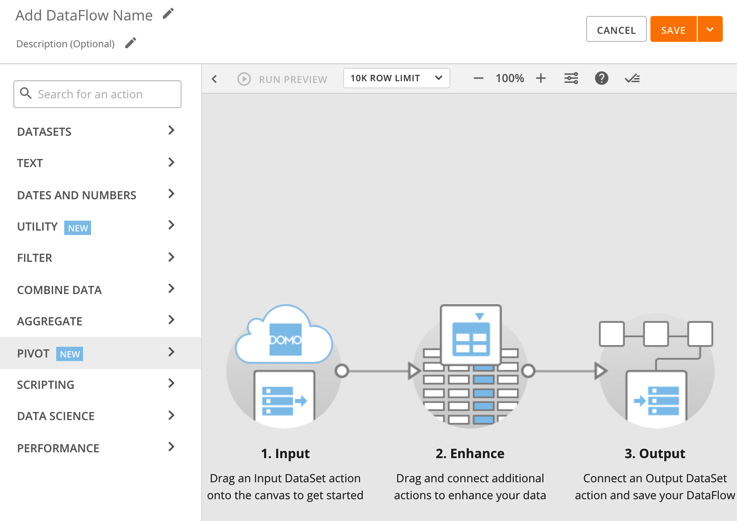

Magic ETL

Magic ETL is a drag-and-drop, no-code data sculpting tool that enables a typical user to sculpt the data they have acquired into Domo Vault. It leverages the Apache Spark framework and is a simple yet powerful visual data transformation tool. Transforms include text, date, and number operations. Custom formulas, parsing, and mapping operations are provided, as are filter and de-duplication operations. Of course, merge and join operations are included along with aggregation and pivot operations. Additionally, data science operations for classification, clustering, forecasting, outlier detection, multivariate outliers, and prediction are available and can be automated via Python and R scripting support.

Figure 1.4 – Magic ETL
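To make the transform categories listed for Magic ETL more concrete, here is a minimal sketch of the same kind of pipeline (filter, remove duplicates, join, aggregate) written in plain Python. Magic ETL itself is a no-code, drag-and-drop tool, so this is only an analogy: the helper functions and the sample `orders` and `customers` rows are hypothetical and are not part of any Domo API.

```python
from collections import defaultdict

# Hypothetical sample data standing in for two Domo datasets.
orders = [
    {"order_id": 1, "customer_id": "C1", "amount": 120.0},
    {"order_id": 2, "customer_id": "C2", "amount": 80.0},
    {"order_id": 2, "customer_id": "C2", "amount": 80.0},  # duplicate row
    {"order_id": 3, "customer_id": "C1", "amount": -5.0},  # invalid amount
]
customers = [
    {"customer_id": "C1", "region": "West"},
    {"customer_id": "C2", "region": "East"},
]


def filter_rows(rows, predicate):
    """Keep only the rows for which predicate(row) is true."""
    return [row for row in rows if predicate(row)]


def remove_duplicates(rows, key):
    """Keep the first row seen for each value of the key column."""
    seen, unique = set(), []
    for row in rows:
        if row[key] not in seen:
            seen.add(row[key])
            unique.append(row)
    return unique


def join_inner(left, right, key):
    """Inner join: combine rows that share the same key value."""
    lookup = {row[key]: row for row in right}
    return [{**row, **lookup[row[key]]} for row in left if row[key] in lookup]


def group_sum(rows, group_key, value_key):
    """Aggregate: sum value_key for each distinct group_key."""
    totals = defaultdict(float)
    for row in rows:
        totals[row[group_key]] += row[value_key]
    return dict(totals)


# Each step feeds the next, much like tiles connected on a canvas.
valid = filter_rows(orders, lambda row: row["amount"] > 0)
unique = remove_duplicates(valid, "order_id")
joined = join_inner(unique, customers, "customer_id")
sales_by_region = group_sum(joined, "region", "amount")
```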

Magic SQL

The Magic sculpting tools have evolved over time from scripting to no code. Magic ETL is the newest no-code data sculpting tool, while MySQL Dataflows, a Structured Query Language (SQL) tool supporting serialized SQL statement execution, was the original sculpting tool option. MySQL Dataflows runs on dynamic MySQL instances and is perfect for the SQL developer who doesn't want to transition to Magic ETL, although it is not as scalable as Magic ETL.

Blend

Blend, formerly Fusion, is the Domo equivalent of the VLOOKUP function in Excel: it creates a new virtual dataset by adding information from a lookup dataset to the primary dataset. It also has properties that allow it to append datasets, like a UNION operation in SQL.
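The two behaviors described for Blend can be sketched in a few lines of Python. This is an illustration of the concepts only, not of how Blend is implemented; `blend_lookup`, `blend_append`, and the sample rows are hypothetical. Note also that this append keeps duplicate rows, which makes it closer to UNION ALL in SQL; how Blend treats duplicates is not stated here.

```python
def blend_lookup(primary, lookup, key):
    """Add the lookup dataset's columns to each primary row (VLOOKUP-style)."""
    index = {row[key]: row for row in lookup}
    columns = [name for name in (lookup[0] if lookup else {}) if name != key]
    blended = []
    for row in primary:
        match = index.get(row[key])
        # Rows with no match keep their data and get None for lookup columns.
        extra = {name: (match[name] if match else None) for name in columns}
        blended.append({**row, **extra})
    return blended


def blend_append(first, second):
    """Stack the rows of two datasets with the same columns."""
    return list(first) + list(second)


# Hypothetical primary and lookup datasets.
sales = [
    {"sku": "A1", "units": 3},
    {"sku": "B2", "units": 5},
    {"sku": "Z9", "units": 1},
]
products = [
    {"sku": "A1", "name": "Widget"},
    {"sku": "B2", "name": "Gadget"},
]

blended = blend_lookup(sales, products, "sku")
appended = blend_append(sales[:1], sales[1:])
```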

Data Views

Data Views is a new feature that enables users to create aggregated and/or filtered views on an underlying dataset, as well as join to other datasets. This feature is very similar to the views available in relational databases. Views in Domo are non-materialized but can be materialized using a Magic ETL dataflow.
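Because Data Views are compared to relational database views, the difference between a non-materialized and a materialized view can be shown with SQLite from Python's standard library. This is an analogy under that assumption, not Domo code; the table, view, and snapshot names are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE sales (region TEXT, amount REAL);
    INSERT INTO sales VALUES ('West', 100), ('West', 50), ('East', 70);

    -- Non-materialized: the view stores only the query, not the rows.
    CREATE VIEW sales_by_region AS
        SELECT region, SUM(amount) AS total
        FROM sales
        GROUP BY region;
    """
)


def read_rows(name):
    """Read all rows from a view or table (name is a fixed identifier here)."""
    query = f"SELECT region, total FROM {name} ORDER BY region"
    return conn.execute(query).fetchall()


before = read_rows("sales_by_region")
conn.execute("INSERT INTO sales VALUES ('East', 30)")
after = read_rows("sales_by_region")  # the view reflects the new row

# Materializing copies the current result into a stored table.
conn.execute(
    "CREATE TABLE sales_by_region_snapshot AS SELECT * FROM sales_by_region"
)
conn.execute("INSERT INTO sales VALUES ('West', 1000)")
snapshot = read_rows("sales_by_region_snapshot")  # unchanged by the insert
live = read_rows("sales_by_region")               # includes the insert
```

The view always recomputes from the underlying data, while the snapshot stays fixed until it is rebuilt, which is the trade-off to weigh when deciding whether to materialize.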

Important Note

Because Domo is integrated from bottom to top, the data sculpting jobs can be triggered to run when any of the source data changes. No more worrying about batch window timing because the job will run when the source data has changed. Of course, the jobs can also run on a specific time schedule as needed.

Outtaking data

Don't leave your insights stranded. A crucial part of any data platform resides in the ability of other systems to outtake data. Several ways exist to export data from the Domo cloud.

Exporting

The first question many analysts ask when evaluating Domo as a platform is "What are the options to export data?" So, the product makes sure that getting data exported to Excel is simple, fast, and always possible.

In addition to Excel exports, there are PowerPoint exports and add-ins for Excel and PowerPoint live data embedding.

Finally, there is a RESTful data API for those who want to hit Vault directly to retrieve data.

Writeback connectors

A relatively new feature is writeback connectors. As the name implies, these connectors allow Domo to write its data directly into other systems. A quick search in Domo for writeback connectors reveals 22 connectors to many popular systems, as seen in Figure 1.5. Specific writeback connectors are part of Domo Integration Cloud and require your Domo account team to unlock the feature for your use.

Figure 1.5 – Writeback connectors

RESTful API

For those interested in taking a code-based approach to extracting data from Domo, a RESTful API exists to extract data from the Domo cloud as well: https://developer.domo.com/docs/dev-studio-references/data-api.
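As a rough sketch of what the code-based approach can look like, the following Python builds (but does not send) the two HTTP requests typically involved: one to obtain an access token and one to export a dataset as CSV. The host, endpoint paths, query parameters, and scope shown are assumptions based on Domo's public developer documentation and should be verified at the link above before use. The function names, the dataset ID, and the credentials are hypothetical.

```python
import base64
import urllib.parse
import urllib.request

# Assumed public API host; confirm against the Domo developer documentation.
API_HOST = "https://api.domo.com"


def build_token_request(client_id: str, client_secret: str) -> urllib.request.Request:
    """Build a client-credentials token request (endpoint path is an assumption)."""
    query = urllib.parse.urlencode(
        {"grant_type": "client_credentials", "scope": "data"}
    )
    credentials = base64.b64encode(
        f"{client_id}:{client_secret}".encode("utf-8")
    ).decode("ascii")
    return urllib.request.Request(
        f"{API_HOST}/oauth/token?{query}",
        headers={"Authorization": f"Basic {credentials}"},
    )


def build_export_request(dataset_id: str, access_token: str) -> urllib.request.Request:
    """Build a CSV export request for one dataset (endpoint path is an assumption)."""
    query = urllib.parse.urlencode({"includeHeader": "true"})
    safe_id = urllib.parse.quote(dataset_id, safe="")
    return urllib.request.Request(
        f"{API_HOST}/v1/datasets/{safe_id}/data?{query}",
        headers={
            "Authorization": f"Bearer {access_token}",
            "Accept": "text/csv",
        },
    )


# Hypothetical values for illustration only; nothing is sent over the network.
token_request = build_token_request("id", "secret")
export_request = build_export_request("abc-123", "example-token")
```

To actually run the export, each request would be passed to `urllib.request.urlopen()`, with the token taken from the first response and supplied to the second. Keeping the request construction separate from the network call makes the sketch easy to inspect and test offline.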

Now that we have examined the data acquisition pipeline (Intake, Store, Sculpt, and Outtake), it's time to discuss options for presenting the data.

Presenting the story dashboard

Presenting refers to the parts of Domo that are used to visualize and distribute content. The main presentation artifacts in Domo are cards (tables, charts, and graph visuals) and pages (dashboards/stories). The visuals are linked to the underlying datasets and update in real time as the datasets are updated. Beast Mode allows you to create powerful custom formulas on the fly.

Analyzer

Analyzer is the card creation and editing tool. This is where the visuals are created. It also happens to have solid real-time filtering and is a powerful ad hoc analysis tool—though it is not often thought of in that way. The drag-and-drop user interface will be very familiar to anyone who has created a chart or used a pivot table before.

Figure 1.6 – Analyzer card design UI

Beast Mode

Beast Mode is a data sculpting tool that enables the user to create new fields from calculations on the fly. It's called Beast Mode because it enables you to overcome many data challenges with custom formulas. If you know about case statements and Excel formulas, this is where you go to do that kind of work. Beast Mode is accessed through the Analyzer UI.

Important Note

Currently, Beast Mode is a one-pass calculation engine, which means it cannot perform post operations on aggregations such as SUM() or MAX(). For those of you familiar with SQL coding, this would be like using a HAVING clause, which is not supported in Beast Mode. However, there is a way to get a two-pass result: use a Data View as the first pass and then apply a Beast Mode to the view. This is the equivalent of a SQL HAVING clause.
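The two-pass idea in this note can be demonstrated with plain SQL, run here through SQLite from Python's standard library. This is an analogy, not Beast Mode syntax: the view plays the role of the Data View (first pass), and the filter on the view plays the role of the Beast Mode (second pass). The table, view, and threshold are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE orders (customer TEXT, amount REAL);
    INSERT INTO orders VALUES
        ('Acme', 400), ('Acme', 700),
        ('Birch', 200),
        ('Cobalt', 900), ('Cobalt', 300);

    -- First pass: aggregate once and expose the result as a view.
    CREATE VIEW customer_totals AS
        SELECT customer, SUM(amount) AS total
        FROM orders
        GROUP BY customer;
    """
)

# Single statement with HAVING: filter on the aggregate directly.
having_rows = conn.execute(
    """
    SELECT customer, SUM(amount) AS total
    FROM orders
    GROUP BY customer
    HAVING SUM(amount) > 1000
    ORDER BY customer
    """
).fetchall()

# Second pass: an ordinary row-level filter on the already aggregated view.
two_pass_rows = conn.execute(
    """
    SELECT customer, total
    FROM customer_totals
    WHERE total > 1000
    ORDER BY customer
    """
).fetchall()
```

Both queries return the same customers, which is why aggregating in a view first lets a one-pass engine behave as if it supported HAVING.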

Dashboards and stories

Pages are the standard presentation artifact in Domo. Every card is destined for a page. Pages typically contain several cards (sometimes many), and the pages can be layered up to three menu levels. Each page is commonly referred to as a dashboard and has built-in layout formatting and card placement capabilities. In order to tell a story as an analyst would typically do in a PowerPoint deck, a page can be converted into a story layout, which provides much more granular control of the presentation commentary, as well as the look and feel.

Pages and the card(s) they contain are updated in real time and typically address data from multiple datasets. Pages and cards on the page, either in standard or story layout, are instantly deployed to the Domo mobile app for iOS, Android, and mobile web.

Important Note

If you are considering developing a custom mobile app, take the time to evaluate the Domo mobile app as it may already have the ability to cover what you are looking to do out of the box. Search for Domo in the mobile app stores for Apple or Android.

Content distribution

Pages enable people to take the initiative and look at information of interest. But what are your options if you want to push content to people?

Scheduled reports

Fortunately, the Domo platform allows for the distribution of content as a scheduled email with links and attachments.

Public or private URLs

Domo has a publish feature that allows any page to become a URL presentation. The URL is visible to anyone with the link but can be password protected. A downside of this feature is that the content is no longer interactive. This feature is often used to drive office wallboards that rotate through cards on a timer.

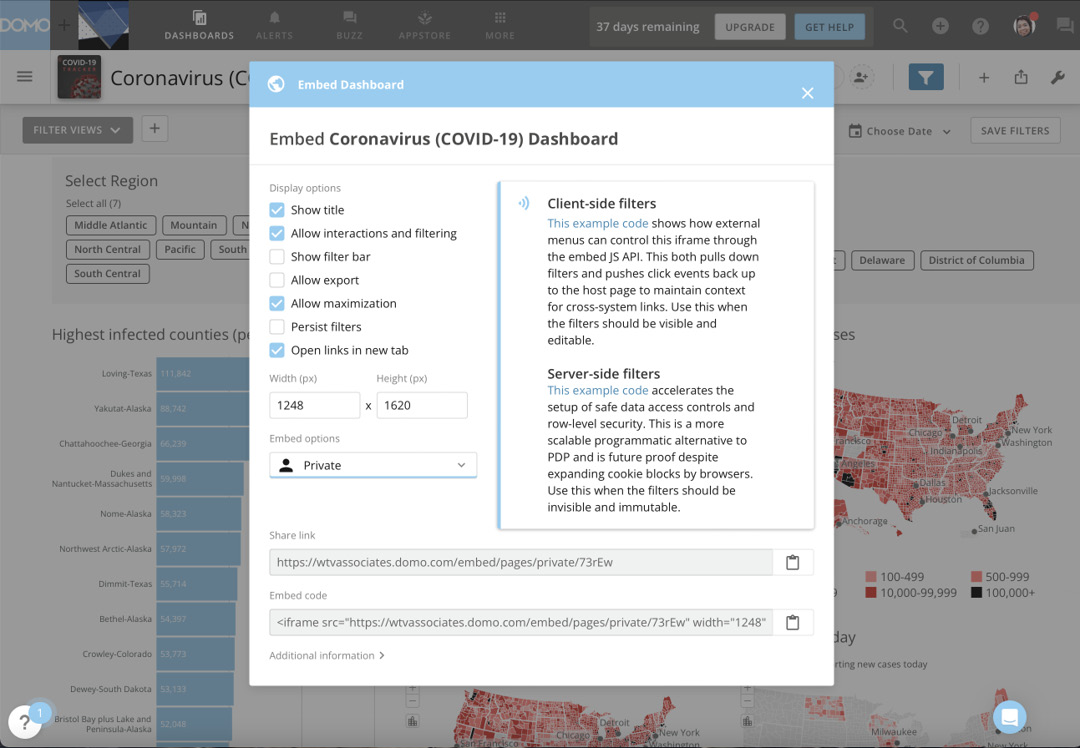

Domo Everywhere – Embed

Domo pages and cards are designed to be embedded or white-labeled in other portals and applications. Many Domo customers use Domo content in their external customer/supplier portals as well as internal employee portals. Some customers reduce their time to market and enhance their products' feature capabilities by embedding Domo's best-of-breed visuals. There are both private-embed and public-embed offerings for internal- and external-facing content respectively. Embedded content is interactive.

Figure 1.7 – Domo Everywhere Embed configuration

Important Note

If you are evaluating how content is presented to external stakeholders and your IT organization is considering a bespoke build, you will likely get to market faster, with more features, more securely, on mobile and desktop, at lower cost, and with less risk by adopting the Domo Everywhere Embed feature rather than a custom build.

Next, let's peruse Domo's integrated communication tools.

Communicating with Domo Buzz

Presenting data is often just a step toward resolving an action. The dialog that ensues is important to the process and can sometimes be ongoing. To facilitate this kind of communication in an integrated way, Domo has several communication tools.

Domo Buzz

Domo Buzz is a Slack-like tool that enables dialog around topics and supports threads. In Domo, topics are available automatically around pages and cards. This means both the topic and the content are auto-linked and you don't have to bring your own content. No more digging through email threads to find the relevant data and wondering whether it is still accurate; Buzz is always available as a pop-out shelf in the UI, and conversations can be public or private.

Figure 1.8 – Domo Buzz card panel

Next, let's look at the alerting feature.

Alerts

Alerts in Domo are sourced from multiple places: cards, datasets, and Mr. Roboto, the Domo AI.

User alerts

User-originated alerts are created on cards and datasets, are supported through a publish and subscribe mechanism, and are visible in the alert center. When triggered, an alert can be received by push notification, text, or email.

Figure 1.9 – Alert center

Next, we'll see some of Domo's AI capabilities around communications.

Mr. Roboto

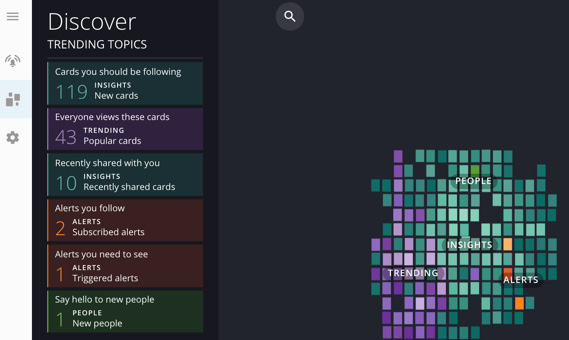

Mr. Roboto insights are system-generated by the Domo AI engine and are found in two places: first, the Discover console under the ALERTS menu shows insights on activity in the Domo instance.

Figure 1.10 – Discover console

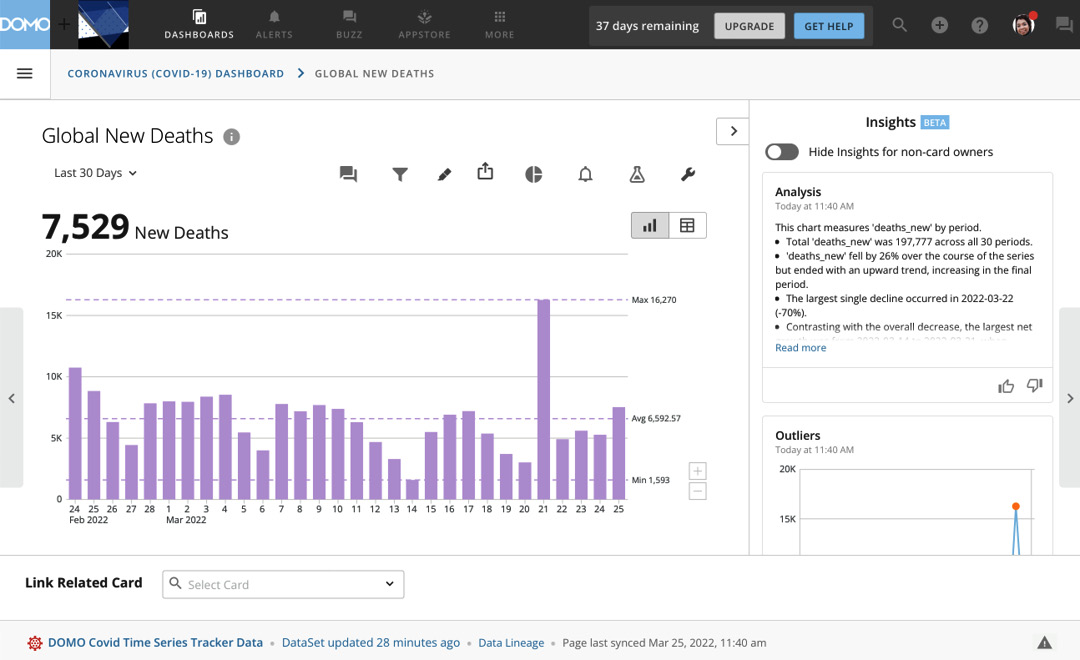

The second place is the Card Insights panel on the card details page, which surfaces the insights that the AI generates on the card.

Figure 1.11 – Card Insights panel

Next, let's review the profile feature.

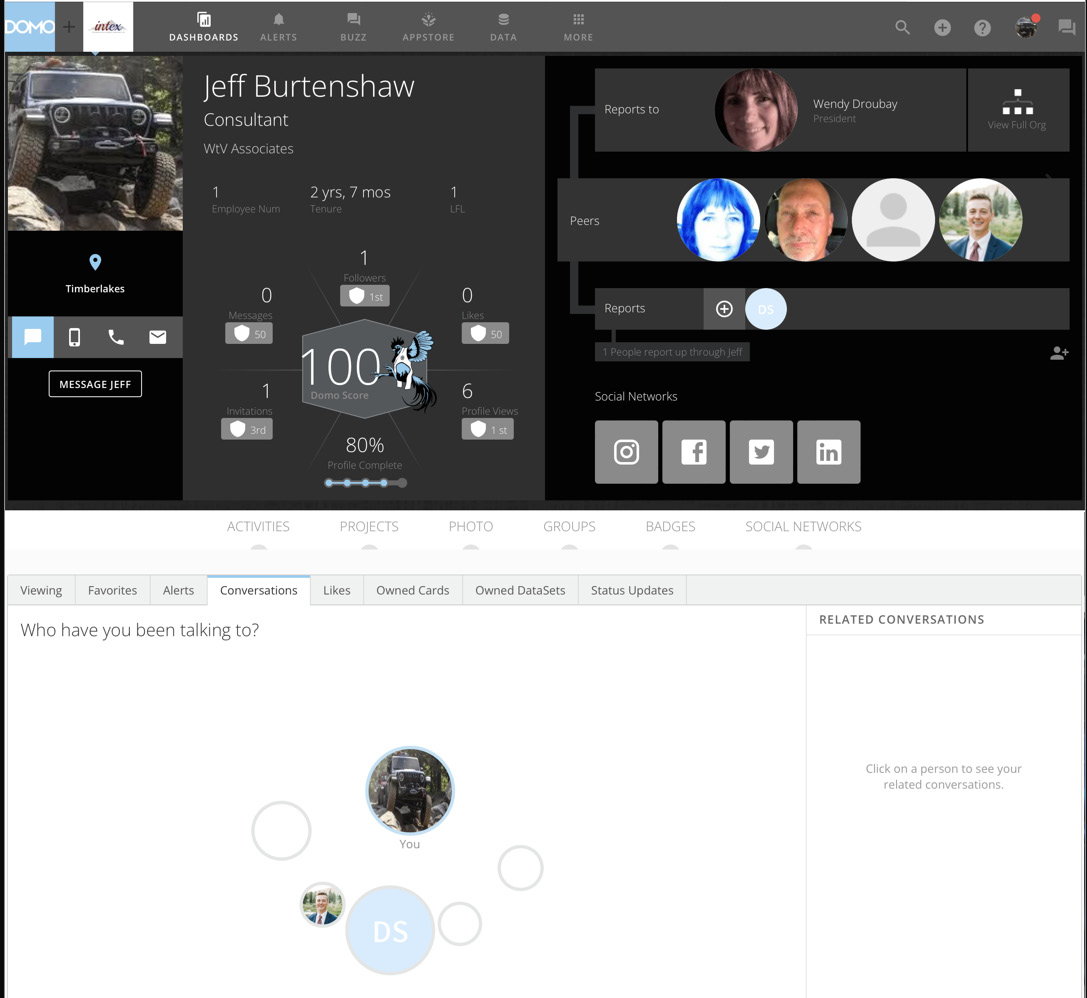

Profiles

Effective communicators realize that knowing what is important to the other person(s) and being able to build on common ground are crucial. The profile feature is a way to quickly learn about a person's interests and what is important to them in terms of the information they consume and the people they converse with. Profiles also function as an updatable user organization directory as seen in Figure 1.12:

Figure 1.12 – Profile

Next, let's review the project management features.

Projects and Tasks

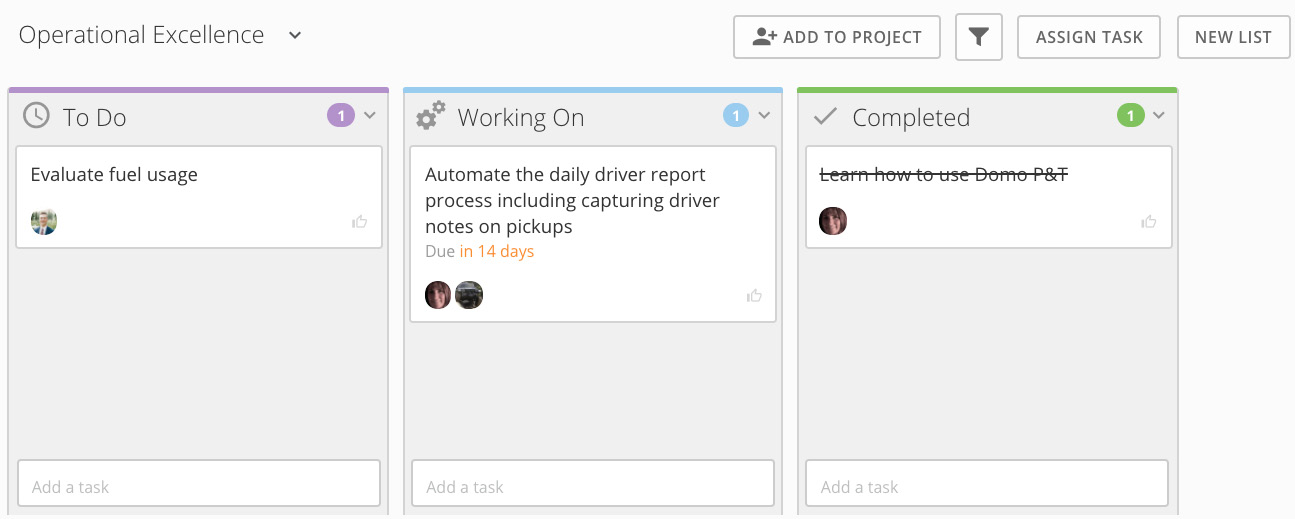

Projects and Tasks, as the name implies, is a lightweight Trello-like project task management tool. Any Domo Buzz message can be converted into a task to be assigned and tracked.

To enable analytics on Projects and Tasks, install the DomoStats Projects and Tasks App from the Appstore, which creates the datasets that contain the Projects and Tasks data and a dashboard. The dashboard content can be edited just like any other dashboard, as seen in Figure 1.13:

Figure 1.13 – Projects and Tasks project board

Important Note

DomoStats is a moniker used for internal data about Domo. Search DomoStats in the data connectors area to see a full list of DomoStats data sources and related apps.

Next, let's learn about how to extend Domo using apps and APIs.

Extending with apps and APIs

The Domo ecosystem is extensible to the crowd through the Appstore and through APIs.

Appstore

The Domo Appstore contains many pre-built, configurable applications that can be installed into your Domo instance. Many are free of charge, while some are created by partners and carry a charge. One innovative feature is that any dashboard can be uploaded as an app into the Appstore. The uploaded app can be private to your instance or shared across all Domo instances.

APIs

APIs exist to use connectors, data, pages, accounts, users, groups, projects, and tasks in Domo. More information on the Domo APIs can be found here: https://developer.domo.com/.

The most common use for APIs is to create custom data pipelines and build custom applications.

Next, let's discuss governance and security.

Governing, security, and operations

To govern a system, you need to see what artifacts are in the system, who owns the artifacts, who can access the artifacts, and how the artifacts are being used. If you can't track it, then you can't govern it. Let's look at some of the tools enabling governance of the platform.

Tools

The Domo ecosystem has an extensive feature set supporting enterprise governance through all platform layers. A must-have for any MajorDomo Domo system administrator is the Domo Governance Datasets Connector available in the Appstore. This connector app allows you to create datasets with metadata and usage for Beast Mode, cards, pages, datasets, dataflows, users, groups, and more.

Data lineage is tracked from source to consumption, similar to many industries where it is important to understand the chain of delivery, for example, from field to fork.

User and group security roles and privileges are enterprise-grade, including single sign-on and row-level data access controls.
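Row-level data access control means that two users who open the same dataset see only the rows their policy permits. The following minimal sketch shows the idea in Python. It is a conceptual illustration, not Domo's implementation: the `POLICIES` table, the `visible_rows` function, and the sample users and rows are hypothetical.

```python
from typing import Dict, List, Set

# Hypothetical policy table: the regions each user is allowed to see.
POLICIES: Dict[str, Set[str]] = {
    "ana": {"West"},
    "raj": {"West", "East"},
}

# Hypothetical dataset shared by all users.
ROWS: List[dict] = [
    {"region": "West", "revenue": 150},
    {"region": "East", "revenue": 100},
    {"region": "North", "revenue": 75},
]


def visible_rows(user: str, rows: List[dict], column: str = "region") -> List[dict]:
    """Return only the rows whose policy column value is allowed for the user."""
    allowed = POLICIES.get(user, set())  # users without a policy see nothing
    return [row for row in rows if row[column] in allowed]


ana_rows = visible_rows("ana", ROWS)
raj_rows = visible_rows("raj", ROWS)
guest_rows = visible_rows("guest", ROWS)
```

Defaulting to an empty set for unknown users is a deliberate deny-by-default choice in this sketch.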

The DomoStats People app can be installed from the Appstore and shows user security and usage patterns.

Domo CourseBuilder is a downloadable app available to create training content. Search for CourseBuilder in the Appstore.

Visual indicators of artifact ownership and access sharing are pervasive, creating trust and facilitating communications.

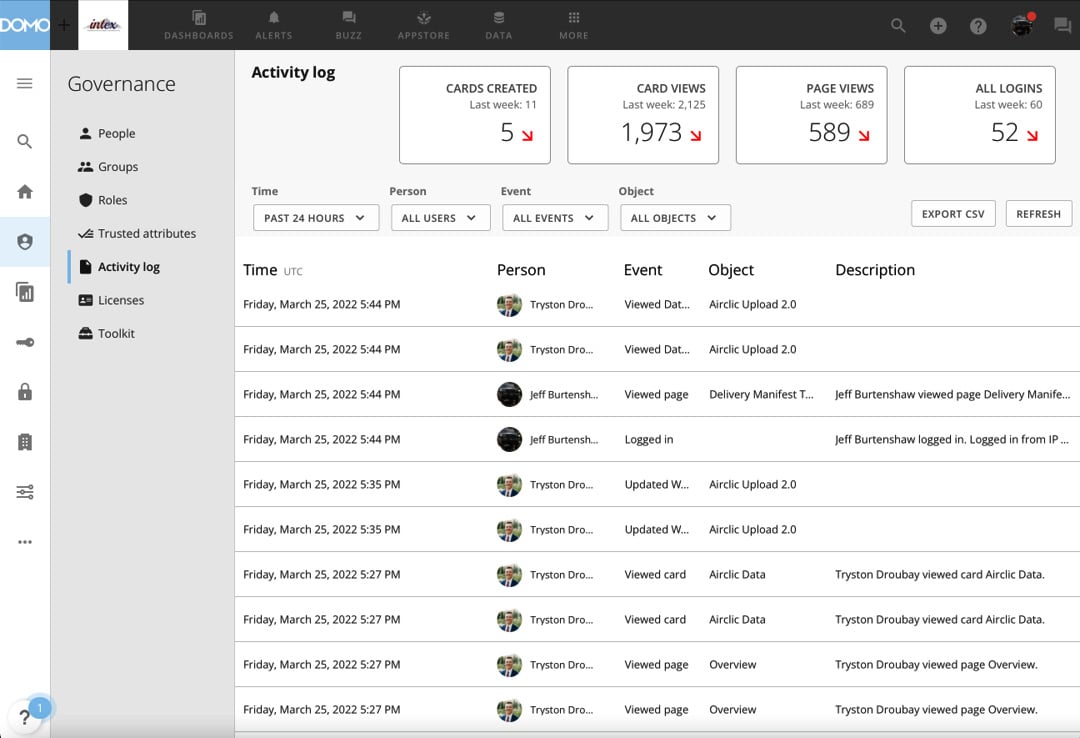

For the audit-oriented, there is even a detailed activity log, as seen in Figure 1.14:

Figure 1.14 – Activity log

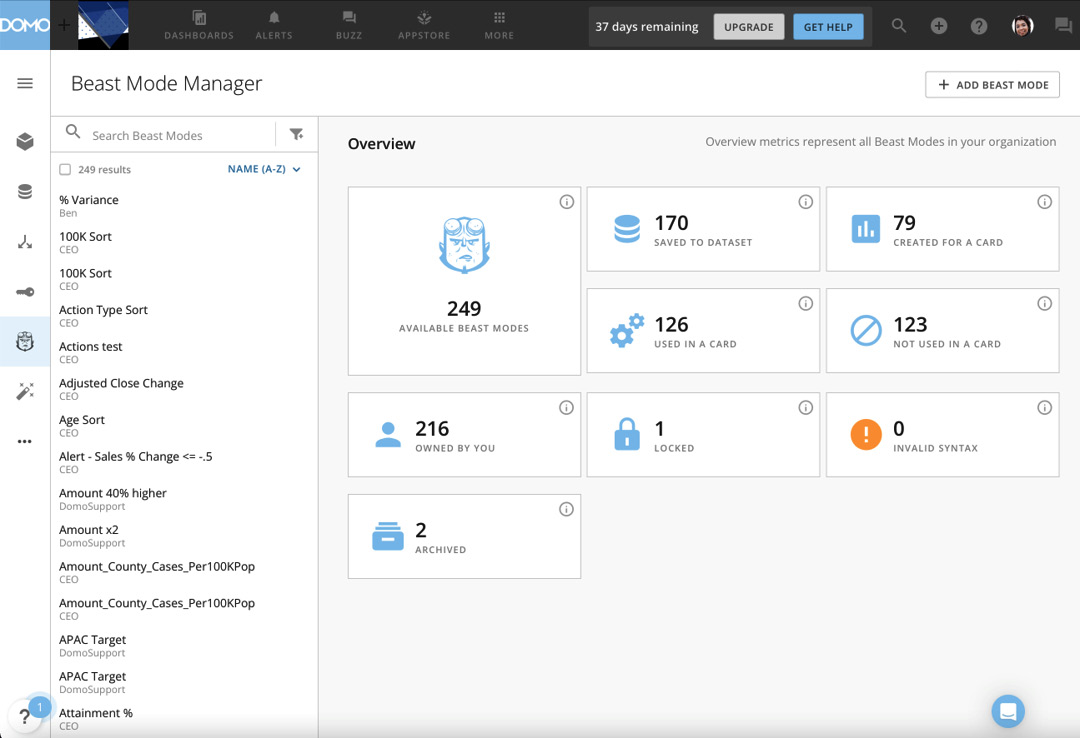

Impact tools for datasets, fields, and Beast Mode are at the ready to prune and standardize the experience, as seen in Figure 1.15:

Figure 1.15 – Beast Mode Manager

Overall, the Domo ecosystem has the enterprise-grade tools to be a trusted and heavily utilized platform.

Next, let's discuss some people and organizational considerations.

People – organizational role design

Domo provides powerful technology, but the appropriate organization of people's responsibilities is also a critical factor in getting the most from the technology. Domo suggests the following roles be assigned in any Domo implementation:

- Executive Sponsor: A business leader who has the budgetary and result accountability for the Domo ecosystem in the organization

- MajorDomo: A person who has overall administrative ownership, driving clear artifact ownership and sharing policies, and is able to synthesize business needs with the Domo ecosystem's capabilities

- Data Specialist: Data architects who have overall responsibility for the data pipelines and data governance in specific subject areas

The following are additional roles that come with large/global organizations:

- Domo Master: A tactical expert on Domo platform features serving in consultative positions to execute business priorities enabled in the ecosystem

- Team Champion: Analytic talent that executes business strategy for a given business area primarily in the present and communicate layers of the ecosystem

That concludes our introduction to the architecture of Domo. Let's recap what we've learned.

Summary

In this chapter, we reviewed five major areas in the Domo ecosystem (acquiring, presenting, communicating, extending, and governing). We learned that all these areas are integrated, working together as seen in Figure 1.1. This integration simplifies the user experience so that a typical business user can use the entire ecosystem without having to rely on other, more technical resources. This democratizes the process while also tracking user activities so that proper governance can be enforced. We learned that it is important not only to create content but also to have proper roles in place to get the most out of the technology, and that as the organization's scope grows, the roles also expand.

In the following chapters, we will be diving deeper into all five areas, providing detailed steps for how to accomplish various tasks. We will start with getting data into Domo, then move on to sculpting the data, then address presenting and distributing content. Additionally, we will cover extending the system beyond Domo. And finally, we will walk through governing activities.

In the next chapter, we will take a hands-on approach to using the acquire pipeline intake features of the Domo ecosystem.

Download code from GitHub