AUTHOR, FUTURIST

Artificial intelligence is rapidly transitioning from the realm of science fiction to the reality of our daily lives. Our devices understand what we say, speak to us, and translate between languages with ever-increasing fluency. AI-powered visual recognition algorithms are outperforming people and beginning to find applications in everything from self-driving cars to systems that diagnose cancer in medical images. Major media organizations increasingly rely on automated journalism to turn raw data into coherent news stories that are virtually indistinguishable from those written by human journalists.

The list goes on and on, and it is becoming evident that AI is poised to become one of the most important forces shaping our world. Unlike more specialized innovations, artificial intelligence is becoming a true general-purpose technology. In other words, it is evolving into a utility—not unlike electricity—that is likely to ultimately scale across every industry, every sector of our economy, and nearly every aspect of science, society and culture.

The demonstrated power of artificial intelligence has, in the last few years, led to massive media exposure and commentary. Countless news articles, books, documentary films and television programs breathlessly enumerate AI’s accomplishments and herald the dawn of a new era. The result has been a sometimes incomprehensible mixture of careful, evidence-based analysis, together with hype, speculation and what might be characterized as outright fear-mongering. We are told that fully autonomous self-driving cars will be sharing our roads in just a few years—and that millions of jobs for truck, taxi and Uber drivers are on the verge of vaporizing. Evidence of racial and gender bias has been detected in certain machine learning algorithms, and concerns about how AI-powered technologies such as facial recognition will impact privacy seem well-founded. Warnings that robots will soon be weaponized, or that truly intelligent (or superintelligent) machines might someday represent an existential threat to humanity, are regularly reported in the media. A number of very prominent public figures—none of whom are actual AI experts—have weighed in. Elon Musk has used especially extreme rhetoric, declaring that AI research is “summoning the demon” and that “AI is more dangerous than nuclear weapons.” Even less volatile individuals, including Henry Kissinger and the late Stephen Hawking, have issued dire warnings.

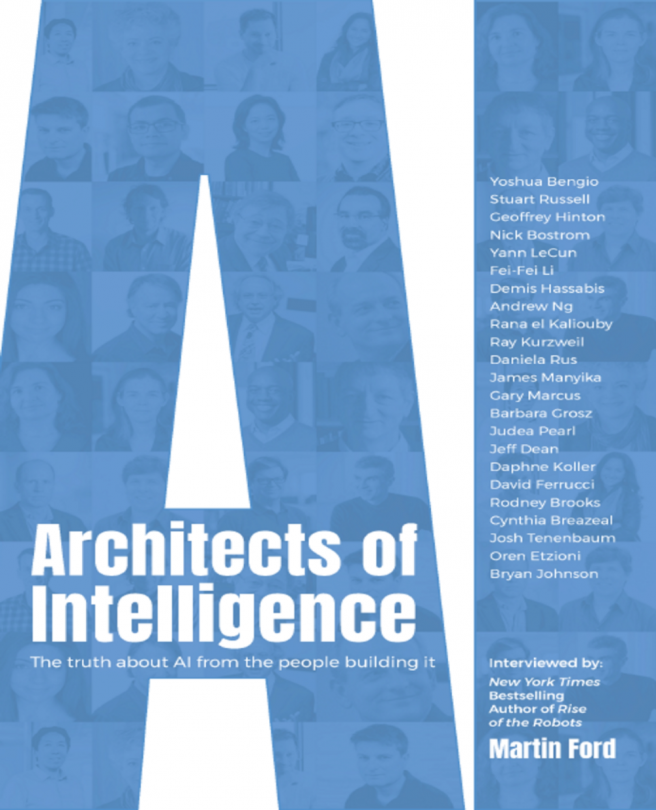

The purpose of this book is to illuminate the field of artificial intelligence—as well as the opportunities and risks associated with it—by having a series of deep, wide-ranging conversations with some of the world’s most prominent AI research scientists and entrepreneurs. Many of these people have made seminal contributions that directly underlie the transformations we see all around us; others have founded companies that are pushing the frontiers of AI, robotics and machine learning.

Selecting a list of the most prominent and influential people working in a field is, of course, a subjective exercise, and without doubt there are many other people who have made, or are making, critical contributions to the advancement of AI. Nonetheless, I am confident that if you were to ask nearly anyone with a deep knowledge of the field to compose a list of the most important minds who have shaped contemporary research in artificial intelligence, you would receive a list of names that substantially overlaps with the individuals interviewed in this book. The men and women I have included here are truly the architects of machine intelligence—and, by extension, of the revolution it will soon unleash.

The conversations recorded here are generally open-ended, but are designed to address some of the most pressing questions that face us as artificial intelligence continues to advance: What specific AI approaches and technologies are most promising, and what kind of breakthroughs might we see in the coming years? Are true thinking machines—or human-level AI—a real possibility and how soon might such a breakthrough occur? What risks, or threats, associated with artificial intelligence should we be genuinely concerned about? And how should we address those concerns? Is there a role for government regulation? Will AI unleash massive economic and job market disruption, or are these concerns overhyped? Could superintelligent machines someday break free of our control and pose a genuine threat? Should we worry about an AI “arms race,” or that other countries with authoritarian political systems, particularly China, may eventually take the lead?

It goes without saying that no one really knows the answers to these questions. No one can predict the future. However, the AI experts I’ve spoken to here do know more about the current state of the technology, as well as the innovations on the horizon, than virtually anyone else. They often have decades of experience and have been instrumental in creating the revolution that is now beginning to unfold. Therefore, their thoughts and opinions deserve to be given significant weight. In addition to my questions about the field of artificial intelligence and its future, I have also delved into the backgrounds, career trajectories and current research interests of each of these individuals, and I believe their diverse origins and varied paths to prominence will make for fascinating and inspiring reading.

Artificial intelligence is a broad field of study with a number of subdisciplines, and many of the researchers interviewed here have worked in multiple areas. Some also have deep experience in other fields, such as the study of human cognition. Nonetheless, what follows is a brief attempt to create a very rough road map showing how the individuals interviewed here relate to the most important recent innovations in AI research and to the challenges that lie ahead. More background information about each person is available in his or her biography, which is located immediately after the interview.

The vast majority of the dramatic advances we’ve seen over the past decade or so—everything from image and facial recognition, to language translation, to AlphaGo’s conquest of the ancient game of Go—are powered by a technology known as deep learning, or deep neural networks. Artificial neural networks, in which software roughly emulates the structure and interaction of biological neurons in the brain, date back at least to the 1950s. Simple versions of these networks are able to perform rudimentary pattern recognition tasks, and in the early days generated significant enthusiasm among researchers. By the 1960s, however—at least in part as the direct result of criticism of the technology by Marvin Minsky, one of the early pioneers of AI—neural networks fell out of favor and were almost entirely dismissed as researchers embraced other approaches.

Over a roughly 20-year period beginning in the 1980s, a very small group of research scientists continued to believe in and advance the technology of neural networks. Foremost among these were Geoffrey Hinton, Yoshua Bengio and Yann LeCun. These three men not only made seminal contributions to the mathematical theory underlying deep learning, they also served as the technology’s primary evangelists. Together they refined ways to construct much more sophisticated—or “deep”—networks with many layers of artificial neurons. A bit like the medieval monks who preserved and copied classical texts, Hinton, Bengio and LeCun ushered neural networks through their own dark age—until the decades-long exponential advance of computing power, together with a nearly incomprehensible increase in the amount of data available, eventually enabled a “deep learning renaissance.” That progress became an outright revolution in 2012, when a team of Hinton’s graduate students from the University of Toronto entered a major image recognition contest and decimated the competition using deep learning.

In the ensuing years, deep learning has become ubiquitous. Every major technology company, including Google, Facebook, Microsoft, Amazon and Apple, as well as leading Chinese firms like Baidu and Tencent, has made huge investments in the technology and leveraged it across its businesses. The companies that design microprocessor and graphics (or GPU) chips, such as NVIDIA and Intel, have also seen their businesses transformed as they rush to build hardware optimized for neural networks. Deep learning is, at least so far, the primary technology that has powered the AI revolution.

This book includes conversations with the three deep learning pioneers, Hinton, LeCun and Bengio, as well as with several other very prominent researchers at the forefront of the technology. Andrew Ng, Fei-Fei Li, Jeff Dean and Demis Hassabis have all advanced neural networks in areas like web search, computer vision, self-driving cars and more general intelligence. They are also recognized leaders in teaching, managing research organizations, and entrepreneurship centered on deep learning technology.

The remaining conversations in this book are generally with people who might be characterized as deep learning agnostics, or perhaps even critics. All would acknowledge the remarkable achievements of deep neural networks over the past decade, but they would likely argue that deep learning is just “one tool in the toolbox” and that continued progress will require integrating ideas from other spheres of artificial intelligence. Some of these, including Barbara Grosz and David Ferrucci, have focused heavily on the problem of understanding natural language. Gary Marcus and Josh Tenenbaum have devoted large portions of their careers to studying human cognition. Others, including Oren Etzioni, Stuart Russell and Daphne Koller, are AI generalists or have focused on using probabilistic techniques. Especially distinguished among this last group is Judea Pearl, who in 2012 won the Turing Award—essentially the Nobel Prize of computer science—in large part for his work on probabilistic (or Bayesian) approaches in AI and machine learning.

Beyond this very rough division defined by their attitude toward deep learning, several of the researchers I spoke to have focused on more specific areas. Rodney Brooks, Daniela Rus and Cynthia Breazeal are all recognized leaders in robotics. Breazeal and Rana El Kaliouby are pioneers in building systems that understand and respond to emotion, and that therefore have the ability to interact socially with people. Bryan Johnson has founded a startup company, Kernel, which hopes to eventually use technology to enhance human cognition.

There are three general areas that I judged to be of such high interest that I delved into them in every conversation. The first of these concerns the potential impact of AI and robotics on the job market and the economy. My own view is that as artificial intelligence gradually proves capable of automating nearly any routine, predictable task—regardless of whether it is blue or white collar in nature—we will inevitably see rising inequality and quite possibly outright unemployment, at least among certain groups of workers. I laid out this argument in my 2015 book, Rise of the Robots: Technology and the Threat of a Jobless Future.

The individuals I spoke to offered a variety of viewpoints about this potential economic disruption and the type of policy solutions that might address it. In order to dive deeper into this topic, I turned to James Manyika, the Chairman of the McKinsey Global Institute. Manyika offers a unique perspective as an experienced AI and robotics researcher who has lately turned his efforts toward understanding the impact of these technologies on organizations and workplaces. The McKinsey Global Institute is a leader in conducting research into this area, and this conversation includes many important insights into the nature of the unfolding workplace disruption.

The second question I directed at everyone concerns the path toward human-level AI, or what is typically called Artificial General Intelligence (AGI). From the very beginning, AGI has been the holy grail of the field of artificial intelligence. I wanted to know what each person thought about the prospect for a true thinking machine, the hurdles that would need to be surmounted and the timeframe in which it might be achieved. Everyone had important insights, but I found three conversations to be especially interesting. Demis Hassabis discussed efforts underway at DeepMind, which is the largest and best-funded initiative geared specifically toward AGI. David Ferrucci, who led the team that created IBM Watson, is now the CEO of Elemental Cognition, a startup that hopes to achieve more general intelligence by leveraging an understanding of language. Ray Kurzweil, who now directs a natural language-oriented project at Google, also had important ideas on this topic (as well as many others). Kurzweil is best known for his 2005 book, The Singularity is Near. In 2012, he published a book on machine intelligence, How to Create a Mind, which caught the attention of Larry Page and led to his employment at Google.

As part of these discussions, I saw an opportunity to ask this group of extraordinarily accomplished AI researchers to give me a guess for just when AGI might be realized. The question I asked was, “What year do you think human-level AI might be achieved, with a 50 percent probability?” Most of the participants preferred to provide their guesses anonymously. I have summarized the results of this very informal survey in a section at the end of this book. Two people were willing to guess on the record, and these will give you a preview of the wide range of opinions. Ray Kurzweil believes, as he has stated many times previously, that human-level AI will be achieved around 2029—or just eleven years from the time of this writing. Rodney Brooks, on the other hand, guessed the year 2200, or more than 180 years in the future. Suffice it to say that one of the most fascinating aspects of the conversations reported here is the starkly differing views on a wide range of important topics.

The third area of discussion involves the varied risks that will accompany progress in artificial intelligence in both the immediate future and over much longer time horizons. One threat that is already becoming evident is the vulnerability of interconnected, autonomous systems to cyber attack or hacking. As AI becomes ever more integrated into our economy and society, solving this problem will be one of the most critical challenges we face. Another immediate concern is the susceptibility of machine learning algorithms to bias, in some cases on the basis of race or gender. Many of the individuals I spoke with emphasized the importance of addressing this issue and told of research currently underway in this area. Several also sounded an optimistic note—suggesting that AI may someday prove to be a powerful tool to help combat systemic bias or discrimination.

A danger that many researchers are passionate about is the specter of fully autonomous weapons. Many people in the artificial intelligence community believe that AI-enabled robots or drones with the capability to kill, without a human “in the loop” to authorize any lethal action, could eventually be as dangerous and destabilizing as biological or chemical weapons. In July 2018, over 160 AI companies and 2,400 individual researchers from across the globe—including a number of the people interviewed here—signed an open pledge promising to never develop such weapons. (https://futureoflife.org/lethal-autonomous-weapons-pledge/) Several of the conversations in this book delve into the dangers presented by weaponized AI.

A much more futuristic and speculative danger is the so-called “AI alignment problem.” This is the concern that a truly intelligent, or perhaps superintelligent, machine might escape our control, or make decisions that might have adverse consequences for humanity. This is the fear that elicits seemingly over-the-top statements from people like Elon Musk. Nearly everyone I spoke to weighed in on this issue. To ensure that I gave this concern adequate and balanced coverage, I spoke with Nick Bostrom of the Future of Humanity Institute at the University of Oxford. Bostrom is the author of the bestselling book Superintelligence: Paths, Dangers, Strategies, which makes a careful argument regarding the potential risks associated with machines that might be far smarter than any human being.

The conversations included here were conducted from February to August 2018 and virtually all of them occupied at least an hour, some substantially more. They were recorded, professionally transcribed, and then edited for clarity by the team at Packt. Finally, the edited text was provided to the person I spoke to, who then had the opportunity to revise it and expand it. Therefore, I have every confidence that the words recorded here accurately reflect the thoughts of the person I interviewed.

The AI experts I spoke to are highly varied in terms of their origins, locations, and affiliations. One thing that even a brief perusal of this book will make apparent is the outsized influence of Google in the AI community. Of the 23 people I interviewed, seven have current or former affiliations with Google or its parent, Alphabet. Other major concentrations of talent are found at MIT and Stanford. Geoff Hinton and Yoshua Bengio are based at the Universities of Toronto and Montreal respectively, and the Canadian government has leveraged the reputations of their research organizations into a strategic focus on deep learning. Nineteen of the 23 people I spoke to work in the United States. Of those 19, however, more than half were born outside the US. Countries of origin include Australia, China, Egypt, France, Israel, Rhodesia (now Zimbabwe), Romania, and the UK. I would say this is pretty dramatic evidence of the critical role that skilled immigration plays in the technological leadership of the US.

As I carried out the conversations in this book, I had in mind a variety of potential readers, ranging from professional computer scientists, to managers and investors, to virtually anyone with an interest in AI and its impact on society. One especially important audience, however, consists of young people who might consider a future career in artificial intelligence. There is currently a massive shortage of talent in the field, especially among those with skills in deep learning, and a career in AI or machine learning promises to be exciting, lucrative and consequential.

As the industry works to attract more talent into the field, there is widespread recognition that much more must be done to ensure that those new people are more diverse. If artificial intelligence is indeed poised to reshape our world, then it is crucial that the individuals who best understand the technology—and are therefore best positioned to influence its direction—be representative of society as a whole.

About a quarter of those interviewed in this book are women, and that number is likely significantly higher than what would be found across the entire field of AI or machine learning. A recent study found that women represent about 12 percent of leading researchers in machine learning. (https://www.wired.com/story/artificial-intelligence-researchers-gender-imbalance) A number of the people I spoke to emphasized the need for greater representation for both women and members of minority groups.

As you will learn from her interview in this book, one of the foremost women working in artificial intelligence is especially passionate about the need to increase diversity in the field. Stanford University’s Fei-Fei Li co-founded an organization now called AI4ALL (http://ai-4-all.org/) to provide AI-focused summer camps geared especially to underrepresented high school students. AI4ALL has received significant industry support, including a recent grant from Google, and has now scaled up to include summer programs at six universities across the United States. While much work remains to be done, there are good reasons to be optimistic that diversity among AI researchers will increase significantly in the coming years and decades.

While this book does not assume a technical background, you will encounter some of the concepts and terminology associated with the field. For those without previous exposure to AI, I believe this will afford an opportunity to learn about the technology directly from some of the foremost minds in the field. To help less experienced readers get started, a brief overview of the vocabulary of AI follows this introduction, and I recommend you take a few moments to read this material before beginning the interviews. Additionally, the interview with Stuart Russell, who is the co-author of the leading AI textbook, includes an explanation of many of the field’s most important ideas.

It has been an extraordinary privilege for me to participate in the conversations in this book. I believe you will find everyone I spoke with to be thoughtful, articulate, and deeply committed to ensuring that the technology he or she is working to create will be leveraged for the benefit of humanity. What you will not so often find is broad-based consensus. This book is full of varied, and often sharply conflicting, insights, opinions, and predictions. The message should be clear: Artificial intelligence is a wide-open field. The nature of the innovations that lie ahead, the rate at which they will occur, and the specific applications in which they will be used are all shrouded in deep uncertainty. It is this combination of massive potential disruption together with fundamental uncertainty that makes it imperative that we begin to engage in a meaningful and inclusive conversation about the future of artificial intelligence and what it may mean for our way of life. I hope this book will make a contribution to that discussion.

The conversations in this book are wide-ranging and in some cases delve into the specific techniques used in AI. You don’t need a technical background to understand this material, but in some cases you may encounter the terminology used in the field. What follows is a very brief guide to the most important terms you will encounter in the interviews. If you take a few moments to read through this material, you will have all you need to fully enjoy this book. If you do find that a particular section is more detailed or technical than you would prefer, I would advise you to simply skip ahead to the next section.

MACHINE LEARNING is the branch of AI that involves creating algorithms that can learn from data. Another way to put this is that machine learning algorithms are computer programs that essentially program themselves by looking at information. You still hear people say “computers only do what they are programmed to do…” but the rise of machine learning is making this less and less true. There are many types of machine learning algorithms, but the one that has recently proved most disruptive (and gets all the press) is deep learning.

DEEP LEARNING is a type of machine learning that uses deep (or many layered) ARTIFICIAL NEURAL NETWORKS—software that roughly emulates the way neurons operate in the brain. Deep learning has been the primary driver of the revolution in AI that we have seen in the last decade or so.

There are a few other terms that less technically inclined readers can translate as simply “stuff under the deep learning hood.” Opening the hood and delving into the details of these terms is entirely optional.

BACKPROPAGATION (or BACKPROP) is the learning algorithm used in deep learning systems. As a neural network is trained (see supervised learning below), information propagates back through the layers of neurons that make up the network and causes a recalibration of the settings (or weights) for the individual neurons. The result is that the entire network gradually homes in on the correct answer. Geoff Hinton co-authored the seminal academic paper on backpropagation in 1986. He explains backprop further in his interview.

An even more obscure term is GRADIENT DESCENT. This refers to the specific mathematical technique that the backpropagation algorithm uses to reduce the error as the network is trained.

You may also run into terms that refer to various types, or configurations, of neural networks, such as RECURRENT and CONVOLUTIONAL neural nets and BOLTZMANN MACHINES. The differences generally pertain to the ways the neurons are connected. The details are technical and beyond the scope of this book. Nonetheless, I did ask Yann LeCun, who invented the convolutional architecture that is widely used in computer vision applications, to take a shot at explaining this concept.
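For readers who would like to see the idea of gradient descent in miniature, the following is a rough sketch rather than a real deep learning system. It adjusts a single weight, not a network of neurons, and the toy data, the function names (`mean_squared_error`, `gradient`, `train`) and the learning rate are all assumptions made up for illustration. In a real network, backpropagation is the procedure that computes the equivalent of `gradient` for every weight in every layer.

```python
# Toy data produced by the rule y = 2 * x (an assumption for illustration).
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]


def mean_squared_error(w):
    """Average squared gap between the prediction w * x and the true y."""
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def gradient(w):
    """Slope of the mean squared error with respect to the weight w."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def train(steps=100, learning_rate=0.05, w=0.0):
    """Repeatedly nudge w a small step in the direction that lowers the error."""
    for _ in range(steps):
        w -= learning_rate * gradient(w)
    return w


learned_w = train()
print(learned_w)  # ends up very close to 2, the rule behind the toy data
```

Each pass moves the weight a little way "downhill" on the error, which is where the word descent comes from. A step size that is too large can overshoot, so the learning rate here was chosen by hand for this particular toy problem.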

BAYESIAN is a term that can generally be translated as “probabilistic” or “using the rules of probability.” You may encounter terms like Bayesian machine learning or Bayesian networks; these refer to algorithms that use the rules of probability. The term derives from the name of the Reverend Thomas Bayes (1701 to 1761), who formulated a way to update the likelihood of an event based on new evidence. Bayesian methods are very popular both with computer scientists and with scientists who attempt to model human cognition. Judea Pearl, who is interviewed in this book, received the highest honor in computer science, the Turing Award, in part for his work on Bayesian techniques.
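The kind of update that Bayes formulated can be written in a few lines. The sketch below is only an illustration: the function name `bayes_update`, its parameter names and the numbers passed to it are hypothetical and do not come from any of the interviews.

```python
def bayes_update(prior, p_evidence_if_true, p_evidence_if_false):
    """Return the probability of a hypothesis after seeing one piece of evidence.

    prior                -- belief in the hypothesis before the evidence
    p_evidence_if_true   -- chance of seeing the evidence if the hypothesis holds
    p_evidence_if_false  -- chance of seeing the evidence if it does not
    """
    numerator = p_evidence_if_true * prior
    total_evidence = numerator + p_evidence_if_false * (1.0 - prior)
    return numerator / total_evidence


# Hypothetical numbers: a condition affecting 1 in 100 people, and a test that
# flags 90 percent of true cases but also 5 percent of healthy people.
posterior = bayes_update(prior=0.01, p_evidence_if_true=0.9, p_evidence_if_false=0.05)
print(posterior)
```

With these made-up numbers, a positive result raises the probability from 1 percent to roughly 15 percent. The evidence moves the estimate a long way, yet it remains far from certainty because the condition was rare to begin with.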

There are several ways that machine learning systems can be trained. Innovation in this area—finding better ways to teach AI systems—will be critical to future progress in the field.

SUPERVISED LEARNING involves providing carefully structured training data that has been categorized or labeled to a learning algorithm. For example, you could teach a deep learning system to recognize a dog in photographs by feeding it many thousands (or even millions) of images containing a dog. Each of these would be labeled “Dog.” You would also need to provide a huge number of images without a dog, labeled “No Dog.” Once the system has been trained, you can then input entirely new photographs, and the system will tell you either “Dog” or “No Dog”—and it might well be able to do this with a proficiency that exceeds that of a typical human being.

Supervised learning is by far the most common technique used in current AI systems, accounting for perhaps 95 percent of practical applications. Supervised learning powers language translation (trained with millions of documents pre-translated into two different languages) and AI radiology systems (trained with millions of medical images labeled either “Cancer” or “No Cancer”). One problem with supervised learning is that it requires massive amounts of labeled data. This explains why companies that control huge amounts of data, like Google, Amazon, and Facebook, have such a dominant position in deep learning technology.
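The train-then-predict pattern of supervised learning can be shown with a deliberately tiny example. This sketch does not use deep learning. It substitutes a much simpler nearest-centroid classifier, and the two-number "features" standing in for each photograph are invented for illustration, as are the function names `train` and `predict`.

```python
def train(examples):
    """Average the feature vectors for each label to get one centroid per label."""
    sums, counts = {}, {}
    for features, label in examples:
        total = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            total[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in total] for label, total in sums.items()}


def predict(centroids, features):
    """Return the label whose centroid is closest to the new example."""
    def squared_distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(centroid, features))
    return min(centroids, key=lambda label: squared_distance(centroids[label]))


# Hypothetical labeled training data: (features, label) pairs.
labeled_examples = [
    ([0.9, 0.8], "Dog"), ([0.8, 0.9], "Dog"),
    ([0.1, 0.2], "No Dog"), ([0.2, 0.1], "No Dog"),
]

model = train(labeled_examples)
print(predict(model, [0.85, 0.7]))  # a new, unlabeled example
```

The essential point carries over to far larger systems: the labels do the teaching. Without the "Dog" and "No Dog" tags attached to the training examples, `train` would have nothing to learn from.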

REINFORCEMENT LEARNING essentially means learning through practice or trial and error. Rather than training an algorithm by providing the correct, labeled outcome, the learning system is set loose to find a solution for itself, and if it succeeds it is given a “reward.” Imagine training your dog to sit, and if he succeeds, giving him a treat. Reinforcement learning has been an especially powerful way to build AI systems that play games. As you will learn from the interview with Demis Hassabis in this book, DeepMind is a strong proponent of reinforcement learning and relied on it to create the AlphaGo system.

The problem with reinforcement learning is that it requires a huge number of practice runs before the algorithm can succeed. For this reason, it is primarily used for games or for tasks that can be simulated on a computer at high speed. Reinforcement learning can be used in the development of self-driving cars—but not by having actual cars practice on real roads. Instead virtual cars are trained in simulated environments. Once the software has been trained it can be moved to real-world cars.
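Trial-and-error learning with rewards can also be sketched in a few lines. The example below is a simple "two-armed bandit" using an epsilon-greedy strategy, which is a much simpler setting than the systems discussed in the interviews and is not how AlphaGo was built. The reward probabilities, the number of trials and the name `run_bandit` are assumptions chosen for illustration.

```python
import random


def run_bandit(reward_probs, trials=2000, epsilon=0.1, seed=0):
    """Learn by trial and error which action pays off most often."""
    rng = random.Random(seed)
    estimates = [0.0] * len(reward_probs)  # learned value of each action
    pulls = [0] * len(reward_probs)        # how often each action was tried
    for _ in range(trials):
        if rng.random() < epsilon:
            # Explore: occasionally try an action at random.
            action = rng.randrange(len(reward_probs))
        else:
            # Exploit: otherwise pick the action that looks best so far.
            action = max(range(len(estimates)), key=lambda a: estimates[a])
        # The environment hands out a reward of 1 or 0 (the "treat").
        reward = 1.0 if rng.random() < reward_probs[action] else 0.0
        pulls[action] += 1
        # Move the running average for this action toward the observed reward.
        estimates[action] += (reward - estimates[action]) / pulls[action]
    return estimates, pulls


# Hypothetical environment: action 0 is rewarded 20 percent of the time,
# action 1 is rewarded 80 percent of the time.
estimates, pulls = run_bandit([0.2, 0.8])
best_action = max(range(len(estimates)), key=lambda a: estimates[a])
print(best_action, pulls)
```

Nobody tells the program which action is correct. It has to discover that through thousands of attempts, which is why the approach suits games and simulations where practice runs are cheap.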

UNSUPERVISED LEARNING means teaching machines to learn directly from unstructured data coming from their environments. This is how human beings learn. Young children, for example, learn languages primarily by listening to their parents. Supervised learning and reinforcement learning also play a role, but the human brain has an astonishing ability to learn simply by observation and unsupervised interaction with the environment.

Unsupervised learning represents one of the most promising avenues for progress in AI. We can imagine systems that can learn by themselves without the need for huge volumes of labeled training data. However, it is also one of the most difficult challenges facing the field. A breakthrough that allowed machines to efficiently learn in a truly unsupervised way would likely be considered one of the biggest events in AI so far, and an important waypoint on the road to human-level AI.

ARTIFICIAL GENERAL INTELLIGENCE (AGI) refers to a true thinking machine. AGI is typically considered to be more or less synonymous with the terms HUMAN-LEVEL AI or STRONG AI. You’ve likely seen several examples of AGI, but they have all been in the realm of science fiction. HAL from 2001: A Space Odyssey, the Enterprise’s main computer (or Mr. Data) from Star Trek, C-3PO from Star Wars and Agent Smith from The Matrix are all examples of AGI. Each of these fictional systems would be capable of passing the TURING TEST; that is, these AI systems could carry out a conversation so that they would be indistinguishable from a human being. Alan Turing proposed this test in his 1950 paper, Computing Machinery and Intelligence, which arguably established artificial intelligence as a modern field of study. In other words, AGI has been the goal from the very beginning.

It seems likely that if we someday succeed in achieving AGI, that smart system will soon become even smarter. In other words, we will see the advent of SUPERINTELLIGENCE, or a machine that exceeds the general intellectual capability of any human being. This might happen simply as a result of more powerful hardware, but it could be greatly accelerated if an intelligent machine turns its energies toward designing even smarter versions of itself. This might lead to what has been called a “recursive improvement cycle” or a “fast intelligence take off.” This is the scenario that has led to concern about the “control” or “alignment” problem—where a superintelligent system might act in ways that are not in the best interest of the human race.

I have judged the path to AGI and the prospect for superintelligence to be topics of such high interest that I have discussed these issues with everyone interviewed in this book.

MARTIN FORD is a futurist and the author of two books: The New York Times Bestselling Rise of the Robots: Technology and the Threat of a Jobless Future (winner of the 2015 Financial Times/McKinsey Business Book of the Year Award and translated into more than 20 languages) and The Lights in the Tunnel: Automation, Accelerating Technology and the Economy of the Future, as well as the founder of a Silicon Valley-based software development firm. His TED Talk on the impact of AI and robotics on the economy and society, given on the main stage at the 2017 TED Conference, has been viewed more than 2 million times.

Martin is also the consulting artificial intelligence expert for the new “Rise of the Robots Index” from Societe Generale, underlying the Lyxor Robotics & AI ETF, which is focused specifically on investing in companies that will be significant participants in the AI and robotics revolution. He holds a computer engineering degree from the University of Michigan, Ann Arbor and a graduate business degree from the University of California, Los Angeles.

He has written about future technology and its implications for publications including The New York Times, Fortune, Forbes, The Atlantic, The Washington Post, Harvard Business Review, The Guardian, and The Financial Times. He has also appeared on numerous radio and television shows, including NPR, CNBC, CNN, MSNBC and PBS. Martin is a frequent keynote speaker on the subject of accelerating progress in robotics and artificial intelligence—and what these advances mean for the economy, job market and society of the future.

Martin continues to focus on entrepreneurship and is actively engaged as a board member and investor at Genesis Systems, a startup company that has developed a revolutionary atmospheric water generation (AWG) technology. Genesis will soon deploy automated, self-powered systems that will generate water directly from the air at industrial scale in the world’s most arid regions.