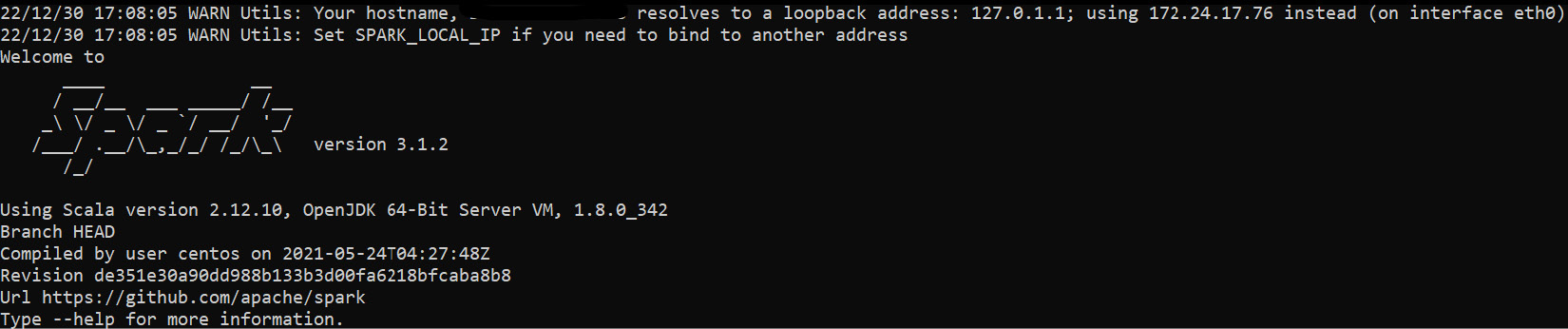

Using PySpark with Defined and Non-Defined Schemas

In general, a schema is a definition used to create or apply structure to data. If you work, or will work, with large volumes of data, it is essential to understand how to manipulate DataFrames and how to apply structure when the information involved needs more context.

However, as seen in the previous chapters, data can come from different sources or arrive without a well-defined structure, which can make applying a schema challenging. Here, we will see how to create schemas and standard formats using PySpark with both structured and unstructured data.

In this chapter, we will cover the following recipes:

- Applying schemas to data ingestion

- Importing structured data using a well-defined schema

- Importing unstructured data with an undefined schema

- Ingesting unstructured data with a well-defined schema and format

- Inserting formatted SparkSession logs to facilitate your work...