What is business intelligence? Before answering this question, I want to pose and answer another question. What isn't business intelligence? It is not spreadsheet analysis done with transactional data with hundreds of thousands of rows. One of the goals of Business Intelligence (BI) is to shield the users of the data from the intelligent logic lurking behind the scenes of the application that is delivering that same data to them. If the integrity of the data is compromised in any way by an individual not intimately familiar with the data source, then there cannot, by definition, be intelligence in the business decisions made with that same data. The following statement is a common theme that will be revisited frequently throughout the book:

Business intelligence works best when the intelligent logic and data delivery are isolated to ensure a single source of truth.

This single source of truth is the key for any business intelligence operation whether it is a Mom-and-Pop soda shop or a Fortune 500 company. Any successfully built report, dashboard, or application that is delivering information to a user through a BI tool must allow transparency between the numbers available in the data source and those that appear in the final product. If the numbers cannot be traced back to the original source, trust between the user and the data will be compromised and this will ultimately defeat the overall purpose of business intelligence.

In my opinion, the most successful tools used for business intelligence directly shield the business user from the query logic used to display that same data in a visual manner of some kind. Business intelligence has taken many forms in terms of labels over the years. For the purposes of this book, we will use the following definition:

Business intelligence is the process of delivering actionable business decisions from analytical manipulation and presentation of data within the confines of a business environment.

The delivery process mentioned in the definition is where the bulk of this book will focus its attention. The beauty of BI is that it is not owned by any one particular tool that is proprietary to a specific industry or company. Business intelligence can be delivered using many different tools, including some that were not even originally intended to be used for BI. The tool itself should not be the place where the query logic is applied to generate the business logic of the data. The tool should primarily serve as the delivery mechanism for the query that is generated by the data warehouse, which houses both the data and the logic.

Before we continue on with this chapter, a little bit about me may help you understand where I'm coming from. I started out as a data analyst over 10 years ago, in 2005, using what was at that time a very popular tool called BusinessObjects. I thought I knew what I was talking about and was quite confident in my skills as an analyst. One day I saw a position open in a department that I wanted to work in. I had thought the qualifications were within my wheelhouse, but apparently the interviewing manager didn't see it the same way. Initially, he was eager and excited to interview me. He must've thought that on paper I was a strong candidate. However, as he kept asking me question after question about my SQL skills, his facial expressions showed frustration and disappointment. I will never forget what he said to me at the end: "You have glaring weaknesses." I was quite heartbroken at the time but ultimately used the opportunity to strengthen my technical skills so that I would never put myself in that position again.

I would dig behind the reports to understand the hidden query logic that is pushed out to users. I would then take the query logic (SQL) and connect directly to the data source to understand the logic behind the scenes, which was not directly evident in the final product. As a data analyst, I found my true passion in data visualization (dataviz). Regardless of the data available, I found that I could tell a story with a chart or graph more effectively than a spreadsheet.

However, I didn't always have the relevant data available to do the visualizations that were needed or requested by the business. That ultimately took me down the path from a frontend developer to a backend developer. Only in the backend could I ensure that I had the necessary data available, at the level of granularity needed for it to be properly visualized in the front end. As you go through this chapter and other chapters in this book, you will see that I place an emphasis on getting the data right at the earliest stage possible to prevent tedious workarounds for the end users. I love the field of business intelligence and hope to continue to work to bridge the gap between what a business needs and what IT can deliver. More and more now, business intelligence is becoming its own department within an organization as opposed to two separate silos that do not communicate using the same language... literally.

I find that the best way to learn a new tool or a programming language is to plunge in and begin developing right away. Performing basic tasks is good but they don't have to be the goal of an assignment; they should be the means to an end result. If you approach this book as more of a cookbook of recipes for delivering powerful BI applications, you will find much success. The way we will approach a design session is not necessarily the only way, but just one of many ways. Many of these tools are in their infancy and will change as new versions are released in the upcoming years, but they will only grow in their abilities to provide new insights to users. The goal of this book is to get you up and running in every chapter with downloading, installing, and developing a business intelligence application with a different tool. In the back of my mind, as I wrote each chapter, I couldn't help but think about that young aspiring BI developer who was humbled by that interview over 10 years ago and what would have helped him be better prepared for that job.

In this chapter, we will cover the following topics:

Understanding the Kimball method

Understanding business intelligence architecture

Who will benefit from this book?

Working with Data and SQL

Working with business intelligence tools

Downloading and installing MS SQL Server 2014

Downloading and installing AdventureWorks

As we discuss the data warehouse where our data is being housed, we would be remiss not to bring up Ralph Kimball, one of the original architects of the data warehouse. Kimball's methodology incorporated dimensional modeling, which has become the standard for modeling a data warehouse for business intelligence purposes. Dimensional modeling incorporates joining tables that have detail data and tables that have lookup data.

A detail table is known as a fact table in dimensional modeling. An example of a fact table would be a table holding thousands of rows of transactional sales from a retail store. The table will house several IDs affiliated with the product, the salesperson, the purchase date, and the purchaser, just to name a few. Additionally, the fact table will store numeric data for each individual transaction, such as the sales quantity or sales amount. These numeric values are generally referred to as measures.

While there is usually one fact table, there will also be several lookup or dimensional tables, with one table for each ID that is used in the fact table. So, for example, there would be one dimensional table for the product name affiliated with a product ID. There would be one dimensional table for the month, week, day, and year affiliated with the date ID. These dimensional tables are also referred to as lookup tables, because they basically look up the name that a dimension ID is affiliated with. Usually, you would find as many dimensional tables as there are IDs in the fact table. The dimensional tables will all be joined to one fact table, creating something of a "star" look. Hence, the name for this table arrangement is star schema, as seen in the following screenshot:

It is likely that the fact table will be the largest table in a data warehouse, while the lookup tables will most likely have far fewer rows, some as few as one. The tables are joined by keys, known as foreign keys and primary keys. A foreign key in a fact table references a unique identifier, the primary key, in a separate lookup table. Foreign keys allow the most efficient join between a fact table and a dimensional table as they are usually a numeric data type. The purpose of a foreign key is to locate a single row in a lookup table to join to and establish a relationship. This rule is referred to as the referential integrity constraint and it exists to ensure that every key in a detail or fact table matches exactly one description in a lookup or dimensional table. As more rows are added to a lookup table, each new dimension value is just given the next identifier number in line, usually starting with something like 1. Query performance on table joins suffers when we introduce non-numeric characters into the join key, or worse, symbols (although most databases will not allow that).
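To make the relationship between a fact table and its lookup tables concrete, the following is a minimal sketch in Python 3 that uses the standard-library sqlite3 module in place of SQL Server. The table names (dim_product, dim_date, fact_sales), the column names, and the sample rows are all hypothetical and invented for illustration; they are not part of AdventureWorks.

```python
import sqlite3

# Star join: measures come from the fact table, names from the dimension tables.
STAR_QUERY = """
    SELECT p.product_name, d.year,
           SUM(f.sales_quantity) AS total_quantity,
           ROUND(SUM(f.sales_amount), 2) AS total_amount
    FROM fact_sales AS f
    JOIN dim_product AS p ON p.product_id = f.product_id
    JOIN dim_date AS d ON d.date_id = f.date_id
    GROUP BY p.product_name, d.year
    ORDER BY p.product_name, d.year
"""


def build_star_schema():
    """Create a tiny, hypothetical star schema in an in-memory SQLite database."""
    conn = sqlite3.connect(":memory:")
    # SQLite only enforces foreign keys when this pragma is switched on.
    conn.execute("PRAGMA foreign_keys = ON")
    conn.executescript("""
        CREATE TABLE dim_product (
            product_id INTEGER PRIMARY KEY,
            product_name TEXT NOT NULL
        );
        CREATE TABLE dim_date (
            date_id INTEGER PRIMARY KEY,
            year INTEGER, month INTEGER, day INTEGER
        );
        CREATE TABLE fact_sales (
            sale_id INTEGER PRIMARY KEY,
            product_id INTEGER NOT NULL REFERENCES dim_product(product_id),
            date_id INTEGER NOT NULL REFERENCES dim_date(date_id),
            sales_quantity INTEGER NOT NULL,
            sales_amount REAL NOT NULL
        );
    """)
    conn.executemany("INSERT INTO dim_product VALUES (?, ?)",
                     [(1, "Road Bike"), (2, "Helmet")])
    conn.executemany("INSERT INTO dim_date VALUES (?, ?, ?, ?)",
                     [(1, 2014, 1, 15), (2, 2014, 2, 3)])
    conn.executemany("INSERT INTO fact_sales VALUES (?, ?, ?, ?, ?)",
                     [(1, 1, 1, 2, 2400.0), (2, 1, 2, 1, 1200.0), (3, 2, 1, 3, 105.0)])
    conn.commit()
    return conn


def orphan_row_rejected(conn):
    """Return True if a fact row pointing at a missing product is refused."""
    try:
        conn.execute("INSERT INTO fact_sales VALUES (99, 99, 1, 1, 10.0)")
    except sqlite3.IntegrityError:
        conn.rollback()
        return True
    conn.rollback()
    return False


if __name__ == "__main__":
    connection = build_star_schema()
    for row in connection.execute(STAR_QUERY):
        print(row)
    print("Orphan fact row rejected:", orphan_row_rejected(connection))
```

The second function is there to show the referential integrity constraint at work: a fact row whose product ID has no matching row in the lookup table is refused by the database rather than silently stored.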

As this book progresses, I will continue to emphasize the benefits that arise when the bulk of logic used to produce datasets for visualization is pushed to the database level as opposed to the reporting level. There will always be exceptions, where there will be a need to produce some logic at the reporting level, and I will highlight those scenarios as they arise. Ultimately, the database is more adept at handling complex logic and will also be closer to the source of information, so this will make data quality and data validation more straightforward. If we minimize the number of locations where the logic that feeds a particular BI tool is applied, we can retain our single source of truth for our users. For example, if the requirement for a BI dashboard is to show the current and previous years' sales for US regions only, the filter for region code would be ideally applied in the data warehouse as opposed to within the BI tool. The following is a query written in SQL joining two tables from the AdventureWorks database; it highlights the difference between dimensions and measures. The region column is a dimension column and SalesYTD and SalesPY are measure columns:

SELECT region.Name AS Region,
       ROUND(SUM(sales.SalesYTD), 2) AS SalesYTD,
       ROUND(SUM(sales.SalesLastYear), 2) AS SalesPY
FROM [AdventureWorks2014].[Sales].[SalesTerritory] region
LEFT OUTER JOIN [AdventureWorks2014].[Sales].[SalesPerson] sales
    ON sales.TerritoryID = region.TerritoryID
WHERE region.CountryRegionCode = 'US'
GROUP BY region.Name
ORDER BY region.Name ASC

In this example, TerritoryID is serving as the join key between SalesTerritory and SalesPerson. Since the measures are coming from the SalesPerson table, that table will serve as the fact table and SalesPerson.TerritoryID will serve as the foreign key. Since the Region column is dimensional and coming from the SalesTerritory table, that table will serve as the dimensional or lookup table and SalesTerritory.TerritoryID will serve as the dimension ID. In a finely tuned data warehouse, both the fact ID and the dimension ID would be indexed to allow efficient query performance. The output of the SQL statement can be seen in the following screenshot:

This performance is obtained by sorting IDs numerically so that, when one table is joined to another, a matching row can be found by searching only a subset of the table rather than the entire table. When the table is only a few hundred rows, it may not seem necessary to index columns, but when the table grows to a few hundred million rows, it may become necessary.
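The effect of an index can be observed even in a small sandbox. The following is a rough sketch in Python 3 using the standard-library sqlite3 module and SQLite's EXPLAIN QUERY PLAN statement; SQL Server exposes its execution plans differently, so this only illustrates the idea. The fact_sales table, its columns, and the index name are hypothetical.

```python
import sqlite3

QUERY = "SELECT SUM(sales_amount) FROM fact_sales WHERE territory_id = 3"


def build_fact_table(row_count=1000):
    """Create an unindexed, hypothetical fact table with generated rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE fact_sales ("
        "sale_id INTEGER PRIMARY KEY, territory_id INTEGER, sales_amount REAL)"
    )
    rows = [(i, i % 10, float(i)) for i in range(1, row_count + 1)]
    conn.executemany("INSERT INTO fact_sales VALUES (?, ?, ?)", rows)
    conn.commit()
    return conn


def query_plan(conn, sql):
    """Return the human-readable detail column of SQLite's query plan."""
    return [row[-1] for row in conn.execute("EXPLAIN QUERY PLAN " + sql)]


def plans_before_and_after_index():
    """Capture the plan for the same query without and with an index."""
    conn = build_fact_table()
    before = query_plan(conn, QUERY)
    conn.execute("CREATE INDEX ix_fact_sales_territory ON fact_sales (territory_id)")
    after = query_plan(conn, QUERY)
    return before, after


if __name__ == "__main__":
    before, after = plans_before_and_after_index()
    print("Without index:", before)
    print("With index:   ", after)
```

Without the index, SQLite reports a scan of the whole table; once the index exists, it reports a search that uses the index, which is the "subset of the table" behavior described above. The exact wording of the plan varies between SQLite versions.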

Why is it then that quite often the logic is not applied at the database level but instead at the reporting level on a Tableau dashboard or a Qlik application? Frequently a user of the dashboard will get a request to filter out parts of the results. The user will go to the dashboard developer and put in the request. Sometimes this request goes through an arduous ticketing process with IT that could take weeks or even months. So rather than rely on IT to make the change, the dashboard developer will apply the filter logic at the reporting level instead. If these filters are being performed to correct data quality issues, then applying the logic at the reporting level just masks a more serious issue that needs to be addressed across the entire data warehouse. You will be performing a disservice in the long run as you will be establishing a precedent for data quality being handled by the report developer as opposed to the database administrator. This can ultimately take an organization down a slippery slope towards multiple sources of truth.
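One way to keep a filter at the database level is to define it once in a view that every dashboard reads from. The sketch below illustrates this in Python 3 with the standard-library sqlite3 module standing in for SQL Server. The simplified table layouts, the view name v_us_region_sales, and the sample figures are assumptions made up for this example.

```python
import sqlite3


def build_warehouse():
    """Create a tiny stand-in warehouse whose US filter lives in a view."""
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE sales_territory (
            territory_id INTEGER PRIMARY KEY,
            name TEXT NOT NULL,
            country_region_code TEXT NOT NULL
        );
        CREATE TABLE sales_person (
            person_id INTEGER PRIMARY KEY,
            territory_id INTEGER REFERENCES sales_territory(territory_id),
            sales_ytd REAL NOT NULL
        );
        INSERT INTO sales_territory VALUES
            (1, 'Northwest', 'US'), (2, 'Southwest', 'US'), (3, 'Canada', 'CA');
        INSERT INTO sales_person VALUES
            (1, 1, 100.0), (2, 1, 50.0), (3, 2, 75.0), (4, 3, 500.0);

        -- The region filter is applied here, once, for every consumer.
        CREATE VIEW v_us_region_sales AS
        SELECT t.name AS region,
               ROUND(SUM(p.sales_ytd), 2) AS sales_ytd
        FROM sales_territory AS t
        LEFT JOIN sales_person AS p ON p.territory_id = t.territory_id
        WHERE t.country_region_code = 'US'
        GROUP BY t.name;
    """)
    return conn


def dashboard_rows(conn):
    """Stand-in for any BI tool: it selects from the shared view and adds no filters."""
    sql = "SELECT region, sales_ytd FROM v_us_region_sales ORDER BY region"
    return conn.execute(sql).fetchall()


if __name__ == "__main__":
    for region, sales_ytd in dashboard_rows(build_warehouse()):
        print(region, sales_ytd)
```

Because the filter is part of the view, two different tools that both read v_us_region_sales cannot disagree about which regions are included, which is the single source of truth argued for above.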

Ideal BI tools will quickly connect to the data source and then allow for slicing and dicing of your dimensions and measures in a manner that will quickly inform the business of useful and practical information. Ultimately, the choice of a BI tool by an individual or an organization will come down to the ease of use of the tool as well as the flexibility to showcase data through various components such as graphs, charts, widgets, and infographics.

As you are reading this book, you may be asking yourself, "How will this book benefit me if I'm too technical or if I'm not technical at all? Is the book geared towards managers rather than developers?" The answer to your questions is "Yes." Not every chapter of this book will be for everyone, but having spent the last 10 years in the Business Intelligence industry, I believe there is something for everyone in this book. Certain tools such as Tableau, Qlik, and Power BI allow for quick and flashy visualizations out of the box without much customization. Other tools such as R, Python, and D3.js require more of a programming background, which can lead to massive customization of a visualization but also more of a learning curve when it comes to producing something out of the box.

If you are a business intelligence manager looking to establish a department with a variety of tools to help flesh out your requirements, this book will serve as a good source of interview questions to weed out unqualified candidates. Additionally, the book will highlight specific tools more geared towards data scientists as opposed to data analysts, dashboard developers, and computer programmers. A manager could use this book to distinguish some of the nuances between these different skillsets and prioritize hiring based on immediate needs. In addition to hiring resources, managers are also tasked with licensing decisions based on new and existing software used by their department. At the last count, the Gartner BI Magic Quadrant listed 24 different BI platforms in the current market (https://www.gartner.com/doc/reprints?id=1-2XXET8P&ct=160204). That does not even take into account that some companies, such as SAP, offer multiple sub BI platforms within their main BI platform. This can be a daunting task for a BI manager when it comes to evaluating which platform tool is best suited to meet their organization's needs. With the emphasis on a different BI tool in each chapter, a manager can compare the similarities and differences for each one and evaluate which is more appropriate for them.

Data science is a relatively new position to fill within organizations and in 2012 was deemed the sexiest job of the 21st century by the Harvard Business Review (https://hbr.org/2012/10/data-scientist-the-sexiest-job-of-the-21st-century).

The term data scientist has been more often misused in the BI industry than any other position. It has been lumped in with data analyst as well as BI developer. Unfortunately, these three positions have separate skill sets and you will do yourself a disservice by assuming that one person can do multiple positions successfully. A data scientist will be able to apply statistical algorithms behind the data that is being extracted from the BI tools and make predictions about what will happen in the future with that same dataset. Due to this skill set, a data scientist may find the chapters focusing on R and Python to be of particular importance because of their abilities to leverage predictive capabilities within their BI delivery mechanisms. Very often data scientists find themselves doing the job of a BI developer to prepare the data that they need in a way that allows for statistical analysis. Ideally this task should be left to the BI developer with strong querying skills and allow the data scientist to focus on the hidden story behind the data.

Data analyst is probably the second most misused position behind data scientist. Typically, a data analyst should be analyzing the data that is coming out of the BI tools that are connected to the data warehouse. Most data analysts are comfortable working with Microsoft Excel. Additionally, they may have some working knowledge of how to build or alter existing SQL scripts. Often, they are asked to take on additional roles in developing dashboards that require programming skills outside their comfort level. This is where they would find some comfort using a tool such as Power BI, Tableau, or Qlik. These tools allow a data analyst to quickly develop a storyboard or visualization that allows a quick analysis with minimal programming skills.

A dataviz developer is someone who can create complex visualizations out of data and showcase interesting interactions between different measures inside a dataset that cannot necessarily be seen with a traditional chart or graph. More often than not, these developers possess some programming background such as JavaScript, HTML, or CSS. These developers are also used to developing applications directly for the Web and therefore would find D3.js a comfortable environment to program in.

The examples and exercises that will be utilized in this book will come from the AdventureWorks database. This database has a comprehensive list of tables that mimics a fictional bicycle retailer called AdventureWorks. The examples in this book will draw on different tables from the database to highlight BI reporting from various segments appropriate for the AdventureWorks organization. The areas that we will report on for the AdventureWorks organization are the following:

Human resources

Inventory

Sales

Discounts

A different segment of the data will be highlighted in each chapter, utilizing a specific set of tools. We've already mentioned SQL earlier on in this chapter. SQL, or Structured Query Language, is the programming language used by databases to communicate relationships between all of the tables in their system. The beauty of SQL is that it is pretty much universal with regard to how the tables communicate with each other. A cursory understanding of SQL will be helpful to get a grasp of how data is being aggregated with dimensions and measures. Additionally, an understanding of the SQL statements used will help with the validation process to ensure a single source of truth between the source data and the output inside the BI tool of choice.

Every database environment, whether it is Oracle, Teradata, SAP, or Microsoft, will use a slightly modified version of SQL syntax. The essence is the same but the formatting may be slightly different. Since we will be using Microsoft SQL Server to develop our SQL statements, it will be important for us to become familiar with its formatting and syntax. For more information about learning Microsoft SQL syntax, visit the following website: https://www.techonthenet.com/sql_server/select.php.

Over the course of the last 20 years, there have been a growing number of software products released that were geared towards business intelligence. In addition, there have been a number of software products and programming languages that were not initially built for BI but later on became a staple for the industry. The tools used in this book were chosen based on the fact that they were either built on open source technology or products from companies that provided free versions of their software for development purposes. Many big enterprise firms have their own BI tools and they are quite popular. However, unless you have a license with them, it is unlikely that you will be able to use their tool without having to shell out a small fortune. The tools that we will cover in this book will fall under one of these two general categories:

Traditional programming languages such as R, Python, and D3.js (JavaScript)

Data discovery desktop applications such as Tableau, Qlik, and Power BI

Power BI is one of the relatively newer BI tools from Microsoft. It is known as a self-service solution and integrates seamlessly with other data sources such as Microsoft Excel and Microsoft SQL Server. Our primary purpose in using Power BI will be to generate interactive dashboards, reports, and datasets for users.

In addition to using Power BI, we will also focus on utilizing Microsoft Excel to assist with some data analysis and the validation of results pulled from our data warehouse. Pivot tables are very popular within MS Excel and will be used to validate aggregations done inside the data warehouse.
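The validation idea can be sketched in code as well: aggregate the same detail rows twice, once with SQL and once independently, and compare the totals. The following Python 3 sketch uses only the standard library, with sqlite3 standing in for the data warehouse and a plain dictionary standing in for a pivot table. The sample rows and function names are hypothetical.

```python
import sqlite3
from collections import defaultdict

# Hypothetical detail rows: (region, sales amount).
DETAIL_ROWS = [("Northwest", 100.0), ("Northwest", 50.0), ("Southwest", 75.0)]


def warehouse_totals(rows):
    """Aggregate with SQL, as the data warehouse would."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE sales (region TEXT, amount REAL)")
    conn.executemany("INSERT INTO sales VALUES (?, ?)", rows)
    return dict(conn.execute("SELECT region, SUM(amount) FROM sales GROUP BY region"))


def pivot_totals(rows):
    """Aggregate independently, the way a pivot table would sum by region."""
    totals = defaultdict(float)
    for region, amount in rows:
        totals[region] += amount
    return dict(totals)


def mismatches(expected, actual, tolerance=0.005):
    """Return the regions whose totals differ by more than the tolerance."""
    regions = set(expected) | set(actual)
    return sorted(
        region for region in regions
        if abs(expected.get(region, 0.0) - actual.get(region, 0.0)) > tolerance
    )


if __name__ == "__main__":
    problems = mismatches(warehouse_totals(DETAIL_ROWS), pivot_totals(DETAIL_ROWS))
    print("Regions that do not reconcile:", problems)
```

A small tolerance is used instead of strict equality because floating-point sums computed by two different engines may differ in the last decimal places.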

D3.js, also known as Data-Driven Documents, is a JavaScript library known for its delivery of beautiful visualizations by manipulating documents based on data. Since D3 is rooted in JavaScript, all visualizations make a seamless transition to the Web. D3 allows major customization to any part of a visualization, and because of this flexibility it has a steeper learning curve than probably any other software program discussed in this book. D3 can consume data easily as a .json or a .csv file. Additionally, the data can be embedded directly within the JavaScript code that renders the visualization on the Web.
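As a rough illustration of preparing data in those two formats, the sketch below serializes a small query result to CSV text and to JSON text with Python 3's standard csv and json modules. The column names and the sample row are hypothetical, and the JSON layout (a list with one object per row) is one common choice rather than a requirement of D3.

```python
import csv
import io
import json


def rows_to_csv(columns, rows):
    """Serialize a header and rows as CSV text."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(columns)
    writer.writerows(rows)
    return buffer.getvalue()


def rows_to_json(columns, rows):
    """Serialize rows as a JSON list with one object per row."""
    return json.dumps([dict(zip(columns, row)) for row in rows], indent=2)


if __name__ == "__main__":
    columns = ["Region", "SalesYTD"]
    rows = [("Northwest", 150.0), ("Southwest", 75.0)]
    print(rows_to_csv(columns, rows))
    print(rows_to_json(columns, rows))
```

Either string can then be written to a file with the matching extension and served alongside the page that renders the visualization.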

R is a free and open source statistical programming language that produces beautiful graphics. The R language has been widely used among the statistical community, and more recently in the data science and machine learning community as well. Due to this fact, it has gathered momentum in recent years as a platform for displaying and delivering effective and practical BI. In addition to visualizing BI, R has the ability to visualize predictive analyses with algorithms and forecasts. While R is a bit raw in its interface, some IDEs (Integrated Development Environments) have been developed to ease the user experience. For the purposes of this book, RStudio will be used to deliver visualizations developed within R.

Python is considered the most traditional programming language of all the different languages that will be covered in this book. It is a widely used general-purpose programming language with several modules that are very powerful in analyzing and visualizing data. Similar to R, Python is a bit raw in its own form for delivering beautiful graphics as a BI tool; however, with the incorporation of an IDE, the user interface becomes a much more pleasurable development experience. PyCharm will be the IDE used to develop BI with Python. PyCharm is free to use and allows the creation of the IPython (now called Jupyter) notebook, which delivers seamless integration between Python and the powerful modules that will assist with BI.

Tip

As a note, for the purposes of this book all code in Python will be developed using the Python 3 syntax.

Qlik is a software company specializing in delivering business intelligence solutions using their desktop tool. Qlik is one of the leaders in delivering quick visualizations based on data and queries through their desktop application. They advertise themselves as a self-service BI for business users. While they do offer solutions that target more enterprise organizations, they also offer a free version of their tool for personal use. It is this version that will be discussed in this book. Tableau is probably the closest competitor to Qlik in terms of delivering similar BI solutions.

Tableau is a software company specializing in delivering business intelligence solutions using their desktop tool. If this sounds similar to Qlik, that's because it is. Both are leaders in the field of establishing a delivery mechanism with easy installation, setup, and connectivity to the available data. Tableau has a free version of their desktop tool, which will be primarily used in discussions in this book. Again, Tableau excels at delivering both quick, beautiful visualizations and self-service data discovery to more advanced business users.

Microsoft SQL Server 2014 will serve as the data warehouse for the examples that we will use with the BI tools discussed previously in this chapter. Microsoft SQL Server is relatively simple to install and set up, and it is free to download. Additionally, there are example databases that configure seamlessly with it, such as the AdventureWorks database.

First things first. We will need to get started with getting our database and data warehouse up and running so that we can begin to develop our BI environment.

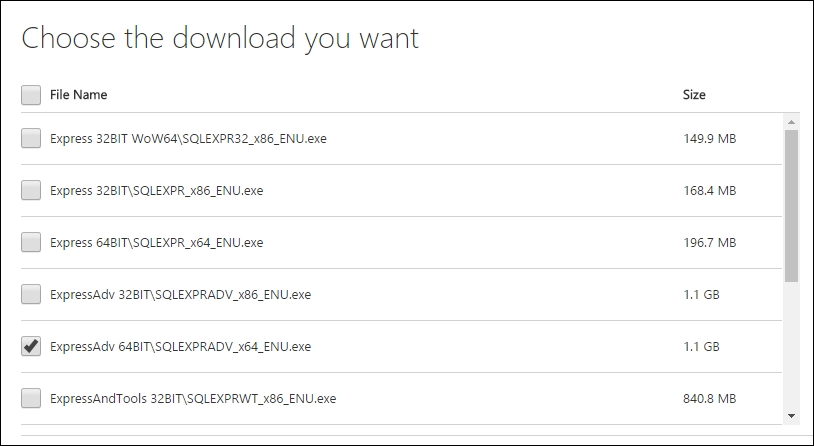

We will visit this Microsoft link to start the download selection process: https://www.microsoft.com/en-us/download/details.aspx?id=42299.

Select the specified language that is applicable to you and also select the MS SQL Server Express version with advanced features, that is, the 64-bit edition, as shown in the following screenshot:

Ideally you'll want to be working in the 64-bit edition when dealing with servers. After selecting the file, the download process should begin. Depending on your connection speed, it could take some time as the file is slightly larger than 1 GB.

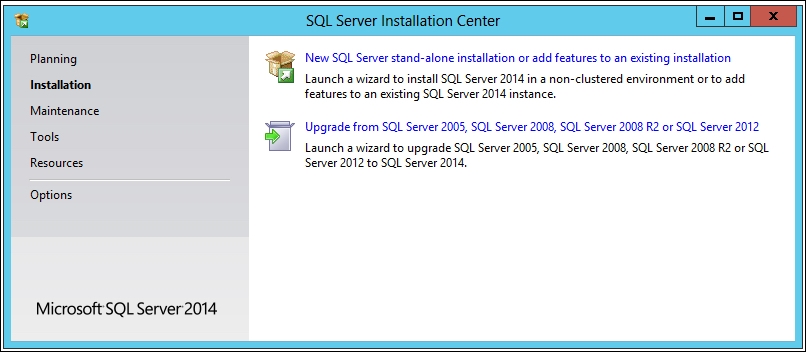

The next step in the process is selecting a new standalone instance of SQL Server 2014, as shown in the following screenshot:

After accepting the license terms, continue through the steps in Global Rules, as well as Product Updates, to get to the setup installation files.

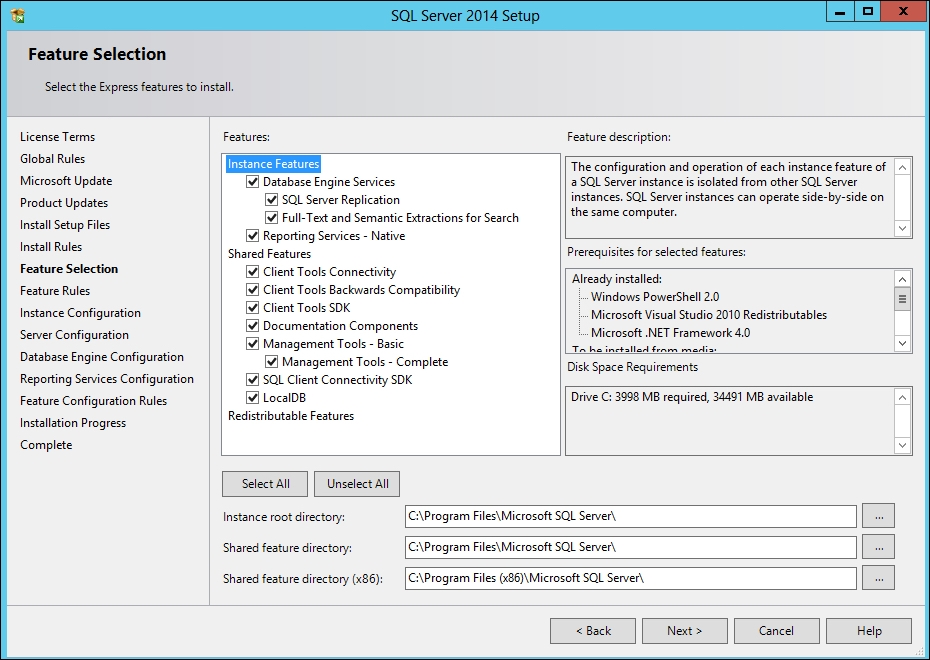

For the Features selection tab, make sure that the following features are selected for your installation:

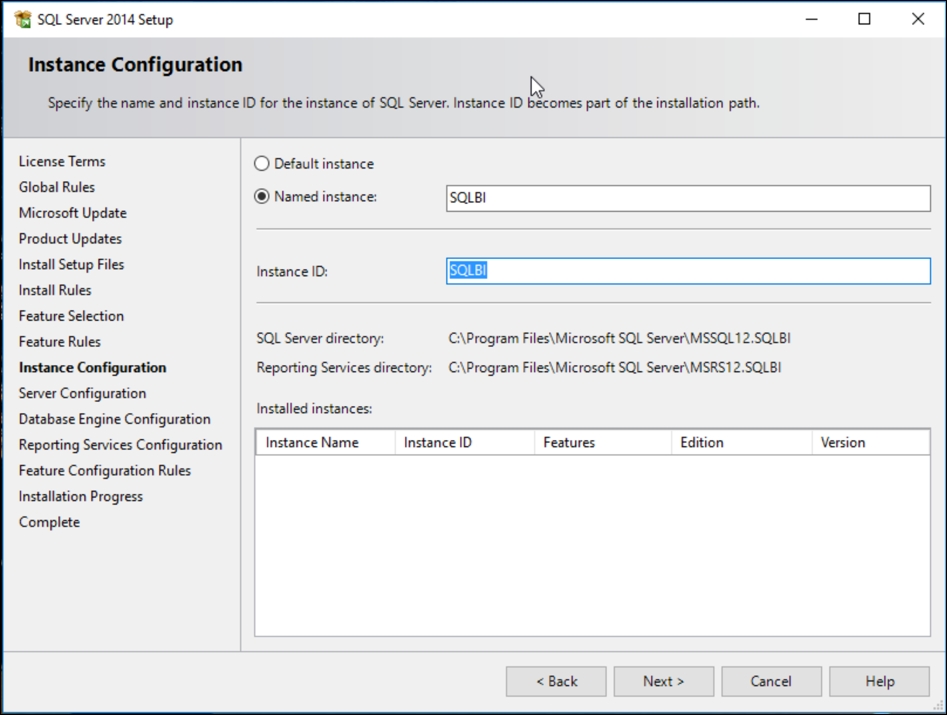

Our preference is to label a Named instance of this database with something related to the environment we will be developing in. Since this will be used for business intelligence, I went ahead and named this instance SQLBI, as shown in the following screenshot:

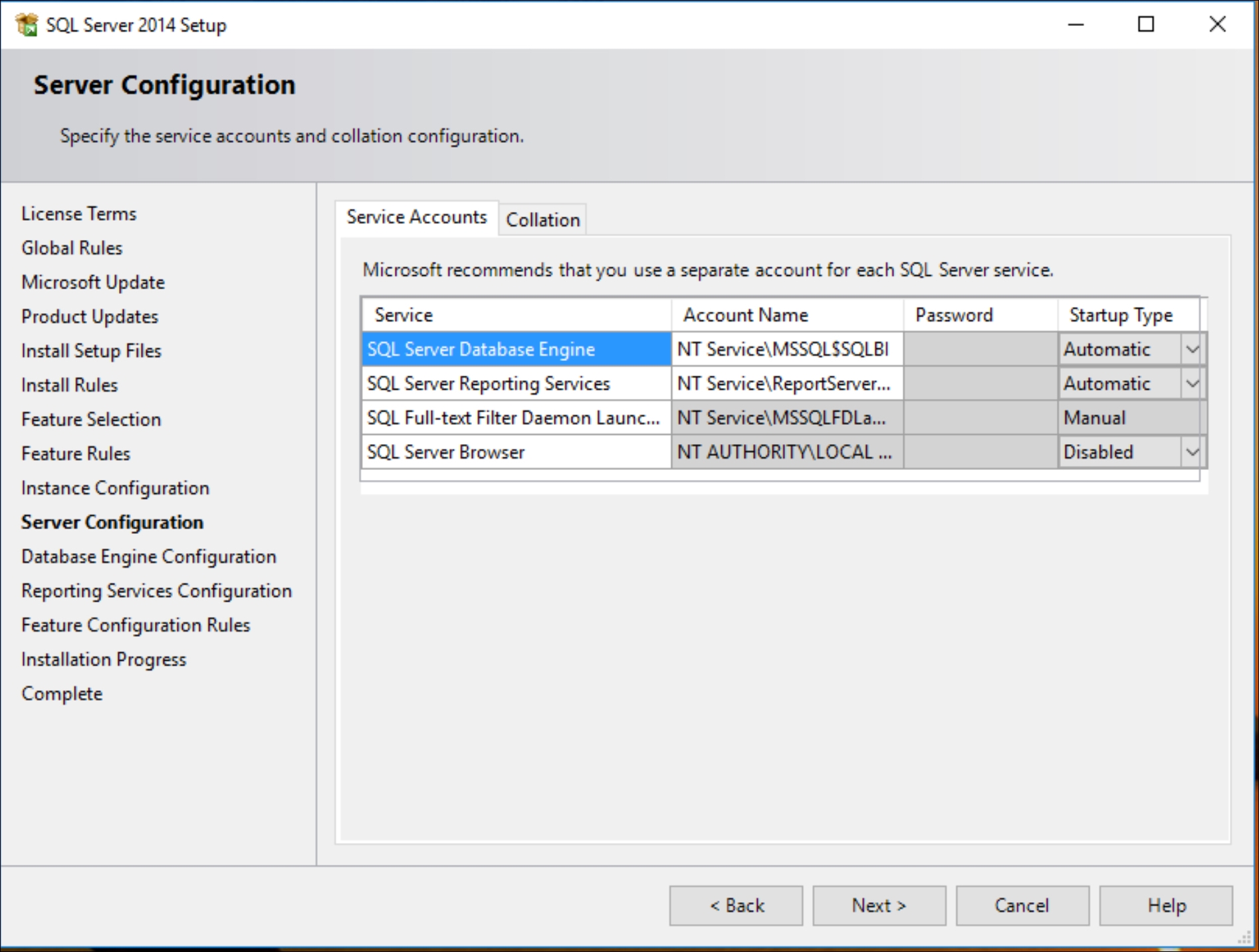

The default Server Configuration settings are sufficient for now; there is no need for further configuration:

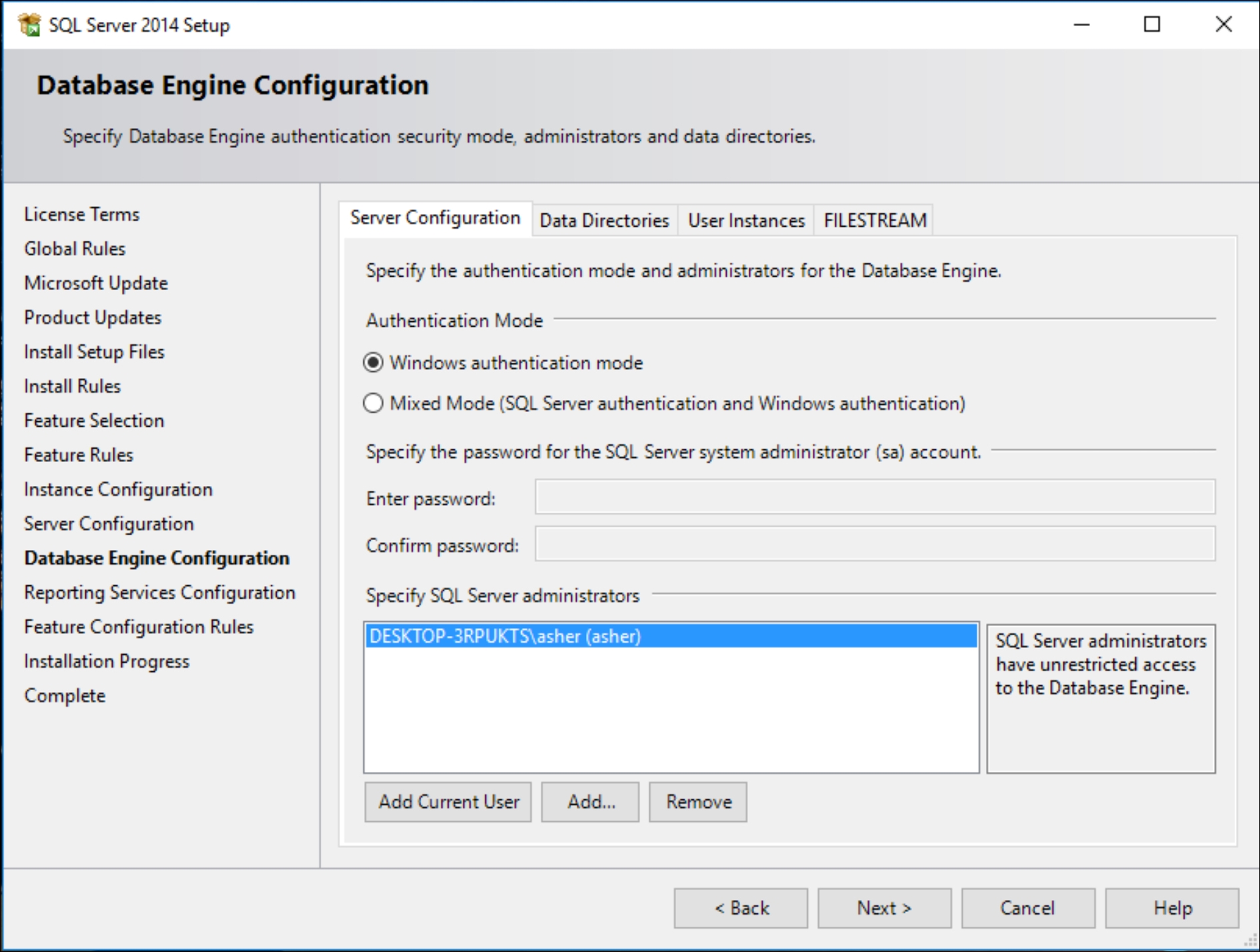

Unless you are required to do so within your company or organization, for personal use it is sufficient to just go with Windows authentication mode for sign-on, as shown in the following screenshot:

We will not need to do any configuring of reporting services, so it is sufficient for our purposes to just proceed with installing Reporting Services Native mode without any need for configuration at this time.

At this point, the installation will proceed and may take anywhere between 20 and 30 minutes, depending on available resources.

Note

If you have issues with your installation, you can visit the following website from Microsoft for additional help: http://social.technet.microsoft.com/wiki/contents/articles/23878.installing-sql-server-2014-step-by-step-tutorial.aspx.

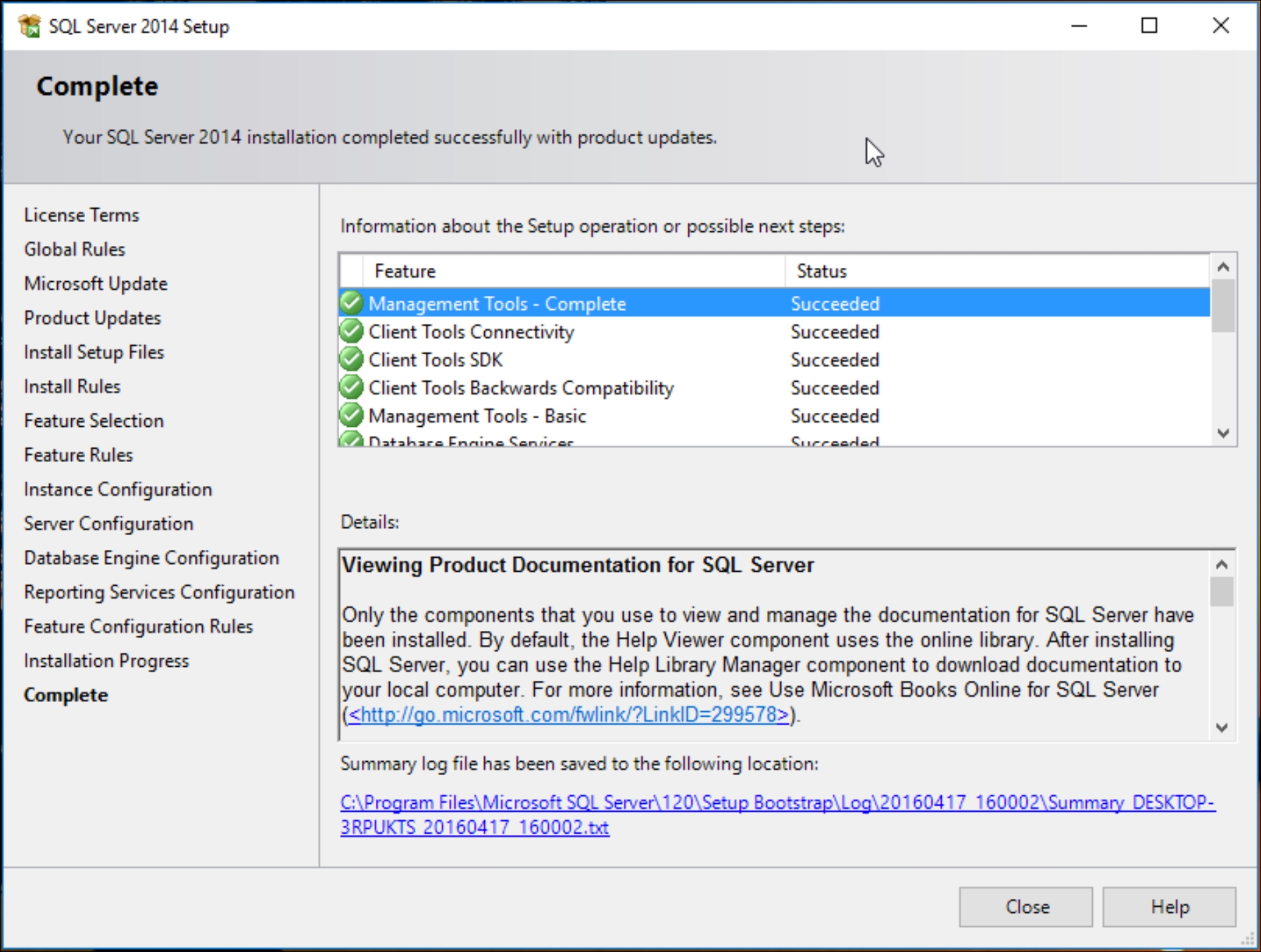

Ultimately, if everything goes well with the installation, you'll want to verify that all portions of the installation have a check mark next to their name and are labeled Succeeded, as shown in the following screenshot:

We are almost finished with establishing our business intelligence data warehouse. We are now at the stage where we will extract and load data into our data warehouse. The last part is to download and install the AdventureWorks database from Microsoft. The zipped file for AdventureWorks 2014 is located at the following link:

https://msftdbprodsamples.codeplex.com/downloads/get/880661.

Once the file is downloaded and unzipped, you will find a file named AdventureWorks2014.bak.

Copy the aforementioned file and paste it in the following folder, where it will be incorporated with your Microsoft SQL Server 2014 Express Edition: C:\Program Files\Microsoft SQL Server\MSSQL12.SQLBI\MSSQL\Backup.

Also note that the MSSQL12.SQLBI subfolder will vary from user to user depending on how you named your SQL instance when you were installing MS SQL Server 2014.

Once that has been copied over, we can fire up Management Studio for SQL Server 2014 and start up a blank new query by going to File | New | Query with Current Connection.

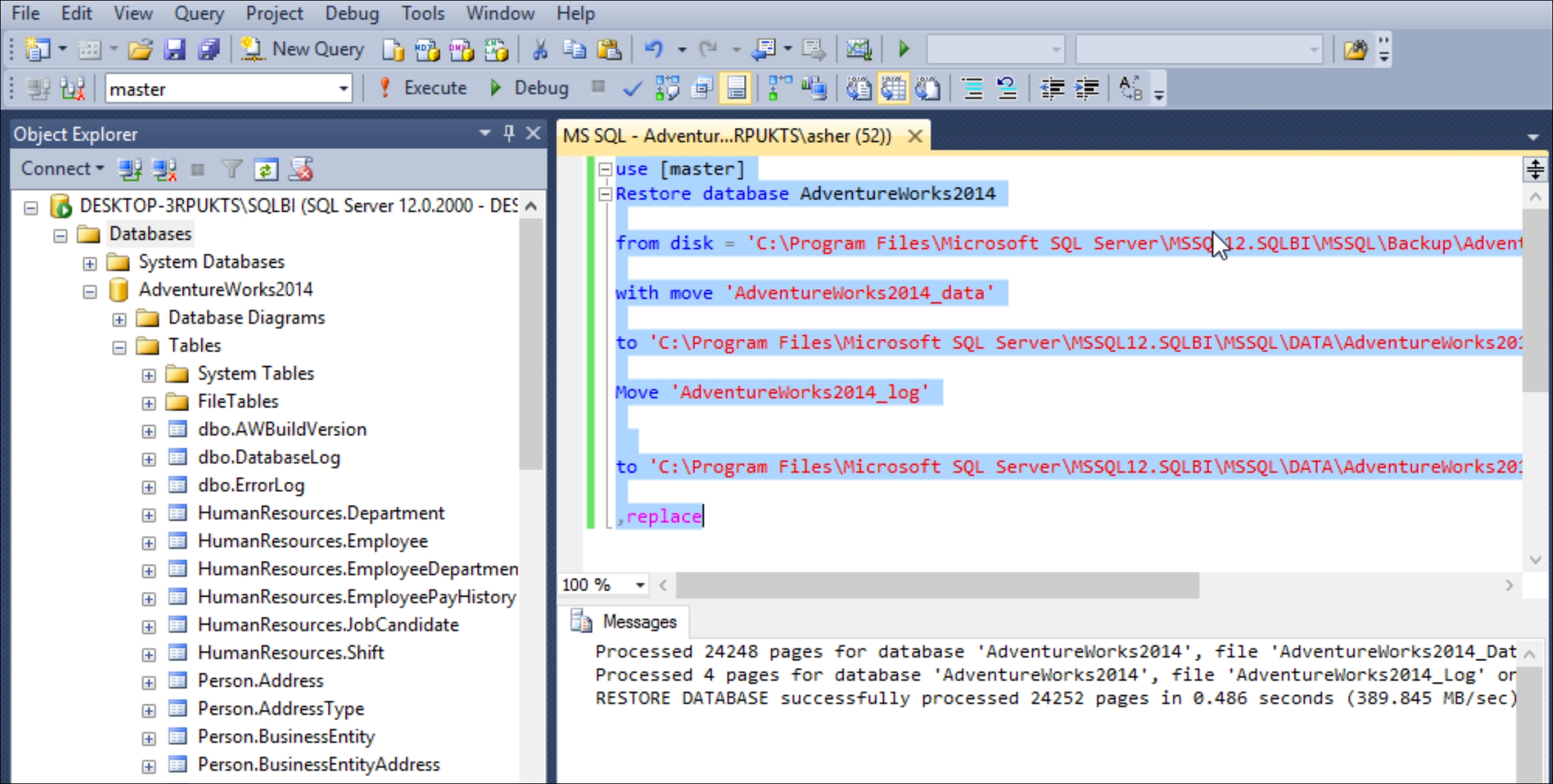

Once you have a blank query set up, copy and paste the following code and execute it:

USE [master]
RESTORE DATABASE AdventureWorks2014
FROM DISK = 'C:\Program Files\Microsoft SQL Server\MSSQL12.SQLBI\MSSQL\Backup\AdventureWorks2014.bak'
WITH MOVE 'AdventureWorks2014_data' TO 'C:\Program Files\Microsoft SQL Server\MSSQL12.SQLBI\MSSQL\DATA\AdventureWorks2014.mdf',
MOVE 'AdventureWorks2014_log' TO 'C:\Program Files\Microsoft SQL Server\MSSQL12.SQLBI\MSSQL\DATA\AdventureWorks2014.ldf',
REPLACE

Once again, note that the MSSQL12.SQLBI subfolder will vary from user to user depending on how you named your SQL instance when you were installing MS SQL Server 2014.
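Since the instance name changes the folder in three places, it can help to generate the statement rather than edit it by hand. The following is a small sketch in Python 3 that builds the same restore statement from an instance name. The function name and its parameters are hypothetical helpers; the folder layout and the logical file names are taken from the statement above, and the MSSQL12 prefix is assumed to match a SQL Server 2014 installation.

```python
from pathlib import PureWindowsPath


def build_restore_statement(instance_name="SQLBI",
                            version_prefix="MSSQL12",
                            root=r"C:\Program Files\Microsoft SQL Server"):
    """Return the T-SQL restore statement for a given named instance."""
    # PureWindowsPath builds Windows-style paths on any operating system.
    base = PureWindowsPath(root) / f"{version_prefix}.{instance_name}" / "MSSQL"
    backup_file = base / "Backup" / "AdventureWorks2014.bak"
    data_file = base / "DATA" / "AdventureWorks2014.mdf"
    log_file = base / "DATA" / "AdventureWorks2014.ldf"
    return (
        "USE [master];\n"
        "RESTORE DATABASE AdventureWorks2014\n"
        f"FROM DISK = '{backup_file}'\n"
        f"WITH MOVE 'AdventureWorks2014_data' TO '{data_file}',\n"
        f"     MOVE 'AdventureWorks2014_log' TO '{log_file}',\n"
        "     REPLACE;"
    )


if __name__ == "__main__":
    # Print the statement so it can be pasted into a Management Studio query window.
    print(build_restore_statement("SQLBI"))
```

Calling the function with a different instance name, for example build_restore_statement("DEV"), changes only the subfolder in each of the three paths.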

At this point, within the database you should have received a message saying that Microsoft SQL Server has Processed 24248 pages for database 'AdventureWorks2014'. Once you have refreshed your database tab in the upper-left corner of SQL Server, the AdventureWorks database will become visible, and so will all the appropriate tables, as shown in this screenshot:

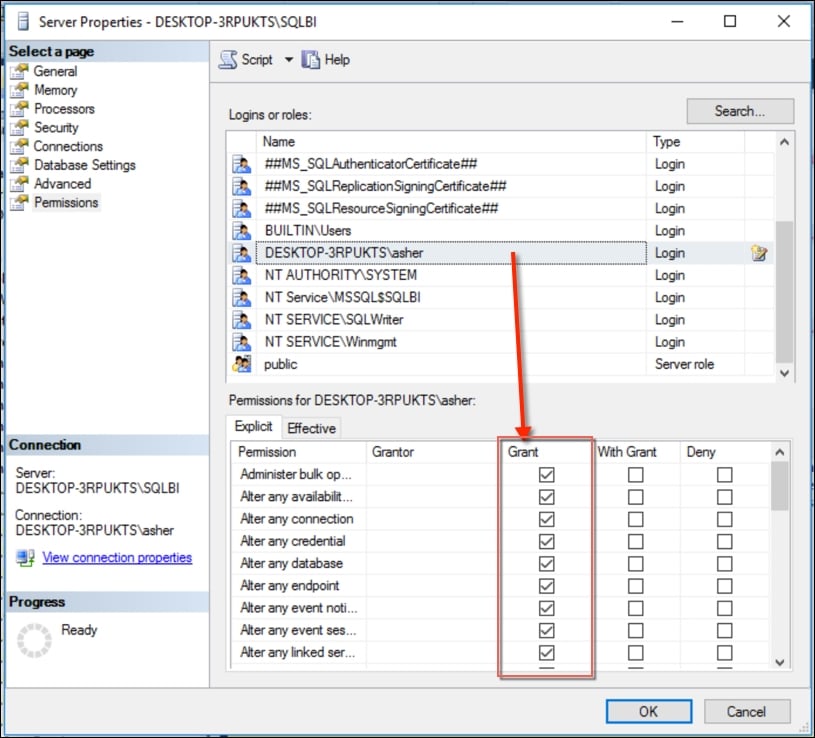

One more step that we will need is to verify that our login account has all the appropriate server settings. We right-click on the SQL Server name in the upper-left portion of Management Studio, select the properties, and then select Permissions inside Properties.

Find your username and check all the rights under the Grant column, as shown in the following screenshot:

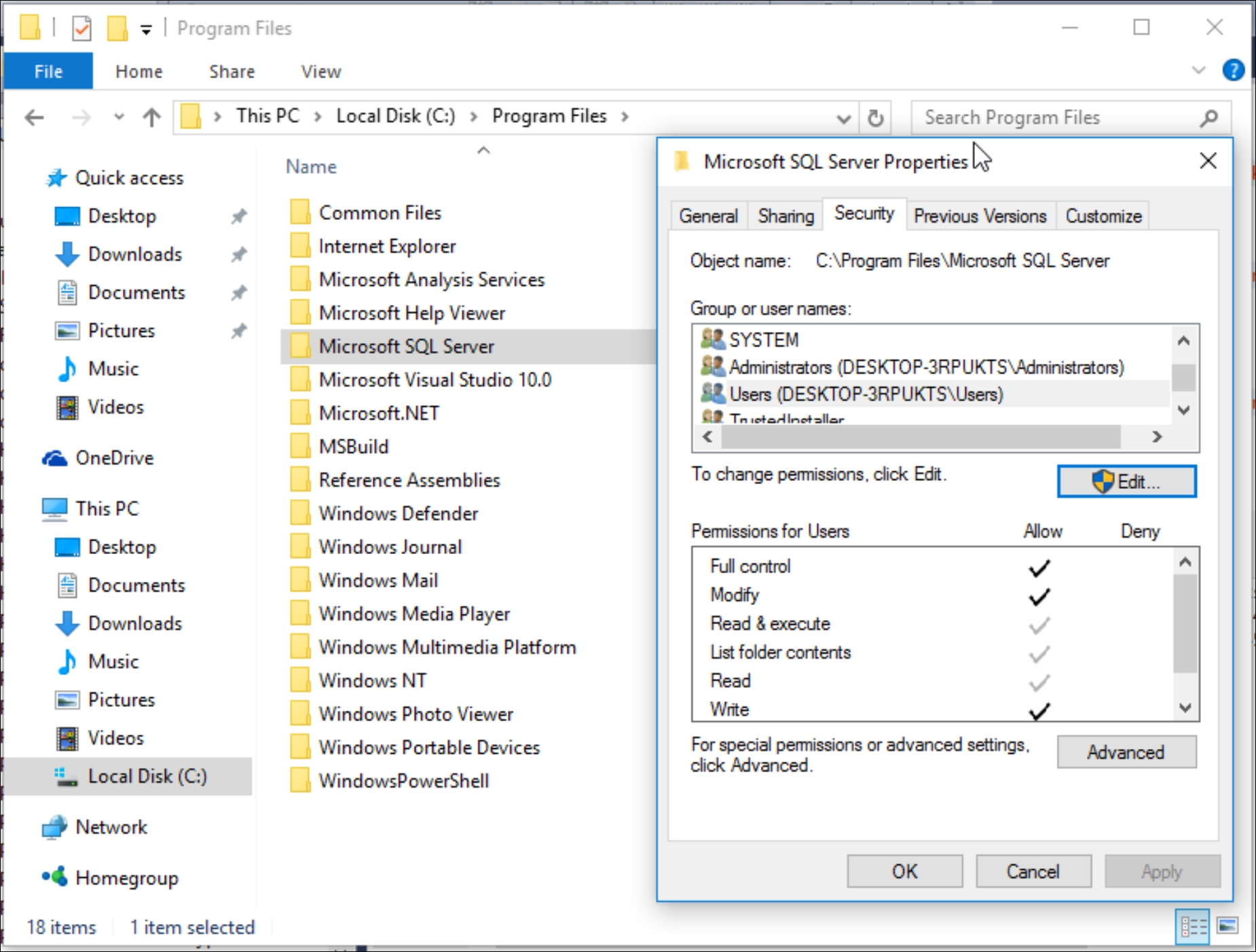

Finally, we need to ensure that the folder that houses Microsoft SQL Server 2014 also has the appropriate rights enabled for our current user. That specific folder is located at C:\Program Files\Microsoft SQL Server\.

For the purposes of our exercises, we will assign all rights for the user or group that will connect to SQL Server to the following folder:

We are finished with installing our data warehouse infrastructure as well as adding our AdventureWorks database to our data warehouse. We are now ready to connect our data warehouse directly to our BI tools.
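Most tools connect to SQL Server through a connection string that names the server, the instance, and the database. The sketch below assembles an ODBC-style string for the SQLBI instance with Windows authentication. The helper name, the localhost server, and the driver name are assumptions; the driver in particular depends on which ODBC driver is installed on your machine.

```python
def build_connection_string(instance_name="SQLBI",
                            server="localhost",
                            database="AdventureWorks2014",
                            driver="ODBC Driver 17 for SQL Server"):
    """Return an ODBC connection string that uses Windows authentication."""
    return (
        f"DRIVER={{{driver}}};"               # driver name is an assumption
        f"SERVER={server}\\{instance_name};"  # server\instance for a named instance
        f"DATABASE={database};"
        "Trusted_Connection=yes;"             # Windows authentication mode
    )


if __name__ == "__main__":
    print(build_connection_string())
```

With a third-party ODBC package such as pyodbc installed, a string like this would typically be passed to its connect function; that step is left out here because it needs a running SQL Server instance.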

We have come to the conclusion of the first chapter and we have covered quite a bit of ground. We summarized the core material regarding the data modeling methodology with the Kimball method. We established our definition of business intelligence, which will be applied throughout the book. We also summarized the various tools that we will be using to implement business intelligence. Our main emphasis will be placed on implementing business intelligence best practices within the various tools, based on the data available to us within the AdventureWorks database.

In the next chapter, we will cover extracting additional data from the Web, which will then be added to the AdventureWorks database. This process is known as web scraping and can be performed with great success using tools such as Python and R. In addition to collecting data, we will focus on transforming the collected data for optimal query performance.
