Welcome to the future. We have been waiting for a long, long time, but it is finally here. The book you hold in your hands would not have been possible half a decade ago. Well, that is not quite true; it was possible, but it would have been shelved under science fiction. Not anymore. The fact that you are reading this right now is proof that changes are taking place at a tremendous pace, and before you know it you will be in a place you never thought possible.

Take a look at the famous quote by Arthur C. Clarke:

Any sufficiently advanced technology is indistinguishable from magic.

This is true for HoloLens as well. Nobody was able to predict this device a decade ago, and now you have it within your reach.

However, no matter how cool the device itself is, it needs a bit of magic to come alive, and that piece of magic is the software that we are going to write. The software that you and your peers think of is going to be the lifeblood of the device. Without it, the device is just a nice-looking piece of hardware. With it, the device is capable of changing the world view of a lot of people. That being said, it is also a lot of fun to write software for the device, so why not get started?

Before we delve into the code, let's first examine what we are talking about. I assume you have the device somewhere near. If you do not have access to one, I suggest that you try to get it. Although the software we are going to build will run on the emulator, you will find that the experience is below par. It just is not the same as developing your software on a real device. Of course, we will use the emulator quite extensively. In real-world scenarios, a typical company will have a team of somewhere between 5 and 15 professionals working on the software, and it would be rather expensive to buy a device for all of them. One or two devices per team would be enough to make sure that the software does what it should do and that the experience is as magical as it can be.

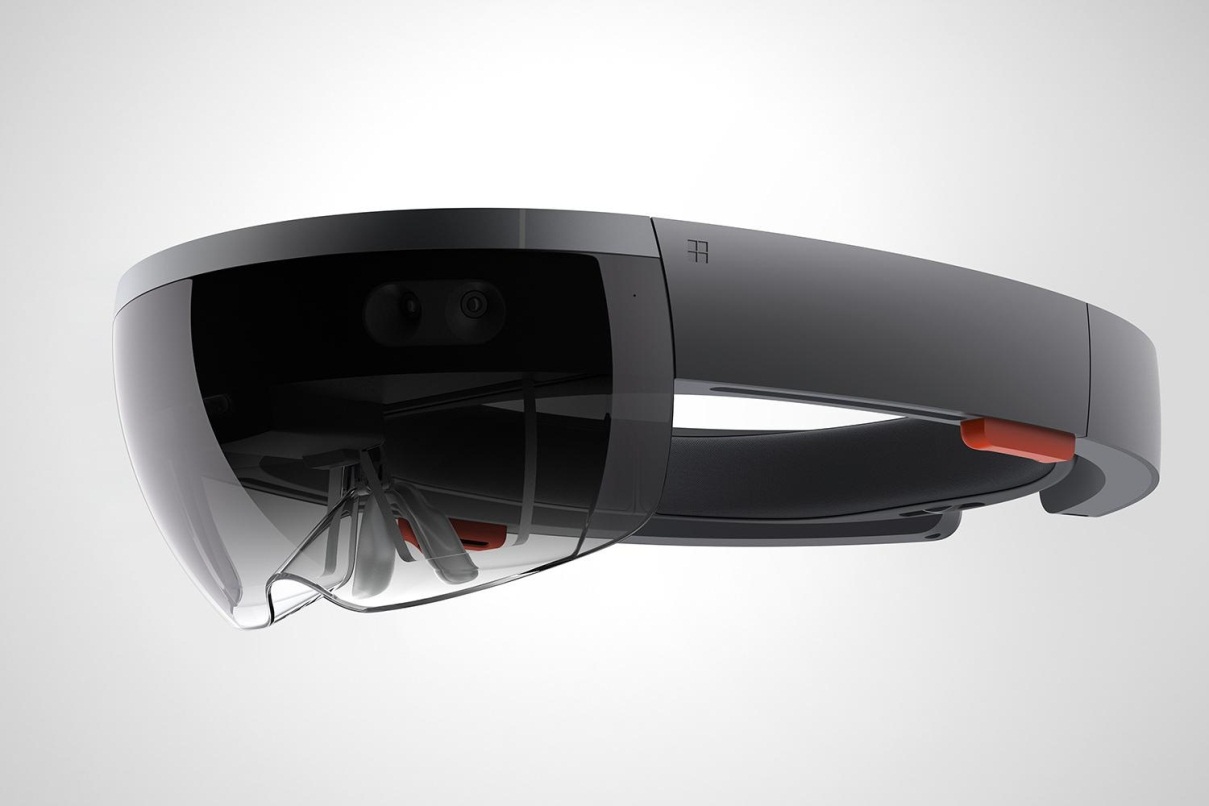

Let's examine the hardware for a bit. What are we talking about? What is a HoloLens? What parts does it consist of?

In case you have never seen a HoloLens before, this is what it looks like:

The device

The HoloLens is a wearable computer. That is something that we have to keep in mind all the time when we write software--people will walk around with the device on their head, and we need to take that into account. It is not only wearable, it is also head mounted.

The device works without any cables attached to it, and the three built-in (unfortunately non-replaceable) batteries make sure that you can use it for 2-3 hours. Microsoft designed it like this because the device is meant to be worn on the head and walked around with. I know, I just said that, but this is so fundamentally different from what you are used to that I cannot stress it enough. If you have developed applications for mobile platforms, such as mobile phones or tablets, you might argue those are wearable as well. Well, technically they are, but those devices generally do not interact with their environment. They are location-agnostic. They might know where they are, but they do not really care. The HoloLens does, and so your software should care about the location as well.

The HoloLens communicates with the environment and with its user using a whole lot of sensors and output devices. Let's go through them.

How does the HoloLens know its location? If you look at the device and take a closer look at its front, you will see five tiny cameras. These are the eyes of the device; these are what make the device aware of its surroundings.

The camera you see in the middle is a normal 2-megapixel RGB camera, like the one you will find in your mobile phone. The four cameras to the sides, two to the right and two to the left, are the environment cameras. They look at the area around you and are partially responsible for producing the knowledge the device needs about what the room you are in looks like. I said partially, because there is another camera in the middle and front of the device. You cannot really see it since it is hidden behind the see-through visor. This camera is the IR camera. The device emits infrared light that is detected by this piece of hardware. By calculating the time it takes for the light to return, the HoloLens can measure depth and thus create a 3D image of your room. If you are familiar with Microsoft Kinect, you will recognize this; essentially, you will be wearing a tiny Kinect controller on your head. If you are not familiar with that amazing device, I suggest you look at https://developer.microsoft.com/en-us/windows/kinect for more information. It is worth getting to know the background of a lot of the principles described in this book.
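The idea of measuring depth from the time the light takes to return can be sketched in a few lines. This is only the idealized principle, not the actual algorithm inside the HoloLens; the function names are made up for this illustration, and real depth cameras often derive the travel time indirectly rather than with a stopwatch.

```python
# Speed of light in metres per second (exact by definition of the metre).
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def depth_from_round_trip(round_trip_seconds: float) -> float:
    """Distance to a surface, given how long the light took to go out and come back."""
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    # The light covers the distance twice (out and back), hence the division by 2.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2.0


def round_trip_for_depth(depth_metres: float) -> float:
    """Inverse calculation: round-trip time for a surface at the given distance."""
    if depth_metres < 0:
        raise ValueError("depth cannot be negative")
    return 2.0 * depth_metres / SPEED_OF_LIGHT_M_PER_S


if __name__ == "__main__":
    # A wall two metres away: the light needs roughly 13 nanoseconds for the round trip.
    t = round_trip_for_depth(2.0)
    print(f"round trip: {t * 1e9:.2f} ns")
    print(f"depth:      {depth_from_round_trip(t):.3f} m")
```

The tiny numbers involved (nanoseconds for a room-sized distance) give a sense of why this kind of processing is handled by dedicated hardware.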

Next to vision-based input devices, there is also a microphone. Actually, there are four of these in a microphone array. This means that you can give the device spoken commands. The device itself listens to what you are saying, but we can also use this capability in our own software.

So, we have all the input parts. Now, we also need to get data out to the user. The most obvious part of this is the way the HoloLens displays the images. Since the HoloLens is a see-through device, meaning that it is transparent, the device only needs to provide you with the graphics the game or application needs to perform. This is done by the use of what Microsoft calls Holographic lenses. These two lenses are basically two tiny transparent computer screens in front of your eyes, where the device projects its images. Yes, I said transparent screens; these tiny computer screens are almost completely see-through. The resolution of the screens is 1268 x 720 pixels per eye, which seems low, but in reality is enough to generate good-looking graphics. Remember that the screens are tiny, so a lot more pixels will not make a lot of difference. In my experience, the graphics are just great and I noted that this low resolution was not a problem.

Next to the graphics part, there are also some speakers on the device. Microsoft calls them spatial sound speakers, meaning that they are capable of placing sounds in a three-dimensional world. The speakers are quite obvious when you look at the device; they are the two red bars in the middle of the headband that will be just above your ears when you wear it. The effect is remarkably good--even with the use of just two tiny speakers, the sound quality is great, and you really feel the sound coming from a place in the room around you. Of course, this is something we can use in our software. When we place items in our scene that we want the user to look at, we can have them make some noise and people will instinctively turn in the direction the sound is coming from. This works even if we place the sounds behind the users. In practice, this works really well, and we will use this later on. The reason they decided to place the speakers in this location is that if they had put them on top of your ears instead of just above them, you would not be able to hear the actual real world around you anymore. This way, the virtual sounds blend in nicely with the real-world sounds.

All these input and output devices have to be connected to some sort of computer. With competing products in the virtual reality world, this is done by hooking up the device to some sort of an external computer. This could be a mobile phone, desktop, or laptop computer. Of course, with that last option, you lose the possibility of walking around, so the engineers at Microsoft have not gone down that route. Instead, they put all computing parts inside the device. You will find a complete Windows 10 computer and a special piece of hardware that Microsoft calls the Holographic Processing Unit, or HPU in short, inside the device. So, the computer consists of a CPU, a GPU, and an HPU, making it quite powerful.

The HPU is there for a reason--the computer itself is not that powerful. The machine basically is a 32-bit machine with 2 GB of memory (the HPU has 1 GB of its own), so the computing power is somewhat limited. If the CPU or the GPU also had to process all the raw camera data the device receives, it would be too slow to be practical. Having a more powerful general-purpose CPU would help of course, but that would mean the batteries would have to be bigger as well. Next, there would be a problem with heat--a faster computer generates more heat. At some point, it would need active cooling instead of the passive cooling the HoloLens currently has. By having a dedicated processing unit that decodes all the data before passing the results on to the rest of the device, Microsoft is able to have a relatively lightweight but still fast machine. It is an impressive piece of engineering, if you ask me.

The power for all of this comes from three non-replaceable batteries, located at the back of the device. The reason that they are in the back is so that they can act as a counterweight to all the hardware in front, thus delivering a nicely balanced device that is comfortable to wear. The batteries are good for 2-3 hours of usage, depending on the applications you run on it. Recharging is done by plugging a micro-USB cable that is attached to a power source into the device, and takes about 4 hours to complete.

There is a lot we know about the device. However, this is the state of the hardware at the time of writing this book, and it might change without notice. With that caveat, let me sum up the device for you:

Computing power:

- A 32-bit Intel processor

- 2 GB RAM

- Microsoft Holographic Processing Unit

Sensors:

- Four environment-understanding cameras (two left and two right)

- Depth camera (center front)

- 2-megapixel photo or high-definition video camera (center front)

- Four microphones

- Ambient light sensor

Input and output:

- Two spatial sound speakers

- An audio 3.5 mm jack for headphones

- Volume up and down buttons

- Brightness up and down buttons

- A power button

- Battery status LEDs (five in total, each representing 20% of the charge)

- Wi-Fi 802.11ac

- Bluetooth 4.1

- Micro USB 2.0 (used for power and debugging)

Optics:

- Two see-through Holographic lenses

- Two HD 16:9 light engines, running 1268 x 720 pixels each

- Automatic pupillary distance calibration

- Holographic Resolution, 2.3 million total light points

- Holographic Density, more than 2,500 radiants

Miscellaneous:

- Weight: 579 grams

- Storage: 64 GB flash memory

Let's dive more deeply into the last three points in the optics part.

First is the automatic pupillary distance calibration. Let's be honest--no device except for a 3D printer can generate true 3D objects. It will always be a two-dimensional graphic, but presented in such a way that our brain gets tricked into seeing the missing third dimension. To achieve this, the device creates two slightly different images, one for each eye. We will get into that in later chapters, but for now let's just take this for granted. Our brain will see the difference between those two images and deduce the depth from that. However, the effect of this depends on one big factor--the images have to be exactly right. The position of each pixel has to be at exactly the right spot.

This means that we cannot just put a pixel at an X-Y coordinate on the display--a pixel that is meant to be in the middle of our view has to be presented right at the center of our pupils, and since no two pairs of eyes are the same, the device has to shift the logical center of the display a bit. In the early prototypes of the HoloLens, this was done manually by having a person look at a dot on the screen and then adjusting the dials until the subject saw only a single, sharply defined point. The automatic pupillary distance calibration in HoloLens now takes care of this, ensuring that every user has the same great experience.

The holographic resolution and density also need a bit more explaining. The idea here is that the actual number of pixels is not relevant. What is relevant is the number of radiants and light points. A light point is a single point of light that the user can see. This is a virtual point we perceive floating somewhere in mid-air. In reality, pixels are made out of these light points. There are many more light points than pixels. This makes sure that the device has enough power to produce pixels the person can actually see. The higher the number of light points you have, the brighter and crisper each pixel seems. The radiant is the number of light points per radian. As you probably know, a radian is a measure of angles, just like degrees. One radian is about 57.2958 degrees. So, 2,500 radiants means that for each radian, or 57.2958 degrees of your view, we will have 2,500 light points. From this, you can deduce that an object close by, which fills a larger angle of your view, is drawn with more light points than the same object far away.
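To make the arithmetic concrete, here is a small sketch that converts the density figure to light points per degree and estimates how many light points span an object at a given distance. Treating the 2,500 figure as a count along a single direction is my reading of the specification, and the function names are invented for this example.

```python
import math

# "More than 2,500 radiants": light points per radian, taken from the spec list.
LIGHT_POINTS_PER_RADIAN = 2500.0


def light_points_per_degree(per_radian: float = LIGHT_POINTS_PER_RADIAN) -> float:
    """Convert a per-radian density to a per-degree density."""
    # One radian is 180/pi degrees (about 57.2958), so divide by that factor.
    return per_radian / math.degrees(1.0)


def angular_size_radians(object_width_m: float, distance_m: float) -> float:
    """Angle an object of the given width fills when seen head-on from a distance."""
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    return 2.0 * math.atan(object_width_m / (2.0 * distance_m))


def light_points_across(object_width_m: float, distance_m: float,
                        per_radian: float = LIGHT_POINTS_PER_RADIAN) -> float:
    """Approximate number of light points spanning the object's width."""
    return per_radian * angular_size_radians(object_width_m, distance_m)


if __name__ == "__main__":
    print(f"{light_points_per_degree():.1f} light points per degree")
    for distance in (1.0, 2.0, 4.0):
        points = light_points_across(0.5, distance)
        print(f"0.5 m wide object at {distance} m: about {points:.0f} light points")
```

Running it shows the count dropping as the same object moves away, which is the effect described above.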

Wearing the device is something that needs some love and attention. The displays I mentioned earlier are actually pretty small. In practice, that is okay. Since they are so close to your eyes, you will not notice their size, but it does mean that a user has to position them fairly precisely in order to have a great experience. The best way to do this is to adjust the band so that it is big enough to fit over your head. There is a cogwheel at the back of the head strap that you can turn to make the band wider or narrower. Place the band over your head and make sure that the front of the band is in the middle of your forehead. Then, turn the wheel to make it as tight as you can without making it uncomfortable. The reason that it has to be this tight is that the device, although it is nicely balanced, will otherwise shift around when you move about the room. When it shifts, the display will be misaligned, and that can cause a narrower field of view and can even lead to nausea.

When you have balanced the device, you can move the actual visor about. It can move forward and backward to accommodate people wearing glasses and can also tilt up and down a bit. Make sure that your eyes are in the center of the screen, something that is easily checked, since you will notice images being cut off when they are not.

Let's turn the device on--at the back, you will find the power button. Next to that are five LEDs that indicate whether the device is active and the state of the batteries. Each LED stands for 20% of the remaining power; so, assuming your device is fully charged, you will see five LEDs that are switched on. When you wear the device and press the power button, you will be greeted by a friendly "Hello", followed by either a "Scanning your environment" message or the Start menu. The "Scanning your environment" message means that the device is looking at the environment. It will map all surfaces and will see if it recognizes them. If it does, it will load the known environment and use that; otherwise, it will store a new one.
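If you ever want to mimic the LED indicator in your own tooling, the mapping from charge to LEDs could look like the following sketch. The rounding rule (a partly used 20% block still lights its LED) is an assumption on my part; the text only states that each LED stands for 20%.

```python
import math

LED_COUNT = 5
PERCENT_PER_LED = 100 // LED_COUNT  # each LED represents 20% of the charge


def leds_lit(charge_percent: float) -> int:
    """Number of LEDs that would be on for the given battery charge."""
    if not 0 <= charge_percent <= 100:
        raise ValueError("charge must be between 0 and 100 percent")
    # Assumption: round up, so any remaining charge in a block keeps its LED on.
    return math.ceil(charge_percent / PERCENT_PER_LED)


if __name__ == "__main__":
    for charge in (100, 61, 45, 20, 0):
        print(f"{charge:3d}% -> {leds_lit(charge)} LED(s)")
```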

If this is the first time you have turned on the device, you have to personalize it. The steps necessary are simple, and the wizard will walk you through them, so I will not go into that here. However, it is important to note that one step really should not be skipped--the network configuration. The device does not have a built-in GPS receiver and will rely on the network to identify the place it is in. It will store the meshes that make up the rooms and identify those with the network identifier. This way, it will know whether you are using it in your house or in your office, for instance. Next to that, applications such as Cortana need network connectivity to run, so the device can only really be used reliably in areas with decent Wi-Fi reception.

One of the steps required is entering your Microsoft account. If you do not have one, I advise you to set one up before starting the device.

The usage of the device takes some getting used to. In the center of your view, you will see a tiny bright dot--this is your pointer. This dot will remain in the center of your view, so the only way to move it about is to move your head. A lot of first-time users of HoloLens will move their hands in front of the device as if they are trying to persuade the dot to move but that will not work. You need to look at something in order to interact with it.

There are two sorts of pointers in the default HoloLens world--one is the aforementioned dot, the other one is a circle. When the device sees your finger or hand in front of the sensors, it will let you know this and inform you that it is ready to receive commands--the dot will turn into this circle. Now, you can use one of the two default gestures. One is the air tap. Some people struggle with this one, but it is fairly straightforward.

You make a fist, point an index finger toward the sky and then move that finger forward without bending it, all the time leaving the rest of your hand where it is; that is it, tapping with a finger. Ensure that you do not bend the finger, do not move the whole hand, do not turn the hand, and use just one finger. It does not really matter if you use your right or left hand; the device will pick it up.

Next to the air tap gesture, we have the bloom gesture. Although this is slightly more complicated, people seem to have fewer issues with this one. Start with a closed fist in front of the device, palm upward. Next, open your hand and spread your fingers wide--just imagine your hand is a flower opening up.

This gesture is used to go back to the Start menu or the main starting point of an application.

That is it! There are no more gestures. Well, there is the tap-and-hold gesture (move your finger down and keep it there while moving your hand up and down, right and left, or back and forth to move stuff about), but that is just a variation of the air tap.

The first time someone puts on the device, it should be calibrated. Calibration means that the screens need to be aligned to the center of the wearer's pupils. Obviously, each person has a different number for this; this number is called the interpupillary distance (IPD). Mine, for instance, is 66.831 millimeters, meaning that my pupils are almost 67 millimeters apart. This is important since this determines how effective the three dimensional effect is. A lot of people skip this step or do it only once for the initial user, but that is a big mistake. If this number is not correctly set, you will have a slightly offset image that just doesn't feel right.
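To get a feeling for why a few millimeters of IPD matter, consider the angle between the two lines of sight when both eyes look at a point straight ahead. The sketch below computes that angle from the IPD and the distance to the point. It is plain trigonometry for illustration, not the calculation the HoloLens performs, and the function name is made up.

```python
import math


def vergence_angle_degrees(ipd_mm: float, distance_m: float) -> float:
    """Angle between both eyes' lines of sight for a point straight ahead."""
    if ipd_mm <= 0 or distance_m <= 0:
        raise ValueError("IPD and distance must be positive")
    half_ipd_m = ipd_mm / 1000.0 / 2.0
    # Each eye turns inward by atan(half IPD / distance); the total is twice that.
    return math.degrees(2.0 * math.atan(half_ipd_m / distance_m))


if __name__ == "__main__":
    # The IPD mentioned in the text versus a narrower, hypothetical one.
    for ipd in (66.831, 60.0):
        angle = vergence_angle_degrees(ipd, 2.0)
        print(f"IPD {ipd} mm, point at 2 m: {angle:.3f} degrees")
```

With the IPD from the text and a point two meters away, the angle comes out at about 1.9 degrees. Rendering for the wrong IPD shifts that angle, so the brain places the hologram at a slightly different depth than intended.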

The calibration is done with the calibration tool, one of the applications preinstalled on the device. This application will guide you through the process. The tool first shows a blue rectangle and asks whether you can see all corners. Ensure that the rectangle is in the center of your view by adjusting the device on your head. If you got it right, you can say the word "next" to move to the next stage. Yes, this is voice controlled, which makes sense--the device is still calibrating your vision, so it cannot rely on air taps. The next phase consists of showing you outlines of a finger in a circle; you are supposed to place your finger in that outline. You have to do this five times for each eye, with the other eye closed. This process should not take very long, but it is extremely important that you do this for every new user.

The resulting IPD number is not visible in the device, but can be read out in the device portal. The number is unique for the combination of this user for this device--you cannot use that number for the same user on another device. I suggest that you write down that number for users who will be using the device more than once. That way, you can enter it in the device portal without having to go through the calibration process every time.

Note

One word of warning--the device is not meant to be used by children under the age of 13 years. The reason for this is that their eyes tend to be rather close to each other and thus need IPD values too small to work. There are limits to what this number can be, although Microsoft has not yet disclosed these numbers. Having a wrong IPD could result in motion sickness during its use, so it is recommended that you have this set right and prevent children from playing with the device.

Let's take a look at the device portal. Each HoloLens has a built-in web server that serves up pages that tell you things about your device and the state it is in. This can be very useful, as the device itself will give almost no information besides the network it is connected to, the volume of the sound, and the battery level. The portal gives a lot more information. Besides that, the portal also gives a way to see what the user is seeing, through something called Mixed Reality Capture; we will talk more on that later.

In the following screenshot, you can see the main screen of the device portal. There is quite a lot of information here that will tell you all sorts of things you need to know about the device. However, the first question is, how do we get here?

The HoloLens device portal main screen

The device portal is switched off by default. You have to explicitly enable it by going to the settings screen; once there, select the Update option and then choose the For Developers page. Here, you can switch on Developer Mode, which is a necessity if you want to deploy apps from your development environment to the device. You can also pair devices, which you need in order to deploy and debug apps, and you can enable or disable the device portal.

If you set up your device correctly and have it hooked up to a network, you can retrieve the IP address of the device by going to the settings screen, selecting Network, and then selecting the Advanced settings option. Here, you will see all network settings, including the IP address the device currently uses.

When you enter that IP address in a browser, you will be greeted with a security warning that the certificate that the device uses is not trusted by default. This is something we will fix later on.

The first time you use the portal, you will need to identify yourself. The device is protected by a username/password combination to prevent other users from messing with your device.

Assuming that you have taken care of all this, you can now see the device portal main screen, just like the one I showed you before. The screen can be divided into three distinct parts.

At the top, you will see a menu bar that tells you things about the device itself, such as the level of the batteries, the temperature the device is running at (in terms of cool, warm, and hot), and the option to shut down or reboot the device.

On the left-hand side, there is a menu where you choose the different options you want to do or control.

The menu is divided into three submenus:

- Views

- Performance

- System

The Views menu deals with the general information screen and the things your device actually sees. The other two, Performance and System, contain detailed information about the inner workings of the device and the applications running on it. We will have a thorough look at those when we get to debugging applications in later chapters, so for now I will just say that they exist.

The options in the Views menu are as follows:

- Home

- 3D View

- Mixed reality capture

By default, you will get the home screen. At the center of the screen, you will see information about the device. Here, you can see the following items:

- Device status: This is indicated by a green checkmark if everything is okay or a red cross if things are not okay. The next point confirms it.

- Windows information: This indicates the name of the device and the version of the software it runs.

- The preferences part: Here, you can see or set the IPD we talked about earlier. If you have written down other people's IPD, you can enter them here and then click on Save to store it on the device. This saves you from running the calibration tool over and over again. You can also change the name of the device here and set its power behavior: for example, when do you want the device to turn itself off?

The 3D view gives you a sense of what the depth sensor and the environment sensors are seeing:

There are several checkboxes you can check and uncheck to determine how much information is shown. In the preceding screenshot, you can see an example in 3D View, with the default options checked.

The sphere you see represents the user's head and the yellow lines are the field of view of the device. In other words, the preceding screenshot is what the user will see projected on the display, minus the real world that the user will see at all times.

You have the following checkboxes available to fine-tune the view:

- Tracking options

  - Force visual tracking

  - Pause

- View options

  - Show floor

  - Show frustum

  - Show stabilization plane

  - Show mesh

  - Show details

Next, you have two buttons allowing you to update and save the surface reconstruction.

The first two options are fairly straightforward; Force Visual Tracking will force the screen and the device to continuously update the screens and data. If this is unchecked, the device will optimize its data streams and only update if there is something changing. Pause, of course, completely pauses capturing the data.

The View options are a bit more interesting. The Show floor and Show frustum options enable and disable the checkerboard floor and the yellow lines indicating the views, respectively. The stabilization plane is a lot more interesting. This plane is a stabilized area, which is calculated by averaging the last couple of frames the sensors have received. Using this plane, the device can even out tiny miscalculations by the system. Remember that the device only knows about its environment by looking through the cameras, so there might be some errors. This plane, located two meters from the device in the virtual world, is the best place to put static items, such as menus. Studies have shown that this is the place where people feel the most comfortable looking at items. This is the "Goldilocks zone," not too far, not too close but just right.

If you check the Show mesh checkbox, you can see what the device sees. The device scans the environment in infrared. Thus, it cannot see actual colors. The infrared beam measures distances. The grayscale mesh is simply a way to visualize the infrared information.

The depth image from the device

As you can see in the screenshot, I am currently writing this sitting on the rightmost chair at a table with five more chairs. The red plane you see is the stabilization plane, just in front of the wall I am facing. The funny shape in front of the sphere you see is actually my head--I moved the device in my hands to scan the area instead of putting it on my head, so it mistakenly added me as part of the surroundings.

The picture looks messy, but that is the way the device sees the world. With this information, the HoloLens knows where the table top surface is, where the floor is, and so on.

You can use the Save button to save the current scene and use that in the emulator or in other packages. This is a great way for developers to use an existing environment, such as their office, in the emulator. The Update button is there because, although the device constantly updates its view of the world, the portal page does not. Sometimes, it misses updates because of the high amount of data that is being sent, and thus you might have to manually update the view. Again, this is only for the portal page--the device keeps updating all the time, around five times per second.

The last checkbox is Show details. When this is selected, you will get additional information from the device with regards to the hands it sees, the rotation of the device, and the location of the device in the real world. By location, I am not talking about the GPS location; remember that the device does not have a GPS sensor, but I am talking about the amount of movement in three dimensions since the tracking started.

By turning this option on, we can learn several things. First, it can identify two hands at the same time. It can also locate each hand in the space in front of the device. We can utilize this later when we want to interact with it.

The data we get back looks like this:

Detailed data from the device

In the preceding table, we have information about the hands, the head rotation, and the origin translation vector.

Each hand that is being tracked gets a unique ID. We can use this to identify when the hand does something, but we have to be careful when using this ID. As soon as the hand is out of view for a second and it returns just a moment later, it will be assigned a new ID. We cannot be sure that this is the same hand--maybe someone else standing beside the user is playing tricks with us and puts their hand in front of the screen.

The coordinates are in meters. The X is the horizontal position of the center of the hand, and the Y is the vertical position. The Z indicates how far we have extended our hand in front of us. This number is always negative--the lower it is, the further away the hand is. These numbers are relative to the center of the front of the device.
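A small sketch shows how these hand coordinates could be interpreted in your own tooling. The `HandPosition` type and its method names are hypothetical and exist only for this illustration; the only things taken from the portal are the meaning of X, Y, and Z and the fact that Z is negative in front of the device.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class HandPosition:
    """One tracked hand, in meters, relative to the center front of the device."""
    hand_id: int  # changes when the hand leaves the view and comes back
    x: float      # horizontal position
    y: float      # vertical position
    z: float      # negative in front of the device; lower means further away

    def reach(self) -> float:
        """How far the hand is extended forward, as a positive number."""
        return -self.z

    def distance_from_device(self) -> float:
        """Straight-line distance between the hand and the device."""
        return math.sqrt(self.x ** 2 + self.y ** 2 + self.z ** 2)


if __name__ == "__main__":
    near = HandPosition(hand_id=1, x=0.10, y=-0.05, z=-0.30)
    far = HandPosition(hand_id=2, x=0.10, y=-0.05, z=-0.55)
    # The hand with the lower (more negative) Z is the one further away.
    print(far.z < near.z, far.reach() > near.reach())
    print(f"{near.distance_from_device():.3f} m")
```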

The head rotation gives us a clue as to how the head is tilted in any direction. This is expressed in a quaternion, a term we will see much more in later chapters. For now, you can think of this as a way to express angles.
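To make "a way to express angles" slightly more tangible, the following sketch extracts the total rotation angle from a quaternion. The component order (x, y, z, w) is an assumption for this example, since I have not specified how the portal orders the values, and the function name is invented.

```python
import math


def rotation_angle_degrees(x: float, y: float, z: float, w: float) -> float:
    """Total rotation, in degrees, that a quaternion represents (0 to 180)."""
    norm = math.sqrt(x * x + y * y + z * z + w * w)
    if norm == 0.0:
        raise ValueError("a zero quaternion does not describe a rotation")
    # Normalize first, then clamp to guard against rounding just outside [-1, 1].
    w_unit = max(-1.0, min(1.0, w / norm))
    # q and -q describe the same rotation, so use |w| to get the shorter angle.
    return math.degrees(2.0 * math.acos(abs(w_unit)))


if __name__ == "__main__":
    # No rotation at all: the identity quaternion.
    print(f"{rotation_angle_degrees(0.0, 0.0, 0.0, 1.0):.1f}")
    # Head turned a quarter circle around the vertical (Y) axis.
    half = math.radians(90.0) / 2.0
    print(f"{rotation_angle_degrees(0.0, math.sin(half), 0.0, math.cos(half)):.1f}")
```

The three remaining components (x, y, z) describe the axis the head is rotated around; the later chapters go into that in more detail.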

Last in this part of the screen is the Origin Translation Vector. This gives us a clue as to where the device is compared to its starting position. Again, this is in meters and X still stands for horizontal movement, Y for vertical, and Z for movement back and forth.

The last screen in the Views part is the Mixed Reality Capture. This is where you can see the combined output from both the RGB camera in front of the device and the generated images displayed on the screens. In other words, this is where we can see what the user sees. Not only can we see but we can also hear what the user is hearing. We have options to turn on the sounds the user gets as well as relay what the microphones are picking up.

This can be done in three different quality levels--high, medium, and low.

The following table shows the different settings for the mixed capture quality:

| Setting | Vertical lines | Frames per second | Bits per second |
| --- | --- | --- | --- |
| High | 720 | 30 | 5 Mbits |
| Medium | 480 | 30 | 2.5 Mbits |
| Low | 240 | 15 | 0.6 Mbits |
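These bit rates translate directly into network load and recording size. The sketch below estimates how much data a capture produces, assuming the figures are megabits per second for all three levels (including the low setting) and that the stream runs at a constant rate; the names are made up for this example.

```python
# Bit rates from the table, in megabits per second.
# Assumption: the value for "low" is in the same unit as the other two.
MBITS_PER_SECOND = {"high": 5.0, "medium": 2.5, "low": 0.6}


def capture_size_megabytes(quality: str, seconds: float) -> float:
    """Approximate amount of data for a capture of the given length."""
    if quality not in MBITS_PER_SECOND:
        raise ValueError(f"unknown quality level: {quality!r}")
    if seconds < 0:
        raise ValueError("duration cannot be negative")
    megabits = MBITS_PER_SECOND[quality] * seconds
    return megabits / 8.0  # 8 bits per byte


if __name__ == "__main__":
    for quality in ("high", "medium", "low"):
        size = capture_size_megabytes(quality, 60)
        print(f"one minute at {quality}: about {size:.1f} MB")
```

One minute at the high setting works out to roughly 37.5 MB, which is worth keeping in mind on a busy Wi-Fi network.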

Several users have noticed that the live streaming is not really live--most users have experienced a delay, ranging from two to six seconds. So be prepared when you want to use this in a presentation where you want to show the audience what you, as the wearer, see.

If you want to switch the quality levels, you have to stop the preview. It will not change the quality midstream.

Besides watching, you can also record a video of the stream or take a snapshot picture of it.

Below the live preview, you can see the contents of the video and photo storage on the device itself--any pictures you take with the device and any video you shoot with the device will show up here, so you can see them, download them to your computer, or delete them.

Now that you have your device set up and know how to operate it and see the details of the device as a developer, it is time to have a look at the preinstalled software.

The HoloLens Start screen

When the device is first used, you will see it comes with a bunch of apps preinstalled. On the device, you will find the following apps:

- Calibration: We have talked about this before; this is the tool that measures the distance between the pupils

- Learn gestures: This is an interactive introduction to using the device that takes you through several steps to learn all the gestures you can use

- Microsoft Edge: The browser you can use to browse the web, see movies, and so on

- Feedback: A tool to send feedback to the team

- Settings: We have seen this one before as well

- Photos: This is a way to see your photos and videos; this uses your OneDrive

- Store: The application store where you can find other applications and where your own applications, when finished, will show up

- Holograms: A demo application that allows you to place holograms anywhere in your room, look at them from different angles, and also scale and rotate them. Some of them are animated and have sounds

- Cortana: Since the HoloLens runs Windows 10, Cortana is available. You can use Cortana from most apps by saying "Hey Cortana", which is a nice way to avoid using gestures when, for instance, you want to start an app

I suggest you play around a bit. Start with Learn Gestures after you have set up the device and used the Calibration tool. After that, use the Holograms application to place holograms all around you. Walk around the holograms and see how steady they are. Note the effect of a 3D environment--when you look at things from different sides, they really come to life. Other 3D display technologies, such as 3D monitors and movies, do not allow this kind of freedom, and you will notice how strong this effect is.

Can you imagine what it would be like to write this kind of software yourself? Well, that is just what we are about to do.

It is about time we start writing some code. I will show you the different options you have when you want to write software for the HoloLens and give you some helpful pointers for setting up an ideal development environment for these kinds of projects.

First of all, you will need Windows 10. The edition you use does not matter if you have a device; however, when you want to use the emulator, you need Windows 10 Pro, Enterprise, or Education. The Home edition does not support Hyper-V, the virtualization software that the emulator uses to run a virtual version of the HoloLens software.

Next, you need a development environment. This is Visual Studio 2015 Update 2 at least. You can use the free Community edition, the most expensive and complete Enterprise edition, or any edition in between--the code will work just fine no matter which one you use.

When you install Visual Studio, make sure that you have the Universal Windows Platform (UWP) tools (at least version 1.3.1) and the Windows 10 SDK (at least version 10.0.10586) installed. If you want to use the emulator, and trust me, you do want this, you have to download that as well. Again, in this case, you need to have Hyper-V on your machine and have it enabled.

I assume that you have your environment set up and you have Visual Studio running. Let's start with our first program. Just follow along:

- Choose File | New | Project, then select Visual C# | Windows | Universal | Holographic | Holographic DirectX 11 App (Universal Windows).

New Project wizard in Visual Studio

- After this, you will get a version selector. The versions displayed depend on the SDKs you have installed on your machine. For now, you can just accept the defaults, as long as you make sure that the target version is at least build 10586.

SDK version selector

- Name the project and the solution HelloHoloWorld.

That's it! Congratulations! You have just created your very first HoloLens application. Granted that it is not very spectacular, but it does what it needs to do.

Note

As a side note, I will use the term "app" from now on to distinguish mobile or holographic applications from full-blown applications that run on desktop computers. The latter are usually called "applications", whereas the former, usually smaller items, are called "apps".

To make sure that everything works fine, we will first see if we can deploy the app to the HoloLens emulator. If you have decided not to install this and choose to use a physical device instead, please skip to the next part.

In the menu bar, you can choose the environment you want to run the app on. You might be familiar with this, but if you are not, this is where you specify where the app will be deployed. You have several options, such as the local machine and a remote machine. Those two are the most used in normal development, such as when you are writing a Universal Windows Platform application or a website, but they will not work for us. We need to deploy the app to a holographic-capable device such as the emulator. If you have installed that, it will show up here in the menu, as follows:

The version of the emulator might be different on your system--Microsoft continuously updates its software, so you might have a newer version. The one shown here is the latest one that was available during the writing of this book.

Now that you have selected this, you can select Run (through the green arrow, pressing F5, or through the Debug | Start Debugging menu). If you do that the emulator will start up. This might take some time. After all, it is starting up a new machine in the Hyper-V environment, loading Windows 10, and deploying your app.

However, after a couple of minutes, you will be greeted with the following view:

Our first HoloLens app in the emulator!

You will see a rotating multicolored cube on a black background.

Before we can dive into the code, it would be worthwhile to learn to use the emulator a bit. Obviously, you cannot use the gestures we have talked about before--it will not see what you are doing and you have to emulate these. Fortunately, it is not too hard to get used to the controls. You can use the mouse or the keyboard, or you can attach an Xbox controller to your machine and use that as well. We will cover the main controls; the more advanced options will be discussed when we need them in later chapters.

The following table shows the keyboard, mouse, and Xbox controls in the emulator:

Desired gesture | Keyboard and mouse | Xbox controller |
Walk forward, back, left, and right | W, S, A, and D | Left stick |
Look up, down, left, and right | Click and drag the mouse, or use the arrow keys on the keyboard | Right stick |
Air tap | Right-click with the mouse, or press Enter on the keyboard | A button |
Bloom gesture | Windows key or F2 | B button |
Hand movement for scrolling, resizing, and zooming | Hold the Alt key and the right mouse button, then move the mouse | Hold the right trigger and the A button, then move the right stick |

In the center of your view, you will see a dot; this is the cursor. Do not try to move this dot--this will always be in that spot. Move the emulator with the walk and look options to position the dot on the menu item you want to choose, then perform the air tap with the options mentioned above. This takes some practice, so try it out for a little while; it will be worth the effort.

Note

When you are using the emulator, you will note that Alt + Tab does not get you back. This does make sense, since you are working on a separate (virtual) computer and Alt + Tab does not work on HoloLens. You really have to click somewhere outside the emulator screen to redirect the input back to your own computer.

Using the emulator can be a great tool in your toolbox, especially when you are working in a team; having a device for each developer is usually not an option. The devices are pretty expensive, and you don't need one to develop your app. Of course, when you are working on a project, it is invaluable to deploy to the device every now and then and see how your code behaves in the real world. After all, you can only experience the true power of the HoloLens by putting it on.

So, we need to deploy the code to the device. First of all, you need to make sure that you have the Developer Mode switched on. You can do this by going to the Settings app and selecting the For Developers option in the Update part. There, you can switch the Developer mode on.

Once you have done this, you can start the deployment from Visual Studio to the device. To do this, you have two options:

- Use Wi-Fi deployment

- Use USB deployment

Since you have already set up the device to use the network, you can use the Wi-Fi option. This one tends to be slightly slower than USB deployment, but it has the advantage that you can do this without having a wire attached to the device. Trust me--this has some advantages. I have done a deployment through USB only to discover I could not immediately stand up and walk around my holograms--I forgot the cable was still attached to the device.

If you chose the Wi-Fi deployment option, you can choose Remote Machine as your deployment target. The first time you do this, you will get a dialog where you can pick the device from a list of available devices. The autodiscovery method does not always work--every now and then, you need to enter the IP address manually.

You can find this IP address in the Settings | Network & Internet | Advanced Options menu on the device. This is the same IP address you use to launch the device portal.

The Remote Connections dialog

Make sure that you have the Authentication Mode set to Universal; otherwise, your device will not accept the connection.

You need to take an additional step to deploy--your device needs to pair with your computer. This way you can be sure that no other people connect to it without you knowing it. If you start the debugger using the Remote Machine option or try to deploy to the Remote Machine, you will get a dialog box asking you for a PIN.

Visual Studio asking for the PIN to pair

This PIN can be obtained on the device by navigating to the Settings app, then to Update, and then selecting For Developers. Here, you can pair the device. If you select this, you will get a PIN for each machine you want to connect. Enter this PIN in the dialog and you are all set to go--the device should receive your app now. If you choose Deploy in Visual Studio, the app will show up in your list on the device. You can find it by opening the Start screen with the Bloom gesture and then selecting the + on the right-hand side of the menu. There, you will find the list with all the installed apps, including your new HelloHoloWorld project. Air-tap this to start it. When you are done with it, you can do the Bloom again to go back to the main menu.

Note

If you happen to forget the PIN, do not worry. All you have to do is enter a wrong number three times in a row. After that, the device will ask you to pair again from scratch, which gives you a new PIN.

If you choose Debug, the app will start automatically--you do not have to select it in the device.

Since the device has a USB port, we can connect it to the computer. Unfortunately, it does not show up in the explorer--we cannot access the filesystem, for instance. However, we can use this to deploy our app to the device. All you have to do in Visual Studio is select Device as the target, and it will deploy the app through the USB cable. As I said before, this is slightly faster than using the Wi-Fi connection, but it gives you fewer options to walk around during debugging.

The code you just created is a C# UWP Windows 10 app using DirectX through the SharpDX library. In later chapters, we will examine this much more closely. DirectX is the technology that allows us to write fast-running apps that use a lot of graphics, such as the holograms we create. However, DirectX is a C++ library and can officially only be used in C++ programs. Luckily, the people at SharpDX have written a wrapper around this, so we can use it in our C# applications and apps as well. This is the reason why the HoloLens SDK developers included it in the template. For more information about SharpDX, I suggest that you have a look at their site at http://sharpdx.org/.

The app uses some libraries from the Windows SDK. However, if you look closely at the References part in the solution, you will note that there are no Holographic-specific libraries included. The reason for this is that the APIs needed to run Holographic apps are standard in the Windows 10 runtime. That is right; your Windows 10 computer has all the code it needs to run Holographic apps. Unfortunately, the hardware you have will not support these APIs, so they are not available to use. If you try to deploy this app to a normal machine, you will get errors--the required capabilities are not available and the runtime will refuse to install the app.

This means that our app is a standard Windows 10 UWP app with some extra capabilities added. If you right-click on Package.appxmanifest and choose View Code, you will find the following tag somewhere:

<Dependencies>
  <TargetDeviceFamily Name="Windows.Holographic" MinVersion="10.0.10240.0" MaxVersionTested="10.0.10586.0" />
</Dependencies>

This is what makes sure that our device accepts our app and other systems do not--the app is marked for use on Windows.Holographic-capable devices. The numbers you see in MinVersion and MaxVersionTested may differ--these depend on the SDK versions that you have installed and chose when you created the project.
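To see which device family the runtime actually reports, a small check like the following can be used. This is only a sketch and not part of the template: the class name DeviceFamilyCheck and the method IsHolographic are made up for illustration, while AnalyticsInfo.VersionInfo.DeviceFamily is the standard UWP property that returns strings such as Windows.Desktop or Windows.Holographic.

```csharp
using Windows.System.Profile;

// Hypothetical helper for illustration; it is not generated by the template.
public static class DeviceFamilyCheck
{
    // Returns true when the runtime reports the Windows.Holographic
    // device family, which is the value named in the manifest tag above.
    public static bool IsHolographic()
    {
        string family = AnalyticsInfo.VersionInfo.DeviceFamily;
        return family == "Windows.Holographic";
    }
}
```

In our HelloHoloWorld app this check would always succeed, since the manifest already keeps the app off other device families. It becomes more useful in an app that targets several families and only wants to switch on holographic features on the right hardware.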

If you have written UWP apps before, you will see that the structure of this app is quite different. This is mostly because of the code SharpDX needs to start up. After all, a Holographic app needs a different kind of user interface than a normal screen-based app. There is no notion of a screen, no place to put controls, labels, or textboxes, and no real canvas. Everything we do needs to be done in a 3D world. An exception to this is when we create 2D apps that we want to deploy to the 3D environment. Examples of this are the Edge Browser and the now familiar Settings app. Those are standard UWP apps running on HoloLens. We will see how to build this later on.

The project should look like this:

The project structure

As you can see, we have the normal Properties and References parts. Next, we have the expected Program.cs and Assets folder. That folder contains our logos, start screen, and other assets we usually see with UWP apps. Program.cs is the starting point of the app. We also can identify the HelloHoloWorld_TemporaryKey.pfx. The app needs to be signed in order to run on the device, and this is the key that does this. You cannot use this key to deploy to the store, but since we have set our device in developer mode, it will accept our app with a test key such as this one. Package.appxmanifest we have already looked at.

The other files and folders might not be that familiar.

The Common folder contains a set of helper classes that help us use SharpDX. It has some camera helpers that act as a viewport to the 3D world, timers that help us with the animation of the spinning cube, and so on.

The Content folder has the code needed to draw our spinning cube. This has the shaders and renderers that SharpDX needs to draw the cube we see when we start the app. We will have a closer look at how this works in later chapters.

The AppViewSource.cs and AppView.cs files contain the AppViewSource and AppView classes, respectively. These are the boilerplate code files that launch our scene in the app. AppViewSource is used in Program.cs, and all it does is start a new AppView. AppView is where most of the Holographic magic happens.

The following are the five different things this AppView class does:

- Housekeeping: The constructor and the Dispose() method live here. The code needs to be IDisposable since most DirectX-based code is unmanaged. This means we need to clean things up when needed.

- IFrameworkView members: IFrameworkView is the interface DirectX uses to draw its contents to. This contains the methods to load resources, run the view, set a window that receives the graphics, and so on.

- Application Lifecycle event handlers: If you have developed Store apps before, you will recognize these; this is where we handle suspension, reactivating, and other lifecycle events.

- Window event handlers: Next to the lifecycle events, there are some other events that can occur during runtime: OnVisibilityChanged and OnWindowClosed can happen. This is where they are handled.

- Input event handler: I mentioned before that you can hook up a Bluetooth keyboard to the device. If you do that, the user can press keys on that keyboard and you need to handle those. This is where that part is done. I would recommend against using this in normal scenarios. People walking around with a HoloLens will not normally carry a keyboard with them. However, in certain use cases, this might be desirable.

In the IFrameworkView.Initialize method, the app creates a new instance of the HelloHoloWorldMain class. This class is where our custom code is placed, so basically this is our entry point. The rest can be considered as boilerplate code, stuff the app needs to do anything at all.
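To make the division of work a bit more concrete, here is a heavily simplified sketch of the same pattern. It is not the template code: the names SketchViewSource, SketchView, and SketchProgram are made up for illustration, the holographic setup and rendering are left out entirely, and it is meant to be read on its own rather than pasted into the HelloHoloWorld project (which already has its own Main). Only the UWP types and members (IFrameworkViewSource, IFrameworkView, CoreApplication.Run, and so on) are real.

```csharp
using System;
using Windows.ApplicationModel.Core;
using Windows.UI.Core;

// Stand-in for AppViewSource: all it does is create the view.
internal class SketchViewSource : IFrameworkViewSource
{
    public IFrameworkView CreateView()
    {
        return new SketchView();
    }
}

// Stand-in for AppView: the five IFrameworkView members in their call order.
internal class SketchView : IFrameworkView
{
    private CoreWindow window;
    private bool windowClosed;

    // Called first. The template creates its main class here and
    // subscribes to the application lifecycle events.
    public void Initialize(CoreApplicationView applicationView)
    {
        applicationView.Activated += (sender, args) => sender.CoreWindow.Activate();
    }

    // Receives the window that the graphics are drawn to; window and
    // input event handlers are hooked up here.
    public void SetWindow(CoreWindow coreWindow)
    {
        window = coreWindow;
        window.Closed += (sender, args) => windowClosed = true;
    }

    // Resources would be loaded here.
    public void Load(string entryPoint)
    {
    }

    // The main loop: process pending window events, then update and render.
    public void Run()
    {
        while (!windowClosed)
        {
            window.Dispatcher.ProcessEvents(CoreProcessEventsOption.ProcessAllIfPresent);
            // A real app updates and renders one frame here.
        }
    }

    // Called when the view is torn down.
    public void Uninitialize()
    {
    }
}

// Stand-in for Program.cs: hand the view source to the runtime.
internal static class SketchProgram
{
    [MTAThread]
    private static void Main()
    {
        CoreApplication.Run(new SketchViewSource());
    }
}
```

The point of the sketch is the order of the calls: the runtime asks the source for a view, then calls Initialize, SetWindow, Load, and Run on it, and finally Uninitialize when the app shuts down.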

In the following chapters, we will adapt this class heavily, but for now I want to show you another way to create HoloLens apps that will give you much nicer results much more easily. We will start to use Unity3D.

Microsoft says the best way to build HoloLens apps is to use Unity3D. Unity3D, or Unity as some people call it, is a cross-platform game engine. This application was first used to create OS X applications in 2005, but has grown to support more than 15 platforms at the moment. Since one of those platforms is Direct3D on Windows, this was an obvious choice for the HoloLens team as the way to build 3D worlds.

Next to being a game engine, it also is a development environment for this engine, making it a great tool with which to create HoloLens apps. Unity3D natively supports HoloLens as a platform, so you do not need to install plugins.

Unity is not free. However, the Unity Personal license is all you need if you want to develop HoloLens apps and is free if you fulfill the requirements. I suggest that you go to their website to look up the exact license agreement, but basically it says that if the company using Unity has less than $100,000 in annual gross revenue, you are free to use the tool.

Note

If you are unfamiliar with Unity I can reassure you that there is nothing magical about it and the tool is not that hard to learn. In this and in the following chapters, I will show you all you need to know to work with it.

When you install Unity from their website, you also need to install the Unity plugin for Visual Studio. There is a very good reason for this. Unity allows you to deploy to all their platforms from within the Unity editor itself, with one notable exception--the HoloLens. The final compile and build and the deployment still need to be done through Visual Studio.

Next to the Build and Deploy scenarios, we will also need Visual Studio to write scripts. Although you can use MonoDevelop, a free independent development tool based on the Mono framework, I still recommend using Visual Studio. You need Visual Studio anyway, so why not take advantage of the power of this IDE?

One word of warning--you should make sure that you have enough memory in your machine to have two instances of Visual Studio running at the same time. You will use one instance to edit your scripts, and the other to do the building and deploying of the UWP application.

Scripts in Unity are pieces of code that enhance or change the behavior of objects or add new behavior to them. Unity itself is more a 3D design environment and leaves the writing of the scripts to other tools. So you will find yourself switching between the Unity editor and the Visual Studio editor quite a lot.

When you have all the tools installed, it is time to start up Unity. Immediately, you will notice a big difference between Unity projects and Visual Studio projects. The latter has solution files and project files that determine what goes together to create a project. Unity, however, uses a folder to determine what a project is.

When you start up Unity, you will be greeted by a screen that gives you the option to open a previous project or to create a new one. When you select New Project, you will get this:

The Unity new project screen

Unity wants you to give the project a name, a folder where the files will be stored, and an organization to which the license is assigned.

We can choose to have a 3D or 2D application; we will leave this at the default 3D option. The Add Asset Package button gives you the option to add additional packages, but we will not use that for now. The Analytics option lets Unity collect usage data from your app, which can be handy when you want to see how it behaves in practice.

Press Create project to have Unity create the necessary folders and files for you.

The default Unity screen

The screen we see is what the game looks like. For now, this is an empty screen with some sort of ground and sky above it. In the left-most panel, you see the objects currently available. Unity maintains a hierarchy of objects, and this is where you can see them. In the lower part of the screen, you see the Assets we have in our project. Currently, we do not have any assets, so this is empty, but we will later add some.

The two items we do have are a camera and a light source. The light is important--without light there is nothing to see in our virtual world. The camera is usually the way we look at the scene, but in the HoloLens this works slightly differently--we need two cameras. One for each eye, remember? Fortunately, the tool takes care of this for us, so we do not need to worry about it.

We need to fix the camera a bit though. Remember when we started up the emulator and everything was black? That is because anything that is rendered black on the device is transparent. The reason is quite straightforward--the device adds light points to the real world, and black would mean removing light points from the real world. This is unfortunately physically impossible. So anything that is black will not get any light point and is, therefore, transparent. We need to change this here as well. Select the Main Camera on the left side and see how the properties appear on the right-hand side in the inspector. This is where we can make changes to our assets, in this case, our camera.

First, we need to change the position. The default camera is placed at the coordinates {0, 1, -10}. In HoloLens, however, we are the camera. We are at the center of what is going on, so we need to change the camera coordinates to {0, 0, 0}. You can enter these values manually, or you can click the cogwheel in the top-right corner and click on Reset. This will reset all values to the default, which in our case is {0, 0, 0}.

Another thing we need to change is the color of the virtual world. We do this by changing Clear Flags. This setting determines what is shown where no pixels need to be drawn for our scene. In a game, it would be nice to have a default background such as the one we see now, but in HoloLens, we want the default to be transparent and thus black.

Change Clear Flags from Skybox to Solid Color and change the Background color underneath this to black (RGB 0, 0, 0).

Every now and then, Unity might show Skybox again when we select other objects, but this is something we can ignore.

The camera itself has a "MainCamera" tag. This means that the SDK will take this camera and use it as the point of view. You can have multiple cameras in a scene, but only one camera can be the main camera. By default, this tag is already assigned, so we do not need to change anything here.

The properties of the main camera
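The same camera settings can also be applied from a script instead of the inspector. The following is a minimal sketch, assuming it is attached to the Main Camera; the class name HoloCameraSetup is made up for illustration and is not part of any SDK, while the Camera properties it sets are standard Unity API.

```csharp
using UnityEngine;

// Hypothetical helper for illustration; attach it to the Main Camera.
public class HoloCameraSetup : MonoBehaviour
{
    void Start()
    {
        Camera cam = GetComponent<Camera>();

        // The wearer is the camera, so it sits at the origin of the scene.
        transform.position = Vector3.zero;

        // Replace the skybox with a solid black background; black is what
        // the device shows as transparent.
        cam.clearFlags = CameraClearFlags.SolidColor;
        cam.backgroundColor = Color.black;
    }
}
```

Setting the values in the inspector, as described above, has the advantage that you see the result in the editor right away; a script like this only takes effect when the scene runs.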

Our scene looks quite dull. We have nothing in our world besides a black background. So let's add something a bit more interesting to our world.

We will add a sphere in our world. To do this, take the following steps:

- Make sure that the camera is not selected anymore--click anywhere in the hierarchy panel and verify that the inspector window is empty.

- Click on the Create button at the top of the hierarchy window.

- Select Sphere. You will note that the sphere is added to the hierarchy, although it is not visible. The reason for this is that, in order to make the whole system performant, the insides of our 3D objects are not rendered. Since the sphere is placed at location {0, 0, 0}, the camera is inside the sphere and we cannot see it.

- Move the sphere by selecting it in the hierarchy panel and changing its location in the inspector window. Move it about three meters away from the user and half a meter below the head of the user. Since the HoloLens is located at {0, 0, 0}, this means we have to place the sphere at {0, -0.5, 3}.

- Scale the sphere (a sphere with a diameter of 1 meter is quite large) and make it 25 centimeters by setting its scale to {0.25, 0.25, 0.25}.

You will end up with something like this:

Our scene with the newly added sphere
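For comparison, the steps above can also be expressed in a script. This is a sketch only, assuming the script is attached to some object in the scene; the class name SphereSpawner is made up for illustration. One Unity unit corresponds to one meter, which is why the numbers match the ones we typed into the inspector.

```csharp
using UnityEngine;

// Hypothetical helper for illustration; attach it to any object in the scene.
public class SphereSpawner : MonoBehaviour
{
    void Start()
    {
        // Create a default sphere; it has a diameter of 1 unit (1 meter).
        GameObject sphere = GameObject.CreatePrimitive(PrimitiveType.Sphere);

        // Three meters in front of the user and half a meter below the head.
        sphere.transform.position = new Vector3(0f, -0.5f, 3f);

        // Scale it down uniformly to a diameter of 25 centimeters.
        sphere.transform.localScale = Vector3.one * 0.25f;
    }
}
```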

Like I have said before, scripting, an important part of Unity projects, is done in C#. I want to add an empty script here, just to show you how it's done and what happens if you do so.

In the pane at the bottom, named Assets, right-click and create a new folder named Scripts. Although this is not required, it is always a good idea to organize your project in logical folders. This way you can always find your components when you need them. Double-click on that folder to open it and see its contents. It should be empty. Right-click again and select Create | C# Script. Name it PointerHelper. Unity will add the .cs extension to the file, so you should not do that.

Once you have added this empty script, take a look at the inspector pane on the right side. You will see it is not empty at all--the script contains a class named PointerHelper, derived from MonoBehaviour with two methods in it--Start and Update.

We will dive into this later on, but for now I will tell you that this is common with most scripts. The Start() method is called when the script is first loaded, and the Update() method is called each frame. We will discuss frames later on.
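The generated file looks roughly like the following. Take this as a sketch of the template rather than an exact copy: the using directives and the comments differ a little between Unity versions, and the comments here are my own.

```csharp
using UnityEngine;

public class PointerHelper : MonoBehaviour
{
    // Unity calls Start once, before the first call to Update.
    void Start()
    {
    }

    // Unity calls Update once for every frame that is rendered.
    void Update()
    {
    }
}
```

Note that the class name matches the file name. Unity relies on this to connect the script asset to the class, so if you rename one, rename the other as well.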

When you have created this script, we need to attach it to an object or an asset in our project. This particular script will later on allow us to create an object that shows the user where they are looking. Therefore, it makes sense to attach it to the camera. To do this, drag the script upward to the hierarchy pane and hover right above Main Camera. If you do this right, you will see that the Camera is selected in a light blue color. If it is a darker shade of blue, you will also see a line underneath the Camera object, stating that this script will be a child item of Camera instead of being a part of it. It needs to be a part of it, not a child.

You can always check whether you attached it correctly by selecting the camera and looking at the inspector pane. At the bottom of that pane, you will see the PointerHelper (Script) component being added to the camera's properties.

We will come back to this script later on.

Of course, before building your project, it is a good idea to save it. If you click on Save or use the Ctrl+S key combination, Unity will ask you to save your scene. A scene is a collection of objects laid out in a 3D world. In our case, this is the collection containing the light source, the camera, and our sphere. Just give your scene a name; I used main and placed it in the subfolder Scenes under Assets (which is the default).

When you have saved your project, we can start to build the code. However, before we do that, we have to tell Unity that we are working on a Windows Holographic application. To do that, do the following:

- Go to File | Build Settings or press Ctrl + Shift + B.

- In the dialog you see now, press the Add Open Scenes button. This makes sure that our current scene is part of the package.

- In the platform selection box, we can choose for which platform we would like to build our app. As you can see, there is quite a large choice of platforms, but we will choose Windows Store and then click on the Switch Platform button. You can tell we now have this platform as the default by the appearance of the Unity logo behind the Windows Store option.

- On the right-hand side, we can set specific Windows Store options.

- SDK: Windows 10.

- UWP Build Type: D3D (meaning Direct3D).

- Build and Run does not matter here; we can leave that.

- Check Unity C# Projects.

- Check Development Build.

Unity Build Settings for HoloLens

- Now, before we press Build, we need to do one more thing--click on the Player Settings... button. This is where you set the properties for the Unity Player, that is, the application that loads the scenes and performs the animations, interactions, and so on. We, however, do not use the Unity Player but use our own. Still, we need to set one very important property:

- Click on Player Settings, and see that the Inspector changes.

- Click on Other Settings.

- Check Virtual Reality Supported and verify that the Virtual Reality SDK contains Windows Holographic. This makes sure that we have two cameras when we run the app.

- Now, we can press Build. Unity will ask us for a place to put the C# and Visual Studio files. I usually create a folder in the current folder with the name App. Press Select Folder and Unity will build the code for us.

The first time you do this, you will notice this takes some time. It has to generate quite a lot of files, and it needs to package up all our assets. The next time only the changes need to be processed, so it will be much quicker.

When the building is done, Unity will open an Explorer window where our project is. You will see the newly created App folder. If you open that, you will find the .sln file, in my case HelloHoloOnUnity.sln. This is a Visual Studio solution file we can open in Visual Studio. Let's do this!

When you open the solution in Visual Studio, you will see three different projects. If you only see one, you have opened the .sln file in the root folder. Trust me, this will happen quite often. The names are the same and the folders look quite similar. However, the one with the three projects is the one we can use to build and deploy; so, open that one. Again, this is found in the App folder you created and pointed at in the last step of the Build in Unity.

As I said before, the solution contains three different projects:

- Assembly-CSharp (Universal Windows)

- Assembly-CSharp-firstpass (Universal Windows)

- HelloHoloOnUnity (Universal Windows)

The last project is our final project, the one we will deploy to the device. The first is a placeholder containing our scripts. If you look at it, you will see in the Scripts folder our PointerHelper.cs file:

The solution structure for a Unity project

If you make changes to the scripts here, they will also be visible in Unity. So this is the place to write the specific code for your application.

The second project is a helper-like project that ties the projects together. We do not have to worry about that now.

The actual project itself, in our case, HelloHoloOnUnity, is nothing more than a loader. It loads the Unity3D player and launches our scenes. The player is deployed to the device and that takes care of running the application. There is not much we can do in this code base now.

Take a look at the configurations in the Build or Deploy drop-down. We have three configurations now, but although some names might seem familiar, they are not quite what you are used to. We have the following options:

Configuration name | Optimizations | Profiler enabled | Usage |
Debug | No | Yes | Debug your scripts |
Master | Yes | No | Deploy to the Store |
Release | Yes | Yes | Test the application and test performance |

Also, note that the processor architecture is ARM by default. This is a leftover from the Phone tooling on which the HoloLens SDK is based. However, the HoloLens uses an x86 processor, so you need to set this to x86. I suggest that you use Debug/x86 for now. We also have the now familiar options for the deployment. You can use the emulator, the device through Wi-Fi (Remote Machine), or the device through USB (Device)--which one you choose is up to you.

Build the project, and run the debugger. The build will take some time at first, since it needs to get all the packages from the NuGet server. Visual Studio builds all the code, deploys the whole app and all dependencies to the device or emulator, and then starts the app and attaches the debugger. This whole process can take a couple of minutes.

However, after this is done the first time, the next build and deployments will be much faster.

When it starts up, you will be greeted in the virtual world with a nice Made With Unity logo. After a little while, this goes away. In front of you, about three meters away and about half a meter below your eyes, you will see a white sphere floating in mid-air.

Walk around it! Look at it! Crawl underneath it! Try and grab it!

That last part doesn't work. The default near clipping plane in Unity is 30 centimeters, meaning that everything that is closer than that will not be rendered. Microsoft says it is best to set the clipping plane to 85 centimeters but, to be honest, 30 works just fine, and that is what they use in their own applications anyway. So I tend to keep it at that.

Of course, you can never grab the object; it is virtual. But to be honest -- you were tempted, right?
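If you want to experiment with the near clipping plane mentioned above, you can change it in the inspector of the Main Camera or from a script. The following is a small sketch, assuming it is attached to the Main Camera; the class name NearClipSetup and the field nearClipMeters are made up for illustration, while nearClipPlane is the standard Unity camera property.

```csharp
using UnityEngine;

// Hypothetical helper for illustration; attach it to the Main Camera.
public class NearClipSetup : MonoBehaviour
{
    // Distance in meters. 0.3 is the default discussed in the text;
    // try 0.85 to see the effect of the larger recommended value.
    public float nearClipMeters = 0.3f;

    void Start()
    {
        // Anything closer to the camera than this distance is not rendered.
        GetComponent<Camera>().nearClipPlane = nearClipMeters;
    }
}
```

Because the field is public, it shows up in the inspector, so you can try different distances without editing the script.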

We have accomplished a lot. We have explored the device, and looked at it both on the inside and on the outside. We have seen what components there are. We have looked at the calibration and played a bit with the default apps. We have written a UWP app using DirectX and deployed it to both the emulator and the actual device. We have created our first Unity project and deployed that as well.

Now, it is time to take a step back and reflect a bit on how to create a great-looking holographic application. The first step in this process is always to have a great idea. I have one. We are going to build it together, but before we can do this, we need to design it and set things up. This is what we will do in the next chapter.

Download code from GitHub
