A Solution Approach

As a prerequisite, you should have a basic understanding of microservices and software architectural styles. This background will help you to follow the concepts in this book thoroughly.

After reading this book, you will be able to implement microservices for on-premises or cloud production deployment, and you will have learned the complete life cycle, from design, development, and testing through to deployment with continuous integration and deployment. This book is written for practical use and to stimulate your thinking as a solution architect. What you learn will help you to develop and ship products for any type of environment, including SaaS, PaaS, and so on. We'll primarily use Java and Java-based frameworks and tools such as Spring Boot and Jetty, and we will use Docker as the container technology.

In this chapter, you will learn how microservices came into existence and how they have evolved. The chapter highlights the major problems that on-premises and cloud-based products face and how microservices deal with them. It also explains the common problems encountered during the development of SaaS, enterprise, or large applications, and their solutions.

In this chapter, we will learn the following topics:

- Microservices and a brief background

- Monolithic architecture

- Limitations of monolithic architecture

- The benefits and flexibility that microservices offer

- Microservices deployment on containers such as Docker

Evolution of microservices

Martin Fowler explains:

Let's get some background on the way microservices have evolved over the years. Enterprise architecture evolved from historic mainframe computing, through client-server architecture (two-tier to n-tier), to Service-Oriented Architecture (SOA).

The transformation from SOA to microservices is not a standard defined by an industry organization, but a practical approach practiced by many organizations. SOA eventually evolved to become microservices.

Adrian Cockcroft, a former Netflix Architect, describes it as:

Similarly, the following quote from Mike Gancarz, a member of the team that designed the X Window System, which defines one of the paramount precepts of the Unix philosophy, suits the microservice paradigm as well:

Microservices share many characteristics with SOA, such as the focus on services and the way one service is decoupled from another. SOA evolved around the integration of monolithic applications by exposing APIs that were mostly Simple Object Access Protocol (SOAP) based. Therefore, middleware such as an Enterprise Service Bus (ESB) is very important for SOA. Microservices are less complex, and even though they may use a message bus, it is used only for message transport and does not contain any logic. Microservices are simply based on smart endpoints.

Tony Pujals defined microservices beautifully:

Though Tony only talks about HTTP, event-driven microservices may use a different protocol for communication. You can use Kafka to implement event-driven microservices. Kafka uses its own wire protocol, a binary protocol over TCP.

Monolithic architecture overview

Microservices is not something new; it has been around for many years. For example, Stubby, a general-purpose infrastructure based on Remote Procedure Call (RPC), was used in Google data centers in the early 2000s to connect a number of services within and across data centers. Its recent rise is owing to its popularity and visibility. Before microservices became popular, monolithic architecture was primarily used for developing on-premises and cloud applications.

Monolithic architecture allows the development of different components such as presentation, application logic, business logic, and Data Access Objects (DAO); you then either bundle them together in an Enterprise Archive (EAR) or a Web Archive (WAR), or store them in a single directory hierarchy (for example, Rails, NodeJS, and so on).

Many famous applications such as Netflix have been developed using microservices architecture. Moreover, eBay, Amazon, and Groupon have evolved from monolithic architecture to a microservices architecture.

Now that you have had an insight into the background and history of microservices, let's discuss the limitations of a traditional approach, namely monolithic application development, and compare how microservices would address them.

Limitations of monolithic architecture versus their solutions with microservices

As we know, change is eternal. Humans always look for better solutions. This is how microservices became what it is today, and it may evolve further in the future. Today, organizations are using Agile methodologies to develop applications; it is a fast-paced development environment, and it operates on a much larger scale since the arrival of cloud and distributed technologies. Many argue that monolithic architecture could also serve a similar purpose and be aligned with Agile methodologies, but microservices still provides a better solution to many aspects of production-ready applications.

To understand the design differences between monolithic and microservices, let's take an example of a restaurant table-booking application. This application may have many services such as customers, bookings, analytics and so on, as well as regular components such as presentation and database.

We'll explore three different designs here: traditional monolithic design, monolithic design with services, and microservices design.

Traditional monolithic design

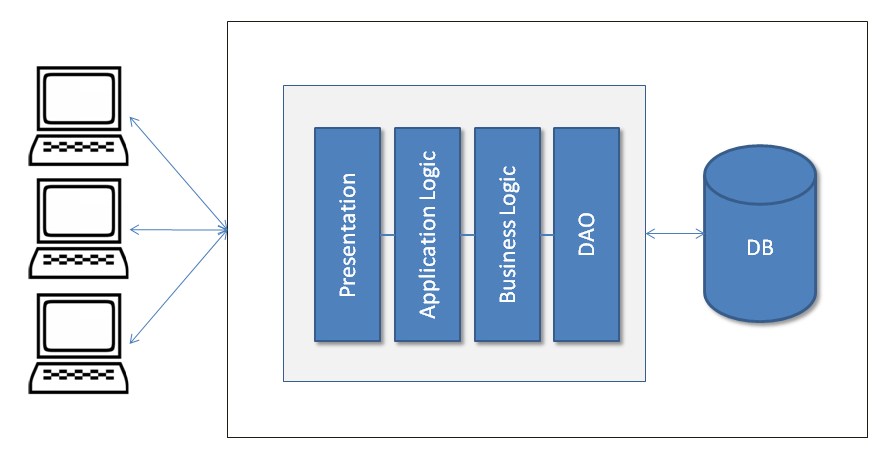

The following diagram explains the traditional monolithic application design. This design was widely used before SOA became popular:

In the traditional monolithic design, everything is bundled in the same archive: the Presentation code, the Application Logic and Business Logic code, and the DAO and related code that interacts with the database files or another source.

Monolithic design with services

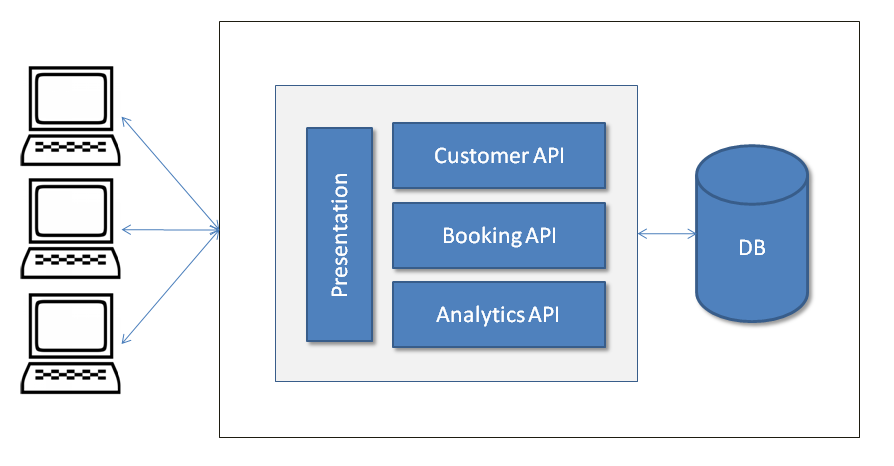

After SOA, applications started being developed based on services, where each component provides the services to other components or external entities. The following diagram depicts the monolithic application with different services; here services are being used with a Presentation component. All services, the Presentation component, or any other components are bundled together:

Services design

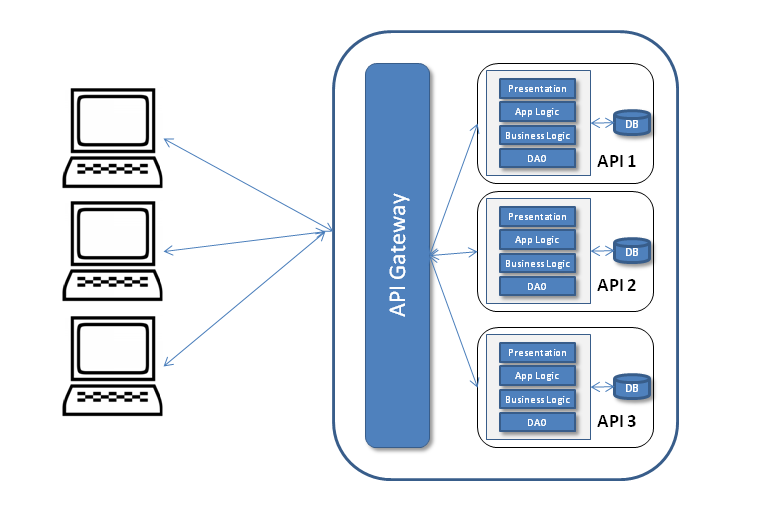

The third design depicts microservices. Here, each component is autonomous: it can be developed, built, tested, and deployed independently. Even the application User Interface (UI) component could be a client that consumes the microservices. For the purpose of our example, a layered design is used within each µService.

The API Gateway provides the interface where different clients can access the individual services and solve the following problems:

What do you do when you want to send different responses to different clients for the same service? For example, a booking service could send different responses to a mobile client (minimal information) and a desktop client (detailed information) providing different details, and something different again to a third-party client.

A response may require fetching information from two or more services:
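The two gateway concerns described above, client-specific responses and aggregation across services, might be sketched in Java as follows. This is only a minimal illustration under stated assumptions: `BookingService`, `CustomerService`, `ClientType`, and `ApiGateway` are hypothetical names introduced here, and the in-memory lambdas stand in for what would be remote calls in a real deployment.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical downstream services; in a real system these would be remote calls.
interface BookingService {
    Map<String, String> findBooking(String bookingId);
}

interface CustomerService {
    Map<String, String> findCustomer(String customerId);
}

// Hypothetical client types that the gateway distinguishes between.
enum ClientType { MOBILE, DESKTOP }

class ApiGateway {
    private final BookingService bookings;
    private final CustomerService customers;

    ApiGateway(BookingService bookings, CustomerService customers) {
        this.bookings = bookings;
        this.customers = customers;
    }

    // Aggregates data from two services and trims the payload for mobile clients.
    Map<String, String> bookingView(String bookingId, ClientType client) {
        Map<String, String> booking = bookings.findBooking(bookingId);
        Map<String, String> customer = customers.findCustomer(booking.get("customerId"));

        Map<String, String> view = new LinkedHashMap<>();
        view.put("bookingId", bookingId);
        view.put("table", booking.get("table"));
        view.put("customerName", customer.get("name"));
        if (client == ClientType.DESKTOP) {
            // Desktop clients receive the detailed fields as well.
            view.put("time", booking.get("time"));
            view.put("customerEmail", customer.get("email"));
        }
        return view;
    }
}

class ApiGatewayDemo {
    // Builds a gateway backed by canned, in-memory data (illustrative only).
    static ApiGateway sampleGateway() {
        BookingService bookings = id -> {
            Map<String, String> b = new LinkedHashMap<>();
            b.put("customerId", "c-1");
            b.put("table", "12");
            b.put("time", "19:30");
            return b;
        };
        CustomerService customers = id -> {
            Map<String, String> c = new LinkedHashMap<>();
            c.put("name", "Alice");
            c.put("email", "alice@example.com");
            return c;
        };
        return new ApiGateway(bookings, customers);
    }

    public static void main(String[] args) {
        ApiGateway gateway = sampleGateway();
        System.out.println(gateway.bookingView("b-42", ClientType.MOBILE));
        System.out.println(gateway.bookingView("b-42", ClientType.DESKTOP));
    }
}
```

The individual services stay unaware of client types; only the gateway decides how much of the combined data each client receives.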

After observing all the sample design diagrams, which are very high-level designs, you might find out that in monolithic design, the components are bundled together and tightly coupled.

All the services are part of the same bundle. Similarly, in the second design figure, you can see a variant of the first figure where all services could have their own layers and form different APIs, but, as shown in the figure, these are also all bundled together.

Conversely, in the microservices design, components are not bundled together and are loosely coupled. Each service has its own layers and DB, and is bundled in a separate archive. All these deployed services provide their specific APIs, such as Customers or Bookings. These APIs are ready to consume. Even the UI is deployed separately and designed using µService. For this reason, the microservices design provides various advantages over its monolithic counterpart. I would still remind you that there are some exceptional cases where monolithic application development is highly successful, such as Etsy, and peer-to-peer e-commerce web applications.

Now let us discuss the limitations you'd face while working with monolithic applications.

One-dimensional scalability

When a large monolithic application is scaled, everything scales, because all the components are bundled together. For example, in the case of a restaurant table reservation application, even if you only want to scale the table-booking service, you have to scale the whole application; the table-booking service cannot be scaled separately. This does not utilize resources optimally.

In addition, this scaling is one-dimensional. Running more copies of the application provides scale as the transaction volume increases. An operations team could adjust the number of application copies running behind a load balancer, based on the load, in a server farm or a cloud. Each of these copies would access the same data source, therefore increasing the memory consumption, and the resulting I/O operations make caching less effective.

Microservices gives the flexibility to scale only those services where scale is required and it allows optimal utilization of the resources. As we mentioned previously, when it is needed, you can scale just the table-booking service without affecting any of the other components. It also allows two-dimensional scaling; here we can not only increase the transaction volume, but also the data volume using caching (Platform scale).

A development team can then focus on the delivery and shipping of new features, instead of worrying about the scaling issues (Product scale).

Microservices could help you scale platform, people, and product dimensions as we have seen previously. People scaling here refers to an increase or decrease in team size depending on microservices' specific development and focus needs.

Microservice development using RESTful web-service development makes it scalable in the sense that the server-end of REST is stateless; this means that there is not much communication between servers, which makes it horizontally scalable.
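To make the link between statelessness and horizontal scaling concrete, here is a small sketch. It assumes a hypothetical `TableBookingHandler` whose response depends only on the incoming request, and a hypothetical `RoundRobinBalancer` that spreads requests over replicas; neither name comes from a real framework.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.atomic.AtomicInteger;

// A stateless handler: the response depends only on the request,
// never on data remembered from earlier requests.
class TableBookingHandler {
    private final String instanceName;

    TableBookingHandler(String instanceName) {
        this.instanceName = instanceName;
    }

    String handle(String restaurantId, int partySize) {
        // Everything needed to answer is carried by the request itself.
        return "restaurant=" + restaurantId + ";seats=" + partySize;
    }

    String instanceName() {
        return instanceName;
    }
}

// A simple round-robin balancer (hypothetical, for illustration only).
class RoundRobinBalancer {
    private final List<TableBookingHandler> replicas = new ArrayList<>();
    private final AtomicInteger next = new AtomicInteger();

    void addReplica(TableBookingHandler replica) {
        replicas.add(replica);
    }

    // Picks the next replica in turn; assumes at least one replica was added.
    TableBookingHandler pick() {
        int index = Math.floorMod(next.getAndIncrement(), replicas.size());
        return replicas.get(index);
    }
}

class StatelessScalingDemo {
    public static void main(String[] args) {
        RoundRobinBalancer balancer = new RoundRobinBalancer();
        balancer.addReplica(new TableBookingHandler("replica-1"));
        balancer.addReplica(new TableBookingHandler("replica-2"));

        // The same request may land on any replica and still gets the same answer.
        for (int i = 0; i < 4; i++) {
            TableBookingHandler replica = balancer.pick();
            System.out.println(replica.instanceName() + " -> " + replica.handle("r-7", 4));
        }
    }
}
```

Because no replica holds per-client state, adding capacity is a matter of calling `addReplica` again; no session data has to be copied or synchronized between servers.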

Release rollback in case of failure

Since monolithic applications are either bundled in the same archive or contained in a single directory, they prevent modular deployment of the code. For example, many of you may have experienced the pain of delaying the rollout of a whole release because of the failure of one feature.

To resolve these situations, microservices gives us the flexibility to roll back only those features that have failed. It's a very flexible and productive approach. For example, let's assume you are a member of an online shopping portal development team and want to develop an application based on microservices. You can divide your application based on different domains such as products, payments, cart, and so on, and package all these components as separate packages. Once you have deployed all these packages separately, they act as separate components that can be developed, tested, and deployed independently; each one is called a µService.

Now, let's see how that helps you. Let's say that after a production release launching new features, enhancements, and bug fixes, you find flaws in the payment service that need an immediate fix. Since the architecture you have used is based on microservices, you can roll back the payment service instead of rolling back the whole release, if your application architecture allows, or apply the fixes to the payment microservice without affecting the other services. This not only allows you to handle failure properly, but also helps to deliver features and fixes swiftly to a customer.
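The per-service rollback described above can be pictured with a small sketch. It assumes a hypothetical `DeploymentRegistry` that keeps a version history for each service; real deployment tooling tracks this differently, so treat the class and its methods as illustrative only.

```java
import java.util.ArrayDeque;
import java.util.Deque;
import java.util.HashMap;
import java.util.Map;

// Hypothetical registry that remembers the deployed versions of each service.
class DeploymentRegistry {
    private final Map<String, Deque<String>> history = new HashMap<>();

    // Records a new deployment; the newest version sits on top of the stack.
    void deploy(String service, String version) {
        history.computeIfAbsent(service, key -> new ArrayDeque<>()).push(version);
    }

    String currentVersion(String service) {
        Deque<String> versions = history.get(service);
        if (versions == null || versions.isEmpty()) {
            throw new IllegalStateException("Service was never deployed: " + service);
        }
        return versions.peek();
    }

    // Rolls back one service only; every other service keeps its current version.
    String rollback(String service) {
        Deque<String> versions = history.get(service);
        if (versions == null || versions.size() < 2) {
            throw new IllegalStateException("No earlier version of " + service);
        }
        versions.pop();
        return versions.peek();
    }
}

class RollbackDemo {
    public static void main(String[] args) {
        DeploymentRegistry registry = new DeploymentRegistry();
        registry.deploy("payment", "1.0");
        registry.deploy("cart", "1.0");
        // A new release ships both services.
        registry.deploy("payment", "1.1");
        registry.deploy("cart", "1.1");

        // Only the faulty payment service is rolled back.
        registry.rollback("payment");
        System.out.println("payment: " + registry.currentVersion("payment"));
        System.out.println("cart: " + registry.currentVersion("cart"));
    }
}
```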

Problems in adopting new technologies

Monolithic applications are mostly developed and enhanced based on the technologies primarily used during the initial development of a project or a product. It makes it very difficult to introduce new technology at a later stage of the development or once the product is in a mature state (for example, after a few years). In addition, different modules in the same project, that depend on different versions of the same library, make this more challenging.

Technology is improving year on year. For example, your system might be designed in Java and then, a few years later, you want to develop a new service in Ruby on Rails or NodeJS because of a business need or to utilize the advantages of new technologies. It would be very difficult to utilize the new technology in an existing monolithic application.

It is not just about code-level integration, but also about testing and deployment. It is possible to adopt a new technology by re-writing the entire application, but it is time-consuming and a risky thing to do.

On the other hand, because of its component-based development and design, microservices gives us the flexibility to use any technology, new or old, for its development. It does not restrict you to specific technologies; instead, it gives a new paradigm to your development and engineering activities. You can use Ruby on Rails, NodeJS, or any other technology at any time.

So, how is it achieved? Well, it's very simple. Microservices-based application code is not bundled into a single archive and is not stored in a single directory. Each µService has its own archive and is deployed separately. A new service can be developed in an isolated environment, and tested and deployed without any technical issues. Each microservice also runs in its own separate process; it serves its purpose without conflicts such as tightly coupled shared resources, and the processes remain independent.

Since a microservice is by definition a small, self-contained function, it provides a low-risk opportunity to try a new technology. That is definitely not the case where monolithic systems are concerned.

You can also make your microservice available as open source software so it can be used by others, and if required it may interoperate with a closed source proprietary one, which is not possible with monolithic applications.

Alignment with Agile practices

There is no question that monolithic applications can be developed using Agile practices, and many are. Continuous Integration (CI) and Continuous Deployment (CD) could be used, but the question is: are Agile practices being used effectively? Let's examine the following points:

- Stories are likely to depend on each other in various ways, and in that case a story cannot be taken up until the story it depends on is complete

- The build takes more time as the code size increases

- The frequent deployment of a large monolithic application is a difficult task to achieve

- You would have to redeploy the whole application even if you updated a single component

- Redeployment may cause problems for components that are already running; for example, a job scheduler may be disrupted whether or not the changed components affect it

- The risk of redeployment may increase if a single changed component does not work properly or if it needs more fixes

- UI developers always need more redeployment, which is quite risky and time-consuming for large monolithic applications

The preceding issues can be tackled very easily by microservices. For example, UI developers may have their own UI component that can be developed, built, tested, and deployed separately. Similarly, other microservices might also be deployable independently and, because of their autonomous characteristics, the risk of system failure is reduced. Another advantage for development purposes is that UI developers can use JSON objects and mock Ajax calls to develop the UI in isolation. After development completes, developers can consume the actual APIs and test the functionality. To summarize, you could say that microservices development is swift and aligns well with the incremental needs of businesses.
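The idea of developing a UI against mocked responses can be illustrated in Java as well. The sketch below assumes a hypothetical `BookingApi` contract, a `MockBookingApi` that returns a canned JSON string, and a `BookingPanel` standing in for a UI component; the string check is deliberately crude and is not a substitute for a JSON parser.

```java
// Contract that the UI codes against (hypothetical).
interface BookingApi {
    String bookingJson(String bookingId);
}

// Canned response used while the real booking service is still being developed.
class MockBookingApi implements BookingApi {
    @Override
    public String bookingJson(String bookingId) {
        return "{\"id\":\"" + bookingId + "\",\"table\":12,\"status\":\"CONFIRMED\"}";
    }
}

// UI-side component; it only knows the BookingApi contract, not who implements it.
class BookingPanel {
    private final BookingApi api;

    BookingPanel(BookingApi api) {
        this.api = api;
    }

    // Crude check on the raw JSON text, sufficient for this illustration.
    boolean showsConfirmed(String bookingId) {
        return api.bookingJson(bookingId).contains("\"status\":\"CONFIRMED\"");
    }
}

class MockApiDemo {
    public static void main(String[] args) {
        // During UI development the mock is injected; later, an implementation
        // that calls the real service can be passed in without changing the panel.
        BookingPanel panel = new BookingPanel(new MockBookingApi());
        System.out.println("Confirmed badge shown: " + panel.showsConfirmed("b-42"));
    }
}
```

Swapping the mock for the real client touches only the constructor argument, which is what lets UI work proceed before the backing service exists.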

Ease of development – could be done better

Generally, the code of a large monolithic application is the toughest for developers to understand, and it takes time before a new developer can become productive. Even loading a large monolithic application into an IDE is troublesome; it makes the IDE slower and the developer less productive.

A change in a large monolithic application is difficult to implement and takes more time due to a large code base, and there will be a high risk of bugs if impact analysis is not done properly and thoroughly. Therefore, it becomes a prerequisite for developers to do thorough impact analysis before implementing changes.

In monolithic applications, dependencies build up over time as all components are bundled together. Therefore, the risk associated with a code change rises steeply as the size of the change (the number of modified lines of code) grows.

When a code base is huge and more than 100 developers are working on it, it becomes very difficult to build products and implement new features because of the previously mentioned reason. You need to make sure that everything is in place, and that everything is coordinated. A well-designed and documented API helps a lot in such cases.

Netflix, the on-demand internet streaming provider, had problems getting their application developed, with around 100 people working on it. Then, they used a cloud and broke up the application into separate pieces. These ended up being microservices. Microservices grew from the desire for speed and agility and to deploy teams independently.

Micro-components are made loosely coupled thanks to their exposed APIs, which can be continuously integration tested. With microservices' continuous release cycle, changes are small and developers can rapidly exercise them with a regression test, then go over them and fix any defects found, reducing the risk of a deployment. This results in higher velocity with a lower associated risk.

Owing to the separation of functionality and single responsibility principle, microservices makes teams very productive. You can find a number of examples online where large projects have been developed with minimum team sizes such as eight to ten developers.

Developers can focus better with a smaller code base, and the resulting better feature implementation leads to a more empathic relationship with the users of the product. This is conducive to better motivation and clarity in feature implementation. An empathic relationship with users allows a shorter feedback loop and better, speedier prioritization of the feature pipeline. A shorter feedback loop also makes defect detection faster.

Each microservices team works independently, and new features or ideas can be implemented without being coordinated with larger audiences. Endpoint failure handling is also easy to implement in the microservices design.

Recently, at one of the conferences, a team demonstrated how they had developed a microservices-based transport-tracking application with Uber-type tracking features, including iOS and Android applications, within 10 weeks. A big consulting firm had given its client a seven-month estimate for the same application. It shows how microservices is aligned with Agile methodologies and CI/CD.

Microservices build pipeline

Microservices could also be built and tested using the popular CI/CD tools such as Jenkins, TeamCity, and so on. It is very similar to how a build is done in a monolithic application. In microservices, each microservice is treated like a small application.

For example, once you commit the code in the repository (SCM), CI/CD tools trigger the build process:

- Cleaning code

- Code compilation

- Unit test execution

- Contract/Acceptance test execution

- Building the application archives/container images

- Publishing the archives/container images to repository management

- Deployment on various Delivery environments such as Dev, QA, Stage, and so on

- Integration and Functional test execution

- Any other steps
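The ordered steps listed above can be modeled as a sequence of stages that stops at the first failure. The following sketch is a simplified illustration, not how Jenkins or TeamCity are implemented; `BuildPipeline` and the stage names are hypothetical, and each stage is reduced to a function that reports success or failure.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.function.BooleanSupplier;

// Hypothetical pipeline: stages run in the order they were registered.
class BuildPipeline {
    private final Map<String, BooleanSupplier> stages = new LinkedHashMap<>();

    BuildPipeline stage(String name, BooleanSupplier action) {
        stages.put(name, action);
        return this;
    }

    // Runs the stages in order and stops at the first failing stage.
    // Returns the names of the stages that completed successfully.
    List<String> run() {
        List<String> completed = new ArrayList<>();
        for (Map.Entry<String, BooleanSupplier> stage : stages.entrySet()) {
            if (!stage.getValue().getAsBoolean()) {
                break;
            }
            completed.add(stage.getKey());
        }
        return completed;
    }
}

class BuildPipelineDemo {
    public static void main(String[] args) {
        List<String> completed = new BuildPipeline()
                .stage("clean", () -> true)
                .stage("compile", () -> true)
                .stage("unit-tests", () -> true)
                .stage("contract-tests", () -> true)
                .stage("build-image", () -> true)
                .stage("publish-image", () -> true)
                .stage("deploy-dev", () -> true)
                .stage("integration-tests", () -> true)
                .run();
        System.out.println("Completed stages: " + completed);
    }
}
```

Because each microservice is treated like a small application, each one would have its own pipeline of this shape, and a failing stage in one service does not block the others.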

A release-build trigger then changes the SNAPSHOT or RELEASE version in pom.xml (in the case of Maven), builds the artifacts as described for the normal build trigger, publishes the artifacts to the artifact repository, and tags this version in the source repository. If you use container images, the container image is built as part of the build.
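The version handling in a release build can be sketched as plain string manipulation. The example assumes Maven-style versions of the form `1.0.0-SNAPSHOT`; the `ReleaseVersions` helper, the patch-increment rule, and the `artifactId-version` tag format are assumptions made for illustration, and release tooling may apply different conventions.

```java
// Hypothetical helper for the version changes made during a release build.
class ReleaseVersions {
    private static final String SNAPSHOT_SUFFIX = "-SNAPSHOT";

    // 1.0.0-SNAPSHOT -> 1.0.0
    static String releaseVersion(String developmentVersion) {
        if (!developmentVersion.endsWith(SNAPSHOT_SUFFIX)) {
            throw new IllegalArgumentException("Not a SNAPSHOT version: " + developmentVersion);
        }
        return developmentVersion.substring(
                0, developmentVersion.length() - SNAPSHOT_SUFFIX.length());
    }

    // 1.0.0 -> 1.0.1-SNAPSHOT (assumes the last segment is numeric)
    static String nextDevelopmentVersion(String releaseVersion) {
        int lastDot = releaseVersion.lastIndexOf('.');
        int lastSegment = Integer.parseInt(releaseVersion.substring(lastDot + 1));
        return releaseVersion.substring(0, lastDot + 1) + (lastSegment + 1) + SNAPSHOT_SUFFIX;
    }

    // Name of the source-control tag for a release (assumed naming scheme).
    static String tagFor(String artifactId, String releaseVersion) {
        return artifactId + "-" + releaseVersion;
    }
}

class ReleaseVersionsDemo {
    public static void main(String[] args) {
        String release = ReleaseVersions.releaseVersion("1.0.0-SNAPSHOT");
        System.out.println("Release version: " + release);
        System.out.println("Tag: " + ReleaseVersions.tagFor("booking-service", release));
        System.out.println("Next development version: "
                + ReleaseVersions.nextDevelopmentVersion(release));
    }
}
```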

Deployment using a container such as Docker

Owing to the design of microservices, you need to have an environment that provides flexibility, agility, and smoothness for continuous integration and deployment as well as for shipment. Microservices deployments need speed, isolation management, and an Agile life-cycle.

Products and software can also be shipped using the concept of an intermodal-container model. An intermodal container is a large standardized container, designed for intermodal freight transport. It allows cargo to use different modes of transport (truck, rail, or ship) without unloading and reloading. This is an efficient and secure way of storing and transporting goods. It resolves the problem of shipping, which previously had been a time-consuming, labor-intensive process in which repeated handling often broke fragile goods.

Shipping containers encapsulate their content. Similarly, software containers are starting to be used to encapsulate their contents (products, applications, dependencies, and so on).

Previously, Virtual Machines (VMs) were used to create software images that could be deployed where needed. Later, containers such as Docker became more popular as they were compatible with both traditional virtualization systems and cloud environments. VMs are also heavy: for example, it is not practical to deploy more than a couple of VMs on a developer's laptop, and building and booting a VM is usually I/O intensive and consequently slow.

Containers

A container (for example, Linux containers) provides a lightweight runtime environment consisting of the core features of virtual machines and the isolated services of operating systems. This makes the packaging and execution of microservices easy and smooth.

As the following diagram shows, a container runs as an application (microservice) within the Operating System. The OS sits on top of the hardware and each OS could have multiple containers, with a container running the application.

A container makes use of an operating system's kernel features, such as cgroups and namespaces, which allow multiple containers to share the same kernel while running in complete isolation from one another. This removes the need for a complete OS installation for each use, and with it the associated overhead. It also makes optimal use of the hardware.

Docker

Container technology is one of the fastest growing technologies today, and Docker leads this segment. Docker is an open source project that was launched in 2013. Some 10,000 developers tried it after its interactive tutorial launched in August 2013. It had been downloaded 2.75 million times by the time of its 1.0 release in June 2014. Many large companies have signed partnership agreements with Docker, such as Microsoft, Red Hat, HP, and OpenStack, as have service providers such as Amazon Web Services, IBM, and Google.

As we mentioned earlier, Docker also makes use of the Linux kernel features, such as cgroups and namespaces, to ensure resource isolation and packaging of the application with its dependencies. This packaging of dependencies enables an application to run as expected across different Linux operating systems/distributions, supporting a level of portability. Furthermore, this portability allows developers to develop an application in any language and then easily deploy it from a laptop to a test or production server.

A container comprises just the application and its dependencies, including the basic operating system. This makes it lightweight and efficient in terms of resource utilization. Developers and system administrators are interested in containers for their portability and efficient resource utilization.

Everything in a Docker container executes natively on the host and uses the host kernel directly. Each container has its own user namespace.

Docker's architecture

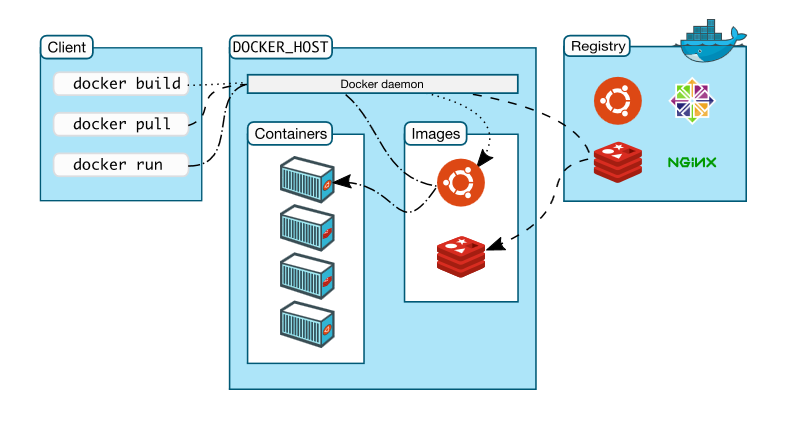

As specified in the Docker documentation, Docker uses a client-server architecture. As shown in the figure (sourced from Docker's website: https://docs.docker.com/engine/docker-overview/), the Docker client is primarily a user interface that is used by an end user; clients communicate back and forth with a Docker daemon. The Docker daemon does the heavy lifting of building, running, and distributing your Docker containers. The Docker client and the daemon can run on the same system or on different machines.

The Docker client and daemon communicate via sockets or through a RESTful API. Docker registries are public or private Docker image repositories to which you upload images and from which you download them; for example, Docker Hub (hub.docker.com) is a public Docker registry.

The primary components of Docker are:

- Docker image: A Docker image is a read-only template. For example, an image could contain an Ubuntu operating system with Apache web server and your web application installed. Docker images are a build component of Docker and images are used to create Docker containers. Docker provides a simple way to build new images or update existing images. You can also use images created by others and/or extend them.

- Docker container: A Docker container is created from a Docker image. Docker works so that the container can only see its own processes, and has its own filesystem layered onto a host filesystem and a networking stack, which pipes to the host-networking stack. Docker containers can be run, started, stopped, moved, or deleted.

Deployment

Microservices deployment with Docker deals with three parts:

- Application packaging, for example, JAR

- Building a Docker image containing the JAR and its dependencies, using a Docker instruction file (the Dockerfile) and the docker build command; this makes image creation repeatable

- Docker container execution from this newly built image using the docker run command
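As a rough illustration of the last two parts, the sketch below assembles the `docker build` and `docker run` command lines in Java without executing them. The image name `booking-service:1.0`, the port numbers, and the `DockerCommands` helper are hypothetical; the options used (`-t` to tag the image, `-d` to run detached, and `-p` to publish a port) are standard Docker CLI options.

```java
import java.util.Arrays;
import java.util.List;

// Hypothetical helper that assembles Docker CLI invocations as argument lists.
class DockerCommands {
    // docker build -t <image> <context>: builds an image from the Dockerfile
    // found in the given build context directory and tags it.
    static List<String> build(String imageTag, String contextDir) {
        return Arrays.asList("docker", "build", "-t", imageTag, contextDir);
    }

    // docker run -d -p <host>:<container> <image>: starts a detached container
    // and publishes the container port on the host.
    static List<String> run(String imageTag, int hostPort, int containerPort) {
        return Arrays.asList(
                "docker", "run", "-d", "-p", hostPort + ":" + containerPort, imageTag);
    }
}

class DockerCommandsDemo {
    public static void main(String[] args) {
        // Assumes the application JAR and a Dockerfile are in the current directory.
        System.out.println(String.join(" ", DockerCommands.build("booking-service:1.0", ".")));
        System.out.println(String.join(" ", DockerCommands.run("booking-service:1.0", 8080, 8080)));
    }
}
```

The argument lists could be handed to `ProcessBuilder` to execute the commands from a build script, provided Docker is installed on the machine.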

The preceding information will help you to understand the basics of Docker. You will learn more about Docker and its practical usage in Chapter 5, Deployment and Testing. For sources and further reference, see https://docs.docker.com.

Summary

In this chapter, you have learned or recapped the high-level design of large software projects, from traditional monolithic to microservices applications. You were also introduced to a brief history of microservices, the limitations of monolithic applications, and the benefits and flexibility that microservices offer. I hope this chapter helped you to understand the common problems faced in a production environment by monolithic applications and how microservices can resolve such problems. You were also introduced to lightweight and efficient Docker containers and saw how containerization is an excellent way to simplify microservices deployment.

In the next chapter, you will get to know about setting up the development environment from IDE, and other development tools, to different libraries. We will deal with creating basic projects and setting up Spring Boot configuration to build and develop our first microservice. We will be using Java 9 as the language and Spring Boot for our project.
