Docker Overview

Welcome to Mastering Docker, Third Edition! This first chapter will cover the Docker basics that you should already have a pretty good handle on. But if you don't already have the required knowledge at this point, this chapter will help you with the basics, so that subsequent chapters don't feel as heavy. By the end of the book, you should be a Docker master, and will be able to implement Docker in your environments, building and supporting applications on top of them.

In this chapter, we're going to review the following high-level topics:

- Understanding Docker

- The differences between dedicated hosts, virtual machines, and Docker

- Docker installers/installation

- The Docker command-line client

- Docker and the container ecosystem

Technical requirements

In this chapter, we are going to discuss how to install Docker locally. To do this, you will need a host running one of the three following operating systems:

- macOS High Sierra and above

- Windows 10 Professional

- Ubuntu 18.04


Understanding Docker

Before we look at installing Docker, let's begin by getting an understanding of the problems that the Docker technology aims to solve.

Developers

The company behind Docker has always described the program as fixing the "it works on my machine" problem. This problem is best summed up by an image based on the Disaster Girl meme with the simple tagline "Worked fine in dev, ops problem now", which started popping up in presentations, forums, and Slack channels a few years ago. While it is funny, it is unfortunately an all-too-real problem, and one I have personally been on the receiving end of. Let's take a look at an example of what is meant by this.

The problem

Even in a world where DevOps best practices are followed, it is still all too easy for a developer's working environment to not match the final production environment.

For example, a developer using the macOS version of, say, PHP will probably not be running the same version as the Linux server that hosts the production code. Even if the versions match, you then have to deal with differences in the configuration and overall environment on which the version of PHP is running, such as differences in the way file permissions are handled between different operating system versions, to name just one potential problem.

All of this comes to a head when it is time for a developer to deploy their code to the host and it doesn't work. So, should the production environment be configured to match the developer's machine, or should developers only do their work in environments that match those used in production?

In an ideal world, everything should be consistent, from the developer's laptop all the way through to your production servers; however, this utopia has traditionally been difficult to achieve. Everyone has their way of working and their own personal preferences—enforcing consistency across multiple platforms is difficult enough when there is a single engineer working on the systems, let alone a team of engineers working with a team of potentially hundreds of developers.

The Docker solution

Using Docker for Mac or Docker for Windows, a developer can easily wrap their code in a container, which they have either defined themselves or created as a Dockerfile while working alongside a sysadmin or operations team. We will cover Dockerfiles in Chapter 2, Building Container Images, and Docker Compose files in Chapter 5, Docker Compose.

They can continue to use their chosen IDE and maintain their workflows when working with the code. As we will see in the upcoming sections of this chapter, installing and using Docker is not difficult; in fact, considering how much of a chore it was to maintain consistent environments in the past, even with automation, Docker feels a little too easy—almost like cheating.

Operators

I have been working in operations for more years than I would like to admit, and the following problem has cropped up regularly.

The problem

Let's say you are looking after five servers: three load-balanced web servers and two database servers in a master/slave configuration, all dedicated to running Application 1. You are using a tool, such as Puppet or Chef, to automatically manage the software stack and configuration across your five servers.

Everything is going great, until you are told, "We need to deploy Application 2 on the same servers that are running Application 1." On the face of it, this is no problem; you can tweak your Puppet or Chef configuration to add new users and vhosts, pull the new code down, and so on. However, you notice that Application 2 requires a newer version of the software stack that you are running for Application 1.

To make matters worse, you already know that Application 1 flat out refuses to work with the new software stack, and that Application 2 is not backwards compatible.

Traditionally, this leaves you with a few choices, all of which just add to the problem in one way or another:

- Ask for more servers? While this traditionally is probably the safest technical solution, it does not automatically mean that there will be the budget for additional resources.

- Re-architect the solution? Taking one of the web and database servers out of the load balancer or replication, and redeploying them with the software stack for Application 2, may seem like the next easiest option from a technical point of view. However, you are introducing single points of failure for Application 2, and also reducing the redundancy for Application 1: there was probably a reason why you were running three web and two database servers in the first place.

- Attempt to install the new software stack side-by-side on your servers? Well, this certainly is possible and may seem like a good short-term plan to get the project out of the door, but it could leave you with a house of cards that could come tumbling down when the first critical security patch is needed for either software stack.

The Docker solution

This is where Docker starts to come into its own. If you have Application 1 running across your three web servers in containers, you may actually be running more than three containers; in fact, you could already be running six, doubling up on the containers, allowing you to run rolling deployments of your application without reducing the availability of Application 1.

Deploying Application 2 in this environment is as easy as launching more containers across your three hosts and then routing to the newly deployed application using your load balancer. As you are just deploying containers, you do not need to worry about the logistics of deploying, configuring, and managing two versions of the same software stack on the same server.
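As a minimal sketch of that idea, the function below starts two applications that need different PHP versions on the same host. The container names, the host ports 8081 and 8082, and the php:5.6-apache and php:7.2-apache image tags are illustrative assumptions, not part of the scenario; your load balancer would then route to each port.

```shell
# Hypothetical example: two applications with conflicting software stacks
# running side by side on one host, each isolated in its own container.
launch_side_by_side() {
    # Application 1 stays on the older PHP stack it was written for.
    docker container run -d --name application1 -p 8081:80 php:5.6-apache
    # Application 2 gets the newer stack without touching Application 1.
    docker container run -d --name application2 -p 8082:80 php:7.2-apache
}
```

Running `launch_side_by_side` on each of the three web servers gives every host both stacks, and neither container can see the other's PHP installation.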

We will work through an example of this exact scenario in Chapter 5, Docker Compose.

Enterprise

Enterprises suffer from the same problems described previously, as they have both developers and operators; however, they have both of these entities on a much larger scale, and there is also a lot more risk involved.

The problem

Because of the aforementioned risk, along with the fact that any downtime could cost sales or impact reputation, enterprises need to test every deployment before it is released. This means that new features and fixes are stuck in a holding pattern while the following takes place:

- Test environments are spun up and configured

- Applications are deployed across the newly launched environments

- Test plans are executed and the application and configuration are tweaked until the tests pass

- Requests for change are written, submitted, and discussed to get the updated application deployed to production

This process can take anywhere from a few days to a few weeks, or even months, depending on the complexity of the application and the risk the change introduces. While the process is required to ensure continuity and availability for the enterprise at a technological level, it does potentially introduce risk at the business level. What if you have a new feature stuck in this holding pattern and a competitor releases a similar—or worse still—the same feature, ahead of you?

This scenario could be just as damaging to sales and reputation as the downtime that the process was put in place to protect you against in the first place.

The Docker solution

Let me start by saying that Docker does not remove the need for a process, such as the one just described, to exist or be followed. However, as we have already touched upon, it does make things a lot easier, as you are already working consistently: your developers have been working with the same container configuration that is running in production, so it is not much of a step to apply the same methodology to your testing.

For example, when a developer checks in code that they know works in their local development environment (as that is where they have been doing all of their work), your testing tool can launch the same containers to run your automated tests against. Once the containers have been used, they can be removed to free up resources for the next lot of tests. This means that, all of a sudden, your testing process and procedures are a lot more flexible, and you can continue to reuse the same environment, rather than redeploying or reimaging servers for the next set of testing.
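A test step along those lines might look like the following sketch. It assumes the application's image already contains the code and that the test suite can be started with a single command inside it; both the image name and the command are placeholders supplied by the caller. The `--rm` flag tells Docker to delete the container as soon as it exits, which is what frees the resources for the next run.

```shell
# Sketch of a CI test step: run the suite in a throwaway container created
# from the same image developers use locally.
#   $1  image to test (hypothetical, e.g. my-app:candidate)
#   $@  test command to run inside the container
run_tests_in_container() {
    image="$1"
    shift
    # docker container run exits with the container's exit status, so a
    # failing test suite makes this step fail too.
    docker container run --rm "$image" "$@"
}
```

For example, `run_tests_in_container my-app:candidate ./run-tests.sh`, where both arguments are hypothetical names for your own image and test script.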

This streamlining of the process can be taken as far as having your new application containers push all the way through to production.

The quicker this process can be completed, the quicker you can confidently launch new features or fixes and keep ahead of the curve.

The differences between dedicated hosts, virtual machines, and Docker

So, we know what problems Docker was developed to solve. We now need to discuss what exactly Docker is and what it does.

Docker is a container management system that helps us manage Linux containers in an easy and universal fashion. This lets you create images in virtual environments on your laptop and run commands against them. The actions you perform on the containers running locally on your machine will be the same commands or operations that you run against them when they are running in your production environment.

This means that you don't have to do things differently when you go from a development environment, such as the one on your local machine, to a production environment on your server. Now, let's take a look at the differences between Docker containers and typical virtual machine environments.
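One way to see this in practice: the docker client reads the `DOCKER_HOST` environment variable to decide which engine to talk to, so the same command can target your laptop or a remote server. The sketch below uses a hypothetical host, `prod.example.com`, and an illustrative function name; the `ssh://` form requires Docker 18.09 or later, while a TLS-protected `tcp://` address works with earlier versions.

```shell
# Sketch: run any docker subcommand against a chosen engine.
#   $1  engine address, e.g. unix:///var/run/docker.sock or
#       ssh://user@prod.example.com (hypothetical host)
#   $@  the usual docker arguments, unchanged
docker_on() {
    engine="$1"
    shift
    DOCKER_HOST="$engine" docker "$@"
}
```

With this, `docker_on unix:///var/run/docker.sock container ls` and `docker_on ssh://user@prod.example.com container ls` run the identical `container ls` against the local and the remote engine.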

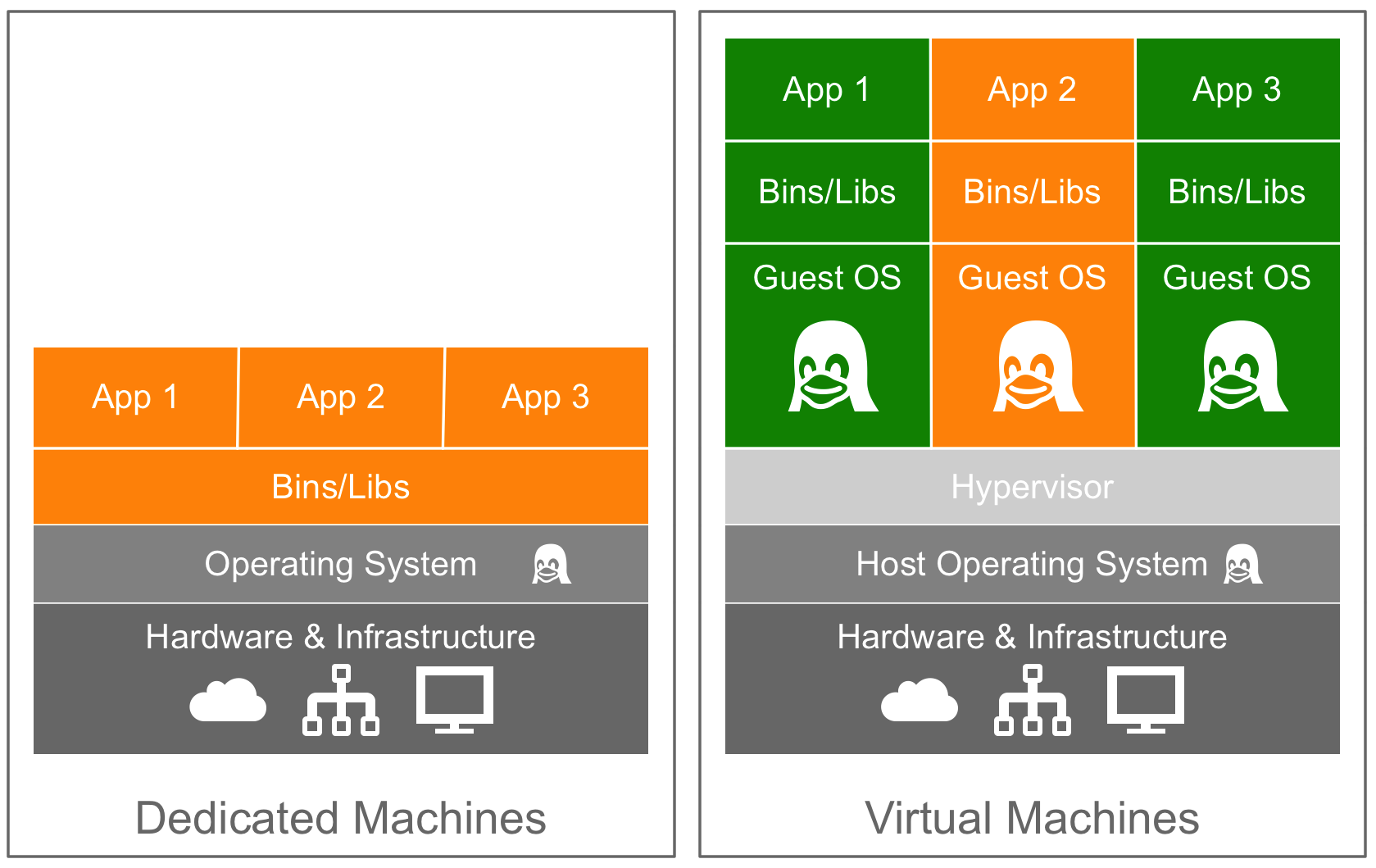

The following diagram demonstrates the difference between a dedicated, bare-metal server and a server running virtual machines:

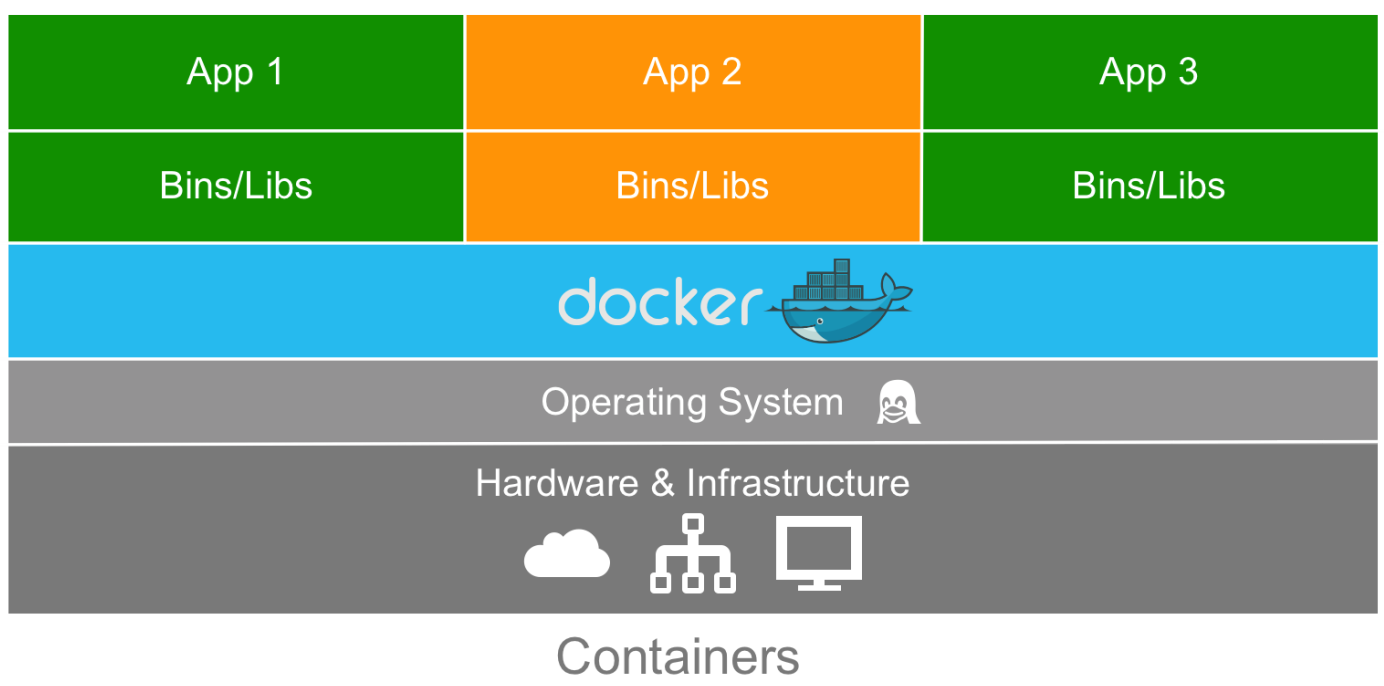

As you can see, on a dedicated machine we have three applications, all sharing the same orange software stack. Running virtual machines allows us to run three applications on two completely different software stacks. The following diagram shows the same orange and green applications running in containers using Docker:

This diagram gives us a lot of insight into the key benefit of Docker: there is no need for a complete operating system every time we need to bring up a new container, which cuts down on the overall size of containers. Since almost all versions of Linux use the same standard kernel models, Docker relies on the host operating system's Linux kernel, whichever distribution the host was built upon, such as Red Hat, CentOS, or Ubuntu.

For this reason, you can have almost any Linux operating system as your host operating system and be able to layer other Linux-based operating systems on top of the host. More precisely, your applications are led to believe that a full operating system is installed, but in reality, we only install the binaries, such as a package manager and, for example, Apache/PHP, along with the libraries required to provide just enough of an operating system for your applications to run.

For example, in the earlier diagram, we could have Red Hat running for the orange application, and Debian running for the green application, but there would never be a need to actually install Red Hat or Debian on the host. Thus, another benefit of Docker is the size of images when they are created. They are built without the largest piece: the kernel or the operating system. This makes them incredibly small, compact, and easy to ship.
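On a Linux host, you can check the shared-kernel claim with a sketch like the one below, which compares the host's kernel release with the one reported inside a container. The `alpine` image and the function name are just convenient examples. On Docker for Mac or Windows the two values will differ, because containers there share the kernel of the Linux virtual machine Docker runs in the background rather than the desktop's own kernel.

```shell
# Sketch: compare the host kernel with the kernel a container sees.
# On a Linux host both come from the same running kernel.
compare_kernels() {
    host_kernel=$(uname -r)
    # --rm removes the container once uname has printed its result.
    container_kernel=$(docker container run --rm alpine uname -r)
    if [ "$host_kernel" = "$container_kernel" ]; then
        echo "shared kernel: $host_kernel"
    else
        echo "different kernels: host $host_kernel, container $container_kernel"
    fi
}
```

Running `docker container run --rm alpine cat /etc/os-release` on the same host reports Alpine Linux whatever the host distribution is, which is the other half of the picture: shared kernel, separate userland.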

Docker installation

Installers are one of the first pieces you need to get up and running with Docker on both your local machine and your server environments. Let's first take a look at which environments you can install Docker in:

- Linux (various Linux flavors)

- macOS

- Windows 10 Professional

In addition, you can run Docker on public clouds, such as Amazon Web Services, Microsoft Azure, and DigitalOcean, to name a few. With each of the types of installers listed previously, Docker actually operates in a different way on the operating system. For example, Docker runs natively on Linux, so if you are using Linux, how Docker runs on your system is pretty straightforward. However, if you are using macOS or Windows 10, it operates a little differently, since it relies on a Linux kernel, which neither of those operating systems provides.

Let's look at quickly installing Docker on a Linux desktop running Ubuntu 18.04, and then on macOS and Windows 10.

Installing Docker on Linux (Ubuntu 18.04)

As already mentioned, this is the most straightforward installation out of the three systems we will be looking at. To install Docker, simply run the following command from a Terminal session:

$ curl -sSL https://get.docker.com/ | sh

$ sudo systemctl start docker

You will also be asked to add your current user to the docker group. To do this, run the following command, replacing username with your own username (group changes only apply to new login sessions, so log out and back in afterwards):

$ sudo usermod -aG docker username

These commands will download, install, and configure the latest version of Docker from Docker themselves. At the time of writing, the version of Docker installed by the official install script is 18.06.
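To confirm that the `usermod` change was recorded, you can check group membership with `id`. The helper below is an illustrative sketch. `id -nG username` reads the group database, so it reflects the change straight away, but your current shell only picks up the new group after you log out and back in.

```shell
# Sketch: succeed if the given user belongs to the given group.
#   $1  user name (e.g. the one passed to usermod)
#   $2  group name (docker in the case above)
user_in_group() {
    # id -nG prints the groups separated by spaces; put one per line so
    # grep -x only accepts an exact group-name match.
    id -nG "$1" | tr ' ' '\n' | grep -qx "$2"
}
```

For example: `user_in_group "$(id -un)" docker && echo "ready to run docker without sudo"`.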

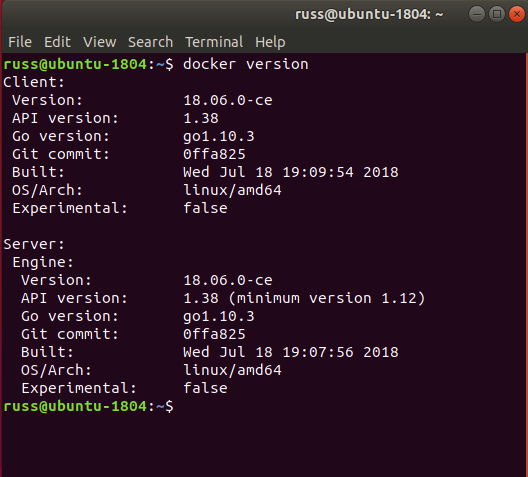

Running the following command should confirm that Docker is installed and running:

$ docker version

You should see something similar to the following output:

There are two supporting tools that we are going to use in future chapters, which are installed as part of the Docker for Mac and Docker for Windows installers but not by the Linux install script.

To ensure that we are ready to use these tools in later chapters, we should install them now. The first tool is Docker Machine. To install this, we first need to get the latest version number. You can find this by visiting the releases section of the project's GitHub page at https://github.com/docker/machine/releases/. At the time of writing, the version was 0.15.0—update the version number in the commands in the following code block with whatever the latest version is when you install it:

$ MACHINEVERSION=0.15.0

$ curl -L https://github.com/docker/machine/releases/download/v$MACHINEVERSION/docker-machine-$(uname -s)-$(uname -m) >/tmp/docker-machine

$ chmod +x /tmp/docker-machine

$ sudo mv /tmp/docker-machine /usr/local/bin/docker-machine
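If you would rather not type these four steps each time a new release appears, they can be wrapped in a function such as the sketch below; the function names are illustrative. The one behavioral change from the commands above is `curl -f`, which makes the download fail on an HTTP error (for example, a mistyped version number) instead of saving the error page as the binary, and the `&&` chain stops at the first failing step.

```shell
# Sketch: build the Docker Machine download URL for this platform.
#   $1  release version without the leading v, e.g. 0.15.0
machine_url() {
    echo "https://github.com/docker/machine/releases/download/v$1/docker-machine-$(uname -s)-$(uname -m)"
}

# Sketch: download, mark executable and install a given Docker Machine version.
install_docker_machine() {
    curl -fL "$(machine_url "$1")" -o /tmp/docker-machine &&
        chmod +x /tmp/docker-machine &&
        sudo mv /tmp/docker-machine /usr/local/bin/docker-machine
}
```

Usage: `install_docker_machine 0.15.0`.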

To download and install the next and final tool, Docker Compose, run the following commands, again checking that you are running the latest version by visiting the releases page at https://github.com/docker/compose/releases/:

$ COMPOSEVERSION=1.22.0

$ curl -L https://github.com/docker/compose/releases/download/$COMPOSEVERSION/docker-compose-$(uname -s)-$(uname -m) >/tmp/docker-compose

$ chmod +x /tmp/docker-compose

$ sudo mv /tmp/docker-compose /usr/local/bin/docker-compose

Once they are installed, you should be able to run the following two commands to confirm that the software is installed correctly:

$ docker-machine version

$ docker-compose version
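On a fresh machine it can be handy to check all three tools at once. The following sketch, with a hypothetical function name, reports which of the named commands are on your `PATH` and returns a non-zero status if any are missing.

```shell
# Sketch: report which of the given commands can be found on the PATH.
# Returns 1 if at least one of them is missing, 0 otherwise.
check_docker_tools() {
    missing=0
    for tool in "$@"; do
        if command -v "$tool" >/dev/null 2>&1; then
            echo "found: $tool"
        else
            echo "missing: $tool"
            missing=1
        fi
    done
    return "$missing"
}
```

Usage: `check_docker_tools docker docker-machine docker-compose`.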

Installing Docker on macOS

Unlike the command-line Linux installation, Docker for Mac has a graphical installer.

You can download the installer from the Docker store, at https://store.docker.com/editions/community/docker-ce-desktop-mac. Just click on the Get Docker link. Once it's downloaded, you should have a DMG file. Double-clicking on it will mount the image, and opening the image mounted on your desktop should present you with something like this:

Once you have dragged the Docker icon to your Applications folder, double-click on it and you will be asked whether you want to open the application you have downloaded. Clicking Yes will open the Docker installer, showing the following:

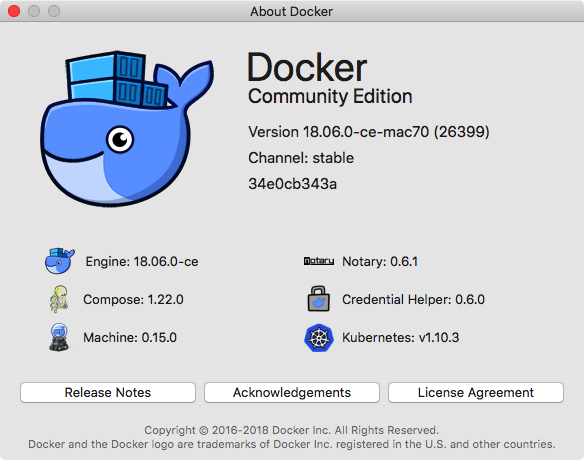

Click on Next and follow the onscreen instructions. Once it is installed and started, you should see a Docker icon in the menu bar at the top right of your screen. Clicking on the icon and selecting About Docker should show you something similar to the following:

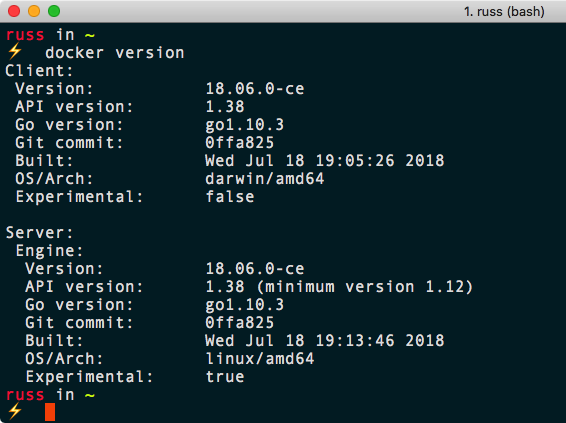

You can also open a Terminal window. Run the following command, just as we did in the Linux installation:

$ docker version

You should see something similar to the following Terminal output:

You can also run the following commands to check the versions of Docker Compose and Docker Machine that were installed alongside Docker Engine:

$ docker-compose version

$ docker-machine version

Installing Docker on Windows 10 Professional

Like Docker for Mac, Docker for Windows uses a graphical installer.

Docker for Windows requires Windows 10 Professional because it relies on Hyper-V. Hyper-V is Windows' native hypervisor and allows you to run x86-64 guests on your Windows machine, be it Windows 10 Professional or Windows Server. It even forms part of the Xbox One operating system.

You can download the Docker for Windows installer from the Docker store at https://store.docker.com/editions/community/docker-ce-desktop-windows/. Just click on the Get Docker button to download the installer. Once it's downloaded, run the MSI package and you will be greeted with the following:

Click on Yes, and then follow the onscreen prompts, which will go through not only installing Docker, but also enabling Hyper-V, if you do not already have it enabled.

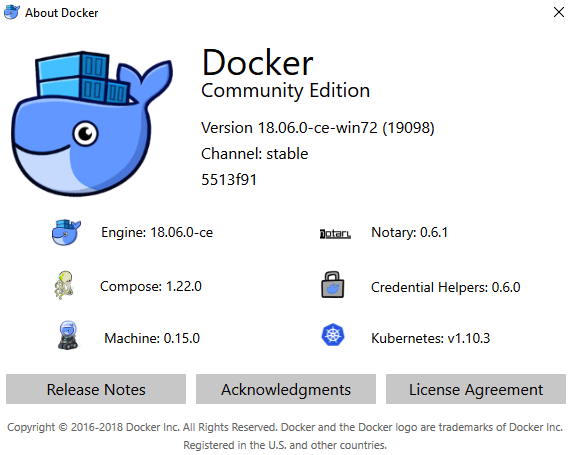

Once it's installed, you should see a Docker icon in the icon tray in the bottom right of your screen. Clicking on it and selecting About Docker from the menu will show the following:

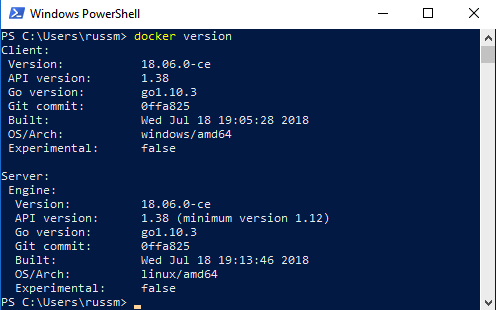

Open a PowerShell window and type the following command:

$ docker version

This should also show you similar output to the Mac and Linux versions:

Again, you can also run the following commands to check the versions of Docker Compose and Docker Machine that were installed alongside Docker Engine:

$ docker-compose version

$ docker-machine version

Again, you should see a similar output to the macOS and Linux versions. As you may have started to gather, once the packages are installed, their usage is going to be pretty similar. This will be covered in greater detail later in this chapter.

Older operating systems

If you are not running a sufficiently new operating system on Mac or Windows, then you will need to use Docker Toolbox. Consider the output printed from running the following command:

$ docker version

On all three of the installations we have performed so far, it shows two different versions, a client and server. Predictably, the Linux version shows that the architecture for the client and server are both Linux; however, you may notice that the Mac version shows the client is running on Darwin, which is Apple's Unix-like kernel, and the Windows version shows Windows. Yet both of the servers show the architecture as being Linux, so what gives?

That is because both the Mac and Windows versions of Docker download and run a virtual machine in the background, and this virtual machine runs a small, lightweight operating system based on Alpine Linux. The virtual machine runs using Docker's own libraries, which connect to the built-in hypervisor for your chosen environment.

For macOS, this is the built-in Hypervisor.framework, and for Windows, Hyper-V.
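You can see the client/server split directly by asking `docker version` for just those fields. The `--format` flag takes a Go template, and `.Client.Os`, `.Client.Arch`, `.Server.Os`, and `.Server.Arch` are fields of the version information; the function name is illustrative. On Docker for Mac you would expect the client to report `darwin` and the server `linux`.

```shell
# Sketch: print the operating system and architecture of the docker client
# and of the engine (server) it is connected to.
show_docker_platforms() {
    docker version --format 'client: {{.Client.Os}}/{{.Client.Arch}}, server: {{.Server.Os}}/{{.Server.Arch}}'
}
```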

To ensure that no one misses out on the Docker experience, a version of Docker that does not use these built-in hypervisors is available for older versions of macOS and unsupported Windows versions. These versions utilize VirtualBox as the hypervisor to run the Linux server for your local client to connect to.

For more information on Docker Toolbox, see the project's website at https://www.docker.com/products/docker-toolbox/, where you can also download the macOS and Windows installers.

The Docker command-line client

Now that we have Docker installed, let's look at some Docker commands that you should be familiar with already. We will start with some common commands and then take a peek at the commands that are used for the Docker images. We will then take a dive into the commands that are used for the containers.

The first command we will be taking a look at is one of the most useful commands, not only in Docker, but in any command-line utility you use—the help command. It is run simply like this:

$ docker help

This command will give you a full list of all of the Docker commands at your disposal, along with a brief description of what each command does. For further help with a particular command, you can run the following:

$ docker <COMMAND> --help

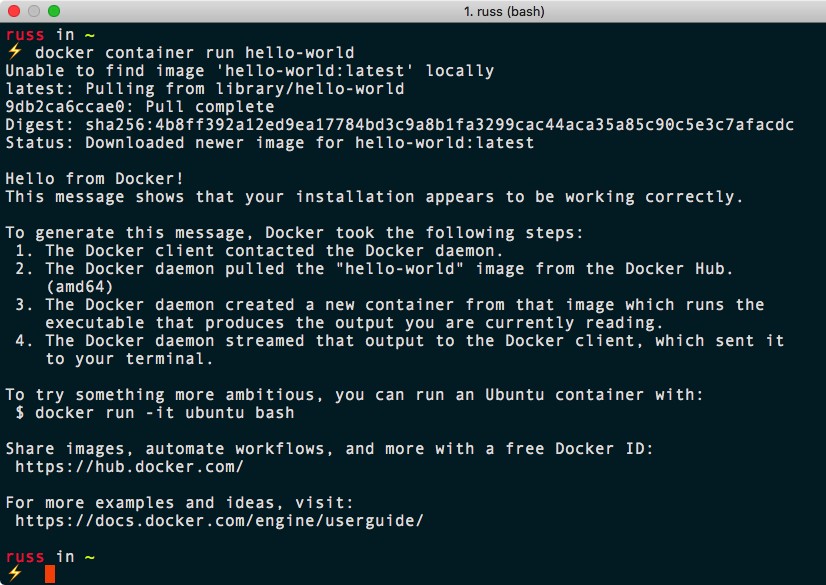

Next, let's run the hello-world container. To do this, simply run the following command:

$ docker container run hello-world

It doesn't matter which host you are running Docker on; the same thing will happen on Linux, macOS, and Windows. Docker will download the hello-world container image and run it, and once the program inside has finished, the container will stop.
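Stopped is not the same as removed: the exited hello-world container stays on your host until you delete it. The sketch below, with illustrative function names, lists such leftovers using `docker container ls -a` with the `ancestor` filter, and shows the `--rm` flag, which asks Docker to remove the container automatically when it exits.

```shell
# Sketch: list every container (running or exited) created from hello-world.
list_hello_world_containers() {
    docker container ls -a --filter ancestor=hello-world
}

# Sketch: run hello-world again without leaving a stopped container behind.
run_hello_world_once() {
    docker container run --rm hello-world
}
```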

Your Terminal session should look like the following:

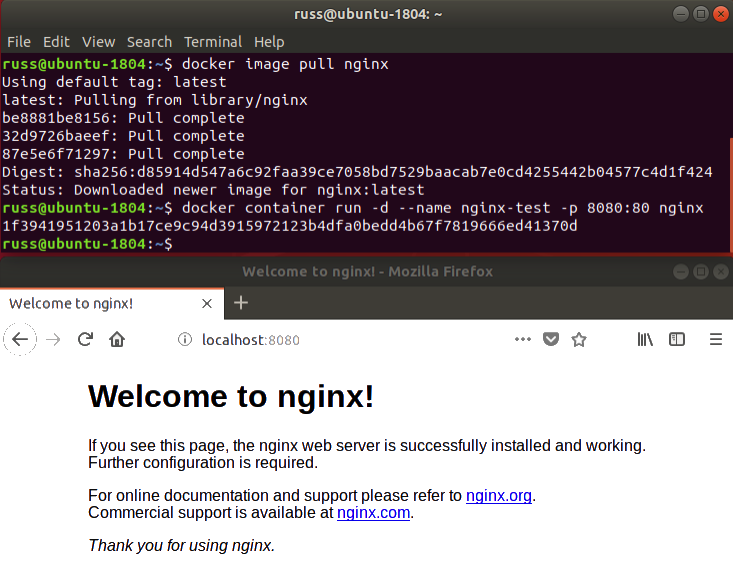

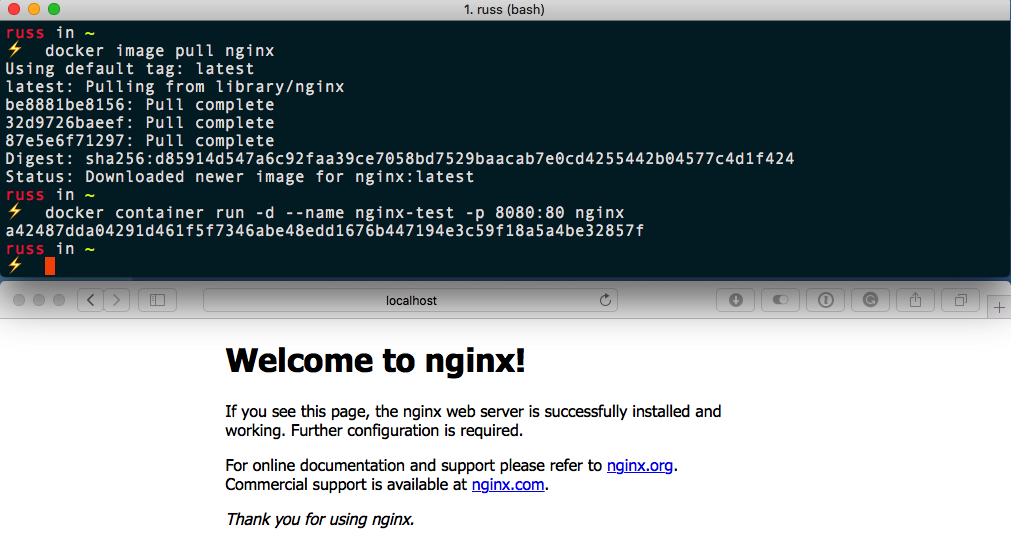

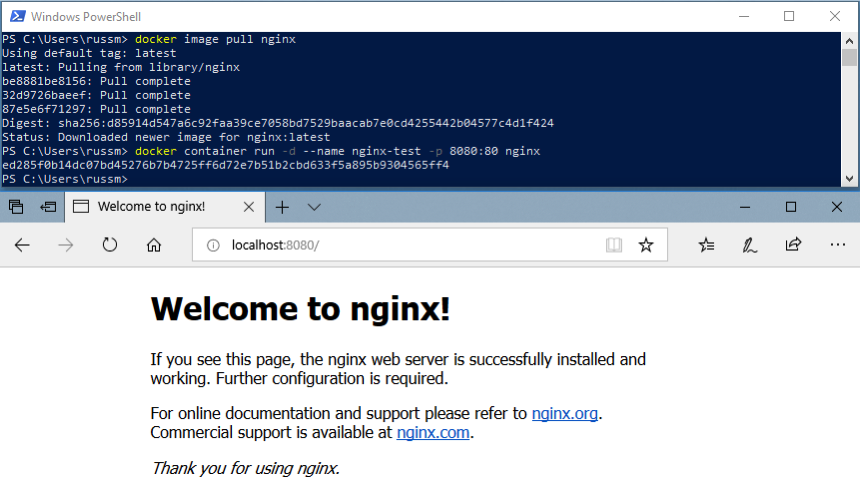

Let's try something a little more adventurous: let's download and run an nginx container by running the following two commands:

$ docker image pull nginx

$ docker container run -d --name nginx-test -p 8080:80 nginx

The first of the two commands downloads the nginx container image, and the second command launches a container in the background, called nginx-test, using the nginx image we pulled. It also maps port 8080 on our host machine to port 80 on the container, making it accessible to our local browser at http://localhost:8080/.
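If you prefer to check from the command line rather than a browser, a sketch such as the following (function name illustrative) asks Docker which host address is mapped to the container's port 80 and then fetches the page with `curl`, looking for the "Welcome to nginx" text of nginx's default page.

```shell
# Sketch: confirm that nginx-test is reachable on the mapped host port.
# docker container port prints the host address bound to container port 80
# (for example 0.0.0.0:8080); curl -f fails on HTTP errors, and -sS hides
# the progress bar while still reporting errors.
check_nginx_test() {
    docker container port nginx-test 80 &&
        curl -fsS http://localhost:8080/ | grep -q "Welcome to nginx"
}
```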

As you can see from the following screenshots, the command and results are exactly the same on all three OS types. Here we have Linux:

This is the result on macOS:

And this is how it looks on Windows:

In the following three chapters, we will look at using the Docker command-line client in more detail. For now, let's stop and remove our nginx-test container by running the following:

$ docker container stop nginx-test

$ docker container rm nginx-test
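When you are experimenting, the two steps can be collapsed: `docker container rm -f` stops and removes a running container in one go. The sketch below (function name illustrative) first checks with `docker container inspect` that the container exists, so it can be run safely even after the container has already been removed.

```shell
# Sketch: remove nginx-test if it exists, whether running or stopped.
remove_nginx_test() {
    if docker container inspect nginx-test >/dev/null 2>&1; then
        docker container rm -f nginx-test
    else
        echo "nginx-test not found, nothing to remove"
    fi
}
```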

As you can see, the experience of running a simple nginx container on all three of the hosts on which we have installed Docker is exactly the same. As I am sure you can imagine, trying to achieve this without something like Docker across all three platforms is a challenge, and also a very different experience on each platform. Traditionally, this has been one of the reasons for the difference in local development environments.

Docker and the container ecosystem

If you have been following the rise of Docker and containers, you will have noticed that, over the period of the last few years, the messaging on the Docker website has been slowly changing, from headlines about what containers are to more of a focus on the services provided by Docker as a company.

One of the core drivers for this is that everything has traditionally been lumped together as "Docker", which can get confusing. Now that people do not need as much education on what a container is or the problems they can solve with Docker, the company needed to start differentiating itself from other companies that sprang up to support all sorts of container technologies.

So, let's try and unpick everything that is Docker, which involves the following:

- Open source projects: There are several open source projects started by Docker, which are now maintained by a large community of developers.

- Docker CE and Docker EE: This is the core collection of free-to-use and commercially supported Docker tools built on top of the open source components.

- Docker, Inc.: This is the company founded to support and develop the core Docker tools.

We will also be looking at some third-party services in later chapters. In the meantime, let's go into more detail on each of these, starting with the open source projects.

Open source projects

Docker, Inc. has spent the last two years open sourcing and donating a lot of its core projects to various open source foundations and communities. These projects include the following:

- Moby Project is the upstream project upon which the Docker Engine is based. It provides all of the components needed to assemble a fully functional container system.

- Runc is a command-line interface for creating and configuring containers, and has been built to the OCI specification.

- Containerd is an easily embeddable container runtime. It is also a core component of the Moby Project.

- LibNetwork is a Go library that provides networking for containers.

- Notary is a client and server that aims to provide a trust system for signed container images.

- HyperKit is a toolkit that allows you to embed hypervisor capabilities into your own applications; at present, it only supports macOS and Hypervisor.framework.

- VPNKit provides VPN functionality to HyperKit.

- DataKit allows you to orchestrate application data using a Git-like workflow.

- SwarmKit is a toolkit that allows you to build distributed systems using the same raft consensus algorithm as Docker Swarm.

- LinuxKit is a framework that allows you to build and compile a small portable Linux operating system for running containers.

- InfraKit is a collection of tools that you can use to define infrastructure to run your LinuxKit generated distributions on.

You will probably never use these individual components on their own; however, each of the projects mentioned is a component of the tools maintained by Docker, Inc. We will go a little more into these projects in our final chapter.

Docker CE and Docker EE

There are a lot of tools supplied and supported by Docker, Inc. Some we have already mentioned, and others we will cover in later chapters. Before we finish this, our first chapter, we should get an idea of the tools we are going to be using. The most important of them is the core Docker Engine.

This is the core of Docker, and all of the other tools that we will be covering use it. We have already been using it, having installed it in the Docker installation section and run commands against it in The Docker command-line client section of this chapter. There are currently two versions of Docker Engine: Docker Enterprise Edition (EE) and Docker Community Edition (CE). We will be using Docker CE throughout this book.

From September 2018, stable versions of Docker CE will be released biannually, and each release will have a seven-month maintenance cycle. This means that you have plenty of time to review and plan any upgrades. At the time of writing, the current timetable for Docker CE releases is as follows:

- Docker 18.06 CE: This is the last of the quarterly Docker CE releases, released July 18th 2018.

- Docker 18.09 CE: This release, due late September/early October 2018, is the first release of the biannual release cycle of Docker CE.

- Docker 19.03 CE: The first supported Docker CE of 2019 is scheduled to be released March/April 2019.

- Docker 19.09 CE: The second supported release of 2019 is scheduled to be released September/October 2019.

As well as the stable version of Docker CE, Docker will be providing nightly builds of the Docker Engine via a nightly repository (formerly Docker CE Edge), and also monthly builds of Docker for Mac and Docker for Windows via the Edge channel.

Docker also provides the following tools and services:

- Docker Compose: A tool that allows you to define and share multi-container definitions; it is detailed in Chapter 5, Docker Compose.

- Docker Machine: A tool to launch Docker hosts on multiple platforms; we will cover this in Chapter 7, Docker Machine.

- Docker Hub: A repository for your Docker images, covered in the next three chapters.

- Docker Store: A storefront for official Docker images and plugins as well as licensed products. Again, we will cover this in the next three chapters.

- Docker Swarm: A multi-host-aware orchestration tool, covered in detail in Chapter 8, Docker Swarm.

- Docker for Mac: We have covered Docker for Mac in this chapter.

- Docker for Windows: We have covered Docker for Windows in this chapter.

- Docker for Amazon Web Services: A best-practice Docker Swarm installation that targets AWS, covered in Chapter 10, Running Docker in Public Clouds.

- Docker for Azure: A best-practice Docker Swarm installation that targets Azure, covered in Chapter 10, Running Docker in Public Clouds.

Docker, Inc.

Docker, Inc. is the company formed to develop Docker CE and Docker EE. It also provides SLA-based support services for Docker EE. Finally, it offers consultative services to companies that wish to take their existing applications and containerize them as part of Docker's Modernize Traditional Apps (MTA) program.

Summary

In this chapter, we covered some basic information that you should already know (or now know) for the chapters ahead. We went over the basics of what Docker is, and how it fares compared to other host types. We went over the installers, how they operate on different operating systems, and how to control them through the command line. Be sure to remember to look at the requirements for the installers to ensure you use the correct one for your operating system.

Then, we took a small dive into using Docker and issued a few basic commands to get you started. We will be looking at all of the management commands in future chapters, to get a more in-depth understanding of what they are, as well as how and when to use them. Finally, we discussed the Docker ecosystem and the responsibilities of each of the different tools.

In the next chapters, we will be taking a look at how to build base containers, and we will also look in depth at Dockerfiles and places to store your images, as well as using environment variables and Docker volumes.

Questions

- Where can you download Docker for Mac and Docker for Windows from?

- What command did we use to download the NGINX image?

- Which open source project is upstream for the core Docker Engine?

- How many months are in the support lifecycle for a stable Docker CE release?

- Which command would you run to find out more information on the Docker container subset of commands?

Further reading

In this chapter we have mentioned the following hypervisors:

- macOS Hypervisor framework: https://developer.apple.com/reference/hypervisor/

- Hyper-V: https://www.microsoft.com/en-gb/cloud-platform/server-virtualization

We referenced the following blog posts from Docker:

- Docker CLI restructure blog post: https://blog.docker.com/2017/01/whats-new-in-docker-1-13/

- Docker Extended Support Announcement: https://blog.docker.com/2018/07/extending-support-cycle-docker-community-edition/

Next up, we discussed the following open source projects:

- Moby Project: https://mobyproject.org/

- Runc: https://github.com/opencontainers/runc

- Containerd: https://containerd.io/

- LibNetwork: https://github.com/docker/libnetwork

- Notary: https://github.com/theupdateframework/notary

- HyperKit: https://github.com/moby/hyperkit

- VPNKit: https://github.com/moby/vpnkit

- DataKit: https://github.com/moby/datakit

- SwarmKit: https://github.com/docker/swarmkit

- LinuxKit: https://github.com/linuxkit/linuxkit

- InfraKit: https://github.com/docker/infrakit

- The OCI specification: https://github.com/opencontainers/runtime-spec/

Finally, the meme mentioned at the start of the chapter can be found here:

- Worked fine in Dev, Ops problem now: http://www.developermemes.com/2013/12/13/worked-fine-dev-ops-problem-now/