They say the first steps are the hardest, and beginning a new game is no exception. Therefore, we should use as much help as possible to make those first steps easier, and get into the fun parts of game development. To support the new WinRT platform, we need some new templates, and there are plenty to be had in Visual Studio 2012. Most important to us is the Direct3D App template, which provides the base code for a C++ Windows Store application, without any of the XAML that the other templates include.

The template that we've chosen will provide us with the code to create a WinRT window, as well as the code for the Direct3D components that will allow us to create the game world. This chapter will focus on explaining the code included so that you understand how it all works, as well as the changes needed to prepare the project for our own code.

In this chapter we will cover the following topics:

Creating the application window

Initializing Direct3D

Direct3D devices and contexts

Render targets and depth buffers

The graphics pipeline

What a game loop looks like

Clearing and presenting the screen

Let's begin by starting up Visual Studio 2012 (the Express edition is fine) and creating a new Direct3D App project. You can find this template in the New Project window by navigating to Templates | Visual C++ | Windows Store. Once Visual Studio has finished creating the project, we need to delete the following files:

CubeRenderer.h

CubeRenderer.cpp

SimpleVertexShader.hlsl

SimplePixelShader.hlsl

Once these files have been deleted, we need to remove any references to them. Now create a new header file (Game.h) and a new code file (Game.cpp), and add the following class declaration and stub functions to Game.h and Game.cpp, respectively. Once you've done that, search for any references to CubeRenderer and replace them with Game. To compile the project, you'll also need to ensure that any #include statements that previously pointed to CubeRenderer.h now point to Game.h.

Note

Remember that Microsoft introduced the C++/CX (Component Extensions) to help you write C++ code that works with WinRT. Make sure that you're creating the right type of class.

    #pragma once

    #include "Direct3DBase.h"

    ref class Game sealed : public Direct3DBase
    {
    public:
        Game();
        virtual void Render() override;
        void Update(float totalTime, float deltaTime);
    };


Here we have the standard constructor, as well as an overridden Render() function from the Direct3DBase base class. Alongside these, we have a new function named Update(), which will be explained in more detail when we cover the game loop later in this chapter.

We add the following stub methods in Game.cpp to allow this to compile:

    #include "pch.h"
    #include "Game.h"

    Game::Game(void)
    {
    }

    void Game::Render()
    {
    }

    void Game::Update(float totalTime, float deltaTime)
    {
    }

Once these are in place you can compile to ensure everything works fine, before we move on to the rest of the basic game structure.

Windows 8 applications use a different window system compared to the Win32 days. When the application starts, instead of describing a window that needs to be created, you provide an implementation of IFrameworkView that allows your application to respond to events such as resume, suspend, and resize.

When you implement this interface, you also have control over the system used to render to the screen, just as if you had created a Win32 window. In this book we will only use DirectX to create the visuals for the screen; however, Microsoft provides another option that can be used in conjunction with DirectX (or on its own). XAML is the user interface system that provides everything from controls to media to animations. If you want to avoid creating a user interface yourself, this would be your choice. However, because XAML uses DirectX for rendering, some extra steps need to be taken to ensure that the two work together properly. This is beyond the scope of the book, but I strongly recommend you look into taking those extra steps if you want to take advantage of an incredibly powerful user interface system.

The most important methods for us right now are Initialize, SetWindow, and Run, which can all be found in the Chapter1 class (Chapter1.h) if you're following along with the sample code. These three methods are where we will hook up the code for the game. As of now, the template has already included some code referring to the CubeRenderer. To compile the code we need to replace any references to CubeRenderer inside our Chapter1 class with a reference to Game.

All games start up, initialize, and run in a loop until it is time to close down and clean up. The big difference that games have over other applications is they will often load, reinitialize, and destroy content multiple times over the life of the process, as the player progresses through different levels or stages of the game.

Another difference lies in the interactive element of games. To create an immersive and responsive experience, games need to iterate through all of the subsystems, processing input and logic before presenting video and audio to the player at a high rate. Each video iteration presented to the player is called a frame. The performance of a game is commonly measured by the number of frames that appear on the monitor each second, which leads to the term Frames Per Second or FPS (not to be confused with First Person Shooter). Modern games need to process an incredible amount of data and repeatedly draw highly detailed objects 30 to 60 times per second, which means that they need to do all of the work in a short span of time. For a game that runs at 60 FPS, all of the processing and rendering for a frame must complete in under 1/60th of a second (roughly 16.7 milliseconds). Some games spread the processing across multiple frames, or make use of multithreading to allow intensive calculations, while still maintaining the desired frame rate. The key thing to ensure here is that the latency from user input to the result appearing on screen is minimized, as this can impact the player experience, depending on the type of game being developed.
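To make the frame-rate arithmetic concrete, here is a minimal sketch that converts a target frame rate into a per-frame time budget. The function names FrameBudgetMs and FitsBudget are hypothetical helpers invented for this illustration; they are not part of the project template.

```cpp
#include <cassert>

// Time available to update and render one frame, in milliseconds,
// for a given target frame rate (hypothetical helper).
inline double FrameBudgetMs(double framesPerSecond)
{
    assert(framesPerSecond > 0.0);
    return 1000.0 / framesPerSecond;
}

// True when a measured frame time fits inside the budget for that rate.
inline bool FitsBudget(double frameTimeMs, double framesPerSecond)
{
    return frameTimeMs <= FrameBudgetMs(framesPerSecond);
}
```

With these helpers, a frame that takes 20 milliseconds fits a 30 FPS budget but misses a 60 FPS one.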

The loop that operates at the frame rate of the game is called the game loop. In the days of Win32 games, there was a lot of discussion about the best way to structure the game loop so that the system would have enough time to process the operating system messages. Now that we have shifted from polling operating system messages to an event-based system, we no longer need to worry, and can instead just create a simple while loop to handle everything.

A modern game loop would look like the following:

    while the game is running
    {
        Update the timer
        Dispatch Events
        Update the Game State
        Draw the Frame
        Present the Frame to the Screen
    }

Although we will later look at this loop in detail, there are two items that you might not expect. The first is event dispatch. WinRT provides a method dispatch system that allows code to run on specific threads. This is important because the operating system can now generate events on different OS threads, yet we will only receive them on the main game thread (the one that runs the game loop). If you're using XAML, this becomes even more important as certain actions will crash if they are not run on the UI thread. By providing the dispatcher, we now have an easy way to fire off non-thread-safe actions and ensure that they run on the thread we want them to.

The final item you may not have seen before involves presenting the frame to the screen. This will be covered in detail later in the chapter, but briefly, this is where we signal that we are done with drawing and the graphics API can take the final rendered frame and display it on the monitor.
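The loop structure can be sketched in portable C++ as follows. This is only an illustration of the ordering of the stages: LoopTrace and RunGameLoop are hypothetical names, the stage methods just record that they ran, and no WinRT or Direct3D calls are made.

```cpp
#include <cassert>
#include <string>
#include <vector>

// Hypothetical stand-in for a game; it records the order of the stages.
struct LoopTrace
{
    std::vector<std::string> stages;
    int framesLeft = 0;   // the loop stops when this reaches zero

    bool IsRunning() const { return framesLeft > 0; }
    void UpdateTimer()     { stages.push_back("timer"); }
    void DispatchEvents()  { stages.push_back("events"); }
    void Update()          { stages.push_back("update"); }
    void Render()          { stages.push_back("render"); }
    void Present()         { stages.push_back("present"); --framesLeft; }
};

// Runs the five stages once per frame until the game stops running.
template <typename Game>
void RunGameLoop(Game& game)
{
    while (game.IsRunning())
    {
        game.UpdateTimer();
        game.DispatchEvents();
        game.Update();
        game.Render();
        game.Present();
    }
}
```

Running the loop for two frames records ten stages, with "timer" first and "present" last in each frame.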

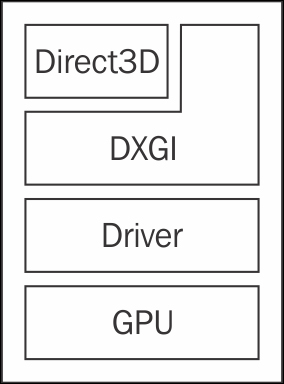

Direct3D is a rendering API that allows you to write your game without having to worry about which graphics card or driver the user may have. By separating this concern through the Component Object Model (COM) system, you can easily write once and have your code run on the hardware from NVIDIA or AMD.

Now let's take a look at Direct3DBase.cpp, which implements the class that we inherited from earlier. This is where DirectX is set up and prepared for use. There are a few objects that we need to create here to ensure we have everything required to start drawing.

The first is the graphics device, represented by an ID3D11Device object. The device represents the physical graphics card and the link to a single adapter. It is primarily used to create resources such as textures and shaders, and owns the device context and swap chain.

Direct3D 11.1 also supports the use of feature levels to support older graphics cards that may only support Direct3D 9.0 or Direct3D 10.0 features. When you create the device, you should specify a list of feature levels that your game will support, and DirectX will handle all of the checks to ensure you get the highest feature level you want that the graphics card supports.

You can find the code that creates the graphics device in the Direct3DBase::CreateDeviceResources() method. Here we allow all possible feature levels, which will allow our game to run on older and weaker devices. The key thing to remember here is that if you want to use any graphics features that were introduced after Direct3D 9.0, you will need to either remove the older feature levels from the list or manually check which level you have received and avoid using that feature.
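The selection rule described above can be modelled without any Direct3D headers. The sketch below is an assumption-laden simulation, not the real API: the FeatureLevel enumeration and the PickFeatureLevel and Supports functions are hypothetical stand-ins that only mimic the idea of "take the first requested level that the hardware can provide".

```cpp
#include <cassert>
#include <vector>

// Hypothetical stand-in for the feature level enumeration; larger is newer.
enum class FeatureLevel { Level9_1, Level9_3, Level10_0, Level11_0, Level11_1 };

// Writes the first requested level (listed highest first) that the hardware
// supports and returns true; returns false when none is supported.
inline bool PickFeatureLevel(const std::vector<FeatureLevel>& requested,
                             FeatureLevel hardwareMax,
                             FeatureLevel& chosen)
{
    for (FeatureLevel level : requested)
    {
        if (level <= hardwareMax)
        {
            chosen = level;
            return true;
        }
    }
    return false;
}

// A feature introduced at a given level is usable only at or above it.
inline bool Supports(FeatureLevel received, FeatureLevel required)
{
    return received >= required;
}
```

A game that lists every level would receive Level10_0 on Direct3D 10.0 class hardware, and would then need a check such as Supports() before using anything introduced later.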

Once we have a list of feature levels, we just need a simple call to the D3D11CreateDevice() method, which will provide us with the device and immediate device context.

Note

nullptr is a new C++11 keyword that gives us a strongly-defined null pointer. Previously NULL was just an alias for zero, which prevented the compiler from supporting us with extra error-checking.

    D3D11CreateDevice(
        nullptr,
        D3D_DRIVER_TYPE_HARDWARE,
        nullptr,
        D3D11_CREATE_DEVICE_BGRA_SUPPORT,
        featureLevels,
        ARRAYSIZE(featureLevels),
        D3D11_SDK_VERSION,
        &device,
        &m_featureLevel,
        &context
        );

Most of this is pretty simple: we request a hardware device with BGRA format layout support (see the Swap chain section for more details on texture formats) and provide a list of feature levels that we can support. The magic of COM and Direct3D will provide us with an ID3D11Device and ID3D11DeviceContext that we can use for rendering.

The device context is probably the most useful item that you're going to create. This is where you will issue all draw calls and state changes to the graphics hardware. The device context works together with the graphics device to provide 99 percent of the commands you need to use Direct3D.

By default we get an immediate context along with our graphics device. One of the main benefits provided by a context system is the ability to issue commands from worker threads using deferred contexts. These deferred contexts can then be passed to the immediate context so that their commands can be issued on a single thread, allowing for multithreading with an API that is not thread-safe.

To obtain the immediate ID3D11DeviceContext, just pass the address of a context pointer to the same D3D11CreateDevice() call that we used to create the device.

Deferred contexts are generally considered an advanced technique and are outside the scope of this book; however, if you're looking to take full advantage of modern hardware, you will want to take a look at this topic to ensure that you can work with the GPU without limiting yourself to a single CPU core.

If you're trying to remember which object to use when you're rendering, remember that the device is about creating resources throughout the lifetime of the application, while the device context does the work to apply those resources and create the images that are displayed to the user.

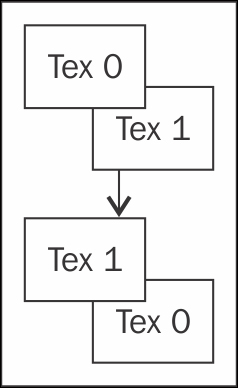

Working with Direct3D exposes you to a number of asynchronous devices, all operating at different rates independent of each other. If you drew to the same texture buffer that the monitor used to display to the screen, you would see the monitor display a half-drawn image as it refreshes while you're still drawing. This is commonly known as screen tearing.

To get around this, the concept of a swap chain was created. A swap chain is a series of textures that the monitor can iterate through, giving you time to draw the frame before the monitor needs to display it. Often, this is accomplished with just two texture buffers known as a front buffer and a back buffer. The front buffer is what the monitor will display while you draw to the back buffer. When you're finished rendering, the buffers are swapped so that the monitor can display the new frame and Direct3D can begin drawing the next frame; this is known as double buffering.

Sometimes two buffers are not enough; for example, when the monitor is still displaying the previous frame and Direct3D is ready to swap the buffers. This means that the API needs to wait for the monitor to finish displaying the previous frame before it can swap the buffers and let your game continue.

Alternatively, the API may discard the content that was just rendered, allowing the game to continue, but wasting a frame and causing the front buffer to repeat if the monitor wants to refresh while the game is drawing. This is where three buffers can come in handy, allowing the game to continue working and render ahead.

Double buffering
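The buffer rotation can be sketched by tracking only the buffer indices. SwapChainModel is a hypothetical class written for this illustration; it ignores timing and the monitor refresh entirely, and is not how DXGI is implemented.

```cpp
#include <cassert>
#include <cstddef>

// Hypothetical model of a swap chain that tracks buffer indices only.
class SwapChainModel
{
public:
    explicit SwapChainModel(std::size_t bufferCount)
        : m_count(bufferCount), m_front(0)
    {
        assert(bufferCount >= 2);   // at least a front and a back buffer
    }

    // Index of the buffer the monitor is displaying.
    std::size_t FrontBuffer() const { return m_front; }

    // Index of the buffer the game draws into next.
    std::size_t BackBuffer() const { return (m_front + 1) % m_count; }

    // Swap: the buffer that was just drawn becomes the displayed one.
    void Present() { m_front = BackBuffer(); }

private:
    std::size_t m_count;
    std::size_t m_front;
};
```

With two buffers the indices simply alternate between 0 and 1; with three, the back buffer cycles through 1, 2, 0, leaving one extra buffer queued between drawing and display.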

The swap chain is also directly tied to the resolution, and if the display area is resized, the swap chain needs to be recreated. You may think that all games are full screen now and should never need to be resized. Remember, however, that the snap functionality in Windows 8 resizes the area available to your game, requiring it to match the available space. Alongside the creation code, we need a way to respond to the window resize events so that we can resize the swap chain and elegantly handle the changes.

All of this happens inside Direct3DBase::CreateWindowSizeDependentResources. Here we will check to see if there is a resize, as well as some orientation-handling checks so that the game can handle rotation if enabled. We want to avoid the work that we don't need to do, and one of the benefits of Direct3D 11.1 is the ability to just resize the buffers in the swap chain. However, the really important code here executes if we do not already have a swap chain.

Many parts of Direct3D rely on filling in a description structure that contains the information required to create a resource, and then passing that structure to a Create() method that handles the rest of the creation. In this case, we will make use of a DXGI_SWAP_CHAIN_DESC1 structure to describe the swap chain. The following code snippet shows what our structure will look like:

    swapChainDesc.Width = static_cast<UINT>(m_renderTargetSize.Width);
    swapChainDesc.Height = static_cast<UINT>(m_renderTargetSize.Height);
    swapChainDesc.Format = DXGI_FORMAT_B8G8R8A8_UNORM;
    swapChainDesc.Stereo = false;
    swapChainDesc.SampleDesc.Count = 1;
    swapChainDesc.SampleDesc.Quality = 0;
    swapChainDesc.BufferUsage = DXGI_USAGE_RENDER_TARGET_OUTPUT;
    swapChainDesc.BufferCount = 2;
    swapChainDesc.Scaling = DXGI_SCALING_NONE;
    swapChainDesc.SwapEffect = DXGI_SWAP_EFFECT_FLIP_SEQUENTIAL;

There are a lot of new concepts here, so let's work through each option one by one.

The Width and Height properties are self-explanatory; these come directly from the CoreWindow instance so that we can render at native resolution. If you want to force a different resolution, this would be where you specify that resolution.

The Format defines the layout of the pixels in the texture. Textures are represented as an array of colors, which can be packed in many different ways. The most common way is to lay out the different color channels in a B8G8R8A8 format. This means that the pixel will have a single byte for each channel: Blue, Green, Red, and Alpha, in that order. The UNORM tells the system to store each pixel as an unsigned normalized integer. Put together, this forms DXGI_FORMAT_B8G8R8A8_UNORM.

The BGRA pixel layout

The R8G8B8A8 pixel layout is also often used; both are well supported, so you can choose either.
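To illustrate what "one byte per channel, unsigned normalized, in B, G, R, A order" means, here is a small sketch that packs a floating point color into four bytes. ToUnorm8 and PackBgra8 are hypothetical helpers; the rounding used here (round to nearest) is an assumption made for the example rather than a statement about what any particular driver does.

```cpp
#include <array>
#include <cassert>
#include <cmath>
#include <cstdint>

// Converts a value in [0, 1] to an 8-bit unsigned normalized integer,
// so 0.0 maps to 0 and 1.0 maps to 255. Out-of-range input is clamped.
inline std::uint8_t ToUnorm8(float value)
{
    if (value < 0.0f) value = 0.0f;
    if (value > 1.0f) value = 1.0f;
    return static_cast<std::uint8_t>(std::lround(value * 255.0f));
}

// Packs a color into four bytes stored in B, G, R, A memory order.
inline std::array<std::uint8_t, 4> PackBgra8(float r, float g, float b, float a)
{
    return {{ ToUnorm8(b), ToUnorm8(g), ToUnorm8(r), ToUnorm8(a) }};
}
```

Packing an opaque orange such as (r = 1.0, g = 0.5, b = 0.0, a = 1.0) places the blue byte first and the alpha byte last; an R8G8B8A8 layout would differ only in swapping the first and third bytes.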

The next flag, Stereo, tells the API if you want to take advantage of the Stereoscopic Rendering support in Direct3D 11.1. This is an advanced topic that we won't cover, so leave this as false for now.

The SampleDesc substructure describes our multisampling setting. Multisampling refers to a technique commonly known as multisample antialiasing (MSAA). Antialiasing is used to reduce the sharp, jagged edges on polygons that arise from trying to map a line to the pixels when the line crosses through the middle of a pixel. MSAA resolves this by sampling within the pixel and filtering those values to get a nice average that represents detail smaller than a pixel. With antialiasing you will see nice smooth lines, at the cost of extra rendering and filtering. For our purposes, we will specify a count of 1 and a quality of 0, which tells the API to disable MSAA.

The BufferUsage enumeration tells the API how we plan to use the swap chain, which lets it make performance optimizations. This is most commonly used when creating normal textures, and should be left alone for now.

The Scaling parameter defines how the back buffer will be scaled if the texture resolution does not match the resolution that the operating system is providing to the monitor. You only have two options here: DXGI_SCALING_STRETCH and DXGI_SCALING_NONE.

The SwapEffect describes what happens when a swap between the front buffer and the back buffer(s) occurs. We're building a Windows Store application, so we only have one option here: DXGI_SWAP_EFFECT_FLIP_SEQUENTIAL. If we were building a desktop application, we would have a larger selection, and our final choice would depend on our performance and hardware requirements.

Now you may be wondering what DXGI is, and why we have been using it during the swap chain creation. Beginning with Windows Vista and Direct3D 10.0, the DirectX Graphics Infrastructure (DXGI) exists to act as an intermediary between the Direct3D API and the graphics driver. It manages the adapters and common graphics resources, as well as the Desktop Window Manager, which handles compositing multiple Direct3D applications together to allow multiple windows to share the same screen. DXGI manages the screen, and therefore manages the swap chain as well. That is why we have an ID3D11Device and an IDXGISwapChain object.

Once we're done, we need to use this structure to create the swap chain. You may remember, the graphics device creates resources, and that includes the swap chain. The swap chain, however, is a DXGI resource and not a Direct3D resource, so we first need to extract the DXGI device from the Direct3D device before we can continue. Thankfully, the Direct3D device is layered on top of the DXGI device, so we just need to convert the ID3D11Device1 to an IDXGIDevice1 with the following piece of code:

    ComPtr<IDXGIDevice1> dxgiDevice;
    DX::ThrowIfFailed(m_d3dDevice.As(&dxgiDevice));

Then we can get the adapter that the device is linked to, and the factory that serves the adapter, with the following code snippet:

    ComPtr<IDXGIAdapter> dxgiAdapter;
    DX::ThrowIfFailed(
        dxgiDevice->GetAdapter(&dxgiAdapter));

    ComPtr<IDXGIFactory2> dxgiFactory;
    DX::ThrowIfFailed(
        dxgiAdapter->GetParent(
            __uuidof(IDXGIFactory2),
            &dxgiFactory
            ));

Using the IDXGIFactory2, we can create the IDXGISwapChain that is tied to the adapter.

Note

The 1 and 2 at the end of IDXGIDevice1 and IDXGIFactory2 differentiate between the different versions of Direct3D and DXGI that exist. Direct3D 11.1 is an add-on to Direct3D 11.0, so we need a way to define the different versions. The same goes for DXGI, which has gone through multiple versions since Vista.

    dxgiFactory->CreateSwapChainForCoreWindow(
        m_d3dDevice.Get(),
        reinterpret_cast<IUnknown*>(window),
        &swapChainDesc,
        nullptr,
        &m_swapChain
        );

When we create the swap chain, we need to use a specific method for Windows Store applications, which takes the CoreWindow instance that we received when we created the application as a parameter. This would be where you pass an HWND window handle, if you were using the old Win32 API. These handles let Direct3D connect the resource to the correct window and ensure that it is positioned properly when composited with the other windows on the screen.

Now we have a swap chain, almost ready for rendering. While we still have the DXGI device, we can also let it know that we want to enable a power-saving mode that ensures only one frame is queued up for display at a time.

dxgiDevice->SetMaximumFrameLatency(1);

This is especially important in Windows Store applications, as your game may be running on a mobile device, and your players wouldn't want to suddenly lose a lot of battery rendering frames that they do not need.

The next step is to get a reference to the back buffer in the swap chain, so that we can make use of it later on. First, we need to get the back buffer texture from the swap chain, which can be easily done with a call to the GetBuffer() method. This will give us a pointer to a texture buffer, which we can use to create a render target view, as follows:

    ComPtr<ID3D11Texture2D> backBuffer;
    m_swapChain->GetBuffer(
        0,
        __uuidof(ID3D11Texture2D),
        &backBuffer
        );

Direct3D 10 and later versions provide access to the different graphics resources using constructs called views. These let us tell the API how to use the resource, and provide a way of accessing the resources after creation.

In the following code snippet we are creating a render target view (ID3D11RenderTargetView), which, as the name implies, provides a view into a render target. If you haven't encountered the term before, a render target is a texture that you can draw into, for use later. This allows us to draw to off-screen textures, which we can then use in many different ways to create the final rendered frame.

    m_d3dDevice->CreateRenderTargetView(
        backBuffer.Get(),
        nullptr,
        &m_renderTargetView
        );

Now that we have a render target view, we can tell the graphics context to use this as the back buffer and start drawing, but while we're initializing our graphics let's create a depth buffer texture and view so that we can have some depth in our game.

A depth buffer is a special texture that is responsible for storing the depth of each pixel on the screen. This can be used by the GPU to quickly cull pixels that are hidden by other objects. Being able to avoid drawing objects that we cannot see is important, as drawing those objects still takes time, even though they do not contribute to the scene. Previously, I mentioned that we need to draw a frame in a small amount of time to achieve certain frame rates. In complex games, this can be difficult to achieve if we are drawing everything, so culling is important to ensure that we can achieve the performance we want.

The depth buffer is an optional feature that isn't automatically generated with the swap chain, so we need to create it ourselves. To do this, we need to describe the texture we want to create with a D3D11_TEXTURE2D_DESC structure. Direct3D 11.1 provides a nice helper structure in the form of a CD3D11_TEXTURE2D_DESC that handles filling in common values for us, as follows:

    CD3D11_TEXTURE2D_DESC depthStencilDesc(
        DXGI_FORMAT_D24_UNORM_S8_UINT,
        static_cast<UINT>(m_renderTargetSize.Width),
        static_cast<UINT>(m_renderTargetSize.Height),
        1,
        1,
        D3D11_BIND_DEPTH_STENCIL
        );

Here we're asking for a texture that has a pixel format of 24 bits for the depth, in an unsigned normalized integer, and 8 bits for the stencil in an unsigned integer. The Stencil part of this buffer is an advanced feature that lets you assign a value to pixels in the texture. This is most often used for creating a mask, and support is provided for only rendering to regions with a specific stencil value.

After this, we will set the width and height to match the swap chain, and fill in the Array Size and Mip Levels so that we can reach the parameter that lets us describe the usage of the texture. The Array Size refers to the number of textures to create. If you want an array of textures combined as a single resource, you can use this parameter to specify the count, but we only want one texture, so we will set this to 1.

Mip Levels are progressively smaller copies of the main texture. They allow for performance optimizations when rendering the texture at a distance, where the original resolution is overkill. For example, say you have a screen resolution of 800 by 600. If you want three Mip Levels, you will receive an 800 x 600 texture, a 400 x 300 texture, and a 200 x 150 texture. The graphics card has hardware to select and filter the appropriate level, thus reducing the amount of waste involved in rendering. Our depth buffer here will never be rendered at a distance, so we don't need to use up extra memory providing different Mip Levels; we will just set this to 1 to say that we only want the original resolution texture.
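The halving rule behind the 800 x 600 example can be written down directly. This is a minimal sketch in which MipSize and BuildMipChain are hypothetical names; it only computes the sizes and assumes each level halves both dimensions, rounding down and never going below one pixel.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

// Width and height of one mip level, in pixels.
struct MipSize
{
    unsigned width;
    unsigned height;
};

// Builds `levels` sizes, halving each dimension per level (never below 1).
inline std::vector<MipSize> BuildMipChain(unsigned width, unsigned height,
                                          unsigned levels)
{
    std::vector<MipSize> chain;
    for (unsigned i = 0; i < levels; ++i)
    {
        chain.push_back({ width, height });
        width = std::max(1u, width / 2);
        height = std::max(1u, height / 2);
    }
    return chain;
}
```

Asking for three levels of an 800 x 600 texture yields the three sizes from the text, while asking for one level returns only the original resolution, which is the case we use for the depth buffer.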

Finally, we will tell the structure that we want this texture to be bound as a depth stencil. This lets the driver make optimizations to ensure that this special texture can be quickly accessed where needed. We round this out by creating the texture from the description structure, as follows:

    m_d3dDevice->CreateTexture2D(
        &depthStencilDesc,
        nullptr,
        &depthStencil
        );

Now that we have a depth buffer texture, we need a depth stencil view (ID3D11DepthStencilView) to bind it, as with our render target earlier. We will use another description structure for it (CD3D11_DEPTH_STENCIL_VIEW_DESC). However, we can get away with just a single parameter: the type of texture, which in this case is D3D11_DSV_DIMENSION_TEXTURE2D. We can then create the view, ready for use, as shown:

    m_d3dDevice->CreateDepthStencilView(
        depthStencil.Get(),
        &depthStencilViewDesc,
        &m_depthStencilView
        );

Now that we have a device, context, swap chain, render target, and depth buffer, we just need to describe one more thing before we're ready to kick off the game loop. The viewport describes the layout of the area we want to render. In most cases, you will just define the full size of the render target here; however, some situations may need you to draw to just a small section of the screen, maybe for a split screen mode. The viewport lets you define the region once and render as normal for that region, and then define a new viewport for the new region so you can render into it.

To create this viewport, we just need to specify the x and y coordinates of the top-left corner, as well as the width and height of the viewport. We want to use the entire screen, so our top-left corner is x = 0, y = 0, and our width and height match our render target, as shown:

    CD3D11_VIEWPORT viewport(
        0.0f,
        0.0f,
        m_renderTargetSize.Width,
        m_renderTargetSize.Height
        );
    m_d3dContext->RSSetViewports(1, &viewport);
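The split screen case mentioned earlier comes down to computing a different rectangle for each player. The following sketch uses a hypothetical Viewport structure and helper functions rather than the Direct3D types, and assumes a simple left and right split for two players.

```cpp
#include <cassert>

// Hypothetical stand-in for a viewport: top-left corner plus size, in pixels.
struct Viewport
{
    float x;
    float y;
    float width;
    float height;
};

// Viewport covering the whole render target.
inline Viewport FullViewport(float targetWidth, float targetHeight)
{
    return { 0.0f, 0.0f, targetWidth, targetHeight };
}

// Left half for player 0, right half for player 1.
inline Viewport SplitScreenViewport(float targetWidth, float targetHeight,
                                    int player)
{
    assert(player == 0 || player == 1);
    const float half = targetWidth / 2.0f;
    return { half * static_cast<float>(player), 0.0f, half, targetHeight };
}
```

In a real renderer you would set the first viewport, draw the scene from the first player's camera, then set the second viewport and draw again.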

Now that we have created everything, we just need to finish up by setting the render target view and depth stencil view so that the API knows to use them. This is done with a single call to the m_d3dContext->OMSetRenderTargets() method, passing the number of render targets, a pointer to the first render target view pointer, and a pointer to the depth stencil view.

Let's step aside for a moment to understand the stages that the graphics card goes through when it renders something to the screen, also called the graphics pipeline.

To do this, we need to understand what exactly is drawn to the screen, even when we're just working in 2D. The base component of something drawn onto the screen is a vertex. A vertex is a point in space that, when combined with at least two other points, forms a solid triangle that can be rendered. By combining multiple triangles together, you can create anything from a simple square to a detailed 3D model with thousands of triangles.

Often, vertices will share the same space, so we need a way to reduce the repetition and memory use by only defining a vertex once. However, how do we indicate which triangles use this vertex? This is where the index enters. Just as with arrays, the index allows you to map to a particular vertex using much less memory. This way you can have a lot of data per vertex, and reference a single vertex multiple times by defining the triangles with an array of indices.

How does all this get mapped and calculated so that we render the right thing? This is where the Input Assembler (IA) comes into play. The IA takes the vertex and index buffers (arrays) and builds a list of triangle vertices that need to be drawn. These are then passed to the vertex shader.
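What the Input Assembler does with a vertex buffer and an index buffer can be imitated on the CPU. The sketch below is a simplified model under stated assumptions: Vertex, Triangle, and AssembleTriangles are hypothetical names, vertices carry only a 2D position, and the indices are read as a plain triangle list (three indices per triangle).

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// A vertex with only a 2D position, to keep the example small.
struct Vertex
{
    float x;
    float y;
};

// Three vertices forming one triangle.
struct Triangle
{
    Vertex a;
    Vertex b;
    Vertex c;
};

// Walks the index list three entries at a time and looks up each vertex.
inline std::vector<Triangle> AssembleTriangles(
    const std::vector<Vertex>& vertices,
    const std::vector<unsigned>& indices)
{
    std::vector<Triangle> triangles;
    for (std::size_t i = 0; i + 2 < indices.size(); i += 3)
    {
        triangles.push_back({ vertices.at(indices[i]),
                              vertices.at(indices[i + 1]),
                              vertices.at(indices[i + 2]) });
    }
    return triangles;
}
```

A square needs only four vertices and the six indices 0, 1, 2, 0, 2, 3: two of the vertices are shared by both triangles instead of being stored twice.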

The vertex shader is a piece of code that we use to map the 3D position of a vertex to its 2D position on the screen. We'll be using DirectXTK for this so we can avoid worrying about vertex shaders for now; however if you want to start adding awesome visual features and take advantage of the hardware, you will want to learn about these shaders. Vertex shaders are written in a language called the High Level Shader Language (HLSL).

Once we have a transformed vertex, we can take advantage of some new Direct3D 10.0 and Direct3D 11.0 features: the tessellation shader and the geometry shader.

Tessellation refers to the act of increasing geometric detail by adding more triangles. This is done through two different shaders, the hull shader and the domain shader. Tessellation is an advanced topic that is too big for this book. I encourage you to take a look at the many resources available online if you're interested in this topic.

Geometry shaders were introduced in Direct3D 10.0, and provide a way to generate geometry completely on the GPU. These allow for some interesting tricks; however, they're also well outside the scope of this book, so we'll skip over them for now. The hull, domain, and geometry shaders are optional stages that older hardware does not support: geometry shaders require Direct3D 10.0 hardware, while hull and domain shaders require Direct3D 11.0 hardware.

Once we have processed the vertices, they are sent to the rasterizer, which is responsible for interpolating across the screen and finding the region of pixels that represent the object. This happens automatically and results in the input to the pixel shader.

The pixel shader is where the final color of the pixel is determined. Here, we can apply lighting effects to generate the photorealistic scenes we see in modern games, or anything else that we want. In the pixel shader stage, the developer has full control over the final look, which can result in some crazy visual effects, as evident in the many demoscene projects that are created each year.

Note

The demoscene is a collection of developers who create visual experiences, often set to music, and in incredibly small executables. It's easy to find demo challenges that require a maximum executable size of 64k or even 4k.

Finally, we will end up with a lot of pixels, some even trying to share the same spot on the texture. This is where the Output Merger combines everything down into a flat 2D texture that can be displayed on the screen. The Output Merger handles resolving the pixels down to a single color and writing them out to our render target and depth buffer.

The core of our game happens in the game loop. This is where all of the logic and subsystems are processed, and the frame is rendered, many times per second. The game loop consists of two major actions: updating and drawing.

Now we can get to the action. A game is a simulation of a world, and just like a movie simulates motion by displaying multiple frames per second, games simulate a living world by advancing the simulation many times per second, each time stopping to draw a view into the current state of the game world.

The simplest update will just advance the timer, which is provided to us through the BasicTimer class. The timer keeps track of the amount of time that has elapsed since the previous frame, or since the timer was started, which is usually the start of the game. Keeping track of the time is important because we need the frame delta (time since the last frame) to correctly advance the simulation by just the right amount. We also work at a millisecond level, sometimes even a fraction of a millisecond, so we need to make use of the floating point data types to appropriately track these values.
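To make the idea of a frame delta concrete, here is a minimal sketch of a timer built on the standard std::chrono library. It is not the BasicTimer class from the template; FrameTimer and Advance are hypothetical names used only for illustration, and the sketch assumes time is measured in seconds as a float.

```cpp
#include <chrono>

// Hypothetical stand-in for the template's BasicTimer, built on std::chrono.
class FrameTimer
{
public:
    using Clock = std::chrono::steady_clock;

    FrameTimer() : m_start(Clock::now()), m_last(m_start) {}

    // Call once per frame, before any other update work.
    void Update()
    {
        Update(Clock::now());
    }

    // Overload that takes an explicit time point, which is handy for testing.
    void Update(Clock::time_point now)
    {
        m_delta = std::chrono::duration<float>(now - m_last).count();
        m_total = std::chrono::duration<float>(now - m_start).count();
        m_last = now;
    }

    float Delta() const { return m_delta; }  // seconds since the previous Update()
    float Total() const { return m_total; }  // seconds since the timer was created

private:
    Clock::time_point m_start;
    Clock::time_point m_last;
    float m_delta = 0.0f;
    float m_total = 0.0f;
};

// Move a position by a speed expressed in units per second, scaled by the
// frame delta so that the result does not depend on the frame rate.
inline float Advance(float position, float unitsPerSecond, float deltaSeconds)
{
    return position + unitsPerSecond * deltaSeconds;
}
```

Scaling movement by the delta, as Advance does, is what keeps an object moving at the same speed whether the game runs at 30 or 60 frames per second.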

Once the time has been updated, most games will accept and parse player input, as this often has the most important effect on the world. Doing this before the rest of the processing means you can act on input immediately rather than delaying by a frame. The amount of time between an input by the player and a reaction in the game world appearing on screen is called latency. Many games that require fast input need to reduce or eliminate latency to ensure the player has a great experience. High latency can make the game feel sluggish or laggy, and frustrate players.

Once we've processed the time and input, we need to process everything else required to make the game world seem alive. This can include (but is not limited to) the following:

Physics

Networking

Artificial Intelligence

Gameplay

Pathfinding

Audio

Once we have an up-to-date game world, we need to display a view of the world to the players so that they can receive some kind of feedback for their actions. This is done during the draw stage, which can either be the cheapest or most expensive part of the frame, depending on what you want to render.
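The ordering described so far can be sketched as a small skeleton. This is only an illustration under stated assumptions: GameLoopSketch and its members are hypothetical names, each stage simply records that it ran, and the loop runs for a fixed number of frames rather than until the window closes.

```cpp
#include <string>
#include <vector>

// Hypothetical skeleton: each stage only records that it ran, so the
// ordering of a frame is easy to inspect.
class GameLoopSketch
{
public:
    // Advance the simulation by one frame delta (in seconds).
    void Update(float deltaSeconds)
    {
        m_totalSeconds += deltaSeconds;  // 1. advance the timer
        m_log.push_back("input");        // 2. handle input before anything else
        m_log.push_back("simulate");     // 3. physics, AI, audio, and so on
    }

    // Draw a view of the current world state.
    void Draw()
    {
        m_log.push_back("draw");
    }

    // Run a fixed number of frames; a real game loops until it is closed.
    void Run(int frameCount, float deltaSeconds)
    {
        for (int frame = 0; frame < frameCount; ++frame)
        {
            Update(deltaSeconds);
            Draw();
        }
    }

    float TotalSeconds() const { return m_totalSeconds; }
    const std::vector<std::string>& Log() const { return m_log; }

private:
    float m_totalSeconds = 0.0f;
    std::vector<std::string> m_log;
};
```

Handling input at the top of Update means the simulation and the draw that follow in the same frame already reflect the player's latest action.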

The Draw() method (sometimes also called the Render() method) is commonly broken down into three sections: clearing the screen, drawing the world, and presenting the frame to the monitor for display.

During the rendering of each frame, the same render target is reused. Just like any data, it remains there unless we clear it away before trying to make use of the texture. Clearing the screen is paramount if you use a depth buffer. If you do not clear the depth buffer, the old data will be used and can prevent certain visuals from rendering when they should, as the GPU believes that something has already been drawn in front.

Clearing the render target and depth buffer allows us to reinitialize the data in each pixel to a clean value, ready for use in the new frame.
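To see why stale depth data causes problems, consider this small CPU-side model of a depth buffer. It is only a sketch and not how the GPU is programmed: DepthBufferSketch is a hypothetical type, and it assumes the common setup where depth values run from 0 (nearest) to 1 (farthest) and a pixel is written only when its depth is less than the stored value.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical CPU-side model of a depth buffer using a "less than" test.
struct DepthBufferSketch
{
    std::vector<float> depth;

    // Every pixel starts at the farthest depth, 1.0.
    explicit DepthBufferSketch(std::size_t pixelCount) : depth(pixelCount, 1.0f) {}

    // Reset every pixel to the farthest depth, as a clear would.
    void Clear()
    {
        depth.assign(depth.size(), 1.0f);
    }

    // Returns true if the pixel passes the depth test and is written.
    bool TryDraw(std::size_t pixel, float pixelDepth)
    {
        if (pixelDepth < depth[pixel])
        {
            depth[pixel] = pixelDepth;
            return true;
        }
        return false;
    }
};
```

If one frame draws a near object at depth 0.2 and the next frame tries to draw a farther object at depth 0.6 on the same pixel without calling Clear() in between, the second draw is rejected because the old 0.2 is still stored. After Clear(), the same draw succeeds.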

To clear both the buffers we need to issue two commands, one for each. This is the first time we will use the views that we created earlier. Using our ID3D11DeviceContext, we will call both the ClearRenderTargetView() and ClearDepthStencilView() methods to clear the buffers. For the first method you need to pass a color that will be set across the buffer. In most cases setting black (0, 0, 0, 1 in RGBA) will be enough; however, you may want to set a different color for debug purposes, which you can do here with a simple float array.

Clearing the depth just needs a single floating point value, in this case 1.0f, which represents the farthest distance from the camera. Data in the depth buffer is represented by values between 0 and 1, with 0 being the closest to the camera. We also need to tell the command which parts of the depth buffer we want to clear. We won't use the stencil buffer, so we will just clear the depth buffer using D3D11_CLEAR_DEPTH, and leave the default of 0 for the stencil value.

The auto keyword is a new addition to C++ in the C++11 specification. It allows the compiler to determine the data type, instead of requiring the programmer to specify it explicitly.

// Rebind the render target and depth buffer for the new frame.
auto rtvs = m_renderTargetView.Get();
m_d3dContext->OMSetRenderTargets(
    1,
    &rtvs,
    m_depthStencilView.Get()
    );

// Clear the render target to opaque black (RGBA).
const float clearCol[4] = { 0.0f, 0.0f, 0.0f, 1.0f };
m_d3dContext->ClearRenderTargetView(
    rtvs,
    clearCol
    );

// Clear only the depth portion to the farthest value; the stencil is unused.
m_d3dContext->ClearDepthStencilView(
    m_depthStencilView.Get(),
    D3D11_CLEAR_DEPTH,
    1.0f,
    0);

You'll notice that aside from clearing the depth buffer, we're also setting some render targets at the start. This is because in Windows 8 the render target is unbound when we present the frame, so at the start of the new frame we need to rebind the render target so that the GPU has somewhere to draw to.

Now that we have a clean slate, we can start rendering the world. We'll get into this in the next chapter, but this is where we will draw our textures onto the screen to create the world. The user interface is also drawn at this point, and once this stage is complete you should end up with the finished frame, ready for display.

Once we have a frame ready to display, we need to tell Direct3D that we are done drawing and it can flip the back buffer with the front buffer. To do this we tell the API to present the frame.

When we present the frame, we can indicate to DXGI that it should wait until the next vertical retrace before swapping the buffers. The vertical retrace is the period of time where the monitor is not refreshing the screen. It comes from the days of CRT monitors where the electron beam would return to the top of the screen to start displaying a new frame.

We previously looked at tearing, and how it can impact the visual quality of the game. To fix this issue we use VSync. Try turning off VSync in a modern game and watch for lines in the display where the frame is broken by the new frame.

Another thing we can do when we present is define a region that has changed, so that we do not waste power updating all of the screen when only part of it has changed. If you're working on a game you probably won't need this; however, many other Direct3D applications only need to update part of the screen, and this can be a useful optimization in an increasingly mobile and low-power world.

To get started, we need to define a DXGI_PRESENT_PARAMETERS structure in which we will define the region that we want to present, as follows:

DXGI_PRESENT_PARAMETERS parameters = {0};
parameters.DirtyRectsCount = 0;
parameters.pDirtyRects = nullptr;
parameters.pScrollRect = nullptr;
parameters.pScrollOffset = nullptr;

In this case we want to update the entire screen, so Direct3D lets us indicate that by presenting with zero dirty regions.

Now we can commit by using the Present1() method in the swap chain:

m_swapChain->Present1(1, 0, &parameters);

The first parameter defines the sync interval, and this is where you would enable or disable VSync. The interval is an integer from 0 to 4, and refers to how many vertical refreshes should complete before the swap occurs. A value of zero here will result in VSync being disabled, while a value of one would lock the presentation rate to the monitor refresh rate. You can also use a value above one, which will result in the refresh rate being divided by the provided interval. For example, if you have a monitor with a 60 Hz refresh rate, a value of one would present at 60 Hz, while a value of two would present at 30 Hz.
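The arithmetic behind the sync interval can be written down as a short sketch. PresentRateHz and FrameBudgetMs are hypothetical helper names and not part of DXGI; they assume a non-zero interval means "wait for that many refreshes", and they return 0 for an interval of zero, where VSync is disabled and the rate is not tied to the monitor.

```cpp
// Effective presentation rate for a given sync interval.
// Returns 0.0 for an interval of 0, where VSync is off and the rate is uncapped.
inline double PresentRateHz(double refreshRateHz, unsigned syncInterval)
{
    if (syncInterval == 0)
    {
        return 0.0;
    }
    return refreshRateHz / syncInterval;
}

// Time available to update and draw one frame, in milliseconds.
// Returns 0.0 for an interval of 0, where there is no fixed budget.
inline double FrameBudgetMs(double refreshRateHz, unsigned syncInterval)
{
    if (syncInterval == 0)
    {
        return 0.0;
    }
    return 1000.0 * syncInterval / refreshRateHz;
}
```

On a 60 Hz monitor, an interval of two halves the presentation rate and roughly doubles the time available per frame, which is one reason some games choose to lock to a lower, steadier rate.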

At this point, we've done everything we need to initialize and render a frame; however, you'll notice the generated code adds some more lines, as shown in the following code snippet:

m_d3dContext->DiscardView(m_renderTargetView.Get());
m_d3dContext->DiscardView(m_depthStencilView.Get());

These two lines allow the driver to apply some optimizations by hinting that we will not be using the contents of the back buffer after this frame. You can get away with not including these lines if you want, but it doesn't hurt to add them and maybe reap the benefits later.

Now you should understand how to get started with a basic Direct3D 11.1 application in Windows 8. We've covered the creation of the application, including how to create the new modern window, and how to initialize Direct3D with all of its individual parts.

We have also covered the graphics pipeline and how each stage works together to produce the final image. From there we looked at all of the components we created, such as the device, context, swap chain, render target, and depth stencil. We then looked at how to apply those to start creating frames to display. By clearing the screen, we ensured that there is a fresh slate for the images and effects we want to draw later, and then we presented those frames, looking at how to specify regions of the screen to optimize for performance when you only change part of the screen.

Next steps

Now we have a running Direct3D app that displays a nice single color (or nothing at all), ready to start rendering textures and other objects onto the screen. In the next chapter we'll start drawing images and text to the screen before we can move on to creating a game.

This chapter skipped over some smaller topics such as lost devices and the correct way to resize the screen; however, the generated code provides all of this. I'd encourage you to take a look at the rest of the code in Direct3DBase.cpp; it has plenty of comments to help you understand the rest of the code.