"For over a decade prophets have voiced the contention that the organization of a single computer has reached its limits and that truly significant advances can be made only by interconnection of a multiplicity of computers."

-Gene Amdahl.

Getting the most out of your software is something all developers strive for, and concurrency, and the art of concurrent programming, happens to be one of the best ways to improve the performance of your applications. Through the careful application of concurrent concepts to our previously single-threaded applications, we can start to realize the full power of our underlying hardware, and strive to solve problems that were unsolvable in days gone by.

With concurrency, we are able to improve the perceived performance of our applications by concurrently dealing with requests, and updating the frontend instead of just hanging until the backend task is complete. Gone are the days of unresponsive programs that give you no indication as to whether they've crashed or are still silently working.

This improvement in the performance of our applications comes at a heavy price though. By choosing to implement systems in a concurrent fashion, we typically see an increase in the overall complexity of our code, and a heightened risk for bugs to appear within this new code. In order to successfully implement concurrent systems, we must first understand some of the key concurrency primitives and concepts at a deeper level in order to ensure that our applications are safe from these new inherent threats.

In this chapter, I'll be covering some of the fundamental topics that every programmer needs to know before going on to develop concurrent software systems. This includes the following:

- A brief history of concurrency

- Threads and how multithreading works

- Processes and multiprocessing

- The basics of event-driven, reactive, and GPU-based programming

- A few examples to demonstrate the power of concurrency in simple programs

- The limitations of Python when it comes to programming concurrent systems

Concurrency was actually derived from early work on railroads and telegraphy, which is why names such as semaphore are currently employed. Essentially, there was a need to handle multiple trains on the same railroad system in such a way that every train would safely get to their destinations without incurring casualties.

It was only in the 1960s that academia picked up interest in concurrent computing, and it was Edsger W. Dijkstra who is credited with having published the first paper in this field, where he identified and solved the mutual exclusion problem. Dijkstra then went on to define fundamental concurrency concepts, such as semaphores, mutual exclusions, and deadlocks as well as the famous Dijkstra's Shortest Path Algorithm.

Concurrency, as with most areas in computer science, is still an incredibly young field when compared to other fields of study such as math, and it's worthwhile keeping this in mind. There is still a huge potential for change within the field, and it remains an exciting field for all--academics, language designers, and developers--alike.

The introduction of high-level concurrency primitives and better native language support have really improved the way in which we, as software architects, implement concurrent solutions. For years, this was incredibly difficult to do, but with this advent of new concurrent APIs, and maturing frameworks and languages, it's starting to become a lot easier for us as developers.

Language designers face quite a substantial challenge when trying to implement concurrency that is not only safe, but efficient and easy to write for the users of that language. Programming languages such as Google's Golang, Rust, and even Python itself have made great strides in this area, and this is making it far easier to extract the full potential from the machines your programs run on.

In this section of the book, we'll take a brief look at what a thread is, as well as at how we can use multiple threads in order to speed up the execution of some of our programs.

A thread can be defined as an ordered stream of instructions that can be scheduled to run as such by operating systems. These threads, typically, live within processes, and consist of a program counter, a stack, and a set of registers as well as an identifier. These threads are the smallest unit of execution to which a processor can allocate time.

Threads are able to interact with shared resources, and communication is possible between multiple threads. They are also able to share memory, and read and write different memory addresses, but therein lies an issue. When two threads start sharing memory, and you have no way to guarantee the order of a thread's execution, you could start seeing issues or minor bugs that give you the wrong values or crash your system altogether. These issues are primarily caused by race conditions, which we'll be covering in more depth in Chapter 4, Synchronization Between Threads.
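To make the hazard concrete, here is a minimal sketch, assuming a hypothetical shared counter that several threads increment. The names `counter`, `counter_lock`, `increment`, and `run` are illustrative only. An unguarded `counter += 1` is a read-modify-write that threads can interleave, so this version wraps every update in a `threading.Lock`:

```python
import threading

counter = 0                      # shared state, visible to every thread
counter_lock = threading.Lock()  # guards every update to counter


def increment(times):
    """Add 1 to the shared counter `times` times, holding the lock for each update."""
    global counter
    for _ in range(times):
        # Without the lock, "counter += 1" is a read-modify-write that two
        # threads can interleave, silently losing updates.
        with counter_lock:
            counter += 1


def run(num_threads=4, times=10_000):
    """Reset the counter, run the workers to completion, and return the final value."""
    global counter
    counter = 0
    workers = [
        threading.Thread(target=increment, args=(times,))
        for _ in range(num_threads)
    ]
    for worker in workers:
        worker.start()
    for worker in workers:
        worker.join()
    return counter


if __name__ == "__main__":
    # With the lock in place the result is always num_threads * times.
    print(run())
```

Removing the `with counter_lock:` line is a quick way to experiment with the unprotected version, although whether lost updates actually show up depends on the interpreter version and on timing.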

The following figure shows how multiple threads can exist on multiple different CPUs:

Within a typical operating system, we typically have two distinct types of threads: user-level threads and kernel-level threads.

Python works at the user-level, and thus, everything we cover in this book will be, primarily, focused on these user-level threads.

When people talk about multithreaded processors, they are typically referring to a processor that can run multiple threads simultaneously, which they are able to do by utilizing a single core that is able to very quickly switch context between multiple threads. This switching context takes place in such a small amount of time that we could be forgiven for thinking that multiple threads are running in parallel when, in fact, they are not.

When trying to understand multithreading, it's best if you think of a multithreaded program as an office. In a single-threaded program, there would only be one person working in this office at all times, handling all of the work in a sequential manner. This would become an issue if we consider what happens when this solitary worker becomes bogged down with administrative paperwork, and is unable to move on to different work. They would be unable to cope, and wouldn't be able to deal with new incoming sales, thus costing our metaphorical business money.

With multithreading, our single solitary worker becomes an excellent multitasker, and is able to work on multiple things at different times. They can make progress on some paperwork, and then switch context to a new task when something starts preventing them from doing further work on said paperwork. By being able to switch context when something is blocking them, they are able to do far more work in a shorter period of time, and thus make our business more money.

In this example, it's important to note that we are still limited to only one worker or processing core. If we wanted to try and improve the amount of work that the business could do and complete work in parallel, then we would have to employ other workers or processes as we would call them in Python.
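The office analogy can be sketched in code. This is only an illustration: `time.sleep` stands in for a blocking task such as waiting on paperwork, and the function names are made up for this example. While one thread is blocked, the others are free to make progress:

```python
import threading
import time


def blocking_task(delay, results, index):
    """Stand-in for blocking I/O: wait for `delay` seconds, then record completion."""
    time.sleep(delay)
    results[index] = "done"


def run_sequential(count, delay):
    """One worker handles every task in turn; returns (elapsed seconds, results)."""
    results = [None] * count
    start = time.perf_counter()
    for i in range(count):
        blocking_task(delay, results, i)
    return time.perf_counter() - start, results


def run_threaded(count, delay):
    """One thread per task, so the waits overlap; returns (elapsed seconds, results)."""
    results = [None] * count
    threads = [
        threading.Thread(target=blocking_task, args=(delay, results, i))
        for i in range(count)
    ]
    start = time.perf_counter()
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return time.perf_counter() - start, results


if __name__ == "__main__":
    print("sequential: %.2fs" % run_sequential(5, 0.2)[0])
    print("threaded:   %.2fs" % run_threaded(5, 0.2)[0])
```

The sequential run has to wait for each task in turn, whereas the threaded run overlaps the waits, which is why blocking workloads benefit even on a single core.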

Let's see a few advantages of threading:

- Multiple threads are excellent for speeding up blocking I/O bound programs

- They are lightweight in terms of memory footprint when compared to processes

- Threads share resources, and thus communication between them is easier

There are some disadvantages too, which are as follows:

- CPython threads are hamstrung by the limitations of the global interpreter lock (GIL), about which we'll go into more depth in the next chapter.

- While communication between threads may be easier, you must be very careful not to implement code that is subject to race conditions

- It's computationally expensive to switch context between multiple threads. By adding multiple threads, you could see a degradation in your program's overall performance.

Processes are very similar in nature to threads--they allow us to do pretty much everything a thread can do--but the one key advantage is that they are not bound to a singular CPU core. If we extend our office analogy further, this, essentially, means that if we had a four core CPU, then we can hire two dedicated sales team members and two workers, and all four of them would be able to execute work in parallel. Processes also happen to be capable of working on multiple things at one time much as our multithreaded single office worker.

These processes contain one main primary thread, but can spawn multiple sub-threads that each contain their own set of registers and a stack. They can become multithreaded should you wish. All processes provide every resource that the computer needs in order to execute a program.
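As a rough illustration of a process that spawns several sub-threads, the sketch below records the process ID and thread name seen from each thread. The helper names `report` and `spawn_sub_threads` are hypothetical. Every entry should carry the same process ID, because all of the threads live inside one process:

```python
import os
import threading


def report(seen):
    """Record which process and which thread this call ran in."""
    seen.append((os.getpid(), threading.current_thread().name))


def spawn_sub_threads(count=3):
    """Call report() from the current thread and from `count` sub-threads."""
    seen = []
    report(seen)  # runs in the calling (usually the primary) thread
    threads = [
        threading.Thread(target=report, args=(seen,), name="sub-thread-%d" % i)
        for i in range(count)
    ]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return seen


if __name__ == "__main__":
    for pid, name in spawn_sub_threads():
        print(pid, name)
```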

In the following image, you'll see two side-by-side diagrams; both are examples of a process. You'll notice that the process on the left contains only one thread, otherwise known as the primary thread. The process on the right contains multiple threads, each with their own set of registers and stacks:

With processes, we can improve the speed of our programs in specific scenarios where our programs are CPU bound, and require more CPU horsepower. However, by spawning multiple processes, we face new challenges with regard to cross-process communication, and ensuring that we don't hamper performance by spending too much time on this inter-process communication (IPC).

UNIX processes are created by the operating system, and typically contain the following:

- Process ID, process group ID, user ID, and group ID

- Environment

- Working directory

- Program instructions

- Registers

- Stack

- Heap

- File descriptors

- Signal actions

- Shared libraries

- Inter-process communication tools (such as message queues, pipes, semaphores, or shared memory)

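The advantages of processes are listed as follows:

- Processes can make better use of multicore processors

- They are better suited than threads to CPU-bound tasks

- By spawning multiple processes, we can avoid the limitations of the GIL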

Here are the disadvantages of processes:

- No shared resources between processes--we have to implement some form of IPC

- These require more memory

In Python, we can choose to run our code using either multiple threads or multiple processes should we wish to try and improve the performance over a standard single-threaded approach. We can go with a multithreaded approach and be limited to the processing power of one CPU core, or conversely we can go with a multiprocessing approach and utilize the full number of CPU cores available on our machine. In today's modern computers, we tend to have numerous CPUs and cores, so limiting ourselves to just the one, effectively renders the rest of our machine idle. Our goal is to try and extract the full potential from our hardware, and ensure that we get the best value for money and solve our problems faster than anyone else:

With Python's multiprocessing module, we can effectively utilize the full number of cores and CPUs, which can help us to achieve greater performance when it comes to CPU-bound problems. The preceding figure shows an example of how one CPU core starts delegating tasks to other cores.

In all Python versions greater than or equal to 2.6, the first release to include the multiprocessing module, we can obtain the number of CPU cores available to us by using the following code snippet:

    # First we import the multiprocessing module
    import multiprocessing

    # then we call multiprocessing.cpu_count() which
    # returns an integer value of how many available CPUs we have
    multiprocessing.cpu_count()

Not only does multiprocessing enable us to utilize more of our machine, but we also avoid the limitations that the Global Interpreter Lock imposes on us in CPython.

One potential disadvantage of multiple processes is that they inherently share no state and have no built-in channel for communication. Any data they exchange therefore has to pass through some form of IPC, and performance can take a hit. However, this lack of shared state can make them easier to work with, as you do not have to fight against potential race conditions in your code.
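A minimal sketch of one form of IPC follows, assuming a child process that computes squares and sends each result back to its parent over a `multiprocessing.Queue`. The functions `square`, `worker`, and `squares_via_child` are illustrative names, and `None` is used here as an arbitrary end-of-data sentinel:

```python
import multiprocessing


def square(n):
    """The work we want done in another process."""
    return n * n


def worker(numbers, results):
    """Runs in the child: send each (n, n squared) pair back, then a sentinel."""
    for n in numbers:
        results.put((n, square(n)))
    results.put(None)  # sentinel: tells the reader nothing more is coming


def squares_via_child(numbers):
    """Start a child process and collect its results over a queue."""
    results = multiprocessing.Queue()
    child = multiprocessing.Process(target=worker, args=(numbers, results))
    child.start()
    collected = {}
    while True:
        item = results.get()  # blocks until the child sends something
        if item is None:
            break
        n, squared = item
        collected[n] = squared
    child.join()
    return collected


if __name__ == "__main__":
    print(squares_via_child([1, 2, 3]))
```

Every value crossing the queue is pickled in one process and unpickled in the other, which is where the performance cost of IPC comes from. The `if __name__ == "__main__":` guard matters on platforms that start child processes by re-importing the main module.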

Event-driven programming is a huge part of our lives--we see examples of it every day when we open up our phone, or work on our computer. These devices run purely in an event-driven way; for example, when you click on an icon on your desktop, the operating system registers this as an event, and then performs the necessary action tied to that specific style of event.

Every interaction we do can be characterized as an event or a series of events, and these typically trigger callbacks. If you have any prior experience with JavaScript, then you should be somewhat familiar with this concept of callbacks and the callback design pattern. In JavaScript, the predominant use case for callbacks is when you perform RESTful HTTP requests, and want to be able to perform an action when you know that this action has successfully completed and we've received our HTTP response:

If we look at the previous image, it shows us an example of how event-driven programs process events. We have our EventEmitters on the left-hand side; these fire off multiple Events, which are picked up by our program's Event Loop, and, should they match a predefined Event Handler, that handler is then fired to deal with the said event.

Callbacks are often used in scenarios where an action is asynchronous. Say, for instance, you applied for a job at Google, you would give them an email address, and they would then get in touch with you when they make their mind up. This is, essentially, the same as registering a callback except that, instead of having them email you, you would execute an arbitrary bit of code whenever the callback is invoked.
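The flow of emitters, event loop, and handlers can be sketched in a few lines of plain Python. This toy `EventLoop` class is an assumption made for illustration and is not how any particular framework implements it. Handlers are registered as callbacks with `on`, events are queued with `emit`, and `run` dispatches them:

```python
from collections import deque


class EventLoop:
    """A toy event loop: callbacks are registered per event name and fired in order."""

    def __init__(self):
        self._handlers = {}      # event name -> list of callbacks
        self._pending = deque()  # events waiting to be dispatched

    def on(self, event_name, callback):
        """Register `callback` to be invoked whenever `event_name` is dispatched."""
        self._handlers.setdefault(event_name, []).append(callback)

    def emit(self, event_name, payload=None):
        """Queue an event; nothing runs until the loop dispatches it."""
        self._pending.append((event_name, payload))

    def run(self):
        """Dispatch queued events until none remain; unhandled events are dropped."""
        while self._pending:
            event_name, payload = self._pending.popleft()
            for callback in self._handlers.get(event_name, []):
                callback(payload)


if __name__ == "__main__":
    loop = EventLoop()
    # The callback plays the role of the email address in the job analogy.
    loop.on("decision", lambda outcome: print("Got a reply:", outcome))
    loop.emit("decision", "offer")
    loop.emit("unhandled-event")
    loop.run()
```

Note that `emit` returns immediately. The caller carries on with other work, and the callback only fires later, when the loop reaches that event.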

Turtle is a graphics module that has been written in Python, and is an incredible starting point for getting kids interested in programming. It handles all the complexities that come with graphics programming, and lets them focus purely on learning the very basics whilst keeping them interested.

It is also a very good tool to use in order to demonstrate event-driven programs. It features event handlers and listeners, which is all that we need:

    import turtle

    turtle.setup(500,500)
    window = turtle.Screen()
    window.title("Event Handling 101")
    window.bgcolor("lightblue")

    nathan = turtle.Turtle()

    def moveForward():
        nathan.forward(50)

    def moveLeft():
        nathan.left(30)

    def moveRight():
        nathan.right(30)

    def start():
        window.onkey(moveForward, "Up")
        window.onkey(moveLeft, "Left")
        window.onkey(moveRight, "Right")
        window.listen()
        window.mainloop()

    if __name__ == '__main__':
        start()

In the first line of the preceding code sample, we import the turtle graphics module. We then go on to set up a basic turtle window with the title Event Handling 101 and a background color of light blue.

After we've got the initial setup out of the way, we then go on to define three distinct event handlers:

- moveForward: This is for when we want to move our character forward by 50 units

- moveLeft/moveRight: This is for when we want to rotate our character in either direction by 30 degrees

Once we've defined our three distinct handlers, we then go on to map these event handlers to the up, left, and right key presses using the onkey method.

Now that we've set up our handlers, we then tell them to start listening. If any of the keys are pressed after our program has started listening, then we will fire its event handler function. Finally, when you run the preceding code, you should see a window appear with an arrow in the center, which you can move about with your arrow keys.

Reactive programming is very similar to event-driven programming, but instead of revolving around events, it focuses on data. More specifically, it deals with streams of data, and reacts to specific data changes.

RxPy is the Python equivalent of the very popular ReactiveX framework. If you've ever done any programming in Angular 2 or later versions, then you will have used this when interacting with HTTP services. This framework is a conglomeration of the observer pattern, the iterator pattern, and functional programming. We essentially subscribe to different streams of incoming data, and then create observers that listen for specific events being triggered. When these observers are triggered, they run the code that corresponds to what has just happened.

We'll take a data center as a good example of how reactive programming can be utilized. Imagine this data center has thousands of server racks, all constantly computing millions upon millions of calculations. One of the biggest challenges in these data centers is keeping all these tightly packed server racks cool enough so that they don't damage themselves. We could set up multiple thermometers throughout our data center to ensure that we aren't getting too hot anywhere, and send the readings from these thermometers to a central computer as a continuous stream:

Within our central control station, we could set up a RxPy program that observes this continuous stream of temperature information. Within these observers, we could then define a series of conditional events to listen out for, and then react whenever one of these conditionals is hit.

One such example would be an event that only triggers if the temperature for a specific part of the data center gets too warm. When this event is triggered, we could then automatically react and increase the flow of any cooling system to that particular area, and thus bring the temperature back down again:

    import rx
    from rx import Observable, Observer

    # Here we define our custom observer which
    # contains an on_next method, an on_error method
    # and an on_completed method
    class temperatureObserver(Observer):

        # Every time we receive a temperature reading
        # this method is called
        def on_next(self, x):
            print("Temperature is: %s degrees centigrade" % x)
            if (x > 6):
                print("Warning: Temperature Is Exceeding Recommended Limit")
            if (x == 9):
                print("DataCenter is shutting down. Temperature is too high")

        # if we were to receive an error message
        # we would handle it here
        def on_error(self, e):
            print("Error: %s" % e)

        # This is called when the stream is finished
        def on_completed(self):
            print("All Temps Read")

    # Publish some fake temperature readings
    xs = Observable.from_iterable(range(10))

    # subscribe to these temperature readings
    d = xs.subscribe(temperatureObserver())

The first two lines of our code import the necessary rx module, and then from there import both Observable and Observer.

We then go on to create a temperatureObserver class that extends the observer. This class contains three functions:

- on_next: This is called every time our observer observes something new

- on_error: This acts as our error-handler function; every time we observe an error, this function will be called

- on_completed: This is called when our observer meets the end of the stream of information it has been observing

In the on_next function, we want it to print out the current temperature, and also to check whether the temperature that it receives is under a set of limits. If the temperature matches one of our conditionals, then we handle it slightly differently, and print out descriptive errors as to what has happened.

After our class declaration, we go on to create a fake observable which contains 10 separate values using Observable.from_iterable(), and finally, the last line of our preceding code then subscribes an instance of our new temperatureObserver class to this observable.
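For readers who want to see the mechanics without installing a library, here is a dependency-free sketch of the same subscribe-and-react idea. It is not RxPy's implementation. `TemperatureStream` and `CoolingObserver` are hypothetical classes invented for this illustration, and the limit of 6 simply mirrors the listing above:

```python
class TemperatureStream:
    """A tiny stand-in for an observable: pushes each reading to every subscriber."""

    def __init__(self, readings):
        self._readings = list(readings)
        self._observers = []

    def subscribe(self, observer):
        """Register an observer to be notified of every reading."""
        self._observers.append(observer)

    def publish(self):
        """Push every reading to every observer, then signal completion."""
        for reading in self._readings:
            for observer in self._observers:
                observer.on_next(reading)
        for observer in self._observers:
            observer.on_completed()


class CoolingObserver:
    """Reacts to readings above `limit` by recording an alert."""

    def __init__(self, limit):
        self.limit = limit
        self.alerts = []
        self.completed = False

    def on_next(self, reading):
        if reading > self.limit:
            # A real system might increase cooling here; we only record the alert.
            self.alerts.append(reading)

    def on_completed(self):
        self.completed = True


if __name__ == "__main__":
    stream = TemperatureStream(range(10))
    observer = CoolingObserver(limit=6)
    stream.subscribe(observer)
    stream.publish()
    print(observer.alerts)
```

The key design point is that the observer never asks for data. The stream pushes each reading to it, and the observer decides whether that reading warrants a reaction.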

GPUs are renowned for their ability to render high resolution, fast action video games. They are able to crunch together the millions of necessary calculations per second in order to ensure that every vertex of your game's 3D models is in the right place, and that the models are updated every few milliseconds in order to ensure a smooth 60 FPS.

Generally speaking, GPUs are incredibly good at performing the same task in parallel, millions upon millions of times per minute. But if GPUs are so performant, then why do we not employ them instead of our CPUs? While GPUs may be incredibly performant at graphics processing, they aren't however designed for handling the intricacies of running an operating system and general purpose computing. CPUs have fewer cores, which are specifically designed for speed when it comes to switching context between operating tasks. If GPUs were given the same tasks, you would see a considerable degradation in your computer's overall performance.

But how can we utilize these high-powered graphics cards for something other than graphical programming? This is where libraries such as PyCUDA, OpenCL, and Theano come into play. These libraries try to abstract away the complicated low-level code that graphics APIs have to interact with in order to utilize the GPU. They make it far simpler for us to repurpose the thousands of smaller processing cores available on the GPU, and utilize them for our computationally expensive programs:

These Graphics Processing Units (GPU) encapsulate everything that scripting languages are not. They are highly parallelizable, and built for maximum throughput. By utilizing these in Python, we are able to get the best of both worlds. We can utilize a language that is favored by millions due to its ease of use, and also make our programs incredibly performant.

In the following sections, we will have a look at the various libraries that are available to us, which expose the power of the GPU.

PyCUDA allows us to interact with Nvidia's CUDA parallel computation API in Python. It offers us a lot of different advantages over other frameworks that expose the same underlying CUDA API. These advantages include things such as an impressive underlying speed, complete control of the CUDA's driver API, and most importantly, a lot of useful documentation to help those just getting started with it.

Unfortunately, the main limitation of PyCUDA is that it utilizes Nvidia-specific APIs, and as such, if you do not have an Nvidia-based graphics card, then you will not be able to take advantage of it. However, there are alternatives which do an equally good job on non-Nvidia graphics cards.

OpenCL is one such example of an alternative to PyCUDA, and, in fact, I would recommend this over PyCUDA due to its impressive range of conformant implementations, which does also include Nvidia. OpenCL was originally conceived by Apple, and allows us to take advantage of a number of heterogeneous platforms such as CPUs, GPUs, digital signal processors, field-programmable gate arrays, and other different types of processors and hardware accelerators.

There currently exist third-party APIs for not only Python, but also Java and .NET, and it is therefore ideal for researchers and those of us who wish to utilize the full power of our desktop machines.

Theano is another example of a library that allows you to utilize the GPU as well as to achieve speeds that rival C implementations when trying to solve problems that involve huge quantities of data.

It's a different style of programming, though, in the sense that Python is the medium in which you craft expressions that can be passed into Theano.

Note

The official website for Theano can be found here: http://deeplearning.net/software/theano/

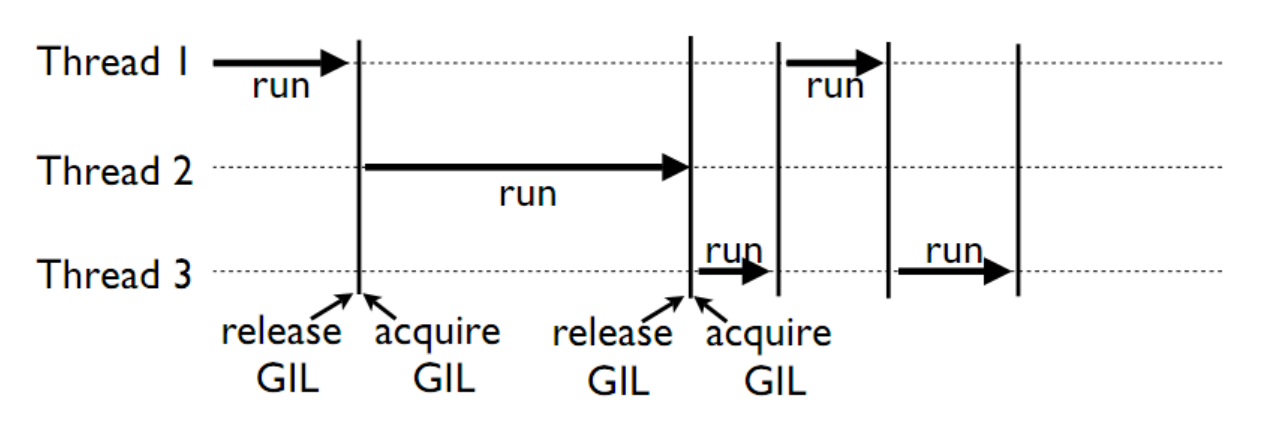

Earlier in the chapter, I talked about the limitations of the GIL or the Global Interpreter Lock that is present within Python, but what does this actually mean?

First, I think it's important to know exactly what the GIL does for us. The GIL is essentially a mutual exclusion lock which prevents multiple threads from executing Python code in parallel. It is a lock that can only be held by one thread at any one time, and if you wanted a thread to execute its own code, then it would first have to acquire the lock before it could proceed to execute its own code. The advantage that this gives us is that while it is locked, nothing else can run at the same time:

In the preceding diagram, we see an example of how multiple threads are hampered by this GIL. Each thread has to wait and acquire the GIL before it can progress further, and then release the GIL, typically before it has had a chance to complete its work. It follows a random round-robin approach, and you have no guarantees as to which thread will acquire the lock first.
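One way to observe this behaviour for yourself is to time the same CPU-bound work run back to back and run in two threads. The sketch below assumes a standard CPython build with the GIL enabled, and the function names are illustrative. No expected timings are given, because they vary with the machine and the interpreter version:

```python
import sys
import threading
import time


def countdown(n):
    """Pure-Python, CPU-bound work with no I/O to overlap."""
    while n > 0:
        n -= 1
    return n


def timed_sequential(n, repeats=2):
    """Run countdown(n) `repeats` times in the current thread; return elapsed seconds."""
    start = time.perf_counter()
    for _ in range(repeats):
        countdown(n)
    return time.perf_counter() - start


def timed_threaded(n, repeats=2):
    """Run countdown(n) in `repeats` threads at once; return elapsed seconds."""
    threads = [
        threading.Thread(target=countdown, args=(n,))
        for _ in range(repeats)
    ]
    start = time.perf_counter()
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return time.perf_counter() - start


if __name__ == "__main__":
    # How long a thread may run before CPython asks it to give up the GIL.
    print("switch interval: %s seconds" % sys.getswitchinterval())
    print("sequential: %.2fs" % timed_sequential(5_000_000))
    print("threaded:   %.2fs" % timed_threaded(5_000_000))
```

On a GIL-enabled interpreter, the threaded run typically takes about as long as the sequential one, since only one thread can execute Python bytecode at a time.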

Why is this necessary, you might ask? Well, the GIL has been a long-disputed part of Python, and over the years has triggered many a debate over its usefulness. It was implemented with good intentions, to work around the fact that Python's memory management is not thread-safe. The downside is that it prevents us from taking advantage of multiprocessor systems in certain scenarios.

Guido van Rossum, the creator of Python, posted an update on the removal of the GIL and its benefits in a post here: http://www.artima.com/weblogs/viewpost.jsp?thread=214235. He states that he wouldn't be against someone creating a branch of Python that is GIL-less, and he would accept a merge of this code if, and only if, it didn't negatively impact the performance of a single-threaded application.

There have been prior attempts at getting rid of the GIL, but it was found that the addition of all the extra locks to ensure thread-safety actually slowed down an application by a factor of more than two. In other words, you would have been able to get more work done with a single CPU than you would have with just over two CPUs. There are, however, libraries such as NumPy that can do everything they need to without having to interact with the GIL, and working purely outside of the GIL is something I'm going to be exploring in greater depth in the future chapters of this book.

It must also be noted that there are other implementations of Python, such as Jython and IronPython, that don't feature any form of Global Interpreter Lock, and as such can fully exploit multiprocessor systems. Jython and IronPython both run on different virtual machines, so, they can take advantage of their respective runtime environments.

Jython is an implementation of Python that works directly with the Java platform. It can be used in a complementary fashion with Java as a scripting language, and has been shown to outperform CPython, which is the standard implementation of Python, when working with some large datasets. For the majority of stuff though, CPython's single-core execution typically outperforms Jython and its multicore approach.

The advantage to using Jython is that you can do some pretty cool things with it when working in Java, such as import existing Java libraries and frameworks, and use them as though they were part of your Python code.

IronPython is the .NET equivalent of Jython and works on top of Microsoft's .NET framework. Again, you'll be able to use it in a complementary fashion with .NET applications. This is somewhat beneficial for .NET developers, as they are able to use Python as a fast and expressive scripting language within their .NET applications.

If Python has such obvious, known limitations when it comes to writing performant, concurrent applications, then why do we continue to use it? The short answer is that it's a fantastic language to get work done in, and by work, I'm not necessarily talking about crunching through a computationally expensive task. It's an intuitive language, which is easy to pick up and understand for those who don't necessarily have a lot of programming experience.

The language has seen a huge adoption rate amongst data scientists and mathematicians working in incredibly interesting fields such as machine learning and quantitative analysis, who find it to be an incredibly useful tool in their arsenal.

In both the Python 2 and 3 ecosystems, you'll find a huge number of libraries that are designed specifically for these use cases, and by knowing about Python's limitations, we can effectively mitigate them, and produce software that is efficient and capable of doing exactly what is required of it.

So now that we understand what threads and processes are, as well as some of the limitations of Python, it's time to have a look at just how we can utilize multi-threading within our application in order to improve the speed of our programs.

One excellent example of the benefits of multithreading is, without a doubt, the use of multiple threads to download multiple images or files. This is, actually, one of the best use cases for multithreading due to the blocking nature of I/O.

To highlight the performance gains, we are going to retrieve 10 different images from http://lorempixel.com/400/200/sports, which is a free API that delivers a different image every time you hit that link. We'll then store these 10 different images within a temp folder so that we can view/use them later on.

All the code used in these examples can be found in my GitHub repository here: https://github.com/elliotforbes/Concurrency-With-Python.

First, we should have some form of a baseline against which we can measure the performance gains. To do this, we'll write a quick program that will download these 10 images sequentially, as follows:

    import urllib.request

    def downloadImage(imagePath, fileName):
        print("Downloading Image from ", imagePath)
        urllib.request.urlretrieve(imagePath, fileName)

    def main():
        for i in range(10):
            imageName = "temp/image-" + str(i) + ".jpg"
            downloadImage("http://lorempixel.com/400/200/sports", imageName)

    if __name__ == '__main__':
        main()

In the preceding code, we begin by importing urllib.request. This will act as our medium for performing HTTP requests for the images that we want. We then define a new function called downloadImage, which takes in two parameters, imagePath and fileName. imagePath represents the URL image path that we wish to download. fileName represents the name of the file that we wish to use to save this image locally.

In the main function, we then start up a for loop. Within this for loop, we generate an imageName which includes the temp/ directory, a string representation of what iteration we are currently at--str(i)--and the file extension .jpg. We then call the downloadImage function, passing in the lorempixel location, which provides us with a random image as well as our newly generated imageName.

Upon running this script, you should see your temp directory sequentially fill up with 10 distinct images.

Now that we have our baseline, it's time to write a quick program that will concurrently download all the images that we require. We'll be going over creating and starting threads in future chapters, so don't worry if you struggle to understand the code. The key point of this is to realize the potential performance gains to be had by writing programs concurrently:

    import threading
    import urllib.request
    import time

    def downloadImage(imagePath, fileName):
        print("Downloading Image from ", imagePath)
        urllib.request.urlretrieve(imagePath, fileName)
        print("Completed Download")

    def executeThread(i):
        imageName = "temp/image-" + str(i) + ".jpg"
        downloadImage("http://lorempixel.com/400/200/sports", imageName)

    def main():
        t0 = time.time()
        # create an array which will store a reference to
        # all of our threads
        threads = []

        # create 10 threads, append them to our array of threads
        # and start them off
        for i in range(10):
            thread = threading.Thread(target=executeThread, args=(i,))
            threads.append(thread)
            thread.start()

        # ensure that all the threads in our array have completed
        # their execution before we log the total time to complete
        for i in threads:
            i.join()

        # calculate the total execution time
        t1 = time.time()
        totalTime = t1 - t0
        print("Total Execution Time {}".format(totalTime))

    if __name__ == '__main__':
        main()

In the first line of our newly modified program, you should see that we are now importing the threading module; this will enable us to create our first multithreaded application. We then move our filename generation, and the call to the downloadImage function, into a new executeThread function.

Within the main function, we first create an empty array of threads, and then iterate 10 times, creating a new thread object, appending this to our array of threads, and then starting that thread.

Finally, we iterate through our array of threads by calling for i in threads, and call the join method on each of these threads. This ensures that we do not proceed with the execution of our remaining code until all of our threads have finished downloading the image.
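To see the effect of join in isolation, here is a minimal sketch that swaps the network download for a short time.sleep call, so it runs without a network connection. The names slowTask and runAll are hypothetical and are not part of the scripts above; the sleep is only an assumed stand-in for a slow download.

```python
import threading
import time


def slowTask(i, finished):
    # time.sleep stands in for a slow download (an assumption for illustration)
    time.sleep(0.1)
    # record that task i has completed
    finished.append(i)


def runAll(count):
    finished = []
    threads = []
    for i in range(count):
        thread = threading.Thread(target=slowTask, args=(i, finished))
        threads.append(thread)
        thread.start()
    # block here until every thread has finished its work
    for thread in threads:
        thread.join()
    # because of the joins above, every task has been recorded by now
    return sorted(finished)


if __name__ == '__main__':
    print(runAll(5))  # prints [0, 1, 2, 3, 4]
```

Without the second loop, runAll could return before some of the threads had appended their result, which is the situation join is there to prevent.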

If you execute this on your machine, you should see that it almost instantaneously starts the download of the 10 different images. As each download finishes, its thread prints out that it has completed, and you should see the temp folder being populated with these images.

Both of the preceding scripts perform exactly the same task using the same urllib.request library, but if you compare the total execution times, you should see a substantial improvement, often close to an order of magnitude, in the time taken for the concurrent script to fetch all 10 images.
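If you would like to reproduce this comparison without depending on a remote server, the following sketch simulates each download with time.sleep. The function names fakeDownload, timeSequential, and timeThreaded are hypothetical, and the fixed delay is an assumption standing in for network latency, so the numbers only illustrate the shape of the result.

```python
import threading
import time


def fakeDownload(delay):
    # time.sleep stands in for waiting on the network (assumption)
    time.sleep(delay)


def timeSequential(count, delay):
    # run the simulated downloads one after another
    t0 = time.time()
    for _ in range(count):
        fakeDownload(delay)
    return time.time() - t0


def timeThreaded(count, delay):
    # run the simulated downloads in one thread each
    t0 = time.time()
    threads = []
    for _ in range(count):
        thread = threading.Thread(target=fakeDownload, args=(delay,))
        threads.append(thread)
        thread.start()
    for thread in threads:
        thread.join()
    return time.time() - t0


if __name__ == '__main__':
    print("Sequential: {:.2f}s".format(timeSequential(10, 0.2)))
    print("Threaded:   {:.2f}s".format(timeThreaded(10, 0.2)))
```

Under these assumptions, the sequential version takes roughly the sum of all the delays, while the threaded version takes roughly as long as a single delay, because the threads spend their waiting time in parallel.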

So, we've seen exactly how we can improve things such as downloading images, but how do we improve the performance of our number crunching? Well, this is where multiprocessing shines if used in the correct manner.

In this example, we'll try to find the prime factors of 10,000 random numbers that fall between 20,000 and 100,000,000. We are not necessarily fussed about the order of execution so long as the work gets done, and we aren't sharing memory between any of our processes.

Again, we'll write a script that does this in a sequential manner, which we can easily verify is working correctly:

import time
import random

def calculatePrimeFactors(n):
    primfac = []
    d = 2
    while d*d <= n:
        while (n % d) == 0:
            primfac.append(d)  # supposing you want multiple factors repeated
            n //= d
        d += 1
    if n > 1:
        primfac.append(n)
    return primfac

def main():
    print("Starting number crunching")
    t0 = time.time()
    for i in range(10000):
        rand = random.randint(20000, 100000000)
        print(calculatePrimeFactors(rand))
    t1 = time.time()
    totalTime = t1 - t0
    print("Execution Time: {}".format(totalTime))

if __name__ == '__main__':
    main()

The first two lines make up our required imports: we'll be needing both the time and the random modules. After our imports, we define the calculatePrimeFactors function, which takes an input of n. It uses trial division to find all of the prime factors of the given number, appending each one to an array, which is then returned once the function completes execution.
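As a quick sanity check of calculatePrimeFactors, the sketch below runs it on a few fixed inputs and confirms that multiplying the returned factors gives back the original number. The function is copied from the listing above; the helper name productOf and the sample inputs are chosen here purely for illustration.

```python
def calculatePrimeFactors(n):
    primfac = []
    d = 2
    while d*d <= n:
        while (n % d) == 0:
            primfac.append(d)  # repeated factors are kept
            n //= d
        d += 1
    if n > 1:
        primfac.append(n)
    return primfac


def productOf(factors):
    # hypothetical helper: multiply the factors back together
    result = 1
    for factor in factors:
        result *= factor
    return result


if __name__ == '__main__':
    for n in (360, 97, 20000):
        factors = calculatePrimeFactors(n)
        print(n, factors, productOf(factors) == n)
    # 360 [2, 2, 2, 3, 3, 5] True
    # 97 [97] True
    # 20000 [2, 2, 2, 2, 2, 5, 5, 5, 5] True
```

Note that a prime input such as 97 never enters the inner loop; it is picked up by the final n > 1 check instead.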

After this, we define the main function, which calculates the starting time and then cycles through 10,000 numbers, which are randomly generated by using random's randint. We then pass these generated numbers to the calculatePrimeFactors function, and we print out the result. Finally, we calculate the end time of this for loop and print it out.

If you execute this on your computer, you should see the array of prime factors being printed out for 10,000 different random numbers, as well as the total execution time for this code. For me, it took roughly 3.6 seconds to execute on my MacBook.

So now let us have a look at how we can improve the performance of this program by utilizing multiple processes.

In order for us to split this workload up, we'll define an executeProc function, which, instead of generating 10,000 random numbers to be factorized, will generate 1,000 random numbers. We'll create 10 processes, each of which executes this function once, so the total number of calculations is exactly the same as when we performed the sequential test:

import time
import random
from multiprocessing import Process

# This does all of our prime factorization on a given number 'n'
def calculatePrimeFactors(n):
    primfac = []
    d = 2
    while d*d <= n:
        while (n % d) == 0:
            primfac.append(d)  # supposing you want multiple factors repeated
            n //= d
        d += 1
    if n > 1:
        primfac.append(n)
    return primfac

# We split our workload from one batch of 10,000 calculations
# into 10 batches of 1,000 calculations
def executeProc():
    for i in range(1000):
        rand = random.randint(20000, 100000000)
        print(calculatePrimeFactors(rand))

def main():
    print("Starting number crunching")
    t0 = time.time()
    procs = []
    # Here we create our processes and kick them off
    for i in range(10):
        proc = Process(target=executeProc, args=())
        procs.append(proc)
        proc.start()
    # Again we use the .join() method in order to wait for
    # execution to finish for all of our processes
    for proc in procs:
        proc.join()
    t1 = time.time()
    totalTime = t1 - t0
    # we print out the total execution time for our 10
    # procs.
    print("Execution Time: {}".format(totalTime))

if __name__ == '__main__':
    main()

This code performs exactly the same work as our original sequential code. The first change is on line three, where we import the Process class from the multiprocessing module. The calculatePrimeFactors function that follows has not been touched.

You should then see that we pulled out the for loop that initially ran for 10,000 iterations. We have now placed this in a function called executeProc, and we have also reduced our for loop's range to 1,000.

Within the main function, we then create an empty array called procs. We then create 10 different processes, set the target of each to be the executeProc function, and pass in no args. We append each newly created process to our procs array, and then we start the process by calling proc.start().
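The args parameter does not have to be empty. As a rough sketch, the batch size could be passed to each process instead of being hardcoded, which makes it easy to try a different number of processes. The splitWorkload helper, the batchSize parameter, and the choice of four processes are assumptions made for this illustration and do not appear in the listing above; the factors are also computed without being printed, to keep the output short.

```python
import random
from multiprocessing import Process


def calculatePrimeFactors(n):
    primfac = []
    d = 2
    while d*d <= n:
        while (n % d) == 0:
            primfac.append(d)
            n //= d
        d += 1
    if n > 1:
        primfac.append(n)
    return primfac


def splitWorkload(total, workers):
    # hypothetical helper: share `total` calculations as evenly as possible
    base, extra = divmod(total, workers)
    return [base + 1 if i < extra else base for i in range(workers)]


def executeProc(batchSize):
    # each process factorizes its own batch of random numbers
    for _ in range(batchSize):
        rand = random.randint(20000, 100000000)
        calculatePrimeFactors(rand)


def main():
    procs = []
    # one process per batch; the batch size travels through args
    for batchSize in splitWorkload(10000, 4):
        proc = Process(target=executeProc, args=(batchSize,))
        procs.append(proc)
        proc.start()
    for proc in procs:
        proc.join()


if __name__ == '__main__':
    main()
```

Note the trailing comma in args=(batchSize,): args expects a tuple, and the comma is what makes a one-element tuple in Python.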

After we've created 10 individual processes, we then cycle through these processes which are now in our procs array, and join them. This ensures that every process has finished its calculations before we proceed to calculate the total execution time.

If you execute this now, you should see the 10,000 outputs now print out in your console, and you should also see a far lower execution time when compared to your sequential execution. For reference, the sequential program executed in 3.9 seconds on my computer compared to 1.9 seconds when running the multiprocessing version.

This is just a very basic demonstration as to how we can implement multiprocessing into our applications. In future chapters, we'll explore how we can create pools and utilize executors. The key point to take away from this is that we can improve the performance of some CPU-bound tasks by utilizing multiple cores.

By now, you should have an appreciation of some of the fundamental concepts that underlie concurrent programming. You should have a grasp of threads and processes, and you'll also know some of the limitations and challenges of Python when it comes to implementing your own concurrent applications. Finally, you have also seen firsthand some of the performance improvements that you can achieve by adding different types of concurrency to your applications.

I should make it clear now that there is no silver bullet that you can apply to every application and see consistent performance improvements. One style of concurrent programming might work better than another depending on the requirements of your application, so in the next few chapters, we'll look at all the different mechanisms you can employ and when to employ them.

In the next chapter, we'll have a more in-depth look at the concepts of concurrency and parallelism, as well as the differences between the two. We'll also look at some of the main bottlenecks that constrain our concurrent systems, and you'll learn the different styles of computer system architecture, and how they can help us achieve greater performance.
