"All slow application abandon, ye who enter here." (Freely adapted from Dante's Divine Comedy Poem—http://en.wikipedia.org/wiki/Divine_Comedy)

One day like many, on a JBoss AS Forum:

"Hi

I am running the Acme project using JBoss 5.1.0. My requirement is to allow 1000 concurrent users to access the application. But when I try to access the application with 250 users, the server slows down and finally throws an exception: 'Could not establish a connection with the database'. Does anyone have an idea? Please help me to solve my problem."

In the beginning, performance was not a concern for software. Early programming languages like C or Cobol were doing a decent job of developing applications and the end user was just discovering the wonders of information technology that would allow him to save a lot of time.

Today we are all aware of the rapidly changing business environment in which we work and live and the impact it has on business and information technology. We recognize that an organization needs to deliver faster services to a larger set of people and companies, and that downtime or poor responses of those services will have a significant impact on the business.

To survive and thrive in such an environment, organizations must consider it an imperative task for their businesses to deliver applications faster than their competitors or they will risk losing potential revenue and reputation among customers.

So tuning an application in today's market is first of all a necessity for survival, but there are subtler reasons too, like using your system resources more efficiently. For example, if you manage to meet your system requirements with fewer fixed costs (let's say by using an eight-CPU machine instead of a 16-CPU one) you are using your resources more efficiently and thus saving money. As an additional benefit you can also reduce some variable costs like the price of software licenses, which are usually calculated on the number of CPUs used.

On the basis of these premises, it's time to reconsider the role of performance tuning in your software development cycle, and that's what this book aims to do.

This book is an exhaustive guide to improving the performance of your Java EE applications running on JBoss AS and on the embedded web container (Jakarta Tomcat). All the guidelines and best practices contained in this book have been patiently collected through years of experience in the trenches, from the suggestions of valuable people, and from a myriad of blogs; each one has contributed to improving the quality of this book.

The performance of an application running on the application server is the result of a complex interaction of many aspects. Like a puzzle, each piece contributes ultimately to define the performance of the final product. So our challenge will be to teach how to write fast applications on JBoss AS, but also how to tune all the components and hardware which are a part of the IT system. As we suppose that our prime reader will not be interested in learning the basics of the application server, nor how to get started with Java EE, we will go straight to the heart of each component and elaborate on the strategies to improve their performance.

Should you be interested in learning more about the application server itself you can refer to the JBoss community at http://community.jboss.org/ or you can have a look at my previous book: https://www.packtpub.com/jboss-as-5-development/book.

The term "performance" commonly refers to how quickly an application can be executed. In terms of the user's perspective on performance, the definition is quite easy to grasp. For example, a fast website means one that is able to load web pages very quickly. From an administrator's point of view, the concept needs to be translated into meaningful numbers. As a matter of fact, the expert can distinguish two ways to measure the performance of an application:

Response Time

Throughput

The Response Time can be defined as the time it takes for one user to perform a task. For example, on a website, after the customer submits an e-commerce form, the time it takes to process the order and to render and display the result in a new page is the response time for this functionality. As you can see, the concept of performance is essentially the same as the end user's, but it is translated into numbers.

In practice, as shown in the following image, the Response Time includes the network roundtrip to the application server, the time to execute the business logic in your middleware (including the time to contact external legacy systems) and the latency to return the response to the client.

At this point the concept of Response Time should be quite clear, but you might wonder if this measurement is a constant; actually it is not. The Response Time changes according to the load on the application. A single operation cannot be indicative of the overall performance: you have to consider how long the procedure takes to execute in a production environment, where a considerable number of customers are using the application.

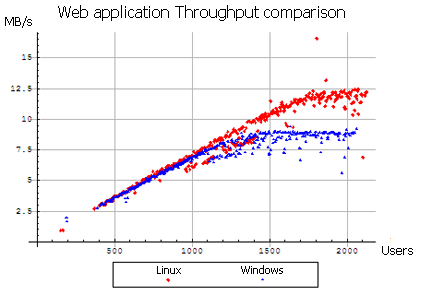

Another performance-related counter is Throughput. Throughput is the number of transactions that can occur in a given amount of time. This is a fundamental parameter that is used to evaluate not only the performance of a website, but also the commercial value of a piece of software. Throughput is usually measured in Transactions Per Second (TPS), and an application that has a higher TPS than its competitors is also the one with higher commercial value, all other features being equal.
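As a rough illustration of the two counters, the following Java sketch derives an average Response Time and a Throughput figure from raw timings. The class name `PerfCounters`, its method names, and the 5 ms sleep that stands in for a real request are all assumptions made for this example; a real measurement would be driven by a load-testing tool against the deployed application.

```java
import java.util.concurrent.TimeUnit;

/** Hypothetical helper: derives Response Time and Throughput from raw timings. */
final class PerfCounters {

    /** Average response time in milliseconds over all sampled requests. */
    static double averageResponseMillis(long[] responseNanos) {
        if (responseNanos.length == 0) {
            throw new IllegalArgumentException("no samples");
        }
        long total = 0;
        for (long nanos : responseNanos) {
            total += nanos;
        }
        return (total / (double) responseNanos.length) / 1e6;
    }

    /** Throughput in transactions per second (TPS) over the elapsed wall-clock time. */
    static double throughputTps(int transactions, long elapsedNanos) {
        if (elapsedNanos <= 0) {
            throw new IllegalArgumentException("elapsed time must be positive");
        }
        return transactions / (elapsedNanos / 1e9);
    }

    public static void main(String[] args) throws InterruptedException {
        int requests = 20;
        long[] samples = new long[requests];
        long start = System.nanoTime();
        for (int i = 0; i < requests; i++) {
            long t0 = System.nanoTime();
            TimeUnit.MILLISECONDS.sleep(5); // stand-in for a real request (assumption)
            samples[i] = System.nanoTime() - t0;
        }
        long elapsed = System.nanoTime() - start;
        System.out.printf("average response time: %.2f ms%n", averageResponseMillis(samples));
        System.out.printf("throughput: %.1f TPS%n", throughputTps(requests, elapsed));
    }
}
```

Note that this single-threaded loop only shows how the two numbers are computed; as the text explains, meaningful figures come from measuring under a realistic concurrent load.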

The following image depicts a Throughput comparison between a Linux Server and a Windows Server, as part of a complete benchmark (http://www.webperformanceinc.com/library/reports/windows_vs_linux_part1/index.html):

As we have just learnt, we cannot define performance within the context of a single user who is testing the application. The performance of an application is tightly coupled with the number of users, so we need to define another variable, which is known as Scalability. Scalability refers to the capability of a system to increase total Throughput under an increased load when resources are added. It can be seen from two different perspectives: vertical scaling (that is, switching to servers with higher capabilities) and horizontal scaling (that is, adding a line of servers).

The following image is a synthetic representation of the two different perspectives:

Both solutions have pros and cons: generally vertical scaling requires a greater hardware expenditure because it needs upgrading to powerful enterprise servers, but it's easier to implement as it requires fewer changes in your configuration.

Horizontal scaling, on the other hand, requires little investment in cheaper hardware (which has a linear expenditure), but it introduces a more complex programming model; it therefore needs an expert hand for the configuration and might require some changes in your application too.
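One way to judge whether added resources actually pay off is to compare the Throughput gain with the resource increase. The sketch below uses a common convention that is not taken from this chapter: speedup is the ratio of the two Throughput values, and efficiency is the speedup divided by the resource ratio, so 1.0 would mean perfectly linear scaling. The class name and the TPS figures are made up for illustration.

```java
/** Hypothetical helper that relates a Throughput gain to the resources added. */
final class ScalingCheck {

    /** How many times higher the scaled Throughput is than the baseline. */
    static double speedup(double baselineTps, double scaledTps) {
        if (baselineTps <= 0) {
            throw new IllegalArgumentException("baselineTps must be positive");
        }
        return scaledTps / baselineTps;
    }

    /** Speedup divided by the resource increase: 1.0 means perfectly linear scaling. */
    static double efficiency(double baselineTps, int baselineUnits,
                             double scaledTps, int scaledUnits) {
        if (baselineUnits <= 0 || scaledUnits <= 0) {
            throw new IllegalArgumentException("unit counts must be positive");
        }
        double resourceRatio = scaledUnits / (double) baselineUnits;
        return speedup(baselineTps, scaledTps) / resourceRatio;
    }

    public static void main(String[] args) {
        // Made-up figures: one server delivers 200 TPS, four servers deliver 680 TPS.
        System.out.printf("speedup: %.2f%n", speedup(200, 680));
        System.out.printf("efficiency: %.2f%n", efficiency(200, 1, 680, 4));
    }
}
```

The "units" can be servers for horizontal scaling or CPUs for vertical scaling; an efficiency well below 1.0 suggests that something other than the added resource is limiting Throughput.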

At this point you will have grasped that performance tuning spans several components, including the application delivered and the environment where it is running. However, we haven't addressed when is the right moment to start tuning your applications. This is one of the most underestimated issues in software development and it is commonly solved by applying tuning only at two stages:

While coding your classes

At the end of software development

Tuning your applications as you code is a consolidated habit of software developers, first of all because it's fun and satisfying to optimize your code and see an immediate improvement in the performance of a single function. However, the other side of the coin is that most of these optimizations are useless. Why? It is statistically proven that within one application only 10-15% of the code is executed frequently, so trying to optimize code blindly at this stage will produce little or no benefit to your application.

The second favorite anti-pattern adopted by developers is starting the tuning process only at the end of the software development cycle. There are good reasons to consider this a bad habit. Firstly, your tuning session will be longer and more complex: you have to analyze the whole application roundtrip again while hunting for bottlenecks. Secondly, supposing you are able to isolate the cause of the bottleneck, you still might be forced to modify critical sections of your code, which, at this stage, can easily turn into a nightmare.

Think, for example, of an application which uses a set of JSF components to render trees and tables. If you discover that your JSF library slows to a crawl when dealing with production data, there is very little you can do at this stage: either you rewrite the whole frontend or you find a new job.

So the moral of the story is: you cannot think of performance as a single step in the software development process; it needs to be a part of your overall software development plan. Achieving maximum performance from your software requires continued effort throughout all phases of development, not just coding. In the next section we will try to uncover how performance tuning fits in the overall software development cycle.

Having determined that tuning needs to be a part of the software development cycle, let's have a look at the software cycle with performance engineering integrated.

As you can see, the software process contains a set of activities (Analysis, Design, Coding, and Performance Tuning) which should be familiar to analyst programmers, but with two important additions. Firstly, there is a new phase called Performance Test, which begins at the end of the software development cycle and measures and evaluates the complete application. Secondly, every software phase contains Performance focal points, which are appropriate for that software segment.

Now let's see in more detail how a complete software cycle is carried out with performance in mind:

Analysis: Producing high quality, fast applications always starts with a correct analysis of your software requirements. In this phase you have to define what the software is expected to do by providing a set of scenarios that illustrate your functional domain. This translates into creating use cases, which are diagrams that describe the interactions of users with the system. These use cases are a crucial step in determining what type of benchmarks are needed by your system: for example, here we assume that your application will be accessed by 500 concurrent users, each of whom will start a database connection to retrieve data from a database as well as use a JMS connection to fire an action. Software analysis, however, goes beyond the software requirements and should consider critical information, such as the kind of hardware where the application will run or the network interfaces that will support its communication.

Design: In this phase, the overall software structure and its nuances are defined. Critical points like the number of tiers needed for the package architecture, the database design, and the data structure design are all defined in this phase. A software development model is thus created. The role of performance in this phase is fundamental; architects should do the following:

Quickly evaluate different algorithms, data structures, and libraries to see which are most efficient.

Design the application so that it is possible to accommodate any changes if there are new requirements that could impact performance.

Code: The design must now be translated into a machine-readable form. The code generation step performs this task. If the design is performed in a detailed manner, code generation can be accomplished without much complication. If you have completed the previous phases with an eye on tuning you should partially know which functions are critical for the system, and code them in the most efficient way. We say "partially" because only when you have written the last line of code will you be able to test the complete application and see where it runs quickly and where it needs to be improved.

Performance Test: This step completes the software production cycle and should be performed before releasing the application into production. Even if you have been meticulous at performing the previous steps, it is absolutely normal that your application doesn't meet all the performance requirements on the first try. In fact, you cannot predict every aspect of performance, so it is necessary to complete your software production with a performance test. A performance test is an iterative process that you use to identify and eliminate bottlenecks until your application meets its performance objectives. You start by establishing a baseline. Then you collect data, analyze the results, and make configuration changes based on the analysis. After each set of changes, you retest and measure to verify that your application has moved closer to its performance objectives.

The following image synthesizes the cyclic process of performance tuning:

You are now aware that performance tuning is an iterative process which continues until the software has met your goals in terms of Response Time and Throughput. Let's see in more detail how to proceed with every single step of the process:

The first part of performance tuning consists of building up a baseline. In practice you need to figure out the conditions under which the application will perform. The more you understand exactly how your application will be used, the more successful your performance tuning will be. If you have invested some days in an accurate analysis, you should already have the basis upon which you will develop your performance objectives, which are usually measured in terms of response times, throughput (requests per second), and resource utilization level.

Tip

Plan for average users or for peak?

There are many types of statistics that can be useful when you are building a baseline; however, one of your goals should be to develop a profile of your application's workload with special attention to the peaks. For example, many business applications experience daily or monthly peaks depending on a variety of factors. This is especially true for organizations like travel agencies or airline companies, which expect great differences in workload in different periods of the year. In this kind of scenario, it doesn't make sense to set up a baseline on the average number of users: you have no choice but to use the worst case, that is, the peak number of users.

In order to collect data, all applications should be instrumented to provide information for performance analysis. This can be broken down into a set of activities:
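To make the difference between the two baselines concrete, here is a minimal sketch that computes both the average and the peak from a series of concurrent-user samples. The class name `WorkloadProfile` and the sample values are hypothetical; in practice the samples would come from access logs or monitoring data.

```java
/** Hypothetical workload profile built from sampled concurrent-user counts. */
final class WorkloadProfile {

    /** Average number of concurrent users across all samples. */
    static double average(int[] concurrentUsers) {
        if (concurrentUsers.length == 0) {
            throw new IllegalArgumentException("no samples");
        }
        long total = 0;
        for (int users : concurrentUsers) {
            total += users;
        }
        return total / (double) concurrentUsers.length;
    }

    /** Highest number of concurrent users seen in any sample. */
    static int peak(int[] concurrentUsers) {
        if (concurrentUsers.length == 0) {
            throw new IllegalArgumentException("no samples");
        }
        int max = concurrentUsers[0];
        for (int users : concurrentUsers) {
            max = Math.max(max, users);
        }
        return max;
    }

    public static void main(String[] args) {
        // Made-up samples: three quiet periods and one seasonal spike.
        int[] samples = {100, 120, 900, 80};
        System.out.println("average users: " + average(samples));
        System.out.println("peak users: " + peak(samples));
    }
}
```

With these made-up samples the average is a third of the peak, so a baseline built on the average would leave the application untested for the load it sees during the spike.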

Set up your application server with the same settings and hardware as the production environment and produce a replica of database/naming directories if you can't use the production legacy systems for testing.

Isolate the testing environment so that you don't skew those tests by involving network traffic that doesn't belong in your tests.

Install the appropriate tools, which will start the load test, and the counterpart software that collects data from the benchmark. The next chapter will point you towards some great resources which can be used to start a session of performance tuning.

If you surf the net you can find plenty of benchmarks affirming that X is faster than Y. Even if micro benchmarks are useful to quickly calculate the response of a single variable (for example, the time to execute a stored procedure), they are of little or no use for testing complex systems. Why? Because many factors in enterprise systems produce their effects after the system has been tested extensively: think about caching systems or JVM garbage collection tuning as a clue.

Investing a huge amount of time in your tuning session is, however, not realistic as you will likely fail to meet your budget goals, so your performance tests should be completed within a fixed timeline.

Balancing these two factors, we could say that a good performance tuning session should last at least 20-30 minutes (besides warm-up activities, if any) for bread-and-butter applications like the sample Pet Store demo application (http://java.sun.com/developer/releases/petstore/). Larger applications, on the other hand, require more functionality to test and engage a considerable amount of system resources. A complete test plan can demand, in this case, some hours or even days to be completed. As a matter of fact, some dynamics (like the garbage collector) can take time to unfold their effects; benchmarking these kinds of applications over a short time span can thus be useless or even misleading.
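The idea of keeping warm-up activities out of the measured window can be sketched in a few lines of Java. This is only an illustration under stated assumptions: the class `WarmupBenchmark`, the run counts, and the toy task are hypothetical, and a serious benchmark would rely on a dedicated load-testing or benchmarking tool.

```java
/** Hypothetical timing harness that excludes warm-up runs from the measurement. */
final class WarmupBenchmark {

    /** Written by the toy task so that its work has a visible side effect. */
    static volatile long sink;

    /** Runs the task unmeasured for warmupRuns, then returns the average nanoseconds per measured run. */
    static double averageNanos(Runnable task, int warmupRuns, int measuredRuns) {
        if (measuredRuns <= 0) {
            throw new IllegalArgumentException("measuredRuns must be positive");
        }
        for (int i = 0; i < warmupRuns; i++) {
            task.run(); // lets the JIT compiler and caches settle; not timed
        }
        long start = System.nanoTime();
        for (int i = 0; i < measuredRuns; i++) {
            task.run();
        }
        return (System.nanoTime() - start) / (double) measuredRuns;
    }

    public static void main(String[] args) {
        Runnable task = () -> {
            long sum = 0;
            for (int i = 0; i < 100000; i++) {
                sum += i % 7;
            }
            sink = sum;
        };
        System.out.printf("without warm-up: %.0f ns per run%n", averageNanos(task, 0, 50));
        System.out.printf("after warm-up:   %.0f ns per run%n", averageNanos(task, 5000, 50));
    }
}
```

The same principle applies at the scale of a whole test plan: the measured window starts only after the system has reached a steady state.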

Luckily you can organize your time in such a way that the tuning sessions are planned carefully during the day and then executed with batch scripts at night.

With the amount of data collected, you have evidence of which areas show a performance penalty: keep in mind, however, that this might just be the symptom of a problem which arises in a different area of your application. Technically speaking the analysis procedure can be split into the following activities:

Identify the locations of any bottlenecks.

Think of a hypothesis which could be the cause of the bottleneck.

Consider any factors that may prove/disprove your hypothesis.

At the end of these activities, you should be ready to create a new test which isolates the factor that we suppose to be the cause of the bottleneck.

For example, suppose you are in the middle of a tuning session of an enterprise application. You have identified (Step 1) that the application occasionally pauses and cannot complete all transactions within the strict timeout setting.

Your hypothesis (Step 2) is that the garbage collector configuration needs to be changed because it's likely that there are too many full cycles of garbage collection (garbage collection is explained in detail in Chapter 3, Core JVM Tuning).

To test your hypothesis (Step 3), you add to the configuration a switch that prints the details of each garbage collection.

In short, by carefully examining performance indicators, you can correctly isolate the problems and identify the main ones, which must be addressed first. If the data you collect is not complete, then your analysis is likely to be inaccurate and you might need to retest and collect the missing information or use further analysis tools.

When your analysis is complete you should have a list of indicators that need testing: establish a priority list so that you can first address those issues that are likely to provide the maximum payoff.
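On HotSpot JVMs of the Java 5 and Java 6 generation, the switches typically used for this purpose are `-verbose:gc` and `-XX:+PrintGCDetails` (newer JVMs use unified logging via `-Xlog:gc*` instead). The same counters can also be read programmatically through the standard `java.lang.management` API, as in the following sketch; the class name `GcSnapshot` is hypothetical.

```java
import java.lang.management.GarbageCollectorMXBean;
import java.lang.management.ManagementFactory;

/** Hypothetical snapshot of the garbage collection counters exposed by the JVM. */
final class GcSnapshot {

    /** Total collections reported by all collectors; undefined counters (-1) are skipped. */
    static long totalCollections() {
        long total = 0;
        for (GarbageCollectorMXBean gc : ManagementFactory.getGarbageCollectorMXBeans()) {
            long count = gc.getCollectionCount();
            if (count > 0) {
                total += count;
            }
        }
        return total;
    }

    /** Approximate accumulated collection time in milliseconds across all collectors. */
    static long totalCollectionMillis() {
        long total = 0;
        for (GarbageCollectorMXBean gc : ManagementFactory.getGarbageCollectorMXBeans()) {
            long millis = gc.getCollectionTime();
            if (millis > 0) {
                total += millis;
            }
        }
        return total;
    }

    public static void main(String[] args) {
        for (GarbageCollectorMXBean gc : ManagementFactory.getGarbageCollectorMXBeans()) {
            System.out.printf("%s: %d collections, %d ms%n",
                    gc.getName(), gc.getCollectionCount(), gc.getCollectionTime());
        }
        System.out.println("total collections: " + totalCollections());
        System.out.println("total time (ms): " + totalCollectionMillis());
    }
}
```

Sampling these totals before and after a test run gives a first indication of whether garbage collection activity is high enough to support the hypothesis.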

Tip

It's important to stress that you must apply each change individually, otherwise you can distort the results and make it difficult to identify potential new performance issues.

And that's it! Get your instruments ready and launch another session of performance testing. You can stop adjusting and measuring when you believe you're close enough to the response times that satisfy your requirements.

As a side note consider that optimizing code can introduce new bugs so the application should be tested during the optimization phase. A particular optimization should not be considered valid until the application using that optimization's code path has passed quality assessment.

One of the most pervasive myths about Java Enterprise applications is that they simply are slow. The notion of Java being "slow" in popular discussions is often poorly calibrated but, unfortunately, widely believed. The most compelling reason for this sentiment dates back to the first releases of the Java Development Kit. In 1995, Java was much slower as the first implementations of the Java Virtual Machine didn't have a Just In Time compiler, the garbage collector algorithms were not so refined and, generally speaking, lots of applications were written using classes with poor performance numbers (for example, Input/Output streams without buffering, or abuse of thread-safe collection classes like java.util.Vector).

While the debate continues in many forums, featuring benchmarks generally against the "elder brother" C++, there is some truth in it: today, just as some time ago, many Java applications are still awfully slow. Why?
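As a small illustration of the buffering point, the sketch below wraps a raw stream in a `BufferedInputStream` so that single-byte `read()` calls are served from an in-memory buffer instead of hitting the underlying source each time. The class name `BufferedRead` and the 8 KB buffer size are assumptions made for this example.

```java
import java.io.BufferedInputStream;
import java.io.ByteArrayInputStream;
import java.io.FileInputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.UncheckedIOException;

/** Hypothetical example of reading byte by byte through a buffer. */
final class BufferedRead {

    /** Counts the bytes of a stream, reading through an 8 KB buffer and closing it afterwards. */
    static long countBytes(InputStream raw) {
        try (InputStream in = new BufferedInputStream(raw, 8192)) {
            long count = 0;
            while (in.read() != -1) { // served from the buffer on most calls
                count++;
            }
            return count;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) throws IOException {
        // Reads the file named on the command line, or an in-memory sample otherwise.
        InputStream source = args.length == 1
                ? new FileInputStream(args[0])
                : new ByteArrayInputStream(new byte[64 * 1024]);
        System.out.println("bytes read: " + countBytes(source));
    }
}
```

The benefit shows up with file or socket streams, where each unbuffered `read()` may become a system call; the in-memory stream is used here only to keep the example self-contained.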

What happened is that, ironically, even if Sun engineers were able to deliver faster JVMs release after release, programming Java Enterprise applications became more and more complex, and therefore so did writing fast Java applications.

Not so long ago the archetype of a Java Application was made up of a Front Layer (usually developed with JSPs or Swing) and some Middleware, usually developed with a mix of Servlets and Data Access Objects (DAO) that contained the interfaces for the legacy system.

In such a scenario, the architect had to take care of fewer counters and there were only one or perhaps two protocols involved in the communications (HTTP and RMI). With minimal application and web server tuning, along with some DBA tips, you could bring home the desired result.

Today's enterprise applications are much more complex; take for example the input: it can come from HTML as well as a thick client or a web service, or even a mobile device. Also, lots of Java programming interfaces have been wrapped by other frameworks to simplify or enhance the productivity of the developer. For example, the JavaServer Faces (JSF) specification has been built on top of Servlets/JSPs, and then custom libraries (like RichFaces) have been built on top of JSF. Another good example is the Hibernate framework, which has been built on top of JDBC, and then Entities have been built on top of Hibernate.

We might continue discussing other good examples; however, the truth is that each of these extra layers inevitably carries some overhead, and has its own best practices which are usually unknown to the majority of developers.

Our conclusion is that today Java applications have a higher performance potential than they once did, but it takes expert hands and a solid tuning methodology to be admitted to the Eden where fast applications live.

Nevertheless, tuning Java Enterprise applications is more complex than standalone applications as it requires monitoring and configuring additional components like the application server, which acts as a container for the application, and all resources which are directly controlled by the application server. In the next section, we are going to explore all the single areas which have an impact on the performance of an enterprise application.

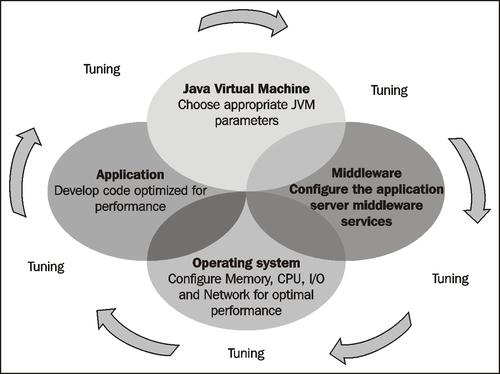

Configuration and tuning settings can be divided into four main categories:

Java Virtual Machine (JVM) tuning

Middleware tuning

Application tuning

Operating system / Hardware tuning

Let's look at each area in more detail:

JVM tuning: Every Java application runs in a Virtual Machine, so with proper configuration of JVM parameters (in particular those related to memory and garbage collection), it's possible to achieve better performance of your Java applications. The configuration of the JVM has changed a lot since the first releases of Java, and most developers are not aware that the default JVM parameters are usually not optimal for running large applications. We will cover this topic in more detail in Chapter 3, Core JVM Tuning, which is entirely dedicated to JVM tuning.

Middleware tuning controls how an application server provides services for running applications and their components. The application server is a pretty complex piece of software and, at the same time, fertile ground for optimizations by expert users. The application server contains a core configuration that is common to all applications (think about the thread pool which is responsible for invoking other components), and also a set of Java EE services which are available for use (like EJB, the web container, JMS, and so on). Each of these services has a default configuration which can be good enough for average applications, but needs to be tweaked in order to obtain superior performance.
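Before changing any JVM parameter it helps to know which settings are actually in effect. The following sketch, with the hypothetical class name `JvmSettings`, uses the standard `Runtime` and `java.lang.management` APIs to print the maximum heap size, the current heap usage, and the options the JVM was started with.

```java
import java.lang.management.ManagementFactory;
import java.lang.management.MemoryUsage;
import java.util.List;

/** Hypothetical report of the memory settings the running JVM is using. */
final class JvmSettings {

    /** Maximum heap the JVM will attempt to use, in megabytes. */
    static long maxHeapMegabytes() {
        return Runtime.getRuntime().maxMemory() / (1024L * 1024L);
    }

    /** Options passed to the JVM at startup (for example memory or GC switches). */
    static List<String> startupOptions() {
        return ManagementFactory.getRuntimeMXBean().getInputArguments();
    }

    public static void main(String[] args) {
        MemoryUsage heap = ManagementFactory.getMemoryMXBean().getHeapMemoryUsage();
        System.out.println("max heap (MB): " + maxHeapMegabytes());
        System.out.println("heap used (MB): " + heap.getUsed() / (1024L * 1024L));
        System.out.println("startup options: " + startupOptions());
    }
}
```

Running it with and without explicit memory options makes the gap between the defaults and a tuned configuration visible.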

Application tuning requires that you write efficient code in your application, as well as adopt the best performing libraries to achieve the desired task.

Most tuning experts agree that application tuning accounts for about 75% of the overall tuning process. This doesn't mean that hardware and a correct administration configuration are useless. The truth is that even the best hardware and application server configuration will not provide dramatic performance numbers if you are running a poorly coded application. Just to mention a few common mistakes:

Are you using queries without an index on the where fields?

Are you gathering massive data in the HTTP session?

Are you issuing a select * and trying to cache all the data in the middle tier?

If you are making any of these mistakes then there is little you can fix with proper JVM configuration or application server tuning alone.

Operating system tuning relates to configuring your system and hardware resources so that they can efficiently run the software resources discussed previously. The most common hardware tuning is concerned with physical memory: if you determine that your application has a memory bottleneck, and it's not caused by inefficient coding, you have no other choice but to add more memory to your machine(s).

Another hot point for tuning hardware is CPU: each application that runs on a server gets a time slice of the CPU. The CPU might be able to efficiently handle all of the processes running on the computer, or it might be overloaded. By examining processor activity and the activity of individual processes including thread creation, thread switching, context switching, and so on, you can gain good insight into processor workload and performance. Again, if the CPU is the bottleneck and it cannot be solved by application tuning, you have to consider adding more CPUs or splitting the load on an array of servers.
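A first look at processor workload can be taken from inside the JVM itself. The sketch below reads the processor count, the number of live threads, and the system load average through standard Java APIs. The class name `CpuSnapshot` is hypothetical, and the "load above processor count" check is a rough heuristic assumed for this example, not a rule stated in this chapter.

```java
import java.lang.management.ManagementFactory;

/** Hypothetical snapshot of processor-related counters visible to the JVM. */
final class CpuSnapshot {

    /** Number of processors available to the JVM. */
    static int processors() {
        return Runtime.getRuntime().availableProcessors();
    }

    /** Number of live threads in this JVM, daemon threads included. */
    static int liveThreads() {
        return ManagementFactory.getThreadMXBean().getThreadCount();
    }

    /** System load average for the last minute; negative where the platform does not provide it. */
    static double loadAverage() {
        return ManagementFactory.getOperatingSystemMXBean().getSystemLoadAverage();
    }

    /** Rough heuristic (assumption): more runnable work than processors hints at CPU saturation. */
    static boolean looksSaturated(double loadAverage, int processors) {
        return loadAverage > processors;
    }

    public static void main(String[] args) {
        System.out.println("processors: " + processors());
        System.out.println("live threads: " + liveThreads());
        System.out.println("load average: " + loadAverage());
        System.out.println("looks saturated: " + looksSaturated(loadAverage(), processors()));
    }
}
```

Operating system tools give a far more detailed picture, including per-process activity and context switching, so a snapshot like this is best treated as a quick sanity check.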

Hardware tuning also includes input/output tuning. Executing long-running file I/O operations, data encryption and decryption, or reading too much data from database tables can turn I/O operations into a serious bottleneck. A shortage of physical memory might also lead to excessive input/output activity if the data cannot fit in the physical memory. Slow hard disks are another factor to consider: replacing them with faster ones is the only possible solution if you still have disk I/O bottlenecks after optimizing all other factors.

The last hardware component we need to mention is the Network, which is the means by which different applications communicate. Tuning the network means reducing the number of hops your application needs to make in order to reach external systems. You also need to configure your protocol transmission in the most efficient way so that in turn your packets are routed in the most efficient way. Again, if you still have a bottleneck in this area, the last resort is to upgrade to a new set of network devices.

Tip

Is it possible to optimize all areas of tuning?

Theoretically yes, but in practice, optimization will generally focus on improving just one or two aspects of performance: for example execution time, memory usage, disk space, bandwidth, power consumption, or some other resource. This will usually require a trade-off where one factor is optimized at the expense of others. For example, increasing the size of cache improves runtime performance, but also increases the memory consumption. Other common trade-offs include code clarity and conciseness. In practice you have to define some priorities and code accordingly.
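The cache trade-off mentioned above can be made explicit by bounding the cache size, so that memory consumption is capped at the price of occasional misses. The following sketch builds a least-recently-used cache on top of the standard `LinkedHashMap`; the class name `BoundedCache` and the capacity used in `main` are assumptions for illustration.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/** Hypothetical LRU cache: caps memory use by evicting the least recently used entry. */
final class BoundedCache<K, V> extends LinkedHashMap<K, V> {

    private static final long serialVersionUID = 1L;

    private final int maxEntries;

    BoundedCache(int maxEntries) {
        super(16, 0.75f, true); // access order: the eldest entry is the least recently used
        if (maxEntries <= 0) {
            throw new IllegalArgumentException("maxEntries must be positive");
        }
        this.maxEntries = maxEntries;
    }

    /** Called by LinkedHashMap after each insertion; returning true evicts the eldest entry. */
    @Override
    protected boolean removeEldestEntry(Map.Entry<K, V> eldest) {
        return size() > maxEntries;
    }

    public static void main(String[] args) {
        BoundedCache<String, String> cache = new BoundedCache<String, String>(2);
        cache.put("order-1", "pending");
        cache.put("order-2", "shipped");
        cache.get("order-1");              // marks order-1 as recently used
        cache.put("order-3", "delivered"); // exceeds the limit and evicts order-2
        System.out.println(cache.keySet());
    }
}
```

A larger limit raises the hit rate and the memory footprint together, which is exactly the kind of priority the tip asks you to set. Note that `LinkedHashMap` is not thread-safe, so a shared cache would need external synchronization.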

The following image synthesizes the concepts we have just covered:

In this chapter, you have learnt the basics of the performance tuning process. Let's briefly recap the most significant points:

Performance can be evaluated with two main counters: The Response Time and the Throughput. The Response Time can be defined as the time it takes for one user to perform a task. The Throughput is the number of transactions that can occur in a given amount of time.

In order to meet higher loads, applications need to be scalable. You can scale your applications vertically (that is, switching to servers with higher capabilities) or horizontally (that is, adding a line of servers).

In order to improve the performance of your applications, you have to consider all resources which are around the application: the Java Virtual Machine, the middleware, the hardware, and how you code the application itself.

Maximum application performance can be achieved only if performance tuning is considered to be a part of your overall software development plan.

In the next chapter, we are going to introduce a few essential tools, which you can freely download and use to tune your Enterprise applications and your operating system as well.