AI Cloud Foundations

Today, every organization aspires to lead in adopting the latest technological advancements. In recent years, successful adoption has come from leveraging the data landscape that surrounds a business. In this chapter, we will talk about how AI can be leveraged using Microsoft's Azure platform to derive business value from that data landscape. Azure offers several hundred services, and choosing the right one is challenging. We will give a high-level overview of the choices a data scientist, developer, or data engineer has for building and deploying AI solutions for their organization, starting with a decision tree that can guide technology choices so that you understand which services you should consider.

In this chapter, we will cover the following topics:

- Cognitive Services/bots

- Azure Machine Learning Studio

- Azure Machine Learning services

- Machine Learning Server

- Azure Databricks

The importance of artificial intelligence

Artificial intelligence (AI) is increasingly interwoven into the complex fabric of our technology-driven lives. Whether we realize it or not, AI helps us accomplish day-to-day tasks more efficiently than ever before. Personal assistants such as Siri, Cortana, and Alexa are among the most visible AI tools we encounter. Less obvious examples include the AI tools that rideshare firms use to suggest that drivers move to high-demand areas and to adjust prices dynamically based on demand.

Across the world, organizations are at different stages of the AI journey. For some, AI is the core of their business model. Others see the potential of AI to help them compete and to innovate in their business. Successful organizations recognize that digital transformation through AI is key to their long-term survival. Sometimes, this involves changing an organization's business model to incorporate AI through new technologies such as the Internet of Things (IoT). Across this spectrum of AI maturity, organizations face challenges implementing AI solutions, typically related to scalability, algorithms, libraries, accuracy, retraining, pipelines, and integration with other systems.

The field of AI has been around for several decades, but its growth and adoption over the last decade have been tremendous. This can be attributed to three main drivers: large data, large compute, and enhanced algorithms. The growth in data stems mostly from data-generating entities, such as devices and applications, and from human interactions with those entities. The growth in compute can be attributed to improved chip design as well as innovative compute technologies. Algorithms have improved partly thanks to the open source community and partly thanks to the availability of more data and compute.

The emergence of the cloud

Developing AI solutions in the cloud helps organizations accelerate their innovation, in addition to alleviating the challenges described above. One of the first steps is to bring all the data together, in one place or in one tool, for easy retrieval. The cloud is an ideal landing zone for this requirement: it provides near-infinite storage, easy access to other data sources, and on-demand compute. Solutions built in the cloud are easier to maintain and update because they are managed from a single pane of glass. The availability of improved or customized hardware at the click of a button was unthinkable a few years back.

Innovation in the cloud is so rapid that developers can build a large variety of applications very efficiently. The ability to scale solutions on-demand and tear them down after use is very economical in multiple use cases. This permits projects to start small and scale up as demand goes up. Lastly, the cloud provides the ability to deploy applications globally in a manner that's consistent for both the end user and developers.

Essential cloud components for AI

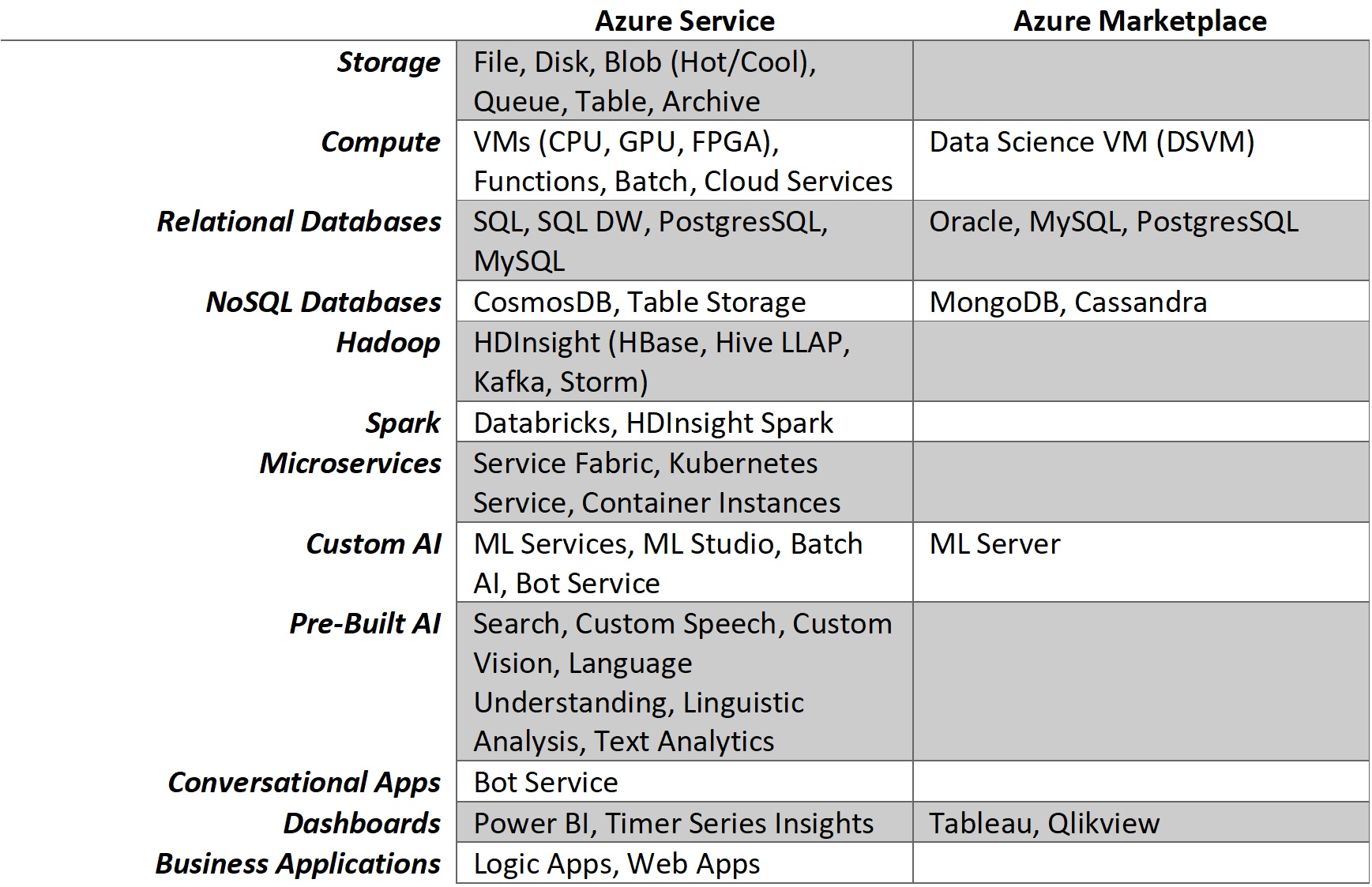

Any cloud AI solution will have different components, all modular, individually elastic, and integrated with each other. A broad framework for cloud AI is depicted in the following diagram. At the very base is Storage, which is separate from Compute. This separation of Storage and Compute is one of the key benefits of the cloud, which permits the user to scale one separate from the other. Storage itself may be tiered based on throughput, availability, and other features. Until a few years back, the Compute options were limited to the speed and generation of the underlying CPU chips. Now, we have options for GPU and FPGA (short- for field-programmable gate array) chips as well. Leveraging Storage and Compute, various services are built on the cloud fabric, which makes it easier to use ingest data, transform it, and build models. Services based on Relational Databases, NoSQL, Hadoop, Spark, and Microservices are some of the most frequent ones used to build AI solutions:

At the highest level of complexity are the various AI-focused services that are available on the cloud. These services fall on a spectrum with fully customizable solutions at one end, and easy-to-build solutions at the other. Custom AI is typically a solution that allows the user to bring in their own libraries or use proprietary ones to build an end-to-end solution. This typically involves a lot of hands-on coding and gives the builder complete control over different parts of the solution. Pre-Built AI is typically in the form of APIs that expose some type of service that can be easily incorporated into your solution. Examples of these include custom vision, text, and language-based AI solutions.

However complex the underlying AI may be, the goal of most applications is to make the end user experience as seamless as possible. This means that AI solutions need to integrate with general applications in the organization's solution stack. Many solutions use Dashboards or reports in the traditional BI space; these interfaces let the user explore the data generated by the AI solution. Conversational Apps usually take the form of an intelligent interface (such as a bot) that interacts with the user conversationally.

The Microsoft cloud – Azure

Microsoft's mission is to empower every person and organization on Earth to achieve more. Microsoft Azure is a cloud platform designed to help customers achieve the intelligent cloud and the intelligent edge. Microsoft's vision is to help customers infuse AI into every application, both in the cloud and on devices of all form factors. With this in mind, Microsoft has developed a wide set of tools that help its customers build AI into their applications with ease.

The following table shows the different tools that can be used to develop end-to-end AI solutions with Azure. The Azure Service column indicates services that are owned and managed by Microsoft (first-party services). The Azure Marketplace column indicates third-party services, or implementations of Microsoft products on Azure virtual machines (Infrastructure as a Service, or IaaS):

As the preceding table suggests, the pace of innovation on Azure makes it difficult to keep up with all of these services and their updates.

One of the challenges that architects, developers, and data scientists face is picking the right Azure components for their solution.

Choosing AI tools on Azure

In this book, we assume that you have knowledge and experience of AI in general. The goal here is not to cover the basics of the various kinds of AI or how to choose the correct algorithm; we assume you understand which algorithms to choose to solve a given business need.

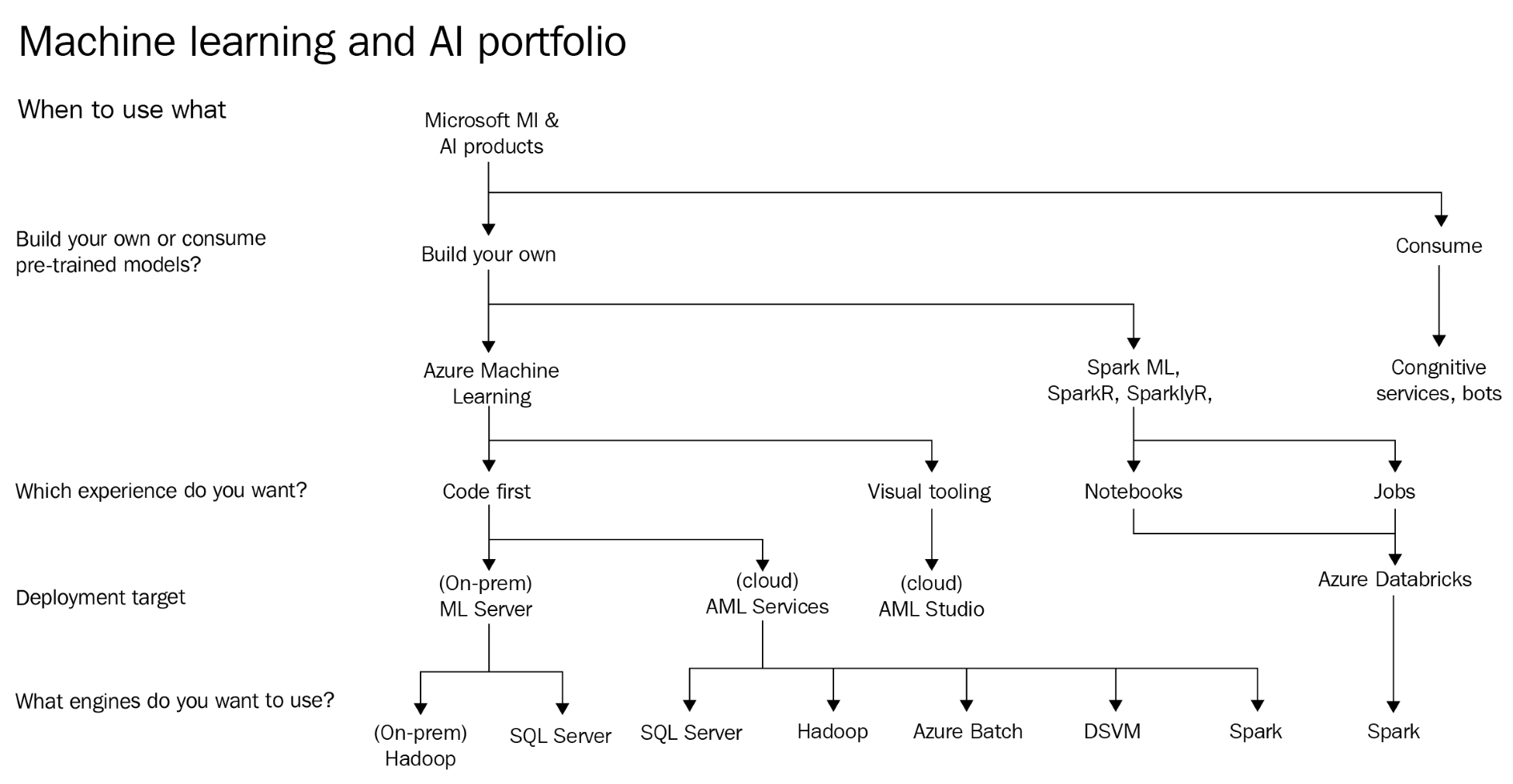

The following diagram shows a decision tree that can help you choose the right Azure AI tools. It is not meant to be comprehensive; just a guide to the correct technology choices. There are a lot of options that cross over, and this was difficult to depict on this diagram. Also keep in mind that an efficient AI solution would leverage multiple tools in combination:

The preceding diagram shows a decision tree for users of Microsoft's AI platform. Starting from the top, the first question is whether you would like to Build your own models or consume pre-trained models. Building your own models involves data scientists, data engineers, and developers at various stages of the process. In some use cases, developers prefer to simply consume pre-trained models.
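To make the branching concrete, here is a minimal Python sketch of the decision logic as this chapter's text describes it. It is a simplified, hypothetical reading rather than a transcription of the diagram: the `Requirements` fields and the `suggest_services` helper are invented for illustration, and routing Spark-heavy, code-first teams to Azure Databricks is our assumption.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Requirements:
    """Answers to the decision-tree questions (hypothetical field names)."""
    build_own_models: bool            # build custom models vs. consume pre-trained ones
    conversational: bool = False      # is this a bot or other conversational app?
    prefers_gui: bool = False         # GUI experience vs. code-first experience
    needs_on_premises: bool = False   # must the solution also run on-premises?
    uses_spark: bool = False          # assumption: team already works in Spark at scale


def suggest_services(req: Requirements) -> list[str]:
    """Map answers to Azure tools, following the order of questions in the text."""
    if not req.build_own_models:
        # Consume pre-trained models; bots pair Bot Framework with Cognitive Services.
        if req.conversational:
            return ["Bot Framework", "Cognitive Services"]
        return ["Cognitive Services"]
    # Second question: what kind of experience does the team want?
    if req.prefers_gui:
        return ["Azure Machine Learning Studio"]
    # Code-first: an on-premises requirement leaves ML Server as the only option.
    if req.needs_on_premises:
        return ["Machine Learning Server"]
    if req.uses_spark:
        return ["Azure Databricks"]
    return ["Azure Machine Learning services"]


print(suggest_services(Requirements(build_own_models=True, needs_on_premises=True)))
# ['Machine Learning Server']
```

Remember that real solutions often combine several of these tools, so treat the single answer as a starting point rather than a verdict.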

Cognitive Services/bots

Developers who would like to consume pre-trained AI models typically use one of Microsoft's Cognitive Services. For those building conversational applications, the recommended path is a combination of Bot Framework and Cognitive Services. We will go into the details in Chapter 3, Cognitive Services, and Chapter 4, Bot Framework, but it is important to understand when to choose Cognitive Services.

Cognitive Services were built to give developers the tools to rapidly build and deploy AI applications. They are pre-trained, customizable AI models exposed via APIs, with accompanying SDKs and web services. Each performs specific tasks and is designed to scale with the load placed on it. They are also designed to comply with security standards and data isolation requirements. At the time of writing, Azure offers five broad types of Cognitive Services:

- Knowledge

- Language

- Search

- Speech

- Vision

Knowledge services are focused on building data-based intelligence into your application. QnA Maker is one such service that helps drive a question-and-answer service with all kinds of structured and semi-structured content. Underneath, the service leverages multiple services in Azure. It abstracts all that complexity from the user and makes it easy to create and manage.
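Cognitive Services are consumed over REST. As a hedged sketch, the following builds (without sending) a request to a published QnA Maker knowledge base's `generateAnswer` endpoint using only the Python standard library. The runtime host, knowledge base ID, and endpoint key are placeholders for values from your own resource, and because Azure has since superseded QnA Maker with newer services, verify the route against current documentation before relying on it.

```python
import json
import urllib.request


def build_generate_answer_request(runtime_host: str, kb_id: str,
                                  endpoint_key: str, question: str,
                                  top: int = 1) -> urllib.request.Request:
    """Build (but do not send) a POST to a QnA Maker knowledge base."""
    url = f"https://{runtime_host}/qnamaker/knowledgebases/{kb_id}/generateAnswer"
    # The body carries the user's question and how many answers to return.
    body = json.dumps({"question": question, "top": top}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={
            # Runtime calls authenticate with the knowledge base's endpoint key.
            "Authorization": f"EndpointKey {endpoint_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

To send it, you would pass the request to `urllib.request.urlopen` and read the `answers` list from the JSON response.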

Language services are focused on building text-based intelligence into your application. The Language Understanding Intelligent Service (LUIS) lets users build applications that understand natural conversation: it uses natural language processing (NLP) to extract the intent and context of what the user said and passes them to the requesting application.
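A LUIS prediction is a plain HTTP GET. The sketch below assumes the v3.0 prediction route and a published app; the endpoint, app ID, and key are placeholders, and because LUIS has since been superseded by newer Azure language services, confirm the route in current documentation. Only URL construction and reading `topIntent` from an already-parsed response are shown.

```python
from urllib.parse import urlencode


def build_luis_prediction_url(endpoint: str, app_id: str, key: str,
                              query: str, slot: str = "production") -> str:
    """Build a LUIS v3.0 prediction URL for a single utterance."""
    path = f"/luis/prediction/v3.0/apps/{app_id}/slots/{slot}/predict"
    # urlencode escapes the utterance (spaces become '+').
    params = urlencode({"subscription-key": key, "query": query})
    return f"{endpoint.rstrip('/')}{path}?{params}"


def top_intent(response: dict) -> str:
    """Pull the highest-scoring intent out of a parsed v3.0 prediction response."""
    return response["prediction"]["topIntent"]
```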

Search services are focused on providing services that integrate very specialized search tools for your application. These services are based on Microsoft's Bing search engine, but can be customized in multiple ways to integrate search into enterprise applications. The Bing Entity Search service is one such API that returns information about entities that Bing determines are relevant to a user's query.
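For illustration, the following sketch builds (without sending) a Bing Entity Search v7 request. The endpoint constant is the historical Cognitive Services host and is an assumption that may no longer be current, since the Bing APIs have since moved and changed; the key is a placeholder.

```python
import urllib.request
from urllib.parse import urlencode

# Historical Cognitive Services host for Bing Entity Search v7 (may have changed).
BING_ENTITY_ENDPOINT = "https://api.cognitive.microsoft.com/bing/v7.0/entities"


def build_entity_search_request(key: str, query: str, market: str = "en-US",
                                endpoint: str = BING_ENTITY_ENDPOINT
                                ) -> urllib.request.Request:
    """Build (but do not send) a GET request for entities relevant to `query`."""
    url = f"{endpoint}?{urlencode({'q': query, 'mkt': market})}"
    # The subscription key travels in a header rather than in the query string.
    return urllib.request.Request(url, headers={"Ocp-Apim-Subscription-Key": key})
```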

Speech services are focused on providing services that allow developers to integrate powerful speech-enabled features into their applications, such as dictation, transcription, and voice command control. The Custom Speech service enables developers to build customized language models and acoustic models tailored to specific types of applications and user profiles.

Vision services provide a variety of intelligent APIs that work on images or videos. For example, the Custom Vision Service can recognize custom classes of images after it has been trained on examples of every class the application is looking for.

Each of these Cognitive Services has limitations in its applicability to different situations. The services also have scalability limits, although they are well designed to handle most enterprise-wide AI solutions. The limits and applicability of each service are well documented, and covering them is outside the scope of this book.

As a developer, once you hit the limitations of Cognitive Services, whether knowingly or not, the best option is to build your own models to meet your business requirements. Building your own AI models involves ingesting data, transforming it, performing feature engineering, training a model, and, eventually, deploying it. This can be an elaborate and time-consuming process, depending on how mature the organization's capabilities are for each task. Picking the right set of tools involves assessing that maturity at each step of the process and choosing services that fit the organization's capabilities. Referring to the preceding diagram, the second question for organizations that want to build their own AI models concerns the kind of experience they would like.
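The steps of ingesting, transforming, feature engineering, training, and deploying can be sketched end to end in plain Python. This is a toy illustration under stated assumptions: the inline CSV is synthetic (y is exactly 2x + 1, plus one incomplete row), the "feature engineering" is just centering x, and the model is ordinary least squares. In practice, each step would map to a dedicated service or library.

```python
import csv
import io
from statistics import mean

# Hypothetical toy data: y = 2 * x + 1, with one row missing its x value.
RAW_CSV = "x,y\n1,3\n2,5\n3,7\n,9\n4,9\n"


def ingest(text):
    """Ingest: read raw CSV text into a list of row dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: drop incomplete rows and convert values to floats."""
    return [(float(r["x"]), float(r["y"])) for r in rows if r["x"] and r["y"]]


def engineer_features(pairs):
    """Feature engineering: center x on its mean, keeping the mean for scoring."""
    x_mean = mean(x for x, _ in pairs)
    return [(x - x_mean, y) for x, y in pairs], x_mean


def train(features):
    """Train: least squares for y = a + b * x_centered."""
    intercept = mean(y for _, y in features)  # valid because x is centered
    slope = sum(x * y for x, y in features) / sum(x * x for x, _ in features)
    return intercept, slope


def deploy(model, x_mean):
    """Deploy: wrap the model and its feature logic into a scoring function."""
    intercept, slope = model
    return lambda x: intercept + slope * (x - x_mean)


features, x_mean = engineer_features(transform(ingest(RAW_CSV)))
predict = deploy(train(features), x_mean)
print(predict(10))  # 21.0
```

Note that `deploy` must reuse the feature logic from training (here, `x_mean`); keeping the two in sync is a large part of what the managed services in this chapter help with.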

Azure Machine Learning Studio

Azure Machine Learning (Azure ML) Studio is a purely web-based GUI tool for building machine learning (ML) models. It is an almost code-free environment that allows users to build end-to-end ML solutions. It has Microsoft Research's proprietary algorithms built in, which make most common ML tasks simple, and it can embed Python or R code to extend its functionality. One of Azure ML Studio's greatest features is the ability to create a web service in a single click. The web service is exposed as a REST endpoint that applications can send data to. In addition, an Excel spreadsheet is created that calls the same web service; it can be used to test the model and to share it easily with end users.
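Applications call the one-click web service with a JSON payload. The sketch below builds (without sending) a request for a Studio (classic) request-response endpoint. The payload shape follows the pattern of the sample code Studio generated for its web services, but the input name `input1`, the column names, the service URL, and the API key are assumptions you would replace with the values shown on your web service's API page.

```python
import json
import urllib.request


def build_studio_request(service_url: str, api_key: str,
                         column_names: list, rows: list) -> urllib.request.Request:
    """Build (but do not send) a scoring request for a Studio (classic) web service."""
    payload = {
        "Inputs": {
            # Assumption: the web service input port is named "input1".
            "input1": {"ColumnNames": column_names, "Values": rows},
        },
        "GlobalParameters": {},
    }
    return urllib.request.Request(
        service_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",  # the web service's API key
        },
        method="POST",
    )
```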

At the time of writing, the primary limitation of Azure ML Studio is the 10 GB limit on an experiment container. This limit will be explained in detail in Chapter 6, Scalable Computing for Data Science; for now, it is sufficient to understand that Azure ML Studio is well suited to training datasets in the 2 GB to 5 GB range. There are also limits on the R and Python code you can include in ML Studio and on its performance, which will be discussed in detail later.

ML Server

For a code-first experience, there are multiple tools available in the Microsoft portfolio. If an organization needs to deploy on-premises (in addition to the cloud), the only option available is Machine Learning Server (ML Server). ML Server is an enterprise platform that supports both R and Python applications across every activity in the end-to-end ML process. ML Server was previously known as R Server and came about through Microsoft's acquisition of Revolution Analytics. Python support was added later to accommodate a wider range of user preferences.

In ML Server, users can use any open source library as part of their solution. The challenge with much open source tooling is that it takes considerable additional effort to make it scale. ML Server's RevoScaleR and revoscalepy libraries provide that scalability for large datasets by efficiently managing data on disk and in memory. ML Server achieves scale in two ways: by scaling itself, or by pushing work to the compute engine it connects to. ML Server itself can only scale up; that is, you run it on a single server with more or faster CPUs, memory, and storage. It does not scale out by adding nodes of ML Server. To scale further, ML Server leverages the compute of the data engines it interacts with, by shifting the compute context to a distributed engine such as Spark or Hadoop. The compute context can also be shifted to SQL Server, for both R and Python, so that algorithms run natively in SQL Server without moving the data to a separate compute platform.
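The idea behind processing data that does not fit in memory can be illustrated without ML Server itself. The following sketch is not the RevoScaleR or revoscalepy API: it reads a CSV one chunk at a time, computes partial statistics per chunk, and merges them with the parallel variance update of Chan et al. The same merge works whether the partials come from sequential chunks on one scaled-up machine or from workers in a distributed compute context. The file and column names in the usage comment are hypothetical.

```python
import csv
import io
from itertools import islice


def chunk_stats(values):
    """Return (count, mean, M2) for one chunk; M2 is the sum of squared deviations."""
    n = len(values)
    m = sum(values) / n
    return n, m, sum((v - m) ** 2 for v in values)


def combine(a, b):
    """Merge two partial results (Chan et al.'s parallel update)."""
    n_a, mean_a, m2_a = a
    n_b, mean_b, m2_b = b
    n = n_a + n_b
    delta = mean_b - mean_a
    return n, mean_a + delta * n_b / n, m2_a + m2_b + delta ** 2 * n_a * n_b / n


def streaming_mean_var(lines, column, chunk_size=1000):
    """Mean and population variance of `column`, holding one chunk in memory at a time."""
    reader = csv.DictReader(lines)
    total = (0, 0.0, 0.0)  # identity element for combine()
    while True:
        chunk = [float(row[column]) for row in islice(reader, chunk_size)]
        if not chunk:
            break
        total = combine(total, chunk_stats(chunk))
    n, m, m2 = total
    if n == 0:
        raise ValueError("no rows to summarize")
    return m, m2 / n


# With a real file: open("big.csv", newline="") instead of StringIO (hypothetical file).
sample = io.StringIO("amount\n1\n2\n3\n4\n")
print(streaming_mean_var(sample, "amount", chunk_size=3))  # (2.5, 1.25)
```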

The challenges with ML Server are mostly associated with the limitations surrounding R itself, since Python functionality is relatively new. ML Server needs to be fully managed by the user, so it adds an additional layer of management. The lack of scale-out features also poses a challenge in some situations.

Azure ML Services

Azure ML Services is a relatively new service on Azure that enhances productivity in the process of building AI solutions. Azure ML Services has different components. On the user's end, Azure ML Workbench is a tool that allows users to pull in data, transform it, build models, and run them against various kinds of compute. Workbench is a tool that users run on their local machines and connect to Azure ML services. Azure ML Services itself runs on Azure and consists of experimentation and model management for ML. The experimentation service keeps track of model testing, performance, and any other metrics you would like to track while building a model. The model management service helps manage the deployment of models and manages the overall life cycle of multiple models built by individual users or large teams.
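To show what an experimentation service keeps track of, here is a hypothetical in-memory stand-in written in plain Python. It is not the Azure ML SDK: the `ExperimentTracker` and `Run` names are invented, and the accuracy values in the usage lines are placeholders. It records each run's parameters and logged metrics and then picks the best run for a chosen metric.

```python
from dataclasses import dataclass, field


@dataclass
class Run:
    """One experiment run: the parameters used and the metrics logged."""
    run_id: int
    params: dict
    metrics: dict = field(default_factory=dict)


class ExperimentTracker:
    """Hypothetical stand-in for an experimentation service."""

    def __init__(self, name):
        self.name = name
        self.runs = []

    def start_run(self, **params):
        """Register a new run with its hyperparameters."""
        run = Run(run_id=len(self.runs) + 1, params=params)
        self.runs.append(run)
        return run

    def log(self, run, key, value):
        """Record a metric (accuracy, loss, and so on) against a run."""
        run.metrics[key] = value

    def best_run(self, metric, higher_is_better=True):
        """Return the run with the best value for `metric` among runs that logged it."""
        scored = [r for r in self.runs if metric in r.metrics]
        pick = max if higher_is_better else min
        return pick(scored, key=lambda r: r.metrics[metric])


tracker = ExperimentTracker("churn-model")  # hypothetical experiment name
for depth, accuracy in [(3, 0.81), (5, 0.86)]:  # placeholder metric values
    run = tracker.start_run(max_depth=depth)
    tracker.log(run, "accuracy", accuracy)
print(tracker.best_run("accuracy").params)  # {'max_depth': 5}
```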

When leveraging Azure ML Services, there are multiple endpoints that can act as engines for the services. At the time of writing, only Python-based endpoints are supported. SQL Server, with the introduction of built-in Python services, can act as an endpoint. This is beneficial, especially if the user has most of the data in SQL tables and wants to minimize data movement.

If you have leveraged Spark libraries for ML at scale on ML Services, then you can deploy to Spark-based solutions on Azure. Currently, these can be either Spark on HDInsight, or any other native implementation of Apache Spark (Cloudera, Hortonworks, and so on).

If the user has leveraged other Hadoop-based libraries to build models with ML Services, those models can be deployed to HDInsight or any of the Apache Hadoop implementations available on Azure.

Azure Batch is a service that provides large-scale, on-demand compute for applications that require such resources on an ad hoc or scheduled basis. The typical workflow for this use case involves the creation of a VM cluster, followed by the submission of jobs to the cluster. After the job is completed, the cluster is destroyed, and users do not pay for any compute afterward.
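The create, submit, and destroy workflow described above maps naturally onto a context manager, which guarantees teardown even if a job fails. The sketch below is a hypothetical stand-in, not the Azure Batch SDK: `HypotheticalPool` runs tasks locally and only models the lifecycle, so that you stop paying for compute as soon as the work is done.

```python
from contextlib import contextmanager


class HypotheticalPool:
    """Stand-in for a pool of VMs; runs tasks locally to model the lifecycle."""

    def __init__(self, pool_id, node_count):
        self.pool_id = pool_id
        self.node_count = node_count
        self.active = True
        self.completed = []

    def submit(self, task_name, func, *args):
        """Run one task on the pool and record that it completed."""
        if not self.active:
            raise RuntimeError("pool has been deleted")
        result = func(*args)
        self.completed.append(task_name)
        return result

    def delete(self):
        """Tear down the pool; no further compute is billed."""
        self.active = False


@contextmanager
def ephemeral_pool(pool_id, node_count):
    """Create a pool, hand it to the caller, and always delete it afterwards."""
    pool = HypotheticalPool(pool_id, node_count)
    try:
        yield pool
    finally:
        pool.delete()  # runs even if a submitted job raises


with ephemeral_pool("nightly-scoring", node_count=4) as pool:
    total = pool.submit("sum-task", sum, [1, 2, 3])
print(total, pool.active)  # 6 False
```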

The Data Science Virtual Machine (DSVM) is a highly customized VM template built on either Linux or Windows. It comes pre-installed with a huge variety of curated data science tools and libraries. All the tools and libraries are configured to work straight out of the box with minimal effort. The DSVM has multiple applications, which we will cover in Chapter 7, Machine Learning Server, including utilization as a base image VM for Azure Batch.

One of the most scalable targets for running models built with Azure ML Services is containers, using Docker for packaging and Kubernetes for orchestration. Azure Kubernetes Service (AKS) makes this easier. Azure ML Services creates a Docker image that operationalizes an ML model: the model is deployed as a containerized, Docker-based web service, which can use frameworks such as TensorFlow and Spark. Applications access this web service as a REST API, and the service can be scaled up and down using the scaling features of Kubernetes. More details on this topic will be covered in Chapter 10, Building Deep Learning Solutions.
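The code inside such a container is usually a small scoring script. The sketch below follows the `init()` and `run()` entry-point convention used by Azure ML scoring scripts: `init()` loads the model once when the container starts, and `run()` handles each request. The JSON shape `{"data": [...]}` and the trivial linear "model" are assumptions standing in for a real serialized model packaged into the image.

```python
import json

model = None  # populated once per container by init()


def init():
    """Called once when the container starts: load the model into memory."""
    global model
    # Assumption: a hard-coded linear model stands in for a deserialized one.
    model = {"intercept": 1.0, "slope": 2.0}


def run(raw_data):
    """Called per request: parse JSON input, score it, return serializable output."""
    try:
        inputs = json.loads(raw_data)["data"]
        predictions = [model["intercept"] + model["slope"] * x for x in inputs]
        return {"predictions": predictions}
    except (KeyError, TypeError, ValueError) as err:
        # Malformed requests get an error payload instead of crashing the service.
        return {"error": str(err)}


init()
print(run(json.dumps({"data": [1, 2.5]})))  # {'predictions': [3.0, 6.0]}
```

Because the script holds no per-request state, Kubernetes can run as many identical replicas as the load requires.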

The main limitation of Azure ML Services is that it currently supports only Python. The platform itself has gone through several changes, and its heavy reliance on the command-line interface makes it less user-friendly than some other tools.

Azure Databricks

Azure Databricks is one of the newest additions to the tools that can be used to build custom AI solutions on Azure. It is based on Apache Spark but is optimized for use on the Azure platform. The Spark engine can be accessed through APIs in Scala, Python, R, SQL, or Java. To leverage the scalability of Spark, users need to use Spark libraries when working with data objects and their transformations. Azure Databricks runs these scalable libraries on highly elastic Spark clusters that are managed by the runtime. Databricks comes with enterprise-grade security, compliance, and collaboration features that distinguish it from open source Apache Spark. The ability to schedule and orchestrate jobs is also valuable, especially when automating and streamlining AI workflows. Spark also provides a unified platform for different kinds of analytics: interactive querying, ML, stream processing, and graph computation.

The challenge with Azure Databricks is that it is relatively new in Azure and does not integrate directly with some services. Another challenge is that users who are new to Spark would have to refactor their code to incorporate Spark libraries, without which they cannot leverage the benefits of the highly distributed environment available.

Summary

In summary, this chapter gave a brief overview of the different services available on Azure for building AI solutions. In the fast-moving cloud world, it is hard to find a single solution that delivers all the desired outcomes of an AI project. The goal of this book is to guide users in picking the right tool for the right task. Mature organizations realize that being agile and flexible is key to innovating in the cloud. In the next chapter, we will look at the stages of the Team Data Science Process (TDSP) and its tools.
