Introduction to Google Cloud Platform

This chapter begins with a brief introduction to cloud computing. We then introduce the Google Cloud Platform (GCP) with an overview of its history, and look into some of its concepts, tools, and services. We also map the products of the Amazon Web Services (AWS) and Microsoft Azure public clouds to their GCP equivalents. Lastly, we set up a GCP account using the free tier, which gives you a 12-month, $300 free trial of all GCP products.

In this chapter, we will cover the following:

- Introduction to cloud computing

- Introduction to GCP

- GCP services

- Data centers and regions

- AWS and Azure in comparison to GCP

- Exploring GCP

Introduction to cloud computing

In the simplest terms, cloud computing is the practice of delivering computing services such as servers, storage, networking, databases, and applications over the internet. In such a delivery model, the consumer, typically a business or an enterprise, only pays for the resources they use without having to pay for the capital investment cost of building and maintaining the data centers.

There are both financial and technological benefits for adopting a cloud computing approach. Companies transform their capital costs to operational costs and are able to pay for what they use rather than pay for idle infrastructure. Cloud computing also eliminates the cost of purchasing and maintaining expensive hardware and data center space. The pay-as-you-go model allows for increasing or decreasing resource consumption without having to pre-purchase hardware.
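To make the capital-versus-operational cost argument concrete, here is a minimal Python sketch. All figures are hypothetical and chosen only for illustration; they are not GCP prices.

```python
def upfront_cost(hardware_price: float, monthly_upkeep: float, months: int) -> float:
    """Total cost of buying hardware and maintaining it for `months` months."""
    return hardware_price + monthly_upkeep * months


def pay_as_you_go_cost(hourly_rate: float, hours_per_month: float, months: int) -> float:
    """Total cost when you are billed only for the hours actually used."""
    return hourly_rate * hours_per_month * months


# Hypothetical numbers: a server bought for 6,000 with 100 per month of upkeep,
# versus a rented machine at 0.25 per hour that runs 200 hours a month.
owned = upfront_cost(6000, 100, 12)
rented = pay_as_you_go_cost(0.25, 200, 12)
print(f"owned: {owned:.2f}, rented: {rented:.2f}")
```

The gap narrows as utilization rises, which is why a machine that sits idle most of the month is the clearest case for the pay-as-you-go model.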

Companies can also focus on rapid innovation without having to worry about the backend infrastructure's ability to support it. Cloud companies are rapidly introducing new services on high-performance hardware platforms that can be consumed on demand by end users. Typically, companies either migrate entirely to the cloud or use a hybrid model, connecting their on-premises infrastructure to a cloud provider and migrating workloads as needed.

Some good initial use cases for the cloud include development and testing environments, data archiving, data mining, and disaster recovery. All of these help reduce capital costs, and the speed of deployment and consumption makes cloud computing an ideal platform for them.

Most cloud computing services fall into three broad categories: Infrastructure as a Service (IaaS), Platform as a Service (PaaS), and Software as a Service (SaaS).

With IaaS, you rent the IT infrastructure, which includes servers, virtual machines, networks, storage, and operating systems, on a pay-as-you-go basis. With PaaS, you are given access to an on-demand environment that allows you to quickly develop, test, and deploy your application without having to worry about the underlying IT infrastructure. PaaS is ideal for developers who only care about quickly deploying their application and do not want to worry about the underlying servers, compute, or storage.

SaaS is a way of delivering software applications over the internet on a subscription model. A good example of SaaS is your Gmail email account. You are subscribed to your email by signing up for it and use the email software that is written, maintained, secured, and managed by Google.

Introducing GCP

GCP's initial release was on October 6, 2011. Since then, it has become one of the most used public cloud platforms and continues to grow. GCP offers a suite of cloud services that run on the same infrastructure that Google uses to host its end-user products, such as Google Search, Gmail, and YouTube. This matters because Google not only continues to innovate for its customers but also benefits from its own investment in the platform. Google began its cloud operations by launching Google App Engine back in 2008. Since then, multiple other services have been introduced, and the list keeps growing.

GCP services

While GCP services are many, we can broadly group them into four categories: compute services, storage services, networking services, and big data services. Apart from these, there are other cloud services such as identity and security management, management tools, data transfer, and machine learning.

Compute services

GCP offers you a wide variety of computing services that allow you complete flexibility as to how you want to manage your computing assets. Depending on your application and its requirements, you can choose to deploy a traditional custom virtual machine or use Google's App Engine to run the application:

- Compute Engine: Allows you to deploy and run high-performance virtual machines in Google data centers. You can deploy either a pre-configured virtual machine or customize the resources as per your requirements.

- App Engine: Allows you to deploy your application on a fully managed platform that is completely supported by Google. You simply deploy your application and have it running without having to worry about the underlying infrastructure.

- Kubernetes Engine: This service allows you to run containers on GCP. Your containerized applications can be deployed using the Kubernetes Engine service without you having to manage the underlying cluster yourself. Google's Site Reliability Engineers (SREs) constantly monitor the cluster, which relieves you of that responsibility.

- Cloud Functions: This service allows you to run code in response to events in a true serverless model. You decide which events trigger your code, and you are billed only when your code runs, making it very cost effective.

Storage services

The following are the types of storage services:

- Cloud Storage: An object store that can be used for a variety of use cases and is accessible via a REST API. This offering allows geo-redundancy with its multi-regional capability and can be used for anything from high-performance storage to archival storage.

- Cloud SQL: A fully managed (replicated and backed-up) database service that allows you to easily get started with your MySQL and PostgreSQL databases in the cloud. The offering comes with a standard API and built-in migration tools to move your current databases to the cloud.

- Cloud Bigtable: Cloud Bigtable is a database for your large-scale NoSQL requirements. The service can scale to hundreds of petabytes, which makes it suitable for enterprise data analysis. Bigtable also integrates easily with other big data tools such as Hadoop.

- Cloud Spanner: Cloud Spanner is a relational database service that aims to provide a highly scalable and strongly consistent database for the cloud. It is a fully managed service that offers transactional consistency and synchronous replication of databases across multiple geographies.

- Cloud Datastore: Cloud Datastore is a separate service from Cloud Bigtable that is suitable for your key-value pair NoSQL database requirements. The service comes with other features such as sharding and replication.

- Persistent Disk: Persistent Disk is persistent, high-performance block storage that can be attached to your Compute Engine instances or Kubernetes Engine clusters. The service allows you to resize storage without any downtime and is offered in both HDD and SSD formats. You can also mount one disk on multiple machine instances, allowing multi-reader capability.

Networking services

These are the networking services:

- Virtual Private Cloud (VPC): A VPC allows you to connect multiple GCP resources together or create internal isolated resources that can be managed easily. You can also deploy firewalls, Virtual Private Networks (VPNs), routes, and custom IP ranges.

- Cloud Load Balancing: This service allows you to distribute your incoming traffic across multiple Compute Engine instances. Cloud Load Balancing also supports autoscaling and can scale your backend instances depending on the incoming traffic load.

- Cloud CDN: Google's content delivery network allows you to distribute your content for lower latency and faster access. Google has over 90 edge points spread across multiple continents, which also helps you decrease your serving costs.

- Cloud Interconnect: This service allows you to directly connect your on-premises data center to Google's network. You can either peer with Google or interconnect, depending on your bandwidth requirements and peering capabilities.

- Cloud DNS: This is Google's highly available global DNS network and comes with an API to allow management of records and zones.

Big data

The following are the big data services:

- BigQuery: BigQuery is an enterprise data warehouse that allows you to store and query massive datasets by enabling fast SQL queries using Google's underlying infrastructure.

- Cloud Dataflow: A fully managed service for both batch and real-time stream data processing. The service also integrates with Stackdriver, Google's unified logging and monitoring solution, letting you monitor and troubleshoot issues as they happen.

- Cloud Dataproc: Cloud Dataproc is a fully managed cloud service for running Apache Spark and Apache Hadoop clusters.

- Cloud Datalab: A powerful tool that allows you to explore and visualize large datasets.

- Cloud Dataprep: A service that helps with structured and unstructured data analysis by letting you visually explore and clean the data.

- Cloud Pub/Sub: A service built for stream analytics that allows you to publish and subscribe to data streams for big data analysis.

- Google Genomics: A service that allows you to query the genomic information of large research projects.

- Google Data Studio: Allows you to turn your data into informative dashboards.

We will look at all services in greater detail in the following chapters.

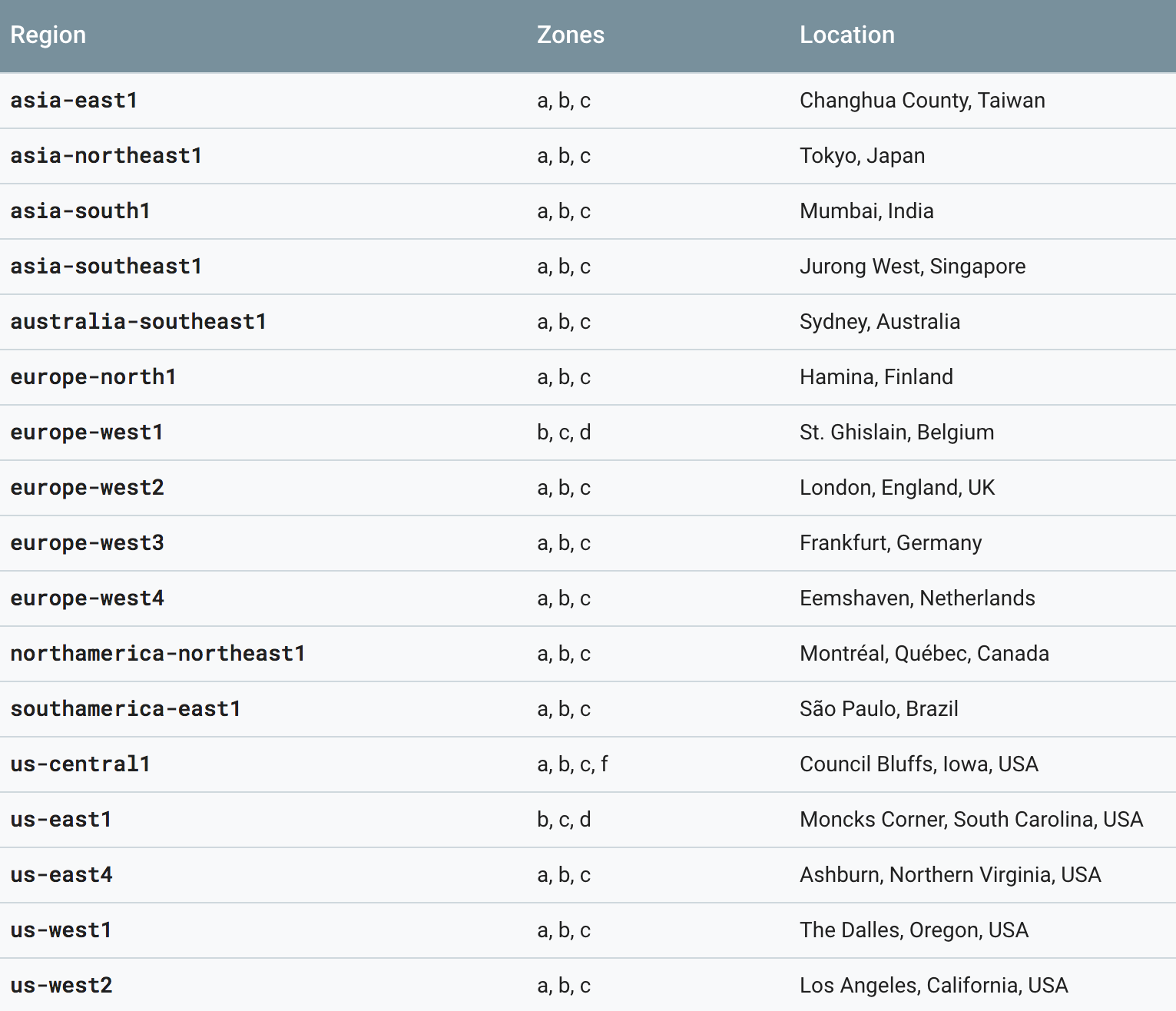

Data centers and regions

GCP services are located across North and South America, Europe, Asia, and Australia. These locations are further divided into regions and zones. A region is an independent geographic area that consists of one or more zones. In total, Google has about 17 regions, 52 zones, and over 100 points of presence (a point of presence is a local access point for an ISP). Each zone is identified by a letter appended to the region name; for example, zone a in the us-central1 region is named us-central1-a.
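The naming convention can be illustrated with a small Python sketch that splits a zone name into its region and zone letter. The `Zone` type and `parse_zone` helper are hypothetical names introduced here for illustration; they are not part of any Google library.

```python
from typing import NamedTuple


class Zone(NamedTuple):
    region: str  # for example "us-central1"
    letter: str  # for example "a"


def parse_zone(zone_name: str) -> Zone:
    """Split a zone name such as 'us-central1-a' at its last hyphen."""
    region, separator, letter = zone_name.rpartition("-")
    if not separator or not region or len(letter) != 1 or not letter.isalpha():
        raise ValueError(f"not a zone name: {zone_name!r}")
    return Zone(region, letter)


zone = parse_zone("us-central1-a")
print(zone.region, zone.letter)
```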

When you deploy a cloud resource, it is deployed in a specific region and in a specific zone within that region. Any resource deployed in a single zone is not redundant: if the zone fails, so does the resource. If you need fault tolerance and high availability, you must deploy the resource in multiple zones within that region to protect against unexpected failures. A disaster recovery plan is also needed to protect your entire application against a regional failure.
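As a rough sketch of what deploying in multiple zones means in practice, the following Python snippet assigns instances to zones in round-robin order so that no single zone holds every copy. The instance names and the `spread_across_zones` helper are hypothetical; real placement would be done through the console, gcloud, or a managed instance group.

```python
from itertools import cycle
from typing import Dict, List


def spread_across_zones(instance_names: List[str], zones: List[str]) -> Dict[str, str]:
    """Assign each instance to a zone, cycling through the zones in order."""
    if not zones:
        raise ValueError("at least one zone is required")
    return dict(zip(instance_names, cycle(zones)))


placement = spread_across_zones(
    ["web-1", "web-2", "web-3"],
    ["us-central1-a", "us-central1-b"],
)
for name, zone in placement.items():
    print(f"{name} -> {zone}")
```

With this placement, the failure of us-central1-b takes down only one of the three instances.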

All regions are expected to have a minimum of three zones.

The roundtrip latency of networks between zones within a region is less than 5 ms.

| Category | Regions |
| --- | --- |
| Current regions | Oregon, Los Angeles, Iowa, South Carolina, North Virginia, Montreal, São Paulo, Netherlands, London, Belgium, Frankfurt, Mumbai, Finland, Singapore, Sydney, Taiwan, Tokyo |
| Future regions | Hong Kong, Osaka, Zurich |

Relating AWS and Azure to GCP

If you are familiar with Amazon's AWS or Microsoft's Azure, then this table will help you relate their associated services to what GCP has to offer. Only a few services are shown in the table:

| Amazon Web Services | Microsoft Azure | Google Cloud Platform |
| --- | --- | --- |
| Amazon EC2 | Azure Virtual Machines | Google Compute Engine |
| AWS Elastic Beanstalk | Azure App Service | Google App Engine |
| Amazon EC2 Container Service | Azure Container Service | Google Kubernetes Engine |
| Amazon DynamoDB | Azure Cosmos DB | Google Cloud Bigtable |
| Amazon Redshift | Azure SQL Data Warehouse | Google BigQuery |
| AWS Lambda | Azure Functions | Google Cloud Functions |
| Amazon S3 | Azure Blob Storage | Google Cloud Storage |
| AWS Direct Connect | Azure ExpressRoute | Google Cloud Interconnect |
| Amazon SNS | Azure Service Bus | Google Cloud Pub/Sub |
| Amazon CloudWatch | Application Insights | Stackdriver Monitoring |
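If you find yourself translating service names often, a mapping like this is easy to keep in code. The sketch below holds three rows from the comparison and looks up the GCP counterpart of an AWS or Azure service; the `gcp_equivalent` helper is a hypothetical name used for illustration.

```python
from typing import Optional

# Three rows taken from the comparison of AWS, Azure, and GCP services.
EQUIVALENTS = [
    {"aws": "Amazon EC2", "azure": "Azure Virtual Machines", "gcp": "Google Compute Engine"},
    {"aws": "Amazon DynamoDB", "azure": "Azure Cosmos DB", "gcp": "Google Cloud Bigtable"},
    {"aws": "AWS Direct Connect", "azure": "Azure ExpressRoute", "gcp": "Google Cloud Interconnect"},
]


def gcp_equivalent(service_name: str) -> Optional[str]:
    """Return the GCP service matching an AWS or Azure service name, if known."""
    wanted = service_name.strip().lower()
    for row in EQUIVALENTS:
        if wanted in (row["aws"].lower(), row["azure"].lower()):
            return row["gcp"]
    return None


print(gcp_equivalent("Azure ExpressRoute"))
```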

Exploring GCP

Let's dive a little deeper into GCP by creating an account and getting familiar with the console and command-line interface. There are three ways to access GCP: through the console, through the gcloud command-line tool, and through the Google Cloud SDK client libraries. But before that, we need to understand the concept of projects.

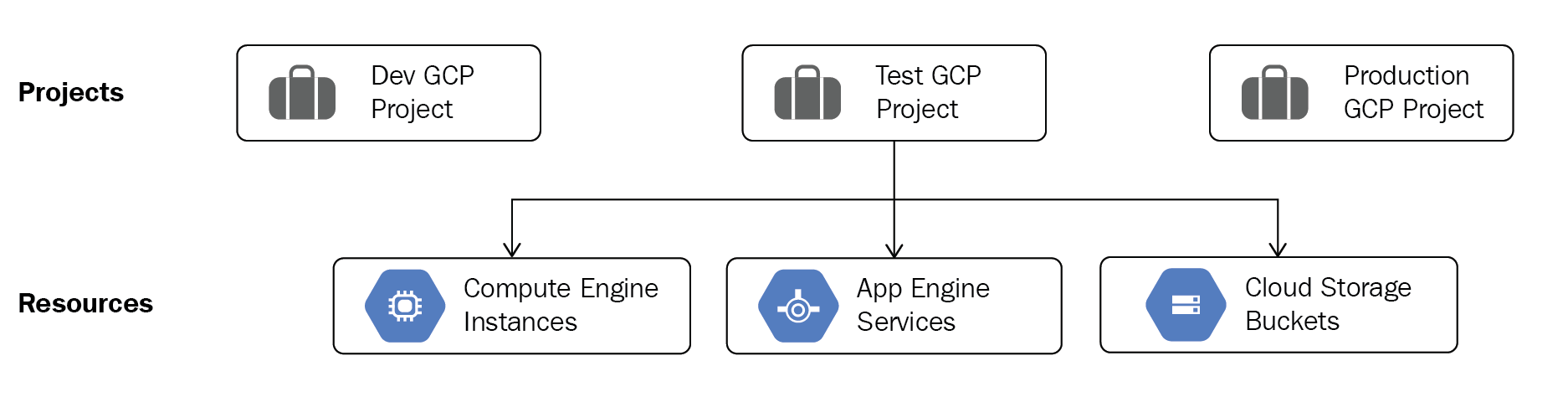

In GCP, any cloud resources that you create must belong to a project. A project is basically an organizing entity for any cloud resource that you wish to deploy. All resources deployed within a single project can communicate easily with each other; for example, two Compute Engine virtual machines within a project can communicate with each other without having to go through a gateway. This, however, is subject to region and zone limitations. It is important to note that resources in one project can talk to resources in another project only through an external network connection.

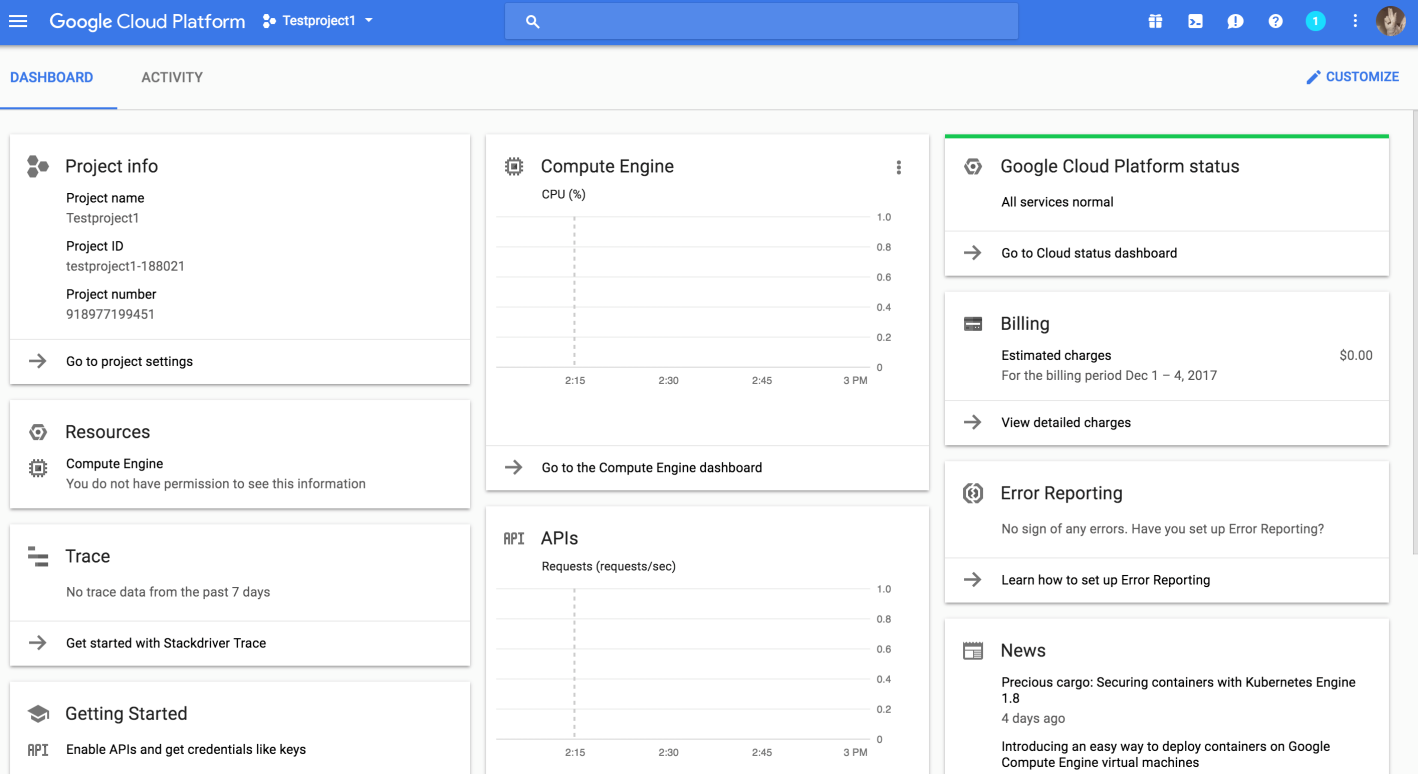

Each project has a project name, a project ID, and a project number. The project ID has to be unique across the cloud platform (or Google can generate an ID for you). Remember that even after a project has been deleted, its ID cannot be reused:

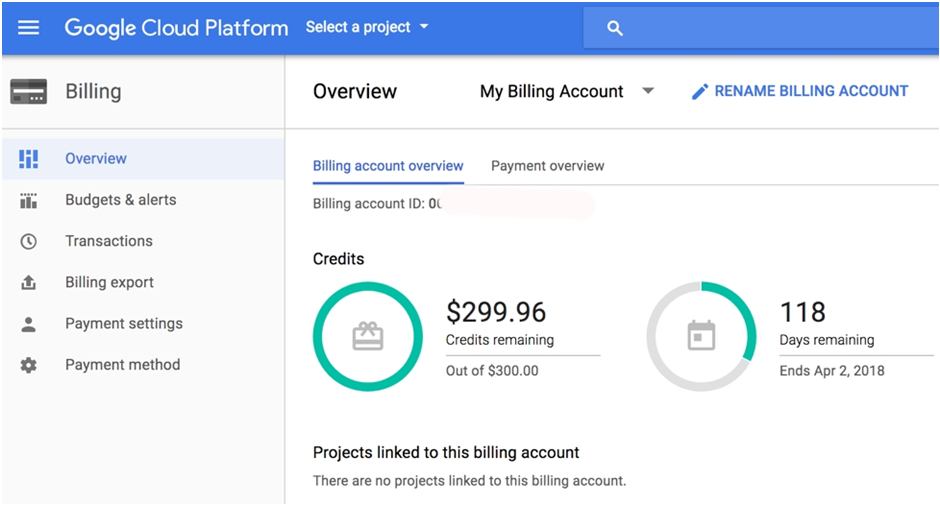
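Before proposing a custom project ID, it can help to check its format locally. The sketch below assumes the format rules as we understand them from Google's documentation (6 to 30 characters; lowercase letters, digits, and hyphens; starting with a letter; not ending with a hyphen). These rules are not stated in this chapter, so treat them as an assumption and confirm against the current documentation. A local check also cannot tell you whether the ID is globally unique; only GCP can.

```python
import re

# Assumed format: starts with a lowercase letter, ends with a letter or digit,
# 6 to 30 characters in total, using only lowercase letters, digits, and hyphens.
PROJECT_ID_PATTERN = re.compile(r"[a-z][a-z0-9-]{4,28}[a-z0-9]")


def looks_like_project_id(candidate: str) -> bool:
    """Return True if the candidate matches the assumed project ID format."""
    return PROJECT_ID_PATTERN.fullmatch(candidate) is not None


for candidate in ["my-first-project-123", "My_Project", "short"]:
    print(candidate, looks_like_project_id(candidate))
```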

GCP offers a free trial that provides you with $300 of credit towards any GCP product. Your trial lasts for 12 months and expires automatically after that. If you exceed your free $300 credit, your services will be turned off but you will not be charged or billed, making this a safe way to explore and learn more about GCP.
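The two limits of the trial, the credit and the time window, can be expressed as a short Python sketch. It approximates 12 months as 365 days, which is an assumption made for simplicity; the `trial_status` helper and its return strings are hypothetical.

```python
from datetime import date, timedelta

TRIAL_CREDIT_USD = 300.0
TRIAL_LENGTH_DAYS = 365  # assumption: 12 months approximated as 365 days


def trial_status(start: date, today: date, spent_usd: float) -> str:
    """Classify a trial as 'expired', 'credit exhausted', or 'active'."""
    if today >= start + timedelta(days=TRIAL_LENGTH_DAYS):
        return "expired"
    if spent_usd >= TRIAL_CREDIT_USD:
        return "credit exhausted"
    return "active"


print(trial_status(date(2018, 1, 1), date(2018, 6, 1), spent_usd=120.0))
```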

To get started:

- Go to https://cloud.google.com and click on TRY IT FREE:

- Once you create an account and log in, agree to the terms and conditions and fill out your details along with a valid credit card number.

- Once logged in you will see a Billing Overview:

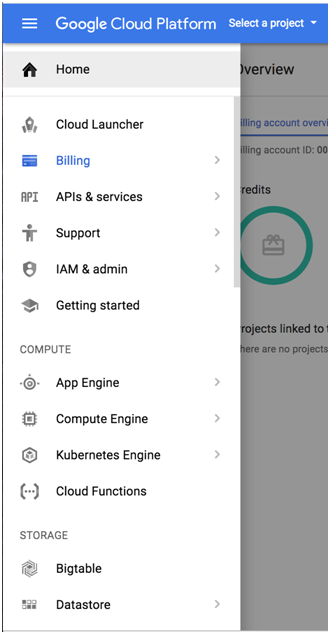

Let's look at how to access different GCP services using the console:

- Click on the menu on the left to drop down the list of services. Feel free to scroll down this list to explore:

- On the right, let's look at another way of accessing your GCP resources: the Cloud Shell tool, which allows you to manage your resources from the command line in any browser. The Cloud Shell icon on the top right activates your Google Cloud Shell. This opens a new frame at the bottom of the browser and displays a prompt. It may take a few seconds for the shell session to be established.

Alternatively, if you prefer using your own terminal, you can download and install the Google Cloud SDK, which includes the gcloud command-line tool.

Creating your first project

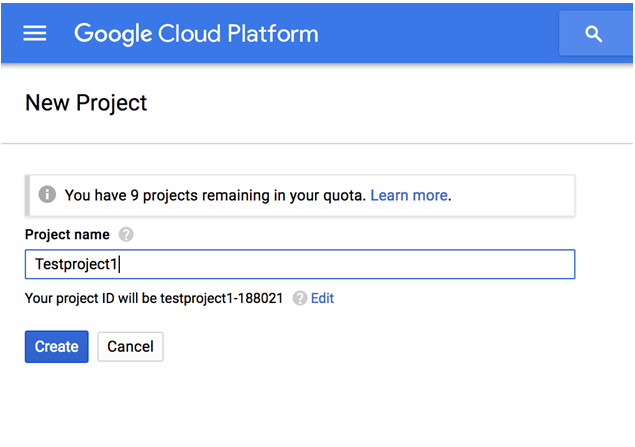

We can get started at deploying services by first creating a project:

- In the preceding illustration, click Create to create your first project:

- You can pick any project name and GCP auto-generates a project ID for you. If you need to customize the project ID in accordance with your organization's standards, click Edit. Remember that this project ID needs to be unique.

- Click Create when done.

- Once the project is created, your DASHBOARD will show you all info related to your project and its associated resources:

- On the left, note the Project name, Project ID, and the Project number.

- Click on Project settings. You will see that you are able to change the Project name but cannot change the Project ID or the Project number. Project settings can also be accessed by going to IAM & admin | Settings:

You can even shut down a project by clicking on the Shut Down option. This stops all traffic and billing on the project and shuts down all resources within it. You will have 30 days to restore such a project before it is deleted. You also have an option to migrate a project. This comes in handy if you are part of an organization and want to move a project over to the organization unit. You will be able to do this if you are a G Suite or a cloud premium customer with a support package. Ideally, this keeps projects and permissions at an organization level, rather than at an individual level.

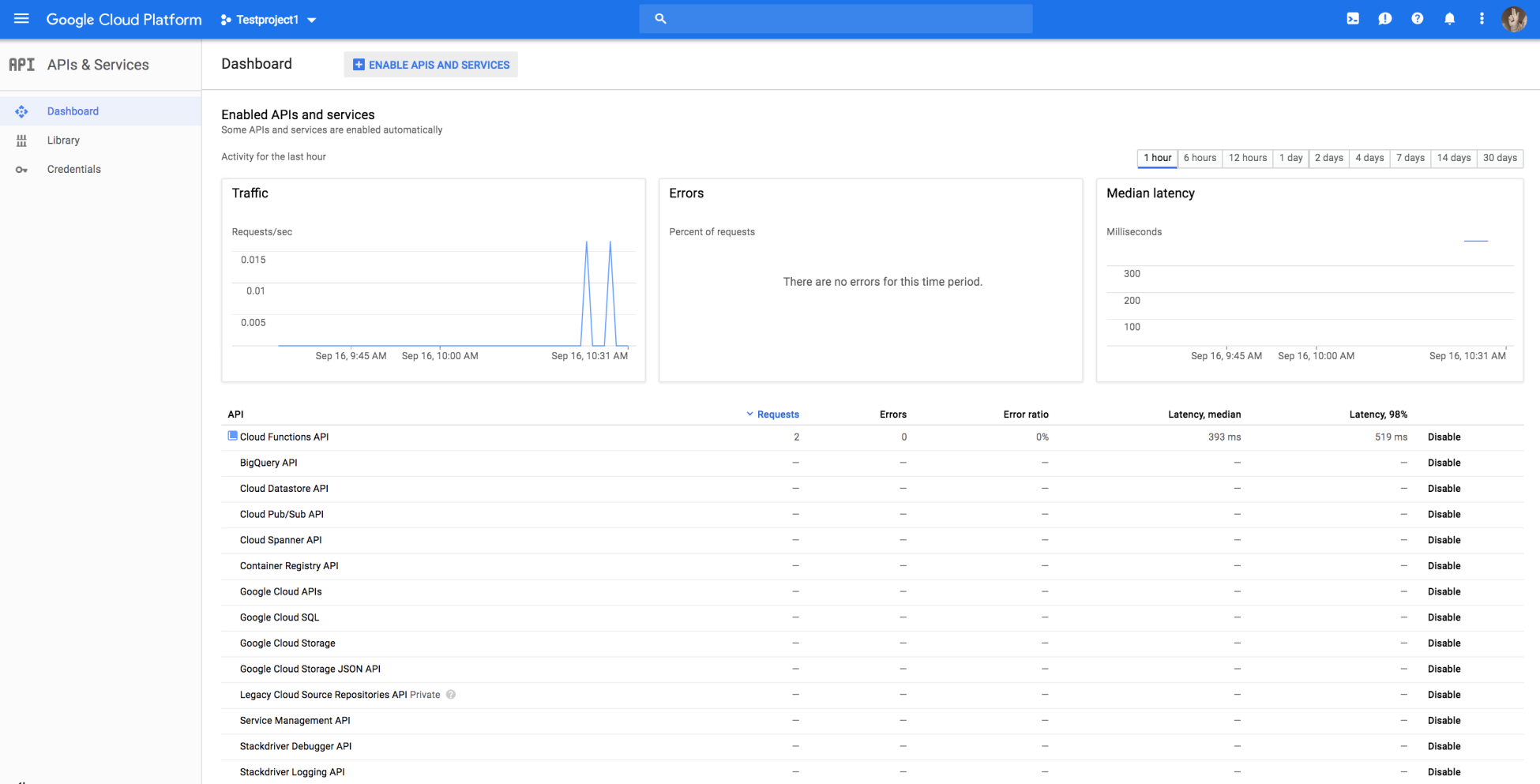
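The 30-day restore window is simple date arithmetic, sketched below in Python. The helper names are hypothetical, and the sketch counts whole calendar days, which is an assumption; the platform may measure the window to the exact time of shutdown.

```python
from datetime import date, timedelta

RESTORE_WINDOW_DAYS = 30


def restore_deadline(shutdown_day: date) -> date:
    """Last day on which a shut-down project can still be restored."""
    return shutdown_day + timedelta(days=RESTORE_WINDOW_DAYS)


def can_restore(shutdown_day: date, today: date) -> bool:
    """True while today is on or before the restore deadline."""
    return today <= restore_deadline(shutdown_day)


print(restore_deadline(date(2018, 3, 1)))
```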

Let's look at enabling APIs for your project so that its services can be accessed programmatically. APIs are automatically enabled whenever you try to launch a service using the console. For example, if you attempt to deploy a Google Compute Engine virtual machine, the initialization of that service will enable the Google Cloud Compute API:

- Go to Menu | APIs & Services | Dashboard:

All APIs associated with services are disabled by default and you can enable specific ones as required by your application.

- Click on ENABLE APIS AND SERVICES and search for the Google Cloud Compute API. Click Enable. You can also click on Try this API to test the API through the browser console.

Once the API is enabled, you will see all the info related to this API in the dashboard. You can even choose to disable the API if needed:
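The same enable and disable actions are available from a terminal through the gcloud tool, which is introduced in the next section. The Python sketch below only builds the commands and prints one of them; it does not run gcloud unless you call `run` yourself. The project ID shown is a hypothetical placeholder, and `compute.googleapis.com` is the service name of the Compute Engine API.

```python
import subprocess
from typing import List


def enable_api_command(service: str, project_id: str) -> List[str]:
    """Build the gcloud command that enables an API on a project."""
    return ["gcloud", "services", "enable", service, "--project", project_id]


def disable_api_command(service: str, project_id: str) -> List[str]:
    """Build the gcloud command that disables an API on a project."""
    return ["gcloud", "services", "disable", service, "--project", project_id]


def run(command: List[str]) -> str:
    """Run a command and return its standard output; raises if it fails."""
    result = subprocess.run(command, check=True, capture_output=True, text=True)
    return result.stdout


# Hypothetical project ID, used only to show the resulting command line.
command = enable_api_command("compute.googleapis.com", "my-sample-project-123")
print(" ".join(command))
```

Building the argument list separately from running it keeps the command easy to inspect and log before anything is changed in your project.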

Using the command line

Let's look at using the gcloud command to create a project. gcloud is part of the Google Cloud SDK. You will need to download and install it on your machine in order to use the gcloud commands from your terminal. Alternatively, you may use the cloud shell console from within the browser. Go to https://cloud.google.com/sdk/downloads to download the relevant package as it applies to your machine and install it:

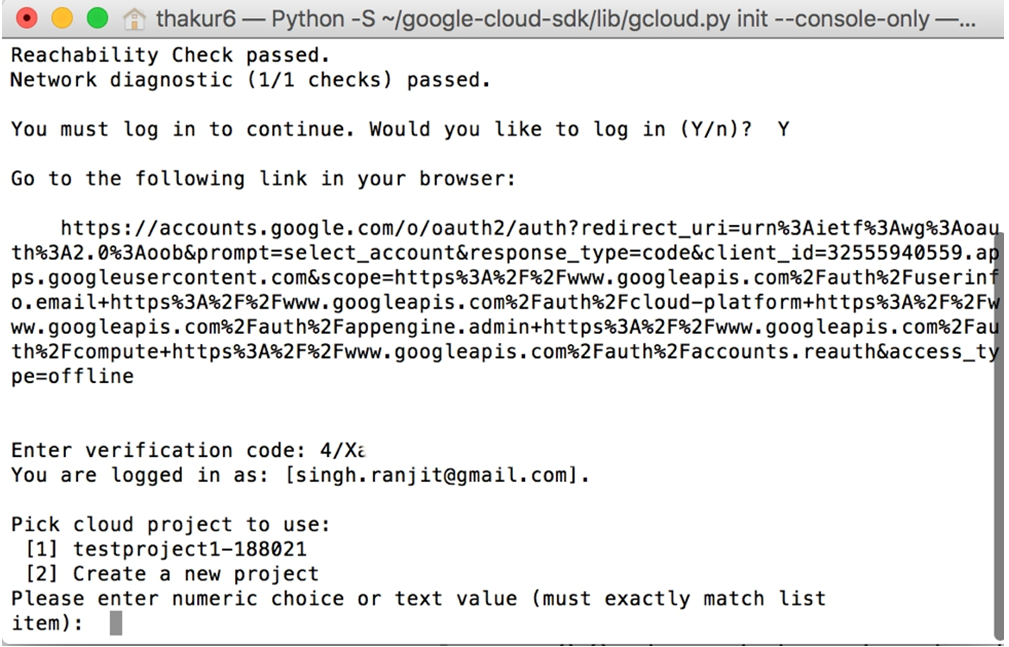

- Once you have installed the SDK on your machine, we need to initialize it. This is done by running the gcloud init command to perform the initial setup tasks. If you ever need to change a setting or create a new configuration, simply re-run gcloud init.

- Open the terminal on your machine and type gcloud init. This opens a browser to allow you to log in to your account. If you want to avoid the browser, type gcloud init --console-only.

- If you use the --console-only flag, copy the URL printed in the terminal into a browser, sign in, and then paste the verification code back into the terminal:

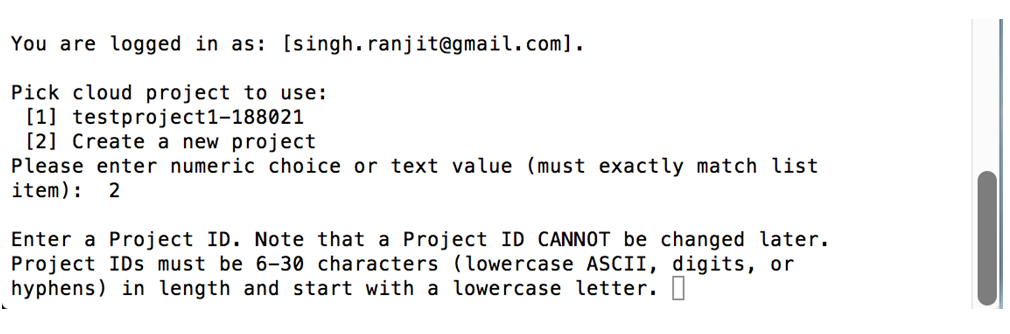

- Enter the numeric choice for the project to use. To create a new project, type 2:

- Enter a unique project ID and press Enter. This will create a new project.

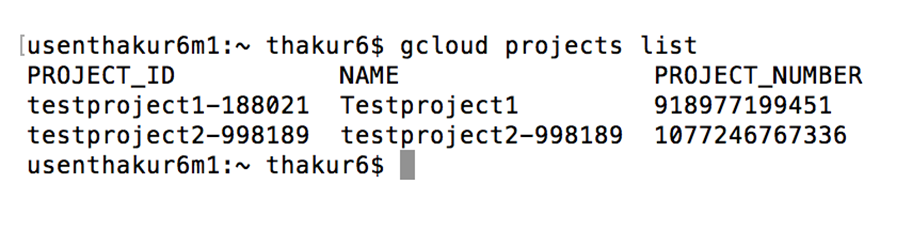

- To list all projects, type gcloud projects list:
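If you want to work with the project list in a script rather than read it in the terminal, gcloud can emit JSON with `--format=json`. The following Python sketch assumes gcloud is installed and initialized; the helper names and the sample project data are hypothetical, and `gcloud projects create` is shown as an alternative to creating the project through gcloud init.

```python
import json
import subprocess
from typing import Dict, List

LIST_COMMAND = ["gcloud", "projects", "list", "--format=json"]


def create_project_command(project_id: str, name: str) -> List[str]:
    """Build the gcloud command that creates a project with a display name."""
    return ["gcloud", "projects", "create", project_id, f"--name={name}"]


def parse_projects(raw_json: str) -> List[Dict[str, str]]:
    """Reduce the JSON printed by the list command to ID, name, and number."""
    return [
        {
            "id": project["projectId"],
            "name": project.get("name", ""),
            "number": project.get("projectNumber", ""),
        }
        for project in json.loads(raw_json)
    ]


def list_projects() -> List[Dict[str, str]]:
    """Run gcloud and return the parsed project list; requires gcloud on PATH."""
    result = subprocess.run(LIST_COMMAND, check=True, capture_output=True, text=True)
    return parse_projects(result.stdout)


# Hypothetical sample of the JSON output, so the parser can be tried offline.
sample = '[{"projectId": "my-sample-project-123", "name": "My Sample", "projectNumber": "123456789012"}]'
print(parse_projects(sample))
```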

Summary

We are off to a good start with a brief understanding of the history of GCP and its services. We looked at the data center regions where GCP is offered and surveyed the services it provides. We also created a free-tier account, explored the GCP console, and created projects.

In Chapter 2, Google Cloud Platform Compute, we will look into learning about GCP Compute and its various aspects.