"My program is not fast enough. Users are saying that it is not performing well. What can I do?"

These are the words I hear a lot when consulting on different programming projects. Sometimes the answer is simple, sometimes hard, but almost always the critical part of the answer lies in the question. More specifically, in one word - performing.

What do we mean when we say that a program is performing well? Actually, nobody cares. What we have to know is what users mean when they say that the program is not performing well. And users, you'll probably admit, look at the world in a very different way than we programmers.

Before starting to measure and improve the performance of a program, we have to find out what users really mean by the word performance. Only then can we do something productive about it.

We will cover the following topics in this chapter:

- What is performance?

- What do we mean when we say that a program performs well?

- What can we tell about the code speed by looking at the algorithm?

- How does the knowledge of compiler internals help us write fast programs?

- Why is it better to measure than to guess?

- What tools can we use to find the slow parts of a program?

To better understand what we mean when we say that a program is performing well, let's take a look at a user story. In this book, we will use a fictitious person, namely Mr. Smith, Chief of Antarctica Department of Forestry. Mr. Smith is stationed in McMurdo Base, Antarctica, and he doesn't have much real work to do. He has already mapped all the forests in the vicinity of the station and half of the year it is too dark to be walking around and counting trees, anyway. That's why he spends most of his time behind a computer. And that's also why he is very grumpy when his programs are not performing well.

Some days he writes long documents analyzing the state of forests in Antarctica. When he is doing that, he wants the document editor to perform well. By that he actually means that the editor should work fast enough so that he doesn't feel any delay (or lag, as we call the delay when dealing with user input) while typing, preparing graphs, formatting tables, and so on.

In this scenario, performance simply means working fast enough and nothing else. If we speed up the operation of the document editor by a factor of two, or even by a factor of ten, that would make no noticeable improvement for our Mr. Smith. The document editor would simply stay fast enough as far as he is concerned.

The situation completely changes when he is querying a large database of all of the forests on Earth and comparing the situation across the world to the local specifics of Antarctica. He doesn't like to wait and he wants each database query to complete in as short a time as possible. In this case, performance translates to speed. We will make Mr. Smith a happier person if we find a way to speed up his database searches by a factor a ten. Or even a factor of five; or two. He will be happy with any speedup and he'd praise us up to the heavens.

After all this hard work, Mr. Smith likes to play a game. While the computer is thinking about a next move, a video call comes in. Mr. Smith knows he's in for a long chat and he starts resizing the game window so that it will share the screen with a video call application. But the game is thinking hard and is not processing user input and poor Mr. Smith is unable to resize it, which makes him unhappy.

In this example, Mr. Smith simply expects that the application's user interface will respond to his commands. He doesn't care if the application takes some time to find the next move, as long as he can do with the application what he wants to. In other words, he wants a user interface that doesn't block.

It is obvious from the previous example that we don't always mean the same thing when we talk about a program's speed. There is a real speed, as in the database example, and there is a perceived speed, hinted at in the document editor and game scenario. Sometimes we don't need to improve the program speed at all. We just have to make it not stutter while working (by making the user interface responsive at all times) and users will be happy.

We will deal with two types of performance in this book:

- Programs that react quickly to user input

- Programs that perform computations quickly

As you'll see, the techniques to achieve the former and the latter are somehow different. To make a program react quickly, we can sometimes just put a long operation (as was the calculation of the next move in the fictitious game) into a background thread. The code will still run as long as in the original version but the user interface won't be blocked and everybody will be happy.

To speed up a program (which can also help with a slowly-reacting user interface), we can use different techniques, from changing the algorithm to changing the code so that it will use more than one CPU at once to using a hand-optimized version, either written by us or imported from an external library.

To do anything, we have to know which part of the code is causing a problem. If we are dealing with a big legacy program, problematic part may be hard to find. In the rest of this chapter, we will look at different ways to locate such code. We'll start by taking an educated guess and then we'll improve that by measuring the code speed, first by using home-grown tools and then with a few different open source and commercial programs.

Before we start with the dirty (and fun) job of improving program speed, I'd like to present a bit of computer science theory, namely the Big O notation.

You don't have to worry, I will not use pages of mathematical formulas and talk about infinitesimal asymptotics. Instead, I will just present the essence of the Big O notation, the parts that are important to every programmer.

In the literature and, of course, on the web, you will see expressions such as O(n), O(n^2), O(1) and similar. This fancy-looking notation hides a really simple story. It tells us how much slower the algorithm will become if we increase the data size by a factor of n.

Note

The n^2 notation means "n to the power of two", or n2. This notation is frequently used on the internet because it can be written with the standard ASCII characters. This book uses the more readable variant O(n2).

Let's say we have an algorithm with complexity of O(n), which on average takes T seconds to process input data of size N. If we increase the size of the data by a factor of 10 (to 10*N), then the algorithm will (on average) also use 10 times more time (that is, 10*T) to process the data. If we process 1,000 times more data, the program will also run 1,000 times slower.

If the algorithm complexity is O(n2), increasing the size of the data by a factor of 10 will cause the algorithm to run 102 or 100 times longer. If we want to process 1,000 times more data, then the algorithm will take 1,0002 or a million times longer, which is quite a hit. Such algorithms are typically not very useful if we have to process large amounts of data.

Note

Most of the time, we use the Big O notation to describe how the computation time relates to the input data size. When this is the case, we call the Big O notation time complexity. Nevertheless, sometimes the same notation is used to describe how much storage (memory) the algorithm is using. In that case, we are talking about a space complexity.

You may have noticed that I was using the word average a lot in the last few paragraphs. When talking about the algorithm complexity, we are mostly interested in the average behavior, but sometimes we will also need to know about the worst behavior. We rarely talk about best behavior because users don't really care much if the program is sometimes faster than average.

Let's look at an example. The following function checks whether a string parameter value is present in a string list:

function IsPresentInList(strings: TStrings; const value: string): Boolean;

var

i: Integer;

begin

Result := False;

for i := 0 to strings.Count - 1 do

if SameText(strings[i], value) then

Exit(True);

end;What can we tell about this function? The best case is really simple—it will find that the value is equal to strings[0] and it will exit. Great! The best behavior for our function is O(1). That, sadly, doesn't tell us much as that won't happen frequently in practice.

The worst behavior is also easy to find. If the value is not present in the list, the code will have to scan all of the strings list before deciding that it should return False. In other words, the worst behavior is O(n), if the n represents the number of elements in the list. Incidentally (and without proof), the average behavior for this kind of search is also O(n).

Note

The Big O limits don't care about constant factors. If an algorithm would use n/2 steps on average, or even just 0.0001 * n steps, we would still write this down as O(n). Of course, a O(10 * n) algorithm is slower than a O(n) algorithm and that is absolutely important when we fine-tune the code, but no constant factor C will make O(C * n) faster than O(log n) if n gets sufficiently large.

There are better ways to check whether an element is present in some data than searching the list sequentially. We will explore one of them in the next section, Big O and Delphi data structures.

While the function of n inside the O() notation can be anything, there are some O functions that appear constantly in standard programming problems. The following table shows those Big O limits and the most common examples of problems that belong to each class:

Time complexity | Common examples of problems with that time complexity |

O(1) | Accessing array elements |

O(log n) | Search in an ordered list |

O(n) | Linear search |

O(n log n) | Quick sort (average behavior) |

O(n2) | Quick sort (worst behavior), naive sort (bubblesort, insertion sort, selection sort) |

O(cn) | Recursive Fibonacci, travelling salesman problem using dynamic programming (c is some numeric constant) |

If we care about program performance, then O(1) algorithms are of special interest to us as they present algorithms which don't get slower (at least not noticeably) when we increase the problem size. We'll see an example of such O(1) algorithms in the next section.

When we deal with algorithms that search in some datasets, we usually try to make them behave as O(log n), not O(n), as the former slows down much, much slower than the latter.

Another big class of problems deals with sorting the data. While the naive approaches sort in O(n2), better algorithms (such as mergesort and quicksort) need on average just O(n log n) steps.

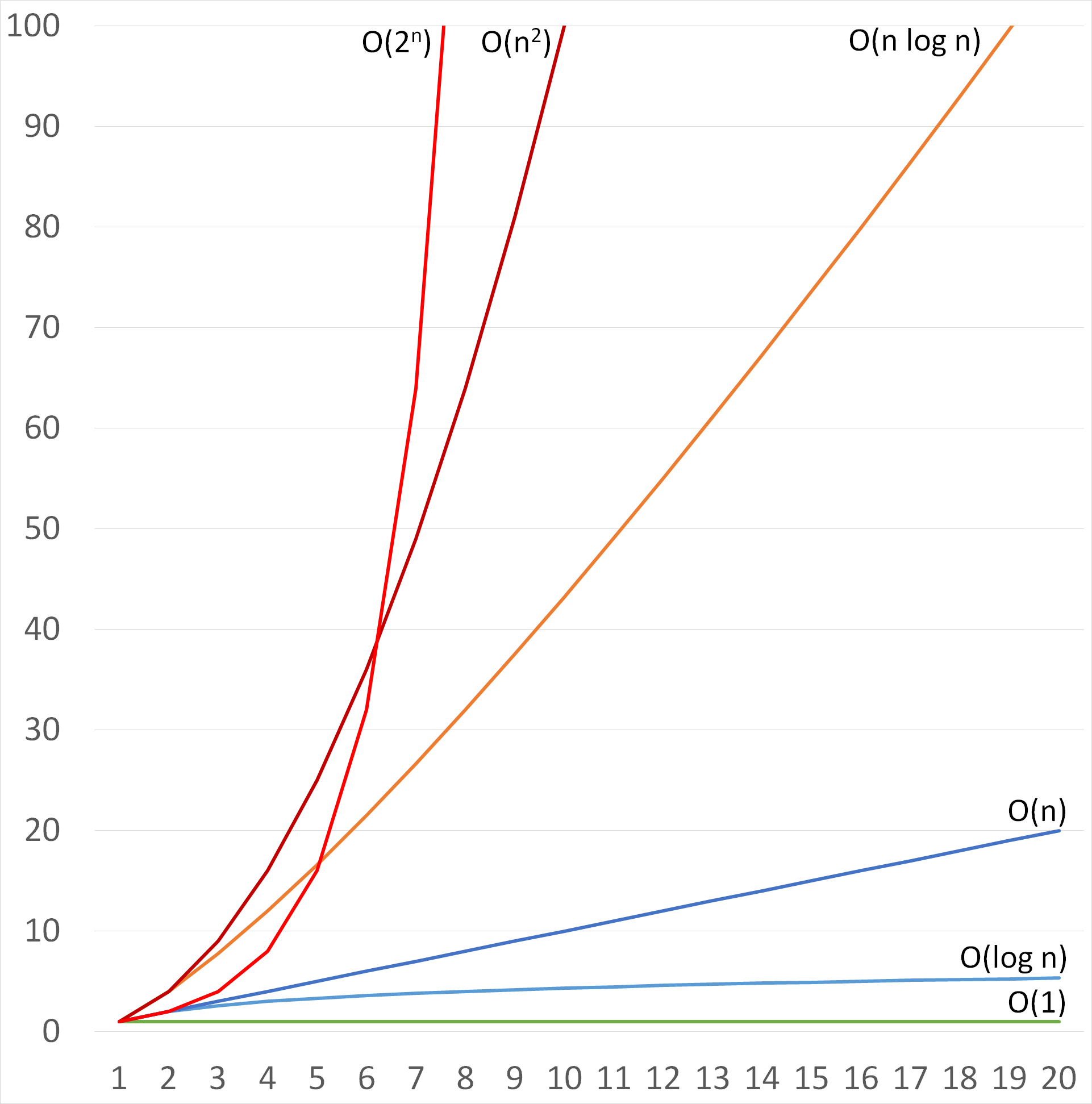

The following image shows how the time complexity for these typical limits (we have used 2n as an example of a more generic cn) grows when we increase the problem size up to 20-fold:

Most frequently encountered Big-O limits

We can see that O(1) and O(log n) grow very slowly. While O(n log n) grows faster than O(n), it also grows much slower than O(n2), which we had to stop plotting when data was increased nine-fold.

The O(2n) starts slowly and looks like a great solution for small data sizes (small n), but then it starts rising terribly fast, much faster than O(n2).

The following table shows how fast O(n log n) and O(n2) are growing if we compare them with O(n) and how quickly O(2n) explodes.

The data column shows the data size increase factor. The number 10 in this column, for example, represents input with 10 times more elements than in the original data:

Data size | O(1) | O(log n) | O(n) | O(n log n) | O(n2) | O(2n) |

1 | 1 | 1 | 1 | 1 | 1 | 1 |

2 | 1 | 2 | 2 | 4 | 4 | 2 |

10 | 1 | 4 | 10 | 43 | 100 | 512 |

20 | 1 | 5 | 20 | 106 | 400 | 524,288 |

100 | 1 | 8 | 100 | 764 | 10,000 | 1029 |

300 | 1 | 9 | 300 | 2,769 | 90,000 | 1090 |

We can see from this table that O(log n) algorithms present a big improvement over O(n) algorithms (8 versus 100 times increase in time when data increases 100-fold). We can also see that the O(2n) quickly becomes completely unmanageable.

The last cell in this table is particularly interesting. There are different estimates for the number of elementary particles (electrons, protons, neutrons, and so on) in the visible universe, but they all lie somewhere around 1090. Suppose we have a computer which can solve an O(2n) in a reasonable time. If we would increase the input data by a factor of just 300, then we would need 1090 computers to solve the new problem in the same time. That is as much as the number of particles in the visible universe!

Delphi's Run-Time Library (RTL) contains many data structures (classes that are specifically designed to store and retrieve data), mostly stored in System.Classes and System.Generics.Collection units that greatly simplify everyday work. We should, however, be aware of their good and bad sides.

Every data structure in the world is seeking a balance between four different types of data access: accessing the data, inserting the data, searching for data, and deleting data. Some data structures are good in some areas, others in different ones, but no data structure in this world can make all four operations independent of data size.

When designing a program, we should therefore know what our needs are. That will help us select the appropriate data structure for the job.

The most popular data structure in Delphi is undoubtedly TStringList. It can store a large amount of strings and assign an object to each of them. It can—and this is important—work in two modes, unsorted and sorted. The former, which is a default, keeps strings in the same order as they were added while the latter keeps them alphabetically ordered.

This directly affects the speed of some operations. While accessing any element in a string list can always be done in a constant time (O(1)), adding to a list can take O(1) when the list is not sorted and O(log n) when the list is sorted.

Why that big difference? When the list is unsorted, Add just adds a string at its end. If the list is, however, sorted, Add must first find a correct insertion place. It does this by executing a bisection search, which needs O(log n) steps to find the correct place.

The reverse holds true for searching in a string list. If it is not sorted, IndexOf needs to search (potentially) the whole list to find an element. In a sorted list, it can do it much faster (again by using a bisection) in O(log n) steps.

We can see that TStringList offers us two options - either a fast addition of elements or a fast lookup, but not both. In a practical situation, we must look at our algorithm and think wisely about what we really need and what will behave better.

To sort a string list, you can call its Sort method or you can set its Sorted property to True. There is, however, a subtle difference that you should be aware of. While calling Sort sorts the list, it doesn't set its internal is sorted flag and all operations on the list will proceed as if the list is unsorted. Setting Sorted := True, on the other hand, does both - it sets the internal flag and calls the Sort method to sort the data.

To store any (non-string) data, we can use traditional TList and TObjectList classes or their more modern generic counterparts, TList<T> and TObjectList<T>. They all always work in an unsorted mode and so adding an element takes O(1) while finding and removing an element takes O(n) steps.

All provide a Sort function which sorts the data with a quicksort algorithm (O(n log n) on average) but only generic versions have a BinarySearch method, which searches for an element with a bisection search taking O(log n) steps. Be aware that BinarySearch requires the list to be sorted but doesn't make any checks to assert that. It is your responsibility to sort the list before you use this function.

If you need a very quick element lookup, paired with a fast addition and removal, then TDictionary is the solution. It has methods for adding (Add), removing (Remove) and finding a key (ContainsKey and TryGetValue) that, on average, function in a constant time, O(1). Their worst behavior is actually quite bad, O(n), but that will only occur on specially crafted sets of data that you will never see in practical applications.

I've told you before that there's no free lunch and so we can't expect that TDictionary is perfect. The big limitation is that we can't access the elements it is holding in a direct way. In other words, there is no TDictionary[i]. We can walk over all elements in a dictionary by using a for statement, but we can't access any of its elements directly. Another limitation of TDictionary is that it does not preserve the order in which elements were added.

Delphi also offers two simple data structures that mimic standard queue—TQueue<T>—and stack—TStack<T>. Both have very fast O(1) methods for adding and removing the data, but they don't offer any bells and whistles—there is no direct data access, we cannot search for data, and so on. We can only insert (Enqueue in queue or Push in stack) and remove (Dequeue and Pop) data.

To help you select the right tool for the job, I have put together a table showing the most important data structures and their most important methods, together with average and (when they differ from the average) worst-case time complexities:

Data structure | Operation | Average | Worst |

TStringList | Direct access | O(1) | |

Add | O(1) / O(log n) | ||

Insert | O(1) | ||

Delete | O(1) | ||

IndexOf | O(n) / O(log n) | ||

Sort | O(n log n) | O(n2) | |

TList, TObjectList | Direct access | O(1) | |

Add | O(1) | ||

Insert | O(1) | ||

Delete | O(1) | ||

Remove | O(n) | ||

IndexOf | O(n) | ||

Sort | O(n log n) | O(n2) | |

TList<T>, TObjectList<T> | Direct access | O(1) | |

Add | O(1) | ||

Insert | O(1) | ||

Delete | O(1) | ||

Remove | O(n) | ||

IndexOf | O(n) | ||

BinarySearch | O(log n) | ||

Sort | O(n log n) | O(n2) | |

TDictionary | Direct access | Not possible | |

Add | O(1) | O(n) | |

Remove | O(1) | O(n) | |

TryGetValue | O(1) | O(n) | |

ContainsKey | O(1) | O(n) | |

ContainsValue | O(n) | ||

TQueue<T> | Direct access | Not possible | |

Enqueue | O(1) | ||

Dequeue | O(1) | ||

TStack<T> | Direct access | Not possible | |

Push | O(1) | ||

Pop | O(1) |

The table shows the time complexity of the most important operations on built-in Delphi data structures. Complexity for the worst case is only listed if it differs from the average complexity.

Enough with the theory already! I know that you, like me, prefer to talk through the code. As one program explains more than a thousand words could, I have prepared a simple demo project: RandomWordSearch.

This program functions as a very convoluted random word generator. When started, it will load a list of 370,101 English words from a file. It will also prepare three internal data structures preloaded with these words:

Random word generator

The program shows three buttons to the user. All three run basically the same code. The only difference is the test function which is passed to the centralized word generator as a parameter:

procedure TfrmRandomWordSearch.FindGoodWord(const wordTest: TWordCheckDelegate);

var

word: string;

isWordOK: boolean;

time: TStopwatch;

begin

time := TStopwatch.StartNew;

repeat

word := GenerateWord;

isWordOK := wordTest(word);

until isWordOK or (time.ElapsedMilliseconds > 10000);

if isWordOK then

lbWords.ItemIndex := lbWords.Items.Add(Format('%s (%d ms)', [word, time.ElapsedMilliseconds]))

else

lbWords.ItemIndex := lbWords.Items.Add('timeout');

end;The core of the FindGoodWord method can be easily described:

- Generate a random word by calling

GenerateWord. - Call the test function

wordTeston that word. If this function returnsFalse, repeat Step 1. Otherwise show the word.

The code is a bit more complicated because it also checks that the word generation part runs for at most 10 seconds and reports a timeout if no valid word was found in that time.

The random word generator GenerateWord is incredibly simple. It just appends together lowercase English letters until the specified length (settable in the user interface) is reached:

function TfrmRandomWordSearch.GenerateWord: string;

var

pos: integer;

begin

Result := '';

for pos := 1 to inpWordLength.Value do

Result := Result + Chr(Ord('a') + Random(Ord('z') - Ord('a') + 1));

end;Let's now check the data preparation phase. The not very interesting (and not shown here) OnCreate handler loads data from a file into a TStringList and calls the LoadWords method:

procedure TfrmRandomWordSearch.LoadWords(wordList: TStringList);

var

word: string;

begin

FWordsUnsorted := TStringList.Create;

FWordsUnsorted.Assign(wordList);

FWordsSorted := TStringList.Create;

FWordsSorted.Assign(wordList);

FWordsSorted.Sorted := True;

FWordsDictionary := TDictionary<string,boolean>.Create(wordList.Count);

for word in wordList do

FWordsDictionary.Add(word, True);

end;The first data structure is an unsorted TStringList, FWordsUnsorted. Data is just copied from the list of all words by calling the Assign method.

The second data structure is a sorted TStringList, FWordsSorted. Data is firstly copied from the list of all words. The list is then sorted by setting FWordsSorted.Sorted := True.

The last data structure is a TDictionary, FWordsDictionary. A TDictionary always stores pairs of keys and values. In our case, we only need the keys part as there is no data associated with any specific word, but Delphi doesn't allow us to ignore the values part and so the code defines the value as a boolean and always sets it to True.

Although the dictionaries can grow, they work faster if we can initially set the number of elements that will be stored inside. In this case that is simple—we can just use the length of the wordList as a parameter to TDictionary.Create.

The only interesting part of the code left is OnClick handlers for all three buttons. All three call the FindGoodWord method, but each passes in a different test function.

When you click on the Unsorted list button, the test function checks whether the word can be found in the FWordsUnsorted list by calling the IndexOf function. As we will mostly be checking non-English words (remember, they are just random strings of letters), this IndexOf will typically have to compare all 307,101 words before returning -1:

procedure TfrmRandomWordSearch.btnUnsortedListClick(Sender: TObject);

begin

FindGoodWord(

function (const word: string): boolean

begin

Result := FWordsUnsorted.IndexOf(word) >= 0;

end);

end;When you click on the Sorted list button, the test function calls FWordsSorted.IndexOf. As this TStringList is sorted, IndexOf will use a binary search that will need at most log(307101) = 19 (rounded up) comparisons to find out that a word is not found in the list. As this is much less than 307.101, we can expect that finding words with this approach will be much faster:

procedure TfrmRandomWordSearch.btnSortedListClick(Sender: TObject);

begin

FindGoodWord(

function (const word: string): boolean

begin

Result := FWordsSorted.IndexOf(word) >= 0;

end);

end;A click on the last button, Dictionary, calls FWordsDictionary.ContainsKey to check if the word can be found in the dictionary, and that can usually be done in just one step. Admittedly, this is a bit of a slower operation than comparing two strings, but still the TDictionary approach should be faster than any of the TStringList methods:

procedure TfrmRandomWordSearch.btnDictionaryClick(Sender: TObject);

begin

FindGoodWord(

function (const word: string): boolean

begin

Result := FWordsDictionary.ContainsKey(word);

end);

end;If we use the terminology from the last section, we can say that the O(n) algorithm (unsorted list) will run much slower than the O(log n) algorithm (sorted list), and that the O(1) algorithm (dictionary) will be the fastest of them all. Let's check this in practice.

Start the program and click on the Unsorted list button a few times. You'll see that it typically needs few hundred milliseconds to a few seconds to generate a new word. As the process is random and dependent on CPU speed, your numbers may differ quite a lot from mine. If you are only getting timeout messages, you are running on a slow machine and you should decrease the Word length to 3.

If you increment the Word length to 5 and click the button again, you'll notice that the average calculation time will grow up to a few seconds. You may even get an occasional timeout. Increase it to 6 and you'll mostly be getting timeouts. We are clearly hitting the limits of this approach.

Prepare now to be dazed! Click on the Sorted list button (while keeping the Word length at 6) and words will again be calculated blazingly fast. On my computer, the code only needs 10 to 100 milliseconds to find a new word:

Testing with different word length and with first two algorithms

To better see the difference between a sorted list and a dictionary, we have to crank up the word length again. Setting it to 7 worked well for me. The sorted list needed from a few 100 milliseconds to a few seconds to find a new word while the dictionary approach mostly found a new word in under 100 milliseconds.

Increase the Word length to 8 and the sorted list will start to time out while the dictionary will still work. Our O(1) approach is indeed faster than the O(log n) code:

Comparing sorted list (first six words and the two after "timeout") with the dictionary approach (next five and last two).

While the knowledge of algorithms and their complexity is useful, it will not help in every occasion. Sometimes you already use the best possible algorithm but your program is still too slow. At such times, you'll want to find the slow parts of the program and speed them up with whatever trick possible. To do that, you have to find them first, which is the topic of the rest of this chapter.

While I was talking about theory and data structures and best practices, our friend Mr. Smith read everything about polar forests on the internet and then got really bored. In a moment of sheer panic—when he was thinking about watching videos of cute kittens for the whole polar night—he turned to programming. He checked various internet sources and decided that Delphi is the way to go. After all, he could use it to put together a new game for iOS and Android and make lots of money while waiting for the sun to show up!

As he didn't have any programming literature on the Antarctic base, he learned programming from internet resources. Sadly, he found most of his knowledge on Experts Exchange and Yahoo Answers and his programs sometimes reflect that.

As of this moment, he has written one working program (and by working I mean that it compiles), but he is not really sure what the program actually does. He only knows that the program is a bit slow and because of that he named it SlowCode.

His program is a console mode program which upon startup calls the Test method:

procedure Test;

var

data: TArray<Integer>;

highBound: Integer;

begin

repeat

Writeln('How many numbers (0 to exit)?');

Write('> ');

Readln(highBound);

if highBound = 0 then

Exit;

data := SlowMethod(highBound);

ShowElements(data);

until false;

end;That one, at least, is easy to grasp. It reads some number, passes it to something called SlowMethod (hmm, Mr. Smith really should work on naming techniques), and then passes the result (which is of type TArray<Integer>) to a method called ShowElements. When the user types in 0, the program exits.

Let's check the ShowElements function:

function SlowMethod(highBound: Integer): TArray<Integer>;

var

i: Integer;

temp: TList<Integer>;

begin

temp := TList<Integer>.Create;

try

for i := 2 to highBound do

if not ElementInDataDivides(temp, i) then

temp.Add(i);

Result := Filter(temp);

finally

FreeAndNil(temp);

end;

end;The code creates a list of integers. Then it iterates from a value 2 to the value that was entered by the user and for each value calls ElementInDataDivides, passing in the list and the current value. If that function returns True, the current value is entered into the list.

After that, SlowMethod calls the Filter method which does something with the list and converts it into an array of integers which is then returned as a function result:

function ElementInDataDivides(data: TList<Integer>; value: Integer): boolean;

var

i: Integer;

begin

Result := True;

for i in data do

if (value <> i) and ((value mod i) = 0) then

Exit;

Result := False;

end;The ElementInDataDivides function iterates over all the numbers in the list and checks if any element in the list divides the value (with the additional constraint that this element in the list must not be equal to the value).

Let's check the last part of the puzzle—the Filter function:

function Reverse(s: string): string;

var

ch: char;

begin

Result := '';

for ch in s do

Result := ch + Result;

end;

function Filter(list: TList<Integer>): TArray<Integer>;

var

i: Integer;

reversed: Integer;

begin

SetLength(Result, 0);

for i in list do

begin

reversed := StrToInt(Reverse(IntToStr(i)));

if not ElementInDataDivides(list, reversed) then

begin

SetLength(Result, Length(Result) + 1);

Result[High(Result)] := i;

end;

end;

end;This one again iterates over the list, reverses the numbers in each element (changes 123 to 321, 3341 to 1433, and so on) and calls ElementInDataDivides on the new number. If it returns True, the element is added to the returned result in a fairly inefficient way.

I agree with Mr. Smith—it is hard to tell what the program does. Maybe it is easiest to run it and look at the output:

It looks like the program is outputting prime numbers. Not all prime numbers, just some of them. (For example, 19 is missing from the list, and so is 23.) Let's leave it at that for the moment.

We can tell more about the program, about its good and bad parts, if we look at it through the eyes of time complexity, in terms of the Big O notation.

We'll start where the code starts—in the SlowMethod method. It has a loop iterating from 2 to the user-specified upper bound, for i := 2 to highBound do. The size of our data, or n, is therefore equal to highBound and this for loop has time complexity of O(n):

for i := 2 to highBound do

if not ElementInDataDivides(temp, i) then

temp.Add(i);Inside this loop, the code calls ElementInDataDivides followed by an occasional temp.Add. The latter will execute in O(1), but we can't say anything about the ElementInDataDivides before we examine it.

This method also has a loop iterating over the data list. We can't guess how many elements are in this list, but in the short test that we just performed, we know that the program writes out 13 elements when processing values from 2 to 100:

for i in data do

if (value <> i) and ((value mod i) = 0) then

Exit;For the purpose of this very rough estimation I'll just guess that the for i in data do loop also has a time complexity of O(n).

In SlowMethod we therefore have an O(n) loop executing another O(n) loop for each element, which gives us a O(n2) performance.

The SlowMethod then calls the Filter method which also contains O(n) for loop calling ElementInDataDivides, which gives us O(n2) complexity for this part:

for i in list do

begin

reversed := StrToInt(Reverse(IntToStr(i)));

if not ElementInDataDivides(list, reversed) then

begin

SetLength(Result, Length(Result) + 1);

Result[High(Result)] := i;

end;

end;There's also a conversion to string, some operation on that string, and conversion back to the integer StrToInt(Reverse(IntToStr(i))). It works on all elements of the list (O(n)) but in each iteration it processes all characters in the string representation of a number. As a length of the number is proportional to log n, we can say that this part has complexity of O(n log n), which can be ignored as it is much less than the O(n2) complexity of the whole method.

There are also some operations hidden inside SetLength, but at this moment we don't know yet what they are and how much they contribute to the whole program. We'll cover that area in Chapter 4, Memory Management.

The SlowMethod therefore consists of two parts, both with complexity O(n2). Added together, that would give us 2*n2, but as we ignore constant factors (that is, 2) in the Big O notation, we can only say that the time complexity of SlowMethod is O(n2).

So what can we say simply by looking at the code?

- The program probably runs in O(n2) time. It will take around 100 times longer to process 10,000 elements than 1,000 elements.

- There is a conversion from the integer to the string and back (

Filter), which has complexity of only O(n log n) but it would still be interesting to know how fast this code really is. - There's a time complexity hidden behind the

SetLengthcall which we know nothing about. - We can guess that most probably the

ElementInDataDividesis the most time-consuming part of the code and any improvements in this method would probably help. - Fixing the terrible idea of appending elements to an array with

SetLengthcould probably speed up a program, too.

As the code performance is not everything, I would also like to inform Mr. Smith about a few places where his code is less than satisfactory:

- The prompt How many numbers is misleading. A user would probably expect it to represent the number of numbers outputted, while the program actually wants to know how many numbers to test.

- Appending to

TArray<T>in that way is not a good idea. UseTList<T>for temporary storage and call itsToArraymethod at the end. If you needTArray<T>, that is. You can also do all processing usingTList<T>. SlowMethodhas two distinctive parts - data generation, which is coded as a part ofSlowMethod, and data filtering, which is extracted in its own method,Filter. It would be better if the first part is extracted into its own method, too.- Console program, really? Console programs are good for simple tests, but that is it. Learn VCL or FireMonkey, Mr. Smith!

We can now try and optimize parts of the code (ElementInDataDivides seems to be a good target for that) or, better, we can do some measuring to confirm our suspicions with hard numbers.

In a more complicated program (what we call a real life), it would usually be much simpler to measure the program performance than to do such analysis. This approach, however, proves to be a powerful tool if you are using it while designing the code. Once you hear a little voice nagging about the time complexities all the time while you're writing a code, you'll be on the way to becoming an excellent programmer.

There is only one way to get a good picture about the fast and slow parts of a program—by measuring it. We can do it manually, by inserting time-measuring calls in the code, or we can use specialized tools. We have a name for measuring—profiling—and we call specialized tools for measuring profilers.

In the rest of this chapter, we'll look at different techniques of measuring the execution speed. First we will measure the now familiar program, SlowCode, with a simple software stopwatch and then we'll look at a few open source and commercial profilers.

Before we start, I'd like to point out a few basic rules that apply to all profiling techniques:

- Always profile without the debugger. The debugger will slow the execution in unexpected places and that will skew the results. If you are starting your program from the Delphi IDE, just press Ctrl+Shift+F9 instead of F9.

- Try not to do anything else on the computer while profiling. Other programs will take the CPU away from the measured program which will make it run slower.

- Take care that the program doesn't wait for user action (data entry, button click) while profiling. This will completely skew the report.

- Repeat the tests a few times. Execution times will differ because Windows (and any other OS that Delphi supports) will always execute other tasks besides running your program.

- All the above especially holds for multithreaded programs, which is an area explored in Chapters 5 to 7.

Delphi includes a helpful unit called System.Diagnostics, which implements a TStopwatch record. It allows us to measure time events with a better precision than 1 millisecond and has a pretty exhaustive public interface, as shown in the code fragment below:

type

TStopwatch = record

public

class function Create: TStopwatch; static;

class function GetTimeStamp: Int64; static;

procedure Reset;

procedure Start;

class function StartNew: TStopwatch; static;

procedure Stop;

property Elapsed: TTimeSpan read GetElapsed;

property ElapsedMilliseconds: Int64 read GetElapsedMilliseconds;

property ElapsedTicks: Int64 read GetElapsedTicks;

class property Frequency: Int64 read FFrequency;

class property IsHighResolution: Boolean read FIsHighResolution;

property IsRunning: Boolean read FRunning;

end;To use a stopwatch, you first have to create it. You can call TStopwatch.Create to create a new stopped stopwatch or TStopwatch.StartNew to create a new started stopwatch. As TStopwatch is implemented as a record, there's no need to destroy a stopwatch object.

When a stopwatch is started, it is measuring time. To start a stopwatch, call the Start method and to stop it, call the Stop method. The IsRunning property will tell you if the stopwatch is currently started. Call the Reset method to reset the stopwatch to zero.

The TStopwatch contains a few functions that return the currently measured time. The most precise of them is ElapsedTicks, but as there is no built-in (public) function to convert this into standard time units, this function is hard to use. My recommendation is to just use the ElapsedMilliseconds property which will give you elapsed (measured) time in milliseconds.

For a simple demo, this code will return 1,000 or a bit more:

function Measure1sec: int64; var sw: TStopwatch; begin sw := TStopwatch.StartNew; Sleep(1000); Result := sw.ElapsedMilliseconds; end;

Let's now use this function to measure the SlowMethod method.

First, you have to add the System.Diagnostics unit to the uses list:

uses System.SysUtils, System.Generics.Collections, System.Classes, System.Diagnostics;

Next, you have to create this stopwatch inside SlowMethod, stop it at the end, and write out the elapsed time:

function SlowMethod(highBound: Integer): TArray<Integer>;

var

// existing variables

sw: TStopwatch;

begin

sw := TStopwatch.StartNew;

// existing code

sw.Stop;

Writeln('SlowMethod: ', sw.ElapsedMilliseconds, ' ms');

end;We can use this code to verify the theory that SlowCode has time complexity O(n2). To do this, we have to measure the execution times for different counts of processed numbers (different values entered at the How many numbers prompt).

I did some testing for selected values from 10,000 to 1,000,000 and got the following numbers:

Highest number | Execution time [ms] |

10,000 | 15 |

25,000 | 79 |

50,000 | 250 |

75000 | 506 |

100,000 | 837 |

250,000 | 4,515 |

500.000 | 15564 |

750,000 | 30,806 |

1,000,000 | 54,219 |

If you repeat the tests, you will of course measure different values, but the growth rate should be the same.

For a quick confirmation, I have plotted a Scatter Chart of this data in Excel and the result surely looks like a square function. To be more sure, I have added a power trendline which created a function in the form of nc, where c was a constant that Excel has calculated from the data. In the case of my specific measurements, this fitting function was y = 10-6 * x1.7751, which is not that far from x2:

Curve fitting in Excel (left) and Wolfram Alpha (right)

Next, I repeated this curve fitting process on the wonderful Wolfram Alpha (www.wolframalpha.com) where you can find a Regression Calculator Widget, a tool designed specifically for this task. I entered measurements into the widget and it calculated a fitting function of 4.76372×10^-8 * x^2 + 0.0064733 * x - 143.666. If we ignore all unimportant factors and constants, the only part left is x^2. Another confirmation for our analysis!

Now we know how the program behaves in global terms, but we still have no idea of which part of the code is the slowest. To find that out, we also have to measure execution times of the ElementInDataDivides and Filter methods.

These two methods are called multiple times during the execution of the program so we can't just create and destroy a stopwatch each time Filter (for example) is executed. We have to create a global stopwatch, which is a bit inconvenient in this program because we have to introduce a global variable.

If you check the SlowCode_Stopwatch program, you'll see that it actually creates three global stopwatches, one for each of the functions that we want to measure:

var Timing_ElementInData: TStopwatch; Timing_Filter: TStopwatch; Timing_SlowMethod: TStopwatch;

All three stopwatches are created (but not started!) when the program starts:

Timing_ElementInData := TStopwatch.Create; Timing_Filter := TStopwatch.Create; Timing_SlowMethod := TStopwatch.Create;

When the program ends, the code logs elapsed time for all three stopwatches:

Writeln('Total time spent in SlowMethod: ',

Timing_SlowMethod.ElapsedMilliseconds, ' ms');

Writeln('Total time spent in ElementInDataDivides: ',

Timing_ElementInData.ElapsedMilliseconds, ' ms');

Writeln('Total time spent in Filter: ',

Timing_Filter.ElapsedMilliseconds, ' ms');In each of the three methods, we only have to Start the stopwatch at the beginning and Stop it at the end:

function Filter(list: TList<Integer>): TArray<Integer>; // existing variables begin Timing_Filter.Start; // existing code Timing_Filter.Stop; end;

The only tricky part is the ElementInDataDivides function which calls Exit as soon as one element divides the value parameter. The simplest way to fix that is to wrap the existing code in a try .. finally handler and to stop the stopwatch in the finally part:

function ElementInDataDivides(data: TList<Integer>; value: Integer): boolean;

var

i: Integer;

begin

Timing_ElementInData.Start;

try

Result := True;

for i in data do

if (value <> i) and ((value mod i) = 0) then

Exit;

Result := False;

finally

Timing_ElementInData.Stop;

end;

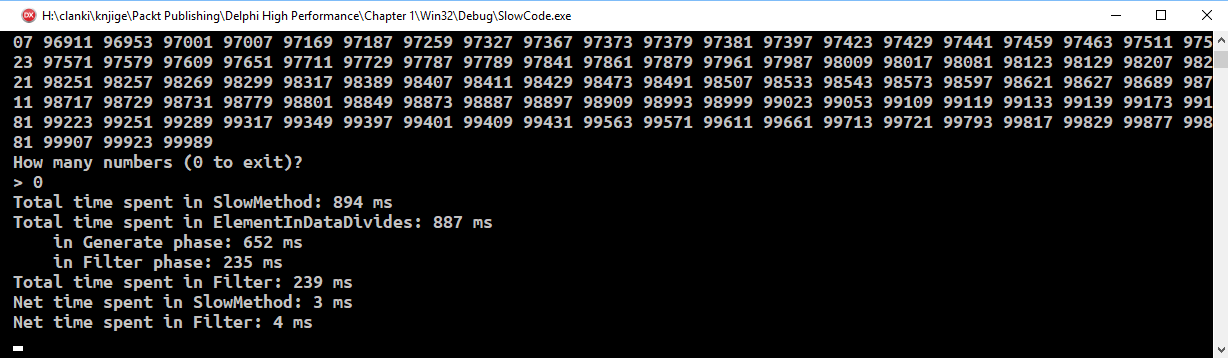

end;If you run the program and play with it for a while and then exit, you'll get a performance report. In my case, I got the following result:

We now know that most of the time is spent in ElementInDataDivides, but we don't know how many calls to it were made directly from SlowMethod and how many from the Filter method. To find that out, we have to add two new global variables and some more code:

var

Generate_ElementInData_ms: int64;

Filter_ElementInData_ms: int64;

function SlowMethod(highBound: Integer): TArray<Integer>;

var

i: Integer;

temp: TList<Integer>;

begin

Timing_SlowMethod.Start;

temp := TList<Integer>.Create;

try

Timing_ElementInData.Reset;

for i := 2 to highBound do

if not ElementInDataDivides(temp, i) then

temp.Add(i);

Generate_ElementInData_ms := Generate_ElementInData_ms +

Timing_ElementInData.ElapsedMilliseconds;

Timing_ElementInData.Reset;

Result := Filter(temp);

Filter_ElementInData_ms := Filter_ElementInData_ms +

Timing_ElementInData.ElapsedMilliseconds;

finally

FreeAndNil(temp);

end;

Timing_SlowMethod.Stop;

end;The code (which can be found in the SlowCode_Stopwatch2 program) now resets the Timing_ElementInData stopwatch before the data generation phase and adds the value of the stopwatch to Generate_ElementInData_ms afterwards. Then it resets the stopwatch again for the Filter phase and adds the value of the stopwatch to Filter_ElementInData_ms afterwards.

At the end that will give us the cumulative execution time for ElementInDataDivides called directly from SlowMethod in Generate_ElementInData_ms and the cumulative execution time for ElementInDataDivides called from Filter in Filter_ElementInData_ms.

A test run with an upper bound of 100,000 produced the following output:

Now we can be really sure that almost all the time is spent in ElementInDataDivides. We also know that approximately 75% of the time it is called directly from SlowMethod and 25% of the time from the Filter method.

We are now ready to optimize the program. We can either improve the implementation to make it faster, or replace it with a faster algorithm, or both.

But first, let's see what profilers can tell us about our program.

Measuring the speed of a program by inserting special code into the program by hand is perfect if you want to measure a very specific part of the code, but becomes cumbersome if you don't know exactly which part of the program you should focus on. In such cases, it is best to use specialized software - profilers.

Profilers can measure all kinds of parameters. Sure, they are mostly used for measuring execution speed, at a method or even on a line level, but they can do much more. They can display the call tree - a graph showing how methods call one another. They can show memory usage in a program so you can quickly find the one method which is constantly eating the memory. They can show you the coverage of your tests so you can see which code was tested and which not. And much more.

They do that magic in two ways, by sampling or instrumentation.

Sampling profiling looks at the state of your program at regular intervals (for example, 100 times per second) and each time checks which line of the code is currently being executed. Statistically, it will predominantly see lines of code that are executed most of the time.

Sampling profiling will give us only a rough overview of behavior inside the program but it will do that without affecting the program speed, and because of that is excellent for taking a first look at some code.

Instrumenting profiles do their magic by changing—instrumenting—the code. They are, in fact, doing almost exactly the same kind of changing the code as we did by inserting the stopwatch calls.

There are two ways to do instrumentation. The profiler can change the source code or it can change the program binary. Source instrumenting profilers are rare because they are less safe to use (there's always the possibility that a compiler will mess up the source) and because you have to recompile the code after it is instrumented (modified by the profiler).

Most of the instrumenting profilers on the market modify the binary code, which is a bit more tricky to implement but doesn't require a recompilation and cannot destroy the source code.

The advantage of instrumentation over sampling is that the former can collect everything that is going on inside the program and not just a few samples here and there. The disadvantage is that instrumentation reduces the speed of the program. Although instrumenting profilers take extra care to optimize the code that is inserted into your program, executing this code in many instrumented methods can still take a long time.

The other problem is the amount of collected data. A short and fast method which is called 100,000 times in 1 second will generate 100,000 data samples in an instrumenting profiler and only 100 samples in a sampling profiler (provided that it samples the code 100 times a second).

Because of all that, instrumenting profilers are best used once we already know on which part(s) of the program we want to focus.

I'll end this chapter with an overview of four profilers, two open source and free (AsmProfiler and Sampling Profiler) and two commercial (AQTime and Nexus Quality Suite), to make it easier for you to choose the best fit for your situation. These four profilers are, of course, not your only options. If you need a very precise profiling of short methods, check out ProDelphi (www.prodelphi.de). And if you need a high-end tool that will not only profile your application but help it optimize to its fullest potential, take a look at Intel's VTune Amplifier (software.intel.com/en-us/intel-vtune-amplifier-xe).

AsmProfiler is a 32-bit instrumenting and sampling profiler written by André Mussche. Its source, along with Windows exe, can be found at https://github.com/andremussche/asmprofiler. Although the latest version was released in 2016, it works well with the newest Delphi at the time of writing this book, 10.2 Tokyo.

The sampling and instrumenting profilers are used in a different way, so I'll cover them separately.

To use AsmProfiler you first have to unpack the release ZIP into some folder on your disk. That will create two subfolders—Sampling and Instrumenting. To start the sampling profiler, start AsmProfiling_Sampling from the Sampling folder and then click Start profiling.

AsmProfiler has two ways of starting a profiling session. You can start the program manually, click Select processSelect exe, browse to the compiled EXE of your program, and then click Start processnow, which will start the program or click Start sampling which will start the program and also start sampling the code.

The reasoning behind the two different ways of starting the program is that mostly you want to profile a specific part of the program, so you would load it into the profiler, navigate to the specific part, click Start sampling, do the required steps in your program, click Stop sampling, and analyze the results. Sometimes, however, you would want to profile the very startup of the program so you would want sampling to start immediately after your program is launched. The following screenshot shows the initial AsmProfiler screen:

Starting profiling session in AsmProfiler

Sampling options can be configured in the Options group. You can set the sampling interval—how many milliseconds will pass between two samples. Setting this value to 4, for example, will generate 250 (1000/4) samples per second.

Setting the sampling interval to 0 enables a continuous sampling mode which still leaves some time to threads running on the same CPU. (The sampling code calls Sleep(0) between taking two samples.) Setting it to -1 causes the samples to be taken as fast as possible. In effect, AsmProfiler will use one CPU core for itself.

You can also set the priority of sample-taking threads from the default, Normal. I will discuss threads and priorities in Chapter 5, Getting Started with the Parallel World.

After you have taken the samples and clicked Stop sampling, click the Show results button to analyze the results.

If you want to compare your results with mine, here are steps to produce the results (as shown in the following screenshot):

- Start AsmProfiler.

- Click

Select exeand select theSlowCode.exe. - Click

Start process now. - Click

Start sampling. - Enter

100,000into the program. - Click

Stop sampling. - Enter

0into the program to exit. - Click

Show results. - Click

Resultson theSampling Resultsform.

Result of a test run profiled with AsmProfiler's Sampling profiler

AsmProfiler displays results organized by threads, but it automatically highlights the main thread so you don't have to care about that detail if your program doesn't use multithreading.

In the result grid, it shows the module (main EXE or DLL), name of the function, how many times the code was found to be in this function, and times (absolute and percentage) the code spent in that function (Own), in all functions called from it (Child), and both in that function and in all functions called from it (Own+Child).

If we sort results by the time spent only in the function (Own time), we'll see that function TextIn$qqrr15System.TTextRec comes to the top. This a function that reads console input (in the Readln statement) and we can safely ignore it.

The next one on the list, ElementInDataDivides$qqrp40..., is the one that interests us. We can see that it was sampled 371 times (Calls) and that it needed 0.6 seconds to execute. If you switch to the Detailed view tab and select this function, you'll see in the Parent calls (called by...) panel that it was called 258 times from SlowMethod and 113 times from the Filter method. In reality, of course, it was called many more times, but most of them were not seen by the sampling profiler.

In this view, we can also see how many times each line of some method was hit, which will give us a good idea about where the program spends most of the time. Unfortunately, we cannot sort the data on different criteria:

Detailed view showing information for each line of the program

The names of the methods in these outputs are very weird, but that is how the Delphi compiler calls these methods internally. The part after the $ encodes the parameter types. This process is called name mangling (and the part after $ is sometimes referred to as a decoration) and it enables us to use overloaded methods with the same name and different parameters—internally they all have different names.

For example, the function SlowMethod(highBound: Integer) is internally known as SlowMethod$qqri. The qqr part specifies the fastcall calling convention (it describes how parameters are passed to the function in registers and on stack) and i identifies one integer parameter.

AsmProfiler's instrumenting profiler requires a bit more work. Firstly, you have to copy AsmProfiler.dll from the Instrumenting subfolder into a folder on the Windows environment path or into the exe folder. Secondly, you have to copy Instrumenting\API\_uAsmProfDllLoader.pas into Delphi's library path, into your project's folder or add this folder to your project's search path.

Thirdly, you have to add the _uAsmProfDllLoader unit to your project and call the following code. This will show the profiler's main form on the screen:

if _uAsmProfDllLoader.LoadProfilerDll then _uAsmProfDllLoader.ShowProfileForm;

To start profiling, call _uAsmProfDllLoader.StartProfiler(False);. To stop collecting data, call _uAsmProfDllLoader.StopProfiler;. These two calls are not strictly necessary. You can also start and stop profiling from the profiler's user interface. Modifying the code will, however, give you more control over the profiling process.

Before your program exits, unload the profiler DLL with _uAsmProfDllLoader.UnLoadProfilerDll;.

Make sure that your program has the following compiler options correctly set:

Linker,Map file=detailedCompiler,Optimization=offCompiler,Stack frames=on

The instrumenting profiler requires your program to process messages which makes it mostly useless when used in Mr. Smith's program, as it is spending most of the time inside a Readln call (and is not processing messages). As I still wanted to show you how this profiler works, I have converted SlowCode into a more modern VCL version, SlowCode_VCL.

At first I wanted to start/stop profiling right in SlowMethod:

function TfrmSlowCode.SlowMethod(highBound: Integer): TArray<Integer>; var i: Integer; temp: TList<Integer>; begin _uAsmProfDllLoader.StartProfiler(False); // existing code _uAsmProfDllLoader.StopProfiler; end;

That attempt, however, misfired, as AsmProfiler didn't want to show profiling results for SlowCode. It turned out to be better to move the start/stop calls out of this method and into the method which calls SlowCode:

procedure TfrmSlowCode.btnTestClick(Sender: TObject); var data: TArray<Integer>; begin outResults.Text := ''; outResults.Update; _uAsmProfDllLoader.StartProfiler(False); data := SlowMethod(inpHowMany.Value); _uAsmProfDllLoader.StopProfiler; ShowElements(data); end;

A version of the program, ready for profiling with the AsmProfiler, is stored in the SlowCode_VCL_Instrumented project. You will still have to download AsmProfiler and store AsmProfiler.dll and _uAsmProfDllLoader.pas into appropriate places.

When you start the program, a small form will appear alongside the program's main form. From here you can start and stop profiling, select items (methods) that should be profiled (Select items), and open results of the profiling session (Show results):

AsmProfiler's instrumenting profiler

We are interested only in three methods, so click the Select items button, select the ElementInDataDivides, Filter and SlowMethod methods, and click OK:

Selecting methods to be profiled

Next, enter 100000 into the How many numbers field and click the Test button. You don't have to start and stop the profiler, as the program will do that. When the values are calculated and displayed on the screen, click the Show results button. Don't close the profiled program as that would close the profiler form, too.

The result form of the instrumenting profiler is very similar to the equivalent form of the sampling profiler. The most interesting feature is the Unit overview tab, which combines detailed timing information and a call tree:

Unit overview display

We can see that ElementInDataDivides is in fact called 99,999 times directly from the SlowMethod and only 9,592 times from the Filter method, not 258 and 113 times, as shown by the sampling profiler.

AsmProfiler gives a good combination of a global overview and detailed analysis, although it is rough around the edges and requires more effort on your part than more polished commercial profilers.

The Sampling Profiler is, as its name suggests, a sampling profiler for Delphi, written by Eric Grange. You can find it at www.delphitools.info. Although it officially supports only Delphi 5 to XE4, it will function well with applications written in modern Delphis.

The strongest part of the Sampling Profiler is its configurability for multithreaded sampling. You can specify which CPUs will execute the profiler and which profiled application. You can also focus on a specific thread by issuing a OutputDebugString('SAMPLING THREAD threadID') command from your code (replace threadID with the real ID of the thread you want to profile). It is also very simple to turn profiling on or off by calling OutputDebugString('SAMPLING ON') and OutputDebugString('SAMPLING OFF').

An interesting feature of the Sampling Profiler, which other profilers don't provide, is the ability to enable web server in the profiler. After that, we can use a browser to connect to the profiler (if firewalls allow us, of course) and we get an instant insight into the currently most executed lines of our program:

Live status view from remote location

The weakest point of the Sampling Profiler is its complete inability to select methods that are of interest to us. As we can see in the following screenshot, we get some methods from Generics.Collections mixed between methods from SlowCode. This only distracts us from our task—trying to find the slow parts of SlowCode.

Saying all that, I must admit that the display of profiling results is really neatly implemented. The results view is simple, clean, and easy to use:

Simple and effective result view

The Sampling Profiler would be a perfect solution for occasional profiling if it would only allow us to select topics of interest.

AQTime is a performance and memory profiler for C/C++, Delphi, .NET, Java, and Silverlight, produced by SmartBear Software. It supports 32- and 64-bit applications and can be found at www.smartbear.com.

Previously, a special Standard version of AQTime was included with RAD Studio, C++Builder, and Delphi. This offer was only available for releases XE to XE8 and the licensing was not renewed after that. If you want to use AQTime with any other Delphi release, you have to buy AQTime Professional.

For testing purposes, you can install a trial version of AQTime Professional, which will only allow you to run five profiling sessions. Dedicate some time to testing and use your five sessions wisely!

AQTime Professional supports all Delphi version from 2006 to Tokyo and you can even use it in Visual Studio, which is a great plus for multiplatform developers. It contains a variety of profilers—from the performance profiler (binary instrumentating profiler) and the sampling profiler, to the coverage profiler (to see which parts of the program were executed) and more specific tools such as BDE SQL profiler, static analysis (a code analysis tool which is not really a profiler), and more.

It integrates nicely into the Delphi IDE but you can also use it as a standalone application. That gives you more flexibility during the profiling and result analysis and that's why I also used the standalone AQTime Professional for the examples.

To prepare your program for profiling, make sure that the following compiler options are set:

Compiler,Stack frames=onCompiler,Debug information=Debug informationCompiler,Local symbols=trueLinker,Debug information=true

In order for AQTime to be able to find the source file for your project, you have to specify a search path. Go to Options | Options, then select General | Search directory, and add all the folders with your source files.

Next you can choose to profile all units, but unless you are using a sampling profiler this will slow down the execution in a typical program a lot. It is better to select just a few units or, as in our example, just a few methods.

The easiest way to do that is to create a new profiling area and the easiest way to do that is to select one or more methods in the left tree (use Shift+click and Ctrl+click), then right-click and select Add selected to | New profiling area. After that you can add additional methods to that profiling area by right-clicking and selecting Add selected to | Existing profiling area, or simply with drag-and-drop.

When creating a new profiling area, you also have to choose whether to profile on a method or on a line level by checking or unchecking the Collect info about lines checkbox:

Creating new profiling area

Then start the program from AQTime—or select the AQTime |Run with profiling menu from Delphi, do the necessary steps you want to profile, and exit. AQTime will show the profiling results. Similarly to all other profilers, it will show a grid with measured methods, net time spent in each, time with children, and a hit count—an indicator showing how many times the method executed.

More interesting info is hiding in the lower panel. There is a very detailed Call Graph, which displays a call tree for the selected method, and a very useful Editor panel, which shows the source together with the hit count information for each line:

Editor view showing hit count for instrumented methods

AQTime is a great tool provided that you stay away from the very limited Standard edition and go directly for the Professional.

Nexus Quality Suite (NQS) is a successor to the long-defunct TurboPower's SleuthQA, published by NexusQA Pty Ltd. It supports 32- and 64-bit applications written in Delphi from 5 to 10.2 Tokyo. You can find it at www.nexusdb.com.

The trial version has fewer limitations than AQTime's. Some functions are disabled and some are limited in the quantity of collected data. Still, the program is not so limited that you wouldn't be able to test it out.

NQS integrates into Delphi's Tools menu and extends it with all the profilers it brings to Delphi. Of the most interest to us are Method Timer, an instrumenting profiler working at a method level, and Line Timer, an instrumenting profiler working at a line level. There is also a Block Timer, an instrumenting profiler working on a block level (a for loop, for example), which was not working correctly at the time of writing this book and so I wasn't able to test it. That's really bad luck, as there are no other profilers working on a block level and it would be really interesting to compare it with more standard approaches.

A few other tools are also interesting from the profiling viewpoint. Coverage Analyst will help you analyze code coverage, which is an important part of unit testing. After all, you definitely want to know whether your unit tests test all of the methods in a unit or not.

Also interesting is CodeWatch, which hunts for bugs in the code by looking for memory and resource leaks.

All these profilers, with the exception of CodeWatch, are available in 32-bit and 64-bit versions, although the 64-bit operation is not as stable as in the 32-bit counterparts. I was only able to use Line Timer in 32-bit mode, for example, while Method Timer worked flawlessly in 32-bit and 64-bit modes.

Both Method Timer and Line Timer require no special preparation. You just have to have debug information turned on in the linker options.

When you start the Method Timer, a profiler window opens. Click on the Routines button to select methods to profile. To change the profiling status of a method, double-click its name or right-click and select Profile Status |Enable Profile Status For Selected.

When you are done, press F9 to start the program, go through the steps that you want to profile, and exit the program.

The program will then display basic timing information including net time per method and gross time (what other programs call "time with children"). If you click on a method, the lower two panes will display information about methods that called the current method and methods that were called from the current method.

If you double-click on a method, another window will appear showing the source code for the selected method but without any information about the profiling results:

Method Timer result window

Line Timer has a similar user interface. First you select the methods to be profiled in the Routines view, then you run the program, and at the end examine the results in the Line Times window.

This profiler has a display that is a bit different from other profilers that support line-level profiling. It is not grouped by methods, but by line numbers. This gives us an immediate overview of the most critical part of the code, but is hard to integrate into a bigger picture.

As in the Method Timer, a double-click on a line in the results grid opens up an editor window which displays the source code, together with the time spent in each profiled line and the number of times this line was executed:

Line Timer with built-in code display

Nexus Quality Suite is a nice set of tools and we can only hope that its stability will improve with future releases.

This chapter provided a broad overview of the topics we'll be dealing with in this book. We took a look at the very definition of performance. Next we spent some time describing the Big O notation for describing time and space complexity and we used it in a simple example.

In the second part of the chapter, we looked into the topic of profiling. We used a manual approach and specialized tools—profilers—to find the slowest part of a simple program.

In the next chapter, I'll briefly return to the topic of selecting the correct algorithm for the job. With a few examples, I'll show you how an algorithm can make or break a program's performance.

Download code from GitHub

Download code from GitHub

![RESTful Services with Delphi [Video]](https://static.packt-cdn.com/products/9781789951882/cover/smaller)