In this chapter, we will cover the following topics:

Creating a new number sequence

Renaming the primary key

Merging two records

Adding document handling notes

Using a normal table as temporary table

Copying a record

Building a query object

Using a macro in a SQL statement

Executing a direct SQL statement

Enhancing the data consistency check

Exporting to an XML file

Importing from an XML file

Creating a comma-separated value file

Reading a comma-separated value file

Deleting all company transactional data

This chapter focuses on data manipulation exercises. Here, we will discuss how to work with query objects from X++ code. We will also discuss how to reuse macros in X++ SQL statements and how to send SQL statements directly to the database. This chapter will explain how to rename primary keys, how to merge and copy records, how to add document handling notes to selected records, and how to clean up the testing system by deleting all transactional data.

Number sequences in Dynamics AX are used to generate a specifically formatted number for record identification. Numbers could be anything from voucher numbers or transaction identification numbers to customer or vendor accounts.

When developing custom functionality, very often one of the tasks is to add a new number sequence to the system to support newly created tables. Dynamics AX contains a list of NumberSeqReference derivative classes, which hold the number sequence data for the specific module.

These classes are read by the number sequence wizard which detects existing number sequences and proposes to create the missing or newly created ones. The wizard is normally run as a part of the application initialization, but it can be rerun at any time later when expanding Dynamics AX functionality and new standard number sequences are required. The wizard also has to be rerun if new custom number sequences are added to the system.

In this recipe, we will add a new number sequence to the system. In a standard application, employee number is not driven by any number sequence, so we will enhance this by creating an employee number sequence functionality.

Open the NumberSeqReference_General class in AOT, and add the following code to the bottom of

loadModule():numRef.DataTypeId = typeId2ExtendedTypeId(typeid(EmplId)); numRef.ReferenceHelp = literalstr("Employee identification"); numRef.WizardContinuous = false; numRef.WizardManual = NoYes::No; numRef.WizardAllowChangeDown = NoYes::Yes; numRef.WizardAllowChangeUp = NoYes::Yes; numRef.WizardHighest = 9999; numRef.SortField = 7; this.create(numRef);Run the number sequence wizard by clicking on the Wizard button in Basic | Setup | Number sequences | Number sequences, and click Next:

Delete everything apart from the line where Module is Basic and Reference is Employee (use keyboard shortcut ALT+F9 to delete lines). Note the number sequence code Basi_202, and click Next:

On the last page, click Finish to complete the wizard:

Find the newly created number sequence in Number sequences form:

Open Basic | Setup | Company information and go to the Number sequences tab page. Here, we should see the new number sequence code along with the existing ones:

The last thing to do is to create a helper method for this sequence. Locate CompanyInfo table in AOT and add the following method:

public server static NumberSequenceReference numRefEmplId() { return NumberSeqReference::findReference( typeid2extendedtypeid(typeid(EmplId))); }

We start the recipe by adding a number sequence initialization code into the NumberSeqReference_General class. As we can understand from its name, it holds initialization of all general number sequences that do not belong to any particular module. Employee number sequence partially matches this criterion, although we could have added the code to the NumberSeqReference_HRM class, which is used in the Human Resources module. Moreover, we could have created a totally new NumberSeqReference class. This is normally the case when new custom modules are built.

The code in loadModule() defines default number sequence settings to be used in the wizard like data type, description, highest possible number, etc. Additional options like starting sequence number, number format, and others could also be added here. All mentioned options could be changed while running the wizard. On the second step of the wizard, the Details >> button can be used to display more options. The options could also be changed later in the Number sequences form before or after the number sequence is actually used.

Once completed, the wizard creates a new record in the Number sequences form, which can then be used by the system.

The number sequences added to the NumberSeqReference classes are also automatically shown on relevant parameter forms. Sequences in the NumberSeqReference_General class are shown in the Company information form. For example, number sequences in the NumberSeqReference_HRM class are displayed in the Human Resource parameters form.

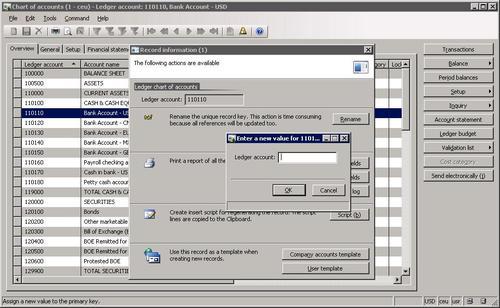

Most of you who are familiar with the application probably used the standard Rename function. This function allows us to rename the primary key of almost any record. It is irreplaceable if a record was saved by mistake or simply needs renaming. The function ensures data consistency, that is, all related records are renamed too. It can be accessed from the Record information form, which can be opened by selecting Record info from the right-click menu on any record:

When it comes to mass renaming, this function might be very time consuming as it would need to be run on every record. An alternative is to create a job that automatically runs through all required records and calls this function.

This recipe will explain how the record primary key can be renamed through code.

As an example, we will create a job that renames general ledger account 110110 by adding the letter C in front of it. You can use any other ledger account in this example.

Open AOT to create a new job called LedgerAccountRename, and enter the following code:

static void LedgerAccountRename(Args _args) { LedgerTable ledgerTable; ; select firstonly ledgerTable where ledgerTable.AccountNum == '110110'; if (ledgerTable.RecId) { ledgerTable.AccountNum = 'C' + ledgerTable.AccountNum; ledgerTable.renamePrimaryKey(); } }Open General ledger | Chart of Accounts Details and find the account to be renamed:

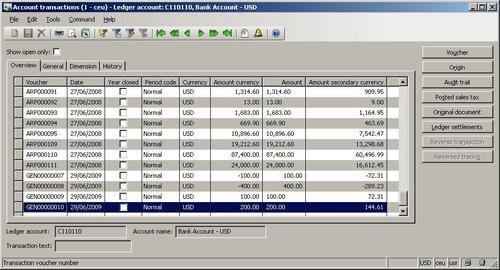

Click Transactions to note existing transactions:

Now, run the job. To check if the renaming was successful and the account retained all its transactions and other related records, open General ledger | Chart of Accounts Details, and find the new account:

Click Transactions to see that existing transactions are still in place:

In this recipe, first we select the desired ledger account, that is, 110110. If the where clause is removed, then in such a case, all ledger accounts would be updated to have C in front of each account.

Next, and finally, we call the table's renamePrimaryKey(), which does the actual renaming. It finds all related records and updates them with the new account number. The operation might take a while depending on the volume of data, as the system has to update every related record.

I noticed that sometimes for various operational reasons, people by accident create duplicate records in the system like vendors, customers, ledger accounts, etc and start entering transactions against them. The reasons could vary from rushing to input new information to the lack of knowledge of how to use the system. But regardless of why this happened, they will always need someone with technical skills to fix it.

In this recipe, we will explore how to correct such a situation by merging two records including all their related transactions. For the demonstration, we will merge two vendor accounts 5001 and 5002 into a single one, that is, 5001.

Open AOT, create a new job called VendAccountMerge, and enter the following code:

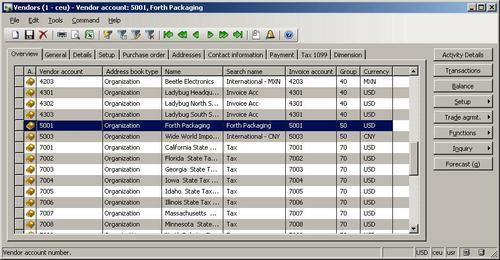

static void VendAccountMerge(Args _args) { VendTable vendTable; VendTable vendTableDelete; PurchJournalAutoSummary jourSummary; #define.vend('5001') #define.vendDelete('5002') ; ttsbegin; delete_from jourSummary where jourSummary.VendAccount == #vendDelete; select firstonly forupdate vendTableDelete where vendTableDelete.AccountNum == #vendDelete; select firstonly forupdate vendTable where vendTable.AccountNum == #vend; vendTableDelete.merge(vendTable); vendTable.doUpdate(); vendTableDelete.doDelete(); ttscommit; }Open Account payable | Vendor Details to check the vendors to be merged:

Run the job to merge the vendor accounts.

Once vendor accounts are merged, all their related records are going to be merged too, that is, all transactions, contacts, addresses, configuration settings, and so on from both vendors will be moved to a single one. Normally, there are no issues with merged transactional data, but vendor configuration settings may issue duplicate errors.

For example, vendor 5001 has bank account Bank1 and vendor 5002 has bank account Bank2. After merging both vendors, vendor 5001 will have both bank accounts attached. If both bank accounts were named the same, then the system would issue a duplicate record error.

So before we start merging records, we need to either manually or programmatically delete or rename settings that might issue duplicate errors. In this example, in the first block of code, right after the variable declaration, we delete only the default auto-summary posting settings, which are stored in the PurchJournalAutoSummary table. Depending on the circumstances, other settings like vendor bank accounts, requests for quotes, and so on, have to be corrected before the actual merge.

Next two blocks of code find both vendor records for further updating.

Calling member method merge() on the record to be deleted transfers all of its data and related records to the destination, which is specified as a first argument.

The last thing to do is to save the destination record and delete the first one.

Such a technique can be used to merge two or even more records. Besides vendors, it could also be customers, ledger accounts, and other such similar records. Every case has to be investigated separately as each record type contains different relations with other tables.

It is a good practice to add some kind of note to the record when doing data renaming, merging, or any other data manipulation task, whether it's manual or automatic. Dynamics AX allows adding a note or a file to any records by using the so called Document handling feature.

By default, it is enabled for all tables, but can be restricted to fewer tables by changing its configuration parameters.

Document handling can be accessed from the form toolbar by selecting the Document handling icon or choosing Document handling from the Command menu. A form appears, which allows adding notes or files to any currently selected record.

Dynamics AX also allows us to add document handling notes from the code, which helps developers or consultants to add additional information when doing various data migration or conversion tasks.

In this recipe, we will add a note to vendor account 5001.

Open AOT and create a new job called VendAccountDocu, and enter the following code:

static void VendAccountDocu(Args _args) { DocuRef docuRef; #define.vend('5001') #define.docuType('Note') ; docuRef.RefCompanyId = curext(); docuRef.RefTableId = tablenum(VendTable); docuRef.RefRecId = VendTable::find(#vend).RecId; docuRef.TypeId = #docuType; docuRef.Name = 'Imported'; docuRef.Notes = 'This vendor was imported.'; docuRef.insert(); }Run the job to create the note.

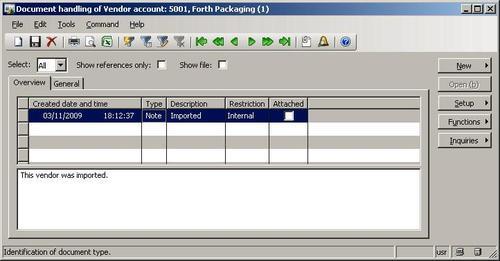

Open Accounts payable | Vendor Details, and locate the vendor account:

Click the Document handling button on the form toolbar or select Document handling from the Command menu to view the note added by our code:

All document handling notes are stored in the DocuRef table, where three fields, RefCompanyId, RefTableId, and RefRecId are used to identify the parent record. In our recipe, we set those fields to the current company account, vendor table ID, and vendor account record ID respectively.

Next, we set note type, name, and description and insert the document handling record. In this way, we added a note to the record. The code in this recipe could be also added to a separate method for further reuse.

Standard Dynamics AX contains numerous temporary tables, which are used by the application and could be used in custom modifications too. Although new temporary tables could also be easily created using AOT, sometimes it is not effective. One of the cases could be when the temporary table is very similar or exactly the same as an existing "real" one. The goal of this recipe is to demonstrate an approach to how standard non-temporary tables could be used as temporary.

As an example, we will use the vendor table to insert and display a couple of temporary records without affecting actual data.

In AOT, create a new class called VendTableTmp with the following code:

class VendTableTmp { } server static void main(Args _args) { VendTable vendTable; ; vendTable.setTmp(); vendTable.AccountNum = '1000'; vendTable.Name = 'Vendor 1'; vendTable.PartyId = '1'; vendTable.doInsert(); vendTable.clear(); vendTable.AccountNum = '1002'; vendTable.Name = 'Vendor 2'; vendTable.PartyId = '2'; vendTable.doInsert(); while select vendTable { info(strfmt( "%1 - %2", vendTable.AccountNum, vendTable.Name)); } }

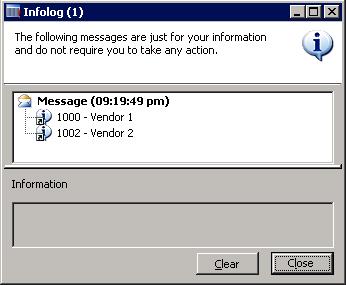

Run the class to see results:

The principle of this recipe is in the setTmp() method. It is available on all tables, and it declares the current table instance to behave as a temporary table in the current scope. So in this recipe, we first call setTmp() on the vendTable table to make it temporary in the scope of this method. That means any data manipulations will be lost once the execution of this method is over and actual table content will not be affected.

Next, we insert couple of test records. Here, we use doInsert() to bypass any validation rules that are not required in this recipe.

The last thing to do is to check for newly created records by listing the vendTable table. We can see that although the table contains many actual records, only the ones which we have inserted here are listed.

I've experienced that one of the tasks often used when manipulating data is record copying. For various reasons, an existing record needs to be modified and saved as a new one. The most obvious example could be when a user requires a function that allows him or her to quickly duplicate records on any of the existing forms.

There are several ways of copying one record into another in X++. In this recipe, we will explain the usage of table data() method, global buf2buf() function, and their differences. As an example, we will copy one of the existing customer records into a new one. Normally, copying a customer involves more data around the customer like customer contacts, bank accounts, printing settings, and similar, but for demonstration purposes, we will assume that our goal is only to copy the customer record itself without worrying about related data.

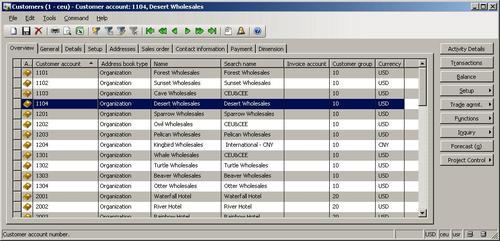

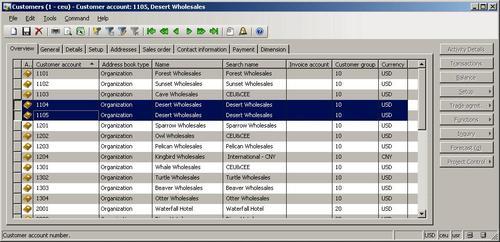

Open Accounts receivable | Customer Details, and find the customer to be copied. In this example, we will use 1104:

Open AOT, create a new job called CustTableCopy with the following code and run it:

static void CustTableCopy(Args _args) { CustTable custTable1; CustTable custTable2; ; custTable1 = CustTable::find('1104'); ttsbegin; custTable2.data(custTable1); custTable2.AccountNum = '1105'; custTable2.PartyId = ''; custTable2.PartyId = DirParty::createPartyFromCommon( custTable2).PartyId; if (!custTable2.validateWrite()) { throw Exception::Error; } custTable2.insert(); ttscommit; }

Open Accounts receivable | Customer Details again, and notice that there two identical customer records now:

In this recipe, we have two variables—custTable1 for original record and custTable2 for new one. First, we find the original record by calling find() on the CustTable table.

Next, we copy it to the new one. Here, we use the data() table member method, which copies all data fields from one variable to another.

After that, we set a new customer account number and new address book ID (we have to clear it before). These two fields are part of unique table indexes, and the system would issue an error if one of them is already used.

Finally, we call insert() on the table, if validateWrite() is successful. In this way, we have created a new customer record, which is exactly the same as the existing one apart from the two fields.

As we saw before, the data() method copies all table fields including system fields like record ID, company account, created user, and so on. Most of the time, it is OK because when the new record is saved, the system fields are overwritten with the new values. But this is not the case when copying records between different companies.

If we were to modify the previously created job to include the changecompany() statement, the job would not work. Company ID would still be copied from original record to the new one and would not be changed during the insert.

To solve similar problems, Dynamics AX provides another function called buf2buf(). It is very similar to the table's data() method with one major difference. buf2buf() copies all data fields excluding the system ones. The code in the function is as follows:

static void buf2Buf( Common _from, Common _to) { DictTable dictTable = new DictTable(_from.TableId); fieldId fieldId = dictTable.fieldNext(0); while (fieldId && ! isSysId(fieldId)) { _to.(fieldId) = _from.(fieldId); fieldId = dictTable.fieldNext(fieldId); } }

We can clearly see that during the copying process, all the table fields are traversed, but the system fields are excluded. We can also see that this function is slower than the internal data(), as it checks and copies field-by-field.

Now that we have learned about this function, let's use it. Let's update a previous example to copy customer records from one Dynamics AX company to another. Do not forget that in practice, such copying would not make any sense as much customer related data will not be copied, but here it will be used as a good example. Update the previous job to (replace TST with your company):

static void CustTableCopy(Args _args)

{

CustTable custTable1;

CustTable custTable2;

;

custTable1 = CustTable::find('1104');

changecompany('TST')

{

ttsbegin;

buf2buf(custTable1, custTable2);

custTable2.AccountNum = '1105';

custTable2.PartyId = '';

custTable2.PartyId = DirParty::createPartyFromCommon(

custTable2).PartyId;

if (!custTable2.validateWrite())

{

throw Exception::Error;

}

custTable2.insert();

ttscommit;

}

}There are only two differences here. First we change the current company before we start creating a new record. Second, we replace data() with buf2buf(). The latter function accepts two records as arguments—source and destination. When we run this job, a new record will be created in another company.

Query objects are used to visually build SQL statements. They are used by Dynamics AX reports, views, forms, and other objects. Normally queries are stored in AOT, but they can also be created from code dynamically. This is normally done when visual tools cannot handle complex and dynamic queries. In this recipe, we will create one dynamically from code.

As an example, we will build a query that selects all active customers who belong to group 10 and have at least one sales order.

Open AOT, create a new job called CustTableSales, and enter the following code:

static void CustTableSales(Args _args) { Query query; QueryBuildDataSource qbds1; QueryBuildDataSource qbds2; QueryBuildRange qbr1; QueryBuildRange qbr2; QueryRun queryRun; CustTable custTable; ; query = new Query(); qbds1 = query.addDataSource(tablenum(CustTable)); qbds1.addSortField( fieldnum(CustTable, Name), SortOrder::Ascending); qbr1 = qbds1.addRange(fieldnum(CustTable,Blocked)); qbr1.value(queryvalue(CustVendorBlocked::No)); qbr2 = qbds1.addRange(fieldnum(CustTable,CustGroup)); qbr2.value(queryvalue('10')); qbds2 = qbds1.addDataSource(tablenum(SalesTable)); qbds2.relations(false); qbds2.joinMode(JoinMode::ExistsJoin); qbds2.addLink( fieldnum(CustTable,AccountNum), fieldnum(SalesTable,CustAccount)); queryRun = new QueryRun(query); while (queryRun.next()) { custTable = queryRun.get(tablenum(CustTable)); info(strfmt( "%1 - %2", custTable.Name, custTable.AccountNum)); } }

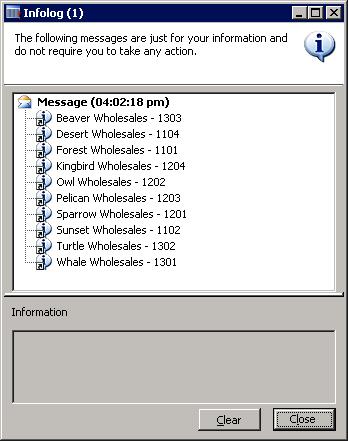

Run the job, and the following screen should appear:

First, we create a new query object. Next, we add a new CustTable data source to the query by calling its addDataSource() member method. The method returns a reference to the QueryBuildDataSource object—qbds1. Here, we call addSortField() to enable sorting by customer name.

The following two blocks of code creates two filter ranges. The first is to show only active customers and the second one is to list only customers belonging to a single group 10. Those two filters are automatically added together using the SQL AND operator. QueryBuildRange objects are created by calling the addRange() member method of the QueryBuildDataSource object with the field ID number as argument. Range value is set by calling value() on the QueryBuildRange object itself. It is a good practice to use queryvalue() or a similar function to process values before applying them as a range. More functions like querynotvalue(), queryrange(), and so on can be found in the Global application class. Note that these functions actually process data using the SysQuery application class, which in turn has even more interesting helper methods that might be handy for every developer.

Adding another data source to an existing one connects both data sources using the SQL JOIN operator. It this example, we are displaying customers that have at least one sales order. We start by adding the SalesTable table as another data source. We are going to use custom relations between those tables, so we need to disable standard relations by calling the relations() method with false as an argument. Calling joinMode() with JoinMode::ExistsJoin as a parameter ensures that a record from a parent data source will be displayed only if the relation exists in its attached data source. And finally, we create a relation by calling addLink() and passing the field ID number of both tables.

Last thing to do is to create and run the queryRun object and show the selected data on the screen.

It is worth mentioning a couple of specific cases when working with query objects from code. One of them is how to use the OR operator and the other one is how to address array fields.

As you have already noted, regardless of how many ranges are added, all of them will be added together using the SQL AND operator. In most cases, it is fine, but sometimes complex user requirements demand ranges to be added using SQL OR. There might be a number of work-arounds, like using temporary tables or similar, but I use the Dynamics AX feature that allows passing raw SQL as a range.

In this case, the range has to be formatted like the fully qualified SQL WHERE clause including field names, operators, and values. Each separate clause has to be in brackets. It is also very important that filter values, especially if they are specified by the user, have to be properly formatted before using them in a query.

Let's replace the code from the previous example:

qbr2.value(queryValue('10'));with the new code:

qbr2.value(strfmt(

'((%1 = "%2") || (%3 = "%4"))',

fieldstr(CustTable,CustGroup),

queryvalue('10'),

fieldstr(CustTable,Currency),

queryvalue('EUR')));Now, the result would also include all the customers having the default currency EUR.

Some table fields in Dynamics AX are based on extended data types, which contains more than one array element. An example in a standard application could be financial dimensions based on the Dimension extended data type or project sorting based on ProjSortingId. Although such fields are very much the same as normal fields, in queries, they should be addressed slightly different. To demonstrate the usage, let's modify the example by filtering the query to list only customers containing a specific Purpose value. In the standard application, Purpose is the third financial dimension, where the first is Department and the second is Cost centre.

First, let's declare a new QueryBuildRange object in the variable declaration section:

Next, we add the following code right after the qbr2.value(…) code:

qbr3 = qbds1.addRange(

fieldid2ext(fieldnum(CustTable,Dimension),3));

qbr3.value(queryvalue('Site1'));Notice that we use the global fieldid2ext() function, which converts the field ID and the array number into a valid number to be used by addRange(). This function can also be used anywhere, where addressing the dimension fields is required. The value 3 as its second argument here means that we are using a third dimension, that is, Purpose. In my application, I have purposes defined as Site1, Site2, and Site3, so I simply use the first one as filter criteria.

Now, when we run this job, the customer list based on previous criteria will be reduced even more to match customers having only a specific Purpose set.

In a standard Dynamics AX application, there are macros like InventDimJoin and InventDimSelect, which are reused numerous times across the application. Those macros are actually full or partial X++ SQL queries, which can be called with various arguments. Such approach saves developing time and makes upgrades easier.

In this recipe, we will create a small macro, which holds a single where clause to display only active vendor records. Then, we will create a job which uses created macro for displaying a vendor list.

Open AOT, and create a new macro called VendTableNotBlocked with the following code:

(%1.Blocked == CustVendorBlocked::No)

In AOT, also create a new job called VendTableMacro with the following code:

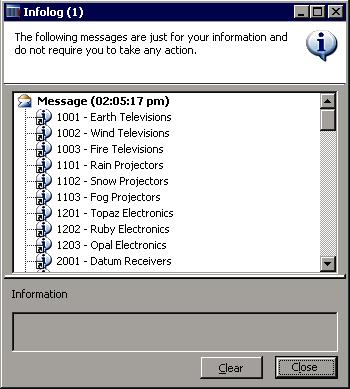

static void VendTableMacro(Args _args) { VendTable vendTable; ; while select vendTable where #VendTableNotBlocked(vendTable) { info(strfmt( "%1 - %2", vendTable.AccountNum, vendTable.Name)); } }Run the job, and inspect the results, as displayed on the screen:

First, we define a macro that holds our where clause. Normally, the purpose of defining SQL in a macro is to reuse it a number of times in various places. We use %1 as an argument. More arguments could be used.

Next, we create a job with the select statement. Here, we use the previously created macro in a where clause. We pass vendTable as an argument.

The query works like any other query, but the advantage is that the code in the macro could be reused elsewhere.

Note that although using a macro in a SQL statement can reduce the amount of code, too much code in it might reduce the SQL statement's readability for other developers. So keep it balanced.

Dynamics AX allows developers to build X++ SQL statements flexible enough to fit any custom business process. But in several cases, the usage of X++ SQL is either not effective or not possible at all.

One of the cases is when we run data upgrade tasks during an application version upgrade. The standard application contains a set of data upgrade tasks to be completed during the version upgrade. If the application is highly customized, then most likely, the standard tasks has to be modified to reflect data dictionary customizations or even a new set of tasks have to be created to make sure data is handled correctly during the upgrade.

Normally, at this stage, SQL statements are so complex that they can only be created using database-specific SQL and executed directly in the database. Additionally, running direct SQL statements dramatically increases data upgrade performance because most of the code is executed on the database server where all data resides. This is very important while working with large volumes of data.

Another case when we would need to use direct SQL statements is when we want to connect to an external database using the ODBC driver. In this case, X++ SQL is not supported at all.

This recipe will demonstrate how to execute direct SQL statements. We will connect to the current Dynamics AX database directly using an additional connection and will retrieve the list of vendor accounts.

Open AOT, and create a new class called vendTableSql with the following code:

class VendTableSql { } server static void main(Args _args) { UserConnection userConnection; Statement statement; str sqlStatement; SqlSystem sqlSystem; SqlStatementExecutePermission sqlPermission; ResultSet resultSet; DictTable tblVendTable; DictField fldAccountNum; DictField fldName; DictField fldDataAreaId; DictField fldBlocked; ; tblVendTable = new DictTable(tablenum(VendTable)); fldAccountNum = new DictField( tablenum(VendTable), fieldnum(VendTable,AccountNum)); fldName = new DictField( tablenum(VendTable), fieldnum(VendTable,Name)); fldDataAreaId = new DictField( tablenum(VendTable), fieldnum(VendTable,DataAreaId)); fldBlocked = new DictField( tablenum(VendTable), fieldnum(VendTable,Blocked)); sqlSystem = new SqlSystem(); sqlStatement = 'SELECT %2, %3 FROM %1 ' + 'WHERE %4 = %6 AND %5 = %7'; sqlStatement = strfmt( sqlStatement, tblVendTable.name(DbBackend::Sql), fldAccountNum.name(DbBackend::Sql), fldName.name(DbBackend::Sql), fldDataAreaId.name(DbBackend::Sql), fldBlocked.name(DbBackend::Sql), sqlSystem.sqlLiteral(curext(), true), sqlSystem.sqlLiteral(CustVendorBlocked::No, true)); userConnection = new UserConnection(); statement = userConnection.createStatement(); sqlPermission = new SqlStatementExecutePermission( sqlStatement); sqlPermission.assert(); resultSet = statement.executeQuery(sqlStatement); CodeAccessPermission::revertAssert(); while (resultSet.next()) { info(strfmt( "%1 - %2", resultSet.getString(1), resultSet.getString(2))); } }

Run the class, and notice the list of vendors retrieved directly from the database:

We start the code by creating DictTable and DictField objects for handling the vendor table and its fields later in the query.

A new SqlSystem object also has to be created. It will be used to convert Dynamics AX types to SQL types.

Next, we set up a SQL statement with %1, %2, and other placeholders for table or field names or values to be inserted later.

The main query creation happens next when the query placeholders are replaced with the right values. Here we use previously created DictTable and DictField type objects by calling their name() methods with the DbBackend::Sql enumeration as an argument. This ensures that we pass the name exactly how it is used in the database. For example, the Dimension field in VendTable actually consists of a set of three fields, which are named in the database DIMENSION, DIMENSION2_, and DIMENSION3_ respectively and using DbBackend::Sql would return us exactly that.

We also use the sqlLiteral() of the previously created sqlSystem object to properly format SQL values to make sure that they do not have any unsafe characters.

Once the SQL statement is ready, we initialize a direct connection to the database and run the statement. The result is a resultSet object, and we get the results by using the while statement and calling its next() until the end.

Note that we create an sqlPermission object of type SqlStatementExecutePermission here and call its assert() before executing the statement. This is required in order to comply with Dynamics AX SQL execution security requirements.

Another thing to mention is that when building direct SQL queries, special attention has to be paid to license, configuration, and security keys. Some tables or fields might be disabled in the application and may not be present in the database.

The code in this recipe can be also used to connect to the external ODBC databases. We only need to replace the UserConnection class with OdbcConnection and use text names instead of DictTable and DictField.

The Standard Dynamics AX application provides an alternate way of building direct SQL statements by using a set of SQLBuilder classes. By using those classes, we can create SQL statements as objects as oppose to text. We will demonstrate next how to use a set of SQLBuilder classes. We will create the same SQL statement as before.

First in AOT, we create another class called VendTableSqlBuilder with the following code::

class VendTableSqlBuilder { } server static void main(Args _args) { UserConnection userConnection; Statement statement; str sqlStatement; SqlStatementExecutePermission sqlPermission; ResultSet resultSet; SQLBuilderSelectExpression selectExpr; SQLBuilderTableEntry vendTable; SQLBuilderFieldEntry accountNum; SQLBuilderFieldEntry name; SQLBuilderFieldEntry dataAreaId; SQLBuilderFieldEntry blocked; ; selectExpr = SQLBuilderSelectExpression::construct(); vendTable = selectExpr.addTableId( tablenum(VendTable)); accountNum = vendTable.addFieldId( fieldnum(VendTable,AccountNum)); name = vendTable.addFieldId( fieldnum(VendTable,Name)); dataAreaId = vendTable.addFieldId( fieldnum(VendTable,DataAreaId)); blocked = vendTable.addFieldId( fieldnum(VendTable,Blocked)); vendTable.addRange(dataAreaId, curext()); vendTable.addRange(blocked, CustVendorBlocked::No); selectExpr.addSelectFieldEntry( SQLBuilderSelectFieldEntry::newExpression( accountNum, 'AccountNum')); selectExpr.addSelectFieldEntry( SQLBuilderSelectFieldEntry::newExpression( name, 'Name')); sqlStatement = selectExpr.getExpression(null); userConnection = new UserConnection(); statement = userConnection.createStatement(); sqlPermission = new SqlStatementExecutePermission( sqlStatement); sqlPermission.assert(); resultSet = statement.executeQuery(sqlStatement); CodeAccessPermission::revertAssert(); while (resultSet.next()) { info(strfmt( "%1 - %2", resultSet.getString(1), resultSet.getString(2))); } }

In this method, we first create a new selectExpr object, which is based on the SQLBuilderSelectExpression class. It represents the object of the SQL statement.

Next, we add the VendTable table to it by calling its member method addTableId(). The method returns a reference to the vendTable object of type SQLBuilderTableEntry, which corresponds to a table node in a SQL query.

Then, we create four field objects of type SQLBuilderFieldEntry to be used later and two ranges to show only this company account and only active vendor accounts.

We use addSelectFieldEntry() to add two fields to be selected. Here we use the previously created field objects.

The SQL statement is generated once getExpression() is called and the rest of the code is the same as in the previous example.

Running the class would give us results exactly like the ones we got before.

It is highly recommended from time-to-time to run the standard Dynamics AX data consistency check found in Basic | Periodic | Consistency check to check system data integrity. This function finds orphan data, validates parameters, and does many other things. But in one of my recent projects, I have noticed that it does not do everything. For example, it does not check the data in the AssetLedgerAccounts table, which holds the set up fixed asset posting profiles.

In this recipe, we will see how we can enhance the standard Dynamics AX consistency check to include more tables in its data integrity validation.

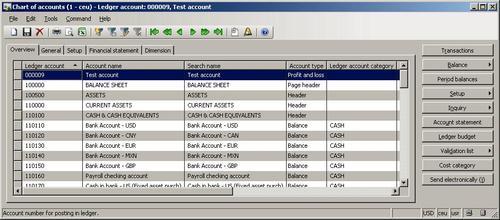

Before we start, we need to create an invalid setup to make sure we can simulate data inconsistency. Open General ledger | Chart of Account Details and create a new ledger account, for instance, 000009:

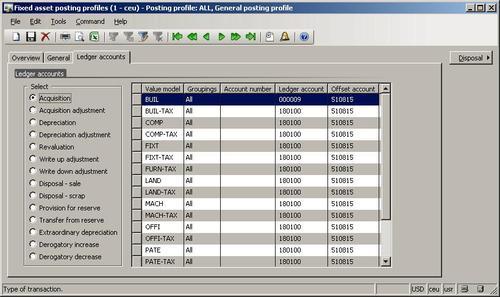

Open General ledger | Setup | Fixed assets | Posting profiles and specify the new account in the Ledger account field, for example, for any records:

Go back to the Chart of accounts form, and delete the previously created account.

Now, we have a non-existing ledger account in the fixed asset posting settings.

Open the LedgerConsistencyCheck class in AOT, and add the following code to the end of its

run():this.kernelCheckTable(tablenum(AssetLedgerAccounts));

Open Basic | Periodic | Consistency check, select General ledger node, and click Execute on the right-hand side:

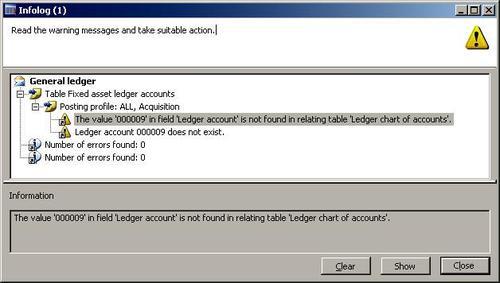

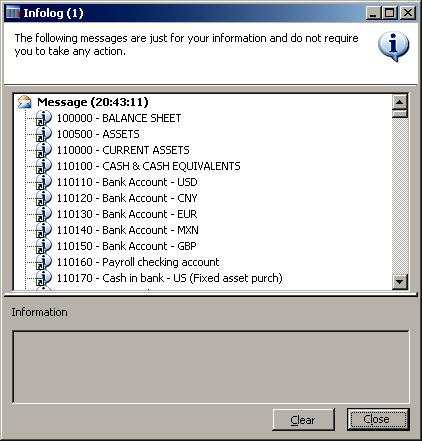

Now, the message displayed in Infolog tells us that the account is missing in the fixed asset posing settings:

The consistency check in Dynamics AX validates only the predefined list of tables for each module. The system contains a number of classes derived from SysConsistencyCheck. For example, the CustConsistencyCheck class is responsible for validating the Accounts receivable module, LedgerConsistencyCheck—for General ledger and so on. Each class performs a check for the tables defined in its run().In this recipe, we include the AssetLedgerAccounts table into the latter class, that is, LedgerConsistencyCheck. AssetLedgerAccounts table is where fixed asset posting settings are stored and in this way, we ensure that the settings are validated during the General ledger consistency check.

Briefly, Extensible Markup Language (XML) defines a set of rules for encoding documents electronically. It allows creating of all kind of structured documents. In Dynamics AX, XML files are widely used across the application. For example, user profiles can be exported as XML files. Business data like financial statements can also be exported as XBRL (eXtensible Business Reporting Language) files, which are based on XML.

Probably, the main thing that is associated with XML in Dynamics AX is the Application Integration Framework. It is an infrastructure that allows exposing business logic or exchanging data with other external systems. The communication is done by using XML formatted documents. By using existing XML framework application classes prefixed with Axd, you can export or import data from or to the system in an XML format. It is also possible to create new Axd classes using the AIF Document Service Wizard from the Tools | Development tools | Wizards menu to support exporting and importing newly created tables.

Dynamics AX also contains a set of application classes prefixed with Xml like XmlDocument, and XmlNode. Basically, those classes are wrappers around the System.XML namespace in .NET Framework.

In this recipe to show the principle of XML, we will create a new simple XML document by using the latter classes. We will create the file with the data from the chart of accounts table and will save it as an XML file.

Open AOT and create a new class called CreateXmlFile with the following code. Replace

<documents>with your own path (use double backslashes for folder separation, that is,\\):class CreateXmlFile { } public static void main(Args _args) { XmlDocument doc; XmlElement nodeXml; XmlElement nodeTable; XmlElement nodeAccount; XmlElement nodeName; LedgerTable ledgerTable; #define.filename('<documents>\\accounts.xml') ; doc = XmlDocument::newBlank(); nodeXml = doc.createElement('xml'); doc.appendChild(nodeXml); while select ledgerTable { nodeTable = doc.createElement(tablestr(LedgerTable)); nodeTable.setAttribute( fieldstr(LedgerTable, RecId), int642str(ledgerTable.RecId)); nodeXml.appendChild(nodeTable); nodeAccount = doc.createElement( fieldstr(LedgerTable, AccountNum)); nodeAccount.appendChild( doc.createTextNode(ledgerTable.AccountNum)); nodeTable.appendChild(nodeAccount); nodeName = doc.createElement( fieldstr(LedgerTable, AccountName)); nodeName.appendChild( doc.createTextNode(ledgerTable.AccountName)); nodeTable.appendChild(nodeName); } doc.save(#filename); }

Run the class. The XML file accounts.xml should be created in the specified folder. Open it using Internet Explorer, and review the created XML structure:

We start by creating a new XmlDocument, which represents an XML structure using its newBlank() method. Then we create its root node named xml using createElement(), and add the node to the document by calling the document's appendChild() method.

Next, we go though the LedgerTable table and do the following for each record:

Create a new XmlElement node, which is named exactly as the table name, and add this node to the root node.

Create a node representing the account number field and its child node representing its value. The account number node is created using

createElement(), and its value is created usingcreateTextNode(). ThecreateTextNode()method basically adds a value as text with no XML tags.Add the account number node to the table node.

Create a node representing the account name field and its child node representing its value.

Finally, we save the created XML document as a file.

In this way, we could create documents having virtually any structure.

The exporting to an XML file recipe has already explained the importance of XML in Dynamics AX, and how XML documents could be used for exporting data from the system.

In this recipe, we will continue about XML. We will create a piece of code that reads XML files. As a source file, we will use the previously created accounts.xml file.

Open AOT, and create a new class called ReadXmlFile with the following code. Replace

<documents>with your own path (use double backslashes for folder separation, i.e.\\):class ReadXmlFile { } public static void main(Args _args) { XmlDocument doc; XmlNodeList data; XmlElement nodeTable; XmlElement nodeAccount; XmlElement nodeName; #define.filename('<documents>\\accounts.xml') ; doc = XmlDocument::newFile(#filename); data = doc.selectNodes('//'+tablestr(LedgerTable)); nodeTable = data.nextNode(); while (nodeTable) { nodeAccount = nodeTable.selectSingleNode( fieldstr(LedgerTable, AccountNum)); nodeName = nodeTable.selectSingleNode( fieldstr(LedgerTable, AccountName)); info(strfmt( "%1 - %2", nodeAccount.text(), nodeName.text())); nodeTable = data.nextNode(); } }

Run the class. Infolog should display the contents of the

accounts.xmlfile on the screen:

In this recipe, we first create a new XmlDocument. We create it from the file and hence we have to use newFile(). Then we get all the document nodes of the table as XmlNodeList. We also get its first element by calling nextNode().

Next, we loop through all the list elements and do the following:

Get an account number node as an XmlElement.

Get an account name node as an XmlElement.

Display the text of both nodes in Infolog. Normally, this should be replaced with more sensible code to process the data.

Get the next list element.

In this way, we retrieve the data from the XML file. A similar approach could be used to read any other XML file.

Comma-separated value (CSV) files are widely used across various systems. Although nowadays modern systems use XML formats for data exchange, CSV files are still popular because of the simplicity of their format. Normally, the data in the file is organized so one line corresponds to one record and each line contains a number of values normally separated by commas. Record and value separators could be any other symbol depending on the system requirements.

I have been successfully using CSV files for custom data migration tasks from/to Dynamics AX when the standard Data export/import utility is not enough. Speaking about Dynamics AX Data export/import utility, I have to mention that besides Binary and Excel formats, it can also handle CSV files.

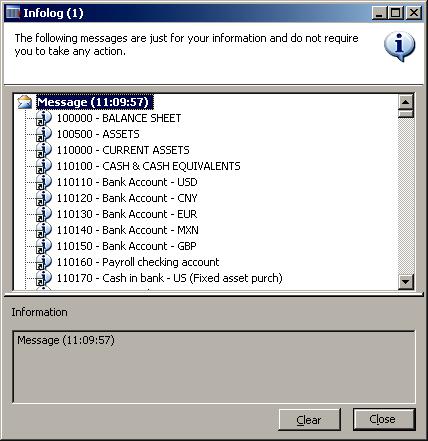

In this recipe, we will learn how to create a custom comma-separated file from code. We will export a list of ledger accounts—account number and name.

Open AOT, and create a new class called CreateCommaFile with the following code. Replace

<documents>with your own path (use double backslashes for folder separation, i.e.\\):class CreateCommaFile { } public static client void main(Args _args) { CommaIo file; container line; LedgerTable ledgerTable; #define.filename('<documents>\\accounts.csv') #File ; file = new CommaIo(#filename, #io_write); if (!file || file.status() != IO_Status::Ok) { throw error("File cannot be opened."); } while select ledgerTable { line = [ ledgerTable.AccountNum, ledgerTable.AccountName]; file.writeExp(line); } }

Run the class. A new file called

accounts.csvshould be created in the specified folder. Open that file with Notepad to view the results:

In the variable declaration section of the main() method of the CreateCommaFile class along with other variables we define a name for the output file. Normally, this should be replaced with a proper input variable. Here, we also define a standard #File macro which contains a number of file-handling modes like #io_read, #io_write, #io_append, etc., file types, delimiters, and other things.

Next, we create a new CSV file by calling new() on a standard CommaIo class. It accepts two parameters—filename and mode. For mode, we use #io_write from the #File macro to make sure a new file is created and opened for further writing. If a file with the given name already exists, then it will be overwritten. To make sure that a file is created successfully, we check if the object file exists and its status is valid, otherwise we show an error message.

In multilingual environments, it is better to use CommaTextIo. It behaves the same way as CommaIo does plus it supports Unicode, which allows us to process data with various language-specific symbols.

And finally, we loop though the LedgerTable table, store its account number and name fields into a container, and write them to the file using writeExp().

In this way, we create a new comma-separated value file with the list of ledger accounts.

You probably already noticed that the main() method has a client modifier, which forces its code to run on the client. When dealing with large amounts of data, it is more effective to run the code on the server. To do that, we need to change the modifier to server. The class below generates exactly the same file as before, except that this file is created on the folder on the server's file system:

class CreateCommaFileServer { } public static server void main(Args _args) { CommaIo file; container line; LedgerTable ledgerTable; FileIoPermission perm; #define.filename('<documents>\\accounts.csv') #File ; perm = new FileIoPermission(#filename, #io_write); perm.assert(); file = new CommaIo(#Filename, #io_write); if (!file || file.status() != IO_Status::Ok) { throw error("File cannot be opened."); } while select ledgerTable { line = [ ledgerTable.AccountNum, ledgerTable.AccountName]; file.writeExp(line); } CodeAccessPermission::revertAssert(); }

Make sure you replace <documents> with your own folder on the server. Use double backslashes for folder separation, i.e. \\.

File manipulation on the server is protected by Dynamics AX code access security and we must use the FileIoPermission class to make sure we match the requirements. That's why we have to call the following code before opening the file to assert permissions:

perm = new FileIoPermission(#Filename, #io_write); perm.assert();

and the following code after we completed all file operations to make sure we clear assertions:

Besides data import/export, CSV files could be used for integration between systems. It is probably the most simple integration approach. I've seen and worked on a number of projects where an external application, for example a specialized billing system, generates CSV files every day. Dynamics AX runs a periodic batch job, which reads the generated files every night and imports the data, for instance, sales orders. Although this is not a real-time integration, in most cases it does the job and does not require any additional components like Dynamics AX Application Integration Framework or something similar.

Another well known example is when external companies are hired to manage the payroll. On a periodic basis, they send CSV files to the finance department, which are then loaded into the General journal in Dynamics AX and processed as usual.

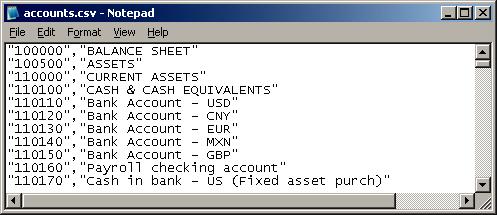

In this recipe, we will learn how to read CSV files from code. As an example, we will process the file created in a pervious recipe.

In AOT, create a new class named ReadCommaFile with the following code. Replace

<documents>with your own path (use double backslashes for folder separation, i.e.\\):class ReadCommaFile { } public static client void main(Args _args) { CommaIo file; container line; #define.filename('<documents>\\accounts.csv') #File ; file = new CommaIo(#filename, #io_read); if (!file || file.status() != IO_Status::Ok) { throw error("File cannot be opened."); } while (file.status() == IO_Status::Ok) { line = file.read(); if (line != connull()) { info(con2str(line, ' - ')); } } }

Run the class to view the file's content:

.

As in the previous recipe, we first create a new file object using CommaIo. This time we use #io_read as the mode to make sure that the existing file is opened for reading only. We also perform the same validations to make sure that the file object is correctly created, otherwise we show an error message.

Next and finally, we read the file line by line until we reach the end of the file. Here we use the while loop until the file status changes from IO_Status::OK to IO_Status::FileTooShort, which means no more lines exist in the file. Inside the loop, we call read() on the file object, which returns the current line as a container and moves the internal file cursor to the next line. File data is then simply outputted to the screen using the standard global info() function in conjunction with con2str(), which converts a container to a string for displaying.

The last element of code where data is outputted normally should be replaced by proper code that processes the incoming data.

File reading, like file writing, could also be executed on a server to improve performance. The modifier client has to be changed to server, and code with the FileIoPermission class has to be added to fulfill the code access security requirements. The modified class should look like the following:

class ReadCommaFileServer { } public static server void main(Args _args) { CommaIo file; container line; FileIoPermission perm; #define.filename('<documents>\\accounts.csv') #File ; perm = new FileIoPermission(#filename, #io_read); perm.assert(); file = new CommaIo(#Filename, #io_read); if (!file || file.status() != IO_Status::Ok) { throw error("File cannot be opened."); } while (file.status() == IO_Status::Ok) { line = file.read(); if (line != connull()) { info(con2str(line, ' - ')); } } CodeAccessPermission::revertAssert(); }

Normally, during the testing stage of any Dynamics AX project, system configuration is adjusted depending on the testing results. This process may be performed as many times as required until the system is ready. During this process, it might appear that at some point the system have too many transactions, which may impact further tests. If this happens, then the data is wiped out by exporting configuration data, deleting whole company, and importing configuration data back. Data clean-up is also required when "fresh" retesting is required.

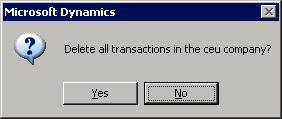

Although the described process does the job, it might be slightly time consuming. During my developments, I found the undocumented class called SysDatabaseTransDelete in the standard Dynamics AX application. Once started, it deletes all transactional data in the current company. It allows us to quickly get rid of all transactions and leaves configuration data intact.

But although this class is useful during the testing stages, it definitely should not be used in production environments.

In this recipe, we will explore how to delete all transactional data using the mentioned application class.

First, we need to make a copy of an existing company account to make sure we are not deleting the data in the current company. Open Administration | Company accounts, and select the current company:

Click on the Duplicate button, and then type in the details for a new company:

After a couple of moments, a new company should be created. Select it in the form and click on the Select button to make sure you start working in it:

Next open AOT, find the SysDatabaseTransDelete class in AOT, and run it.

Click Yes to acknowledge that you want to delete all transactions:

In this recipe, we use the SysDatabaseTransDelete class, which is included in the standard Dynamics AX application. No additional coding is required. We simply need to run it in the company that we want to clean up. This class is not included in any menu, so it has to be started from AOT. The class deletes all data in the current company account from all tables belonging to the Transaction, WorksheetHeader, and WorksheetLine table groups.