It’s time we looked at how to properly work with textures within a material, something that we’ll do now thanks to the small toy tank prop we’ve used in the last two recipes. The word properly is the key element in the previous sentence, for even though we’ve worked with images in the past, we haven’t really manipulated them inside the editor or seen how we can adjust them without leaving the editor. It’s time we did that, so let’s take care of it in this recipe!

Getting ready

You probably know the drill by now—just like in previous recipes, there’s not a lot that you’ll actually need in order to follow along. Most of the assets that we are going to be using can be acquired through the Starter Content asset pack, except for a few that we provide alongside this book’s Unreal Engine 5 project.

As you are about to see, we’ll continue using our famous toy tank model and material, so you can use either those or your own assets; we are dealing with fairly simple assets here, and the important bit is the actual logic that we are going to create within the materials.

If you want to follow along with me, feel free to open the level named 02_03_Start so that we are looking at the same scene.

How to do it…

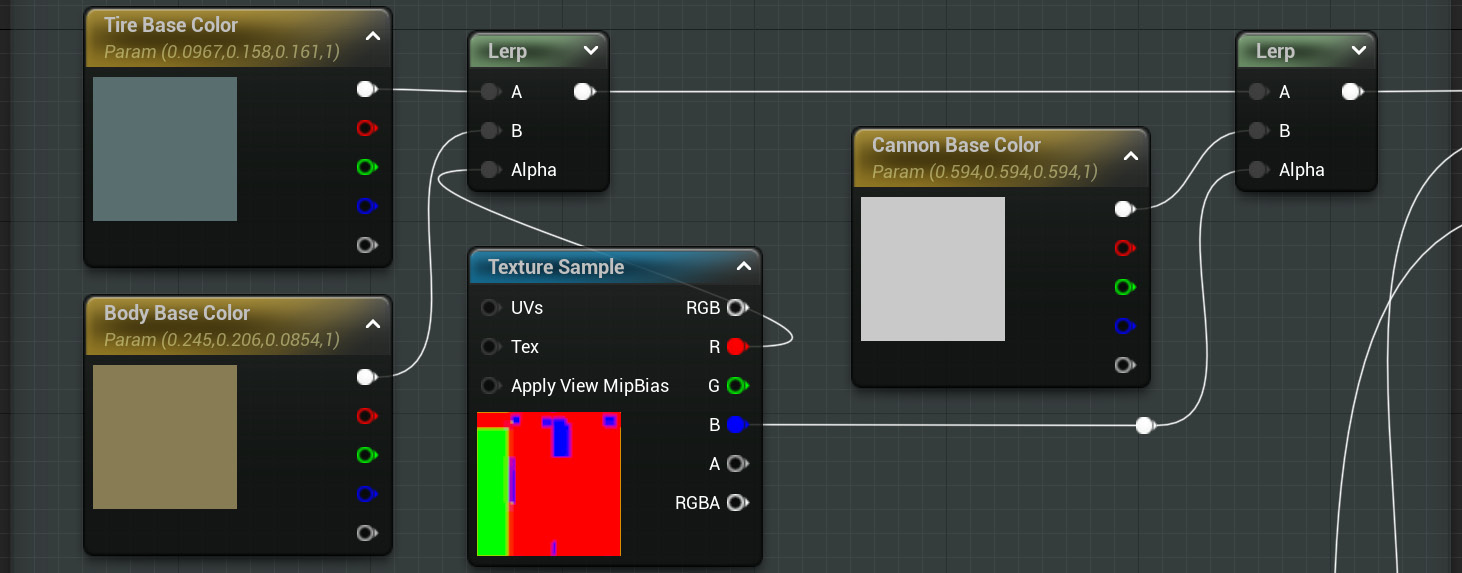

So far, all we’ve used in our toy tank material are simple colors—and even though they look good, we are going to make the jump to realistic textures this time. Let’s start:

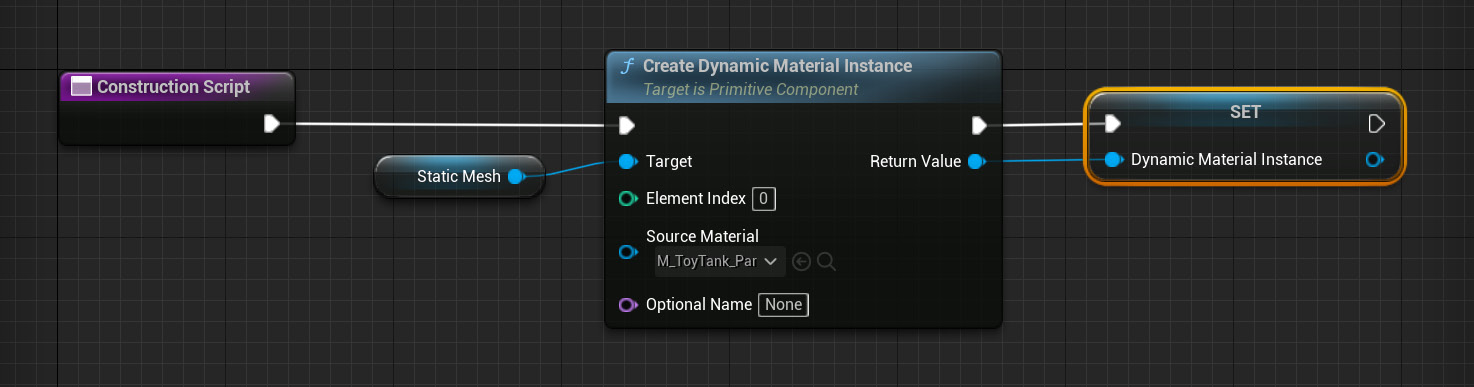

- Duplicate the material we created in the first recipe of this chapter, M_ToyTank. This is going to be useful, as we don’t want to reinvent the wheel so much as expand a system that already works. Having everything separated with masks and neatly organized will speed things up greatly this time. Name the new asset whatever you like—I’ve gone with M_ToyTank_Textured.

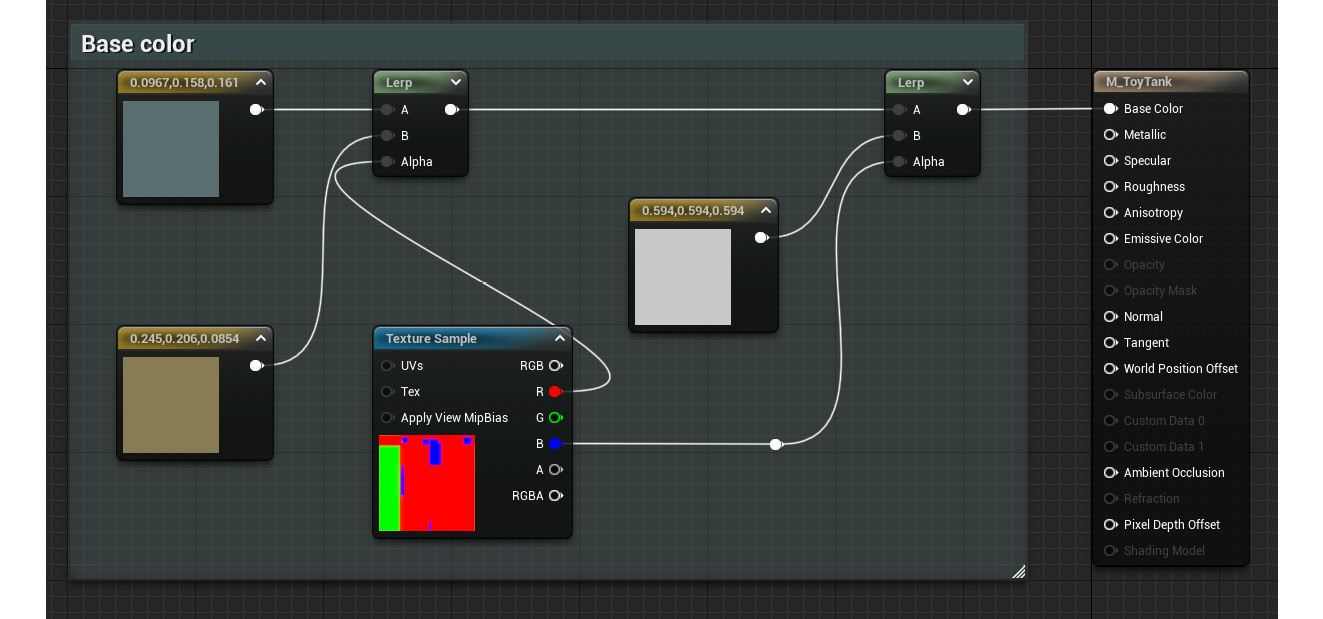

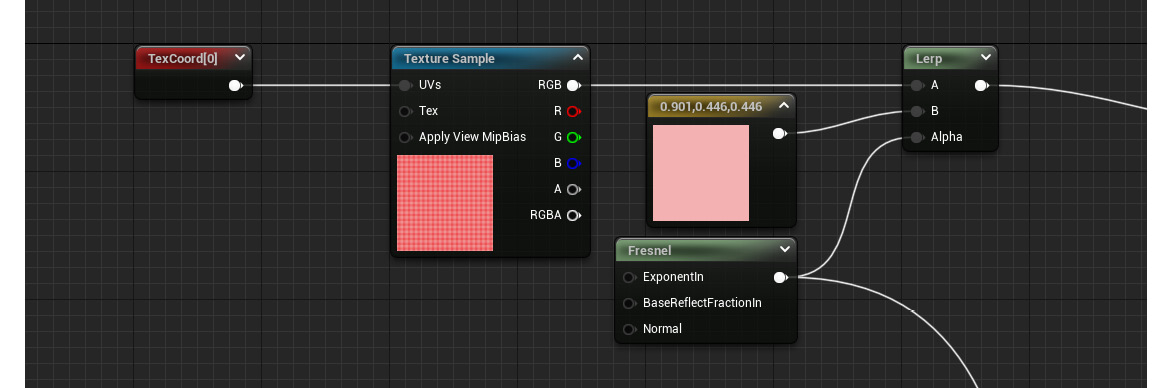

- Open the material graph by double-clicking on the newly created asset and focus on the Base Color section of the material. Look for the Constant3Vector node that is driving the appearance of the main body of the tank (the one using a brownish shade) and delete it.

- Create a new Texture Sample node and assign the T_Wood_Pine_D texture to it (this asset comes with the Starter Content asset pack, so we should already have access to it).

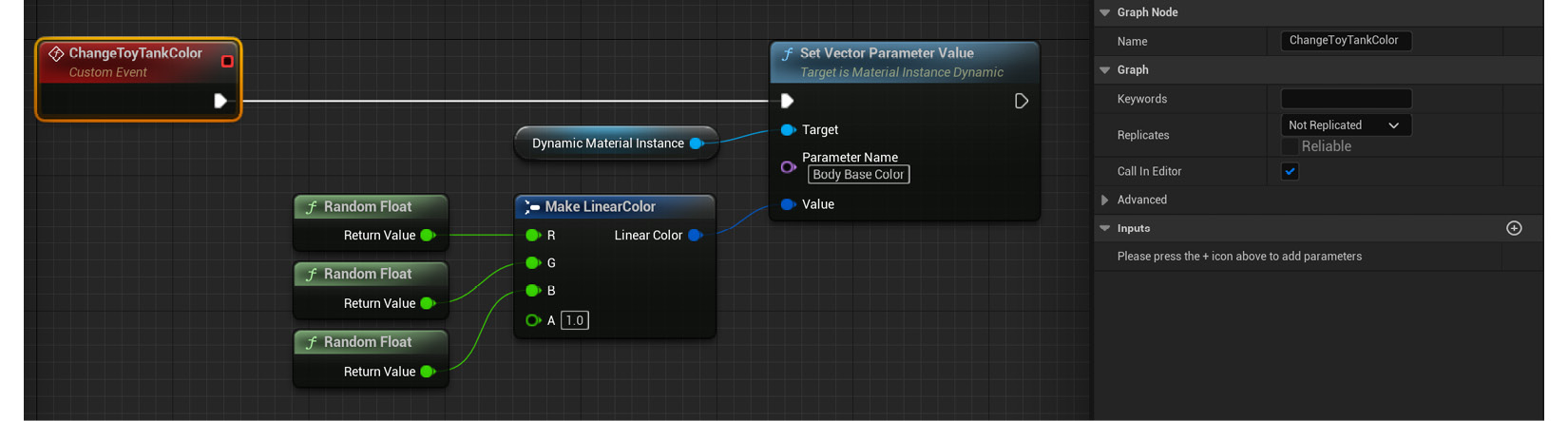

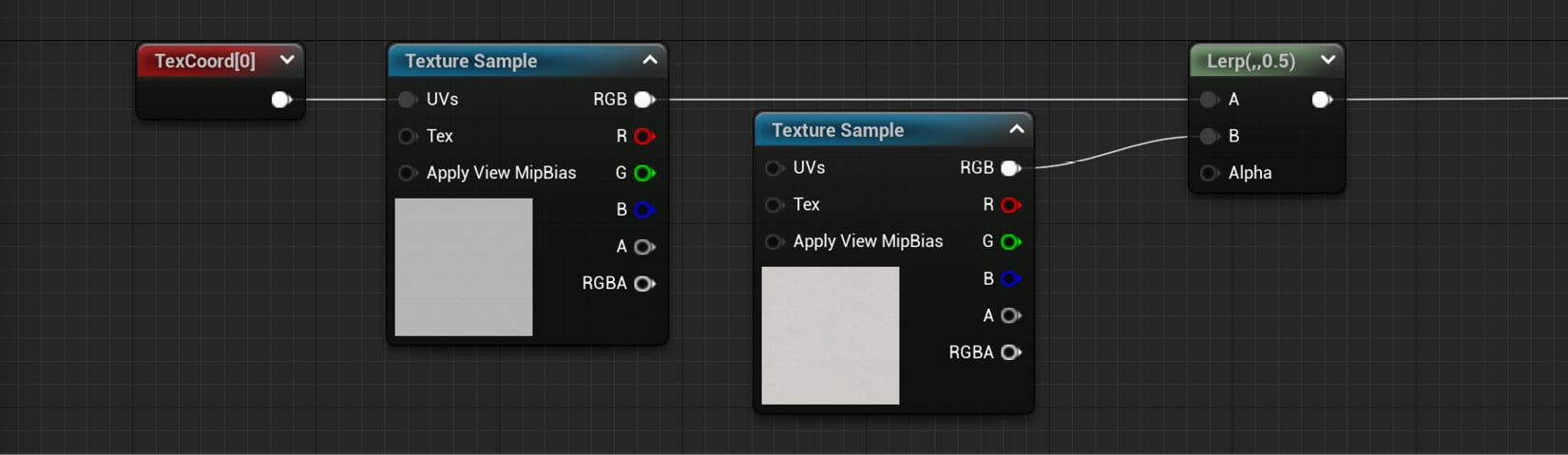

- Connect the RGB output pin of the new Texture Sample node to the B input pin of the Lerp node, to which the recently deleted Constant3Vector node used to be connected:

Figure 2.14 – The now-adjusted material graph

This will drive the appearance of the main body of the tank.

Tip

There’s a shortcut for the Texture Sample node: the letter T on your keyboard. Hold it and left-click anywhere within the material graph to create one.

There are a couple of extra things that we’ll be doing with this texture next. First, we can adjust the tiling so that the wood veins are a bit closer to each other once we apply the material to the model. Second, we might want to rotate it to change the direction of the wood grain. Even though this is an aesthetic choice, it’s worth getting familiar with these nodes, as they come up often when adjusting textures.

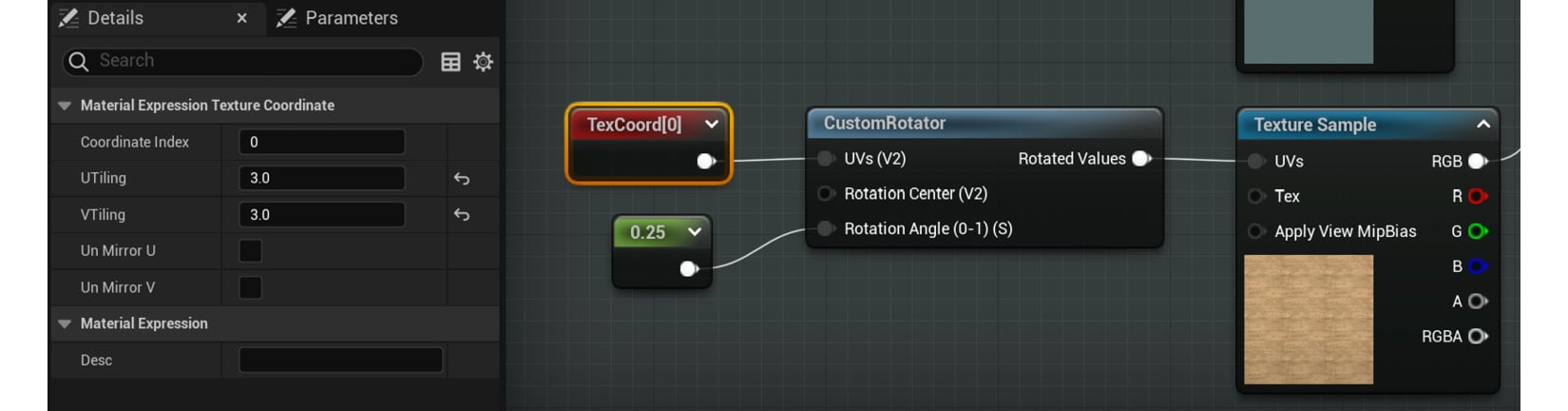

- While holding down the U key on your keyboard, left-click in an empty space of the material graph to add a Texture Coordinate node. Assign a value of 3 to both its U Tiling and V Tiling settings, or adjust it until you are happy with the result.

- Next, create a Custom Rotator node and place it immediately after the previous Texture Coordinate node.

- With the previous node in place, plug the output of the Texture Coordinate node into the UVs (V2) input pin of the Custom Rotator node.

- Connect the Rotated Values output pin of the Custom Rotator node to the UVs input pin of the Texture Sample node used to display the wood texture.

- Create a Constant node and plug it into the Rotation Angle (0-1) (S) input pin of the Custom Rotator node. Give it a value of 0.25 to rotate the texture 90 degrees (you can find more information regarding why a value of 0.25 equals 90 degrees in the How it works… section).

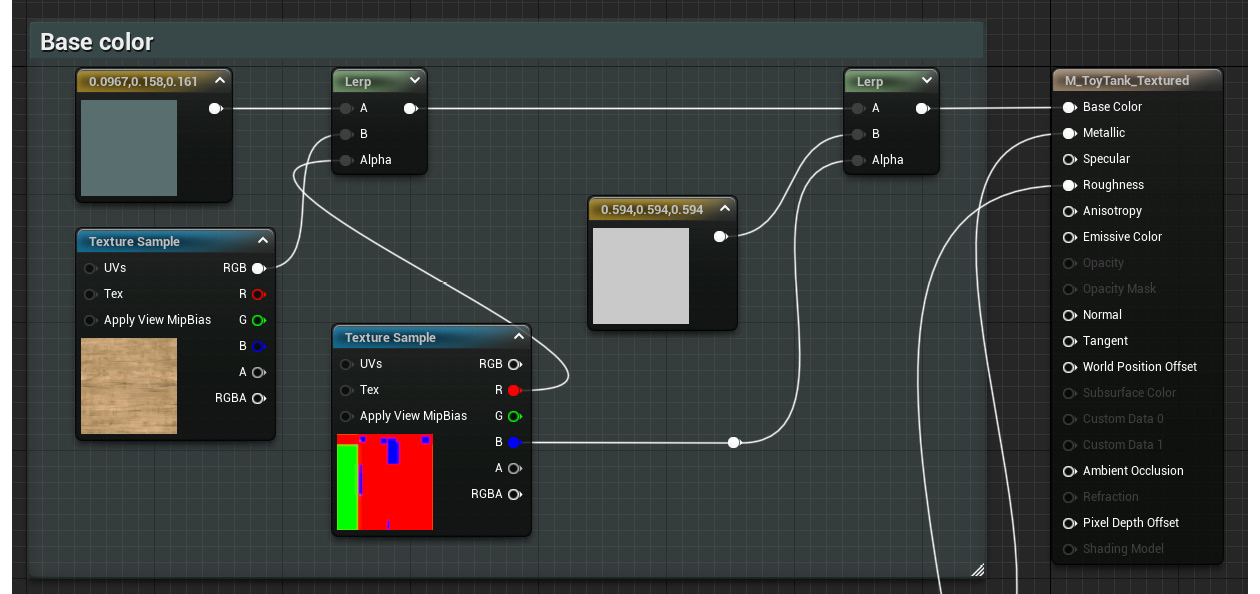

Here is what the previous sequence of nodes should look like:

Figure 2.15 – A look at the previous set of nodes

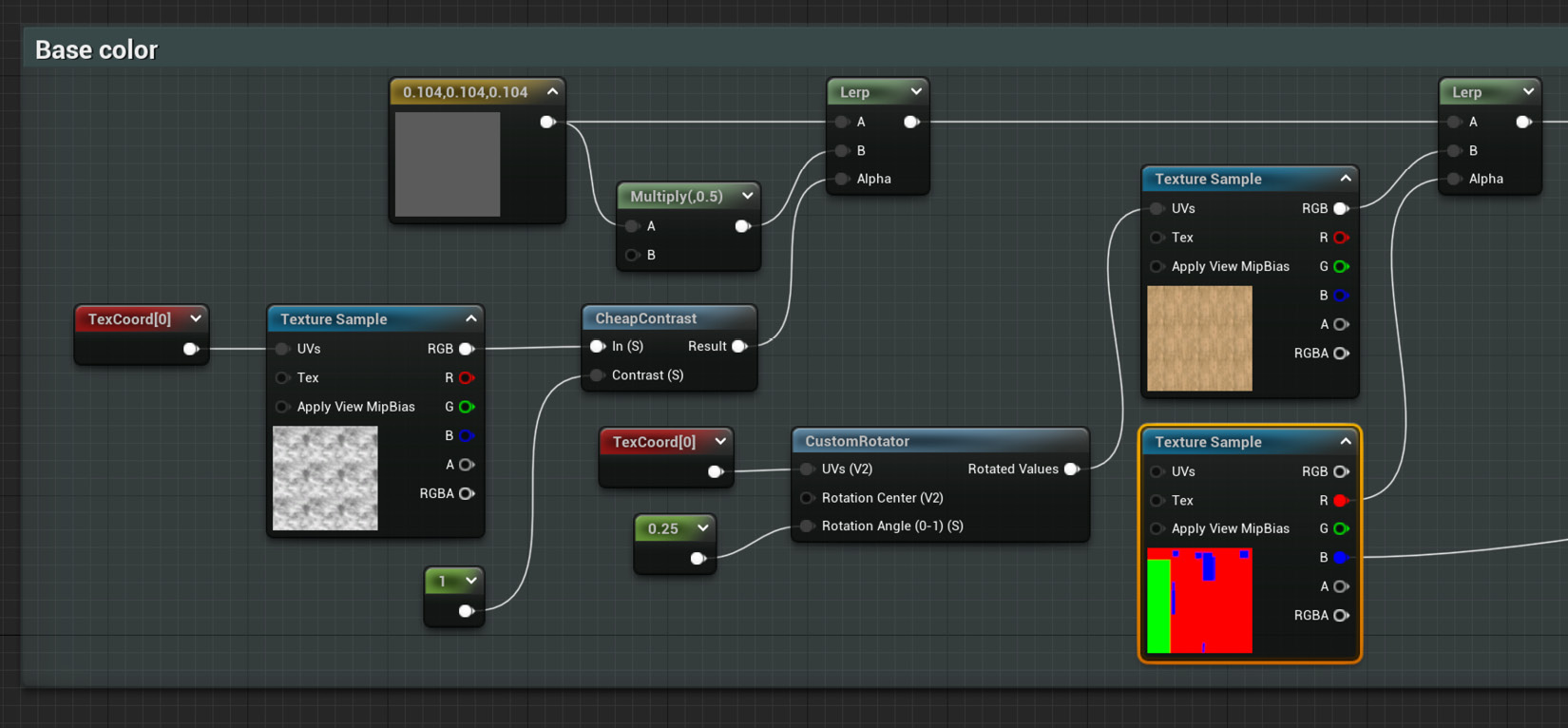

Let’s now continue to add a little extra variation to the other parts of the material. I’d like to focus on the other two masked elements next—the tires and the metallic sections—and make them a little bit more interesting visually speaking.

- We can start by changing the color of the Constant3Vector node that we are using to drive the color of the toy tank’s tires to something that better represents that material (rubber). We’ve been using a blueish color so far, so something closer to black would probably be better.

- After that, create a Multiply node and place it right after the previous Constant3Vector node. This is a very common type of node that does what its name implies: it outputs the result of multiplying the values connected to its input pins. One way to create it is by holding down the M key on the keyboard and left-clicking anywhere in the material graph.

- With the previous Multiply node created, connect its A input pin to the output of the same Constant3Vector node driving the color of the rubber tires.

- As for the B input pin, assign a value of 0.5 to it, something that you can do through the Details panel of the Multiply node without needing to create a new Constant node.

- Interpolate between the default value of the Constant3Vector node for the rubber tires and the result of the previous multiplication by creating a Lerp node. You can do this by connecting the output of said Constant3Vector node to the A input pin of a new Lerp node and the result of the Multiply node to the B input pin. Don’t worry about the Alpha parameter for now—we’ll take care of that next.

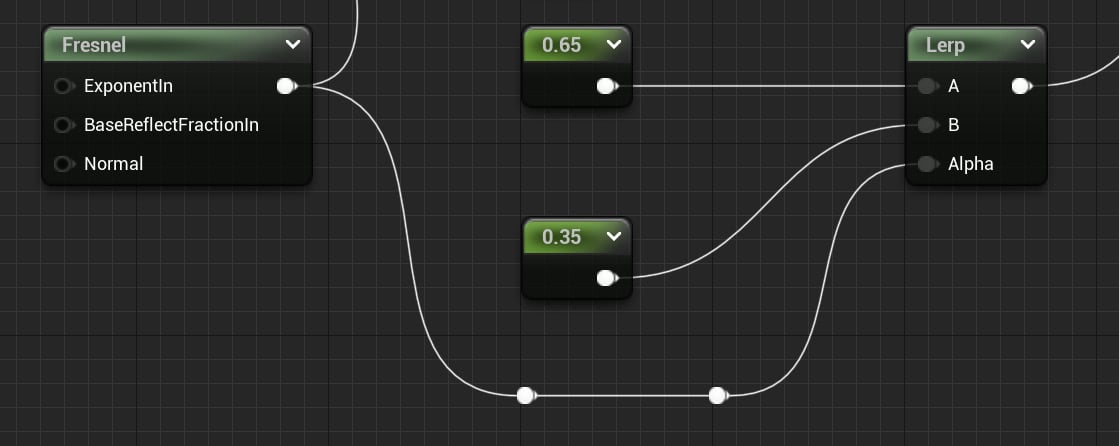

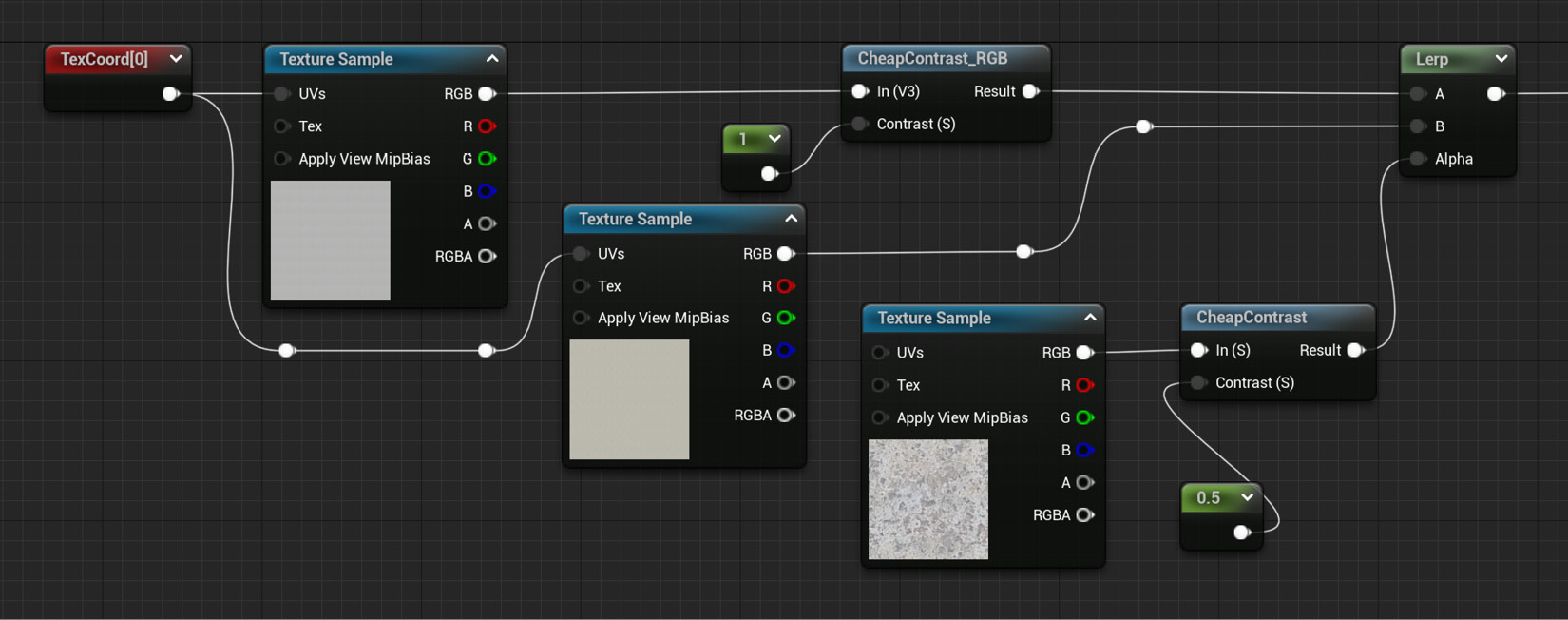

- Create a Texture Sample node and assign the T_Smoked_Tiled_D asset as its default value.

- Next, create and connect a Texture Coordinate node to the previous Texture Sample node and assign a higher value than the default 1 to both its U Tiling and V Tiling parameters; I’ve gone with 3, just so that we can see the effect this will have more clearly in the future.

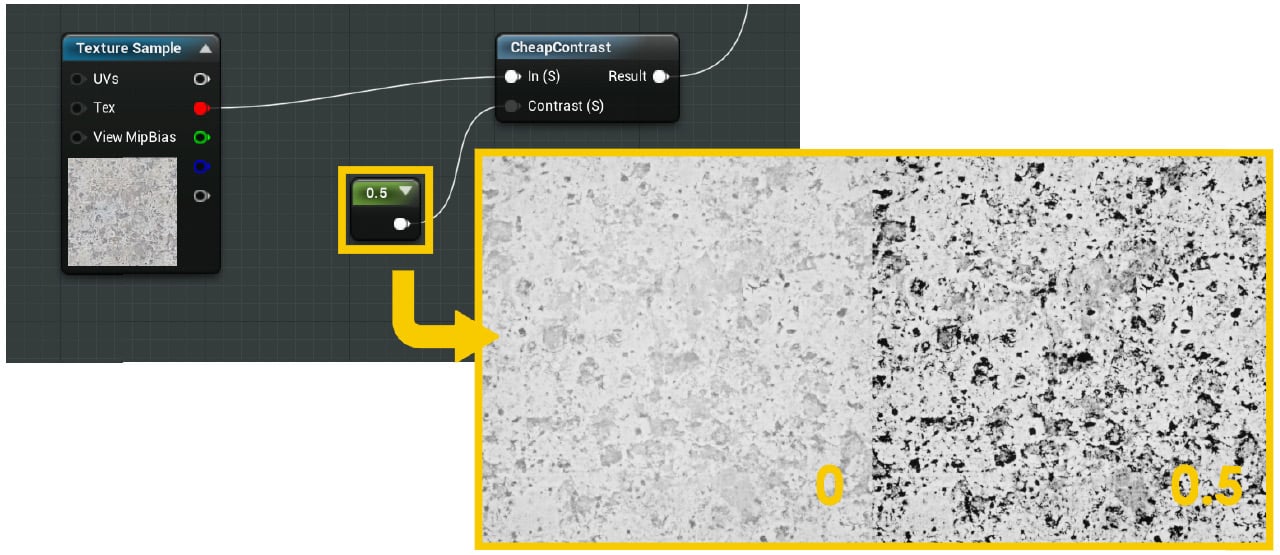

- Proceed to drag a wire out of the output pin of the Texture Sample node for the new black-and-white smoke texture and create a Cheap Contrast node.

- We’ll need to feed the previous Cheap Contrast node with some values, which we can do by creating a Constant node and connecting it to the Contrast (S) input pin of the previous node. This will increase the difference between the dark and light areas of the texture it is affecting, making the final effect more obvious. Set the Constant to a value of 1, as that will already give us the desired effect.

- Connect the result of the Cheap Contrast node to the Alpha input pin of the Lerp node we created earlier to blend the tire color with its darkened version, and connect the output of that Lerp node to the original Lerp node, which is being driven by the T_TankMasks texture mask.

Here is a screenshot that summarizes the previous set of steps:

Figure 2.16 – The modified color for the toy tank tires

We’ve managed to introduce a little bit of color variation thanks to the use of the previous smoke texture as a mask. This is something that we’ll come back to in future recipes, as it’s a very useful technique when trying to create non-repetitive materials.
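The tire network above boils down to a small amount of arithmetic per pixel, which we can sketch in Python. This is only an illustration of the logic, not engine code: the function names are hypothetical, the base color is an arbitrary dark gray, and `alpha` stands for whatever value comes out of the Cheap Contrast node for a given pixel.

```python
def lerp(a, b, alpha):
    """Linear interpolation: returns a when alpha is 0 and b when alpha is 1."""
    return a + (b - a) * alpha


def tire_color(base_color, alpha, darken=0.5):
    """Blend a color with a darkened copy of itself.

    base_color: (r, g, b) tuple, standing in for the Constant3Vector node.
    alpha:      mask value in the 0 to 1 range (the Cheap Contrast output).
    darken:     the value typed into the B input of the Multiply node.
    """
    # Multiply node: every channel is scaled by the same number.
    darker = tuple(channel * darken for channel in base_color)
    # Lerp node: A is the original color, B is the darkened one.
    return tuple(lerp(c, d, alpha) for c, d in zip(base_color, darker))


if __name__ == "__main__":
    rubber = (0.1, 0.1, 0.1)  # hypothetical near-black rubber color
    for mask_value in (0.0, 0.5, 1.0):
        print(mask_value, tire_color(rubber, mask_value))
```

Where the mask is black, the tires keep their original color; where it is white, they receive the darkened version, and the gray areas fall somewhere in between.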

Tip

Clicking on the teapot icon in the Material Editor viewport will let you use whichever mesh you have selected in the Content Browser as the preview asset. This is useful for previewing changes without having to move back and forth between different viewports.

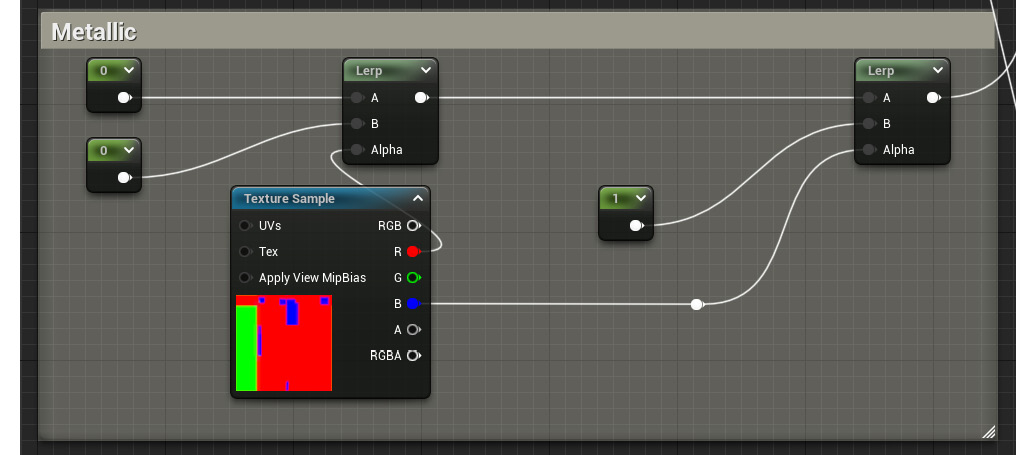

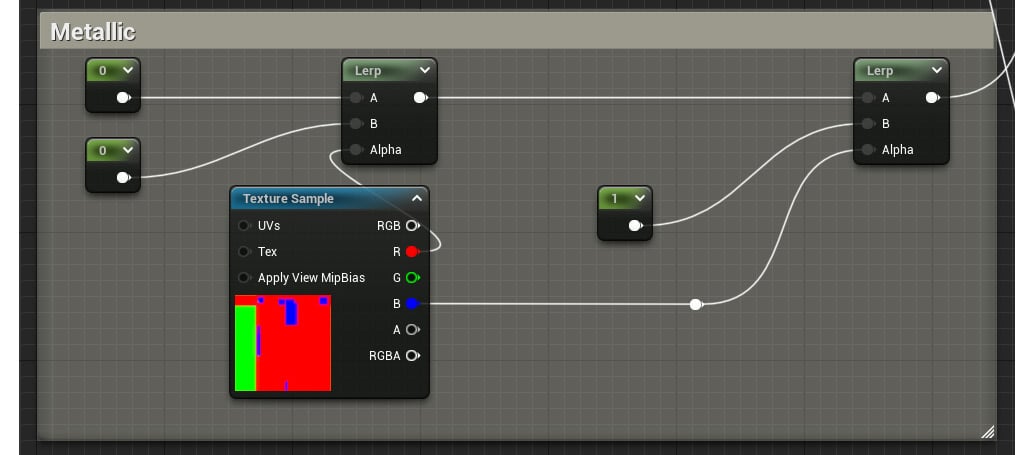

Finally, let’s introduce some extra changes to the metallic parts of the model.

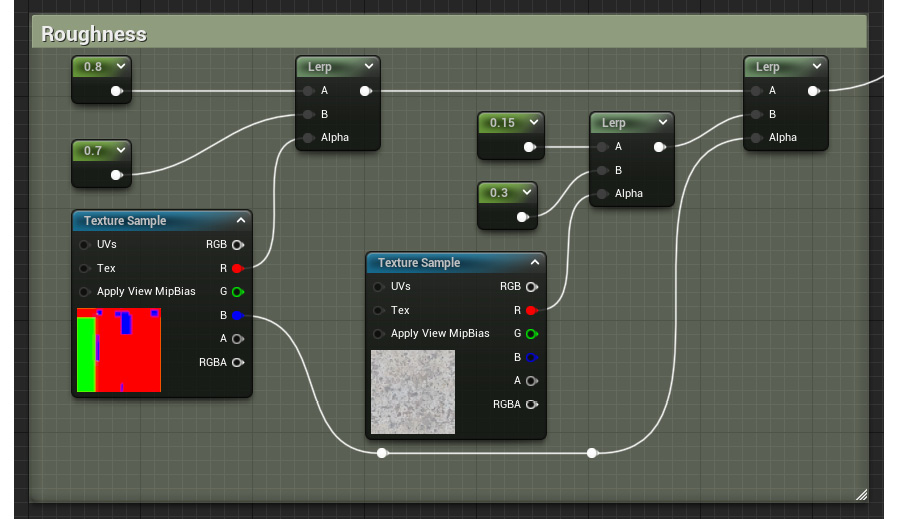

- To do that, head over to the Roughness section of the material graph.

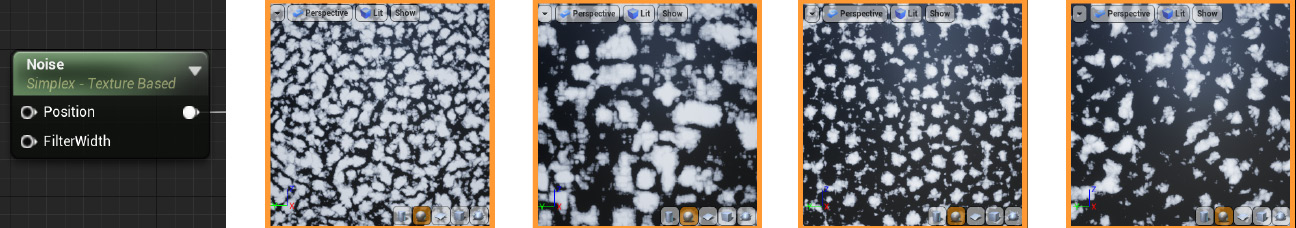

- Create a Texture Sample node and assign the T_MacroVariation texture to it. This will drive the Alpha input pin of the new Lerp node we are about to create.

- Add two simple Constant nodes and give them two different values. Keep in mind that these will affect the roughness of the metallic parts when choosing the values, so values lower than 0.3 will work well when making those parts reflective.

- Place a new Lerp node and plug the previous two new Constant nodes into the A and B input pins.

- Connect the red channel of the Texture Sample node holding the T_MacroVariation texture to the Alpha input pin of the previous Lerp node.

Important note

The reason why we are connecting the red channel of the previous Texture Sample node to the Alpha input pin of the Lerp node is simply that it offers a grayscale value which we can use in our favor to drive the mixture of the different roughness values.

- Finally, replace the initial Constant that was driving the roughness value of the metallic parts with the node network we created in the previous steps. The graph should now look something like this:

Figure 2.17 – The updated roughness section of our material
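As a rough sketch of what this roughness network computes, the Python snippet below picks the red channel out of a texture sample and uses it to blend two roughness values. The function name and the two default values (0.1 and 0.25) are assumptions chosen only to stay below the 0.3 mark mentioned earlier; use whichever values suit your material.

```python
def lerp(a, b, alpha):
    """Linear interpolation: returns a when alpha is 0 and b when alpha is 1."""
    return a + (b - a) * alpha


def metal_roughness(texture_sample, rough_a=0.1, rough_b=0.25):
    """Blend two roughness constants using the red channel of a sample.

    texture_sample: (r, g, b) tuple with channels in the 0 to 1 range.
    rough_a/rough_b: the two Constant nodes plugged into the Lerp node
                     (hypothetical values, not taken from the recipe).
    """
    red = texture_sample[0]  # the R output pin of the Texture Sample node
    return lerp(rough_a, rough_b, red)


if __name__ == "__main__":
    # Hypothetical samples: a dark, a mid, and a bright texel.
    for sample in ((0.0, 0.0, 0.0), (0.5, 0.4, 0.6), (1.0, 1.0, 1.0)):
        print(sample, metal_roughness(sample))
```

Only the first channel matters here; the green and blue values of each sample are ignored, which mirrors the fact that just the red pin is wired into the Alpha input.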

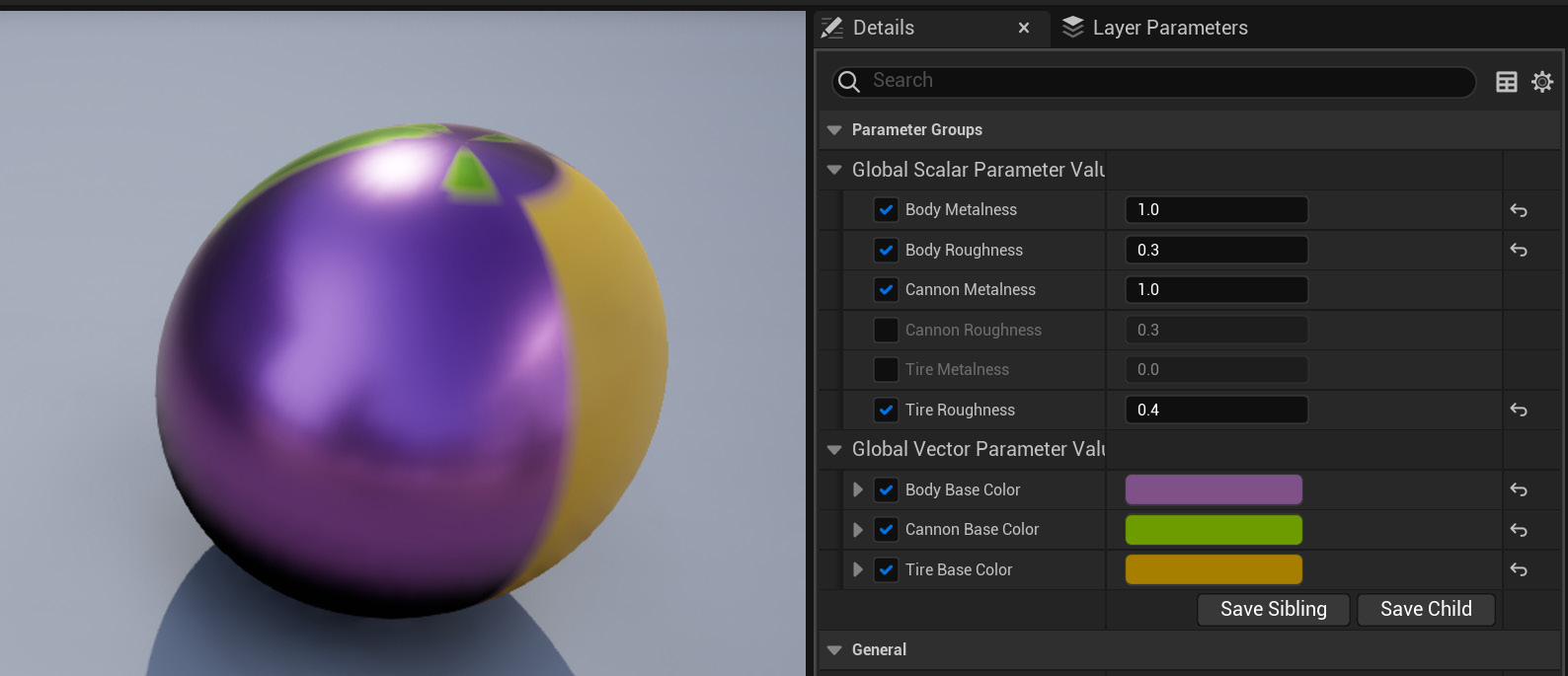

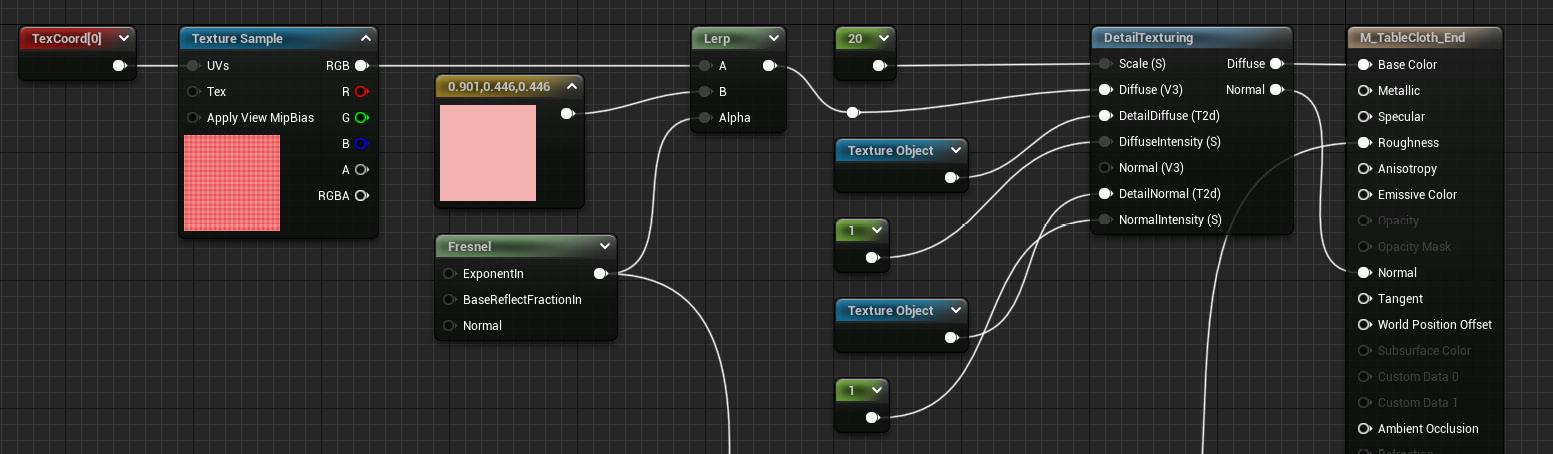

And after doing that, let’s now... oh, wait; I think we can call it a day! After all of those changes have been made, we will be left with a nice new material that is a more realistic version of the shader we had previously created. Furthermore, everything we’ve done constitutes the basics of setting up a real material in Unreal Engine 5. Combining textures with math operations, blending nodes according to different masks, and taking advantage of different assets to create specific effects are everyday tasks that many artists working with the engine have to face. And now, you know how to do that as well! Let’s check out the results before moving on:

Figure 2.18 – The final look of the textured toy tank material

How it works…

We’ve had the opportunity to look at several new nodes in this recipe, so let’s take a moment to review what they do before moving forward.

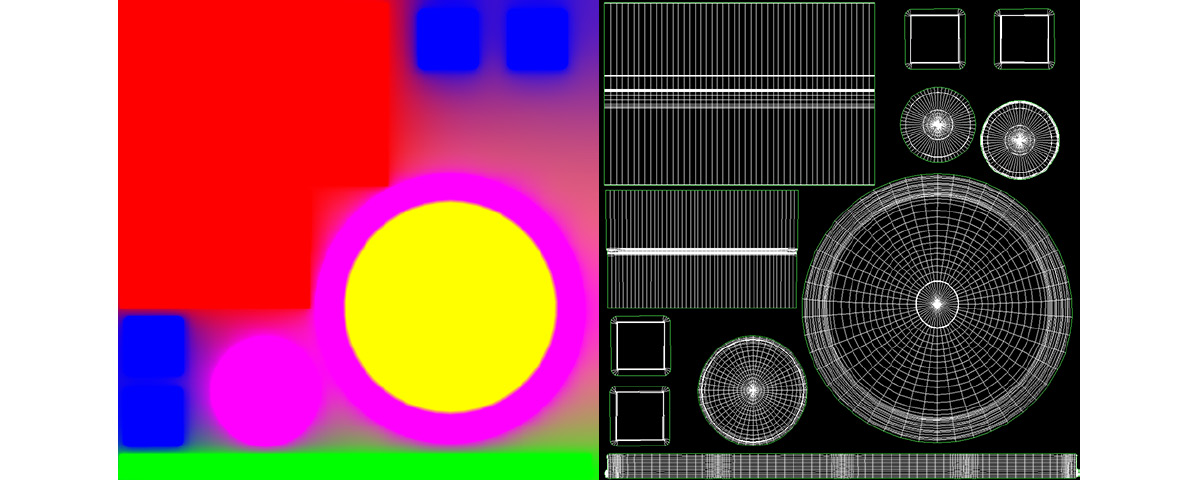

One of the first nodes we used was Texture Coordinate, which affects the scaling of the textures that we apply to our models. Its Details panel gives us access to a couple of parameters, U Tiling and V Tiling. Modifying those settings changes the apparent size of any texture driven by this node, with higher values making the images appear smaller. In reality, the size of the textures doesn’t change, as their resolution stays the same: what changes is the amount of UV space that they occupy. A value of 1 for both the U Tiling and the V Tiling parameters means that any textures connected to the Texture Coordinate node occupy the entirety of the UV space, while a value of 2 means that the same texture is repeated twice over the same extent. Decoupling the U Tiling parameter from the V Tiling parameter lets us affect the repetition pattern independently on those two axes, giving us a bit more freedom when adjusting the look of our materials.
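To make the idea of tiling a little more tangible, here is a minimal Python sketch of the arithmetic involved. It assumes that tiling simply multiplies the incoming UV coordinates and that the texture repeats (wraps) outside the 0 to 1 range; the function names are hypothetical and are not part of the engine.

```python
import math


def tile_uv(u, v, u_tiling=1.0, v_tiling=1.0):
    """Scale a UV coordinate by the U Tiling and V Tiling values."""
    return (u * u_tiling, v * v_tiling)


def wrap(coord):
    """Bring a coordinate back into the 0 to 1 range, as a repeating texture would."""
    return coord - math.floor(coord)


def sample_position(u, v, u_tiling=1.0, v_tiling=1.0):
    """Return the point of the texture that ends up being read at (u, v)."""
    scaled_u, scaled_v = tile_uv(u, v, u_tiling, v_tiling)
    return (wrap(scaled_u), wrap(scaled_v))


if __name__ == "__main__":
    # With a tiling of 3, walking across the model once crosses the texture three times.
    for u in (0.0, 0.25, 0.5, 0.75):
        print(u, sample_position(u, 0.0, u_tiling=3.0, v_tiling=3.0))
```

Because the scaled coordinate runs from 0 to 3 instead of 0 to 1, the same image is traversed three times over the surface, which is why it looks smaller even though its resolution is unchanged.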

Important note

There’s a third parameter that we can tweak within the Texture Coordinate node: Coordinate Index. The value specified in this field determines which UV channel the node reads from, should the model have more than one.

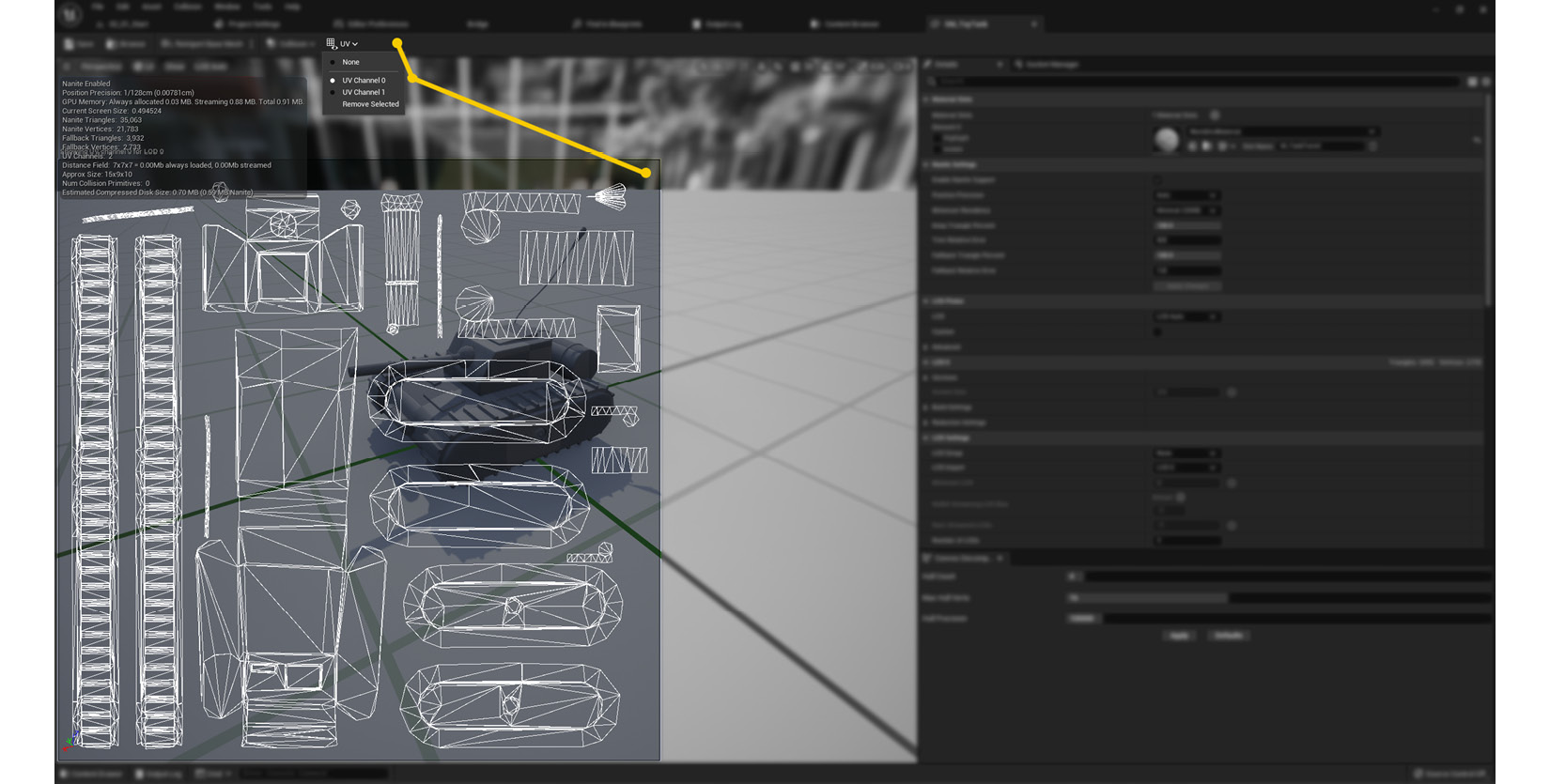

On that note, it’s worth highlighting the importance of well-laid-out UVs. Seeing as many of the nodes that we’ve used directly affect the UV space, it only makes sense that the models with which we work contain correctly unwrapped UV maps. Errors here will most likely impact the look of any textures that you try to apply, so make sure to check for mistakes in this area.

Another node that we used to modify the textures used in this recipe was the Custom Rotator node. This one adjusts the rotation of the images you are using, so it’s not very difficult to conceptualize. The trickiest setting to wrap our heads around is probably the Constant that drives the Rotation Angle (0-1) (S) parameter, which dictates the degrees by which we rotate a given texture. We need to feed a value within the 0 to 1 range, 0 being no rotation and 1 mapping to 360 degrees. A value of 0.25, the one we used in this recipe, corresponds exactly to 90 degrees following a simple rule of three.

Something else that we can affect using the Custom Rotator node is the UVs of the model, just as we did with the Texture Coordinate node before (in fact, we plugged the latter into the UVs (V2) input pin of the former). This allows us to increase or decrease the repetition of the texture before we rotate it. Finally, the Rotation Centre (V2) parameter allows us to determine the center of the rotation. This works according to the UV space, so a value of (0,0) will see the texture rotating around its bottom-left corner, a value of (0.5,0.5) will rotate the image around its center, and a value of (1,1) will do the same around the upper-right corner.
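The relationship between the 0 to 1 rotation value, the angle in degrees, and the rotation center can be sketched with a few lines of Python. This is a plain 2D rotation written under our own assumptions: the function names are hypothetical, and the direction in which the engine rotates the texture may be the opposite of the one used here.

```python
import math


def turns_to_degrees(rotation_01):
    """Convert a 0 to 1 rotation value into degrees (1 maps to 360)."""
    return rotation_01 * 360.0


def custom_rotate(uv, rotation_01, centre=(0.5, 0.5)):
    """Rotate a UV coordinate around a center point.

    uv:          (u, v) tuple, for example the output of a tiling step.
    rotation_01: fraction of a full turn, so 0.25 is a quarter turn.
    centre:      pivot of the rotation, expressed in UV space.
    """
    angle = rotation_01 * 2.0 * math.pi
    cos_a, sin_a = math.cos(angle), math.sin(angle)
    # Move the pivot to the origin, rotate, and move it back.
    du, dv = uv[0] - centre[0], uv[1] - centre[1]
    return (centre[0] + du * cos_a - dv * sin_a,
            centre[1] + du * sin_a + dv * cos_a)


if __name__ == "__main__":
    print(turns_to_degrees(0.25))
    print(custom_rotate((1.0, 0.5), 0.25))
    print(custom_rotate((1.0, 0.5), 0.25, centre=(0.0, 0.0)))
```

Changing the `centre` argument while keeping the same angle moves the rotated point to a different place, which is the effect that the Rotation Centre (V2) input has on the texture.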

The third node we will discuss is Multiply. It is rather simple in terms of what it does: it multiplies two different values. The two elements need to be of a similar nature: we can only multiply two Constant3Vector nodes, two Constant2Vector nodes, two Texture Sample nodes, and so on. The exception to this rule is the simple Constant node, which can always be combined with any other input. The Multiply node is often used when adjusting the brightness of a given value, which is what we’ve done in this recipe.
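The rule about matching inputs can be illustrated with a short Python sketch. It treats vectors as tuples and a simple Constant as a plain number; the `multiply` function is a hypothetical stand-in for the node, written only to show the behavior described above.

```python
def multiply(a, b):
    """Multiply two inputs channel by channel.

    Tuples must have the same number of channels, while a plain number
    (a simple Constant) is applied to every channel of the other input.
    """
    a_is_scalar = isinstance(a, (int, float))
    b_is_scalar = isinstance(b, (int, float))
    if a_is_scalar and b_is_scalar:
        return a * b
    if a_is_scalar:
        return tuple(a * channel for channel in b)
    if b_is_scalar:
        return tuple(channel * b for channel in a)
    if len(a) != len(b):
        raise ValueError("inputs must have the same number of channels")
    return tuple(x * y for x, y in zip(a, b))


if __name__ == "__main__":
    color = (0.2, 0.4, 0.6)
    print(multiply(color, 0.5))              # a Constant darkens every channel
    print(multiply(color, (1.0, 0.5, 0.0)))  # two three-channel inputs
```

Multiplying by a number below 1 darkens the color, while a number above 1 brightens it, which is why the node is a common choice for brightness adjustments.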

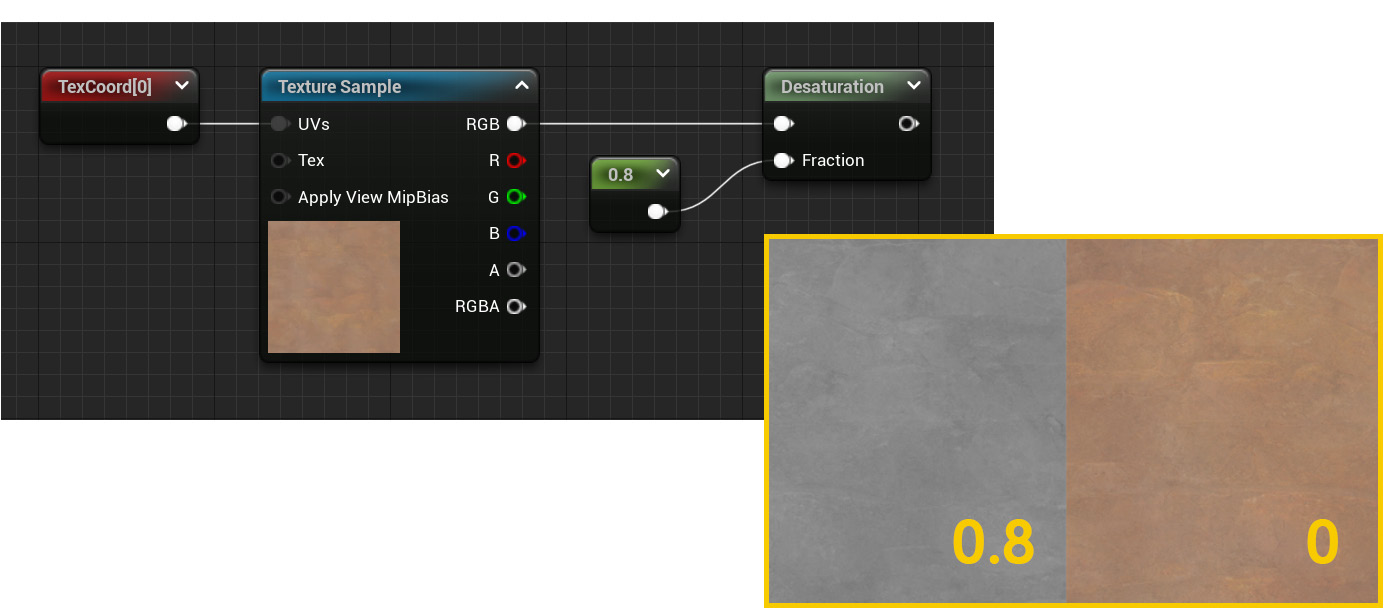

Finally, the last new node we saw was Cheap Contrast. This is useful for adjusting the contrast of what we hook into it, with a Contrast value of 0 leaving the input untouched. We can use a constant value to adjust the intensity of the operation, with higher values increasing the difference between the black and the white levels. This particular node works with grayscale values; should you wish to perform the same operation on a colored image, note that there’s another node, CheapContrast_RGB, that does just that.
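One way to picture what Cheap Contrast does is the following Python sketch. It assumes that the node remaps the input by interpolating between -Contrast and 1 + Contrast and then clamps the result to the 0 to 1 range; that formula reflects our understanding of the function rather than something stated in this recipe, so treat it as an approximation.

```python
def saturate(value):
    """Clamp a value to the 0 to 1 range."""
    return max(0.0, min(1.0, value))


def lerp(a, b, alpha):
    """Linear interpolation: returns a when alpha is 0 and b when alpha is 1."""
    return a + (b - a) * alpha


def cheap_contrast(value, contrast=0.0):
    """Push a grayscale value away from mid-gray (assumed formula).

    value:    grayscale input in the 0 to 1 range.
    contrast: 0 leaves the input untouched; higher values widen the gap
              between the dark and bright areas.
    """
    return saturate(lerp(0.0 - contrast, 1.0 + contrast, value))


if __name__ == "__main__":
    for gray in (0.25, 0.5, 0.75):
        print(gray, cheap_contrast(gray, 0.0), cheap_contrast(gray, 1.0))
```

Under this assumption, a contrast of 1 sends anything darker than roughly a third to black and anything brighter than roughly two thirds to white, while mid-gray stays where it is.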

Tip

A useful technique that lets you evaluate the impact of the nodes you place within the material graph is the Start Previewing Node option. Right-click on the node that you want to preview and choose that option to start displaying the result of the node network you’ve chosen up to that point. The results will become visible in the viewport.

See also

I want to leave you with a couple of links that provide more information regarding Multiply, Cheap Contrast, and some other related nodes:
