The Open Web Application Security Project (OWASP) published its first OWASP API Security Top 10 list in December 2019, and since then, the API security community has grown rapidly, with API security start-ups attracting significant investment and increasing interest from developers and security practitioners alike to learn resources on the topic. Unfortunately, during this period, there has also been a marked rise in the number of security incidents relating to insecure APIs. Recent analysis suggests a 681% rise in API attacks and that nearly one in two organizations has experienced a security incident related to APIs.

In a way, APIs are a victim of their own success – because of their rapid proliferation and the high economic value of the data they protect, they are now the most popular target for attackers.

We’ll now have a look at the so-called API economy and how the near-exponential growth in the number of APIs creates challenges for organizations as they become the favorite vector for attackers.

The growth of the API economy

To fully appreciate the importance of API security, let us first consider the growth of the so-called API economy. Let’s understand a bit more about what is meant by this term – Forbes defines an API economy as “an enabler for turning a business or organization into a platform.” A platform can leverage APIs to do the following:

- Provide services and data to consumers for a price

- Consume services and data from other providers to enhance your business

What is the API economy?

The API revolution has led to the emergence of API-first businesses such as Twilio and has allowed other organizations to expose their core offerings via APIs (Google Maps is a good example). The disruptive nature of the API economy is best seen in the financial services industry – typically, this has been an industry resistant to innovation due to regulatory and compliance requirements. By using APIs to expose selected core services, banks can embrace new models without disrupting their core IT systems. By adopting open standards that can be certified – such as the Open Banking API – banks can achieve interoperability while ensuring transactional integrity. The online money transfer service Wise uses APIs to provide B2B and B2C services and offers banking-as-a-service (BaaS) to third parties by renting out their APIs.

Advantages of an API economy

There are several key benefits to an API economy:

- Reduced time-to-market: Organizations can use APIs to consume services from third parties rather than having to create those services themselves, resulting in faster development life cycles.

- Drive value: Organizations can expose new and innovative services using APIs and open new markets.

- Competitive advantage: By getting to market faster and using APIs to drive innovation, adopters can increase their competitive advantage.

- Improved efficiency: APIs allow IT teams to deliver immediate value by exposing APIs, rather than having to build and deploy mobile or web applications.

- Security: Mobile and web applications expose a vast attack surface to adversaries. By focusing development on APIs, this attack surface can be reduced and focus given to the hardening and security of these APIs.

API adoption allows organizations to deliver more value and functionality while simultaneously reducing cost and time to market.

The scale of the API economy

It is difficult to provide an accurate estimation of the scale of an API economy, and even if it was possible, this estimate would soon be invalidated due to the nearly exponential growth of the space. The API community at Nordic APIs (https://nordicapis.com/20-impressive-api-economy-statistics/) has produced a survey on the scale of the API economy; the following are some headline figures:

- Over 90% of developers use APIs

- The popular API test platform Postman has over 46 million API collections

- 83% of all internet traffic belongs to APIs

- There are over 2 million API GitHub repositories

- The API management market is valued at $5.1 billion in 2023

- 93% of communication service providers use OpenAPI specifications

- 91% of organizations have had an API security incident

On the back of a growing API economy, major capital investment has poured into the market for API tool vendors, management platforms, and security tools.

Challenges to an API economy

The rapid adoption of APIs brings with it several challenges in addition to the benefits. The first challenge is that of inventory — because APIs can be easily built and deployed and have a finite lifetime, organizations are struggling to keep track of their API inventory, resulting in shadow (hidden) and zombie (outdated) APIs.

The second challenge is that of governance — as APIs proliferate, organizations face challenges with governing the development and deployment process, ensuring that data and privacy requirements are met and that the API life cycle is managed from cradle to grave.

The biggest challenge, however, is that of security. As noted earlier in this section, APIs can reduce an organization’s overall attack surface; however, this comes at the cost of a new security paradigm – APIs are a new attack surface, and the threats are different. In the next section, we’ll explore these security challenges in more detail.

APIs are popular with developers

Developers love APIs — nearly all developers work with APIs and nearly all modern architectures are API-centric. While containerization has driven the breakdown of the monolith and the emergence of microservices, it is APIs that form the connecting tissue between these services.

The benefit of APIs to developers are numerous, including the following:

- They form an abstraction between services and allow encapsulation of functionality.

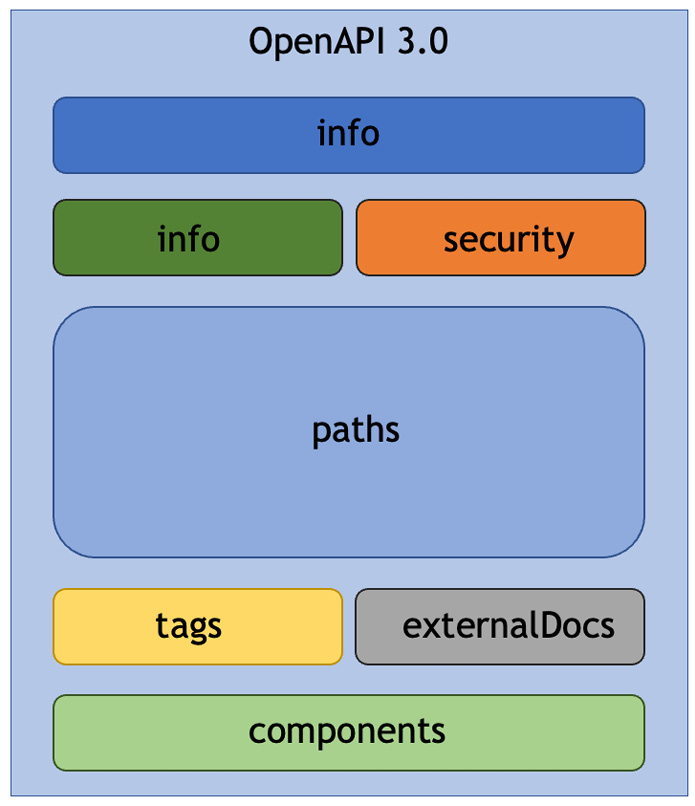

- They define a clear interface via an OpenAPI specification that serves as a contract for the API.

- They allow a truly polyglot environment where different APIs can be implemented in the most suitable programming language for the task at hand.

- They simplify data exchange as APIs generally use JSON, XML, or YAML.

- They facilitate ease of testing, using tools such as Postman or tools that can validate API functionality against the OpenAPI Specification.

- They propel ease of development. The API development ecosystem is rich with powerful tooling for the development and testing of APIs. Moreover, fully featured API frameworks exist for most modern programming languages.

These factors have fueled the API-first paradigm where applications are built in a bottom-up approach, starting with the APIs, then the business logic, and the user interface (UI) last.

APIs are increasingly popular with attackers

While APIs are undoubtedly popular with developers, they are even more popular with attackers. Gartner reports that APIs are the number one attack vector for cybercriminals in 2022, and barely a week goes by without an API breach or vulnerability being disclosed.

There are several key reasons why APIs are a favored attack target:

- APIs are likely to be publicly accessible: By their nature, APIs are intended to be interconnected with other systems, requiring them to be exposed on public networks. This facilitates easy discovery and attack by adversaries.

- APIs are often well documented: To aid easy adoption and integration, good APIs should be documented using tools such as the Swagger UI. Unfortunately, such documentation can also be invaluable to attackers in understanding how the APIs work.

- API attacks can be automated: API interaction is headless (not requiring a UI or human interaction) and can easily be automated with scripts or dedicated attack tools. APIs are, in many cases, easier to attack than mobile or web applications.

- APIs expose valuable data: Most importantly, APIs are designed to allow access to key data assets (PII, financial, or market data), which are likely to be the highest prize for an attacker. Attackers increasingly attack APIs that inadvertently expose excessive data or allow mass exfiltration, which might not be the case with a well-crafted UI.

Your existing tools do not work well for APIs

The relatively recent emergence of APIs as the de facto conduit for application connectivity poses significant challenges to security teams and testers. Much of the existing application security (AppSec) tooling that exists was designed in an era when web applications were the primary asset to be protected. Common security tools such as static application security testing (SAST), dynamic application security testing (DAST), and software composition analysis (SCA) are far less effective in assessing APIs than they are with web or mobile applications.

Traditional perimeter protections such as network firewalls or web application firewalls (WAFs) are ineffective in protecting APIs, since they lack the context of the API interface and the expected request and response traffic. Such tools tend to be high in both false positives and false negatives.

More modern API technologies, such as API management portals (APIMs) and gateways, are essential for the operation of APIs at scale, but while they do provide security features, they do not address all attack vectors.

The key takeaway is that while tools are important as part of a defense strategy, they need to be augmented by solid defensive design and coding techniques — this is the focus of the final section of this book.

Developers often lack an understanding of API security

It is important to understand why insecure code exists in the first place if we want to address the problem.

Developers are, by nature, creative problem solvers who thrive on a challenge – unfortunately, this leads them to be over-optimistic, which can lead them to take shortcuts and optimizations, or perhaps work to unrealistic delivery schedules. This is so-called happy path coding, where developers do not fully appreciate how their code could fail or be misused by an attacker, sometimes with dire consequences.

Coupled with over-optimism is a sense of over-confidence – developers will assume they fully understand a problem but may be unaware that they are missing some crucial detail or subtlety, which again can have adverse effects. An example is the adoption of a new API framework and not carefully considering the default settings and deploying a vulnerable product.

Developers will often have a misplaced sense that bad things only happen to other people and not them. Despite witnessing examples of well-known breaches, many developers believe they will never fall victim to a similar misfortune. This general phenomenon is known as the schadenfreude effect.

The development process can be stressful with constant pressure to deliver to schedule, and this can result in compromising full implementation in favor of meeting deadlines. For example, this can include the omission of error handling code or data validation with the intent of coming back to implement them in later releases. With time pressures, this rarely happens, and code is often left in an incomplete state.

Often, developers inherit a code base to maintain that may contain significant technical debt or legacy code. Without a full understanding of the system and its complexities and foibles, developers may be disinclined to make changes to the code base in case they break functionality.

Argentina

Argentina

Australia

Australia

Austria

Austria

Belgium

Belgium

Brazil

Brazil

Bulgaria

Bulgaria

Canada

Canada

Chile

Chile

Colombia

Colombia

Cyprus

Cyprus

Czechia

Czechia

Denmark

Denmark

Ecuador

Ecuador

Egypt

Egypt

Estonia

Estonia

Finland

Finland

France

France

Germany

Germany

Great Britain

Great Britain

Greece

Greece

Hungary

Hungary

India

India

Indonesia

Indonesia

Ireland

Ireland

Italy

Italy

Japan

Japan

Latvia

Latvia

Lithuania

Lithuania

Luxembourg

Luxembourg

Malaysia

Malaysia

Malta

Malta

Mexico

Mexico

Netherlands

Netherlands

New Zealand

New Zealand

Norway

Norway

Philippines

Philippines

Poland

Poland

Portugal

Portugal

Romania

Romania

Russia

Russia

Singapore

Singapore

Slovakia

Slovakia

Slovenia

Slovenia

South Africa

South Africa

South Korea

South Korea

Spain

Spain

Sweden

Sweden

Switzerland

Switzerland

Taiwan

Taiwan

Thailand

Thailand

Turkey

Turkey

Ukraine

Ukraine

United States

United States